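Handwritten Digit Recognition with a Simple Neural Network

Building Neural Networks with PyTorch (series)

Chapter 1: A First Look at Handwritten Digit Recognition

This post records how to recognize handwritten digits (MNIST) with a simple fully connected neural network.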

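1. Define the helper functions used later

The code is as follows (example). The later sections import these functions from utils, so they are assumed to live in utils.py: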

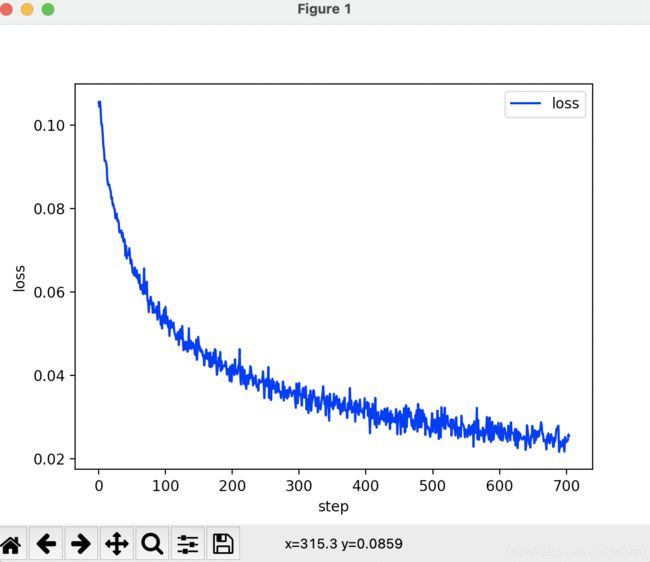

Visualize the loss curve

import torch
from matplotlib import pyplot as plt

# loss curve
def plot_curve(data):
    fig = plt.figure()
    plt.plot(range(len(data)), data, color='blue')
    plt.legend(['value'], loc='upper right')  # legend
    plt.xlabel('step')
    plt.ylabel('value')
    plt.show()

Visualize the recognition results

# visualization of result
def plot_image(image, label, name):
    fig = plt.figure()
    for i in range(6):
        plt.subplot(2, 3, i + 1)
        plt.tight_layout()
        # undo the normalization (x * std + mean) before showing the image
        plt.imshow(image[i][0] * 0.3081 + 0.1307, cmap='gray', interpolation='none')
        plt.title("{0}: {1}".format(name, label[i].item()))
        plt.xticks([])
        plt.yticks([])
    plt.show()

One-hot encode the labels

def one_hot(label, depth=10):
    # label: [b] integer class ids ==> out: [b, depth]
    out = torch.zeros(label.size(0), depth)
    idx = label.long().view(-1, 1)
    out.scatter_(dim=1, index=idx, value=1)
    return out
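To make the encoding concrete, here is a minimal sketch that runs one_hot on three made-up labels and cross-checks the result against PyTorch's built-in torch.nn.functional.one_hot. The label values are illustrative only, and the function is repeated so the snippet runs on its own.

```python
import torch

def one_hot(label, depth=10):
    # label: [b] integer class ids ==> out: [b, depth]
    out = torch.zeros(label.size(0), depth)
    idx = label.long().view(-1, 1)
    out.scatter_(dim=1, index=idx, value=1)
    return out

labels = torch.tensor([3, 0, 9])        # made-up labels for illustration
encoded = one_hot(labels)

print(encoded.shape)                    # torch.Size([3, 10])
print(encoded.argmax(dim=1))            # tensor([3, 0, 9])

# Cross-check against the built-in helper (it returns integers, so cast to float).
builtin = torch.nn.functional.one_hot(labels, num_classes=10).float()
print(torch.equal(encoded, builtin))    # True
```

Each row contains a single 1 at the index of its class, which is the target format the MSE loss compares against later.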

2. Load the data

The code is as follows (example):

import torch
from torch import nn
from torch.nn import functional as F
from torch import optim

import torchvision
from matplotlib import pyplot as plt

from utils import plot_image, plot_curve, one_hot

We use batch_size = 256. The training data is shuffled; the test set does not need to be.

batch_size = 256

# load dataset
train_loader = torch.utils.data.DataLoader(
    torchvision.datasets.MNIST('mnist_data', train=True, download=True,
                               transform=torchvision.transforms.Compose([
                                   torchvision.transforms.ToTensor(),
                                   torchvision.transforms.Normalize(
                                       (0.1307,), (0.3081,))  # mean=0.1307, std=0.3081
                               ])),
    batch_size=batch_size, shuffle=True)

test_loader = torch.utils.data.DataLoader(
    torchvision.datasets.MNIST('mnist_data/', train=False, download=True,
                               transform=torchvision.transforms.Compose([
                                   torchvision.transforms.ToTensor(),
                                   torchvision.transforms.Normalize(
                                       (0.1307,), (0.3081,))  # mean=0.1307, std=0.3081
                               ])),
    batch_size=batch_size, shuffle=False)
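The normalization step is simple arithmetic, and a small sketch may help show what it does to pixel values. The snippet below uses a made-up three-pixel tensor rather than real MNIST data, and it reproduces the per-channel formula (x - mean) / std by hand instead of calling torchvision, so treat it as an illustration of the arithmetic only.

```python
import torch

MEAN, STD = 0.1307, 0.3081   # the values used in the loaders above

# Made-up pixel values in [0, 1], the range ToTensor() produces.
pixels = torch.tensor([0.0, 0.5, 1.0])

# Per-channel normalization: (x - mean) / std.
normalized = (pixels - MEAN) / STD

# The inverse, x * std + mean, is what plot_image applies before imshow.
restored = normalized * STD + MEAN

print(normalized)   # black maps to roughly -0.42, white to roughly 2.82
print(torch.allclose(restored, pixels, atol=1e-6))
```

This is why a normalized batch has a negative minimum and a maximum above 1.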

Let's look at one batch of samples from the data:

x, y = next(iter(train_loader))
print(x.shape, y.shape, x.min(), x.max())
plot_image(x, y, 'sample')

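3. Build a simple neural network

We build a simple network by stacking three linear layers, i.e. nesting y = w*x + b three times, with a ReLU activation after each of the first two layers (the last layer outputs raw scores). The code is shown below: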

class Net(nn.Module):

    def __init__(self):
        super(Net, self).__init__()

        # xw+b
        self.fc1 = nn.Linear(28*28, 256)
        self.fc2 = nn.Linear(256, 64)
        self.fc3 = nn.Linear(64, 10)

    def forward(self, x):
        # x: [b, 784] (already flattened from [b, 1, 28, 28] by the caller)
        # h1 = relu(xw1 + b1)
        x = F.relu(self.fc1(x))
        # h2 = relu(h1w2 + b2)
        x = F.relu(self.fc2(x))
        # h3 = h2w3 + b3
        x = self.fc3(x)

        return x
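A quick sanity check can confirm the layer sizes before any training. The sketch below repeats the Net definition so it runs on its own, feeds it a random batch of 4 flattened "images" (random noise, not MNIST), and counts the trainable parameters.

```python
import torch
from torch import nn
from torch.nn import functional as F

class Net(nn.Module):
    def __init__(self):
        super().__init__()
        self.fc1 = nn.Linear(28 * 28, 256)
        self.fc2 = nn.Linear(256, 64)
        self.fc3 = nn.Linear(64, 10)

    def forward(self, x):
        x = F.relu(self.fc1(x))
        x = F.relu(self.fc2(x))
        return self.fc3(x)

net = Net()

# Random stand-in for a batch of 4 flattened 28x28 images.
dummy = torch.randn(4, 28 * 28)
out = net(dummy)
print(out.shape)    # torch.Size([4, 10])

# Weights plus biases: (784*256 + 256) + (256*64 + 64) + (64*10 + 10)
n_params = sum(p.numel() for p in net.parameters())
print(n_params)     # 218058
```

The output has one score per digit class for every input in the batch.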

4. Train the neural network

Next we train the network for 3 epochs using SGD with momentum (learning rate 0.01, momentum 0.9) and MSE as the loss function.

net = Net()
optimizer = optim.SGD(net.parameters(), lr=0.01, momentum=0.9)

train_loss = []

for epoch in range(3):
    for batch_idx, (x, y) in enumerate(train_loader):
        # x: [b, 1, 28, 28], y: [b]
        # [b, 1, 28, 28] ==> [b, 784]
        x = x.view(x.size(0), 28*28)
        # ==> [b, 10]
        out = net(x)
        y_onehot = one_hot(y)
        # loss = mse(out, y_onehot)
        loss = F.mse_loss(out, y_onehot)

        optimizer.zero_grad()
        loss.backward()
        # update the parameters (SGD with momentum)
        optimizer.step()

        train_loss.append(loss.item())

        if batch_idx % 10 == 0:
            print(epoch, batch_idx, loss.item())

# after training, net holds the learned w1, b1, w2, ...
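With momentum=0.9, each optimizer step is not the plain update w - lr*grad. It also carries over a running "velocity" from earlier steps. The sketch below illustrates this on a toy two-element parameter with a made-up quadratic loss (not the MNIST network): it applies optim.SGD for three steps and reproduces the same updates by hand with velocity = momentum * velocity + grad followed by w = w - lr * velocity.

```python
import torch
from torch import optim

lr, momentum = 0.01, 0.9   # same hyperparameters as the training loop

# Toy parameter and loss, chosen only for illustration.
w = torch.tensor([1.0, -2.0], requires_grad=True)
optimizer = optim.SGD([w], lr=lr, momentum=momentum)

# Hand-written copy of the same update rule.
w_manual = w.detach().clone()
velocity = torch.zeros_like(w_manual)

for step in range(3):
    # PyTorch side: loss = sum(w^2), so the gradient is 2*w.
    loss = (w ** 2).sum()
    optimizer.zero_grad()
    loss.backward()
    optimizer.step()

    # Manual side: accumulate the velocity, then step.
    grad = 2 * w_manual
    velocity = momentum * velocity + grad
    w_manual = w_manual - lr * velocity

print(w.detach(), w_manual)
print(torch.allclose(w.detach(), w_manual, atol=1e-6))
```

On the first step the velocity equals the gradient, so the update matches plain SGD. From the second step on, past gradients keep contributing.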

Plotting the loss shows clearly that it keeps decreasing during training:

plot_curve(train_loss)

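5. Predict on the test set and compute the accuracy

Finally, we use the trained network to predict on the test set. The accuracy is a little over 90% (0.9141 in the run shown below), which is decent for such a simple model.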

total_correct = 0

# gradients are not needed for evaluation
with torch.no_grad():
    for x, y in test_loader:
        x = x.view(x.size(0), 28*28)
        out = net(x)
        # out: [b, 10] ==> pred: [b]
        pred = out.argmax(dim=1)
        correct = pred.eq(y).sum().float().item()
        total_correct += correct

total_sum = len(test_loader.dataset)
acc = total_correct / total_sum
print("test accuracy:", acc)

test accuracy: 0.9141
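The accuracy computation comes down to an argmax over the 10 output scores followed by an element-wise comparison with the labels. Here is a minimal sketch of that step on a made-up batch of 4 outputs over 3 classes (hypothetical numbers, not real network outputs):

```python
import torch

# Made-up scores for 4 samples and 3 classes.
out = torch.tensor([[0.1, 0.8, 0.1],
                    [0.7, 0.2, 0.1],
                    [0.2, 0.3, 0.5],
                    [0.6, 0.3, 0.1]])
y = torch.tensor([1, 0, 2, 2])          # made-up ground-truth labels

pred = out.argmax(dim=1)                # index of the largest score per row
correct = pred.eq(y).sum().item()       # number of matching predictions
acc = correct / y.size(0)

print(pred)      # tensor([1, 0, 2, 0])
print(correct)   # 3
print(acc)       # 0.75
```

The last sample is predicted as class 0 while its label is 2, so 3 of the 4 predictions are correct.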

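Summary

That is how to recognize handwritten digits with a simple neural network.

The code comes from a NetEase Cloud (网易云) course; this blog post is a brief record and summary of that course.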