Implementing ResNet-18 in PyTorch

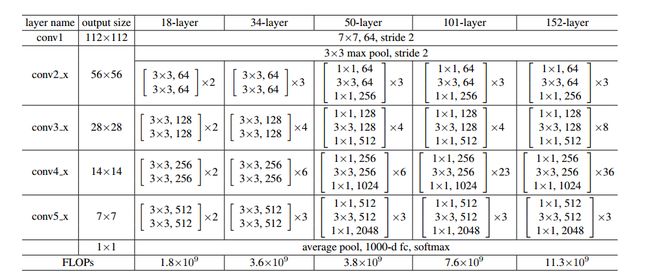

Residual network architecture parameters:

PyTorch implementation of ResNet-18:

import torch

import torch.nn as nn

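class ResNetBasicBlock(nn.Module):
    def __init__(self, in_channels, out_channels, stride):
        super(ResNetBasicBlock, self).__init__()
        # padding: number of zeros added on each side of the input.
        # Conv weights are randomly initialized; bias=False, so there is no bias term.
        # A 3x3 kernel with padding=1 adds one ring of zeros around the input,
        # so with stride=1 the height and width are unchanged after the convolution.
        self.conv1 = nn.Conv2d(in_channels, out_channels, kernel_size=3, stride=stride, padding=1, bias=False)
        self.bn1 = nn.BatchNorm2d(out_channels)  # normalizes each channel over the batch and spatial dimensions
        self.relu = nn.ReLU(inplace=True)
        self.conv2 = nn.Conv2d(out_channels, out_channels, kernel_size=3, stride=stride, padding=1, bias=False)
        self.bn2 = nn.BatchNorm2d(out_channels)  # BatchNorm2d has a learnable scale and shift per channel
        # Both conv layers keep the input size unchanged. This block uses an identity
        # shortcut, so it is only valid with stride=1 and in_channels == out_channels.
        self.stride = stride

    def forward(self, x):
        residual = x
        output = self.conv1(x)
        # inplace=True overwrites the incoming tensor instead of allocating a new one;
        # BN is applied before the activation.
        output = self.relu(self.bn1(output))
        output = self.conv2(output)
        output = self.bn2(output)
        output += residual  # residual connection; the shapes must match for the addition
        return torch.relu(output)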
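The comment on `bn1` is about per-channel normalization. The following is a minimal sketch of what `nn.BatchNorm2d` computes in training mode, assuming a freshly constructed layer (scale 1, shift 0). The tensor sizes and the names `x`, `bn`, and `manual` are made up for illustration.

```python
import torch
import torch.nn as nn

torch.manual_seed(0)

# Hypothetical batch: 8 images, 4 channels, 5x5 pixels.
x = torch.randn(8, 4, 5, 5) * 3.0 + 2.0

bn = nn.BatchNorm2d(4)  # one learnable scale (weight) and shift (bias) per channel
bn.train()              # training mode: normalize with the statistics of this batch
y = bn(x)

# The same computation by hand: mean and biased variance over (N, H, W) for each channel.
mean = x.mean(dim=(0, 2, 3), keepdim=True)
var = x.var(dim=(0, 2, 3), unbiased=False, keepdim=True)
manual = (x - mean) / torch.sqrt(var + bn.eps)

print(torch.allclose(y, manual, atol=1e-5))  # compares the layer output with the manual version
print(bn.weight.shape, bn.bias.shape)        # one scale and one shift entry per channel
```

Each channel is normalized with its own mean and variance, which is why the layer takes the channel count as its only required argument.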

class BaseRestBlock_Downsample(nn.Module):
    def __init__(self, in_channels, out_channels, stride):
        super(BaseRestBlock_Downsample, self).__init__()
        # Convolution with stride=2: the spatial size halves and the channel count doubles.
        self.conv1 = nn.Conv2d(in_channels, out_channels, kernel_size=3, stride=stride[0], padding=1, bias=False)
        self.bn1 = nn.BatchNorm2d(out_channels)  # normalizes each channel over the batch and spatial dimensions
        self.relu = nn.ReLU(inplace=True)
        self.conv2 = nn.Conv2d(out_channels, out_channels, kernel_size=3, stride=stride[1], padding=1, bias=False)
        self.bn2 = nn.BatchNorm2d(out_channels)  # BatchNorm2d has a learnable scale and shift per channel
        # conv1 halves the spatial size (stride[0] = 2); conv2 keeps it (stride[1] = 1).
        self.stride = stride
        self.downsample = nn.Sequential(
            # Shortcut downsampling: 1x1 kernel, no padding, stride 2, so the spatial size halves.
            # The downsampling is done with a strided convolution rather than pooling.
            nn.Conv2d(in_channels, out_channels, kernel_size=1, stride=stride[0], padding=0, bias=False),
            nn.BatchNorm2d(out_channels)
        )

    def forward(self, x):
        residual = x
        residual = self.downsample(residual)  # spatial size halves, channels match the main path
        output = self.conv1(x)
        output = self.relu(self.bn1(output))
        output = self.conv2(output)
        output = self.bn2(output)  # second BN layer (the original reused bn1 here)
        output += residual
        return torch.relu(output)
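The addition in the downsampling block only works if the main path and the shortcut produce the same shape. The sketch below checks that for the first downsampling block, assuming a 56x56 input with 64 channels (the size is an assumption for illustration, as are the names `main` and `shortcut`). BN and ReLU are left out because they do not change shapes.

```python
import torch
import torch.nn as nn

x = torch.randn(1, 64, 56, 56)  # hypothetical input to the first downsampling block

# Main path: 3x3 conv with stride 2, then 3x3 conv with stride 1 (padding 1 in both).
main = nn.Sequential(
    nn.Conv2d(64, 128, kernel_size=3, stride=2, padding=1, bias=False),
    nn.Conv2d(128, 128, kernel_size=3, stride=1, padding=1, bias=False),
)
# Shortcut: 1x1 conv with stride 2 and no padding.
shortcut = nn.Conv2d(64, 128, kernel_size=1, stride=2, padding=0, bias=False)

main_out = main(x)
shortcut_out = shortcut(x)
print(main_out.shape)      # torch.Size([1, 128, 28, 28])
print(shortcut_out.shape)  # torch.Size([1, 128, 28, 28])
```

Both paths halve the spatial size and double the channel count, so the element-wise sum is well defined.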

class Resnet_18(nn.Module):
    def __init__(self):
        super(Resnet_18, self).__init__()
        # Conv output size: floor((W - F + 2P) / stride) + 1
        # 7x7 kernel with padding=3; stride=2 halves the spatial size.
        self.conv1 = nn.Conv2d(3, 64, kernel_size=7, stride=2, padding=3, bias=False)
        self.bn1 = nn.BatchNorm2d(64)
        self.relu = nn.ReLU(inplace=True)
        # padding=1 matches the 3x3 pooling kernel; stride=2 halves the spatial size.
        self.maxpool = nn.MaxPool2d(kernel_size=3, stride=2, padding=1)
        # Each layer consists of two residual blocks.
        self.layer1 = nn.Sequential(ResNetBasicBlock(64, 64, 1),  # spatial size unchanged
                                    ResNetBasicBlock(64, 64, 1))  # two 3x3 convs, 64 -> 64, in each block
        self.layer2 = nn.Sequential(BaseRestBlock_Downsample(64, 128, [2, 1]),  # channels double, spatial size halves
                                    ResNetBasicBlock(128, 128, 1))
        self.layer3 = nn.Sequential(BaseRestBlock_Downsample(128, 256, [2, 1]),  # channels double, spatial size halves
                                    ResNetBasicBlock(256, 256, 1))
        self.layer4 = nn.Sequential(BaseRestBlock_Downsample(256, 512, [2, 1]),  # channels double, spatial size halves
                                    ResNetBasicBlock(512, 512, 1))
        self.avgpool = nn.AdaptiveAvgPool2d(output_size=(1, 1))  # average pooling to a (1, 1) spatial output
        self.fc = nn.Linear(512, 1000, bias=True)  # the (1, 1) pooling fixes the number of input features at 512

    def forward(self, x):
        # Stem: 7x7 conv, BN, ReLU, max pooling. The original forward skipped these,
        # so layer1 would have received a 3-channel input instead of 64 channels.
        output = self.conv1(x)
        output = self.relu(self.bn1(output))
        output = self.maxpool(output)
        output = self.layer1(output)
        output = self.layer2(output)
        output = self.layer3(output)
        output = self.layer4(output)
        output = self.avgpool(output)
        batch_size = output.shape[0]
        output = output.reshape(batch_size, -1)  # flatten to (batch_size, 512)
        output = self.fc(output)
        return output
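The size formula in the `Resnet_18.__init__` comment can be traced through the network by hand. The sketch below assumes a 224x224 input, which the text does not state, and only follows the first strided operation of each stage. The helper `conv_out_size` and the `stages` list are illustrative names.

```python
def conv_out_size(w, f, p, s):
    """Output size along one spatial dimension: floor((W - F + 2P) / S) + 1."""
    return (w - f + 2 * p) // s + 1

# (name, kernel size F, padding P, stride S) for the operation that sets each stage's size.
stages = [
    ("conv1",   7, 3, 2),
    ("maxpool", 3, 1, 2),
    ("layer1",  3, 1, 1),
    ("layer2",  3, 1, 2),
    ("layer3",  3, 1, 2),
    ("layer4",  3, 1, 2),
]

size = 224  # assumed input resolution
trace = [size]
for name, f, p, s in stages:
    size = conv_out_size(size, f, p, s)
    trace.append(size)
    print(f"{name}: {size}x{size}")

print(trace)  # [224, 112, 56, 56, 28, 14, 7]
```

Under that assumption the feature map entering `avgpool` is 7x7 with 512 channels, and adaptive pooling reduces it to 1x1 regardless of the input resolution.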

resnet18 = Resnet_18()  # 18 weighted layers: 17 conv layers on the main path plus 1 fully connected layer

print(resnet18)

Output:

Resnet_18(
  (conv1): Conv2d(3, 64, kernel_size=(7, 7), stride=(2, 2), padding=(3, 3), bias=False)
  (bn1): BatchNorm2d(64, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)
  (relu): ReLU(inplace=True)
  (maxpool): MaxPool2d(kernel_size=3, stride=2, padding=1, dilation=1, ceil_mode=False)
  (layer1): Sequential(
    (0): ResNetBasicBlock(
      (conv1): Conv2d(64, 64, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False)
      (bn1): BatchNorm2d(64, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)
      (relu): ReLU(inplace=True)
      (conv2): Conv2d(64, 64, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False)
      (bn2): BatchNorm2d(64, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)
    )
    (1): ResNetBasicBlock(
      (conv1): Conv2d(64, 64, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False)
      (bn1): BatchNorm2d(64, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)
      (relu): ReLU(inplace=True)
      (conv2): Conv2d(64, 64, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False)
      (bn2): BatchNorm2d(64, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)
    )
  )
  (layer2): Sequential(
    (0): BaseRestBlock_Downsample(
      (conv1): Conv2d(64, 128, kernel_size=(3, 3), stride=(2, 2), padding=(1, 1), bias=False)
      (bn1): BatchNorm2d(128, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)
      (relu): ReLU(inplace=True)
      (conv2): Conv2d(128, 128, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False)
      (bn2): BatchNorm2d(128, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)
      (downsample): Sequential(
        (0): Conv2d(64, 128, kernel_size=(1, 1), stride=(2, 2), bias=False)
        (1): BatchNorm2d(128, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)
      )
    )
    (1): ResNetBasicBlock(
      (conv1): Conv2d(128, 128, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False)
      (bn1): BatchNorm2d(128, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)
      (relu): ReLU(inplace=True)
      (conv2): Conv2d(128, 128, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False)
      (bn2): BatchNorm2d(128, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)
    )
  )
  (layer3): Sequential(
    (0): BaseRestBlock_Downsample(
      (conv1): Conv2d(128, 256, kernel_size=(3, 3), stride=(2, 2), padding=(1, 1), bias=False)
      (bn1): BatchNorm2d(256, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)
      (relu): ReLU(inplace=True)
      (conv2): Conv2d(256, 256, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False)
      (bn2): BatchNorm2d(256, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)
      (downsample): Sequential(
        (0): Conv2d(128, 256, kernel_size=(1, 1), stride=(2, 2), bias=False)
        (1): BatchNorm2d(256, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)
      )
    )
    (1): ResNetBasicBlock(
      (conv1): Conv2d(256, 256, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False)
      (bn1): BatchNorm2d(256, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)
      (relu): ReLU(inplace=True)
      (conv2): Conv2d(256, 256, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False)
      (bn2): BatchNorm2d(256, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)
    )
  )
  (layer4): Sequential(
    (0): BaseRestBlock_Downsample(
      (conv1): Conv2d(256, 512, kernel_size=(3, 3), stride=(2, 2), padding=(1, 1), bias=False)
      (bn1): BatchNorm2d(512, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)
      (relu): ReLU(inplace=True)
      (conv2): Conv2d(512, 512, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False)
      (bn2): BatchNorm2d(512, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)
      (downsample): Sequential(
        (0): Conv2d(256, 512, kernel_size=(1, 1), stride=(2, 2), bias=False)
        (1): BatchNorm2d(512, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)
      )
    )
    (1): ResNetBasicBlock(
      (conv1): Conv2d(512, 512, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False)
      (bn1): BatchNorm2d(512, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)
      (relu): ReLU(inplace=True)
      (conv2): Conv2d(512, 512, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False)
      (bn2): BatchNorm2d(512, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)
    )
  )
  (avgpool): AdaptiveAvgPool2d(output_size=(1, 1))
  (fc): Linear(in_features=512, out_features=1000, bias=True)
)
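The "18" in the name can be tallied from the printed structure above. The sketch below is just that arithmetic, with illustrative variable names. It follows the usual convention of counting weighted layers on the main path and leaving out the 1x1 shortcut convolutions.

```python
stem_convs = 1        # the 7x7 conv1
stages = 4            # layer1 .. layer4
blocks_per_stage = 2  # two residual blocks in each layer
convs_per_block = 2   # conv1 and conv2 inside every block

main_path_convs = stem_convs + stages * blocks_per_stage * convs_per_block
fc_layers = 1         # the final nn.Linear
shortcut_convs = 3    # 1x1 downsample convs in layer2, layer3, layer4

print(main_path_convs)                   # 17
print(main_path_convs + fc_layers)       # 18 weighted layers, hence "ResNet-18"
print(main_path_convs + shortcut_convs)  # 20 Conv2d modules in the printout
```

So the printout contains 20 `Conv2d` entries in total, of which 17 sit on the main path.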