Scraping COVID-19 data with Python into MySQL, then visualizing it

Scraping COVID-19 data into MySQL with Python

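After repeated urging from members of my group, I decided to write up and share how I built this scraper. This is my first CSDN post, so please judge the content and go easy on the formatting.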

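The main steps are:

1. Scrape the epidemic data

2. Create the MySQL tables

3. Truncate the tables and insert the data

4. Visualize the data based on the table relationships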

1. Scrape the epidemic data

Data source: without further ado, here is the link: [DXY (丁香园) COVID-19 live data page](https://ncov.dxy.cn/ncovh5/view/pneumonia?from=timeline&isappinstalled=0)

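I had previously scraped real-time data from Baidu and similar sites, and found that much of the data is embedded in JavaScript inside script tags. So once we have fetched the page, we can use bs (BeautifulSoup) to filter for the right script tag and pull the data out of it.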
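Even after BeautifulSoup has found the right script tag, its text is still JavaScript rather than pure JSON. Below is a minimal sketch of stripping that wrapper, assuming the script body looks like `try { window.getAreaStat = [...]}catch(e){}` (an assumption consistent with the fixed-offset slicing in the original script; the page may have changed since). `extract_js_array` and the sample string are hypothetical:

```python
import json


def extract_js_array(script_text):
    """Parse the JSON array out of a script body such as
    'try { window.getAreaStat = [...]}catch(e){}'.

    Slicing from the first '[' to the last ']' tolerates changes in the
    variable name or whitespace, unlike fixed offsets such as [52:-21].
    """
    start = script_text.index('[')      # first '[' opens the array
    end = script_text.rindex(']') + 1   # last ']' closes it
    return json.loads(script_text[start:end])


# Hypothetical script body, shaped like the one assumed above
sample = 'try { window.getAreaStat = [{"provinceShortName": "湖北", "confirmedCount": 1}]}catch(e){}'
rows = extract_js_array(sample)
print(rows[0]['provinceShortName'], rows[0]['confirmedCount'])  # 湖北 1
```

This only works while the text before the array contains no `[` (for example `window['x'] = ...` would break it), so it is a convenience, not a general JavaScript parser.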

Straight to the substance; here is the code first:

```python
import json

import pymysql
import requests
from bs4 import BeautifulSoup

url = 'https://ncov.dxy.cn/ncovh5/view/pneumonia?from=timeline&isappinstalled=0'  # request URL
headers = {
    'user-agent': 'Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 '
                  '(KHTML, like Gecko) Chrome/74.0.3729.131 Safari/537.36'
}  # request headers

response = requests.get(url, headers=headers)  # send the HTTP request
response.raise_for_status()
content = response.content.decode('utf-8')     # page source as text
soup = BeautifulSoup(content, 'html.parser')


def script_json(script_id):
    """Parse the JSON array stored in <script id=script_id>.

    The script body looks like `try { window.xxx = [...]}catch(e){}`, so cut
    from the first '[' to the last ']' rather than using fixed offsets such as
    [52:-21], which break as soon as the wrapper text changes.
    """
    text = soup.find('script', id=script_id).string
    return json.loads(text[text.index('['):text.rindex(']') + 1])


messages_json = script_json('getAreaStat')                         # provinces and cities in China
world_messages_json = script_json('getListByCountryTypeService2')  # worldwide confirmed cases

# Field names found while debugging; tuple order must match the table column order
world_fields = ('id', 'createTime', 'modifyTime', 'tags', 'countryType', 'continents',
                'provinceId', 'provinceName', 'provinceShortName', 'cityName',
                'currentConfirmedCount', 'confirmedCount', 'suspectedCount',
                'curedCount', 'deadCount', 'locationId', 'countryShortCode')
province_fields = ('provinceName', 'provinceShortName', 'currentConfirmedCount',
                   'confirmedCount', 'suspectedCount', 'curedCount', 'deadCount',
                   'comment', 'locationId', 'statisticsData')
city_fields = ('cityName', 'currentConfirmedCount', 'confirmedCount', 'suspectedCount',
               'curedCount', 'deadCount', 'locationId')

worldList = [tuple(country.get(f) for f in world_fields) for country in world_messages_json]
valuesList = []  # rows for province_map
cityList = []    # rows for city_map
for province in messages_json:
    valuesList.append(tuple(province.get(f) for f in province_fields))
    # each city row ends with its province's short name, which links city_map to province_map
    for city in province.get('cities') or []:
        cityList.append(tuple(city.get(f) for f in city_fields)
                        + (province.get('provinceShortName'),))

# PyMySQL 1.0+ accepts only keyword arguments here
db = pymysql.connect(host='localhost', user='root', password='your_password',
                     database='your_db_name', charset='utf8')
cursor = db.cursor()

sql = "INSERT INTO province_map VALUES (%s,%s,%s,%s,%s,%s,%s,%s,%s,%s)"
sql1 = "INSERT INTO city_map VALUES (%s,%s,%s,%s,%s,%s,%s,%s)"
sql_world = "INSERT INTO world_map VALUES (%s,%s,%s,%s,%s,%s,%s,%s,%s,%s,%s,%s,%s,%s,%s,%s,%s)"

# Each step gets its own try block, so a failing step is rolled back
# without stopping the remaining steps.
steps = [
    ("TRUNCATE TABLE province_map", None),
    ("TRUNCATE TABLE city_map", None),
    (sql, valuesList),
    (sql1, cityList),
    # world_map is prepared but not loaded; enable these once you have built the table:
    # ("TRUNCATE TABLE world_map", None),
    # (sql_world, worldList),
]
for step, (statement, rows) in enumerate(steps, start=1):
    try:
        if rows is None:
            cursor.execute(statement)
        else:
            cursor.executemany(statement, rows)
        db.commit()
    except pymysql.MySQLError as e:
        print(f'Execution failed, rolling back (step {step}): {e}')
        db.rollback()

db.close()
```

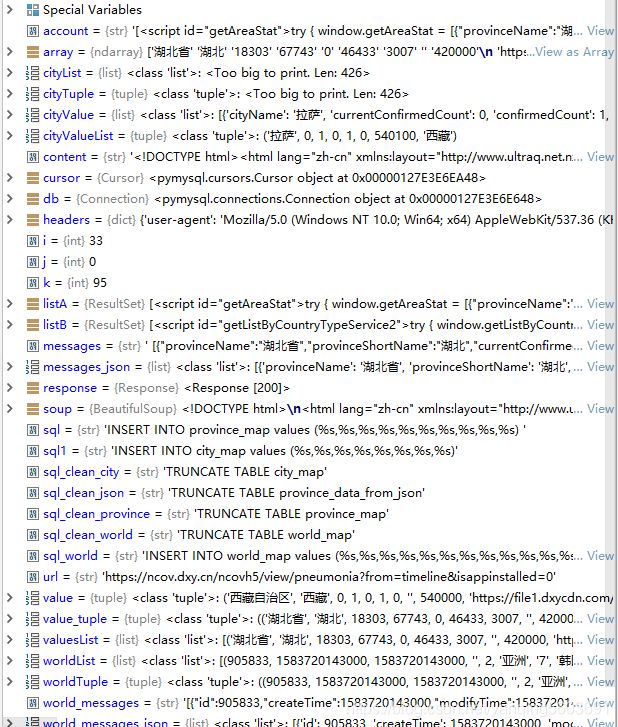

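That is the complete code, but a few things in it need explaining first. There are three tables (province_map, city_map and world_map). First debug the scraped data and print out its field names, then create the table structures from those field names, and finally insert the data into the tables.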
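To print the field names while debugging, one option is to collect every key across all parsed records, since a single record is not guaranteed to carry every key. A minimal sketch; `field_names` and the sample records are hypothetical:

```python
import json


def field_names(records):
    """Return every key seen across a list of JSON objects, in first-seen order."""
    names = {}
    for record in records:
        for key in record:
            names.setdefault(key, None)  # dicts keep insertion order (Python 3.7+)
    return list(names)


# Hypothetical records shaped like entries of getAreaStat
records = json.loads('[{"provinceName": "湖北省", "cities": []},'
                     ' {"provinceName": "上海市", "comment": ""}]')
print(field_names(records))  # ['provinceName', 'cities', 'comment']
```

Keys whose values are nested lists, such as `cities`, are the ones that end up in their own table (city_map) rather than as a column.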

2. Create the MySQL table structure

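The database layout is shown below:

The fields of the city_map table, with a partial screenshot:

The fields of the province_map table, with a partial screenshot of the data: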

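The world_map fields were not fully captured in my screenshot, so I suggest building the first two tables first, then using them together with the code I provided to work out the field names and create this table yourself as an exercise. You can also join the Python study group 771689878, where I am an admin.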

I won't export and upload the actual SQL file. All fields are of type varchar, and no primary or foreign keys are needed.
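Because every column is varchar with no keys, the CREATE TABLE statements can be generated from the field-name lists instead of being typed by hand. A sketch, assuming VARCHAR(255) is long enough for every value and that the column order follows the order of the tuples being inserted (the INSERT statements use positional VALUES, so order matters). `create_table_sql` is a hypothetical helper:

```python
def create_table_sql(table, columns, length=255):
    """Build a MySQL CREATE TABLE statement where every column is VARCHAR,
    with no primary or foreign keys."""
    cols = ',\n  '.join(f'`{name}` VARCHAR({length})' for name in columns)
    return f'CREATE TABLE IF NOT EXISTS `{table}` (\n  {cols}\n) DEFAULT CHARSET=utf8mb4'


# Columns in the same order as the city_map tuples built by the scraper
city_columns = ['cityName', 'currentConfirmedCount', 'confirmedCount', 'suspectedCount',
                'curedCount', 'deadCount', 'locationId', 'provinceShortName']
print(create_table_sql('city_map', city_columns))
```

The resulting string can be passed to `cursor.execute(...)` once; `IF NOT EXISTS` makes rerunning it harmless.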

3. Truncate the tables and insert the data

This part lives entirely in the code above; look for the lines containing SQL and it is easy to follow.

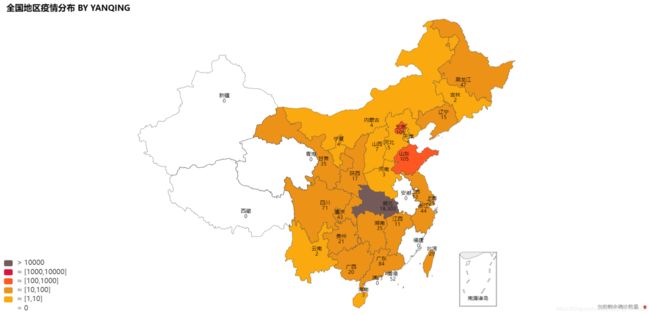
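One caveat: in MySQL, TRUNCATE TABLE causes an implicit commit, so it cannot be rolled back, and if the following insert fails the table stays empty. A sketch of an alternative that refreshes a table atomically with DELETE plus insert in a single transaction. It uses Python's built-in sqlite3 purely as a stand-in so it runs without a MySQL server (sqlite3 uses `?` placeholders where PyMySQL uses `%s`); `refresh_table` and the rows are hypothetical:

```python
import sqlite3


def refresh_table(conn, table, rows, n_cols):
    """Replace all rows of `table` in one transaction.

    DELETE can be rolled back, so a failed insert leaves the old data in place.
    `table` is interpolated directly, so it must be a trusted constant.
    """
    placeholders = ','.join('?' * n_cols)  # e.g. '?,?' for two columns
    try:
        with conn:  # commits on success, rolls back on exception
            conn.execute(f'DELETE FROM {table}')
            conn.executemany(f'INSERT INTO {table} VALUES ({placeholders})', rows)
    except sqlite3.Error as e:
        print(f'refresh of {table} failed, old data kept: {e}')
        return False
    return True


conn = sqlite3.connect(':memory:')
conn.execute('CREATE TABLE city_map (cityName TEXT, confirmedCount TEXT)')
refresh_table(conn, 'city_map', [('武汉', '1')], 2)
# A bad batch (wrong column count) fails, and the DELETE is rolled back too
ok = refresh_table(conn, 'city_map', [('黄冈',)], 2)
print(ok, conn.execute('SELECT * FROM city_map').fetchall())  # False [('武汉', '1')]
```

With PyMySQL the same pattern is `DELETE FROM ...`, `executemany`, then `db.commit()`, with `db.rollback()` in the except branch; it relies on a transactional engine such as InnoDB.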

4. Visualize the data based on the table relationships

This is a map visualization of all of China; the colors loosely imitate Baidu's hex color scheme.

Here is a link where you can open the China map above:
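The map fills each region with a hex color according to its case count. The author's actual palette and thresholds are not given, so this is only a sketch with made-up thresholds and colors (not Baidu's real scheme). Since all columns are varchar, the counts read back from MySQL are strings, hence the `int()`:

```python
# Hypothetical scale: (lower_bound, color), checked from the highest bound down
COLOR_SCALE = [
    (10000, '#8B0000'),
    (1000, '#C62828'),
    (100, '#EF5350'),
    (10, '#FFAB91'),
    (1, '#FFE0B2'),
]
NO_CASES = '#FFFFFF'


def color_for(count):
    """Map a confirmed-case count to a hex fill color for the map."""
    for lower, color in COLOR_SCALE:
        if count >= lower:
            return color
    return NO_CASES


# Made-up (provinceShortName, confirmedCount) rows as they would come from province_map
rows = [('湖北', '60000'), ('上海', '300'), ('西藏', '1')]
fills = {name: color_for(int(count)) for name, count in rows}
print(fills)  # {'湖北': '#8B0000', '上海': '#EF5350', '西藏': '#FFE0B2'}
```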

This is the world map:

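And a chart of how case counts in Shanghai have fluctuated. Because I scraped the Shanghai data a long time ago, the map data has not been updated recently; if you need it updated, find me (梦醒暮晨曦) in the group.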
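The scraped counts are cumulative snapshots, and because the tables are truncated on every run, a fluctuation chart needs snapshots saved from earlier runs. Assuming you have kept such a date-ordered series (how it is stored is up to you), a minimal sketch of turning it into day-over-day changes; `daily_changes` and the series are hypothetical:

```python
def daily_changes(series):
    """Turn a date-ordered list of (date, cumulative_count) pairs into
    (date, change_since_previous_snapshot) pairs; the first date has no change."""
    return [(date, count - prev) for (_, prev), (date, count) in zip(series, series[1:])]


# Made-up cumulative confirmed counts for Shanghai
shanghai = [('2020-02-20', 300), ('2020-02-21', 320), ('2020-02-22', 318)]
print(daily_changes(shanghai))  # [('2020-02-21', 20), ('2020-02-22', -2)]
```

A negative change usually means the source revised an earlier figure, which is worth checking before plotting.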

Finally, a reminder: learn to discover data. While debugging you can inspect a great deal of it; how you extract it is up to you.

Data you can inspect at runtime:
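Besides stepping through in a debugger, a quick way to discover what a nested JSON payload contains is to print every leaf path with its type. A minimal sketch; `json_paths` is a hypothetical helper, and lists are summarized by their first element only, which is enough to see the shape:

```python
import json


def json_paths(value, path='$'):
    """Yield (path, type_name) for every leaf in a nested JSON value."""
    if isinstance(value, dict):
        for key, child in value.items():
            yield from json_paths(child, f'{path}.{key}')
    elif isinstance(value, list) and value:
        yield from json_paths(value[0], f'{path}[]')  # inspect the first element only
    else:
        yield path, type(value).__name__


# Hypothetical payload shaped like getAreaStat
sample = json.loads('[{"provinceName": "上海市", "cities": [{"cityName": "浦东", "deadCount": 0}]}]')
for p, t in json_paths(sample):
    print(p, t)
```

Running it on `messages_json` from the scraper lists paths such as `$[].cities[].cityName`, which map directly onto the `.get(...)` calls used to build the rows.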