PyTorch study notes --- Training and applying a mask-recognition model, part 2

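In this exercise we pick an image-classification model and retrain it to obtain a new model. The model starts from ImageNet-pretrained weights (`pretrained=True` below), so this is fine-tuning rather than training from scratch. We fine-tune it on our earlier mask-detection dataset to get a classifier that recognizes whether a person is wearing a mask.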

To balance accuracy against speed, we chose ResNet-18.

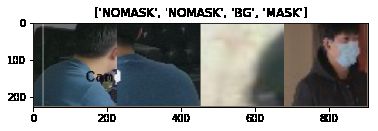

The dataset is split into `train` and `val`, with three classes:

- BG: background
- MASK: wearing a mask
- NOMASK: not wearing a mask
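`datasets.ImageFolder` expects one subfolder per class under each split. It assigns label indices by sorting the folder names, which is why the first training sample prints with label `0` (BG). The sketch below assumes a layout such as `data/mask/train/BG/...` and reproduces that sorted mapping with the standard library only. It mirrors torchvision's behaviour but is not torchvision's code, and the helper names are hypothetical.

```python
import os
import tempfile


def make_layout(root, splits=("train", "val"), classes=("NOMASK", "BG", "MASK")):
    # Create an empty folder tree such as root/train/BG, root/val/MASK, ...
    for split in splits:
        for cls in classes:
            os.makedirs(os.path.join(root, split, cls), exist_ok=True)


def class_to_idx(split_dir):
    # ImageFolder-style mapping: subdirectory names, sorted alphabetically,
    # become class indices 0, 1, 2, ...
    classes = sorted(e.name for e in os.scandir(split_dir) if e.is_dir())
    return {cls: i for i, cls in enumerate(classes)}


root = os.path.join(tempfile.mkdtemp(), "mask")
make_layout(root)
print(class_to_idx(os.path.join(root, "train")))  # {'BG': 0, 'MASK': 1, 'NOMASK': 2}
```

The creation order of the folders does not matter; only their names do. This is why `class_names` is read back from `image_datasets['train'].classes` rather than assumed.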

%matplotlib inline

# Import the required libraries
from __future__ import print_function, division

import torch
import torch.nn as nn
import torch.optim as optim
from torch.optim import lr_scheduler
import numpy as np
import torchvision
from torchvision import datasets, models, transforms
import matplotlib.pyplot as plt
import time
import os
import copy

plt.ion()  # interactive mode

# Load the dataset
# train: random crop + horizontal flip for augmentation; val: deterministic resize + center crop
data_transforms = {
    'train': transforms.Compose([
        transforms.RandomResizedCrop(224),
        transforms.RandomHorizontalFlip(),
        transforms.ToTensor(),
        transforms.Normalize([0.485, 0.456, 0.406], [0.229, 0.224, 0.225])
    ]),
    'val': transforms.Compose([
        transforms.Resize(256),
        transforms.CenterCrop(224),
        transforms.ToTensor(),
        transforms.Normalize([0.485, 0.456, 0.406], [0.229, 0.224, 0.225])
    ]),
}

data_dir = 'data/mask'
image_datasets = {x: datasets.ImageFolder(os.path.join(data_dir, x),
                                          data_transforms[x])
                  for x in ['train', 'val']}
dataloaders = {x: torch.utils.data.DataLoader(image_datasets[x], batch_size=4,
                                             shuffle=True, num_workers=4)
               for x in ['train', 'val']}
dataset_sizes = {x: len(image_datasets[x]) for x in ['train', 'val']}
class_names = image_datasets['train'].classes

device = torch.device("cuda:0" if torch.cuda.is_available() else "cpu")

print(image_datasets['train'][0])
print(class_names)

(tensor([[[ 0.1768, 0.1768, 0.1768, ..., 0.3138, 0.3138, 0.3138],
          [ 0.1768, 0.1768, 0.1768, ..., 0.3138, 0.3138, 0.3138],
          [ 0.1768, 0.1768, 0.1768, ..., 0.3138, 0.3138, 0.3138],
          ...,
          [-0.7479, -0.7479, -0.7479, ..., 0.7077, 0.7077, 0.7077],
          [-0.7479, -0.7479, -0.7479, ..., 0.7077, 0.7077, 0.7077],
          [-0.7479, -0.7479, -0.7479, ..., 0.7077, 0.7077, 0.7077]],

         [[ 0.2577, 0.2577, 0.2577, ..., 0.3277, 0.3277, 0.3277],
          [ 0.2577, 0.2577, 0.2577, ..., 0.3277, 0.3277, 0.3277],
          [ 0.2577, 0.2577, 0.2577, ..., 0.3277, 0.3277, 0.3277],
          ...,
          [-0.6001, -0.6001, -0.6001, ..., 0.9230, 0.9230, 0.9230],
          [-0.6001, -0.6001, -0.6001, ..., 0.9230, 0.9230, 0.9230],
          [-0.6001, -0.6001, -0.6001, ..., 0.9230, 0.9230, 0.9230]],

         [[ 0.2173, 0.2173, 0.2173, ..., 0.2696, 0.2696, 0.2696],
          [ 0.2173, 0.2173, 0.2173, ..., 0.2696, 0.2696, 0.2696],
          [ 0.2173, 0.2173, 0.2173, ..., 0.2696, 0.2696, 0.2696],
          ...,
          [-0.4624, -0.4624, -0.4624, ..., 1.1237, 1.1237, 1.1237],
          [-0.4624, -0.4624, -0.4624, ..., 1.1237, 1.1237, 1.1237],
          [-0.4624, -0.4624, -0.4624, ..., 1.1237, 1.1237, 1.1237]]]), 0)
['BG', 'MASK', 'NOMASK']
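The printed values are no longer in [0, 1] because of `transforms.Normalize(mean, std)`. After `ToTensor` scales each channel to [0, 1], `Normalize` maps every value `x` to `(x - mean) / std` per channel. As a worked check, an RGB pixel of (134, 131, 116) reproduces the first value of each channel above. That pixel value is back-calculated from the printed numbers, not read from the image file. The helper below is a hypothetical pure-Python sketch of the arithmetic, not torchvision's implementation.

```python
MEAN = (0.485, 0.456, 0.406)  # ImageNet channel means, as in data_transforms
STD = (0.229, 0.224, 0.225)   # ImageNet channel standard deviations


def normalize_pixel(rgb_u8):
    # ToTensor: uint8 [0, 255] -> float [0, 1]; Normalize: (x - mean) / std per channel
    return tuple((v / 255.0 - m) / s for v, m, s in zip(rgb_u8, MEAN, STD))


print(tuple(round(v, 4) for v in normalize_pixel((134, 131, 116))))  # (0.1768, 0.2577, 0.2173)
```

For the red channel this is (134/255 - 0.485) / 0.229 ≈ 0.0405 / 0.229 ≈ 0.1768. Negative values such as -0.7479 simply mean the pixel is darker than the ImageNet mean for that channel.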

# Visualize some images from the dataset
def imshow(inp, title=None):
    """Display a normalized (C, H, W) tensor as an image."""
    inp = inp.numpy().transpose((1, 2, 0))
    mean = np.array([0.485, 0.456, 0.406])
    std = np.array([0.229, 0.224, 0.225])
    inp = std * inp + mean
    inp = np.clip(inp, 0, 1)
    plt.imshow(inp)
    if title is not None:
        plt.title(title)
    plt.pause(0.001)  # pause a bit so that plots are updated


# Get a batch of training data
inputs, classes = next(iter(dataloaders['train']))

# Make a grid from batch
out = torchvision.utils.make_grid(inputs)

imshow(out, title=[class_names[x] for x in classes])
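`imshow` undoes the normalization before plotting. A tensor is laid out as `(C, H, W)`, while `plt.imshow` wants `(H, W, C)`. So the function transposes first and then applies `x * std + mean`, with NumPy broadcasting the 3-element arrays over the last axis. The NumPy-only sketch below checks that round trip on random data standing in for a real image. The two helper names are hypothetical.

```python
import numpy as np

MEAN = np.array([0.485, 0.456, 0.406])
STD = np.array([0.229, 0.224, 0.225])


def to_tensor_layout(img_hwc):
    # Mimic ToTensor + Normalize on a float image in [0, 1]: HWC -> CHW, then (x - mean) / std
    chw = img_hwc.transpose((2, 0, 1))
    return (chw - MEAN[:, None, None]) / STD[:, None, None]


def to_display_layout(chw):
    # What imshow does: CHW -> HWC, undo normalization, clip to the displayable [0, 1] range
    hwc = chw.transpose((1, 2, 0))
    return np.clip(STD * hwc + MEAN, 0, 1)


rng = np.random.default_rng(0)
img = rng.random((4, 5, 3))  # a tiny 4x5 RGB "image" with values in [0, 1)
restored = to_display_layout(to_tensor_layout(img))
print(restored.shape)              # (4, 5, 3)
print(np.allclose(restored, img))  # True
```

The `np.clip` call only matters for real batches. Interpolation in `RandomResizedCrop` and the padding added by `make_grid` can produce values that land slightly outside [0, 1] after de-normalization.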

# Main training function
def train_model(model, criterion, optimizer, scheduler, num_epochs=25):
    since = time.time()

    best_model_wts = copy.deepcopy(model.state_dict())
    best_acc = 0.0

    for epoch in range(num_epochs):
        print('Epoch {}/{}'.format(epoch, num_epochs - 1))
        print('-' * 10)

        # Each epoch has a training and validation phase
        for phase in ['train', 'val']:
            if phase == 'train':
                model.train()  # Set model to training mode
            else:
                model.eval()   # Set model to evaluate mode

            running_loss = 0.0
            running_corrects = 0

            # Iterate over data.
            for inputs, labels in dataloaders[phase]:
                inputs = inputs.to(device)
                labels = labels.to(device)

                # zero the parameter gradients
                optimizer.zero_grad()

                # forward
                # track history if only in train
                with torch.set_grad_enabled(phase == 'train'):
                    outputs = model(inputs)
                    _, preds = torch.max(outputs, 1)
                    loss = criterion(outputs, labels)

                    # backward + optimize only if in training phase
                    if phase == 'train':
                        loss.backward()
                        optimizer.step()

                # statistics
                running_loss += loss.item() * inputs.size(0)
                running_corrects += torch.sum(preds == labels.data)

            if phase == 'train':
                scheduler.step()

            epoch_loss = running_loss / dataset_sizes[phase]
            epoch_acc = running_corrects.double() / dataset_sizes[phase]

            print('{} Loss: {:.4f} Acc: {:.4f}'.format(
                phase, epoch_loss, epoch_acc))

            # deep copy the model
            if phase == 'val' and epoch_acc > best_acc:
                best_acc = epoch_acc
                best_model_wts = copy.deepcopy(model.state_dict())

        print()

    time_elapsed = time.time() - since
    print('Training complete in {:.0f}m {:.0f}s'.format(
        time_elapsed // 60, time_elapsed % 60))
    print('Best val Acc: {:.4f}'.format(best_acc))

    # load best model weights
    model.load_state_dict(best_model_wts)
    return model
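`nn.CrossEntropyLoss` returns the *mean* loss over a batch, so `train_model` multiplies it by `inputs.size(0)` before accumulating. Dividing by `dataset_sizes[phase]` then gives a true per-sample average, even when the last batch is smaller than `batch_size=4`. The same reasoning applies to `running_corrects`, which counts correct predictions sample by sample. The plain-Python check below uses made-up per-sample losses (hypothetical numbers, not from this training run).

```python
def epoch_mean_loss(batches):
    # batches: list of lists of per-sample losses, one inner list per mini-batch
    running_loss, n_samples = 0.0, 0
    for batch in batches:
        batch_mean = sum(batch) / len(batch)     # what CrossEntropyLoss reports
        running_loss += batch_mean * len(batch)  # loss.item() * inputs.size(0)
        n_samples += len(batch)
    return running_loss / n_samples              # running_loss / dataset_sizes[phase]


def naive_mean_of_batch_means(batches):
    # Averaging batch means directly over-weights a short final batch
    return sum(sum(b) / len(b) for b in batches) / len(batches)


# 9 samples with batch_size=4 -> batches of 4, 4 and 1
batches = [[0.2, 0.4, 0.6, 0.8], [0.1, 0.3, 0.5, 0.7], [2.0]]
print(round(epoch_mean_loss(batches), 4))            # 0.6222
print(round(naive_mean_of_batch_means(batches), 4))  # 0.9667
```

The weighted version equals the mean of all nine per-sample losses (5.6 / 9). The naive version lets the single-sample batch count as much as a full one.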

def visualize_model(model, num_images=6):
    # Plot num_images validation images, each titled with the model's predicted class
    was_training = model.training
    model.eval()
    images_so_far = 0
    fig = plt.figure()

    with torch.no_grad():
        for i, (inputs, labels) in enumerate(dataloaders['val']):
            inputs = inputs.to(device)
            labels = labels.to(device)

            outputs = model(inputs)
            _, preds = torch.max(outputs, 1)

            for j in range(inputs.size()[0]):
                images_so_far += 1
                ax = plt.subplot(num_images//2, 2, images_so_far)
                ax.axis('off')
                ax.set_title('predicted: {}'.format(class_names[preds[j]]))
                imshow(inputs.cpu().data[j])

                if images_so_far == num_images:
                    model.train(mode=was_training)
                    return
        model.train(mode=was_training)
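Both `train_model` and `visualize_model` turn raw logits into a label with `_, preds = torch.max(outputs, 1)`. This takes the index of the largest logit along the class dimension, and `class_names[preds[j]]` maps that index back to a folder name. Softmax is monotonic, so the same index is also the most probable class. The dependency-free sketch below uses made-up logits for one sample; the helper names are hypothetical.

```python
import math

CLASS_NAMES = ['BG', 'MASK', 'NOMASK']  # order printed by image_datasets['train'].classes


def softmax(logits):
    # Subtract the max for numerical stability before exponentiating
    m = max(logits)
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]


def predict_label(logits):
    # Single-sample equivalent of torch.max(outputs, 1): index of the largest logit
    idx = max(range(len(logits)), key=lambda i: logits[i])
    return CLASS_NAMES[idx], softmax(logits)[idx]


label, prob = predict_label([0.3, 2.1, -1.0])
print(label, round(prob, 3))  # MASK 0.826
```

The training loop never needs the probabilities, because `CrossEntropyLoss` applies log-softmax internally. They are only useful when a confidence score should be reported.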

# Define the model
# ImageNet-pretrained weights; in torchvision 0.13+ write weights=models.ResNet18_Weights.DEFAULT instead
model_ft = models.resnet18(pretrained=True)
num_ftrs = model_ft.fc.in_features
# Here the size of each output sample is set to 3 (BG, MASK, NOMASK).
# Alternatively, it can be generalized to nn.Linear(num_ftrs, len(class_names)).
model_ft.fc = nn.Linear(num_ftrs, 3)

model_ft = model_ft.to(device)

criterion = nn.CrossEntropyLoss()

# Observe that all parameters are being optimized
optimizer_ft = optim.SGD(model_ft.parameters(), lr=0.001, momentum=0.9)

# Decay LR by a factor of 0.1 every 7 epochs
exp_lr_scheduler = lr_scheduler.StepLR(optimizer_ft, step_size=7, gamma=0.1)
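`train_model` calls `scheduler.step()` once per epoch, after the training phase, with `step_size=7, gamma=0.1`. The learning rate used during (0-based) epoch `e` is therefore `0.001 * 0.1 ** (e // 7)`. The closed form below is a sketch for checking the schedule without building an optimizer. It matches `StepLR` only as long as nothing else modifies the optimizer's learning rate, and `step_lr` is a hypothetical helper name.

```python
BASE_LR, STEP_SIZE, GAMMA = 0.001, 7, 0.1  # values passed to SGD and StepLR above


def step_lr(epoch):
    # Learning rate in effect during a 0-based epoch under StepLR
    return BASE_LR * GAMMA ** (epoch // STEP_SIZE)


for epoch in (0, 6, 7, 13, 14, 21, 24):
    print(epoch, f"{step_lr(epoch):.0e}")
```

Over the 25 epochs used here the rate drops three times: 1e-3 for epochs 0-6, 1e-4 for 7-13, 1e-5 for 14-20, and 1e-6 for 21-24.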

# Start training
model_ft = train_model(model_ft, criterion, optimizer_ft, exp_lr_scheduler,
                       num_epochs=25)

Epoch 0/24
----------
/usr/local/lib/python3.6/site-packages/PIL/TiffImagePlugin.py:742: UserWarning: Corrupt EXIF data. Expecting to read 2 bytes but only got 0.
  warnings.warn(str(msg))
train Loss: 0.4244 Acc: 0.8462
val Loss: 0.1306 Acc: 0.9525

Epoch 1/24
----------
/usr/local/lib/python3.6/site-packages/PIL/TiffImagePlugin.py:742: UserWarning: Corrupt EXIF data. Expecting to read 2 bytes but only got 0.
  warnings.warn(str(msg))
train Loss: 0.2558 Acc: 0.9039
val Loss: 0.1194 Acc: 0.9591

Epoch 2/24
----------
/usr/local/lib/python3.6/site-packages/PIL/TiffImagePlugin.py:742: UserWarning: Corrupt EXIF data. Expecting to read 2 bytes but only got 0.
  warnings.warn(str(msg))
train Loss: 0.2167 Acc: 0.9183
val Loss: 0.1010 Acc: 0.9668

Epoch 3/24
----------
/usr/local/lib/python3.6/site-packages/PIL/TiffImagePlugin.py:742: UserWarning: Corrupt EXIF data. Expecting to read 2 bytes but only got 0.
  warnings.warn(str(msg))
train Loss: 0.1863 Acc: 0.9287

# Save the trained model (the best weights, as a state_dict)
PATH = './mask.pth'
torch.save(model_ft.state_dict(), PATH)

# Visually check the model's predictions on the validation set
visualize_model(model_ft)