- 通过TensorFlow实现简单深度学习模型(2)

yyc_audio

人工智能深度学习python机器学习

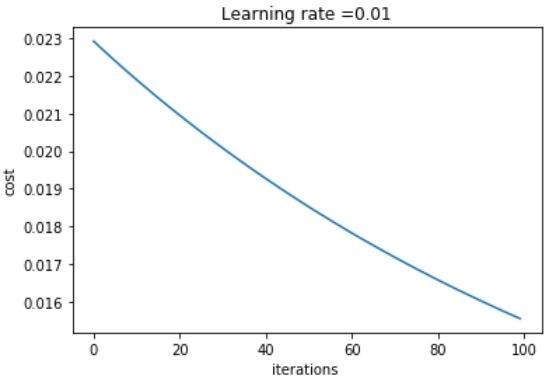

前文我们已经实现了对每批数据的训练,下面继续实现一轮完整的训练。完整的训练循环一轮训练就是对训练数据的每个批量都重复上述训练步骤,而完整的训练循环就是重复多轮训练。deffit(model,images,labels,epochs,batch_size=128):forepoch_counterinrange(epochs):print(f"Epoch{epoch_counter}")batch_
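The epoch-and-batch structure described in this excerpt can be sketched in plain Python. This is a minimal sketch, not the post's actual implementation: `iterate_batches` and the `train_step` callback are hypothetical stand-ins for whatever batching helper and per-batch training step the earlier post defined, and `fit` here takes `train_step` as an explicit argument, which the truncated original does not show.

```python
def iterate_batches(images, labels, batch_size=128):
    """Yield consecutive (images, labels) slices of at most batch_size items."""
    for start in range(0, len(images), batch_size):
        end = start + batch_size
        yield images[start:end], labels[start:end]


def fit(model, images, labels, epochs, train_step, batch_size=128):
    """Repeat the per-batch training step over every batch, for `epochs` epochs.

    train_step(model, images_batch, labels_batch) is a hypothetical callback
    that performs one parameter update and returns the batch loss.
    """
    epoch_losses = []
    for epoch_counter in range(epochs):
        print(f"Epoch {epoch_counter}")
        batch_losses = [
            train_step(model, images_batch, labels_batch)
            for images_batch, labels_batch in iterate_batches(images, labels, batch_size)
        ]
        # Record the mean batch loss of this epoch.
        epoch_losses.append(sum(batch_losses) / len(batch_losses))
    return epoch_losses


# Toy usage: the "model" is unused and the step only records each batch size.
seen_batch_sizes = []


def record_step(model, images_batch, labels_batch):
    seen_batch_sizes.append(len(images_batch))
    return 0.0


history = fit(None, list(range(300)), list(range(300)), epochs=2, train_step=record_step)
```

With 300 samples and a batch size of 128, each epoch visits two full batches and one final batch of 44 samples, so the last batch of an epoch may be smaller than `batch_size`.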

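- AI Exploration Notes: A Brief Look at Classifying AI Algorithms

安意诚Matrix

Machine learning notes, artificial intelligence, notes

Classifying AI algorithms. This is a classic diagram that broadly summarizes the current state of AI algorithms. It uses three concentric circles to show the containment relationship among artificial intelligence, machine learning, and deep learning: artificial intelligence is the broadest category, machine learning is a subset of it that focuses on data-driven improvement of algorithms, and deep learning is a specific approach within machine learning that learns with multi-layer neural networks. As the field has developed, however, this diagram no longer tells the whole story. I prefer to classify AI algorithms as follows: traditional machine learning algorithms - linear regression, logistic regression, support vec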

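- Autonomous Driving: BEVDet

maxruan

BEV autonomous driving, autonomous driving, artificial intelligence, machine learning

BEVDet consists of four main modules: 1. Image-view Encoder: an image feature extraction network made up of a backbone plus a neck. Typical backbones include ResNet and SwinTransformer; necks include FPN and PAFPN. For example, the input surround-view images, written as Tensor([bs, N, 3, H, W]), go through multi-scale feature extraction, where bs = batch size, N = the number of surround-view images,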

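- How to Handle the Error "UDT column batch insert" has not been implemented yet

Database

Symptom. In YashanDB, ST_GEOMETRY is a built-in user-defined type used to store and access geometry objects that conform to the SFA SQL standard defined by the Open Geospatial Consortium (OGC). During a batch insert (for example, insert into select, or importing data with yasldr), if the table has a column of type ST_GEOMETRY, the error YAS-00004 feature "UDT col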

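- Learning Machine Learning Classification Models from Scratch

可喜~可乐

Machine learning, learning, classification, artificial intelligence, data mining

This post walks you through a simple machine learning project that solves a common classification problem in Python. We will use the well-known Iris dataset to build a machine learning model that classifies flower species. The walkthrough covers: Principles: basic concepts of machine learning. Data loading and preprocessing: how to load the data and apply the necessary processing. Model training and evaluation: using a classic classification algorithm, logistic regression. Code walkthrough: a step-by-step analysis of the implementation. Extensions: how to optimize and extend the project. 1. Principles 1.1 Basic concepts of machine learning. Machine learning (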

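- AI and Machine Learning: Supervised Linear Models

rockfeng0

Artificial intelligence, machine learning, sklearn

1. Overview. This post studies linear models within the supervised learning framework of machine learning, using LinearRegression and LogisticRegression as examples to test and explain supervised linear models at the code level. 2. Supervised learning with linear models: introduction. Supervised learning and linear models are two important concepts in machine learning. Supervised learning is a machine learning task in which a model is trained on a labeled dataset. A linear model is a model that makes predictions from a linear combination of the input features. The basic form of a linear model can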

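- TensorFlow from Scratch: Xiao Ming's Machine Learning Story 4

山海青风

Machine learning, tensorflow, artificial intelligence

Exploring deep learning. 1. The story: Xiao Ming's inspiration. Until recently, Xiao Ming had been using traditional machine learning methods (such as linear regression and logistic regression) to predict the outcomes of school basketball games. His friends thought the results were already pretty good, but Xiao Ming noticed a problem: as the match data grew and the teams' features became more complex, the model's accuracy improved only slowly. One day, leafing through a magazine in the school library, he came across this sentence: "Just as the human brain has tens of billions of neurons, neural networks can learn complex mappings of information and so achieve outstanding performance." Inspiration struck at once: "Maybe I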

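- Spark Streaming Fault Tolerance Explained

goTsHgo

spark-streaming, big data, distributed systems

Spark Streaming is the module of the Spark ecosystem for processing real-time data streams. It uses micro-batching to split a live stream into slices, and each batch is in essence computed as a Spark batch job. To keep data accurate and the system reliable, Spark Streaming implements several fault-tolerance mechanisms, including data recovery, retry of failed tasks, and metadata recovery. Next, we explain in detail, from the underlying principles and the source code, how Spark Streaming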

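- Gradient Accumulation (with DDP) and Gradient Checkpointing

糖葫芦君

LLM algorithms, artificial intelligence, large models, deep learning

Gradient accumulation. Purpose: gradient accumulation is a technique for training neural networks, used mainly to handle large batch sizes when memory is limited. Larger batches usually improve training stability and efficiency, but GPU or TPU memory limits mean a large batch cannot be loaded all at once. Gradient accumulation accumulates gradients over several forward and backward passes and then updates the model parameters in a single step, which simulates the effect of large-batch training. Summary: Memory limits: GPU/TPU memory is limited, so a large batch cannot be loaded at once. Training stability: large-batch training usually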
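The claim that accumulated micro-batch gradients simulate one large batch can be checked numerically. The sketch below is an illustration under stated assumptions, not the post's DDP code: it uses a one-parameter model `y = w * x` with a mean-squared-error loss, equal-sized micro-batches, and hypothetical helper names (`mse_grad`, `accumulated_grad`). Each micro-batch gradient is scaled by `1 / steps` before being added, which is the usual way to keep the accumulated value equal to the mean over the full batch.

```python
def mse_grad(w, xs, ys):
    """Gradient of the mean squared error of y = w * x with respect to w."""
    n = len(xs)
    return sum(2.0 * (w * x - y) * x for x, y in zip(xs, ys)) / n


def accumulated_grad(w, xs, ys, micro_batch_size):
    """Accumulate scaled micro-batch gradients.

    Assumes len(xs) is an exact multiple of micro_batch_size.
    """
    steps = len(xs) // micro_batch_size
    total = 0.0
    for i in range(steps):
        lo = i * micro_batch_size
        hi = lo + micro_batch_size
        # Dividing by `steps` makes the sum equal the full-batch mean gradient.
        total += mse_grad(w, xs[lo:hi], ys[lo:hi]) / steps
    return total


xs = [1.0, 2.0, 3.0, 4.0, 5.0, 6.0, 7.0, 8.0]
ys = [2.0, 4.1, 5.9, 8.2, 9.8, 12.1, 14.0, 15.9]

full_batch = mse_grad(0.5, xs, ys)
accumulated = accumulated_grad(0.5, xs, ys, micro_batch_size=2)
```

Only one micro-batch has to be held in memory at a time, yet the single parameter update that follows uses the same gradient (up to floating-point rounding) as a batch of eight.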

- Tensorflow2.x: Extending the Neural Network Boilerplate: acc and loss Curves

诗雨时

acc/loss visualization: plotting how accuracy rises and the loss function falls. Contents: Abstract; 1. acc and loss curves; 2. Complete code. 1. acc and loss curves: history = model.fit(training data, training labels, batch_siz

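- TensorFlow from Scratch: Xiao Ming's Machine Learning Story 3

山海青风

Machine learning, tensorflow, artificial intelligence

Below is a learning story with Xiao Ming as the protagonist, in which he tries to use TensorFlow to predict the participation rate of campus events. Along the way the story introduces the principles of linear regression and logistic regression and includes the necessary code examples, so that you can understand them from scratch and try them hands-on. The post closes with a brief analysis and summary. Xiao Ming's first machine learning experiment. Scenario: predicting the participation rate of campus events. Xiao Ming recently joined the student union, where he is in charge of planning campus events. Every event needs a venue, promotional materials, and catering, but problems such as a venue that is too small or too few supplies come up often. To make event preparation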

- Convolutional Neural Network Boilerplate (1): Iris Classification in 20 Lines of Code

有幸添砖java

opencv

Contents: Introduction; building a neural network with the TensorFlow API tf.keras, the boilerplate; using Sequential; using compile; using fit (batch is the number of samples fed into the network each time, epoch

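- Neural Network Fundamentals (3)

SylviaW08

Neural networks, artificial intelligence, deep learning

1. What are vanishing and exploding gradients? A vanishing gradient means the gradient shrinks step by step during backpropagation until it approaches zero, so the weights of the earlier layers update slowly or not at all and those layers fail to learn useful features. An exploding gradient means the gradient grows step by step during backpropagation, so weight updates become large, the loss jumps up and down, and training is unstable. Remedies include suitable activation functions such as ReLU and Leaky ReLU, input normalization, and batch normalization, which keep neuron outputs within a reasonable range. 2. Why do we need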
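A small numeric sketch makes the shrinking and growing concrete. It assumes a deliberately simplified setting that is not from the post: a chain of scalar layers that all share the same weight and pre-activation, with a sigmoid activation, so the backpropagated gradient is multiplied by `weight * sigmoid'(z)` once per layer. The names `sigmoid_derivative` and `backprop_factor` are hypothetical.

```python
import math


def sigmoid_derivative(z):
    """Derivative of the sigmoid; its maximum value is 0.25, reached at z = 0."""
    s = 1.0 / (1.0 + math.exp(-z))
    return s * (1.0 - s)


def backprop_factor(weight, pre_activation, num_layers):
    """Product of the per-layer factors weight * sigmoid'(z) over num_layers layers."""
    return (weight * sigmoid_derivative(pre_activation)) ** num_layers


# Per-layer factor 1.0 * 0.25 = 0.25: the gradient vanishes after 20 layers.
vanishing = backprop_factor(weight=1.0, pre_activation=0.0, num_layers=20)

# Per-layer factor 8.0 * 0.25 = 2.0: the gradient explodes after 20 layers.
exploding = backprop_factor(weight=8.0, pre_activation=0.0, num_layers=20)
```

A per-layer factor below 1 drives the product toward zero and a factor above 1 makes it grow geometrically, which is why the remedies listed in the excerpt aim to keep activations and their derivatives in a moderate range.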

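- ACM Algorithms and Contest Base: Lanqiao Cup Prep --- Binary Search

NONE-C

Lanqiao Cup, algorithms, data structures

ACM Base: Lanqiao Cup prep, binary search. What is binary search? Binary search is a search strategy, similar to the gradient descent method taught in calculus: standing at a point, we follow the slope there to move toward the optimum. Binary search uses the same kind of strategy but is stricter. Modern algorithms such as simulated annealing, ant colony optimization, and backpropagation target problems with multiple optima and cover a broader range of problems, whereas binary search, as a traditional algorithm, comes with much tighter restrictions. To understand binary search you need a solid grasp of those restrictions. Next we begin our study of binary search: binary search + binary search on the answer. key1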
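The restriction this excerpt alludes to is usually monotonicity: the condition being tested must be false for every candidate up to some point and true for every candidate after it. The sketch below is a generic illustration under that assumption, not code from the post; `first_true` is a hypothetical helper that covers both plain binary search on a sorted list and binary search on the answer.

```python
def first_true(lo, hi, predicate):
    """Return the smallest x in [lo, hi) for which predicate(x) is True, or hi if none.

    Requires predicate to be monotonic over the range:
    False, ..., False, True, ..., True.
    """
    while lo < hi:
        mid = (lo + hi) // 2
        if predicate(mid):
            hi = mid        # mid may be the answer; keep it in range
        else:
            lo = mid + 1    # the answer lies strictly to the right of mid
    return lo


# Binary search on a sorted list: index of the first element >= 5.
sorted_values = [1, 3, 3, 5, 8, 13]
index_of_5 = first_true(0, len(sorted_values), lambda i: sorted_values[i] >= 5)

# Binary search on the answer: smallest integer whose square is at least 50.
smallest_root = first_true(0, 51, lambda x: x * x >= 50)
```

If the predicate is not monotonic, halving the range can discard the true answer, which is why the precondition matters more than the loop itself.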

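- PyTorch in Practice: A Step-by-Step Guide to MNIST Handwritten Digit Recognition

吴师兄大模型

PyTorch, pytorch, artificial intelligence, python, handwritten digit recognition, MNIST, deep learning, programming languages

Series contents. PyTorch basics: 01 - A must-read for PyTorch beginners: what is a tensor? Learn to create tensors in 5 minutes! 02 - Tensor operations made easy: a complete guide to numerical computation in PyTorch. 03 - NumPy or PyTorch? Handy tricks for converting between tensors and NumPy. 04 - A data-processing power tool revealed: practical techniques for concatenating and splitting PyTorch tensors. 05 - Deep learning starts with indexing: a complete walkthrough of PyTorch tensor indexing and slicing. 06 - Reshape tensors any way you like! PyTorch reshape, tra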

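- [YashanDB Knowledge Base] How to Handle the Error "UDT column batch insert"

Database, operations

Symptom. In YashanDB, ST_GEOMETRY is a built-in user-defined type used to store and access geometry objects that conform to the SFA SQL standard defined by the Open Geospatial Consortium (OGC). During a batch insert (for example, insert into select, or importing data with yasldr), if the table has a column of type ST_GEOMETRY, the error YAS-00004 feature "UDT col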

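- Logistic Regression Classification Python Example: Python Logistic Regression Principles and a Practical Case Study

Zcc四月

Logistic regression classification Python example

Preface. Contents: 1. Logistic regression 2. Pros, cons, and optimization issues 3. Practical case study 4. Summary. Main text. In the three regression models introduced earlier (linear regression, ridge regression, and Lasso regression), the output variable is continuous. For example, the usual linear regression model is: and its matrix form is: Here the output is a continuous variable, but in practice we sometimes need a discrete output, for example predicting from given features whether an employee will leave (1 means leaving, 0 means staying). Linear regression clearly cannot be used directly in this case, and this is where logistic regression comes in. 1. Logistic regression. Quoting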

- MongoDB#Optimizing Data Deletion

许心月

MongoDB, mongodb, database

Deleting in batches: `const batchSize = 10000; // delete 10,000 documents per batch let deletedCount = 0; do { const result = db.xxx_collection.deleteMany({ createTime: { $lt: new Date(Date.now() - 1 * 24 * 60 * 60 * 1000) } }, { limit: batchSize }); deletedCount = result.dele`

- MongoDB#Common Scripts

许心月

MongoDB, mongodb, database

Batch insert script: `const oneDayAgo = new Date(Date.now() - 1 * 24 * 60 * 60 * 1000); const documents = []; for (let i = 1; idoc.xxxId); // 2. Delete the matching documents in collection A const result = db.A.deleteMany({ xxxId: { $in: xxxIdsToDelete } }, { limit: batchSize } // delete at most b`

- 深度学习(5)-卷积神经网络

yyc_audio

深度学习cnn人工智能

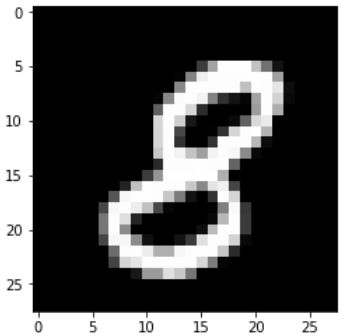

我们将深入理解卷积神经网络的原理,以及它为什么在计算机视觉任务上如此成功。我们先来看一个简单的卷积神经网络示例,它用干对MNIST数字进行分类。这个任务在第2章用密集连接网络做过,当时的测试精度约为97.8%。虽然这个卷积神经网络很简单,但其精度会超过第2章的密集连接模型。代码8-1给出了一个简单的卷积神经网络。它是conv2D层和MaxPooling2D层的堆叠,你很快就会知道这些层的作用。我们

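- Unlocking Core Machine Learning Algorithms | Naive Bayes: The Wisdom of Classification

紫雾凌寒

AI Alchemy Workshop, machine learning algorithms, machine learning, algorithms, classification, naive Bayes, python, deep learning, artificial intelligence

1. Introduction. Within the vast body of machine learning algorithms, ten are widely regarded as the most representative and practical; they are like the "ten great tools" of the field, each playing its own role. The ten are linear regression, logistic regression, decision trees, random forests, k-nearest neighbors, k-means, support vector machines, naive Bayes, principal component analysis (PCA), and neural networks. They span regression, classification, clustering, dimensionality reduction, and other machine learning tasks, and form the foundation and core of many machine learning applications. Among all of these, the naive Bayes algorithm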

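- [Choosing Machine Learning Algorithms: Classification and Regression] An Introduction to Common Classification Algorithms

云博士的AI课堂

Master machine learning with a Harvard postdoc, machine learning, classification, regression, classification and regression, choosing machine learning algorithms, deep learning, artificial intelligence

Section 2: Common classification algorithms. In machine learning, a classification algorithm is a tool for predicting which class a sample belongs to. In areas such as financial risk control, medical diagnosis, image recognition, and recommender systems, classification algorithms play a vital role. Each algorithm has its own strengths, weaknesses, and use cases, so knowing the characteristics of these algorithms and the conditions they suit is key to building effective classification models. 1. Logistic Regression. Overview: logistic regression is a linear model widely used for binary classification; its goal is to predict from the input features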

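- Linux Shell: A Detailed Guide to the Shell and Shell Scripts (sh): Introduction, Usage, and Worked Examples

一个处女座的程序猿

Tool/IDE etc, Growth Library, linux, shell, bash

Contents. Related articles: Windows Batch: a detailed guide to Batch scripts (bat/cmd): introduction, usage, and worked examples. Linux: introduction to the Linux system, fundamentals, the ultimate learning path (using Ubuntu as the example: installation / command-line skills / file system / shell scripting / permissions, networking, and system administration / high-level language programming), common examples (illustrated tutorial)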

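- Handwritten Digit Recognition: A Walkthrough of neuralnet_mnist.py, from GPT

阿崽meitoufa

python, programming languages, neural networks, deep learning, gpt

This code is a handwritten digit recognition program built on a simple neural network model. It loads a pre-trained model (sample_weight.pkl), runs predictions on the MNIST test set, and computes the model's accuracy. Below I go through the main parts of the code step by step. 1. Importing the required libraries: `import sys, os` `sys.path.append(os.pardir)  # setting that allows importing files from the parent directory` `import numpy as np` `import pickle` `from`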

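- Learning Large Models with deepseek 05: Logistic Regression

wyg_031113

Logistic regression, machine learning, artificial intelligence

deepseek.com: the objective function and loss function of logistic regression, the mathematical derivation of gradient descent in scalar and matrix form, a PyTorch code example that actually runs, visualizations of the model, data, and predictions, use cases, strengths and weaknesses, and how to improve it, with the derivations behind the improvements. A complete guide to logistic regression. 1. Mathematical derivation. Model definition: logistic regression is a probabilistic prediction model whose output is $P(y=1\mid\mathbf{x})=\sigma(\mathbf{w}^\top\mathbf{x}+b)$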
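The model definition P(y=1 | x) = σ(wᵀx + b) can be evaluated directly. The sketch below is a minimal plain-Python illustration rather than the post's PyTorch code; `sigmoid` and `predict_proba` are hypothetical helper names, and the weights, inputs, and bias are made-up values chosen so the linear score is easy to check by hand.

```python
import math


def sigmoid(z):
    """Logistic function: maps any real score to a value in (0, 1)."""
    return 1.0 / (1.0 + math.exp(-z))


def predict_proba(w, x, b):
    """P(y = 1 | x) = sigmoid(w . x + b) for weight vector w, input x, bias b."""
    score = sum(wi * xi for wi, xi in zip(w, x)) + b
    return sigmoid(score)


# Score 2*1 + (-1)*2 + 0 = 0: the point lies on the decision boundary.
p_boundary = predict_proba([2.0, -1.0], [1.0, 2.0], 0.0)

# Score 2*3 + (-1)*1 - 1 = 4: the point is well inside the positive class.
p_positive = predict_proba([2.0, -1.0], [3.0, 1.0], -1.0)
```

A score of zero gives a probability of exactly 0.5, so the decision boundary of the model is the hyperplane where wᵀx + b = 0.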

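- Machine Learning Algorithms in Spark MLlib and Their Use Cases

Java资深爱好者

Deep learning, recommendation algorithms

Spark MLlib is the machine learning library of the Apache Spark framework. It provides a rich set of machine learning algorithms and tools for processing and analyzing large-scale data. The following describes the algorithms in Spark MLlib and their use cases in detail. 1. Machine learning algorithms in Spark MLlib. Classification algorithms: Logistic regression: used for binary classification; estimates model parameters by maximizing the log-likelihood. Support vector machine (SVM): used for classification and regression; finds a hyperplane that maximizes the margin between classes. Decision tree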

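- Common CRUD Methods in MyBatis-Plus

Warren98

Java, mybatis, tomcat, spring boot, notes, mysql

Common MP methods

| Method | Purpose | Corresponding SQL |
| --- | --- | --- |
| `insert(user)` | Insert a row | `INSERT INTO ...` |
| `selectById(id)` | Query by ID | `SELECT * FROM ... WHERE id = ?` |
| `selectBatchIds(ids)` | Batch query | `SELECT * FROM ... WHERE id IN (?, ?, ?)` |
| `selectList(wrapper)` | Conditional query | `SELECT * FROM ... WHERE ...` |
| `updateById(user` | | |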

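- Embedded AI Applications, Chapter 4: Logistic Regression 8

数贾电子科技

Embedded AI applications, artificial intelligence, logistic regression, algorithms

Logistic regression. Contents: 1 Introduction to logistic regression; 1.1 Background; 1.2 Principles; 1.2.1 Prediction function; 1.2.2 Decision boundary; 1.2.3 Loss function; 1.2.4 Gradient descent; 1.2.5 Extending to multiple classes; 1.2.6 Regularization; 2 Experiment code; 3 Experiment results. 1 Introduction to logistic regression. 1.1 Background. The logistic regression workflow can be summarized as follows: given a regression or classification problem, define a cost function, solve iteratively for the optimal model parameters with an optimization method, and then test and validate how good the resulting model is. Although Logistic regression has "regression" in its name, it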

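- Key Concepts in Neural Network Training: Epoch, Batch, Batch size, Iteration

一杯水果茶!

Vision and networks, neural networks, batch, epoch, Iteration

The key concepts in neural network training are as follows. Epoch: one epoch is one complete pass of the entire dataset forward through the network and back again. In other words, every training sample goes through one forward pass and one backward pass; an epoch is the process of training on all training samples once. Batch: the full training set is split into a number of batches. Each batch contains a portion of the training samples, and one batch is fed into the network at a time for training. B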
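The relationship between these quantities is simple arithmetic. The excerpt is cut off before it defines an iteration, so the sketch below assumes the usual meaning: one iteration is one parameter update on one batch. The function names and the sample figures (60,000 samples, batch size 128, 5 epochs) are illustrative assumptions, not values from the post.

```python
def iterations_per_epoch(num_samples, batch_size):
    """Number of batches needed to cover every sample once (ceiling division)."""
    return (num_samples + batch_size - 1) // batch_size


def total_iterations(num_samples, batch_size, epochs):
    """Total number of parameter updates over the whole training run."""
    return epochs * iterations_per_epoch(num_samples, batch_size)


per_epoch = iterations_per_epoch(60000, 128)
total = total_iterations(60000, 128, epochs=5)
```

Ceiling division is used because the final batch of an epoch is allowed to be smaller than `batch_size` when the dataset size is not an exact multiple of it.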

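- Machine Learning Model Types

路野yue

Artificial intelligence, machine learning

1. Traditional machine learning models. Linear models: Linear regression: for regression tasks, fits a linear relationship. Logistic regression: for classification tasks, outputs probabilities. Ridge regression and Lasso regression: linear regression with regularization. Tree-based models: Decision tree: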

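- The Factory Pattern in Java

3213213333332132

java, abstract factory

The factory pattern comes in two forms:

1. Factory method

2. Abstract factory.

My implementation below is the abstract factory;

it defines one common interface for all concrete product classes.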

package 工厂模式;

/**

* Space flight interface

*

* @Description

* @author FuJianyong

* 2015-7-14 02:42:05 PM

*/

public interface SpaceF

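- nginx Rate Limiting + Testing with Python

ronin47

nginx, rate, python

Partly based on: http://www.abc3210.com/2013/web_04/82.shtml

First, some background: I ran into this problem because the site was under attack and Alibaba Cloud raised an alert, so I decided to limit the request rate rather than block IPs (an IP-blocking approach is given later). When nginx's connection resources are exhausted it returns status code 502; with the limit in this post in place it returns 599, which sets it apart from the normal status codes. The steps are as follows: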

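- Using Threads and Thread Pools in Java

dyy_gusi

ThreadPool, thread, Runnable, timer

Java threads and thread pools

1. Ways to create threads

Multithreading is common in Java. There are two ways to create a thread: the first is to have your class implement the Runnable interface, and the second is to have your class extend the Thread class. In fact, Thread itself also implements Runnable. Examples follow:

1. By implementing the Runnable interface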

- Linux

171815164

linux

ubuntu kernel

http://kernel.ubuntu.com/~kernel-ppa/mainline/v4.1.2-unstable/

Android SDK proxy

mirrors.neusoft.edu.cn 80

Input method and JDK

sudo apt-get install fcitx

su

- Tomcat JDBC Connection Pool

g21121

Connection

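Tomcat 7 dropped the old DBCP and adopted the new Tomcat JDBC Pool as its database connection component. In fact, Hibernate has also abandoned DBCP because of its many problems, such as slow updates, numerous bugs, build problems, and complex code.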

Tomcat Jdbc P

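- Some Thoughts on Writing Code

永夜-极光

java, essays, reflections

I have been learning Java programming for half a year now. After all that typing I have only a little over 10,000 lines of code to my name, which probably puts me at beginner level. Throughout, though, I have kept wondering why so many training instructors and online articles want us to memorize code. For example, when we studied ArrayList, the teacher had us first write it out once by following the source code, and then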

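- The JVM Instruction Set

程序员是怎么炼成的

jvm, instruction set

Reposted from: http://blog.csdn.net/hudashi/article/details/7062675#comments

Pushing values onto the top of the stack: the const, ldc, push, and load instructions

The const family

These instructions push simple numeric values onto the top of the stack. (They are used whenever a value is pushed onto the top of the stack from the constant pool or a local variable.)

0x02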

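- Viewing and Querying Oracle Character Sets, and Setting and Changing Them

aijuans

oracle

This article covers the following: how to view and query the Oracle character set, how to change it, and common problems with the Oracle UTF8 character set and the Oracle exp character set.

1. What is an Oracle character set?

An Oracle character set is a collection of symbols used to interpret byte data. Character sets differ in size and can contain one another. ORACLE's national language support architecture lets you store, process, and retrieve data in your local language. It makes database tools, error messages, sort order, dates, times,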

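- Handling PNG Transparency in IE6

antonyup_2006

css, browsers, Firebug, IE

A while ago I was on site in Shenzhen supporting a release. To get a control download working, and because IE8 on my machine kept throwing an error, I had no choice but to uninstall IE8 and switch to IE6, which solved the problem. Back from the trip today, I logged in with IE6 to another system under development and ran into a problem with PNG images. Upgrading to IE8 would of course fix it (IE8's built-in developer tools are quite handy for debugging front-end JavaScript and the like, much like Firebug), but I did a little work on the problem anyway.

As we know, PNG is an image file storage format. Looking up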

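- Common Table Query Commands: Advanced Query Methods (2)

百合不是茶

oracle, paginated queries, grouped queries, union queries

---------------------------------------------------- Grouped queries: group by, having -- average and highest salary: select avg(sal) 平均工资, max(sal) from emp; -- average and highest salary per department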

- uploadify 3.1 Parameters Explained

bijian1013

JavaScript, uploadify 3.1

Usage:

Bound page element: `<input id='gallery' type='file'/>` `$("#gallery").uploadify({ /* options, listed below */ });`

Options:

id: jQuery(this).attr('id'), // ID of the bound input

langFile: 'http://ww

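- Mastering Oracle 10 SQL Programming (17): Using ORACLE System Packages

bijian1013

oracle, database, plsql

/*

* Using ORACLE system packages

*/

--1.DBMS_OUTPUT

--ENABLE: enables calls to the procedures PUT, PUT_LINE, NEW_LINE, GET_LINE, and GET_LINES

--Syntax: DBMS_OUTPUT.enable(buffer_size in integer default 20000);

--DISABLE: disables calls to the procedures PUT, PUT_LINE, NEW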

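- [JVM 1] JVM Garbage Collection Logs

bit1129

Garbage collection

Recording the JVM's garbage collection logs is very useful for analyzing how garbage collection behaves and then tuning memory allocation (for the young generation, old generation, and permanent generation). The JVM parameters related to garbage collection logging include: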

-XX:+PrintGC

-XX:+PrintGCDetails

-XX:+PrintGCTimeStamps

-XX:+PrintGCDateStamps

-Xloggc

-XX:+PrintGC

通

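- Using Toast

白糖_

toast

A Toast in Android is a simple message pop-up. It cannot be clicked by the user and disappears automatically after the display duration that was set.

Creating a Toast

There are two methods for creating a Toast

makeText(Context context, int resId, int duration)

Parameters: context is where the toast is shown in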

- angular.identity

boyitech

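AngularJS, AngularJS API

angular.identity. Description: a function that returns its first argument. It is mostly used in functional-style programming. Usage: angular.identity(value); Parameters: value (type *): the value to be returned. Returns: the value that was passed in. Example code: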

<!DOCTYPE HTML>

- java: Finding the Repeating Cycle When Dividing Two Integers

bylijinnan

java

import java.util.ArrayList;

import java.util.List;

public class CircleDigitsInDivision {

/**

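* Problem: find the repeating cycle (repetend); return NULL if the division is exact, otherwise return a char* pointing to the repeating cycle. Write out the approach first. Function prototype: char*get_circle_digits(unsigned k,unsigned j)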

- Java Dates, Weeks, and Years

Chen.H

java, C++, c, C#

/**

* Java date operations (end of month, end of week, and similar)

*

* @author

*

*/

public class DateUtil {

/** */

/**

* Get the date a given number of days after (or before) a given day

*

* @param date

* @param num

*

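- [College Entrance Exam and Majors] High School Graduates Are Welcome to Join the Automatic Control and Computer Applications Major

comsci

Computers

I don't know whether universities still offer this broad major, Automatic Control and Computer Applications. It is the major I graduated from. It has a very heavy course load: you study automatic control as well as computer science, and the demands on mathematics are fairly high. If this major still exists, you are welcome to apply. After graduation the range of career paths is very wide.

Later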

- Hierarchical Queries

daizj

oracle, recursive queries, hierarchical queries

Hierarchical Queries

If a table contains hierarchical data, then you can select rows in a hierarchical order using the hierarchical query clause:

hierarchical_query_clause::=

start with condi

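- Data Migration

daysinsun

Data migration

Our company has recently been rebuilding a medical system. The original consisted of two .NET systems, which now need to be rebuilt in Java. Their databases were SQL Server and MySQL, and they now need to be unified on a HANA database. We ran into several problems along the way, but with some effort all of them were solved in the end.

1. Most data could be migrated with HANA's data migration tool, but columns of type TEXT in MySQL could not be stored in HANA, so in the end we had to change them to CLOB.

2. When inserting data, some fields were especially long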

- Learning C: Examples of Representing Number Bases

dcj3sjt126com

c, basic

Examples of representing number bases

#include <stdio.h>

int main(void)

{

int i = 0x32C;

printf("i = %d\n", i);

/*

How to use printf

%d prints in decimal

%x or %X prints in hexadecimal

%o prints in octal

*/

return 0;

}

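- Coordinating NSTimer and UITableViewCell

dcj3sjt126com

ios

Here is the situation:

I have a UITableView in which each cell's content is my custom viewA. viewA has many animations, which I drive with an NSTimer. Because of the table view's cell reuse mechanism, the animations I add keep getting started and never stop, so more and more of them run.

Solution:

Start the animation when configuring the cell, and stop it when the cell stops being displayed.

Find the delegate method for when a cell stops being displayed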

- Using case when then in MySQL

fanxiaolong

case, when, then, end

select "主键", "项目编号", "项目名称","项目创建时间", "项目状态","部门名称","创建人"

union

(select

pp.id as "主键",

pp.project_number as

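- Ehcache (01): Introduction and Basic Operations

234390216

cache, ehcache, introduction, CacheManager, crud

Introduction to Ehcache

Contents

1 CacheManager

1.1 Building with a constructor

1.2 Building with a static method

2 Cache

2.1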

- The Easiest Introduction to JavaScript Closures

jackyrong

JavaScript

http://www.ruanyifeng.com/blog/2009/08/learning_javascript_closures.html

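Closures are one of the hard parts of the JavaScript language and also one of its distinctive features; many advanced applications rely on them.

Below are my study notes, which should be quite useful for JavaScript beginners.

1. Variable scope

To understand closures, you first have to understand JavaScript's special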

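- A Four-Step Optimization Plan for Raising Website Conversion Rates

php教程分享

Data structures, PHP, data mining, Google, events

Once a website has been built, the key question in optimizing it is how to raise the overall conversion rate. This is one of the most important aspects of any marketing strategy, and it is also the product of the site's overall operational strength. This article shares four optimization strategies (survey, research, optimize, evaluate) that can help users design an effective optimization plan.

For a website built with PHP, the most critical and difficult part of optimization is how to raise the overall conversion rate. It is one of the most important aspects of any marketing strategy, and a higher conversion rate is the product of the site's overall operational strength. Today I will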

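- What Is HTML5 WebSocket in Web Development?

naruto1990

Web, html5, browsers, socket

HTML5 is all the rage right now, yet many learners still do not fully understand what it can do. My favorite web development technology is the WebSocket API, which is quickly becoming popular. WebSocket offers a welcome alternative to the Ajax techniques we have been using for the past several years. The new API provides a way to push messages efficiently from the client to the server with a simple syntax. Let's take a look at the WebSocket API as covered in six HTML5 tutorials: it can be used on the client,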

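- Getting Started with Socket Programming: A Simple Group Chat

Everyday都不同

socket, network programming, first look

This was my first encounter with socket network programming, and I drew on articles by many who came before me online. I tried writing something myself and am recording the process here:

Server (receives messages from clients and prints them):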

public class SocketServer {

private List<Socket> socketList = new ArrayList<Socket>();

public s

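- Interview: The Difference Between Hashtable and HashMap (with Threads)

toknowme

Yesterday I interviewed at a certain company and was asked about

the difference between Hashtable and HashMap. At the time I only gave a partial answer: Hashtable is thread-safe and HashMap is not. Put simply, Hashtable is synchronized and HashMap is not, so HashMap needs extra handling.

Today I wrote an example to try it out. Let's go straight to the code: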

package com.learn.lesson001;

import java

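- A Summary of the MVC Design Pattern

xp9802

Design patterns, mvc, frameworks, IOC

As the business logic of web applications came to include increasingly complex formula analysis, calculation, decision support, and so on, client machines became more and more overloaded. The system's business logic was therefore separated out into a part of its own, and the three-tier architecture was born.

Here a "tier" is a logical division.

The three-tier architecture divides the whole system into the structure shown in Figure 2.1 [3]

(1) Presentation layer: contains presentation code, the user-interaction GUI, and data validation.

This layer provides GUI interaction to client users; it allows the user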