Training a Fashion-MNIST model with Keras

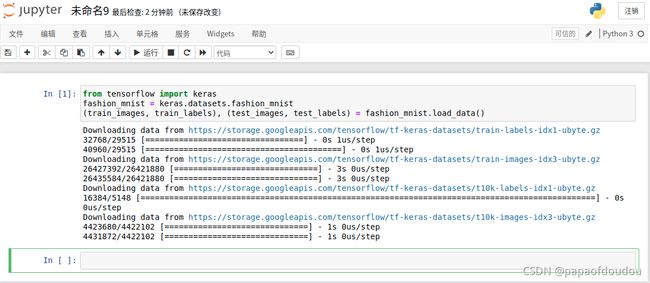

1. Download the Fashion-MNIST data

Use the following three lines of code to download the Fashion-MNIST data:

from tensorflow import keras

fashion_mnist = keras.datasets.fashion_mnist

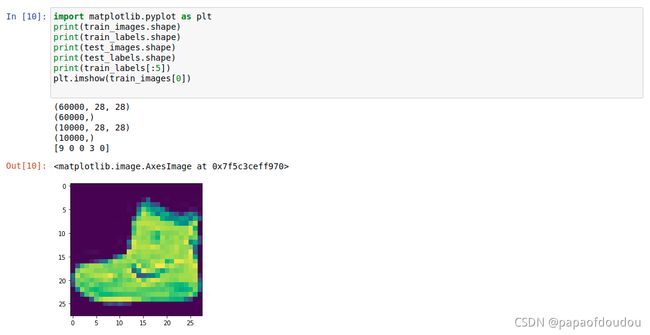

(train_images, train_labels), (test_images, test_labels) = fashion_mnist.load_data()

Inspect the data:

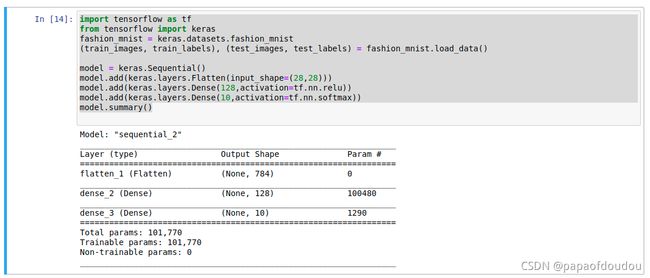

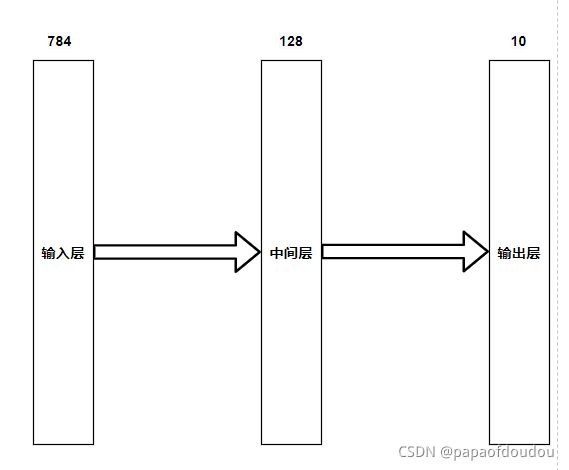

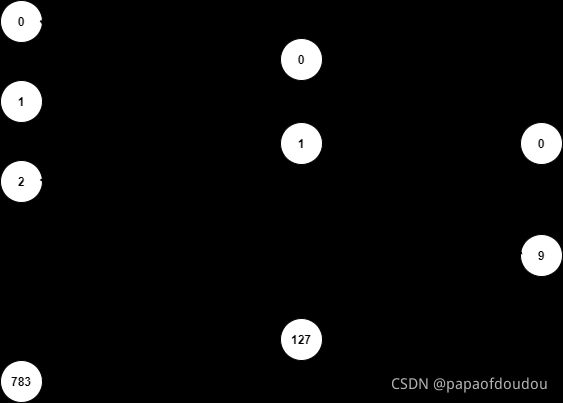

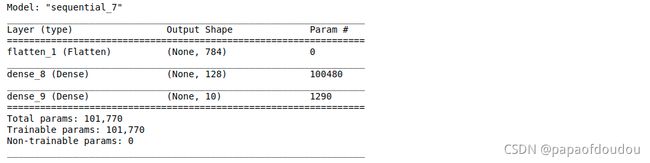

Build the network:

import tensorflow as tf

from tensorflow import keras

fashion_mnist = keras.datasets.fashion_mnist

(train_images, train_labels), (test_images, test_labels) = fashion_mnist.load_data()

model = keras.Sequential()

model.add(keras.layers.Flatten(input_shape=(28,28)))

model.add(keras.layers.Dense(128,activation=tf.nn.relu))

model.add(keras.layers.Dense(10,activation=tf.nn.softmax))

model.summary()

Model parameters:

Model: "sequential_2"

_________________________________________________________________

Layer (type) Output Shape Param #

=================================================================

flatten_1 (Flatten) (None, 784) 0

_________________________________________________________________

dense_2 (Dense) (None, 128) 100480

_________________________________________________________________

dense_3 (Dense) (None, 10) 1290

=================================================================

Total params: 101,770

Trainable params: 101,770

Non-trainable params: 0

Both Dense layers are fully connected; the Flatten layer only reshapes the input and has no parameters.

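The parameter count of the hidden layer is computed as:

784 * 128 (weights) + 128 (biases) = 100480.

The parameter count of the output layer is computed as:

128 * 10 (weights) + 10 (biases) = 1290.

These results match the statistics reported by the summary method.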
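The same arithmetic can be checked in a few lines of plain Python. This is only a sketch: the helper name `dense_param_count` is made up for illustration and is not part of Keras.

```python
def dense_param_count(n_inputs: int, n_units: int) -> int:
    """Parameters of a fully connected layer: one weight per (input, unit) pair plus one bias per unit."""
    return n_inputs * n_units + n_units

# Layer sizes taken from the model above: 28*28 flattened inputs -> 128 -> 10
layer_sizes = [28 * 28, 128, 10]

per_layer = [
    dense_param_count(n_in, n_out)
    for n_in, n_out in zip(layer_sizes, layer_sizes[1:])
]
total = sum(per_layer)

print(per_layer)  # [100480, 1290]
print(total)      # 101770
```

The total agrees with the "Total params: 101,770" line in the summary output.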

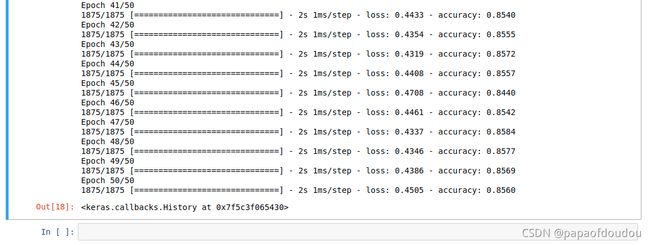

Train the model

model.compile(optimizer=tf.optimizers.Adam(),loss=tf.losses.sparse_categorical_crossentropy,metrics=['accuracy'])

model.fit(train_images,train_labels,epochs=50)

Epoch 1/50

1875/1875 [==============================] - 2s 1ms/step - loss: 0.5283 - accuracy: 0.8235

Epoch 2/50

1875/1875 [==============================] - 2s 1ms/step - loss: 0.5076 - accuracy: 0.8300

Epoch 3/50

1875/1875 [==============================] - 2s 1ms/step - loss: 0.4954 - accuracy: 0.8316

Epoch 4/50

1875/1875 [==============================] - 2s 1ms/step - loss: 0.4892 - accuracy: 0.8350

Epoch 5/50

1875/1875 [==============================] - 2s 1ms/step - loss: 0.4853 - accuracy: 0.8364

Epoch 6/50

1875/1875 [==============================] - 2s 1ms/step - loss: 0.4847 - accuracy: 0.8388

Epoch 7/50

1875/1875 [==============================] - 2s 1ms/step - loss: 0.4769 - accuracy: 0.8414

Epoch 8/50

1875/1875 [==============================] - 2s 1ms/step - loss: 0.4733 - accuracy: 0.8407

Epoch 9/50

1875/1875 [==============================] - 2s 1ms/step - loss: 0.4796 - accuracy: 0.8410

Epoch 10/50

1875/1875 [==============================] - 2s 1ms/step - loss: 0.4935 - accuracy: 0.8381

Epoch 11/50

1875/1875 [==============================] - 2s 1ms/step - loss: 0.4756 - accuracy: 0.8408

Epoch 12/50

1875/1875 [==============================] - 2s 1ms/step - loss: 0.4745 - accuracy: 0.8424

Epoch 13/50

1875/1875 [==============================] - 2s 1ms/step - loss: 0.4707 - accuracy: 0.8434

Epoch 14/50

1875/1875 [==============================] - 2s 1ms/step - loss: 0.4693 - accuracy: 0.8434

Epoch 15/50

1875/1875 [==============================] - 2s 1ms/step - loss: 0.4614 - accuracy: 0.8463

Epoch 16/50

1875/1875 [==============================] - 2s 1ms/step - loss: 0.4617 - accuracy: 0.8468

Epoch 17/50

1875/1875 [==============================] - 2s 1ms/step - loss: 0.4481 - accuracy: 0.8492

Epoch 18/50

1875/1875 [==============================] - 2s 1ms/step - loss: 0.4638 - accuracy: 0.8464

Epoch 19/50

1875/1875 [==============================] - 2s 1ms/step - loss: 0.4624 - accuracy: 0.8481

Epoch 20/50

1875/1875 [==============================] - 2s 1ms/step - loss: 0.4500 - accuracy: 0.8501

Epoch 21/50

1875/1875 [==============================] - 2s 1ms/step - loss: 0.4546 - accuracy: 0.8479

Epoch 22/50

1875/1875 [==============================] - 2s 1ms/step - loss: 0.4560 - accuracy: 0.8495

Epoch 23/50

1875/1875 [==============================] - 2s 1ms/step - loss: 0.4552 - accuracy: 0.8490

Epoch 24/50

1875/1875 [==============================] - 2s 1ms/step - loss: 0.4492 - accuracy: 0.8501

Epoch 25/50

1875/1875 [==============================] - 2s 1ms/step - loss: 0.4497 - accuracy: 0.8525

Epoch 26/50

1875/1875 [==============================] - 2s 1ms/step - loss: 0.4489 - accuracy: 0.8495

Epoch 27/50

1875/1875 [==============================] - 2s 1ms/step - loss: 0.4464 - accuracy: 0.8515

Epoch 28/50

1875/1875 [==============================] - 2s 1ms/step - loss: 0.4529 - accuracy: 0.8507

Epoch 29/50

1875/1875 [==============================] - 2s 1ms/step - loss: 0.4495 - accuracy: 0.8526

Epoch 30/50

1875/1875 [==============================] - 2s 1ms/step - loss: 0.4454 - accuracy: 0.8508

Epoch 31/50

1875/1875 [==============================] - 2s 1ms/step - loss: 0.4578 - accuracy: 0.8513

Epoch 32/50

1875/1875 [==============================] - 2s 1ms/step - loss: 0.4434 - accuracy: 0.8534

Epoch 33/50

1875/1875 [==============================] - 2s 1ms/step - loss: 0.4387 - accuracy: 0.8541

Epoch 34/50

1875/1875 [==============================] - 2s 1ms/step - loss: 0.4481 - accuracy: 0.8536

Epoch 35/50

1875/1875 [==============================] - 2s 1ms/step - loss: 0.4448 - accuracy: 0.8536

Epoch 36/50

1875/1875 [==============================] - 2s 1ms/step - loss: 0.4459 - accuracy: 0.8540

Epoch 37/50

1875/1875 [==============================] - 2s 1ms/step - loss: 0.4397 - accuracy: 0.8537

Epoch 38/50

1875/1875 [==============================] - 2s 1ms/step - loss: 0.4399 - accuracy: 0.8559

Epoch 39/50

1875/1875 [==============================] - 2s 1ms/step - loss: 0.4528 - accuracy: 0.8530

Epoch 40/50

1875/1875 [==============================] - 2s 1ms/step - loss: 0.4404 - accuracy: 0.8561

Epoch 41/50

1875/1875 [==============================] - 2s 1ms/step - loss: 0.4433 - accuracy: 0.8540

Epoch 42/50

1875/1875 [==============================] - 2s 1ms/step - loss: 0.4354 - accuracy: 0.8555

Epoch 43/50

1875/1875 [==============================] - 2s 1ms/step - loss: 0.4319 - accuracy: 0.8572

Epoch 44/50

1875/1875 [==============================] - 2s 1ms/step - loss: 0.4408 - accuracy: 0.8557

Epoch 45/50

1875/1875 [==============================] - 2s 1ms/step - loss: 0.4708 - accuracy: 0.8440

Epoch 46/50

1875/1875 [==============================] - 2s 1ms/step - loss: 0.4461 - accuracy: 0.8542

Epoch 47/50

1875/1875 [==============================] - 2s 1ms/step - loss: 0.4337 - accuracy: 0.8584

Epoch 48/50

1875/1875 [==============================] - 2s 1ms/step - loss: 0.4346 - accuracy: 0.8577

Epoch 49/50

1875/1875 [==============================] - 2s 1ms/step - loss: 0.4386 - accuracy: 0.8569

Epoch 50/50

1875/1875 [==============================] - 2s 1ms/step - loss: 0.4505 - accuracy: 0.8560

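As the log shows, after 50 epochs the training accuracy hovers around 85%. It slips back a few times along the way (for example at epoch 45), but the overall trend is upward, and it ends at about 85.6%.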

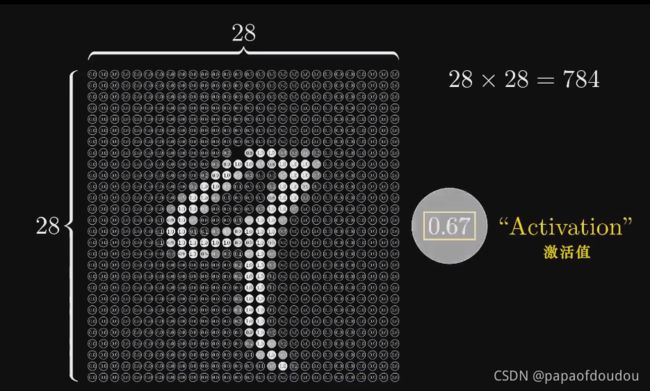

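Add normalization to improve accuracy

Put simply, normalization is the following process:

Scale the data into the [0, 1] range. Pixel values run from 0 to 255, so dividing by 255 is enough.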

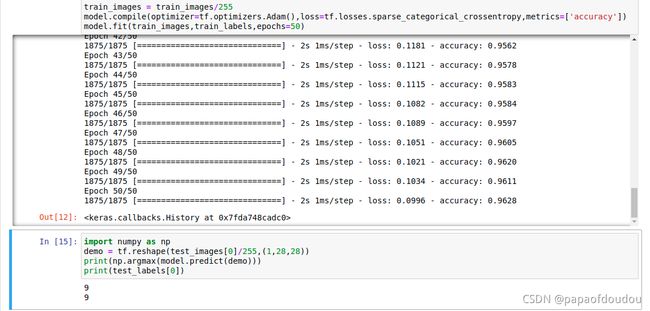
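To see what the scaling does, here is a minimal NumPy sketch. The array `fake_images` is a hypothetical stand-in for `train_images` (a few random 28x28 uint8 images) so that the snippet runs without downloading anything. Casting to float32 is a choice made here; plain `train_images/255` yields float64.

```python
import numpy as np

# Hypothetical stand-in for train_images: 4 random 28x28 grayscale images (uint8, 0..255).
rng = np.random.default_rng(0)
fake_images = rng.integers(0, 256, size=(4, 28, 28), dtype=np.uint8)
fake_images[0, 0, 0] = 0      # make sure both extremes are present
fake_images[0, 0, 1] = 255

# Convert to float first, then divide by the maximum pixel value.
scaled = fake_images.astype("float32") / 255.0

print(fake_images.dtype, fake_images.min(), fake_images.max())
print(scaled.dtype, scaled.min(), scaled.max())
```

The shape is unchanged; only the value range and the dtype differ.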

train_images = train_images/255

model.compile(optimizer=tf.optimizers.Adam(),loss=tf.losses.sparse_categorical_crossentropy,metrics=['accuracy'])

model.fit(train_images,train_labels,epochs=50)

Epoch 1/50

1875/1875 [==============================] - 2s 1ms/step - loss: 1.0806 - accuracy: 0.6693

Epoch 2/50

1875/1875 [==============================] - 2s 1ms/step - loss: 0.6354 - accuracy: 0.7707

Epoch 3/50

1875/1875 [==============================] - 2s 1ms/step - loss: 0.5593 - accuracy: 0.8002

Epoch 4/50

1875/1875 [==============================] - 3s 1ms/step - loss: 0.5169 - accuracy: 0.8167

Epoch 5/50

1875/1875 [==============================] - 3s 1ms/step - loss: 0.4904 - accuracy: 0.8270

Epoch 6/50

1875/1875 [==============================] - 2s 1ms/step - loss: 0.4709 - accuracy: 0.8342

Epoch 7/50

1875/1875 [==============================] - 2s 1ms/step - loss: 0.4553 - accuracy: 0.8400

Epoch 8/50

1875/1875 [==============================] - 2s 1ms/step - loss: 0.4430 - accuracy: 0.8442

Epoch 9/50

1875/1875 [==============================] - 2s 1ms/step - loss: 0.4333 - accuracy: 0.8471

Epoch 10/50

1875/1875 [==============================] - 2s 1ms/step - loss: 0.4246 - accuracy: 0.8502

Epoch 11/50

1875/1875 [==============================] - 2s 1ms/step - loss: 0.4172 - accuracy: 0.8526

Epoch 12/50

1875/1875 [==============================] - 2s 1ms/step - loss: 0.4102 - accuracy: 0.8553

Epoch 13/50

1875/1875 [==============================] - 2s 1ms/step - loss: 0.4045 - accuracy: 0.8586

Epoch 14/50

1875/1875 [==============================] - 2s 1ms/step - loss: 0.3987 - accuracy: 0.8605

Epoch 15/50

1875/1875 [==============================] - 3s 1ms/step - loss: 0.3939 - accuracy: 0.8608

Epoch 16/50

1875/1875 [==============================] - 2s 1ms/step - loss: 0.3904 - accuracy: 0.8626

Epoch 17/50

1875/1875 [==============================] - 2s 1ms/step - loss: 0.3858 - accuracy: 0.8647

Epoch 18/50

1875/1875 [==============================] - 2s 1ms/step - loss: 0.3818 - accuracy: 0.8654

Epoch 19/50

1875/1875 [==============================] - 2s 1ms/step - loss: 0.3778 - accuracy: 0.8677

Epoch 20/50

1875/1875 [==============================] - 2s 1ms/step - loss: 0.3745 - accuracy: 0.8683

Epoch 21/50

1875/1875 [==============================] - 2s 1ms/step - loss: 0.3718 - accuracy: 0.8690

Epoch 22/50

1875/1875 [==============================] - 3s 1ms/step - loss: 0.3680 - accuracy: 0.8701

Epoch 23/50

1875/1875 [==============================] - 2s 1ms/step - loss: 0.3653 - accuracy: 0.8717

Epoch 24/50

1875/1875 [==============================] - 2s 1ms/step - loss: 0.3620 - accuracy: 0.8724

Epoch 25/50

1875/1875 [==============================] - 2s 1ms/step - loss: 0.3594 - accuracy: 0.8736

Epoch 26/50

1875/1875 [==============================] - 2s 1ms/step - loss: 0.3569 - accuracy: 0.8743

Epoch 27/50

1875/1875 [==============================] - 2s 1ms/step - loss: 0.3543 - accuracy: 0.8750

Epoch 28/50

1875/1875 [==============================] - 2s 1ms/step - loss: 0.3521 - accuracy: 0.8763

Epoch 29/50

1875/1875 [==============================] - 2s 1ms/step - loss: 0.3492 - accuracy: 0.8766

Epoch 30/50

1875/1875 [==============================] - 2s 1ms/step - loss: 0.3467 - accuracy: 0.8773

Epoch 31/50

1875/1875 [==============================] - 2s 1ms/step - loss: 0.3445 - accuracy: 0.8788

Epoch 32/50

1875/1875 [==============================] - 2s 1ms/step - loss: 0.3424 - accuracy: 0.8783

Epoch 33/50

1875/1875 [==============================] - 2s 1ms/step - loss: 0.3403 - accuracy: 0.8801

Epoch 34/50

1875/1875 [==============================] - 2s 1ms/step - loss: 0.3379 - accuracy: 0.8795

Epoch 35/50

1875/1875 [==============================] - 2s 1ms/step - loss: 0.3360 - accuracy: 0.8816

Epoch 36/50

1875/1875 [==============================] - 2s 1ms/step - loss: 0.3338 - accuracy: 0.8817

Epoch 37/50

1875/1875 [==============================] - 2s 1ms/step - loss: 0.3317 - accuracy: 0.8824

Epoch 38/50

1875/1875 [==============================] - 2s 1ms/step - loss: 0.3296 - accuracy: 0.8832

Epoch 39/50

1875/1875 [==============================] - 2s 1ms/step - loss: 0.3271 - accuracy: 0.8837

Epoch 40/50

1875/1875 [==============================] - 2s 1ms/step - loss: 0.3257 - accuracy: 0.8842

Epoch 41/50

1875/1875 [==============================] - 2s 1ms/step - loss: 0.3236 - accuracy: 0.8846

Epoch 42/50

1875/1875 [==============================] - 2s 1ms/step - loss: 0.3211 - accuracy: 0.8864

Epoch 43/50

1875/1875 [==============================] - 2s 1ms/step - loss: 0.3196 - accuracy: 0.8859

Epoch 44/50

1875/1875 [==============================] - 2s 1ms/step - loss: 0.3174 - accuracy: 0.8868

Epoch 45/50

1875/1875 [==============================] - 2s 1ms/step - loss: 0.3162 - accuracy: 0.8874

Epoch 46/50

1875/1875 [==============================] - 2s 1ms/step - loss: 0.3144 - accuracy: 0.8877

Epoch 47/50

1875/1875 [==============================] - 2s 1ms/step - loss: 0.3124 - accuracy: 0.8884

Epoch 48/50

1875/1875 [==============================] - 2s 1ms/step - loss: 0.3108 - accuracy: 0.8894

Epoch 49/50

1875/1875 [==============================] - 2s 1ms/step - loss: 0.3086 - accuracy: 0.8895

Epoch 50/50

1875/1875 [==============================] - 2s 1ms/step - loss: 0.3074 - accuracy: 0.8897

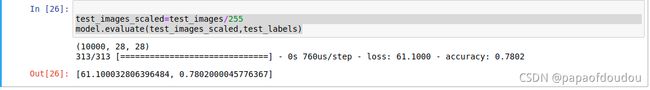

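As the log shows, the final training accuracy is higher than before normalization: about 89.0% versus 85.6%.

Use the model for inference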

test_images_scaled=test_images/255

model.evaluate(test_images_scaled,test_labels)

Check inference with un-normalized input:

import numpy as np

demo = tf.reshape(test_images[0],(1,28,28))

print(np.argmax(model.predict(demo)))

print(test_labels[0])

If the input was normalized during training, it must be normalized for inference as well.
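One way to see why the preprocessing has to match is the NumPy sketch below. It does not use the trained model: the random `image` and the bias-free single-layer classifier `weights` are hypothetical stand-ins, chosen only to show how the same weights react to inputs in the 0..255 range versus the 0..1 range.

```python
import numpy as np

def softmax(logits):
    """Numerically stable softmax over the last axis."""
    shifted = logits - logits.max(axis=-1, keepdims=True)
    exp = np.exp(shifted)
    return exp / exp.sum(axis=-1, keepdims=True)

# Hypothetical stand-ins: a random "image" and a random single-layer classifier.
rng = np.random.default_rng(0)
image = rng.integers(0, 256, size=(28, 28), dtype=np.uint8)
weights = rng.normal(0.0, 0.05, size=(28 * 28, 10))

def predict(batch):
    """Flatten each image and apply one linear layer followed by softmax."""
    flat = batch.reshape(len(batch), -1)
    return softmax(flat @ weights)

raw_batch = image.reshape(1, 28, 28).astype("float64")  # pixel values 0..255
scaled_batch = raw_batch / 255.0                         # pixel values 0..1, as in training

p_raw = predict(raw_batch)
p_scaled = predict(scaled_batch)

print(p_scaled.round(3))
print(p_raw.round(3))
```

In this bias-free sketch the raw logits are 255 times the scaled ones, so the softmax output for the raw input is at least as peaked as for the scaled input. A real network with biases and ReLU layers can also change its predicted class, which is why the inference input should go through the same `/255` step as the training data.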

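From the differences in accuracy across the training runs above, we can conclude that the same network, trained at different times, can reach noticeably different accuracy. This reflects the complexity of the model and its run-to-run variability. As for why it happens: the model's many parameters span a high-dimensional parameter space, and when we search for the optimum with gradient descent, the result depends on where the initial weights and biases sit in that space. Each training run starts from a different initial position, so the optimization follows a different path and ends at a different solution. Model training is therefore an intricate numerical process, and the solution it finds may well be a local optimum rather than the global one.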
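The dependence on the starting point can be reproduced on a toy problem. The sketch below uses a hypothetical one-dimensional "loss" with two minima instead of a real network; the function, learning rate, and step count are illustrative choices, not values from the training runs above.

```python
def loss(x: float) -> float:
    """Toy non-convex loss with two minima (a stand-in for a real loss surface)."""
    return x**4 - 3 * x**2 + x

def grad(x: float) -> float:
    """Derivative of the toy loss."""
    return 4 * x**3 - 6 * x + 1

def gradient_descent(x0: float, lr: float = 0.01, steps: int = 2000) -> float:
    """Plain gradient descent starting from x0."""
    x = x0
    for _ in range(steps):
        x -= lr * grad(x)
    return x

# Two different "initializations" of the single parameter.
x_left = gradient_descent(-2.0)
x_right = gradient_descent(2.0)

print(f"start -2.0 -> x = {x_left:.3f}, loss = {loss(x_left):.3f}")
print(f"start +2.0 -> x = {x_right:.3f}, loss = {loss(x_right):.3f}")
```

Starting from -2.0 the search settles near x ≈ -1.30, the lower of the two minima; starting from 2.0 it settles near x ≈ 1.13, a local minimum with a higher loss. Nothing differs between the two runs except the initial position.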


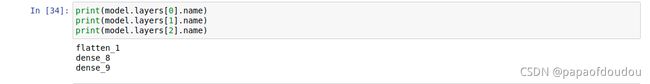

Debugging

Get the name of each layer:

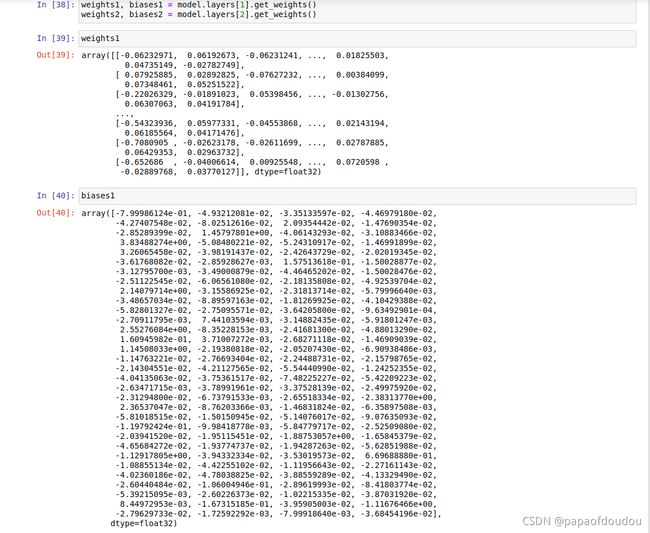

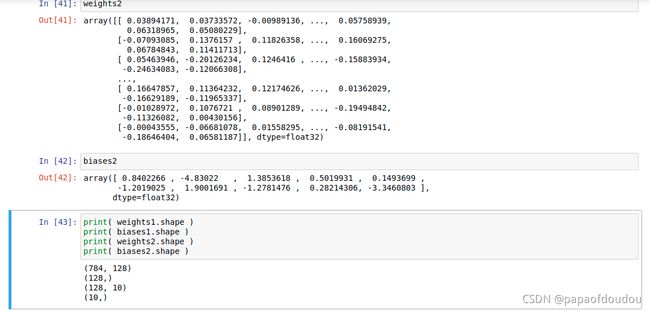

Get the weights and biases

As you can see, the dimensions of the weights agree with the calculation above.
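A possible way to do both steps in code is sketched below. The model is rebuilt with untrained weights so the snippet is self-contained, and `keras.Input` is used here in place of the `input_shape` argument; in practice you would call the same methods on the trained `model` from the earlier sections.

```python
from tensorflow import keras

# Same architecture as above, rebuilt here so the snippet runs on its own (untrained weights).
model = keras.Sequential([
    keras.Input(shape=(28, 28)),
    keras.layers.Flatten(),
    keras.layers.Dense(128, activation="relu"),
    keras.layers.Dense(10, activation="softmax"),
])

# Layer names are auto-generated, so the numeric suffixes vary from session to session.
layer_names = [layer.name for layer in model.layers]
print(layer_names)

# For a Dense layer, get_weights() returns [kernel, bias] as NumPy arrays.
hidden_kernel, hidden_bias = model.layers[1].get_weights()
output_kernel, output_bias = model.layers[2].get_weights()

print(hidden_kernel.shape, hidden_bias.shape)
print(output_kernel.shape, output_bias.shape)
```

The kernel shapes (784, 128) and (128, 10), together with the bias shapes (128,) and (10,), account for the 100480 and 1290 parameters computed earlier.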

Troubleshooting reference:

ValueError: Input 0 of layer dense is incompatible with the layer: expected axis -1 of input shape (善良995's blog, CSDN)