Deploying a ZooKeeper + Kafka cluster on k8s

I. Version information

k8s v1.17.16

kafka registry.hub.docker.com/leey18/k8skafka:v1

zookeeper registry.hub.docker.com/linzhaoming/k8szk:v2

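We deploy a cluster of 3 Kafka brokers and 3 ZooKeeper nodes.

II. Creating the Persistent Volume storage

1. Create the PVs

(1) Log in to each of the 3 worker servers and create the directory paths referenced in the YAML, then create the corresponding PVs. The ZooKeeper and Kafka services need 3 each, so 6 directories are created in total. The directories are numbered in a 1/4, 2/5, 3/6 pattern: the first server holds v1 and v4, the second v2 and v5, and the third v3 and v6.

An example of creating the NFS-based PVs: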

apiVersion: v1
kind: PersistentVolume
metadata:
  name: zookeeper-v1
  annotations:
    volume.beta.kubernetes.io/storage-class: "anything"
spec:
  capacity:
    storage: 5Gi
  accessModes:
    - ReadWriteMany
  persistentVolumeReclaimPolicy: Recycle
  nfs:
    path: /exports/zookeeper-v1
    server: 192.168.0.154
---
apiVersion: v1
kind: PersistentVolume
metadata:
  name: zookeeper-v4
  annotations:
    volume.beta.kubernetes.io/storage-class: "anything"
spec:
  capacity:
    storage: 5Gi
  accessModes:
    - ReadWriteMany
  persistentVolumeReclaimPolicy: Recycle
  nfs:
    path: /exports/zookeeper-v4
    server: 192.168.0.154
---
apiVersion: v1
kind: PersistentVolume
metadata:
  name: zookeeper-v2
  annotations:
    volume.beta.kubernetes.io/storage-class: "anything"
spec:
  capacity:
    storage: 5Gi
  accessModes:
    - ReadWriteMany
  persistentVolumeReclaimPolicy: Recycle
  nfs:
    path: /exports/zookeeper-v2
    server: 192.168.0.155
---
apiVersion: v1
kind: PersistentVolume
metadata:
  name: zookeeper-v5
  annotations:
    volume.beta.kubernetes.io/storage-class: "anything"
spec:
  capacity:
    storage: 5Gi
  accessModes:
    - ReadWriteMany
  persistentVolumeReclaimPolicy: Recycle
  nfs:
    path: /exports/zookeeper-v5
    server: 192.168.0.155
---
apiVersion: v1
kind: PersistentVolume
metadata:
  name: zookeeper-v3
  annotations:
    volume.beta.kubernetes.io/storage-class: "anything"
spec:
  capacity:
    storage: 5Gi
  accessModes:
    - ReadWriteMany
  persistentVolumeReclaimPolicy: Recycle
  nfs:
    path: /exports/zookeeper-v3
    server: 192.168.0.156
---
apiVersion: v1
kind: PersistentVolume
metadata:
  name: zookeeper-v6
  annotations:
    volume.beta.kubernetes.io/storage-class: "anything"
spec:
  capacity:
    storage: 5Gi
  accessModes:
    - ReadWriteMany
  persistentVolumeReclaimPolicy: Recycle
  nfs:
    path: /exports/zookeeper-v6
    server: 192.168.0.156

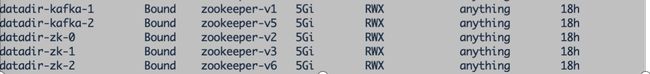
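The PV definitions in this section select their storage class through the `volume.beta.kubernetes.io/storage-class` annotation. Kubernetes also provides the `storageClassName` field in the spec for the same purpose, and the annotation is deprecated in favor of it. A minimal sketch of the first PV written that way, assuming the same class name, path, and server as in the example:

```yaml
apiVersion: v1
kind: PersistentVolume
metadata:
  name: zookeeper-v1
spec:
  storageClassName: anything        # takes the place of the beta annotation
  capacity:
    storage: 5Gi
  accessModes:
    - ReadWriteMany
  persistentVolumeReclaimPolicy: Recycle
  nfs:
    path: /exports/zookeeper-v1
    server: 192.168.0.154
```

If the PVs are switched to the field, it is probably simplest to switch the claims to `storageClassName` as well so that both sides are declared the same way.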

2. Create the PVCs

Log in to the master server and apply the YAML file to create the PVCs.

kubectl create -f pvc.yaml
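The pvc.yaml file itself is not reproduced in this write-up. As a rough sketch only, a claim that could bind to one of the NFS PVs might look like the following. The claim name `datadir-kafka-0` is an assumption, chosen to follow the `<template name>-<pod name>` pattern that a StatefulSet uses for claims created from its volumeClaimTemplates; the class, access mode, and size are copied from the PV definitions.

```yaml
apiVersion: v1
kind: PersistentVolumeClaim
metadata:
  name: datadir-kafka-0              # hypothetical name, see the note above
  annotations:
    # must name the same class as the PV
    volume.beta.kubernetes.io/storage-class: "anything"
spec:
  accessModes:
    - ReadWriteMany                  # must be offered by the PV
  resources:
    requests:
      storage: 5Gi                   # must not exceed the PV's capacity
```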

III. Installing the ZooKeeper cluster

1. Create ZooKeeper

kubectl create -f zookeeper.yaml

Once it has been created, check the ZooKeeper pods. The contents of zookeeper.yaml:

# Headless service that gives each ZooKeeper pod a stable DNS name
apiVersion: v1
kind: Service
metadata:
  name: zk-hs
  labels:
    app: zk
spec:
  selector:
    app: zk
  ports:
    - port: 2888
      name: server
    - port: 3888
      name: leader-election
    - port: 2181
      name: client
  clusterIP: None
---
apiVersion: v1
kind: ConfigMap
metadata:
  name: zk-config
data:
  ensemble: "zk-0;zk-1;zk-2"
  replicas: "3"
  jvm.heap: "512M"
  tick: "2000"
  init: "10"
  sync: "5"
  client.cnxns: "60"
  snap.retain: "3"
  purge.interval: "1"
---
apiVersion: policy/v1beta1
kind: PodDisruptionBudget
metadata:
  name: zk-pdb
spec:
  selector:
    matchLabels:
      app: zk
  minAvailable: 2
---
apiVersion: apps/v1
kind: StatefulSet
metadata:
  name: zk
spec:
  selector:
    matchLabels:
      app: zk
  serviceName: zk-hs
  replicas: 3
  updateStrategy:
    type: RollingUpdate
  podManagementPolicy: OrderedReady
  template:
    metadata:
      labels:
        app: zk
    spec:
      # Schedule at most one ZooKeeper pod per node
      affinity:
        podAntiAffinity:
          requiredDuringSchedulingIgnoredDuringExecution:
            - labelSelector:
                matchExpressions:
                  - key: "app"
                    operator: In
                    values:
                      - zk
              topologyKey: "kubernetes.io/hostname"
      containers:
        - name: zk
          image: registry.hub.docker.com/linzhaoming/k8szk:v2
          imagePullPolicy: IfNotPresent
          ports:
            - containerPort: 2181
              name: client
            - containerPort: 2888
              name: server
            - containerPort: 3888
              name: leader-election
          resources:
            requests:
              cpu: "500m"
              memory: "512Mi"
          env:
            - name: ZK_ENSEMBLE
              valueFrom:
                configMapKeyRef:
                  name: zk-config
                  key: ensemble
            - name: ZK_REPLICAS
              valueFrom:
                configMapKeyRef:
                  name: zk-config
                  key: replicas
            - name: ZK_HEAP_SIZE
              valueFrom:
                configMapKeyRef:
                  name: zk-config
                  key: jvm.heap
            - name: ZK_TICK_TIME
              valueFrom:
                configMapKeyRef:
                  name: zk-config
                  key: tick
            - name: ZK_INIT_LIMIT
              valueFrom:
                configMapKeyRef:
                  name: zk-config
                  key: init
            - name: ZK_SYNC_LIMIT
              valueFrom:
                configMapKeyRef:
                  name: zk-config
                  key: sync
            - name: ZK_MAX_CLIENT_CNXNS
              valueFrom:
                configMapKeyRef:
                  name: zk-config
                  key: client.cnxns
            - name: ZK_SNAP_RETAIN_COUNT
              valueFrom:
                configMapKeyRef:
                  name: zk-config
                  key: snap.retain
            - name: ZK_PURGE_INTERVAL
              valueFrom:
                configMapKeyRef:
                  name: zk-config
                  key: purge.interval
            - name: ZK_CLIENT_PORT
              value: "2181"
            - name: ZK_SERVER_PORT
              value: "2888"
            - name: ZK_ELECTION_PORT
              value: "3888"
          command:
            - sh
            - -c
            - zkGenConfig.sh && zkServer.sh start-foreground
          readinessProbe:
            exec:
              command:
                - "zkOk.sh"
            initialDelaySeconds: 15
            timeoutSeconds: 5
          livenessProbe:
            exec:
              command:
                - "zkOk.sh"
            initialDelaySeconds: 15
            timeoutSeconds: 5
          volumeMounts:
            - name: data
              mountPath: /var/lib/zookeeper
      # The data directory is backed by this emptyDir volume; the datadir
      # claim defined under volumeClaimTemplates is created but not mounted.
      volumes:
        - name: data
          emptyDir: {}
      securityContext:
        runAsUser: 1000
        fsGroup: 1000
  volumeClaimTemplates:
    - metadata:
        name: datadir
        annotations:
          volume.beta.kubernetes.io/storage-class: anything
      spec:
        accessModes: [ "ReadWriteMany" ]
        resources:
          requests:
            storage: 5Gi

四、部署kafka

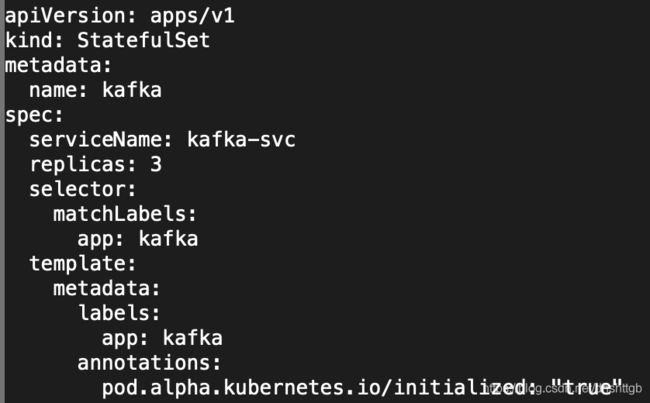

1.安装kafka集群

kubectl create -f kafka-petset.yaml

安装完成后查看kafka

---
apiVersion: v1
kind: Service
metadata:
  name: kafka-svc
  labels:
    app: kafka
spec:
  ports:
    - port: 9093
      name: server
  clusterIP: None
  selector:
    app: kafka
---
apiVersion: apps/v1
kind: StatefulSet
metadata:
  name: kafka
spec:
  serviceName: kafka-svc
  replicas: 3
  selector:
    matchLabels:
      app: kafka
  template:
    metadata:
      labels:
        app: kafka
      annotations:
        pod.alpha.kubernetes.io/initialized: "true"
    spec:
      terminationGracePeriodSeconds: 0
      dnsPolicy: ClusterFirst
      restartPolicy: Always
      serviceAccount: ""
      containers:
        - name: k8skafka
          imagePullPolicy: Always
          image: registry.hub.docker.com/leey18/k8skafka
          resources:
            requests:
              memory: "1Gi"
              cpu: 500m
          ports:
            - containerPort: 9093
              name: server
          command:
            - sh
            - -c
            - "exec kafka-server-start.sh /opt/kafka/config/server.properties --override broker.id=${HOSTNAME##*-} \
              --override listeners=PLAINTEXT://:9093 \
              --override zookeeper.connect=zk-0.zk-hs.default.svc.cluster.local:2181,zk-1.zk-hs.default.svc.cluster.local:2181,zk-2.zk-hs.default.svc.cluster.local:2181 \
              --override log.dir=/var/lib/kafka \
              --override auto.create.topics.enable=true \
              --override auto.leader.rebalance.enable=true \
              --override background.threads=10 \
              --override compression.type=producer \
              --override delete.topic.enable=false \
              --override leader.imbalance.check.interval.seconds=300 \
              --override leader.imbalance.per.broker.percentage=10 \
              --override log.flush.interval.messages=9223372036854775807 \
              --override log.flush.offset.checkpoint.interval.ms=60000 \
              --override log.flush.scheduler.interval.ms=9223372036854775807 \
              --override log.retention.bytes=-1 \
              --override log.retention.hours=168 \
              --override log.roll.hours=168 \
              --override log.roll.jitter.hours=0 \
              --override log.segment.bytes=1073741824 \
              --override log.segment.delete.delay.ms=60000 \
              --override message.max.bytes=1000012 \
              --override min.insync.replicas=1 \
              --override num.io.threads=8 \
              --override num.network.threads=3 \
              --override num.recovery.threads.per.data.dir=1 \
              --override num.replica.fetchers=1 \
              --override offset.metadata.max.bytes=4096 \
              --override offsets.commit.required.acks=-1 \
              --override offsets.commit.timeout.ms=5000 \
              --override offsets.load.buffer.size=5242880 \
              --override offsets.retention.check.interval.ms=600000 \
              --override offsets.retention.minutes=1440 \
              --override offsets.topic.compression.codec=0 \
              --override offsets.topic.num.partitions=50 \
              --override offsets.topic.replication.factor=3 \
              --override offsets.topic.segment.bytes=104857600 \
              --override queued.max.requests=500 \
              --override quota.consumer.default=9223372036854775807 \
              --override quota.producer.default=9223372036854775807 \
              --override replica.fetch.min.bytes=1 \
              --override replica.fetch.wait.max.ms=500 \
              --override replica.high.watermark.checkpoint.interval.ms=5000 \
              --override replica.lag.time.max.ms=10000 \
              --override replica.socket.receive.buffer.bytes=65536 \
              --override replica.socket.timeout.ms=30000 \
              --override request.timeout.ms=30000 \
              --override socket.receive.buffer.bytes=102400 \
              --override socket.request.max.bytes=104857600 \
              --override socket.send.buffer.bytes=102400 \
              --override unclean.leader.election.enable=true \
              --override zookeeper.session.timeout.ms=6000 \
              --override zookeeper.set.acl=false \
              --override broker.id.generation.enable=true \
              --override connections.max.idle.ms=600000 \
              --override controlled.shutdown.enable=true \
              --override controlled.shutdown.max.retries=3 \
              --override controlled.shutdown.retry.backoff.ms=5000 \
              --override controller.socket.timeout.ms=30000 \
              --override default.replication.factor=1 \
              --override fetch.purgatory.purge.interval.requests=1000 \
              --override group.max.session.timeout.ms=300000 \
              --override group.min.session.timeout.ms=6000 \
              --override inter.broker.protocol.version=0.10.2-IV0 \
              --override log.cleaner.backoff.ms=15000 \
              --override log.cleaner.dedupe.buffer.size=134217728 \
              --override log.cleaner.delete.retention.ms=86400000 \
              --override log.cleaner.enable=true \
              --override log.cleaner.io.buffer.load.factor=0.9 \
              --override log.cleaner.io.buffer.size=524288 \
              --override log.cleaner.io.max.bytes.per.second=1.7976931348623157E308 \
              --override log.cleaner.min.cleanable.ratio=0.5 \
              --override log.cleaner.min.compaction.lag.ms=0 \
              --override log.cleaner.threads=1 \
              --override log.cleanup.policy=delete \
              --override log.index.interval.bytes=4096 \
              --override log.index.size.max.bytes=10485760 \
              --override log.message.timestamp.difference.max.ms=9223372036854775807 \
              --override log.message.timestamp.type=CreateTime \
              --override log.preallocate=false \
              --override log.retention.check.interval.ms=300000 \
              --override max.connections.per.ip=2147483647 \
              --override num.partitions=1 \
              --override producer.purgatory.purge.interval.requests=1000 \
              --override replica.fetch.backoff.ms=1000 \
              --override replica.fetch.max.bytes=1048576 \
              --override replica.fetch.response.max.bytes=10485760 \
              --override reserved.broker.max.id=1000 "
          env:
            - name: KAFKA_HEAP_OPTS
              value: "-Xmx512M -Xms512M"
            - name: KAFKA_OPTS
              value: "-Dlogging.level=INFO"
          volumeMounts:
            - name: datadir
              mountPath: /var/lib/kafka
          readinessProbe:
            exec:
              command:
                - sh
                - -c
                - "/opt/kafka/bin/kafka-broker-api-versions.sh --bootstrap-server=localhost:9093"
  volumeClaimTemplates:
    - metadata:
        name: datadir
        annotations:
          volume.beta.kubernetes.io/storage-class: anything
      spec:
        accessModes: [ "ReadWriteMany" ]
        resources:
          requests:
            storage: 5Gi

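V. Problems encountered during deployment

All of the problems listed below have already been fixed in the deployment files.

1. error validating data: ValidationError(Deployment.spec): missing required field selector

Meaning of the error:

Manifest validation failed: the selector field must be specified in Deployment.spec.

Cause: Deployment.spec only specified the number of replicas. It also needs a label selector so that the Deployment controller can match the labels of its replicas.

Solution:

Add three lines (lines 8-10) to the original YAML file. The attached file has already been modified, so no action is needed.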
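The original Deployment file is not shown here, so the following is only a generic sketch of what such a fix looks like in an `apps/v1` Deployment. The names `example` and `example/image:tag` are placeholders, not values from this deployment. The point is that `spec.selector.matchLabels` has to match the labels in `spec.template.metadata.labels`.

```yaml
apiVersion: apps/v1
kind: Deployment
metadata:
  name: example                      # placeholder name
spec:
  replicas: 3
  selector:                          # the required field that was missing
    matchLabels:
      app: example                   # must equal the pod template label below
  template:
    metadata:
      labels:
        app: example
    spec:
      containers:
        - name: example
          image: example/image:tag   # placeholder image
```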

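2. pod has unbound PersistentVolumeClaims

Cause:

The PV's access modes are RWX (ReadWriteMany), but the access modes at the spot marked with a red box in the original YAML file did not match, which caused the error.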
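As an illustration of the matching rule: a claim binds only to a PV that offers the requested access mode, has at least the requested capacity, and belongs to the same storage class. The fragment below puts the relevant fields of one PV and of the Kafka volumeClaimTemplates entry from this document side by side. It is an excerpt for comparison, not a complete manifest.

```yaml
# PV side (excerpt)
kind: PersistentVolume
metadata:
  annotations:
    volume.beta.kubernetes.io/storage-class: "anything"
spec:
  capacity:
    storage: 5Gi
  accessModes:
    - ReadWriteMany
---
# Claim side (excerpt of a volumeClaimTemplates entry)
metadata:
  name: datadir
  annotations:
    volume.beta.kubernetes.io/storage-class: anything   # same class as the PV
spec:
  accessModes: [ "ReadWriteMany" ]   # must be one of the PV's access modes
  resources:
    requests:
      storage: 5Gi                   # must not exceed the PV's capacity
```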