Elasticsearch Backup and Restore 4: Writing Index Data from ES into HDFS with ES-Hadoop

For background, see: Elasticsearch Backup and Restore 3: Writing HDFS Data into Elasticsearch with ES-Hadoop

The project is based on the tweets2HdfsMapper project from the book 《Elasticsearch集成Hadoop最佳实践》 (Elasticsearch-Hadoop Integration Best Practices).

Project source code: https://gitee.com/constfafa/ESToHDFS.git

Development process:

1. First, look at the index data in Kibana:

    "hits": [
      {
        "_index": "xxx-words",
        "_type": "history",
        "_id": "zankHWUBk5wX4tbY-gpZ",
        "_score": 1,
        "_source": {
          "word": "abc",
          "createTime": "2018-08-09 16:56:00",
          "userId": "263",
          "datetime": "2018-08-09T16:56:00Z"
        }
      },
      {
        "_index": "xxx-words",
        "_type": "history",
        "_id": "zqntHWUBk5wX4tbYFAqy",
        "_score": 1,
        "_source": {
          "word": "bcd",
          "createTime": "2018-08-09 16:59:00",
          "userId": "263",
          "datetime": "2018-08-09T16:59:00Z"
        }
      }
    ]
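The hits above are what the job reads; what it writes to HDFS depends on the mapper, whose code is not shown in this post. As a minimal sketch, assuming each document is flattened into one tab-separated line (the column order and the class name HitToLine are hypothetical, not taken from the project), formatting one hit could look like this:

```java
import java.util.Arrays;
import java.util.LinkedHashMap;
import java.util.List;
import java.util.Map;
import java.util.StringJoiner;

// Hypothetical sketch: turns one hit's _source into one TSV line for HDFS.
// The column order is an assumption; the real project's output format may differ.
class HitToLine {
    static final List<String> COLUMNS = Arrays.asList("word", "userId", "createTime", "datetime");

    static String toTsvLine(String id, Map<String, String> source) {
        StringJoiner line = new StringJoiner("\t");
        line.add(id); // document _id first, so each HDFS line can be traced back to ES
        for (String column : COLUMNS) {
            String value = source.get(column);
            if (value == null) {
                value = ""; // missing field -> empty column, keeping the column count fixed
            }
            // Tabs or newlines inside a value would break the TSV layout, so turn them into spaces
            line.add(value.replace('\t', ' ').replace('\n', ' '));
        }
        return line.toString();
    }

    public static void main(String[] args) {
        Map<String, String> source = new LinkedHashMap<>();
        source.put("word", "abc");
        source.put("createTime", "2018-08-09 16:56:00");
        source.put("userId", "263");
        source.put("datetime", "2018-08-09T16:56:00Z");
        System.out.println(toTsvLine("zankHWUBk5wX4tbY-gpZ", source));
    }
}
```

In a real MapReduce job the `source` map would be filled from the document that ES-Hadoop hands to the mapper instead of being built by hand.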

2. Then run the job directly: hadoop jar history2hdfs-job.jar
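The post does not show the driver inside history2hdfs-job.jar. The job log shows it reading through EsInputFormat from hzeg-history-words/history, so its configuration presumably includes ES-Hadoop settings along the lines below. This is a sketch, not the project's code: the host name es-host, the query ?q=*, and the helper EsJobSettings are assumptions, and a plain Map stands in for Hadoop's Configuration so the example compiles on its own.

```java
import java.util.LinkedHashMap;
import java.util.Map;

// Hypothetical sketch of the ES-Hadoop input settings a job like history2hdfs-job.jar would set.
// In a real driver each entry would go into org.apache.hadoop.conf.Configuration via conf.set(k, v).
class EsJobSettings {
    static Map<String, String> esInputSettings(String nodes, String indexAndType, String query) {
        Map<String, String> settings = new LinkedHashMap<>();
        settings.put("es.nodes", nodes);           // Elasticsearch host(s) to connect to
        settings.put("es.port", "9200");           // HTTP port (9200 is the ES-Hadoop default)
        settings.put("es.resource", indexAndType); // "<index>/<type>" to read, as in the job log
        settings.put("es.query", query);           // which documents to export; "?q=*" matches all
        return settings;
    }

    public static void main(String[] args) {
        // "es-host" is a placeholder; the resource matches the job log below
        Map<String, String> settings = esInputSettings("es-host", "hzeg-history-words/history", "?q=*");
        settings.forEach((key, value) -> System.out.println(key + "=" + value));
    }
}
```

In an actual driver these entries would be passed to conf.set(key, value), and the job would register org.elasticsearch.hadoop.mr.EsInputFormat with job.setInputFormatClass(EsInputFormat.class).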

The output is as follows:

    [root@docker02 jar]# hadoop jar history2hdfs-job.jar
    18/06/07 04:04:36 WARN util.NativeCodeLoader: Unable to load native-hadoop library for your platform... using builtin-java classes where applicable
    18/06/07 04:04:42 INFO client.RMProxy: Connecting to ResourceManager at /192.168.211.104:8032
    18/06/07 04:04:48 WARN mapreduce.JobResourceUploader: Hadoop command-line option parsing not performed. Implement the Tool interface and execute your application with ToolRunner to remedy this.
    18/06/07 04:04:55 INFO util.Version: Elasticsearch Hadoop v6.2.3 [039a45c5a1]
    18/06/07 04:04:58 INFO mr.EsInputFormat: Reading from [hzeg-history-words/history]
    18/06/07 04:04:58 INFO mr.EsInputFormat: Created [2] splits
    18/06/07 04:05:00 INFO mapreduce.JobSubmitter: number of splits:2
    18/06/07 04:05:02 INFO mapreduce.JobSubmitter: Submitting tokens for job: job_1528305729734_0007
    18/06/07 04:05:05 INFO impl.YarnClientImpl: Submitted application application_1528305729734_0007
    18/06/07 04:05:06 INFO mapreduce.Job: The url to track the job: http://docker02:8088/proxy/application_1528305729734_0007/
    18/06/07 04:05:06 INFO mapreduce.Job: Running job: job_1528305729734_0007
    18/06/07 04:09:31 INFO mapreduce.Job: Job job_1528305729734_0007 running in uber mode : false
    18/06/07 04:09:42 INFO mapreduce.Job: map 0% reduce 0%
    18/06/07 04:15:36 INFO mapreduce.Job: map 100% reduce 0%
    18/06/07 04:17:26 INFO mapreduce.Job: Job job_1528305729734_0007 completed successfully
    18/06/07 04:17:56 INFO mapreduce.Job: Counters: 47
        File System Counters
            FILE: Number of bytes read=0
            FILE: Number of bytes written=230906
            FILE: Number of read operations=0
            FILE: Number of large read operations=0
            FILE: Number of write operations=0
            HDFS: Number of bytes read=74694
            HDFS: Number of bytes written=222
            HDFS: Number of read operations=8
            HDFS: Number of large read operations=0
            HDFS: Number of write operations=4
        Job Counters
            Launched map tasks=2
            Rack-local map tasks=2
            Total time spent by all maps in occupied slots (ms)=791952
            Total time spent by all reduces in occupied slots (ms)=0
            Total time spent by all map tasks (ms)=791952
            Total vcore-seconds taken by all map tasks=791952
            Total megabyte-seconds taken by all map tasks=810958848
        Map-Reduce Framework
            Map input records=2
            Map output records=2
            Input split bytes=74694
            Spilled Records=0
            Failed Shuffles=0
            Merged Map outputs=0
            GC time elapsed (ms)=8106
            CPU time spent (ms)=20240
            Physical memory (bytes) snapshot=198356992
            Virtual memory (bytes) snapshot=4128448512
            Total committed heap usage (bytes)=32157696
        File Input Format Counters
            Bytes Read=0
        File Output Format Counters
            Bytes Written=222
        Elasticsearch Hadoop Counters
            Bulk Retries=0
            Bulk Retries Total Time(ms)=0
            Bulk Total=0
            Bulk Total Time(ms)=0
            Bytes Accepted=0
            Bytes Received=1102
            Bytes Retried=0
            Bytes Sent=296
            Documents Accepted=0
            Documents Received=0
            Documents Retried=0
            Documents Sent=0
            Network Retries=0
            Network Total Time(ms)=5973
            Node Retries=0
            Scroll Total=1
            Scroll Total Time(ms)=666
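The Job Counters also show how the map containers were sized. Assuming the "vcore-seconds" and "megabyte-seconds" counters are actually measured in milliseconds (the numbers only line up that way, and later Hadoop releases appear to label them "-milliseconds"), dividing them by the total map time gives the resources per container. A small worked check using the values above; the class name is hypothetical:

```java
import java.util.Locale;

// Worked check of the "Job Counters" above. Values are copied from the job output;
// treating the vcore/megabyte counters as millisecond-based is an assumption.
class MapCounterMath {
    static final long MAP_MILLIS = 791_952L;   // Total time spent by all map tasks (ms)
    static final long VCORE_UNITS = 791_952L;  // Total vcore-seconds taken by all map tasks
    static final long MB_UNITS = 810_958_848L; // Total megabyte-seconds taken by all map tasks
    static final int MAP_TASKS = 2;            // Launched map tasks

    // (MB x ms) / ms leaves the memory allocated to each map container
    static long containerMb() {
        return MB_UNITS / MAP_MILLIS;
    }

    // (vcores x ms) / ms leaves the vcores allocated to each map container
    static long vcoresPerContainer() {
        return VCORE_UNITS / MAP_MILLIS;
    }

    // Total map time is summed over tasks, so divide by the task count for the average
    static double avgMapSeconds() {
        return MAP_MILLIS / 1000.0 / MAP_TASKS;
    }

    public static void main(String[] args) {
        System.out.println("MB per map container: " + containerMb());            // 1024
        System.out.println("vcores per map container: " + vcoresPerContainer()); // 1
        System.out.println(String.format(Locale.ROOT, "average map task: %.1f s", avgMapSeconds())); // 396.0 s
    }
}
```

The 1024 MB result matches the default value of mapreduce.map.memory.mb.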

At the end it still reports that the job history server cannot be found, but this does not affect the result.

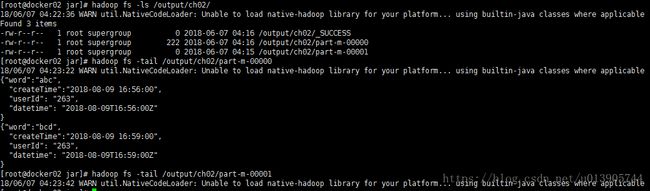

Then check that the output files and data in HDFS are correct.

As you can see, the index data was successfully written into HDFS.

I ran into the following problems when using this in practice:

1. To use this feature in a Spring Boot Gradle project, I added the following dependencies:

    compile group: 'org.apache.hadoop', name: 'hadoop-core', version: '1.2.1'
    compile group: 'org.apache.hadoop', name: 'hadoop-hdfs', version: '2.7.2'
    compile group: 'org.elasticsearch', name: 'elasticsearch-hadoop', version: '6.2.4'

After that, Spring Boot failed at startup with the error: A child container failed during start

Looking at the Gradle dependency tree (gradle dependencies), the part for hadoop-core:1.2.1 is:

    +--- org.apache.hadoop:hadoop-core:1.2.1
    |    +--- commons-cli:commons-cli:1.2
    |    +--- xmlenc:xmlenc:0.52
    |    +--- com.sun.jersey:jersey-core:1.8 -> 1.9
    |    +--- com.sun.jersey:jersey-json:1.8
    |    |    +--- org.codehaus.jettison:jettison:1.1
    |    |    |    \--- stax:stax-api:1.0.1
    |    |    +--- com.sun.xml.bind:jaxb-impl:2.2.3-1
    |    |    |    \--- javax.xml.bind:jaxb-api:2.2.2 -> 2.3.0
    |    |    +--- org.codehaus.jackson:jackson-core-asl:1.7.1 -> 1.9.13
    |    |    +--- org.codehaus.jackson:jackson-mapper-asl:1.7.1 -> 1.9.13
    |    |    |    \--- org.codehaus.jackson:jackson-core-asl:1.9.13
    |    |    +--- org.codehaus.jackson:jackson-jaxrs:1.7.1
    |    |    |    +--- org.codehaus.jackson:jackson-core-asl:1.7.1 -> 1.9.13
    |    |    |    \--- org.codehaus.jackson:jackson-mapper-asl:1.7.1 -> 1.9.13 (*)
    |    |    +--- org.codehaus.jackson:jackson-xc:1.7.1
    |    |    |    +--- org.codehaus.jackson:jackson-core-asl:1.7.1 -> 1.9.13
    |    |    |    \--- org.codehaus.jackson:jackson-mapper-asl:1.7.1 -> 1.9.13 (*)
    |    |    \--- com.sun.jersey:jersey-core:1.8 -> 1.9
    |    +--- com.sun.jersey:jersey-server:1.8 -> 1.9
    |    |    +--- asm:asm:3.1
    |    |    \--- com.sun.jersey:jersey-core:1.9
    |    +--- commons-io:commons-io:2.1 -> 2.4
    |    +--- commons-httpclient:commons-httpclient:3.0.1 -> 3.1
    |    |    +--- commons-logging:commons-logging:1.0.4 -> 1.2
    |    |    \--- commons-codec:commons-codec:1.2 -> 1.11
    |    +--- commons-codec:commons-codec:1.4 -> 1.11
    |    +--- org.apache.commons:commons-math:2.1
    |    +--- commons-configuration:commons-configuration:1.6
    |    |    +--- commons-collections:commons-collections:3.2.1
    |    |    +--- commons-lang:commons-lang:2.4 -> 2.6
    |    |    +--- commons-logging:commons-logging:1.1.1 -> 1.2
    |    |    +--- commons-digester:commons-digester:1.8
    |    |    |    +--- commons-beanutils:commons-beanutils:1.7.0 (*)
    |    |    |    \--- commons-logging:commons-logging:1.1 -> 1.2
    |    |    \--- commons-beanutils:commons-beanutils-core:1.8.0
    |    |         \--- commons-logging:commons-logging:1.1.1 -> 1.2
    |    +--- commons-net:commons-net:1.4.1
    |    |    \--- oro:oro:2.0.8
    |    +--- org.mortbay.jetty:jetty:6.1.26
    |    |    +--- org.mortbay.jetty:jetty-util:6.1.26
    |    |    \--- org.mortbay.jetty:servlet-api:2.5-20081211
    |    +--- org.mortbay.jetty:jetty-util:6.1.26
    |    +--- tomcat:jasper-runtime:5.5.12
    |    +--- tomcat:jasper-compiler:5.5.12
    |    +--- org.mortbay.jetty:jsp-api-2.1:6.1.14
    |    |    \--- org.mortbay.jetty:servlet-api-2.5:6.1.14
    |    +--- org.mortbay.jetty:jsp-2.1:6.1.14
    |    |    +--- org.eclipse.jdt:core:3.1.1
    |    |    +--- org.mortbay.jetty:jsp-api-2.1:6.1.14 (*)
    |    |    \--- ant:ant:1.6.5
    |    +--- commons-el:commons-el:1.0
    |    |    \--- commons-logging:commons-logging:1.0.3 -> 1.2
    |    +--- net.java.dev.jets3t:jets3t:0.6.1
    |    |    +--- commons-codec:commons-codec:1.3 -> 1.11
    |    |    +--- commons-logging:commons-logging:1.1.1 -> 1.2
    |    |    \--- commons-httpclient:commons-httpclient:3.1 (*)
    |    +--- hsqldb:hsqldb:1.8.0.10
    |    +--- oro:oro:2.0.8
    |    +--- org.eclipse.jdt:core:3.1.1
    |    \--- org.codehaus.jackson:jackson-mapper-asl:1.8.8 -> 1.9.13 (*)

And the part for hadoop-hdfs:2.7.1:

    +--- org.apache.hadoop:hadoop-hdfs:2.7.1
    |    +--- com.google.guava:guava:11.0.2 -> 18.0
    |    +--- org.mortbay.jetty:jetty:6.1.26 (*)
    |    +--- org.mortbay.jetty:jetty-util:6.1.26
    |    +--- com.sun.jersey:jersey-core:1.9
    |    +--- com.sun.jersey:jersey-server:1.9 (*)
    |    +--- commons-cli:commons-cli:1.2
    |    +--- commons-codec:commons-codec:1.4 -> 1.11
    |    +--- commons-io:commons-io:2.4
    |    +--- commons-lang:commons-lang:2.6
    |    +--- commons-logging:commons-logging:1.1.3 -> 1.2
    |    +--- commons-daemon:commons-daemon:1.0.13
    |    +--- log4j:log4j:1.2.17
    |    +--- com.google.protobuf:protobuf-java:2.5.0
    |    +--- javax.servlet:servlet-api:2.5
    |    +--- org.codehaus.jackson:jackson-core-asl:1.9.13
    |    +--- org.codehaus.jackson:jackson-mapper-asl:1.9.13 (*)
    |    +--- xmlenc:xmlenc:0.52
    |    +--- io.netty:netty-all:4.0.23.Final -> 4.1.22.Final
    |    +--- xerces:xercesImpl:2.9.1
    |    |    \--- xml-apis:xml-apis:1.3.04 -> 1.4.01
    |    +--- org.apache.htrace:htrace-core:3.1.0-incubating
    |    \--- org.fusesource.leveldbjni:leveldbjni-all:1.8

Cause:

Both of them pull in servlet-api 2.5, while Spring Boot requires Servlet 3.0 or later.

Solution:

Exclude servlet-api 2.5 by adding the following to build.gradle:

    configurations {
        all*.exclude group: 'javax.servlet'
    }

References:

java - SpringBoot Catalina LifeCycle Exception - Stack Overflow

解决gradle管理依赖中 出现servlet-api.jar冲突的问题 (Resolving servlet-api.jar conflicts in Gradle-managed dependencies) - CSDN blog
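Note that the hadoop-core tree above also brings in servlet API jars through org.mortbay.jetty artifacts (servlet-api:2.5-20081211 and servlet-api-2.5:6.1.14), which an exclude on group 'javax.servlet' does not match. To confirm which servlet API the application actually loads, a small reflection-based check can help. This is a hypothetical diagnostic (the class name is made up); it relies on ServletContext.getEffectiveMajorVersion(), a method added in Servlet 3.0:

```java
import java.lang.reflect.Method;
import java.security.CodeSource;

// Hypothetical diagnostic: which javax.servlet API is visible, and which jar it comes from.
class ServletApiCheck {
    static String describe(ClassLoader loader) {
        Class<?> context;
        try {
            // initialize=false: only locate the class, do not run static initializers
            context = Class.forName("javax.servlet.ServletContext", false, loader);
        } catch (ClassNotFoundException e) {
            return "javax.servlet.ServletContext not on classpath";
        }
        // getEffectiveMajorVersion() exists only in Servlet 3.0+, so its absence means an older API such as 2.5
        String api = hasMethod(context, "getEffectiveMajorVersion")
                ? "Servlet 3.0+ API"
                : "pre-3.0 Servlet API";
        CodeSource source = context.getProtectionDomain().getCodeSource();
        String location = source == null ? "unknown location" : String.valueOf(source.getLocation());
        return api + " loaded from " + location;
    }

    static boolean hasMethod(Class<?> type, String name) {
        for (Method method : type.getMethods()) {
            if (method.getName().equals(name)) {
                return true;
            }
        }
        return false;
    }

    public static void main(String[] args) {
        System.out.println(describe(ServletApiCheck.class.getClassLoader()));
    }
}
```

Run it with the same classpath as the Spring Boot application; a "pre-3.0" result points to a leftover servlet-api 2.5 jar, and the printed location shows which one.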

2. The HDFS cluster runs Hadoop 2.7.2. When writing ES data into HDFS, the following error occurred:

    ERROR security.UserGroupInformation: PriviledgedActionException as:root cause:org.apache.hadoop.ipc.RemoteException: Server IPC version 9 cannot communicate with client version 4
    org.apache.hadoop.ipc.RemoteException: Server IPC version 9 cannot communicate with client version 4

Cause:

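hadoop-core 1.2.1 is a Hadoop 1.x client and is too old to talk to a 2.7.2 cluster; the error states the mismatch directly (server IPC version 9 vs. client IPC version 4).

So the fix is to change the dependencies to: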

    compile group: 'org.apache.hadoop', name: 'hadoop-common', version: '2.7.2'
    compile group: 'org.apache.hadoop', name: 'hadoop-mapreduce-client-core', version: '2.7.2'
    compile group: 'org.apache.hadoop', name: 'hadoop-client', version: '2.7.2'
    compile group: 'org.apache.hadoop', name: 'hadoop-hdfs', version: '2.7.2'
    compile group: 'org.elasticsearch', name: 'elasticsearch-hadoop', version: '6.2.4'

References:

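intellij的maven工程"Server IPC version 9 cannot communicate with client version"错误的解决办法 (Fixing the "Server IPC version 9 cannot communicate with client version" error in an IntelliJ Maven project) - CSDN blog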
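After changing the dependencies, one way to confirm which Hadoop client actually ends up on the classpath is to ask org.apache.hadoop.util.VersionInfo, whose static getVersion() exists in both Hadoop 1.x and 2.x. The sketch below calls it through reflection so it compiles without Hadoop jars; the class name and the major-version comparison are a rough heuristic of my own, not an official compatibility rule:

```java
import java.lang.reflect.Method;

// Hypothetical sketch: report the Hadoop client version on the classpath and compare its
// major version with the cluster's (2.7.2 in this post).
class HadoopVersionCheck {
    static String clientVersion() {
        try {
            Class<?> versionInfo = Class.forName("org.apache.hadoop.util.VersionInfo");
            Method getVersion = versionInfo.getMethod("getVersion");
            return (String) getVersion.invoke(null); // static method, so no instance is needed
        } catch (ReflectiveOperationException e) {
            return null; // no Hadoop client on the classpath
        }
    }

    // Rough heuristic: compare only the leading version component ("1" vs "2")
    static boolean sameMajor(String client, String server) {
        return client.split("\\.")[0].equals(server.split("\\.")[0]);
    }

    public static void main(String[] args) {
        String server = "2.7.2"; // cluster version from this post
        String client = clientVersion();
        if (client == null) {
            System.out.println("No Hadoop client on the classpath");
        } else if (!sameMajor(client, server)) {
            System.out.println("Client " + client + " and cluster " + server + " differ in major version");
        } else {
            System.out.println("Client " + client + " has the same major version as cluster " + server);
        }
    }
}
```

With the original hadoop-core 1.2.1 dependency this would report 1.2.1 against 2.7.2, which is exactly the combination behind the IPC version error above.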