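Training YOLOv5 (PyTorch) on a Custom Dataset

I. Testing the YOLOv5 source code

1. Download the source code (v4.0)

Official repository: https://github.com/ultralytics/yolov5

2. Download the models

Official link: https://github.com/ultralytics/yolov5/releases

yolov5l.pt

yolov5s.pt

yolov5x.pt

yolov5m.pt

Place the weight files in the yolov5-master/weights folder.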

3. Set up the environment

pip3 install -r requirements.txt

4. Test

git clone https://github.com/ultralytics/yolov5 yolov5-master

cd yolov5-master

# 1 Detect with the built-in webcam

python3 detect.py --source 0

# 2 Other sources

python3 detect.py --source file.jpg # image

python3 detect.py --source file.mp4 # video

# 3 Batch detection on a folder of images

python3 detect.py --source data/images --weights yolov5s.pt --conf 0.25

二、训练自己数据集

1、数据集构建

在yolov5-master文件夹构建my_Data文件夹存放自己数据

数据集结构显示:

myData

......images #存放图像

......Annotations #存放图像对应的xml文件

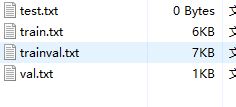

......ImageSets/Main #存放训练/存放train.txt/val.txt/test.txt/trainval.txt文件

......test.py #生成train.txt/val.txt/test.txt/trainval.txt文件
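Before generating the split lists, it can help to confirm that every image has a matching XML file. The following is a minimal sketch, not part of the original workflow: the function name `find_unpaired` and the `.jpg` extension are assumptions for illustration.

```python
from pathlib import Path


def find_unpaired(data_root, image_ext=".jpg"):
    """Return (image stems without XML, XML stems without image), both sorted.

    Hypothetical helper: assumes images/ and Annotations/ sit directly
    under data_root, as in the layout above.
    """
    root = Path(data_root)
    image_stems = {p.stem for p in (root / "images").glob(f"*{image_ext}")}
    xml_stems = {p.stem for p in (root / "Annotations").glob("*.xml")}
    return sorted(image_stems - xml_stems), sorted(xml_stems - image_stems)
```

Calling `find_unpaired("my_Data")` from yolov5-master returns two lists; both should be empty for a consistent dataset.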

2. Generate the .txt files under ImageSets/Main

Create my_test.py and run it from inside my_Data, since its default paths are relative:

# coding:utf-8
import os
import random
import argparse

parser = argparse.ArgumentParser()
# Path to the XML annotations; change it to match your data (usually the Annotations folder)
parser.add_argument('--xml_path', default='Annotations', type=str, help='input xml label path')
# Output folder for the split lists; use ImageSets/Main under your own data folder
parser.add_argument('--txt_path', default='ImageSets/Main', type=str, help='output txt label path')
opt = parser.parse_args()

trainval_percent = 1.0  # share of all samples used for train+val; the rest goes to test (1.0 leaves test.txt empty)
train_percent = 0.9  # share of train+val used for training; the rest goes to val
xmlfilepath = opt.xml_path
txtsavepath = opt.txt_path
total_xml = os.listdir(xmlfilepath)
if not os.path.exists(txtsavepath):
    os.makedirs(txtsavepath)

num = len(total_xml)
list_index = range(num)
tv = int(num * trainval_percent)
tr = int(tv * train_percent)
trainval = random.sample(list_index, tv)
train = random.sample(trainval, tr)

file_trainval = open(txtsavepath + '/trainval.txt', 'w')
file_test = open(txtsavepath + '/test.txt', 'w')
file_train = open(txtsavepath + '/train.txt', 'w')
file_val = open(txtsavepath + '/val.txt', 'w')

for i in list_index:
    name = total_xml[i][:-4] + '\n'  # file name without the ".xml" extension
    if i in trainval:
        file_trainval.write(name)
        if i in train:
            file_train.write(name)
        else:
            file_val.write(name)
    else:
        file_test.write(name)

file_trainval.close()
file_train.close()
file_val.close()
file_test.close()
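To see what the two percentages do, here is a small sketch of the same split arithmetic on an in-memory list. The function name `split_names` and the `seed` parameter are assumptions added for reproducibility; the script above uses the unseeded global random generator.

```python
import random


def split_names(names, trainval_percent=1.0, train_percent=0.9, seed=0):
    """Split names into train/val/test using the same arithmetic as my_test.py.

    Hypothetical helper; seed is an added assumption so the result is repeatable.
    """
    rng = random.Random(seed)
    num = len(names)
    tv = int(num * trainval_percent)  # size of train+val
    tr = int(tv * train_percent)      # size of train
    trainval = rng.sample(range(num), tv)
    train = set(rng.sample(trainval, tr))
    trainval = set(trainval)
    parts = {"train": [], "val": [], "test": []}
    for i, name in enumerate(names):
        if i in train:
            parts["train"].append(name)
        elif i in trainval:
            parts["val"].append(name)
        else:
            parts["test"].append(name)
    return parts
```

With 100 names and the default percentages, this yields 90 training names, 10 validation names, and no test names, which matches the settings used in the script.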

3. Convert the dataset to YOLO txt format and generate the label files

Create my_label.py:

# -*- coding: utf-8 -*-
import os
import xml.etree.ElementTree as ET

sets = ['train', 'val', 'test']
classes = ["person"]  # change to your own classes
# Dataset root; change it to your own path
data_root = '/home/wyh/keti/mubiao/yolov5-master/my_Data'


def convert(size, box):
    """Convert (xmin, xmax, ymin, ymax) in pixels to normalized (x_center, y_center, w, h)."""
    dw = 1. / (size[0])
    dh = 1. / (size[1])
    # The -1 offset is carried over from the original conversion script
    # (commonly explained as compensating for VOC's 1-based pixel coordinates)
    x = (box[0] + box[1]) / 2.0 - 1
    y = (box[2] + box[3]) / 2.0 - 1
    w = box[1] - box[0]
    h = box[3] - box[2]
    x = x * dw
    w = w * dw
    y = y * dh
    h = h * dh
    return x, y, w, h


def convert_annotation(image_id):
    in_path = '%s/Annotations/%s.xml' % (data_root, image_id)
    out_path = '%s/labels/%s.txt' % (data_root, image_id)
    with open(in_path, encoding='UTF-8') as in_file, open(out_path, 'w') as out_file:
        tree = ET.parse(in_file)
        root = tree.getroot()
        size = root.find('size')
        w = int(size.find('width').text)
        h = int(size.find('height').text)
        for obj in root.iter('object'):
            difficult = obj.find('difficult').text
            cls = obj.find('name').text
            # Skip classes that are not listed and objects marked as difficult
            if cls not in classes or int(difficult) == 1:
                continue
            cls_id = classes.index(cls)
            xmlbox = obj.find('bndbox')
            b1 = float(xmlbox.find('xmin').text)
            b2 = float(xmlbox.find('xmax').text)
            b3 = float(xmlbox.find('ymin').text)
            b4 = float(xmlbox.find('ymax').text)
            # Clamp boxes that extend past the image border
            if b2 > w:
                b2 = w
            if b4 > h:
                b4 = h
            bb = convert((w, h), (b1, b2, b3, b4))
            out_file.write(str(cls_id) + " " + " ".join([str(a) for a in bb]) + '\n')


os.makedirs('%s/labels' % data_root, exist_ok=True)
for image_set in sets:
    with open('%s/ImageSets/Main/%s.txt' % (data_root, image_set)) as f:
        image_ids = f.read().strip().split()
    # One list file per split, holding the absolute path of each image
    with open('%s/%s.txt' % (data_root, image_set), 'w') as list_file:
        for image_id in image_ids:
            list_file.write('%s/images/%s.jpg\n' % (data_root, image_id))
            convert_annotation(image_id)

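After running it, a labels folder and three txt files (train.txt, val.txt, test.txt) appear under my_Data. The labels folder holds one annotation file per image.

Label file format (one object per line: class id, x_center, y_center, width, height, with the four box values normalized by the image size):

0 0.8095238095238095 0.53 0.3630952380952381 0.892

0 0.25297619047619047 0.604 0.41666666666666663 0.46

0 0.30952380952380953 0.301 0.4583333333333333 0.28200000000000003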
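To sanity-check a label line, it can be decoded back into pixel corners. This is a minimal sketch; the function name `yolo_to_pixel_box` is an assumption, and the decoding is the plain inverse of the normalization, so it does not undo the -1 center offset applied by the conversion script (decoded corners sit about one pixel up and to the left of the VOC values).

```python
def yolo_to_pixel_box(label_line, img_w, img_h):
    """Decode 'cls xc yc w h' (normalized) into (cls_id, (xmin, ymin, xmax, ymax)) in pixels.

    Hypothetical helper for checking labels by eye.
    """
    cls_id, xc, yc, w, h = label_line.split()
    xc, w = float(xc) * img_w, float(w) * img_w
    yc, h = float(yc) * img_h, float(h) * img_h
    return int(cls_id), (xc - w / 2, yc - h / 2, xc + w / 2, yc + h / 2)
```

For instance, the line `0 0.5 0.5 0.5 0.5` on a 100x200 image decodes to class 0 with corners (25.0, 50.0, 75.0, 150.0).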

4. Configuration file

4.1 Create my_data.yaml under my_Data

File contents:

# Custom dataset in YOLO format
# Train command: python train.py --data my_Data/my_data.yaml

# train and val data as 1) directory: path/images/, 2) file: path/images.txt, or 3) list: [path1/images/, path2/images/]
train: /home/wyh/keti/mubiao/yolov5-master/my_Data/images/train
val: /home/wyh/keti/mubiao/yolov5-master/my_Data/images/val
#test: /home/wyh/keti/mubiao/yolov5-master/my_Data/test.txt

# number of classes
nc: 1

# class names
names: [ 'person' ]

Note: reorganize the labels and images folders as follows

images

......train # original images

......val

labels

......train # label files

......val
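The reorganization can be done by hand, or scripted from the split lists in ImageSets/Main. The following is a sketch under assumptions: the function name `split_into_subfolders` is hypothetical, images are assumed to be `.jpg`, and files are copied rather than moved so the flat originals stay in place.

```python
import shutil
from pathlib import Path


def split_into_subfolders(data_root, subsets=("train", "val"), image_ext=".jpg"):
    """Copy images and labels into images/<subset> and labels/<subset>.

    Hypothetical helper: reads ImageSets/Main/<subset>.txt for the image ids
    and returns the number of ids handled per subset.
    """
    root = Path(data_root)
    counts = {}
    for subset in subsets:
        ids = (root / "ImageSets" / "Main" / f"{subset}.txt").read_text().split()
        img_dir = root / "images" / subset
        lbl_dir = root / "labels" / subset
        img_dir.mkdir(parents=True, exist_ok=True)
        lbl_dir.mkdir(parents=True, exist_ok=True)
        for image_id in ids:
            shutil.copy2(root / "images" / f"{image_id}{image_ext}", img_dir)
            label = root / "labels" / f"{image_id}.txt"
            if label.exists():  # skip silently if no label file was generated
                shutil.copy2(label, lbl_dir)
        counts[subset] = len(ids)
    return counts
```

Copying doubles the disk usage; swapping `shutil.copy2` for `shutil.move` avoids that once the result has been checked.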

5. Modify the model configuration file

5.1 Create my_yolov5s.yaml under my_Data

# parameters
nc: 1 # number of classes
depth_multiple: 0.33 # model depth multiple
width_multiple: 0.50 # layer channel multiple

# anchors
anchors:
  - [10,13, 16,30, 33,23] # P3/8
  - [30,61, 62,45, 59,119] # P4/16
  - [116,90, 156,198, 373,326] # P5/32

......

Set nc to your number of classes, and adjust the anchors if your object sizes call for it.

5.2 K-means clustering (save as kmeans.py, which new_anchors.py imports)

import numpy as np


def iou(box, clusters):
    """
    Calculates the Intersection over Union (IoU) between a box and k clusters.
    :param box: tuple or array, shifted to the origin (i. e. width and height)
    :param clusters: numpy array of shape (k, 2) where k is the number of clusters
    :return: numpy array of shape (k,) where k is the number of clusters
    """
    x = np.minimum(clusters[:, 0], box[0])
    y = np.minimum(clusters[:, 1], box[1])
    if np.count_nonzero(x == 0) > 0 or np.count_nonzero(y == 0) > 0:
        raise ValueError("Box has no area")
    intersection = x * y
    box_area = box[0] * box[1]
    cluster_area = clusters[:, 0] * clusters[:, 1]
    iou_ = intersection / (box_area + cluster_area - intersection)
    return iou_


def avg_iou(boxes, clusters):
    """
    Calculates the average Intersection over Union (IoU) between a numpy array of boxes and k clusters.
    :param boxes: numpy array of shape (r, 2), where r is the number of rows
    :param clusters: numpy array of shape (k, 2) where k is the number of clusters
    :return: average IoU as a single float
    """
    return np.mean([np.max(iou(boxes[i], clusters)) for i in range(boxes.shape[0])])


def translate_boxes(boxes):
    """
    Translates all the boxes to the origin.
    :param boxes: numpy array of shape (r, 4)
    :return: numpy array of shape (r, 2)
    """
    new_boxes = boxes.copy()
    for row in range(new_boxes.shape[0]):
        new_boxes[row][2] = np.abs(new_boxes[row][2] - new_boxes[row][0])
        new_boxes[row][3] = np.abs(new_boxes[row][3] - new_boxes[row][1])
    return np.delete(new_boxes, [0, 1], axis=1)


def kmeans(boxes, k, dist=np.median):
    """
    Calculates k-means clustering with the Intersection over Union (IoU) metric.
    :param boxes: numpy array of shape (r, 2), where r is the number of rows
    :param k: number of clusters
    :param dist: distance function
    :return: numpy array of shape (k, 2)
    """
    rows = boxes.shape[0]
    distances = np.empty((rows, k))
    last_clusters = np.zeros((rows,))
    np.random.seed()
    # the Forgy method will fail if the whole array contains the same rows
    clusters = boxes[np.random.choice(rows, k, replace=False)]
    while True:
        # Distance of every box to every cluster center: 1 - IoU
        for row in range(rows):
            distances[row] = 1 - iou(boxes[row], clusters)
        nearest_clusters = np.argmin(distances, axis=1)
        # Stop once the assignments no longer change
        if (last_clusters == nearest_clusters).all():
            break
        for cluster in range(k):
            clusters[cluster] = dist(boxes[nearest_clusters == cluster], axis=0)
        last_clusters = nearest_clusters
    return clusters


if __name__ == '__main__':
    a = np.array([[1, 2, 3, 4], [5, 7, 6, 8]])
    print(translate_boxes(a))
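The distance used by this clustering is 1 - IoU, where both boxes are anchored at the origin so only width and height matter. As a worked example, here is a plain-Python sketch of that measure for a single pair; the function name `wh_iou` is an assumption and it is not part of kmeans.py.

```python
def wh_iou(box, anchor):
    """IoU of two (width, height) boxes that share the same top-left corner.

    Hypothetical helper mirroring the per-pair arithmetic of iou() above.
    """
    inter = min(box[0], anchor[0]) * min(box[1], anchor[1])
    union = box[0] * box[1] + anchor[0] * anchor[1] - inter
    return inter / union
```

For a 2x2 box and a 1x1 anchor the intersection is 1 and the union is 4 + 1 - 1 = 4, so the IoU is 0.25 and the clustering distance is 0.75.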

The script that clusters the annotations into new anchors, new_anchors.py:

# -*- coding: utf-8 -*-
# Compute prior (anchor) boxes from the annotation files
import os
import numpy as np
import xml.etree.ElementTree as et
from kmeans import kmeans, avg_iou

FILE_ROOT = "/home/wyh/keti/mubiao/yolov5-master/my_Data/"  # root path
ANNOTATION_ROOT = "Annotations"  # annotation folder of the dataset
ANNOTATION_PATH = FILE_ROOT + ANNOTATION_ROOT
ANCHORS_TXT_PATH = "/home/wyh/keti/mubiao/yolov5-master/my_Data/anchors.txt"
CLUSTERS = 9  # number of anchors; the model yaml lists 3 scales x 3 anchors = 9
CLASS_NAMES = ['person']


def load_data(anno_dir, class_names):
    xml_names = os.listdir(anno_dir)
    boxes = []
    for xml_name in xml_names:
        xml_pth = os.path.join(anno_dir, xml_name)
        tree = et.parse(xml_pth)
        width = float(tree.findtext("./size/width"))
        height = float(tree.findtext("./size/height"))
        for obj in tree.findall("./object"):
            cls_name = obj.findtext("name")
            if cls_name in class_names:
                # Box corners normalized by the image size
                xmin = float(obj.findtext("bndbox/xmin")) / width
                ymin = float(obj.findtext("bndbox/ymin")) / height
                xmax = float(obj.findtext("bndbox/xmax")) / width
                ymax = float(obj.findtext("bndbox/ymax")) / height
                box = [xmax - xmin, ymax - ymin]
                boxes.append(box)
            else:
                continue
    return np.array(boxes)


if __name__ == '__main__':
    train_boxes = load_data(ANNOTATION_PATH, CLASS_NAMES)
    count = 1
    best_accuracy = 0
    best_anchors = []
    best_ratios = []
    for i in range(10):  # number of k-means restarts; keep it small, otherwise it takes a long time
        anchors_tmp = []
        clusters = kmeans(train_boxes, k=CLUSTERS)
        idx = clusters[:, 0].argsort()
        clusters = clusters[idx]
        # print(clusters)
        for j in range(CLUSTERS):
            # Scale the normalized sizes to a 640-pixel input
            anchor = [round(clusters[j][0] * 640, 2), round(clusters[j][1] * 640, 2)]
            anchors_tmp.append(anchor)
            print(f"Anchors:{anchor}")
        temp_accuracy = avg_iou(train_boxes, clusters) * 100
        print("Train_Accuracy:{:.2f}%".format(temp_accuracy))
        ratios = np.around(clusters[:, 0] / clusters[:, 1], decimals=2).tolist()
        ratios.sort()
        print("Ratios:{}".format(ratios))
        print(20 * "*" + " {} ".format(count) + 20 * "*")
        count += 1
        # Keep the restart with the highest average IoU
        if temp_accuracy > best_accuracy:
            best_accuracy = temp_accuracy
            best_anchors = anchors_tmp
            best_ratios = ratios
    with open(ANCHORS_TXT_PATH, "w") as anchors_txt:
        anchors_txt.write("Best Accuracy = " + str(round(best_accuracy, 2)) + '%' + "\n")
        anchors_txt.write("Best Anchors = " + str(best_anchors) + "\n")
        anchors_txt.write("Best Ratios = " + str(best_ratios))

Adjust the paths in both scripts to match your own setup.
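The clustered anchors still have to be copied into the anchors section of my_yolov5s.yaml. Below is a sketch of one way to format them, assuming the clustering was run with 9 clusters. The function name `anchors_to_yaml` is hypothetical, and sorting by area before grouping into P3/P4/P5 is an assumption based on the default anchors growing from small to large.

```python
def anchors_to_yaml(anchors, per_layer=3, layer_names=("P3/8", "P4/16", "P5/32")):
    """Format [w, h] pairs as the 'anchors:' block of a YOLOv5 model yaml.

    Hypothetical helper: sorts by area (assumption) and rounds to whole pixels.
    """
    if len(anchors) != per_layer * len(layer_names):
        raise ValueError("expected %d anchors" % (per_layer * len(layer_names)))
    ordered = sorted(anchors, key=lambda wh: wh[0] * wh[1])
    lines = ["anchors:"]
    for i, name in enumerate(layer_names):
        group = ordered[i * per_layer:(i + 1) * per_layer]
        flat = ", ".join("%d,%d" % (round(w), round(h)) for w, h in group)
        lines.append("  - [%s]  # %s" % (flat, name))
    return "\n".join(lines)
```

Passing the list written after "Best Anchors =" in anchors.txt produces three lines that can be pasted over the default anchors.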

III. Training

1. Training

The relevant arguments in train.py:

parser = argparse.ArgumentParser()
parser.add_argument('--weights', type=str, default='yolov5s.pt', help='initial weights path')
parser.add_argument('--cfg', type=str, default='my_Data/my_yolov5s.yaml', help='model.yaml path')
parser.add_argument('--data', type=str, default='my_Data/my_data.yaml', help='data.yaml path')
parser.add_argument('--hyp', type=str, default='data/hyp.scratch.yaml', help='hyperparameters path')
parser.add_argument('--epochs', type=int, default=300)
parser.add_argument('--batch-size', type=int, default=16, help='total batch size for all GPUs')
parser.add_argument('--img-size', nargs='+', type=int, default=[640, 640], help='[train, test] image sizes')

Change the defaults for cfg, data, and so on to point at your own files.

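epochs: number of full passes over the dataset during training; lower it if training on your GPU takes too long.

batch-size: number of images processed per step (the mini-batch size for gradient descent); lower it if your GPU runs out of memory.

cfg: model configuration file that defines the network structure

data: dataset configuration file that lists the training and validation data

img-size: input image width and height; lower it if your GPU runs out of memory.

rect: rectangular training

resume: resume training from the most recently saved checkpoint

nosave: only save the final checkpoint

notest: only test on the final epoch

evolve: evolve hyperparameters

bucket: gsutil bucket

cache-images: cache images for faster training

weights: path to the initial weights file

name: run name; in v4.0 results are saved under runs/train/name (older releases instead renamed results.txt to results_name.txt)

device: cuda device, i.e. 0 or 0,1,2,3 or cpu

adam: use the Adam optimizer

multi-scale: multi-scale training, varying img-size by +/- 50%

single-cls: train the dataset as a single class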
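As a rough guide to how epochs and batch-size interact, the number of batches the trainer steps through can be estimated with simple arithmetic. This is a sketch with hypothetical function names; it counts batches only and ignores anything the training script does on top, such as gradient accumulation.

```python
import math


def batches_per_epoch(num_images, batch_size):
    """Number of mini-batches needed to see every image once (last batch may be partial)."""
    return math.ceil(num_images / batch_size)


def total_batches(num_images, batch_size, epochs):
    """Total mini-batches processed over the whole training run."""
    return batches_per_epoch(num_images, batch_size) * epochs
```

With 1000 training images and a batch size of 16, one epoch is 63 batches, so 300 epochs come to 18900 batches; halving the batch size roughly doubles that count while lowering memory use per step.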

Train:

python3 train.py --img 640 --batch 8 --epochs 5 --data my_Data/my_data.yaml --cfg my_Data/my_yolov5s.yaml --weights weights/yolov5s.pt --device cpu # for GPU 0, use --device 0

2. Visualization

Training creates a runs folder; point TensorBoard at it:

tensorboard --logdir=runs

IV. Testing

Reference: https://blog.csdn.net/qq_36756866/article/details/109111065