Implementing the softmax and sigmoid functions and their cross-entropy losses (softmax cross-entropy, sigmoid cross-entropy) in Python

Editor: Jupyter Notebook

TensorFlow 2.0

Each computation is done two ways, with NumPy and with TensorFlow; the TensorFlow version is recommended.

import tensorflow as tf

import numpy as np

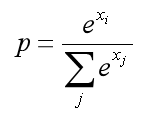

Implementing the softmax function

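# NumPy version
def softmax(x):
    # Exponentiate, then normalize each row so it sums to 1
    x_exp = np.exp(x)
    x_sum = np.sum(x_exp, axis=1, keepdims=True)
    prob_x = x_exp / x_sum
    return prob_x

test_data = np.random.normal(size=[10, 5])

# Compare with the built-in op; every entry should be True
(softmax(test_data) - tf.nn.softmax(test_data, axis=-1).numpy())**2 < 0.0001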

# TensorFlow version
def softmaxF(x):
    x_exp = tf.math.exp(x)
    x_sum = tf.reduce_sum(x_exp, axis=1, keepdims=True)
    prob_x = x_exp / x_sum
    return prob_x

# Compare with the built-in op; every entry should be True
(softmaxF(test_data).numpy() - tf.nn.softmax(test_data, axis=-1).numpy())**2 < 0.0001
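Both versions above exponentiate the raw inputs, which is fine for standard-normal test data but can overflow for large logits. A common remedy, sketched here in NumPy as an illustration rather than as part of the original notebook, is to subtract the row-wise maximum before exponentiating; the name `stable_softmax` is hypothetical.

```python
import numpy as np

def stable_softmax(x, axis=-1):
    # Subtracting the row-wise max leaves the result unchanged
    # but keeps np.exp from overflowing on large logits.
    shifted = x - np.max(x, axis=axis, keepdims=True)
    exp_shifted = np.exp(shifted)
    return exp_shifted / np.sum(exp_shifted, axis=axis, keepdims=True)

# The two rows differ only by a constant offset, so their softmax is identical.
logits = np.array([[1000.0, 1001.0, 1002.0],
                   [-1.0, 0.0, 1.0]])
probs = stable_softmax(logits)
print(probs)
print(probs.sum(axis=-1))  # each row sums to 1, up to rounding
```

Softmax is invariant to adding a constant to every entry of a row, which is why the shift does not change the probabilities.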

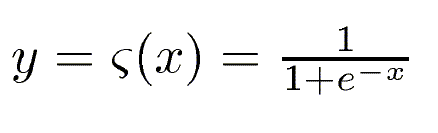

Implementing the sigmoid function

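# NumPy version
def sigmoid(x):
    # sigmoid(x) = 1 / (1 + exp(-x))
    prob_x = 1 / (1 + np.exp(-x))
    return prob_x

test_data = np.random.normal(size=[10, 5])

# Compare with the built-in op; every entry should be True
(sigmoid(test_data) - tf.nn.sigmoid(test_data).numpy())**2 < 0.0001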

# TensorFlow version
def sigmoidF(x):
    # sigmoid(x) = 1 / (1 + exp(-x))
    prob_x = 1 / (1 + tf.math.exp(-x))
    return prob_x

test_data = np.random.normal(size=[10, 5])

# Compare with the built-in op; every entry should be True
(sigmoidF(test_data).numpy() - tf.nn.sigmoid(test_data).numpy())**2 < 0.0001
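The direct formula works for the small random inputs used here, but `exp` can overflow when the input is a large negative number. One way around that, shown as a minimal NumPy sketch under that assumption (the name `stable_sigmoid` is hypothetical and not from the original notebook), is to only ever exponentiate `-|x|`:

```python
import numpy as np

def stable_sigmoid(x):
    x = np.asarray(x, dtype=np.float64)
    # exp(-|x|) always lies in [0, 1], so it cannot overflow.
    z = np.exp(-np.abs(x))
    # x >= 0: 1 / (1 + exp(-x));  x < 0: exp(x) / (1 + exp(x))
    return np.where(x >= 0, 1.0 / (1.0 + z), z / (1.0 + z))

values = np.array([-1000.0, -2.0, 0.0, 2.0, 1000.0])
out = stable_sigmoid(values)
print(out)
```

Both branches are algebraically the same function; the split only chooses the form whose exponent is non-positive.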

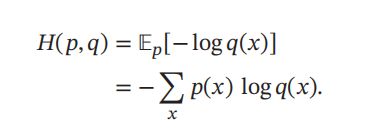

Implementing the softmax cross-entropy loss

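# NumPy version
def softmax_ce(x, label):
    # x: softmax probabilities, label: one-hot targets.
    # nan_to_num guards against 0 * log(0) producing NaN.
    loss = -np.sum(np.nan_to_num(label * np.log(x)), axis=1)
    return loss

test_data = np.random.normal(size=[10, 5])
prob = tf.nn.softmax(test_data).numpy()

# One-hot labels with a random class per row
label = np.zeros_like(test_data)
label[np.arange(10), np.random.randint(0, 5, size=10)] = 1.

# Per-example comparison with the built-in op; every entry should be True
((tf.nn.softmax_cross_entropy_with_logits(labels=label, logits=test_data)
  - softmax_ce(prob, label))**2 < 0.0001).numpy()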

# TensorFlow version
def softmax_ceF(x, label):
    # Per-example loss, then the mean over the batch
    losses = -tf.reduce_sum(label * tf.math.log(x), axis=1)
    loss = tf.reduce_mean(losses)
    print(loss, 'F')
    return loss

test_data = np.random.normal(size=[10, 5])
prob = tf.nn.softmax(test_data)

# One-hot labels with a random class per row
label = np.zeros_like(test_data)
label[np.arange(10), np.random.randint(0, 5, size=10)] = 1.

print(tf.reduce_mean(tf.nn.softmax_cross_entropy_with_logits(labels=label, logits=test_data)), 'T')

# Compare the batch means; the result should be True
((tf.reduce_mean(tf.nn.softmax_cross_entropy_with_logits(labels=label, logits=test_data))
  - softmax_ceF(prob, label))**2 < 0.0001).numpy()
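The functions above take probabilities as input, whereas `tf.nn.softmax_cross_entropy_with_logits` takes raw logits. As an illustrative sketch (NumPy only; `softmax_ce_from_logits` is a hypothetical name, not from the original notebook), the same per-example loss can be computed straight from logits with the log-sum-exp trick, which avoids taking the log of a probability that has underflowed to zero:

```python
import numpy as np

def softmax_ce_from_logits(logits, labels):
    # log-softmax via log-sum-exp: (x - max) - log(sum(exp(x - max)))
    shifted = logits - np.max(logits, axis=-1, keepdims=True)
    log_probs = shifted - np.log(np.sum(np.exp(shifted), axis=-1, keepdims=True))
    # Per-example loss; take the mean for a batch loss.
    return -np.sum(labels * log_probs, axis=-1)

rng = np.random.default_rng(0)
logits = rng.normal(size=(10, 5))
labels = np.zeros_like(logits)
labels[np.arange(10), rng.integers(0, 5, size=10)] = 1.0

losses = softmax_ce_from_logits(logits, labels)
print(losses.shape, losses.mean())
```

On well-behaved inputs this should agree with applying softmax first and then the probability-based loss; the difference only shows up for extreme logits.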

Implementing the sigmoid cross-entropy loss

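# NumPy version
def sigmoid_ce(x, label):
    # x: sigmoid probabilities, label: 0/1 targets.
    # Returns the loss summed over all examples.
    loss = -np.sum(np.nan_to_num(label * np.log(x) + (1 - label) * np.log(1 - x)))
    return loss

test_data = np.random.normal(size=[10])
prob = tf.nn.sigmoid(test_data).numpy()
label = np.random.randint(0, 2, 10).astype(test_data.dtype)

a = tf.nn.sigmoid_cross_entropy_with_logits(labels=label, logits=test_data).numpy()

# Compare the summed losses; the result should be True
((np.sum(a) - sigmoid_ce(prob, label))**2 < 0.0001)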

# TensorFlow version
def sigmoid_ceF(x, label):
    # Per-example loss, then the mean over the batch
    losses = -(label * tf.math.log(x) + (1. - label) * tf.math.log(1. - x))
    loss = tf.reduce_mean(losses)
    print(loss, 'F')
    return loss

test_data = np.random.normal(size=[10])
prob = tf.nn.sigmoid(test_data)
label = np.random.randint(0, 2, 10).astype(test_data.dtype)

print(tf.reduce_mean(tf.nn.sigmoid_cross_entropy_with_logits(labels=label, logits=test_data)), 'T')

# Compare the batch means; the result should be True
((tf.reduce_mean(tf.nn.sigmoid_cross_entropy_with_logits(labels=label, logits=test_data))
  - sigmoid_ceF(prob, label))**2 < 0.0001).numpy()
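The probability-based version takes `log(x)` and `log(1 - x)`, which break down once the sigmoid saturates at 0 or 1. The TensorFlow documentation for `tf.nn.sigmoid_cross_entropy_with_logits` describes a rearrangement that works on logits directly: `max(x, 0) - x * z + log(1 + exp(-|x|))`. Below is a minimal NumPy sketch of that formula; the function name `sigmoid_ce_from_logits` is hypothetical and not from the original notebook.

```python
import numpy as np

def sigmoid_ce_from_logits(logits, labels):
    # Algebraically equal to -(z*log(s) + (1-z)*log(1-s)) with s = sigmoid(x),
    # rearranged so exp only ever receives a non-positive argument.
    return (np.maximum(logits, 0.0)
            - logits * labels
            + np.log1p(np.exp(-np.abs(logits))))

rng = np.random.default_rng(0)
logits = rng.normal(size=10)
labels = rng.integers(0, 2, size=10).astype(logits.dtype)

losses = sigmoid_ce_from_logits(logits, labels)
print(losses.mean())
```

The function returns per-example losses, so summing or averaging them reproduces the two reductions used above.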