python函数笔记总结

1.logging模块的使用

from resource.util.get_logger import get_logger

main_logger=get_logger("main","data/log/{}.log".format(TrainOption.task_uuid))

main_logger.info("TASK ID {}".format(TrainOption.task_uuid))

import logging

engine_logger = logging.getLogger("main.engine")

engine.info("")

关于logging模块,现在大概知道是一个关于日志记录打印的模块,getLogger函数传入的参数是所要记录模块的日志名称,通常为模块名。返回的对象,可以接到多个输出,记录日志到文件或工作台;并且可以过滤不同等级的日志信息,方便管理。然后关于多模块使用logging,在同一个python解释器里返回的都是同一个对象实例,但可以输出不同名称的日志记录。

2.python命令行交互 argparse

使用argparse包使得python可以直接从命令行读取参数

import argparse

parser = argparse.ArgumentParser()

parser.add_argument("--m", choices=["1", "2", "3", "4","5"]) # choices设置参数值的范围,如果choices中的类型不是字符串,需要指定type

parser.add_argument("--k", action="store_true", help="with knowledge")# ‘--’表示是可选参数,action="store_true"表示在命令行中无需添加赋值内容,只需要写--k即表示args.k=true

parser.add_argument("--g", type=int, default=0)#default表示如果没有填入的话为默认值

arg=parser.parse_arg()

- choices设置参数值的范围,如果choices中的类型不是字符串,需要指定type

- ‘- -’表示是可选参数,action="store_true"表示在命令行中无需添加赋值内容,只需要写–k即表示args.k=true

- default表示如果没有填入的话为默认值

使用实例:

python 文件名.py --m 1 --k

程序执行时,得到arg.m=1,arg.k=true,arg.g=0

3.python函数中参数的传递

-

function(*arg):

在python函数的定义时,可能会根据情况的不同出现传入参数个数不固定的情况,以∗*∗加上形参名的方式表示函数的参数个数不固定,可以是0个,也可以是多个。传入的参数在函数内部被存放在以形参名为标识符的tuple中。

例子:

def loss(self,*targets):

target,history=targets[0],targets[1]

def function1(*x):

if len(x)==0:

print("None")

else:

print(x)

第二个例子运行结果:

function1()

输出:None

function1(1,2,3)

输出:1,2,3

-

function(**arg):

形参前加两个*,在函数调用时,参数将被存储在字典中,在函数调用时,传参数需要使用arg1=value1,arg2=value2…的形式

例子:

def function1(**x):

if len(x)==0:

print("None")

else:

print(x)

运行结果:

function1()

输出:None

function1(x=1,y=2,z=3)

输出:{‘x’:1,‘y’:2,‘z’:3}

4.pytorch中模型的保存与加载:torch.save(),torch.load()

模型的保存

torch.save(net,PATH)#保存模型的整个网络,包括网络的整个结构和参数

torch.save(net.state_dict,PATH)#只保存网络中的参数

模型的加载

分别对应上边的加载方法。

model_dict=torch.load(PATH)

model_dict=net.load_state_dict(torch.load(PATH))

在自定义的网络中的使用:

import torch

import torch.nn as nn

class neuralModel(nn.Module):

def __init__(self,device):super(neuralModel,self).__init__()

self.device=device#初始化函数

def dump(self,filename):#保存模型参数

torch.save(self.state_dict(),filename)

def load(self,filename):

state_dict=torch.load(open(filename,"rb"),map_location=self.device)

self.load_state_dict(state_dict,strict=True)

其中map_location为改变设备(gpu0,gpu1,cpu…)

5.torch.nn.Module中的training属性详情,与Module.train()和Module.eval()的关系

Module类的构造函数:

def __init__(self):

"""

Initializes internal Module state, shared by both nn.Module and ScriptModule.

"""

torch._C._log_api_usage_once("python.nn_module")

self.training = True

self._parameters = OrderedDict()

self._buffers = OrderedDict()

self._backward_hooks = OrderedDict()

self._forward_hooks = OrderedDict()

self._forward_pre_hooks = OrderedDict()

self._state_dict_hooks = OrderedDict()

self._load_state_dict_pre_hooks = OrderedDict()

self._modules = OrderedDict()

其中training属性表示BatchNorm与Dropout层在训练阶段和测试阶段中采取的策略不同,通过判断training值来决定前向传播策略。

对于一些含有BatchNorm,Dropout等层的模型,在训练和验证时使用的forward在计算上不太一样。在前向训练的过程中指定当前模型是在训练还是在验证。使用module.train()和module.eval()进行使用,其中这两个方法的实现均有training属性实现。

关于这两个方法的定义源码如下:

train():

def train(self, mode=True):

r"""Sets the module in training mode.

This has any effect only on certain modules. See documentations of

particular modules for details of their behaviors in training/evaluation

mode, if they are affected, e.g. :class:`Dropout`, :class:`BatchNorm`,

etc.

Args:

mode (bool): whether to set training mode (``True``) or evaluation

mode (``False``). Default: ``True``.

Returns:

Module: self

"""

self.training = mode

for module in self.children():

module.train(mode)

return self

eval():

def eval(self):

r"""Sets the module in evaluation mode.

This has any effect only on certain modules. See documentations of

particular modules for details of their behaviors in training/evaluation

mode, if they are affected, e.g. :class:`Dropout`, :class:`BatchNorm`,

etc.

This is equivalent with :meth:`self.train(False) `.

Returns:

Module: self

"""

return self.train(False)

从源码中可以看出,train和eval方法将本层及子层的training属性同时设为true或false。

具体如下:

net.train() # 将本层及子层的training设定为True

net.eval() # 将本层及子层的training设定为False

net.training = True # 注意,对module的设置仅仅影响本层,子module不受影响

net.training, net.submodel1.training

6.pytorch .to(device)

device = torch.device("cuda:0" if torch.cuda.is_available() else "cpu")

model.to(device)

mytensor = my_tensor.to(device)

这行代码的意思是将所有最开始读取数据时的tensor变量copy一份到device所指定的GPU上去,之后的运算都在GPU上进行。

这句话需要写的次数等于需要保存GPU上的tensor变量的个数;一般情况下这些tensor变量都是最开始读数据时的tensor变量,后面衍生的变量自然也都在GPU上

Tensor总结

(1)Tensor 和 Numpy都是矩阵,区别是前者可以在GPU上运行,后者只能在CPU上;

(2)Tensor和Numpy互相转化很方便,类型也比较兼容

(3)Tensor可以直接通过print显示数据类型,而Numpy不可以

7.torch.nn.CrossEntropyLoss(),torch.nn.NLLLoss()函数

torch.nn.NLLLoss()

NLLLoss() ,即负对数似然损失函数(Negative Log Likelihood)。nn.NLLLoss输入是一个对数概率向量和一个目标标签。

NLLLoss() 损失函数公式:

N L L L o s s = − 1 N ∑ k = 1 N y k ( l o g _ s o f t m a x ) NLLLoss=-\frac{1}{N}\sum_{k=1}^{N}y_k(log\_softmax) NLLLoss=−N1k=1∑Nyk(log_softmax)

y k y_k yk表示one_hot 编码之后的数据标签

损失函数运行的结果为 y k y_k yk与经过log_softmax运行的数据相乘,求平均值,再取反。

实际使用NLLLoss()损失函数时,传入的标签,无需进行 one_hot 编码(这个具体是个啥不太清楚???)

这块对于数据相乘还不太清楚,因为下边代码验证的例子,是说输入的target相当于在input中进行选择,选择标签对应的元素

torch.nn.CrossEntropyLoss()

对数据进行softmax,再log,再进行NLLLoss。其与nn.NLLLoss的关系可以描述为:

softmax(x)+log(x)+nn.NLLLoss====>nn.CrossEntropyLoss

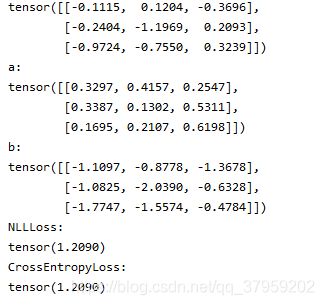

相关的代码实现验证:

import torch

input=torch.randn(3,3)

print(input)

soft_input=torch.nn.Softmax(dim=1)

a=soft_input(input)#对数据在1维上进行softmax计算

print(a)

b=torch.log(soft_input(input))#计算log

print(b)

loss=torch.nn.NLLLoss()

target=torch.tensor([0,1,2])

print('NLLLoss:')

print(loss(b,target))#选取第一行取第0个元素,第二行取第1个,

#---------------------第三行取第2个,去掉负号,求平均,得到损失值。

loss=torch.nn.CrossEntropyLoss()

print('CrossEntropyLoss:')

print(loss(input,target))

运行结果:

可以看到当softmax函数在1维上进行计算时,两种方法得到的值相同。

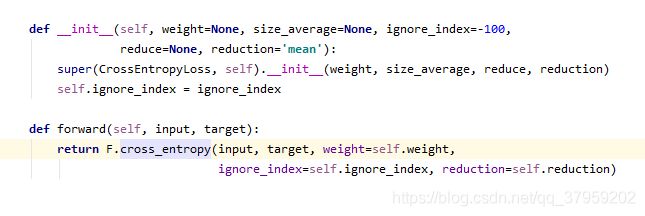

相关源码:

这一点关于torch.nn.CrossEntropyLoss()的源码中可以看到:

![]()

进入对应函数:

CrossEntropyLoss()的定义

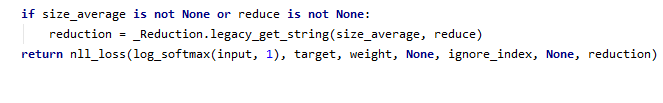

进入其返回的cross_entropy():

可以看到其是在1维上进行softmax的求解。

8.pytorch nn.GRU(),RNN,LSTM

GRU,LSTM,RNN等模型网络在pytorch中的定义均在torch/nn/modules/rnn,py中

其中GRU,RNN,LSTM均是继承的父类RNNBase

其中关于RNNBase类的定义:

def __init__(self, mode, input_size, hidden_size,

num_layers=1, bias=True, batch_first=False,

dropout=0., bidirectional=False):

super(RNNBase, self).__init__()

self.mode = mode

self.input_size = input_size

self.hidden_size = hidden_size

self.num_layers = num_layers

self.bias = bias

self.batch_first = batch_first

self.dropout = float(dropout)

self.bidirectional = bidirectional

num_directions = 2 if bidirectional else 1

if not isinstance(dropout, numbers.Number) or not 0 <= dropout <= 1 or \

isinstance(dropout, bool):

raise ValueError("dropout should be a number in range [0, 1] "

"representing the probability of an element being "

"zeroed")

if dropout > 0 and num_layers == 1:

warnings.warn("dropout option adds dropout after all but last "

"recurrent layer, so non-zero dropout expects "

"num_layers greater than 1, but got dropout={} and "

"num_layers={}".format(dropout, num_layers))

if mode == 'LSTM':

gate_size = 4 * hidden_size

elif mode == 'GRU':

gate_size = 3 * hidden_size

elif mode == 'RNN_TANH':

gate_size = hidden_size

elif mode == 'RNN_RELU':

gate_size = hidden_size

else:

raise ValueError("Unrecognized RNN mode: " + mode)

self._all_weights = []

for layer in range(num_layers):

for direction in range(num_directions):

layer_input_size = input_size if layer == 0 else hidden_size * num_directions

w_ih = Parameter(torch.Tensor(gate_size, layer_input_size))

w_hh = Parameter(torch.Tensor(gate_size, hidden_size))

b_ih = Parameter(torch.Tensor(gate_size))

# Second bias vector included for CuDNN compatibility. Only one

# bias vector is needed in standard definition.

b_hh = Parameter(torch.Tensor(gate_size))

layer_params = (w_ih, w_hh, b_ih, b_hh)

suffix = '_reverse' if direction == 1 else ''

param_names = ['weight_ih_l{}{}', 'weight_hh_l{}{}']

if bias:

param_names += ['bias_ih_l{}{}', 'bias_hh_l{}{}']

param_names = [x.format(layer, suffix) for x in param_names]

for name, param in zip(param_names, layer_params):

setattr(self, name, param)

self._all_weights.append(param_names)

self.flatten_parameters()

self.reset_parameters()

其中关于mode定义了模型是GRU,LSTM…

- input_size:输入数据X的特征值的数目。

- hidden_size:隐藏层的神经元数量,也就是隐藏层的特征数量。

- num_layers:循环神经网络的层数,默认值是 1。

- bias:默认为 True,如果为 false 则表示神经元不使用 bias 偏移参数。

- batch_first:如果设置为 True,则输入数据的维度中第一个维度就 是 batch 值,默认为 False。默认情况下第一个维度是序列的长度, 第二个维度才是 - - batch,第三个维度是特征数目。

- dropout:如果不为空,则表示最后跟一个 dropout 层抛弃部分数据,抛弃数据的比例由该参数指定。默认为0。

- bidirectional : 如果为True, 则是双向的网络,分为前向和后向。默认为false

关于GRU的输入输出,具体形式和介绍如下:

INPUTS:

- input:(seq_len,batch,input_size)

- h_0:(num_layers*num_directions,batch,hidden_size)

OUTPUTS:

- output:(seq_len,batch,num_directions*hidden_size)

- h_n:(num_layers*num_directions,batch,hidden_size)

Inputs: input, h_0

- input of shape(seq_len, batch, input_size): tensor containing the features

of the input sequence. The input can also be a packed variable length

sequence. See :func:torch.nn.utils.rnn.pack_padded_sequence

or :func:torch.nn.utils.rnn.pack_sequence

for details.

- h_0 of shape(num_layers * num_directions, batch, hidden_size): tensor

containing the initial hidden state for each element in the batch.

Defaults to zero if not provided. If the RNN is bidirectional,

num_directions should be 2, else it should be 1.Outputs: output, h_n

- output of shape(seq_len, batch, num_directions * hidden_size): tensor

containing the output features (h_t) from the last layer of the RNN,

for eacht. If a :class:torch.nn.utils.rnn.PackedSequencehas

been given as the input, the output will also be a packed sequence.

For the unpacked case, the directions can be separated

usingoutput.view(seq_len, batch, num_directions, hidden_size),

with forward and backward being direction0and1respectively.

Similarly, the directions can be separated in the packed case.

- h_n of shape(num_layers * num_directions, batch, hidden_size): tensor

containing the hidden state fort = seq_len.

Like output, the layers can be separated using

h_n.view(num_layers, num_directions, batch, hidden_size).

RNN,LSTM输入输出的形式同上。其中如果网络为双向的,则num_directions=2;否则为1。

代码参考:

>>> import torch.nn as nn

>>> gru = nn.GRU(input_size=50, hidden_size=50, batch_first=True)

>>> embed = nn.Embedding(3, 50)

>>> x = torch.LongTensor([[0, 1, 2]])

>>> x_embed = embed(x)

>>> x.size()

torch.Size([1, 3])

>>> x_embed.size()

torch.Size([1, 3, 50])

>>> out, hidden = gru(x_embed)

>>> out.size()

torch.Size([1, 3, 50])

>>> hidden.size()

torch.Size([1, 1, 50])