Python 使用opencv进行手势识别

Python 使用opencv进行手势识别

本项目是使用了谷歌开源的框架mediapipe,里面有非常多的模型提供给我们使用,例如面部检测,身体检测,手部检测等。

原理

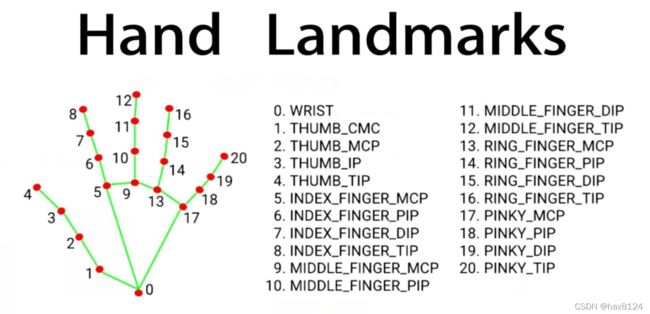

首先先进行手部的检测,找到之后会做Hand Landmarks。

将手掌的21个点找到,然后我们就可以通过手掌的21个点的坐标推测出来手势,或者在干什么。

程序部分

第一安装Opencv

pip install opencv-python

第二安装mediapipe

pip install mediapipe

程序

先调用这俩个函数库

import cv2

import mediapipe as mp

然后再调用摄像头

cap = cv2.VideoCapture(0)

函数主体部分

while True:

ret, img = cap.read()#读取当前数据

if ret:

cv2.imshow('img',img)#显示当前读取到的画面

if cv2.waitKey(1) == ord('q'):#按q键退出程序

break

全部函数

import cv2

import mediapipe as mp

import time

cap = cv2.VideoCapture(1)

mpHands = mp.solutions.hands

hands = mpHands.Hands()

mpDraw = mp.solutions.drawing_utils

handLmsStyle = mpDraw.DrawingSpec(color=(0, 0, 255), thickness=3)

handConStyle = mpDraw.DrawingSpec(color=(0, 255, 0), thickness=5)

pTime = 0

cTime = 0

while True:

ret, img = cap.read()

if ret:

imgRGB = cv2.cvtColor(img, cv2.COLOR_BGR2RGB)

result = hands.process(imgRGB)

# print(result.multi_hand_landmarks)

imgHeight = img.shape[0]

imgWidth = img.shape[1]

if result.multi_hand_landmarks:

for handLms in result.multi_hand_landmarks:

mpDraw.draw_landmarks(img, handLms, mpHands.HAND_CONNECTIONS, handLmsStyle, handConStyle)

for i, lm in enumerate(handLms.landmark):

xPos = int(lm.x * imgWidth)

yPos = int(lm.y * imgHeight)

# cv2.putText(img, str(i), (xPos-25, yPos+5), cv2.FONT_HERSHEY_SIMPLEX, 0.4, (0, 0, 255), 2)

# if i == 4:

# cv2.circle(img, (xPos, yPos), 20, (166, 56, 56), cv2.FILLED)

# print(i, xPos, yPos)

cTime = time.time()

fps = 1/(cTime-pTime)

pTime = cTime

cv2.putText(img, f"FPS : {int(fps)}", (30, 50), cv2.FONT_HERSHEY_SIMPLEX, 1, (255, 0, 0), 3)

cv2.imshow('img', img)

if cv2.waitKey(1) == ord('q'):

break

这样我们就能再电脑上显示我们的手部关键点和坐标了,对于手势识别或者别的操作就可以通过获取到的关键点的坐标进行判断了。