Deep Learning Notes: The TensorFlow Deep Learning Framework, MLP (Part 14)

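This note covers how to use the multilayer perceptron (MLP). MLPs are commonly used for classification and often work very well. In text classification, for example, they can do considerably better than SVM and naive Bayes.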

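Introduction to the perceptron learning algorithm

- Single-layer perceptron:

The perceptron (single-layer perceptron) is the simplest neural network. It consists of an input layer and an output layer, and the two are directly connected.

The figure below shows a single-layer perceptron: a very simple structure in which the input layer connects directly to the output layer.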

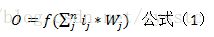

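The output is computed as follows:

The output layer is computed with Formula 1. First, each input is multiplied by the weight on its connection, and the products are added together to give a weighted sum. This sum is then thresholded: if it is greater than the threshold (usually 0), the output is 1; if it is less than the threshold, the output is -1.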
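The thresholded weighted sum described above can be sketched in a few lines of plain Python. This is only an illustration, not the author's code: the function names, the hand-picked weights and thresholds, the encoding of true/false as 1/-1, and the choice to map a sum exactly equal to the threshold to -1 are all assumptions made for this example.

```python
def perceptron_output(inputs, weights, threshold=0.0):
    """Return 1 if the weighted sum of the inputs exceeds the threshold, else -1."""
    weighted_sum = sum(x * w for x, w in zip(inputs, weights))
    # Assumption: a sum exactly equal to the threshold is mapped to -1.
    return 1 if weighted_sum > threshold else -1


# Hypothetical hand-picked weights and thresholds, with true = 1 and false = -1.
def logic_and(a, b):
    return perceptron_output([a, b], [1.0, 1.0], threshold=1.0)


def logic_or(a, b):
    return perceptron_output([a, b], [1.0, 1.0], threshold=-1.0)


def logic_not(a):
    return perceptron_output([a], [-1.0], threshold=0.0)


# Print the truth tables of the three gates.
for a in (-1, 1):
    for b in (-1, 1):
        print(a, b, "AND:", logic_and(a, b), "OR:", logic_or(a, b))
for a in (-1, 1):
    print(a, "NOT:", logic_not(a))
```

Each gate differs only in its weights and threshold; the computation itself stays the same.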

A single-layer perceptron is fast to evaluate, and it can implement simple logical operations such as NOT, OR, and AND.

- Multilayer perceptron (Multi-Layer Perceptron, MLP):

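The MLP is one of the simplest and most common neural network architectures, and many other architectures build on it.

The MLP is a common ANN (artificial neural network) model. It consists of one input layer, one output layer, and one or more hidden layers.

All neurons in an MLP are much alike. Each neuron has several input connections (from neurons in the previous layer) and several output connections (to neurons in the next layer), and it passes the same value to every neuron it feeds.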
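To make the neuron description concrete, here is a minimal plain-Python sketch of one fully connected layer. It is a toy illustration under stated assumptions: the helper `dense_layer`, the 2-3-1 layer sizes, the bias terms, and all numeric values are hypothetical and not taken from the original post.

```python
import math


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def dense_layer(inputs, weights, biases, activation):
    """Compute the outputs of one fully connected layer.

    weights[j] holds the incoming weights of neuron j, one per input.
    """
    outputs = []
    for neuron_weights, bias in zip(weights, biases):
        # Each neuron sums its weighted inputs, adds its bias, then applies the activation.
        z = sum(x * w for x, w in zip(inputs, neuron_weights)) + bias
        outputs.append(activation(z))
    return outputs


# Hypothetical toy network: 2 inputs -> 3 hidden neurons -> 1 output neuron.
features = [0.5, -1.0]
hidden = dense_layer(
    features,
    weights=[[0.1, 0.2], [-0.3, 0.4], [0.5, -0.6]],
    biases=[0.0, 0.1, -0.1],
    activation=sigmoid,
)
# The single value produced by each hidden neuron is passed to every neuron in the next layer.
output = dense_layer(hidden, weights=[[1.0, -1.0, 0.5]], biases=[0.0], activation=lambda z: z)
print(hidden, output)
```

Stacking calls to the same helper, one per layer, is all a forward pass through an MLP amounts to.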

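The network takes a feature vector as input and passes it to the hidden layer. Each layer computes its result from its weights and activation function and passes that result on to the next layer, until the output layer is reached.

- Next, we build a multilayer perceptron with two hidden layers. The relu activation can be replaced with tanh or sigmoid.

For example: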

    tf.nn.sigmoid(tf.add(tf.matmul(X, w_h), b))  # sigmoid(X*W + b)

    def multilayer_perceptron(_X, _weights, _biases):
        # First hidden layer with ReLU activation
        layer1 = tf.nn.relu(tf.add(tf.matmul(_X, _weights['h1']), _biases['b1']))
        # Second hidden layer with ReLU activation
        layer2 = tf.nn.relu(tf.add(tf.matmul(layer1, _weights['h2']), _biases['b2']))
        # Linear output layer; returns unnormalized scores (logits)
        return tf.matmul(layer2, _weights['out']) + _biases['out']

    # Store the weights and biases of each layer
    # (n_input is the input dimension; it is set to 784 in the complete listing below)
    weights = {
        'h1': tf.Variable(tf.random_normal([n_input, 256])),
        'h2': tf.Variable(tf.random_normal([256, 256])),
        'out': tf.Variable(tf.random_normal([256, 10]))
    }
    biases = {
        'b1': tf.Variable(tf.random_normal([256])),
        'b2': tf.Variable(tf.random_normal([256])),
        'out': tf.Variable(tf.random_normal([10]))
    }

Alternatively, change the first hidden layer to use sigmoid:

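    def multilayer_perceptron(_X, _weights, _biases):
        # First hidden layer with sigmoid activation
        layer_1 = tf.nn.sigmoid(tf.add(tf.matmul(_X, _weights['h1']), _biases['b1']))
        # Second hidden layer with ReLU activation
        layer_2 = tf.nn.relu(tf.add(tf.matmul(layer_1, _weights['h2']), _biases['b2']))
        # Linear output layer; returns unnormalized scores (logits)
        return tf.matmul(layer_2, _weights['out']) + _biases['out']

Activation functions that can be used include:

- linear: linear perceptron (no nonlinearity)
- tanh: hyperbolic tangent function
- sigmoid: logistic (S-shaped) function
- softmax
- log-softmax
- exp: exponential function
- softplus: log(1 + e^(wi*xi))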
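For reference, the functions in this list can be written out in plain Python as below. This is a sketch using the standard definitions, not code from the original post; the function names are chosen for this example, and subtracting the maximum in `softmax` and `log_softmax` is a common numerical-stability choice added here as an assumption.

```python
import math


def linear(z):
    return z


def tanh(z):
    return math.tanh(z)


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def exp(z):
    return math.exp(z)


def softplus(z):
    return math.log(1.0 + math.exp(z))


def softmax(zs):
    # Subtract the maximum before exponentiating so large inputs do not overflow.
    m = max(zs)
    exps = [math.exp(z - m) for z in zs]
    total = sum(exps)
    return [e / total for e in exps]


def log_softmax(zs):
    # log(softmax(z)_i) = z_i - log(sum_j exp(z_j)), computed stably.
    m = max(zs)
    log_total = m + math.log(sum(math.exp(z - m) for z in zs))
    return [z - log_total for z in zs]


print(sigmoid(0.0), tanh(0.0), softplus(0.0))
print(softmax([1.0, 2.0, 3.0]))
```

The first five functions act on a single pre-activation value, while softmax and log-softmax act on the whole vector of a layer's outputs, which is why they are normally used only on the output layer.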

3. Code implementation:

    '''
    @author: smile
    '''
    # Written against the TensorFlow 1.x graph API (tf.placeholder, tf.Session)
    import tensorflow as tf
    # MNIST loader module from the local data package
    import data.input_data as input_data

    # Load MNIST with one-hot encoded labels
    mnist = input_data.read_data_sets("MNIST_data/", one_hot=True)

    # Training hyperparameters
    learning_rate = 0.001
    training_epochs = 15
    batch_size = 100
    display_step = 1

    # Network parameters
    n_hidden_1 = 256  # number of units in the 1st hidden layer
    n_hidden_2 = 256  # number of units in the 2nd hidden layer
    n_input = 784     # MNIST images are 28*28 pixels
    n_classes = 10    # digits 0-9

    # Graph inputs: a batch of images and their one-hot labels
    x = tf.placeholder("float", [None, n_input])
    y = tf.placeholder("float", [None, n_classes])

    def multilayer_perceptron(_X, _weights, _biases):
        # First hidden layer with sigmoid activation
        layer1 = tf.nn.sigmoid(tf.add(tf.matmul(_X, _weights['h1']), _biases['b1']))
        # Second hidden layer with ReLU activation
        layer2 = tf.nn.relu(tf.add(tf.matmul(layer1, _weights['h2']), _biases['b2']))
        # Linear output layer; returns unnormalized scores (logits)
        return tf.matmul(layer2, _weights['out']) + _biases['out']

    weights = {
        'h1': tf.Variable(tf.random_normal([n_input, n_hidden_1])),
        'h2': tf.Variable(tf.random_normal([n_hidden_1, n_hidden_2])),
        'out': tf.Variable(tf.random_normal([n_hidden_2, n_classes]))
    }
    biases = {
        'b1': tf.Variable(tf.random_normal([n_hidden_1])),
        'b2': tf.Variable(tf.random_normal([n_hidden_2])),
        'out': tf.Variable(tf.random_normal([n_classes]))
    }

    # Build the model; pred holds the logits
    pred = multilayer_perceptron(x, weights, biases)

    # Softmax cross-entropy loss averaged over the batch, minimized with Adam
    cost = tf.reduce_mean(tf.nn.softmax_cross_entropy_with_logits(labels=y, logits=pred))
    optimizer = tf.train.AdamOptimizer(learning_rate=learning_rate).minimize(cost)

    # Op that initializes all variables (replaces the deprecated tf.initialize_all_variables)
    init = tf.global_variables_initializer()

    with tf.Session() as sess:
        sess.run(init)

        # Training cycle
        for epoch in range(training_epochs):
            avg_cost = 0.
            total_batch = int(mnist.train.num_examples / batch_size)
            # Loop over all mini-batches
            for i in range(total_batch):
                batch_xs, batch_ys = mnist.train.next_batch(batch_size)
                # Run one optimization step on this batch
                sess.run(optimizer, feed_dict={x: batch_xs, y: batch_ys})
                # Accumulate this batch's share of the epoch's average loss
                avg_cost += sess.run(cost, feed_dict={x: batch_xs, y: batch_ys}) / total_batch
            # Display the average loss every display_step epochs
            if epoch % display_step == 0:
                print("Epoch:", '%04d' % (epoch + 1), "cost=", "{:.9f}".format(avg_cost))

        print("Optimization Finished!")

        # A prediction is correct when the predicted class index matches the label's
        correct_prediction = tf.equal(tf.argmax(pred, 1), tf.argmax(y, 1))
        # Calculate accuracy
        accuracy = tf.reduce_mean(tf.cast(correct_prediction, "float"))
        print("Accuracy:", accuracy.eval({x: mnist.test.images, y: mnist.test.labels}))
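To show what the `cost` and `accuracy` tensors in the listing compute, the following plain-Python sketch reproduces the same arithmetic on a tiny batch. It is an illustration only: the helper names and the 3-class logits and labels are hypothetical, and the sketch does not call TensorFlow.

```python
import math


def softmax_cross_entropy(logits, one_hot_label):
    """Cross-entropy between softmax(logits) and a one-hot label."""
    # Stable log-softmax: subtract the maximum before exponentiating.
    m = max(logits)
    log_total = m + math.log(sum(math.exp(z - m) for z in logits))
    log_probs = [z - log_total for z in logits]
    return -sum(t * lp for t, lp in zip(one_hot_label, log_probs))


def argmax(values):
    return max(range(len(values)), key=lambda i: values[i])


# Hypothetical 3-class batch of logits and one-hot labels.
batch_logits = [[2.0, 0.5, -1.0], [0.1, 0.2, 3.0], [1.0, 2.0, 0.0]]
batch_labels = [[1, 0, 0], [0, 0, 1], [1, 0, 0]]

# Per-example loss, then the batch mean (the role of tf.reduce_mean in the listing).
losses = [softmax_cross_entropy(z, t) for z, t in zip(batch_logits, batch_labels)]
mean_loss = sum(losses) / len(losses)

# A prediction is correct when the largest logit is at the label's index.
correct = [argmax(z) == argmax(t) for z, t in zip(batch_logits, batch_labels)]
accuracy = sum(correct) / len(correct)
print("mean loss:", mean_loss, "accuracy:", accuracy)
```

Because softmax preserves the ordering of the logits, accuracy can be computed from `pred` directly without applying softmax first, which is what the listing does.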