[Neural Networks] (16) MobileNetV3: Code Reproduction and Network Analysis, with Complete TensorFlow Code

Hi everyone. Today I'll show how to build the lightweight MobileNetV3 network with TensorFlow.

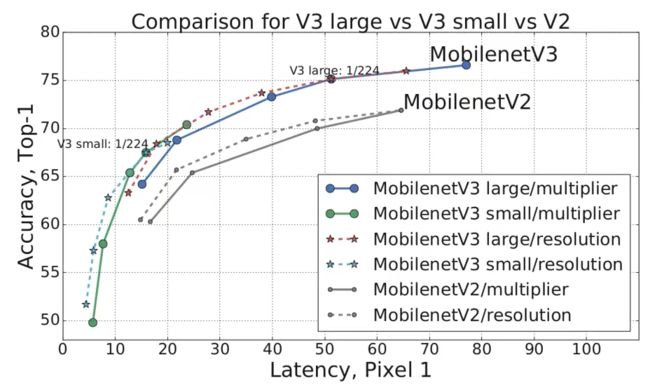

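MobileNetV3 makes three changes: (1) it updates the inverted residual block from V2; (2) it uses neural architecture search (NAS) to search the network parameters; (3) it redesigns the most time-consuming layers. Compared with MobileNetV2, MobileNetV3-Large is 3.2% more accurate on ImageNet classification while reducing latency by 20%.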

MobileNetV3 builds on MobileNetV2, so I recommend getting familiar with MobileNetV1 and V2 before reading this post.

MobileNetV1:https://blog.csdn.net/dgvv4/article/details/123415708

MobileNetV2:https://blog.csdn.net/dgvv4/article/details/123417739

1. Core Modules of the Network

1.1 MobileNetV1: Depthwise Separable Convolution

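MobileNetV1 relies mainly on depthwise separable convolution, which greatly reduces both the parameter count and the amount of computation.

In a standard convolution, each kernel processes all channels. A kernel has as many channels as the input feature map, and each kernel produces one output feature map.

A depthwise separable convolution can be understood as a depthwise convolution followed by a pointwise convolution.

The depthwise convolution handles only spatial information (height and width). The pointwise convolution handles only cross-channel information. Splitting the work this way greatly reduces the parameter count and improves computational efficiency.

Depthwise convolution: each kernel processes exactly one channel, namely its own. There are as many kernels as input channels. The per-channel results are stacked together, so the input and output feature maps have the same number of channels.

Processing only height and width loses cross-channel information. A pointwise convolution is added to restore it.

Pointwise convolution: a 1x1 convolution that mixes information across channels. The number of output feature maps equals the number of 1x1 kernels.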
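To make the savings concrete, here is a plain-Python sketch of the weight counts. It is arithmetic only, not TensorFlow code, and the function names are illustrative. Biases are ignored because a BN layer normally follows each convolution. For a 3x3 kernel with 16 input and 16 output channels, the depthwise and pointwise counts come out to 144 and 256. These match the `depthwise_conv2d` and `conv2d_1` rows of the model summary in section 2.4.

```python
def standard_conv_params(k, c_in, c_out):
    """Weights of a k x k standard convolution: every kernel spans all input channels."""
    return k * k * c_in * c_out


def depthwise_separable_params(k, c_in, c_out):
    """(depthwise, pointwise) weights: one k x k kernel per input channel, then a 1x1 conv c_in -> c_out."""
    depthwise = k * k * c_in
    pointwise = c_in * c_out
    return depthwise, pointwise


if __name__ == "__main__":
    k, c_in, c_out = 3, 16, 16
    std = standard_conv_params(k, c_in, c_out)
    dw, pw = depthwise_separable_params(k, c_in, c_out)
    print(f"standard: {std}, depthwise: {dw}, pointwise: {pw}, ratio: {(dw + pw) / std:.3f}")
```

The ratio of separable to standard weights is always 1/c_out + 1/k². For 3x3 kernels, that means roughly 8 to 9 times fewer weights once c_out is large.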


1.2 MobileNetV2: Inverted Residual Block

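MobileNetV2 uses the inverted residual block, which runs in four steps:

- A 1x1 convolution first increases the number of channels.
- A depthwise convolution is then applied in this high-dimensional space.
- A final 1x1 convolution reduces the number of channels again. This projection uses a linear activation (y=x).
- When the stride is 1 and the input and output feature maps have the same shape, a residual connection adds the input to the output. When the stride is 2 (downsampling), the projected feature map is output directly.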

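Compare this with the ResNet bottleneck block, which runs the other way round:

- A 1x1 convolution first reduces the number of channels.
- A standard 3x3 convolution is applied in the low-dimensional space.
- A 1x1 convolution increases the channels again. The activations are ReLU.
- When the input and output shapes match, the input is added to the output directly. When they differ, for example in a stride-2 downsampling block, ResNet passes the shortcut through a 1x1 projection convolution so that the residual addition can still be made.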
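The "inverted" in the name refers to the channel flow, narrow → wide → narrow instead of ResNet's wide → narrow → wide. The plain-Python sketch below tracks only shapes. The helper names are illustrative, and it assumes 'same' padding, as the Keras code in section 2 uses. The ResNet example uses the 256 → 64 → 256 widths of a ResNet-50 bottleneck.

```python
def inverted_residual_shapes(h, w, c_in, expansion, c_out, stride):
    """Shapes through a MobileNetV2-style inverted residual block (narrow -> wide -> narrow)."""
    out_h, out_w = -(-h // stride), -(-w // stride)   # 'same' padding: ceil(size / stride)
    shapes = [
        ("input", (h, w, c_in)),
        ("1x1 expand", (h, w, expansion)),
        ("depthwise", (out_h, out_w, expansion)),
        ("1x1 project (linear)", (out_h, out_w, c_out)),
    ]
    # The residual add is only possible when nothing changes shape
    use_residual = stride == 1 and c_in == c_out
    return shapes, use_residual


def resnet_bottleneck_channels(c_in, bottleneck, c_out):
    """Channel counts through a ResNet bottleneck (wide -> narrow -> wide)."""
    return [c_in, bottleneck, bottleneck, c_out]


if __name__ == "__main__":
    shapes, residual = inverted_residual_shapes(112, 112, 16, 64, 24, 2)
    for name, shape in shapes:
        print(f"{name:22s} {shape}")
    print("residual:", residual)
    print("ResNet bottleneck channels:", resnet_bottleneck_channels(256, 64, 256))
```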


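1.3 MobileNetV3: Improved Inverted Residual Block

There are two main improvements: (1) an SE attention mechanism is added; (2) new activation functions are used.

1.3.1 SE Attention Mechanism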

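(1) First, global average pooling is applied to the feature map. The pooled result is a 1-D vector with one element per channel: [None, h, w, c] ==> [None, c].

(2) The vector then passes through two fully connected layers. The first FC layer outputs 1/4 as many channels as the input feature map. The second restores the original channel count, so the pattern is reduce first, then expand.

(3) Each element of the resulting vector is a weight derived from analyzing one feature map. More important feature maps receive larger weights, meaning larger vector elements. Less important ones receive smaller weights.

(4) The first FC layer uses ReLU; the second uses hard_sigmoid.

(5) The two FC layers produce a vector with one element per channel, and each element is that channel's weight. Multiplying each weight with its feature map gives the new feature map.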

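After the two FC layers, the more important feature maps have the larger weights. Each weight then multiplies every element of its corresponding feature map to form the output feature map.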
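The five steps can be sketched without any framework. The code below is a minimal plain-Python illustration on nested lists. The FC weights `w1`, `b1`, `w2`, `b2` are made-up values chosen only to make the arithmetic easy to follow; in a real network they are learned. h-sigmoid is written as ReLU6(x + 3) / 6, as defined in the next subsection.

```python
def relu(v):
    return max(0.0, v)


def h_sigmoid(v):
    # Piecewise-linear sigmoid: ReLU6(v + 3) / 6
    return min(max(v + 3.0, 0.0), 6.0) / 6.0


def dense(vec, weights, biases, act):
    """One fully connected layer, act(W @ vec + b), with W given as a list of rows."""
    return [act(sum(w * v for w, v in zip(row, vec)) + b) for row, b in zip(weights, biases)]


def se_block(fmap, w1, b1, w2, b2):
    """fmap: list of C channels, each an H x W nested list. Returns (channel weights, rescaled fmap)."""
    # (1) Squeeze: global average pooling, one value per channel
    z = [sum(sum(row) for row in ch) / (len(ch) * len(ch[0])) for ch in fmap]
    # (2)-(4) Excitation: FC (C -> C/4) + ReLU, then FC (C/4 -> C) + h-sigmoid
    hidden = dense(z, w1, b1, relu)
    scale = dense(hidden, w2, b2, h_sigmoid)
    # (5) Scale: multiply every element of channel c by scale[c]
    out = [[[v * s for v in row] for row in ch] for ch, s in zip(fmap, scale)]
    return scale, out


if __name__ == "__main__":
    # Four 2x2 channels with constant values 1, 2, 3, 4, so C/4 = 1 hidden unit
    fmap = [[[c, c], [c, c]] for c in (1.0, 2.0, 3.0, 4.0)]
    w1, b1 = [[0.25, 0.25, 0.25, 0.25]], [0.0]          # illustrative weights only
    w2, b2 = [[1.0], [0.0], [-1.0], [2.0]], [0.0] * 4
    scale, out = se_block(fmap, w1, b1, w2, b2)
    print("channel weights:", [round(s, 3) for s in scale])
```

The weights stay in [0, 1], so SE can only attenuate or preserve a channel, never amplify it.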


1.3.2 Different Activation Functions

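The swish activation is $\mathrm{swish}(x) = x \cdot \sigma(x)$, with $\sigma(x) = \frac{1}{1 + e^{-x}}$. Swish improves accuracy, but it has two drawbacks:

- it is expensive to compute and to differentiate;
- it is unfriendly to quantization, which matters especially for computation on mobile devices.

MobileNetV3 therefore replaces the sigmoid with a piecewise-linear approximation, the h-sigmoid activation: $\text{h-sigmoid}(x) = \frac{\mathrm{ReLU6}(x + 3)}{6}$. Here ReLU6 is $\mathrm{ReLU6}(x) = \min(\max(x, 0), 6)$.

The h-swish activation is then $\text{h-swish}(x) = x \cdot \frac{\mathrm{ReLU6}(x + 3)}{6}$. With this replacement, inference is faster and the network is more quantization-friendly.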
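A plain-Python sketch of these formulas helps show how closely h-swish tracks swish. The function names are illustrative, not a library API. The script samples x in [-6, 6] and reports the largest gap between the two curves.

```python
import math


def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))


def swish(x):
    return x * sigmoid(x)


def relu6(x):
    return min(max(x, 0.0), 6.0)


def h_sigmoid(x):
    # ReLU6(x + 3) / 6: 0 for x <= -3, 1 for x >= 3, linear in between
    return relu6(x + 3.0) / 6.0


def h_swish(x):
    return x * h_sigmoid(x)


if __name__ == "__main__":
    xs = [i / 10 for i in range(-60, 61)]   # sample x in [-6, 6]
    gap = max(abs(swish(x) - h_swish(x)) for x in xs)
    print(f"max |swish - h_swish| on [-6, 6]: {gap:.3f}")
```

h-swish is exactly 0 for x ≤ -3 and exactly x for x ≥ 3. It uses only an add, a clamp, and a multiply, which is what makes it cheap and easy to quantize.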


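1.3.3 Overall Flow

The MobileNetV3 block runs in five steps:

- A 1x1 convolution increases the number of channels.
- A depthwise convolution is applied in the high-dimensional space.
- The SE attention mechanism reweights the feature maps.
- A 1x1 convolution reduces the channels, with a linear activation.
- When the stride is 1 and the input and output shapes match, a residual connection adds the input to the output. When the stride is 2 (downsampling), the projected feature map is output directly.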
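As a cross-check of this flow, the plain-Python sketch below counts the parameters of one bneck block. It assumes the layer choices of the Keras code in section 2.2:

- bias-free convolutions, each followed by BatchNormalization, which stores 4 values per channel (gamma, beta, moving mean, moving variance);
- an SE branch built from two 1x1 convolutions with bias and a c // 4 squeeze.

The helper names are illustrative. For the first few blocks, the totals agree with the per-layer numbers in the model summary of section 2.4.

```python
def conv_bn_params(c_in, c_out, k=1):
    """k x k convolution without bias, followed by BatchNormalization (4 values per channel)."""
    return k * k * c_in * c_out + 4 * c_out


def se_params(c):
    """SE branch as two 1x1 convolutions with bias: c -> c // 4 -> c."""
    s = c // 4
    return (c * s + s) + (s * c + c)


def bneck_params(c_in, expansion, c_out, k, se):
    total = 0
    if expansion != c_in:                       # 1x1 expansion conv + BN (skipped if nothing to expand)
        total += conv_bn_params(c_in, expansion)
    total += k * k * expansion + 4 * expansion  # depthwise conv (no bias) + BN
    if se:
        total += se_params(expansion)
    total += conv_bn_params(expansion, c_out)   # 1x1 linear projection + BN
    return total


if __name__ == "__main__":
    # (c_in, exp size, #out, kernel, SE) for the first four bneck rows
    for cfg in [(16, 16, 16, 3, False), (16, 64, 24, 3, False),
                (24, 72, 24, 3, False), (24, 72, 40, 5, True)]:
        print(cfg, bneck_params(*cfg))
```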


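1.4 Redesigning the Expensive Layers

(1) Use fewer kernels in the first convolution layer. Cutting them from 32 to 16 gives the same accuracy as before. Fewer kernels mean less computation, saving 2 ms.

(2) Simplify the final output stage. Removing the redundant layers leaves accuracy unchanged and saves 7 ms, which is 11% of the total inference time. This is a clear speedup.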
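For the first change, the compute saving can be estimated with a multiply-accumulate (MAC) count. The plain-Python sketch below assumes a 224x224x3 input and a 3x3 stride-2 first layer, as in the code of section 2, so the output is 112x112. The function name is illustrative. The 2 ms figure above is a measured latency on the authors' hardware and cannot be derived from this count.

```python
def conv_macs(out_h, out_w, k, c_in, c_out):
    """Multiply-accumulates of a standard k x k convolution producing an out_h x out_w x c_out map."""
    return out_h * out_w * k * k * c_in * c_out


if __name__ == "__main__":
    # First layer: 3x3 kernels, stride 2, on a 224x224x3 image -> 112x112 output
    for filters in (32, 16):
        print(f"{filters} filters: {conv_macs(112, 112, 3, 3, filters):,} MACs")
```

MACs scale linearly with the filter count, so halving the kernels halves this layer's cost.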

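2. Code Reproduction

2.1 Network Structure

The network follows the MobileNetV3-Large architecture table from the paper. The table's columns mean:

- exp size: the number of channels the first 1x1 convolution expands to.
- #out: the number of channels after the final 1x1 projection, i.e. the number of output feature maps.
- SE: whether the attention mechanism is used.
- NL: the activation function (RE = ReLU, HS = h-swish).
- s: the stride.

In the table, bneck denotes the inverted residual block, and NBN means no batch normalization.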
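The table can also be written down as data. In the plain-Python sketch below, the config name and helper are illustrative, and the values are transcribed from the `mobilenet()` function in section 2.3. It traces the feature-map shape through the stem and the 15 bneck blocks, assuming 'same' padding.

```python
# One row per bneck: (kernel, exp size, #out, SE, NL, stride)
BNECK_CONFIG = [
    (3, 16, 16, False, "RE", 1),
    (3, 64, 24, False, "RE", 2),
    (3, 72, 24, False, "RE", 1),
    (5, 72, 40, True, "RE", 2),
    (5, 120, 40, True, "RE", 1),
    (5, 120, 40, True, "RE", 1),
    (3, 240, 80, False, "HS", 2),
    (3, 200, 80, False, "HS", 1),
    (3, 184, 80, False, "HS", 1),
    (3, 184, 80, False, "HS", 1),
    (3, 480, 112, True, "HS", 1),
    (3, 672, 112, True, "HS", 1),
    (5, 672, 160, True, "HS", 2),
    (5, 960, 160, True, "HS", 1),
    (5, 960, 160, True, "HS", 1),
]


def trace_shapes(input_hw=224, stem_filters=16):
    """Return the (H, W, C) shape after the stem and after every bneck ('same' padding)."""
    size = -(-input_hw // 2)            # stem: 3x3 conv with stride 2
    shapes = [(size, size, stem_filters)]
    for _k, _exp, out, _se, _nl, stride in BNECK_CONFIG:
        size = -(-size // stride)       # ceil(size / stride)
        shapes.append((size, size, out))
    return shapes


if __name__ == "__main__":
    for i, shape in enumerate(trace_shapes()):
        print("stem" if i == 0 else f"bneck {i}", shape)
```

The four stride-2 blocks bring the 112x112 stem output down to 7x7. The head then applies a 1x1 conv to 960 channels, global pooling, a 1x1 conv to 1280, and a final 1x1 conv to the class count.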


2.2 Building the Core Modules

(1) Activation functions

Following the formulas above, define the hard_sigmoid and hard_swish activation functions.

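```python
import tensorflow as tf
from tensorflow.keras import layers

# (1) Activation: h-sigmoid(x) = ReLU6(x + 3) / 6
def h_sigmoid(input_tensor):
    # The formula is written out because the built-in 'hard_sigmoid' of
    # tf.keras 2.x computes clip(0.2 * x + 0.5, 0, 1), not ReLU6(x + 3) / 6
    x = layers.Activation(lambda t: tf.nn.relu6(t + 3.0) / 6.0)(input_tensor)
    return x

# (2) Activation: h-swish(x) = x * h-sigmoid(x)
def h_swish(input_tensor):
    x = input_tensor * h_sigmoid(input_tensor)
    return x
```

(2) SE attention mechanism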

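The SE attention mechanism has four parts:

- global average pooling;
- an FC layer that reduces the channels;
- an FC layer that restores them;
- channel-wise multiplication with the resulting weights.

Here both FC layers are implemented as 1x1 convolutions on a [b, 1, 1, c] tensor. This is mathematically equivalent to a fully connected layer with the same number of parameters (c_in × c_out + c_out), and it keeps the tensor in 4-D form.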

```python
# (3) SE attention mechanism
def se_block(input_tensor):
    squeeze = input_tensor.shape[-1] // 4  # first FC: reduce to 1/4 of the channels (integer)
    excitation = input_tensor.shape[-1]    # second FC: back to the original channel count

    # Global average pooling: [b,h,w,c] ==> [b,c]
    x = layers.GlobalAveragePooling2D()(input_tensor)
    # Add height/width dims so 1x1 convolutions can act as FC layers
    x = layers.Reshape(target_shape=(1, 1, x.shape[-1]))(x)  # [b,c] ==> [b,1,1,c]

    # First FC layer (as a 1x1 convolution): reduce channels
    x = layers.Conv2D(filters=squeeze,     # 1/4 of the input channels
                      kernel_size=(1, 1),  # 1x1 convolution mixes channel information
                      strides=1,
                      padding='same')(x)
    x = layers.ReLU()(x)                   # ReLU activation

    # Second FC layer (also a 1x1 convolution): restore channels
    x = layers.Conv2D(filters=excitation,  # back to the original number of feature maps
                      kernel_size=(1, 1),
                      strides=1,
                      padding='same')(x)
    x = h_sigmoid(x)                       # hard-sigmoid activation

    # Multiply each input channel by its SE weight
    output = layers.Multiply()([input_tensor, x])
    return output
```

(3) Standard convolution block

A standard convolution block consists of a regular convolution, batch normalization, and an activation function.

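```python
# (4) Standard convolution block
def conv_block(input_tensor, filters, kernel_size, stride, activation):
    # Choose the activation function
    if activation == 'RE':
        act = layers.ReLU()  # ReLU
    elif activation == 'HS':
        act = h_swish        # h-swish
    else:
        raise ValueError(f"unknown activation: {activation!r}")

    # Regular convolution (no bias, because BN follows)
    x = layers.Conv2D(filters, kernel_size, strides=stride, padding='same', use_bias=False)(input_tensor)
    # Batch normalization
    x = layers.BatchNormalization()(x)
    # Activation
    x = act(x)
    return x
```

(4) Inverted residual block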

Compared with the MobileNetV2 inverted residual block, this version adds the attention mechanism and uses different activation functions.

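```python
# (5) Inverted residual block (bneck)
def bneck(x, expansion, filters, kernel_size, stride, se, activation):
    """
    expansion: channel count after the 1x1 expansion convolution
    filters: number of output channels of the bottleneck block
    se: bool; use the SE attention mechanism when True
    activation: activation type, 'RE' (ReLU) or 'HS' (h-swish)
    """
    # Shortcut (residual) branch
    residual = x

    # Choose the activation function
    if activation == 'RE':
        act = layers.ReLU()  # ReLU (stateless, so one instance can be reused)
    elif activation == 'HS':
        act = h_swish        # h-swish
    else:
        raise ValueError(f"unknown activation: {activation!r}")

    # (1) 1x1 convolution to expand the channels
    if expansion != x.shape[-1]:  # skipped when there is nothing to expand (the first bneck)
        x = layers.Conv2D(filters=expansion,   # expanded channel count
                          kernel_size=(1, 1),  # 1x1 conv: channel mixing only
                          strides=1,
                          padding='same',      # spatial size unchanged
                          use_bias=False)(x)   # no bias, because BN follows
        x = layers.BatchNormalization()(x)
        x = act(x)

    # (2) Depthwise convolution
    x = layers.DepthwiseConv2D(kernel_size=kernel_size,  # kernel size
                               strides=stride,           # stride 2 halves height and width
                               padding='same',
                               use_bias=False)(x)        # no bias, because BN follows
    x = layers.BatchNormalization()(x)
    x = act(x)

    # (3) Optional SE attention
    if se:
        x = se_block(x)

    # (4) 1x1 convolution to project down to `filters` channels
    x = layers.Conv2D(filters=filters,     # number of output feature maps
                      kernel_size=(1, 1),
                      strides=1,
                      padding='same',
                      use_bias=False)(x)
    x = layers.BatchNormalization()(x)
    # Linear activation (y = x): no activation after the projection

    # (5) Add the shortcut when the stride is 1 and the channel counts match.
    #     Only the channels are compared: with stride 1 and 'same' padding the
    #     spatial size is unchanged, and comparing full shapes that contain the
    #     unknown batch dimension (None) may evaluate to False in some TF versions.
    if stride == 1 and residual.shape[-1] == x.shape[-1]:
        x = layers.Add()([residual, x])
    return x  # with stride 2, the projected result is returned directly
```

2.3 Complete Code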

```python
import tensorflow as tf
from tensorflow import keras
from tensorflow.keras import Model, layers


# (1) Activation: h-sigmoid(x) = ReLU6(x + 3) / 6
def h_sigmoid(input_tensor):
    # The formula is written out because the built-in 'hard_sigmoid' of
    # tf.keras 2.x computes clip(0.2 * x + 0.5, 0, 1), not ReLU6(x + 3) / 6
    x = layers.Activation(lambda t: tf.nn.relu6(t + 3.0) / 6.0)(input_tensor)
    return x


# (2) Activation: h-swish(x) = x * h-sigmoid(x)
def h_swish(input_tensor):
    x = input_tensor * h_sigmoid(input_tensor)
    return x


# (3) SE attention mechanism
def se_block(input_tensor):
    squeeze = input_tensor.shape[-1] // 4  # first FC: reduce to 1/4 of the channels (integer)
    excitation = input_tensor.shape[-1]    # second FC: back to the original channel count
    # Global average pooling: [b,h,w,c] ==> [b,c]
    x = layers.GlobalAveragePooling2D()(input_tensor)
    # Add height/width dims so 1x1 convolutions can act as FC layers: [b,c] ==> [b,1,1,c]
    x = layers.Reshape(target_shape=(1, 1, x.shape[-1]))(x)
    # First FC layer as a 1x1 convolution + ReLU
    x = layers.Conv2D(filters=squeeze, kernel_size=(1, 1), strides=1, padding='same')(x)
    x = layers.ReLU()(x)
    # Second FC layer as a 1x1 convolution + h-sigmoid
    x = layers.Conv2D(filters=excitation, kernel_size=(1, 1), strides=1, padding='same')(x)
    x = h_sigmoid(x)
    # Multiply each input channel by its SE weight
    output = layers.Multiply()([input_tensor, x])
    return output


# (4) Standard convolution block: convolution + BN + activation
def conv_block(input_tensor, filters, kernel_size, stride, activation):
    if activation == 'RE':
        act = layers.ReLU()  # ReLU
    elif activation == 'HS':
        act = h_swish        # h-swish
    else:
        raise ValueError(f"unknown activation: {activation!r}")
    x = layers.Conv2D(filters, kernel_size, strides=stride, padding='same', use_bias=False)(input_tensor)
    x = layers.BatchNormalization()(x)
    x = act(x)
    return x


# (5) Inverted residual block (bneck)
def bneck(x, expansion, filters, kernel_size, stride, se, activation):
    """
    expansion: channel count after the 1x1 expansion convolution
    filters: number of output channels of the bottleneck block
    se: bool; use the SE attention mechanism when True
    activation: activation type, 'RE' (ReLU) or 'HS' (h-swish)
    """
    residual = x  # shortcut branch
    if activation == 'RE':
        act = layers.ReLU()
    elif activation == 'HS':
        act = h_swish
    else:
        raise ValueError(f"unknown activation: {activation!r}")

    # (1) 1x1 expansion convolution, skipped when there is nothing to expand
    if expansion != x.shape[-1]:
        x = layers.Conv2D(expansion, (1, 1), strides=1, padding='same', use_bias=False)(x)
        x = layers.BatchNormalization()(x)
        x = act(x)
    # (2) Depthwise convolution; stride 2 halves height and width
    x = layers.DepthwiseConv2D(kernel_size, strides=stride, padding='same', use_bias=False)(x)
    x = layers.BatchNormalization()(x)
    x = act(x)
    # (3) Optional SE attention
    if se:
        x = se_block(x)
    # (4) 1x1 linear projection (no activation after BN)
    x = layers.Conv2D(filters, (1, 1), strides=1, padding='same', use_bias=False)(x)
    x = layers.BatchNormalization()(x)
    # (5) Residual connection when stride is 1 and the channel counts match
    #     (full shapes contain the unknown batch dim, so only channels are compared)
    if stride == 1 and residual.shape[-1] == x.shape[-1]:
        x = layers.Add()([residual, x])
    return x  # with stride 2, the projected result is returned directly


# (6) Backbone network
def mobilenet(input_shape, classes):  # input image shape, number of classes
    inputs = keras.Input(shape=input_shape)

    # [224,224,3] ==> [112,112,16]
    x = conv_block(inputs, filters=16, kernel_size=(3,3), stride=2, activation='HS')
    # [112,112,16] ==> [112,112,16]
    x = bneck(x, expansion=16, filters=16, kernel_size=(3,3), stride=1, se=False, activation='RE')
    # [112,112,16] ==> [56,56,24]
    x = bneck(x, expansion=64, filters=24, kernel_size=(3,3), stride=2, se=False, activation='RE')
    # [56,56,24] ==> [56,56,24]
    x = bneck(x, expansion=72, filters=24, kernel_size=(3,3), stride=1, se=False, activation='RE')
    # [56,56,24] ==> [28,28,40]
    x = bneck(x, expansion=72, filters=40, kernel_size=(5,5), stride=2, se=True, activation='RE')
    # [28,28,40] ==> [28,28,40]
    x = bneck(x, expansion=120, filters=40, kernel_size=(5,5), stride=1, se=True, activation='RE')
    # [28,28,40] ==> [28,28,40]
    x = bneck(x, expansion=120, filters=40, kernel_size=(5,5), stride=1, se=True, activation='RE')
    # [28,28,40] ==> [14,14,80]
    x = bneck(x, expansion=240, filters=80, kernel_size=(3,3), stride=2, se=False, activation='HS')
    # [14,14,80] ==> [14,14,80]
    x = bneck(x, expansion=200, filters=80, kernel_size=(3,3), stride=1, se=False, activation='HS')
    # [14,14,80] ==> [14,14,80]
    x = bneck(x, expansion=184, filters=80, kernel_size=(3,3), stride=1, se=False, activation='HS')
    # [14,14,80] ==> [14,14,80]
    x = bneck(x, expansion=184, filters=80, kernel_size=(3,3), stride=1, se=False, activation='HS')
    # [14,14,80] ==> [14,14,112]
    x = bneck(x, expansion=480, filters=112, kernel_size=(3,3), stride=1, se=True, activation='HS')
    # [14,14,112] ==> [14,14,112]
    x = bneck(x, expansion=672, filters=112, kernel_size=(3,3), stride=1, se=True, activation='HS')
    # [14,14,112] ==> [7,7,160]
    x = bneck(x, expansion=672, filters=160, kernel_size=(5,5), stride=2, se=True, activation='HS')
    # [7,7,160] ==> [7,7,160]
    x = bneck(x, expansion=960, filters=160, kernel_size=(5,5), stride=1, se=True, activation='HS')
    # [7,7,160] ==> [7,7,160]
    x = bneck(x, expansion=960, filters=160, kernel_size=(5,5), stride=1, se=True, activation='HS')

    # [7,7,160] ==> [7,7,960]
    x = conv_block(x, filters=960, kernel_size=(1,1), stride=1, activation='HS')
    # Global average pooling (the "pool, 7x7" row of the table): [7,7,960] ==> [None,960]
    x = layers.GlobalAveragePooling2D()(x)
    # [None,960] ==> [1,1,960]
    x = layers.Reshape(target_shape=(1,1,x.shape[-1]))(x)
    # [1,1,960] ==> [1,1,1280], no BN (NBN)
    x = layers.Conv2D(filters=1280, kernel_size=(1,1), strides=1, padding='same')(x)
    x = h_swish(x)
    # [1,1,1280] ==> [1,1,classes], no BN (NBN)
    x = layers.Conv2D(filters=classes, kernel_size=(1,1), strides=1, padding='same')(x)
    # [1,1,classes] ==> [None,classes]
    logits = layers.Flatten()(x)

    model = Model(inputs, logits)
    return model


# (7) Build the model and print its architecture
if __name__ == '__main__':
    model = mobilenet(input_shape=[224,224,3], classes=1000)
    model.summary()  # print the network architecture
```

2.4 Viewing the Model Structure

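Calling model.summary() shows the overall architecture; the model has roughly five million parameters. In the listing below, the blocks that should carry a residual connection show no Add layer. An example is the first bneck, 16 → 16 channels at stride 1. So no residual connections were created in this run. If your output looks the same, check the residual condition in bneck. Comparing full shapes that include the unknown batch dimension (None) can evaluate to False in some TensorFlow versions.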

```
Model: "model"
__________________________________________________________________________________________________
Layer (type)                    Output Shape         Param #     Connected to
==================================================================================================
input_1 (InputLayer)            [(None, 224, 224, 3) 0
__________________________________________________________________________________________________
conv2d (Conv2D)                 (None, 112, 112, 16) 432         input_1[0][0]
__________________________________________________________________________________________________
batch_normalization (BatchNorma (None, 112, 112, 16) 64          conv2d[0][0]
__________________________________________________________________________________________________
activation (Activation)         (None, 112, 112, 16) 0           batch_normalization[0][0]
__________________________________________________________________________________________________
tf.math.multiply (TFOpLambda)   (None, 112, 112, 16) 0           batch_normalization[0][0]
                                                                 activation[0][0]
__________________________________________________________________________________________________
depthwise_conv2d (DepthwiseConv (None, 112, 112, 16) 144         tf.math.multiply[0][0]
__________________________________________________________________________________________________
batch_normalization_1 (BatchNor (None, 112, 112, 16) 64          depthwise_conv2d[0][0]
__________________________________________________________________________________________________
re_lu (ReLU)                    (None, 112, 112, 16) 0           batch_normalization_1[0][0]
__________________________________________________________________________________________________
conv2d_1 (Conv2D)               (None, 112, 112, 16) 256         re_lu[0][0]
__________________________________________________________________________________________________
batch_normalization_2 (BatchNor (None, 112, 112, 16) 64          conv2d_1[0][0]
__________________________________________________________________________________________________
conv2d_2 (Conv2D)               (None, 112, 112, 64) 1024        batch_normalization_2[0][0]
__________________________________________________________________________________________________
batch_normalization_3 (BatchNor (None, 112, 112, 64) 256         conv2d_2[0][0]
__________________________________________________________________________________________________
re_lu_1 (ReLU)                  multiple             0           batch_normalization_3[0][0]
                                                                 batch_normalization_4[0][0]
__________________________________________________________________________________________________
depthwise_conv2d_1 (DepthwiseCo (None, 56, 56, 64)   576         re_lu_1[0][0]
__________________________________________________________________________________________________
batch_normalization_4 (BatchNor (None, 56, 56, 64)   256         depthwise_conv2d_1[0][0]
__________________________________________________________________________________________________
conv2d_3 (Conv2D)               (None, 56, 56, 24)   1536        re_lu_1[1][0]
__________________________________________________________________________________________________
batch_normalization_5 (BatchNor (None, 56, 56, 24)   96          conv2d_3[0][0]
__________________________________________________________________________________________________
conv2d_4 (Conv2D)               (None, 56, 56, 72)   1728        batch_normalization_5[0][0]
__________________________________________________________________________________________________
batch_normalization_6 (BatchNor (None, 56, 56, 72)   288         conv2d_4[0][0]
__________________________________________________________________________________________________
re_lu_2 (ReLU)                  (None, 56, 56, 72)   0           batch_normalization_6[0][0]
                                                                 batch_normalization_7[0][0]
__________________________________________________________________________________________________
depthwise_conv2d_2 (DepthwiseCo (None, 56, 56, 72)   648         re_lu_2[0][0]
__________________________________________________________________________________________________
batch_normalization_7 (BatchNor (None, 56, 56, 72)   288         depthwise_conv2d_2[0][0]
__________________________________________________________________________________________________
conv2d_5 (Conv2D)               (None, 56, 56, 24)   1728        re_lu_2[1][0]
__________________________________________________________________________________________________
batch_normalization_8 (BatchNor (None, 56, 56, 24)   96          conv2d_5[0][0]
__________________________________________________________________________________________________
conv2d_6 (Conv2D)               (None, 56, 56, 72)   1728        batch_normalization_8[0][0]
__________________________________________________________________________________________________
batch_normalization_9 (BatchNor (None, 56, 56, 72)   288         conv2d_6[0][0]
__________________________________________________________________________________________________
re_lu_3 (ReLU)                  multiple             0           batch_normalization_9[0][0]
                                                                 batch_normalization_10[0][0]
__________________________________________________________________________________________________
depthwise_conv2d_3 (DepthwiseCo (None, 28, 28, 72)   1800        re_lu_3[0][0]
__________________________________________________________________________________________________
batch_normalization_10 (BatchNo (None, 28, 28, 72)   288         depthwise_conv2d_3[0][0]
__________________________________________________________________________________________________
global_average_pooling2d (Globa (None, 72)           0           re_lu_3[1][0]
__________________________________________________________________________________________________
reshape (Reshape)               (None, 1, 1, 72)     0           global_average_pooling2d[0][0]
__________________________________________________________________________________________________
conv2d_7 (Conv2D)               (None, 1, 1, 18)     1314        reshape[0][0]
__________________________________________________________________________________________________
re_lu_4 (ReLU)                  (None, 1, 1, 18)     0           conv2d_7[0][0]
__________________________________________________________________________________________________
conv2d_8 (Conv2D)               (None, 1, 1, 72)     1368        re_lu_4[0][0]
__________________________________________________________________________________________________
activation_1 (Activation)       (None, 1, 1, 72)     0           conv2d_8[0][0]
__________________________________________________________________________________________________
multiply (Multiply)             (None, 28, 28, 72)   0           re_lu_3[1][0]
                                                                 activation_1[0][0]
__________________________________________________________________________________________________
conv2d_9 (Conv2D)               (None, 28, 28, 40)   2880        multiply[0][0]
__________________________________________________________________________________________________
batch_normalization_11 (BatchNo (None, 28, 28, 40)   160         conv2d_9[0][0]
__________________________________________________________________________________________________
conv2d_10 (Conv2D)              (None, 28, 28, 120)  4800        batch_normalization_11[0][0]
__________________________________________________________________________________________________
batch_normalization_12 (BatchNo (None, 28, 28, 120)  480         conv2d_10[0][0]
__________________________________________________________________________________________________
re_lu_5 (ReLU)                  (None, 28, 28, 120)  0           batch_normalization_12[0][0]
                                                                 batch_normalization_13[0][0]
__________________________________________________________________________________________________
depthwise_conv2d_4 (DepthwiseCo (None, 28, 28, 120)  3000        re_lu_5[0][0]
__________________________________________________________________________________________________
batch_normalization_13 (BatchNo (None, 28, 28, 120)  480         depthwise_conv2d_4[0][0]
__________________________________________________________________________________________________
global_average_pooling2d_1 (Glo (None, 120)          0           re_lu_5[1][0]
__________________________________________________________________________________________________
reshape_1 (Reshape)             (None, 1, 1, 120)    0           global_average_pooling2d_1[0][0]
__________________________________________________________________________________________________
conv2d_11 (Conv2D)              (None, 1, 1, 30)     3630        reshape_1[0][0]
__________________________________________________________________________________________________
re_lu_6 (ReLU)                  (None, 1, 1, 30)     0           conv2d_11[0][0]
__________________________________________________________________________________________________
conv2d_12 (Conv2D)              (None, 1, 1, 120)    3720        re_lu_6[0][0]
__________________________________________________________________________________________________
activation_2 (Activation)       (None, 1, 1, 120)    0           conv2d_12[0][0]
__________________________________________________________________________________________________
multiply_1 (Multiply)           (None, 28, 28, 120)  0           re_lu_5[1][0]
                                                                 activation_2[0][0]
__________________________________________________________________________________________________
conv2d_13 (Conv2D)              (None, 28, 28, 40)   4800        multiply_1[0][0]
__________________________________________________________________________________________________
batch_normalization_14 (BatchNo (None, 28, 28, 40)   160         conv2d_13[0][0]
__________________________________________________________________________________________________
conv2d_14 (Conv2D)              (None, 28, 28, 120)  4800        batch_normalization_14[0][0]
__________________________________________________________________________________________________
batch_normalization_15 (BatchNo (None, 28, 28, 120)  480         conv2d_14[0][0]
__________________________________________________________________________________________________
re_lu_7 (ReLU)                  (None, 28, 28, 120)  0           batch_normalization_15[0][0]
                                                                 batch_normalization_16[0][0]
__________________________________________________________________________________________________
depthwise_conv2d_5 (DepthwiseCo (None, 28, 28, 120)  3000        re_lu_7[0][0]
__________________________________________________________________________________________________
batch_normalization_16 (BatchNo (None, 28, 28, 120)  480         depthwise_conv2d_5[0][0]
__________________________________________________________________________________________________
global_average_pooling2d_2 (Glo (None, 120)          0           re_lu_7[1][0]
__________________________________________________________________________________________________
reshape_2 (Reshape)             (None, 1, 1, 120)    0           global_average_pooling2d_2[0][0]
__________________________________________________________________________________________________
conv2d_15 (Conv2D)              (None, 1, 1, 30)     3630        reshape_2[0][0]
__________________________________________________________________________________________________
re_lu_8 (ReLU)                  (None, 1, 1, 30)     0           conv2d_15[0][0]
__________________________________________________________________________________________________
conv2d_16 (Conv2D)              (None, 1, 1, 120)    3720        re_lu_8[0][0]
__________________________________________________________________________________________________
activation_3 (Activation)       (None, 1, 1, 120)    0           conv2d_16[0][0]
__________________________________________________________________________________________________
multiply_2 (Multiply)           (None, 28, 28, 120)  0           re_lu_7[1][0]
                                                                 activation_3[0][0]
__________________________________________________________________________________________________
conv2d_17 (Conv2D)              (None, 28, 28, 40)   4800        multiply_2[0][0]
__________________________________________________________________________________________________
batch_normalization_17 (BatchNo (None, 28, 28, 40)   160         conv2d_17[0][0]
__________________________________________________________________________________________________
conv2d_18 (Conv2D)              (None, 28, 28, 240)  9600        batch_normalization_17[0][0]
__________________________________________________________________________________________________
batch_normalization_18 (BatchNo (None, 28, 28, 240)  960         conv2d_18[0][0]
__________________________________________________________________________________________________
activation_4 (Activation)       (None, 28, 28, 240)  0           batch_normalization_18[0][0]
__________________________________________________________________________________________________
tf.math.multiply_1 (TFOpLambda) (None, 28, 28, 240)  0           batch_normalization_18[0][0]
                                                                 activation_4[0][0]
__________________________________________________________________________________________________
depthwise_conv2d_6 (DepthwiseCo (None, 14, 14, 240)  2160        tf.math.multiply_1[0][0]
__________________________________________________________________________________________________
batch_normalization_19 (BatchNo (None, 14, 14, 240)  960         depthwise_conv2d_6[0][0]
__________________________________________________________________________________________________
activation_5 (Activation)       (None, 14, 14, 240)  0           batch_normalization_19[0][0]
__________________________________________________________________________________________________
tf.math.multiply_2 (TFOpLambda) (None, 14, 14, 240)  0           batch_normalization_19[0][0]
                                                                 activation_5[0][0]
__________________________________________________________________________________________________
conv2d_19 (Conv2D)              (None, 14, 14, 80)   19200       tf.math.multiply_2[0][0]
__________________________________________________________________________________________________
batch_normalization_20 (BatchNo (None, 14, 14, 80)   320         conv2d_19[0][0]
__________________________________________________________________________________________________
conv2d_20 (Conv2D)              (None, 14, 14, 200)  16000       batch_normalization_20[0][0]
__________________________________________________________________________________________________
batch_normalization_21 (BatchNo (None, 14, 14, 200)  800         conv2d_20[0][0]
__________________________________________________________________________________________________
activation_6 (Activation)       (None, 14, 14, 200)  0           batch_normalization_21[0][0]
__________________________________________________________________________________________________
tf.math.multiply_3 (TFOpLambda) (None, 14, 14, 200)  0           batch_normalization_21[0][0]
                                                                 activation_6[0][0]
__________________________________________________________________________________________________
depthwise_conv2d_7 (DepthwiseCo (None, 14, 14, 200)  1800        tf.math.multiply_3[0][0]
__________________________________________________________________________________________________
batch_normalization_22 (BatchNo (None, 14, 14, 200)  800         depthwise_conv2d_7[0][0]
__________________________________________________________________________________________________
activation_7 (Activation)       (None, 14, 14, 200)  0           batch_normalization_22[0][0]
__________________________________________________________________________________________________
tf.math.multiply_4 (TFOpLambda) (None, 14, 14, 200)  0           batch_normalization_22[0][0]
                                                                 activation_7[0][0]
__________________________________________________________________________________________________
conv2d_21 (Conv2D)              (None, 14, 14, 80)   16000       tf.math.multiply_4[0][0]
__________________________________________________________________________________________________
batch_normalization_23 (BatchNo (None, 14, 14, 80)   320         conv2d_21[0][0]
__________________________________________________________________________________________________
conv2d_22 (Conv2D)              (None, 14, 14, 184)  14720       batch_normalization_23[0][0]
__________________________________________________________________________________________________
batch_normalization_24 (BatchNo (None, 14, 14, 184)  736         conv2d_22[0][0]
__________________________________________________________________________________________________
activation_8 (Activation)       (None, 14, 14, 184)  0           batch_normalization_24[0][0]
__________________________________________________________________________________________________
tf.math.multiply_5 (TFOpLambda) (None, 14, 14, 184)  0           batch_normalization_24[0][0]
                                                                 activation_8[0][0]
__________________________________________________________________________________________________
depthwise_conv2d_8 (DepthwiseCo (None, 14, 14, 184)  1656        tf.math.multiply_5[0][0]
__________________________________________________________________________________________________
batch_normalization_25 (BatchNo (None, 14, 14, 184)  736         depthwise_conv2d_8[0][0]
__________________________________________________________________________________________________
activation_9 (Activation)       (None, 14, 14, 184)  0           batch_normalization_25[0][0]
__________________________________________________________________________________________________
tf.math.multiply_6 (TFOpLambda) (None, 14, 14, 184)  0           batch_normalization_25[0][0]
                                                                 activation_9[0][0]
__________________________________________________________________________________________________
conv2d_23 (Conv2D)              (None, 14, 14, 80)   14720       tf.math.multiply_6[0][0]
__________________________________________________________________________________________________
batch_normalization_26 (BatchNo (None, 14, 14, 80)   320         conv2d_23[0][0]
__________________________________________________________________________________________________
conv2d_24 (Conv2D)              (None, 14, 14, 184)  14720       batch_normalization_26[0][0]
__________________________________________________________________________________________________
batch_normalization_27 (BatchNo (None, 14, 14, 184)  736         conv2d_24[0][0]
__________________________________________________________________________________________________
activation_10 (Activation)      (None, 14, 14, 184)  0           batch_normalization_27[0][0]
__________________________________________________________________________________________________
tf.math.multiply_7 (TFOpLambda) (None, 14, 14, 184)  0           batch_normalization_27[0][0]
                                                                 activation_10[0][0]
__________________________________________________________________________________________________
depthwise_conv2d_9 (DepthwiseCo (None, 14, 14, 184)  1656        tf.math.multiply_7[0][0]
__________________________________________________________________________________________________
batch_normalization_28 (BatchNo (None, 14, 14, 184)  736         depthwise_conv2d_9[0][0]
__________________________________________________________________________________________________
activation_11 (Activation)      (None, 14, 14, 184)  0           batch_normalization_28[0][0]
__________________________________________________________________________________________________
tf.math.multiply_8 (TFOpLambda) (None, 14, 14, 184)  0           batch_normalization_28[0][0]
                                                                 activation_11[0][0]
__________________________________________________________________________________________________
conv2d_25 (Conv2D)              (None, 14, 14, 80)   14720       tf.math.multiply_8[0][0]
__________________________________________________________________________________________________
batch_normalization_29 (BatchNo (None, 14, 14, 80)   320         conv2d_25[0][0]
__________________________________________________________________________________________________
conv2d_26 (Conv2D)              (None, 14, 14, 480)  38400       batch_normalization_29[0][0]
__________________________________________________________________________________________________
batch_normalization_30 (BatchNo (None, 14, 14, 480)  1920        conv2d_26[0][0]
__________________________________________________________________________________________________
activation_12 (Activation)      (None, 14, 14, 480)  0           batch_normalization_30[0][0]
__________________________________________________________________________________________________
tf.math.multiply_9 (TFOpLambda) (None, 14, 14, 480)  0           batch_normalization_30[0][0]
                                                                 activation_12[0][0]
__________________________________________________________________________________________________
depthwise_conv2d_10 (DepthwiseC (None, 14, 14, 480)  4320        tf.math.multiply_9[0][0]
__________________________________________________________________________________________________
batch_normalization_31 (BatchNo (None, 14, 14, 480)  1920        depthwise_conv2d_10[0][0]
__________________________________________________________________________________________________
activation_13 (Activation)      (None, 14, 14, 480)  0           batch_normalization_31[0][0]
__________________________________________________________________________________________________
tf.math.multiply_10 (TFOpLambda (None, 14, 14, 480)  0           batch_normalization_31[0][0]
                                                                 activation_13[0][0]
__________________________________________________________________________________________________
global_average_pooling2d_3 (Glo (None, 480)          0           tf.math.multiply_10[0][0]
__________________________________________________________________________________________________
reshape_3 (Reshape)             (None, 1, 1, 480)    0           global_average_pooling2d_3[0][0]
__________________________________________________________________________________________________
conv2d_27 (Conv2D)              (None, 1, 1, 120)    57720       reshape_3[0][0]
__________________________________________________________________________________________________
re_lu_9 (ReLU)                  (None, 1, 1, 120)    0           conv2d_27[0][0]
__________________________________________________________________________________________________
conv2d_28 (Conv2D)              (None, 1, 1, 480)    58080       re_lu_9[0][0]
__________________________________________________________________________________________________
activation_14 (Activation)      (None, 1, 1, 480)    0           conv2d_28[0][0]
__________________________________________________________________________________________________
multiply_3 (Multiply)           (None, 14, 14, 480)  0           tf.math.multiply_10[0][0]
                                                                 activation_14[0][0]
__________________________________________________________________________________________________
conv2d_29 (Conv2D)              (None, 14, 14, 112)  53760       multiply_3[0][0]
__________________________________________________________________________________________________
batch_normalization_32 (BatchNo (None, 14, 14, 112)  448         conv2d_29[0][0]
__________________________________________________________________________________________________
conv2d_30 (Conv2D)              (None, 14, 14, 672)  75264       batch_normalization_32[0][0]
__________________________________________________________________________________________________
batch_normalization_33 (BatchNo (None, 14, 14, 672)  2688        conv2d_30[0][0]
__________________________________________________________________________________________________
activation_15 (Activation)      (None, 14, 14, 672)  0           batch_normalization_33[0][0]
__________________________________________________________________________________________________
tf.math.multiply_11 (TFOpLambda (None, 14, 14, 672)  0           batch_normalization_33[0][0]
                                                                 activation_15[0][0]
__________________________________________________________________________________________________
depthwise_conv2d_11 (DepthwiseC (None, 14, 14, 672)  6048        tf.math.multiply_11[0][0]
__________________________________________________________________________________________________
batch_normalization_34 (BatchNo (None, 14, 14, 672)  2688        depthwise_conv2d_11[0][0]
__________________________________________________________________________________________________
activation_16 (Activation)      (None, 14, 14, 672)  0           batch_normalization_34[0][0]
```

__________________________________________________________________________________________________

tf.math.multiply_12 (TFOpLambda (None, 14, 14, 672) 0 batch_normalization_34[0][0]

activation_16[0][0]

__________________________________________________________________________________________________

global_average_pooling2d_4 (Glo (None, 672) 0 tf.math.multiply_12[0][0]

__________________________________________________________________________________________________

reshape_4 (Reshape) (None, 1, 1, 672) 0 global_average_pooling2d_4[0][0]

__________________________________________________________________________________________________

conv2d_31 (Conv2D) (None, 1, 1, 168) 113064 reshape_4[0][0]

__________________________________________________________________________________________________

re_lu_10 (ReLU) (None, 1, 1, 168) 0 conv2d_31[0][0]

__________________________________________________________________________________________________

conv2d_32 (Conv2D) (None, 1, 1, 672) 113568 re_lu_10[0][0]

__________________________________________________________________________________________________

activation_17 (Activation) (None, 1, 1, 672) 0 conv2d_32[0][0]

__________________________________________________________________________________________________

multiply_4 (Multiply) (None, 14, 14, 672) 0 tf.math.multiply_12[0][0]

activation_17[0][0]

__________________________________________________________________________________________________

conv2d_33 (Conv2D) (None, 14, 14, 112) 75264 multiply_4[0][0]

__________________________________________________________________________________________________

batch_normalization_35 (BatchNo (None, 14, 14, 112) 448 conv2d_33[0][0]

__________________________________________________________________________________________________

conv2d_34 (Conv2D) (None, 14, 14, 672) 75264 batch_normalization_35[0][0]

__________________________________________________________________________________________________

batch_normalization_36 (BatchNo (None, 14, 14, 672) 2688 conv2d_34[0][0]

__________________________________________________________________________________________________

activation_18 (Activation) (None, 14, 14, 672) 0 batch_normalization_36[0][0]

__________________________________________________________________________________________________

tf.math.multiply_13 (TFOpLambda (None, 14, 14, 672) 0 batch_normalization_36[0][0]

activation_18[0][0]

__________________________________________________________________________________________________

depthwise_conv2d_12 (DepthwiseC (None, 7, 7, 672) 16800 tf.math.multiply_13[0][0]

__________________________________________________________________________________________________

batch_normalization_37 (BatchNo (None, 7, 7, 672) 2688 depthwise_conv2d_12[0][0]

__________________________________________________________________________________________________

activation_19 (Activation) (None, 7, 7, 672) 0 batch_normalization_37[0][0]

__________________________________________________________________________________________________

tf.math.multiply_14 (TFOpLambda (None, 7, 7, 672) 0 batch_normalization_37[0][0]

activation_19[0][0]

__________________________________________________________________________________________________

global_average_pooling2d_5 (Glo (None, 672) 0 tf.math.multiply_14[0][0]

__________________________________________________________________________________________________

reshape_5 (Reshape) (None, 1, 1, 672) 0 global_average_pooling2d_5[0][0]

__________________________________________________________________________________________________

conv2d_35 (Conv2D) (None, 1, 1, 168) 113064 reshape_5[0][0]

__________________________________________________________________________________________________

re_lu_11 (ReLU) (None, 1, 1, 168) 0 conv2d_35[0][0]

__________________________________________________________________________________________________

conv2d_36 (Conv2D) (None, 1, 1, 672) 113568 re_lu_11[0][0]

__________________________________________________________________________________________________

activation_20 (Activation) (None, 1, 1, 672) 0 conv2d_36[0][0]

__________________________________________________________________________________________________

multiply_5 (Multiply) (None, 7, 7, 672) 0 tf.math.multiply_14[0][0]

activation_20[0][0]

__________________________________________________________________________________________________

conv2d_37 (Conv2D) (None, 7, 7, 160) 107520 multiply_5[0][0]

__________________________________________________________________________________________________

batch_normalization_38 (BatchNo (None, 7, 7, 160) 640 conv2d_37[0][0]

__________________________________________________________________________________________________

conv2d_38 (Conv2D) (None, 7, 7, 960) 153600 batch_normalization_38[0][0]

__________________________________________________________________________________________________

batch_normalization_39 (BatchNo (None, 7, 7, 960) 3840 conv2d_38[0][0]

__________________________________________________________________________________________________

activation_21 (Activation) (None, 7, 7, 960) 0 batch_normalization_39[0][0]

__________________________________________________________________________________________________

tf.math.multiply_15 (TFOpLambda (None, 7, 7, 960) 0 batch_normalization_39[0][0]

activation_21[0][0]

__________________________________________________________________________________________________

depthwise_conv2d_13 (DepthwiseC (None, 7, 7, 960) 24000 tf.math.multiply_15[0][0]

__________________________________________________________________________________________________

batch_normalization_40 (BatchNo (None, 7, 7, 960) 3840 depthwise_conv2d_13[0][0]

__________________________________________________________________________________________________

activation_22 (Activation) (None, 7, 7, 960) 0 batch_normalization_40[0][0]

__________________________________________________________________________________________________

tf.math.multiply_16 (TFOpLambda (None, 7, 7, 960) 0 batch_normalization_40[0][0]

activation_22[0][0]

__________________________________________________________________________________________________

global_average_pooling2d_6 (Glo (None, 960) 0 tf.math.multiply_16[0][0]

__________________________________________________________________________________________________

reshape_6 (Reshape) (None, 1, 1, 960) 0 global_average_pooling2d_6[0][0]

__________________________________________________________________________________________________

conv2d_39 (Conv2D) (None, 1, 1, 240) 230640 reshape_6[0][0]

__________________________________________________________________________________________________

re_lu_12 (ReLU) (None, 1, 1, 240) 0 conv2d_39[0][0]

__________________________________________________________________________________________________

conv2d_40 (Conv2D) (None, 1, 1, 960) 231360 re_lu_12[0][0]

__________________________________________________________________________________________________

activation_23 (Activation) (None, 1, 1, 960) 0 conv2d_40[0][0]

__________________________________________________________________________________________________

multiply_6 (Multiply) (None, 7, 7, 960) 0 tf.math.multiply_16[0][0]

activation_23[0][0]

__________________________________________________________________________________________________

conv2d_41 (Conv2D) (None, 7, 7, 160) 153600 multiply_6[0][0]

__________________________________________________________________________________________________

batch_normalization_41 (BatchNo (None, 7, 7, 160) 640 conv2d_41[0][0]

__________________________________________________________________________________________________

conv2d_42 (Conv2D) (None, 7, 7, 960) 153600 batch_normalization_41[0][0]

__________________________________________________________________________________________________

batch_normalization_42 (BatchNo (None, 7, 7, 960) 3840 conv2d_42[0][0]

__________________________________________________________________________________________________

activation_24 (Activation) (None, 7, 7, 960) 0 batch_normalization_42[0][0]

__________________________________________________________________________________________________

tf.math.multiply_17 (TFOpLambda (None, 7, 7, 960) 0 batch_normalization_42[0][0]

activation_24[0][0]

__________________________________________________________________________________________________

depthwise_conv2d_14 (DepthwiseC (None, 7, 7, 960) 24000 tf.math.multiply_17[0][0]

__________________________________________________________________________________________________

batch_normalization_43 (BatchNo (None, 7, 7, 960) 3840 depthwise_conv2d_14[0][0]

__________________________________________________________________________________________________

activation_25 (Activation) (None, 7, 7, 960) 0 batch_normalization_43[0][0]

__________________________________________________________________________________________________

tf.math.multiply_18 (TFOpLambda (None, 7, 7, 960) 0 batch_normalization_43[0][0]

activation_25[0][0]

__________________________________________________________________________________________________

global_average_pooling2d_7 (Glo (None, 960) 0 tf.math.multiply_18[0][0]

__________________________________________________________________________________________________

reshape_7 (Reshape) (None, 1, 1, 960) 0 global_average_pooling2d_7[0][0]

__________________________________________________________________________________________________

conv2d_43 (Conv2D) (None, 1, 1, 240) 230640 reshape_7[0][0]

__________________________________________________________________________________________________

re_lu_13 (ReLU) (None, 1, 1, 240) 0 conv2d_43[0][0]

__________________________________________________________________________________________________

conv2d_44 (Conv2D) (None, 1, 1, 960) 231360 re_lu_13[0][0]

__________________________________________________________________________________________________

activation_26 (Activation) (None, 1, 1, 960) 0 conv2d_44[0][0]

__________________________________________________________________________________________________

multiply_7 (Multiply) (None, 7, 7, 960) 0 tf.math.multiply_18[0][0]

activation_26[0][0]

__________________________________________________________________________________________________

conv2d_45 (Conv2D) (None, 7, 7, 160) 153600 multiply_7[0][0]

__________________________________________________________________________________________________

batch_normalization_44 (BatchNo (None, 7, 7, 160) 640 conv2d_45[0][0]

__________________________________________________________________________________________________

conv2d_46 (Conv2D) (None, 7, 7, 960) 153600 batch_normalization_44[0][0]

__________________________________________________________________________________________________

batch_normalization_45 (BatchNo (None, 7, 7, 960) 3840 conv2d_46[0][0]

__________________________________________________________________________________________________

activation_27 (Activation) (None, 7, 7, 960) 0 batch_normalization_45[0][0]

__________________________________________________________________________________________________

tf.math.multiply_19 (TFOpLambda (None, 7, 7, 960) 0 batch_normalization_45[0][0]

activation_27[0][0]

__________________________________________________________________________________________________

max_pooling2d (MaxPooling2D) (None, 1, 1, 960) 0 tf.math.multiply_19[0][0]

__________________________________________________________________________________________________

reshape_8 (Reshape) (None, 1, 1, 960) 0 max_pooling2d[0][0]

__________________________________________________________________________________________________

conv2d_47 (Conv2D) (None, 1, 1, 1280) 1230080 reshape_8[0][0]

__________________________________________________________________________________________________

activation_28 (Activation) (None, 1, 1, 1280) 0 conv2d_47[0][0]

__________________________________________________________________________________________________

tf.math.multiply_20 (TFOpLambda (None, 1, 1, 1280) 0 conv2d_47[0][0]

activation_28[0][0]

__________________________________________________________________________________________________

conv2d_48 (Conv2D) (None, 1, 1, 1000) 1281000 tf.math.multiply_20[0][0]

__________________________________________________________________________________________________

flatten (Flatten) (None, 1000) 0 conv2d_48[0][0]

==================================================================================================

Total params: 5,505,598

Trainable params: 5,481,198

Non-trainable params: 24,400

__________________________________________________________________________________________________
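The Param # column can be checked by hand. The following is a minimal sketch in plain Python, assuming the standard Keras counting rules: a Conv2D layer has k*k*C_in*C_out weights plus C_out biases when it uses a bias; a DepthwiseConv2D with depth_multiplier=1 has k*k*C weights; a BatchNormalization layer stores four values per channel (gamma and beta are trainable, the moving mean and moving variance are not). Which convolutions carry a bias, and the 5x5 kernel of the later depthwise layers, are inferred from the printed counts rather than taken from the author's code.

```python
def conv2d_params(c_in, c_out, k=1, use_bias=False):
    """Conv2D parameters: k*k*c_in*c_out weights, plus c_out biases if use_bias."""
    return k * k * c_in * c_out + (c_out if use_bias else 0)


def depthwise_params(c, k=3, use_bias=False):
    """DepthwiseConv2D with depth_multiplier=1: one k*k kernel per input channel."""
    return k * k * c + (c if use_bias else 0)


def bn_params(c):
    """BatchNormalization: gamma, beta (trainable) + moving mean/variance (non-trainable)."""
    return 4 * c


# (layer from the summary, value computed here, value printed in the summary)
checks = [
    ("batch_normalization_30", bn_params(480), 1920),
    ("depthwise_conv2d_10 (3x3)", depthwise_params(480, k=3), 4320),
    ("conv2d_27 (SE squeeze, bias)", conv2d_params(480, 120, use_bias=True), 57720),
    ("conv2d_28 (SE excite, bias)", conv2d_params(120, 480, use_bias=True), 58080),
    ("conv2d_29 (projection, no bias)", conv2d_params(480, 112), 53760),
    ("depthwise_conv2d_12 (5x5)", depthwise_params(672, k=5), 16800),
    ("conv2d_39 (SE squeeze, bias)", conv2d_params(960, 240, use_bias=True), 230640),
    ("conv2d_47 (960 -> 1280, bias)", conv2d_params(960, 1280, use_bias=True), 1230080),
    ("conv2d_48 (1280 -> 1000, bias)", conv2d_params(1280, 1000, use_bias=True), 1281000),
]

for name, computed, printed in checks:
    status = "OK" if computed == printed else "MISMATCH"
    print(f"{name:34s} {computed:>9,d} {printed:>9,d} {status}")
```

The same rule explains the Non-trainable params line: each BatchNormalization layer contributes 2*C non-trainable values (its moving statistics), so batch_normalization_30, for example, accounts for 960 of the 24,400.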
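The summary also shows how the blocks are wired. Each BatchNormalization → Activation → tf.math.multiply triple corresponds to h_swish written as x * h_sigmoid(x), and each GlobalAveragePooling2D → Reshape → Conv2D → ReLU → Conv2D → Activation → Multiply chain is an SE block built from 1x1 convolutions. The sketch below rebuilds the 480-channel block from the summary under those assumptions. The helper names `hard_sigmoid`, `h_swish` and `se_block`, the 80-channel input (from the MobileNetV3-Large layer table in the paper) and `padding="same"` are hypothetical choices for illustration, not the author's code.

```python
import tensorflow as tf
from tensorflow.keras import layers, Model


def hard_sigmoid(x):
    # Hypothetical helper: h_sigmoid(x) = ReLU6(x + 3) / 6, as in the MobileNetV3 paper
    return tf.nn.relu6(x + 3.0) / 6.0


def h_swish(x):
    # Hypothetical helper: h_swish(x) = x * h_sigmoid(x); in tf.keras 2.x the `*`
    # between two Keras tensors is recorded as a tf.math.multiply (TFOpLambda) layer
    return x * layers.Activation(hard_sigmoid)(x)


def se_block(x, reduction=4):
    # Hypothetical helper: Squeeze-and-Excitation producing one weight per channel
    c = x.shape[-1]
    s = layers.GlobalAveragePooling2D()(x)   # [h, w, c] -> [c]
    s = layers.Reshape((1, 1, c))(s)         # [c] -> [1, 1, c]
    s = layers.Conv2D(c // reduction, 1)(s)  # squeeze to c/4 channels (with bias)
    s = layers.ReLU()(s)
    s = layers.Conv2D(c, 1)(s)               # back to c channels (with bias)
    s = layers.Activation(hard_sigmoid)(s)   # per-channel weights in [0, 1]
    return layers.Multiply()([x, s])         # rescale each feature map by its weight


# Rebuild the 80 -> 112 block (expansion 480) on a 14x14 feature map
inputs = layers.Input(shape=(14, 14, 80))                         # assumed 80-channel input
x = layers.Conv2D(480, 1, use_bias=False)(inputs)                 # 1x1 expansion
x = h_swish(layers.BatchNormalization()(x))
x = layers.DepthwiseConv2D(3, padding="same", use_bias=False)(x)  # 3x3 depthwise
x = h_swish(layers.BatchNormalization()(x))
x = se_block(x)
x = layers.Conv2D(112, 1, use_bias=False)(x)                      # linear 1x1 projection
outputs = layers.BatchNormalization()(x)

model = Model(inputs, outputs)
model.summary()
```

Under these assumptions the sketch's SE convolutions (57,720 and 58,080 parameters), depthwise convolution (4,320) and projection (53,760) match the corresponding rows of the summary. As in those rows, this block has no residual Add, since its input (80) and output (112) channel counts differ.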