手撕Desenet卷积神经网络-pytorch-详细注释版(可以直接替换自己数据集)-直接放置自己的数据集就能直接跑。跑的代码有问题的可以在评论区指出,看到了会回复。训练代码和预测代码均有。

论文链接:https://arxiv.org/pdf/1608.06993.pdf

没法下载论文的看我下面的百度云链接,在里面有论文

需要学习Resnet的可以看我的这篇博客。

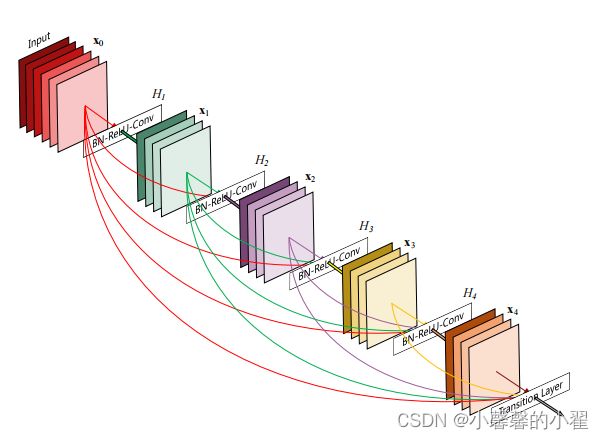

Desenet则是将前面所有层的输出,打入当前层进行传递,所以就造成了该网络结构密密麻麻的网络结构,Dense Block模块如下图所示。

可以看到图中密密麻麻的线就是将每个层之前所有层的输出,输入当前层。根据作者论文中的描述,这样做的好处如下:

DenseNet优势

(1)较好的解决了深层网络的梯度消失问题;

(2)加强了特征的传播(因为之前层的输出都输入当前层) ;

(3)鼓励特征重用(因为之前层的输出都输入当前层);

(4)减少了模型参数(层数堆叠变少了,毕竟都在重复用之前的);

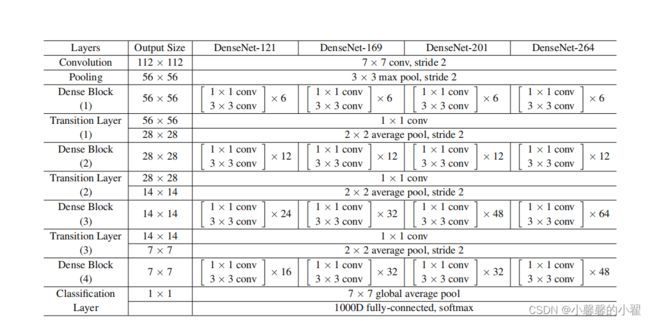

具体网络结果如下图:

可以根据这个网络结构,搭一下网络,我们手撕网络也是基于这个结构图。如果想细细了解网络结构,就去读一下我的代码

接下来我们来看代码:

导入需要的库:

import torch

import torch.nn as nn

import torch.nn.functional as F

import torch.utils.checkpoint as cp

from torch import Tensor

import torchvision.models

from matplotlib import pyplot as plt

from tqdm import tqdm

from torch.utils.data import DataLoader

from torchvision.transforms import transforms

import re

from typing import Any, List, Tuple

from collections import OrderedDict图像预处理: 将所有图像缩放成120*120进行处理

data_transform = {

"train": transforms.Compose([transforms.RandomResizedCrop(120),

transforms.RandomHorizontalFlip(),

transforms.ToTensor(),

transforms.Normalize((0.5, 0.5, 0.5), (0.5, 0.5, 0.5))]),

"val": transforms.Compose([transforms.Resize((120, 120)), # cannot 224, must (224, 224)

transforms.ToTensor(),

transforms.Normalize((0.5, 0.5, 0.5), (0.5, 0.5, 0.5))])}训练集数据和测试集数据的导入 :

将数据像挤牙膏似的一点一点的抽出去,设置相应的batc_size

自己的数据放在跟代码相同的文件夹下新建一个data文件夹,data文件夹里的新建一个train文件夹用于放置训练集的图片。同理新建一个val文件夹用于放置测试集的图片。

train_data = torchvision.datasets.ImageFolder(root = "./data/train" , transform = data_transform["train"])

traindata = DataLoader(dataset=train_data, batch_size=32, shuffle=True, num_workers=0) # 将训练数据以每次32张图片的形式抽出进行训练

test_data = torchvision.datasets.ImageFolder(root = "./data/val" , transform = data_transform["val"])

train_size = len(train_data) # 训练集的长度

test_size = len(test_data) # 测试集的长度

print(train_size) #输出训练集长度看一下,相当于看看有几张图片

print(test_size) #输出测试集长度看一下,相当于看看有几张图片

testdata = DataLoader(dataset=test_data, batch_size=32, shuffle=True, num_workers=0) # 将训练数据以每次32张图片的形式抽出进行测试设置GPU 和 CPU的使用:

有GPU则调用GPU,没有的话就调用CPU

device = torch.device("cuda:0" if torch.cuda.is_available() else "cpu")

print("using {} device.".format(device))构建Densenet网络:

class _DenseLayer(nn.Module):

def __init__(self,

input_c: int,

growth_rate: int,

bn_size: int,

drop_rate: float,

memory_efficient: bool = False):

super(_DenseLayer, self).__init__()

self.add_module("norm1", nn.BatchNorm2d(input_c))

self.add_module("relu1", nn.ReLU(inplace=True))

self.add_module("conv1", nn.Conv2d(in_channels=input_c,

out_channels=bn_size * growth_rate,

kernel_size=1,

stride=1,

bias=False))

self.add_module("norm2", nn.BatchNorm2d(bn_size * growth_rate))

self.add_module("relu2", nn.ReLU(inplace=True))

self.add_module("conv2", nn.Conv2d(bn_size * growth_rate,

growth_rate,

kernel_size=3,

stride=1,

padding=1,

bias=False))

self.drop_rate = drop_rate

self.memory_efficient = memory_efficient

def bn_function(self, inputs: List[Tensor]) -> Tensor:

concat_features = torch.cat(inputs, 1)

bottleneck_output = self.conv1(self.relu1(self.norm1(concat_features)))

return bottleneck_output

@staticmethod

def any_requires_grad(inputs: List[Tensor]) -> bool:

for tensor in inputs:

if tensor.requires_grad:

return True

return False

@torch.jit.unused

def call_checkpoint_bottleneck(self, inputs: List[Tensor]) -> Tensor:

def closure(*inp):

return self.bn_function(inp)

return cp.checkpoint(closure, *inputs)

def forward(self, inputs: Tensor) -> Tensor:

if isinstance(inputs, Tensor):

prev_features = [inputs]

else:

prev_features = inputs

if self.memory_efficient and self.any_requires_grad(prev_features):

if torch.jit.is_scripting():

raise Exception("memory efficient not supported in JIT")

bottleneck_output = self.call_checkpoint_bottleneck(prev_features)

else:

bottleneck_output = self.bn_function(prev_features)

new_features = self.conv2(self.relu2(self.norm2(bottleneck_output)))

if self.drop_rate > 0:

new_features = F.dropout(new_features,

p=self.drop_rate,

training=self.training)

return new_features

class _DenseBlock(nn.ModuleDict):

_version = 2

def __init__(self,

num_layers: int,

input_c: int,

bn_size: int,

growth_rate: int,

drop_rate: float,

memory_efficient: bool = False):

super(_DenseBlock, self).__init__()

for i in range(num_layers):

layer = _DenseLayer(input_c + i * growth_rate,

growth_rate=growth_rate,

bn_size=bn_size,

drop_rate=drop_rate,

memory_efficient=memory_efficient)

self.add_module("denselayer%d" % (i + 1), layer)

def forward(self, init_features: Tensor) -> Tensor:

features = [init_features]

for name, layer in self.items():

new_features = layer(features)

features.append(new_features)

return torch.cat(features, 1)

class _Transition(nn.Sequential):

def __init__(self,

input_c: int,

output_c: int):

super(_Transition, self).__init__()

self.add_module("norm", nn.BatchNorm2d(input_c))

self.add_module("relu", nn.ReLU(inplace=True))

self.add_module("conv", nn.Conv2d(input_c,

output_c,

kernel_size=1,

stride=1,

bias=False))

self.add_module("pool", nn.AvgPool2d(kernel_size=2, stride=2))

class DenseNet(nn.Module):

"""

Densenet-BC model class for imagenet

Args:

growth_rate (int) - how many filters to add each layer (`k` in paper)

block_config (list of 4 ints) - how many layers in each pooling block

num_init_features (int) - the number of filters to learn in the first convolution layer

bn_size (int) - multiplicative factor for number of bottle neck layers

(i.e. bn_size * k features in the bottleneck layer)

drop_rate (float) - dropout rate after each dense layer

num_classes (int) - number of classification classes

memory_efficient (bool) - If True, uses checkpointing. Much more memory efficient

"""

def __init__(self,

growth_rate: int = 32,

block_config: Tuple[int, int, int, int] = (6, 12, 24, 16),

num_init_features: int = 64,

bn_size: int = 4,

drop_rate: float = 0,

num_classes: int = 1000,

memory_efficient: bool = False):

super(DenseNet, self).__init__()

# first conv+bn+relu+pool

self.features = nn.Sequential(OrderedDict([

("conv0", nn.Conv2d(3, num_init_features, kernel_size=7, stride=2, padding=3, bias=False)),

("norm0", nn.BatchNorm2d(num_init_features)),

("relu0", nn.ReLU(inplace=True)),

("pool0", nn.MaxPool2d(kernel_size=3, stride=2, padding=1)),

]))

# each dense block

num_features = num_init_features

for i, num_layers in enumerate(block_config):

block = _DenseBlock(num_layers=num_layers,

input_c=num_features,

bn_size=bn_size,

growth_rate=growth_rate,

drop_rate=drop_rate,

memory_efficient=memory_efficient)

self.features.add_module("denseblock%d" % (i + 1), block)

num_features = num_features + num_layers * growth_rate

if i != len(block_config) - 1:

trans = _Transition(input_c=num_features,

output_c=num_features // 2)

self.features.add_module("transition%d" % (i + 1), trans)

num_features = num_features // 2

# finnal batch norm

self.features.add_module("norm5", nn.BatchNorm2d(num_features))

# fc layer

self.classifier = nn.Linear(num_features, num_classes)

# init weights

for m in self.modules():

if isinstance(m, nn.Conv2d):

nn.init.kaiming_normal_(m.weight)

elif isinstance(m, nn.BatchNorm2d):

nn.init.constant_(m.weight, 1)

nn.init.constant_(m.bias, 0)

elif isinstance(m, nn.Linear):

nn.init.constant_(m.bias, 0)

def forward(self, x: Tensor) -> Tensor:

features = self.features(x)

out = F.relu(features, inplace=True)

out = F.adaptive_avg_pool2d(out, (1, 1))

out = torch.flatten(out, 1)

out = self.classifier(out)

return out

def densenet121(**kwargs: Any) -> DenseNet:

# Top-1 error: 25.35%

# 'densenet121': 'https://download.pytorch.org/models/densenet121-a639ec97.pth'

return DenseNet(growth_rate=32,

block_config=(6, 12, 24, 16),

num_init_features=64,

**kwargs)

def densenet169(**kwargs: Any) -> DenseNet:

# Top-1 error: 24.00%

# 'densenet169': 'https://download.pytorch.org/models/densenet169-b2777c0a.pth'

return DenseNet(growth_rate=32,

block_config=(6, 12, 32, 32),

num_init_features=64,

**kwargs)

def densenet201(**kwargs: Any) -> DenseNet:

# Top-1 error: 22.80%

# 'densenet201': 'https://download.pytorch.org/models/densenet201-c1103571.pth'

return DenseNet(growth_rate=32,

block_config=(6, 12, 48, 32),

num_init_features=64,

**kwargs)

def densenet161(**kwargs: Any) -> DenseNet:

# Top-1 error: 22.35%

# 'densenet161': 'https://download.pytorch.org/models/densenet161-8d451a50.pth'

return DenseNet(growth_rate=48,

block_config=(6, 12, 36, 24),

num_init_features=96,

**kwargs)

def load_state_dict(model: nn.Module, weights_path: str) -> None:

# '.'s are no longer allowed in module names, but previous _DenseLayer

# has keys 'norm.1', 'relu.1', 'conv.1', 'norm.2', 'relu.2', 'conv.2'.

# They are also in the checkpoints in model_urls. This pattern is used

# to find such keys.

pattern = re.compile(

r'^(.*denselayer\d+\.(?:norm|relu|conv))\.((?:[12])\.(?:weight|bias|running_mean|running_var))$')

state_dict = torch.load(weights_path)

num_classes = model.classifier.out_features

load_fc = num_classes == 1000

for key in list(state_dict.keys()):

if load_fc is False:

if "classifier" in key:

del state_dict[key]

res = pattern.match(key)

if res:

new_key = res.group(1) + res.group(2)

state_dict[new_key] = state_dict[key]

del state_dict[key]

model.load_state_dict(state_dict, strict=load_fc)

print("successfully load pretrain-weights.")

启动模型,测试模型输出:

DenseNet1 = DenseNet(num_classes = 2) #将模型命名为Densenet这里的2就改成自己的数据集的种类即可,几种就改成几

DenseNet1.to(device)

print(DenseNet1.to(device)) #输出模型结构

test1 = torch.ones(64, 3, 120, 120) # 测试一下输出的形状大小 输入一个64,3,120,120的向量

test1 = DenseNet1(test1.to(device)) #将向量打入神经网络进行测试

print(test1.shape) #查看输出的结果#输出一个测试数据看看模型的数据是几种的,是不是我们需要的种类设置训练需要的参数,epoch,学习率learning 优化器。损失函数。

epoch = 10 # 迭代次数即训练次数

learning = 0.001 # 学习率

optimizer = torch.optim.Adam(net.parameters(), lr=learning) # 使用Adam优化器-写论文的话可以具体查一下这个优化器的原理

loss = nn.CrossEntropyLoss() # 损失计算方式,交叉熵损失函数设置四个空数组,用来存放训练集的loss和accuracy 测试集的loss和 accuracy

train_loss_all = [] # 存放训练集损失的数组

train_accur_all = [] # 存放训练集准确率的数组

test_loss_all = [] # 存放测试集损失的数组

test_accur_all = [] # 存放测试集准确率的数组开始训练:

for i in range(epoch): #开始迭代

train_loss = 0 #训练集的损失初始设为0

train_num = 0.0 #

train_accuracy = 0.0 #训练集的准确率初始设为0

DenseNet1.train() #将模型设置成 训练模式

train_bar = tqdm(traindata) #用于进度条显示,没啥实际用处

for step, data in enumerate(train_bar): #开始迭代跑, enumerate这个函数不懂可以查查,将训练集分为 data是序号,data是数据

img, target = data #将data 分位 img图片,target标签

optimizer.zero_grad() # 清空历史梯度

outputs = DenseNet1(img.to(device)) # 将图片打入网络进行训练,outputs是输出的结果

loss1 = loss(outputs, target.to(device)) # 计算神经网络输出的结果outputs与图片真实标签target的差别-这就是我们通常情况下称为的损失

outputs = torch.argmax(outputs, 1) #会输出10个值,最大的值就是我们预测的结果 求最大值

loss1.backward() #神经网络反向传播

optimizer.step() #梯度优化 用上面的abam优化

train_loss += loss1.item() #将所有损失的绝对值加起来

accuracy = torch.sum(outputs == target.to(device)) #outputs == target的 即使预测正确的,统计预测正确的个数,从而计算准确率

train_accuracy = train_accuracy + accuracy #求训练集的准确率

train_num += img.size(0) #

print("epoch:{} , train-Loss:{} , train-accuracy:{}".format(i + 1, train_loss / train_num, #输出训练情况

train_accuracy / train_num))

train_loss_all.append(train_loss / train_num) #将训练的损失放到一个列表里 方便后续画图

train_accur_all.append(train_accuracy.double().item() / train_num)#训练集的准确率开始测试:

test_loss = 0 #同上 测试损失

test_accuracy = 0.0 #测试准确率

test_num = 0

DenseNet1.eval() #将模型调整为测试模型

with torch.no_grad(): #清空历史梯度,进行测试 与训练最大的区别是测试过程中取消了反向传播

test_bar = tqdm(testdata)

for data in test_bar:

img, target = data

outputs = DenseNet1(img.to(device))

loss2 = loss(outputs, target.to(device))

outputs = torch.argmax(outputs, 1)

test_loss += loss2.item()

accuracy = torch.sum(outputs == target.to(device))

test_accuracy = test_accuracy + accuracy

test_num += img.size(0)

print("test-Loss:{} , test-accuracy:{}".format(test_loss / test_num, test_accuracy / test_num))

test_loss_all.append(test_loss / test_num)

test_accur_all.append(test_accuracy.double().item() / test_num)

绘制训练集loss和accuracy图 和测试集的loss和accuracy图:

#下面的是画图过程,将上述存放的列表 画出来即可

plt.figure(figsize=(12, 4))

plt.subplot(1, 2, 1)

plt.plot(range(epoch), train_loss_all,

"ro-", label="Train loss")

plt.plot(range(epoch), test_loss_all,

"bs-", label="test loss")

plt.legend()

plt.xlabel("epoch")

plt.ylabel("Loss")

plt.subplot(1, 2, 2)

plt.plot(range(epoch), train_accur_all,

"ro-", label="Train accur")

plt.plot(range(epoch), test_accur_all,

"bs-", label="test accur")

plt.xlabel("epoch")

plt.ylabel("acc")

plt.legend()

plt.show()

torch.save(DenseNet1, "DenseNet.pth")

print("模型已保存")全部train训练代码:

import torch

import torch.nn as nn

import torch.nn.functional as F

import torch.utils.checkpoint as cp

from torch import Tensor

import torchvision.models

from matplotlib import pyplot as plt

from tqdm import tqdm

from torch.utils.data import DataLoader

from torchvision.transforms import transforms

import re

from typing import Any, List, Tuple

from collections import OrderedDict

data_transform = {

"train": transforms.Compose([transforms.RandomResizedCrop(120),

transforms.RandomHorizontalFlip(),

transforms.ToTensor(),

transforms.Normalize((0.5, 0.5, 0.5), (0.5, 0.5, 0.5))]),

"val": transforms.Compose([transforms.Resize((120, 120)), # cannot 224, must (224, 224)

transforms.ToTensor(),

transforms.Normalize((0.5, 0.5, 0.5), (0.5, 0.5, 0.5))])}

train_data = torchvision.datasets.ImageFolder(root = "./data/train" , transform = data_transform["train"])

traindata = DataLoader(dataset=train_data, batch_size=32, shuffle=True, num_workers=0) # 将训练数据以每次32张图片的形式抽出进行训练

test_data = torchvision.datasets.ImageFolder(root = "./data/val" , transform = data_transform["val"])

train_size = len(train_data) # 训练集的长度

test_size = len(test_data) # 测试集的长度

print(train_size) #输出训练集长度看一下,相当于看看有几张图片

print(test_size) #输出测试集长度看一下,相当于看看有几张图片

testdata = DataLoader(dataset=test_data, batch_size=32, shuffle=True, num_workers=0) # 将训练数据以每次32张图片的形式抽出进行测试

device = torch.device("cuda:0" if torch.cuda.is_available() else "cpu")

print("using {} device.".format(device))

class _DenseLayer(nn.Module):

def __init__(self,

input_c: int,

growth_rate: int,

bn_size: int,

drop_rate: float,

memory_efficient: bool = False):

super(_DenseLayer, self).__init__()

self.add_module("norm1", nn.BatchNorm2d(input_c))

self.add_module("relu1", nn.ReLU(inplace=True))

self.add_module("conv1", nn.Conv2d(in_channels=input_c,

out_channels=bn_size * growth_rate,

kernel_size=1,

stride=1,

bias=False))

self.add_module("norm2", nn.BatchNorm2d(bn_size * growth_rate))

self.add_module("relu2", nn.ReLU(inplace=True))

self.add_module("conv2", nn.Conv2d(bn_size * growth_rate,

growth_rate,

kernel_size=3,

stride=1,

padding=1,

bias=False))

self.drop_rate = drop_rate

self.memory_efficient = memory_efficient

def bn_function(self, inputs: List[Tensor]) -> Tensor:

concat_features = torch.cat(inputs, 1)

bottleneck_output = self.conv1(self.relu1(self.norm1(concat_features)))

return bottleneck_output

@staticmethod

def any_requires_grad(inputs: List[Tensor]) -> bool:

for tensor in inputs:

if tensor.requires_grad:

return True

return False

@torch.jit.unused

def call_checkpoint_bottleneck(self, inputs: List[Tensor]) -> Tensor:

def closure(*inp):

return self.bn_function(inp)

return cp.checkpoint(closure, *inputs)

def forward(self, inputs: Tensor) -> Tensor:

if isinstance(inputs, Tensor):

prev_features = [inputs]

else:

prev_features = inputs

if self.memory_efficient and self.any_requires_grad(prev_features):

if torch.jit.is_scripting():

raise Exception("memory efficient not supported in JIT")

bottleneck_output = self.call_checkpoint_bottleneck(prev_features)

else:

bottleneck_output = self.bn_function(prev_features)

new_features = self.conv2(self.relu2(self.norm2(bottleneck_output)))

if self.drop_rate > 0:

new_features = F.dropout(new_features,

p=self.drop_rate,

training=self.training)

return new_features

class _DenseBlock(nn.ModuleDict):

_version = 2

def __init__(self,

num_layers: int,

input_c: int,

bn_size: int,

growth_rate: int,

drop_rate: float,

memory_efficient: bool = False):

super(_DenseBlock, self).__init__()

for i in range(num_layers):

layer = _DenseLayer(input_c + i * growth_rate,

growth_rate=growth_rate,

bn_size=bn_size,

drop_rate=drop_rate,

memory_efficient=memory_efficient)

self.add_module("denselayer%d" % (i + 1), layer)

def forward(self, init_features: Tensor) -> Tensor:

features = [init_features]

for name, layer in self.items():

new_features = layer(features)

features.append(new_features)

return torch.cat(features, 1)

class _Transition(nn.Sequential):

def __init__(self,

input_c: int,

output_c: int):

super(_Transition, self).__init__()

self.add_module("norm", nn.BatchNorm2d(input_c))

self.add_module("relu", nn.ReLU(inplace=True))

self.add_module("conv", nn.Conv2d(input_c,

output_c,

kernel_size=1,

stride=1,

bias=False))

self.add_module("pool", nn.AvgPool2d(kernel_size=2, stride=2))

class DenseNet(nn.Module):

"""

Densenet-BC model class for imagenet

Args:

growth_rate (int) - how many filters to add each layer (`k` in paper)

block_config (list of 4 ints) - how many layers in each pooling block

num_init_features (int) - the number of filters to learn in the first convolution layer

bn_size (int) - multiplicative factor for number of bottle neck layers

(i.e. bn_size * k features in the bottleneck layer)

drop_rate (float) - dropout rate after each dense layer

num_classes (int) - number of classification classes

memory_efficient (bool) - If True, uses checkpointing. Much more memory efficient

"""

def __init__(self,

growth_rate: int = 32,

block_config: Tuple[int, int, int, int] = (6, 12, 24, 16),

num_init_features: int = 64,

bn_size: int = 4,

drop_rate: float = 0,

num_classes: int = 1000,

memory_efficient: bool = False):

super(DenseNet, self).__init__()

# first conv+bn+relu+pool

self.features = nn.Sequential(OrderedDict([

("conv0", nn.Conv2d(3, num_init_features, kernel_size=7, stride=2, padding=3, bias=False)),

("norm0", nn.BatchNorm2d(num_init_features)),

("relu0", nn.ReLU(inplace=True)),

("pool0", nn.MaxPool2d(kernel_size=3, stride=2, padding=1)),

]))

# each dense block

num_features = num_init_features

for i, num_layers in enumerate(block_config):

block = _DenseBlock(num_layers=num_layers,

input_c=num_features,

bn_size=bn_size,

growth_rate=growth_rate,

drop_rate=drop_rate,

memory_efficient=memory_efficient)

self.features.add_module("denseblock%d" % (i + 1), block)

num_features = num_features + num_layers * growth_rate

if i != len(block_config) - 1:

trans = _Transition(input_c=num_features,

output_c=num_features // 2)

self.features.add_module("transition%d" % (i + 1), trans)

num_features = num_features // 2

# finnal batch norm

self.features.add_module("norm5", nn.BatchNorm2d(num_features))

# fc layer

self.classifier = nn.Linear(num_features, num_classes)

# init weights

for m in self.modules():

if isinstance(m, nn.Conv2d):

nn.init.kaiming_normal_(m.weight)

elif isinstance(m, nn.BatchNorm2d):

nn.init.constant_(m.weight, 1)

nn.init.constant_(m.bias, 0)

elif isinstance(m, nn.Linear):

nn.init.constant_(m.bias, 0)

def forward(self, x: Tensor) -> Tensor:

features = self.features(x)

out = F.relu(features, inplace=True)

out = F.adaptive_avg_pool2d(out, (1, 1))

out = torch.flatten(out, 1)

out = self.classifier(out)

return out

def densenet121(**kwargs: Any) -> DenseNet:

# Top-1 error: 25.35%

# 'densenet121': 'https://download.pytorch.org/models/densenet121-a639ec97.pth'

return DenseNet(growth_rate=32,

block_config=(6, 12, 24, 16),

num_init_features=64,

**kwargs)

def densenet169(**kwargs: Any) -> DenseNet:

# Top-1 error: 24.00%

# 'densenet169': 'https://download.pytorch.org/models/densenet169-b2777c0a.pth'

return DenseNet(growth_rate=32,

block_config=(6, 12, 32, 32),

num_init_features=64,

**kwargs)

def densenet201(**kwargs: Any) -> DenseNet:

# Top-1 error: 22.80%

# 'densenet201': 'https://download.pytorch.org/models/densenet201-c1103571.pth'

return DenseNet(growth_rate=32,

block_config=(6, 12, 48, 32),

num_init_features=64,

**kwargs)

def densenet161(**kwargs: Any) -> DenseNet:

# Top-1 error: 22.35%

# 'densenet161': 'https://download.pytorch.org/models/densenet161-8d451a50.pth'

return DenseNet(growth_rate=48,

block_config=(6, 12, 36, 24),

num_init_features=96,

**kwargs)

def load_state_dict(model: nn.Module, weights_path: str) -> None:

# '.'s are no longer allowed in module names, but previous _DenseLayer

# has keys 'norm.1', 'relu.1', 'conv.1', 'norm.2', 'relu.2', 'conv.2'.

# They are also in the checkpoints in model_urls. This pattern is used

# to find such keys.

pattern = re.compile(

r'^(.*denselayer\d+\.(?:norm|relu|conv))\.((?:[12])\.(?:weight|bias|running_mean|running_var))$')

state_dict = torch.load(weights_path)

num_classes = model.classifier.out_features

load_fc = num_classes == 1000

for key in list(state_dict.keys()):

if load_fc is False:

if "classifier" in key:

del state_dict[key]

res = pattern.match(key)

if res:

new_key = res.group(1) + res.group(2)

state_dict[new_key] = state_dict[key]

del state_dict[key]

model.load_state_dict(state_dict, strict=load_fc)

print("successfully load pretrain-weights.")

DenseNet1 = DenseNet(num_classes = 2) #将模型命名为Densenet

DenseNet1.to(device)

print(DenseNet1.to(device)) #输出模型结构

test1 = torch.ones(64, 3, 120, 120) # 测试一下输出的形状大小 输入一个64,3,120,120的向量

test1 = DenseNet1(test1.to(device)) #将向量打入神经网络进行测试

print(test1.shape) #查看输出的结果

epoch = 50 # 迭代次数即训练次数

learning = 0.001 # 学习率

optimizer = torch.optim.Adam(DenseNet1.parameters(), lr=learning) # 使用Adam优化器-写论文的话可以具体查一下这个优化器的原理

loss = nn.CrossEntropyLoss() # 损失计算方式,交叉熵损失函数

train_loss_all = [] # 存放训练集损失的数组

train_accur_all = [] # 存放训练集准确率的数组

test_loss_all = [] # 存放测试集损失的数组

test_accur_all = [] # 存放测试集准确率的数组

for i in range(epoch): #开始迭代

train_loss = 0 #训练集的损失初始设为0

train_num = 0.0 #

train_accuracy = 0.0 #训练集的准确率初始设为0

DenseNet1.train() #将模型设置成 训练模式

train_bar = tqdm(traindata) #用于进度条显示,没啥实际用处

for step, data in enumerate(train_bar): #开始迭代跑, enumerate这个函数不懂可以查查,将训练集分为 data是序号,data是数据

img, target = data #将data 分位 img图片,target标签

optimizer.zero_grad() # 清空历史梯度

outputs = DenseNet1(img.to(device)) # 将图片打入网络进行训练,outputs是输出的结果

loss1 = loss(outputs, target.to(device)) # 计算神经网络输出的结果outputs与图片真实标签target的差别-这就是我们通常情况下称为的损失

outputs = torch.argmax(outputs, 1) #会输出10个值,最大的值就是我们预测的结果 求最大值

loss1.backward() #神经网络反向传播

optimizer.step() #梯度优化 用上面的abam优化

train_loss += loss1.item() #将所有损失的绝对值加起来

accuracy = torch.sum(outputs == target.to(device)) #outputs == target的 即使预测正确的,统计预测正确的个数,从而计算准确率

train_accuracy = train_accuracy + accuracy #求训练集的准确率

train_num += img.size(0) #

print("epoch:{} , train-Loss:{} , train-accuracy:{}".format(i + 1, train_loss / train_num, #输出训练情况

train_accuracy / train_num))

train_loss_all.append(train_loss / train_num) #将训练的损失放到一个列表里 方便后续画图

train_accur_all.append(train_accuracy.double().item() / train_num)#训练集的准确率

test_loss = 0 #同上 测试损失

test_accuracy = 0.0 #测试准确率

test_num = 0

DenseNet1.eval() #将模型调整为测试模型

with torch.no_grad(): #清空历史梯度,进行测试 与训练最大的区别是测试过程中取消了反向传播

test_bar = tqdm(testdata)

for data in test_bar:

img, target = data

outputs = DenseNet1(img.to(device))

loss2 = loss(outputs, target.to(device))

outputs = torch.argmax(outputs, 1)

test_loss += loss2.item()

accuracy = torch.sum(outputs == target.to(device))

test_accuracy = test_accuracy + accuracy

test_num += img.size(0)

print("test-Loss:{} , test-accuracy:{}".format(test_loss / test_num, test_accuracy / test_num))

test_loss_all.append(test_loss / test_num)

test_accur_all.append(test_accuracy.double().item() / test_num)

#下面的是画图过程,将上述存放的列表 画出来即可

plt.figure(figsize=(12, 4))

plt.subplot(1, 2, 1)

plt.plot(range(epoch), train_loss_all,

"ro-", label="Train loss")

plt.plot(range(epoch), test_loss_all,

"bs-", label="test loss")

plt.legend()

plt.xlabel("epoch")

plt.ylabel("Loss")

plt.subplot(1, 2, 2)

plt.plot(range(epoch), train_accur_all,

"ro-", label="Train accur")

plt.plot(range(epoch), test_accur_all,

"bs-", label="test accur")

plt.xlabel("epoch")

plt.ylabel("acc")

plt.legend()

plt.show()

torch.save(DenseNet1, "DenseNet.pth")

print("模型已保存")

全部predict代码:

import torch

from PIL import Image

import torch.nn as nn

from torchvision.transforms import transforms

import re

from typing import Any, List, Tuple

from collections import OrderedDict

import torch.nn.functional as F

import torch.utils.checkpoint as cp

from torch import Tensor

image_path = "1.jpg"#相对路径 导入图片

trans = transforms.Compose([transforms.Resize((120 , 120)),

transforms.ToTensor()]) #将图片缩放为跟训练集图片的大小一样 方便预测,且将图片转换为张量

image = Image.open(image_path) #打开图片

print(image) #输出图片 看看图片格式

image = image.convert("RGB") #将图片转换为RGB格式

image = trans(image) #上述的缩放和转张量操作在这里实现

print(image) #查看转换后的样子

image = torch.unsqueeze(image, dim=0) #将图片维度扩展一维

classes = ["恶性" , "良性" ] #预测种类

class _DenseLayer(nn.Module):

def __init__(self,

input_c: int,

growth_rate: int,

bn_size: int,

drop_rate: float,

memory_efficient: bool = False):

super(_DenseLayer, self).__init__()

self.add_module("norm1", nn.BatchNorm2d(input_c))

self.add_module("relu1", nn.ReLU(inplace=True))

self.add_module("conv1", nn.Conv2d(in_channels=input_c,

out_channels=bn_size * growth_rate,

kernel_size=1,

stride=1,

bias=False))

self.add_module("norm2", nn.BatchNorm2d(bn_size * growth_rate))

self.add_module("relu2", nn.ReLU(inplace=True))

self.add_module("conv2", nn.Conv2d(bn_size * growth_rate,

growth_rate,

kernel_size=3,

stride=1,

padding=1,

bias=False))

self.drop_rate = drop_rate

self.memory_efficient = memory_efficient

def bn_function(self, inputs: List[Tensor]) -> Tensor:

concat_features = torch.cat(inputs, 1)

bottleneck_output = self.conv1(self.relu1(self.norm1(concat_features)))

return bottleneck_output

@staticmethod

def any_requires_grad(inputs: List[Tensor]) -> bool:

for tensor in inputs:

if tensor.requires_grad:

return True

return False

@torch.jit.unused

def call_checkpoint_bottleneck(self, inputs: List[Tensor]) -> Tensor:

def closure(*inp):

return self.bn_function(inp)

return cp.checkpoint(closure, *inputs)

def forward(self, inputs: Tensor) -> Tensor:

if isinstance(inputs, Tensor):

prev_features = [inputs]

else:

prev_features = inputs

if self.memory_efficient and self.any_requires_grad(prev_features):

if torch.jit.is_scripting():

raise Exception("memory efficient not supported in JIT")

bottleneck_output = self.call_checkpoint_bottleneck(prev_features)

else:

bottleneck_output = self.bn_function(prev_features)

new_features = self.conv2(self.relu2(self.norm2(bottleneck_output)))

if self.drop_rate > 0:

new_features = F.dropout(new_features,

p=self.drop_rate,

training=self.training)

return new_features

class _DenseBlock(nn.ModuleDict):

_version = 2

def __init__(self,

num_layers: int,

input_c: int,

bn_size: int,

growth_rate: int,

drop_rate: float,

memory_efficient: bool = False):

super(_DenseBlock, self).__init__()

for i in range(num_layers):

layer = _DenseLayer(input_c + i * growth_rate,

growth_rate=growth_rate,

bn_size=bn_size,

drop_rate=drop_rate,

memory_efficient=memory_efficient)

self.add_module("denselayer%d" % (i + 1), layer)

def forward(self, init_features: Tensor) -> Tensor:

features = [init_features]

for name, layer in self.items():

new_features = layer(features)

features.append(new_features)

return torch.cat(features, 1)

class _Transition(nn.Sequential):

def __init__(self,

input_c: int,

output_c: int):

super(_Transition, self).__init__()

self.add_module("norm", nn.BatchNorm2d(input_c))

self.add_module("relu", nn.ReLU(inplace=True))

self.add_module("conv", nn.Conv2d(input_c,

output_c,

kernel_size=1,

stride=1,

bias=False))

self.add_module("pool", nn.AvgPool2d(kernel_size=2, stride=2))

class DenseNet(nn.Module):

"""

Densenet-BC model class for imagenet

Args:

growth_rate (int) - how many filters to add each layer (`k` in paper)

block_config (list of 4 ints) - how many layers in each pooling block

num_init_features (int) - the number of filters to learn in the first convolution layer

bn_size (int) - multiplicative factor for number of bottle neck layers

(i.e. bn_size * k features in the bottleneck layer)

drop_rate (float) - dropout rate after each dense layer

num_classes (int) - number of classification classes

memory_efficient (bool) - If True, uses checkpointing. Much more memory efficient

"""

def __init__(self,

growth_rate: int = 32,

block_config: Tuple[int, int, int, int] = (6, 12, 24, 16),

num_init_features: int = 64,

bn_size: int = 4,

drop_rate: float = 0,

num_classes: int = 1000,

memory_efficient: bool = False):

super(DenseNet, self).__init__()

# first conv+bn+relu+pool

self.features = nn.Sequential(OrderedDict([

("conv0", nn.Conv2d(3, num_init_features, kernel_size=7, stride=2, padding=3, bias=False)),

("norm0", nn.BatchNorm2d(num_init_features)),

("relu0", nn.ReLU(inplace=True)),

("pool0", nn.MaxPool2d(kernel_size=3, stride=2, padding=1)),

]))

# each dense block

num_features = num_init_features

for i, num_layers in enumerate(block_config):

block = _DenseBlock(num_layers=num_layers,

input_c=num_features,

bn_size=bn_size,

growth_rate=growth_rate,

drop_rate=drop_rate,

memory_efficient=memory_efficient)

self.features.add_module("denseblock%d" % (i + 1), block)

num_features = num_features + num_layers * growth_rate

if i != len(block_config) - 1:

trans = _Transition(input_c=num_features,

output_c=num_features // 2)

self.features.add_module("transition%d" % (i + 1), trans)

num_features = num_features // 2

# finnal batch norm

self.features.add_module("norm5", nn.BatchNorm2d(num_features))

# fc layer

self.classifier = nn.Linear(num_features, num_classes)

# init weights

for m in self.modules():

if isinstance(m, nn.Conv2d):

nn.init.kaiming_normal_(m.weight)

elif isinstance(m, nn.BatchNorm2d):

nn.init.constant_(m.weight, 1)

nn.init.constant_(m.bias, 0)

elif isinstance(m, nn.Linear):

nn.init.constant_(m.bias, 0)

def forward(self, x: Tensor) -> Tensor:

features = self.features(x)

out = F.relu(features, inplace=True)

out = F.adaptive_avg_pool2d(out, (1, 1))

out = torch.flatten(out, 1)

out = self.classifier(out)

return out

def densenet121(**kwargs: Any) -> DenseNet:

# Top-1 error: 25.35%

# 'densenet121': 'https://download.pytorch.org/models/densenet121-a639ec97.pth'

return DenseNet(growth_rate=32,

block_config=(6, 12, 24, 16),

num_init_features=64,

**kwargs)

def densenet169(**kwargs: Any) -> DenseNet:

# Top-1 error: 24.00%

# 'densenet169': 'https://download.pytorch.org/models/densenet169-b2777c0a.pth'

return DenseNet(growth_rate=32,

block_config=(6, 12, 32, 32),

num_init_features=64,

**kwargs)

def densenet201(**kwargs: Any) -> DenseNet:

# Top-1 error: 22.80%

# 'densenet201': 'https://download.pytorch.org/models/densenet201-c1103571.pth'

return DenseNet(growth_rate=32,

block_config=(6, 12, 48, 32),

num_init_features=64,

**kwargs)

def densenet161(**kwargs: Any) -> DenseNet:

# Top-1 error: 22.35%

# 'densenet161': 'https://download.pytorch.org/models/densenet161-8d451a50.pth'

return DenseNet(growth_rate=48,

block_config=(6, 12, 36, 24),

num_init_features=96,

**kwargs)

def load_state_dict(model: nn.Module, weights_path: str) -> None:

# '.'s are no longer allowed in module names, but previous _DenseLayer

# has keys 'norm.1', 'relu.1', 'conv.1', 'norm.2', 'relu.2', 'conv.2'.

# They are also in the checkpoints in model_urls. This pattern is used

# to find such keys.

pattern = re.compile(

r'^(.*denselayer\d+\.(?:norm|relu|conv))\.((?:[12])\.(?:weight|bias|running_mean|running_var))$')

state_dict = torch.load(weights_path)

num_classes = model.classifier.out_features

load_fc = num_classes == 1000

for key in list(state_dict.keys()):

if load_fc is False:

if "classifier" in key:

del state_dict[key]

res = pattern.match(key)

if res:

new_key = res.group(1) + res.group(2)

state_dict[new_key] = state_dict[key]

del state_dict[key]

model.load_state_dict(state_dict, strict=load_fc)

print("successfully load pretrain-weights.")

device = torch.device("cuda:0" if torch.cuda.is_available() else "cpu") #将代码放入GPU进行训练

print("using {} device.".format(device))

DenseNet1 = DenseNet(num_classes = 2) #将模型命名为DenseNet1

DenseNet1.to(device)

print(DenseNet1.to(device)) #输出模型结构

#以上是神经网络结构,因为读取了模型之后代码还得知道神经网络的结构才能进行预测

model = torch.load("Densenet.pth") #读取模型

model.eval() #关闭梯度,将模型调整为测试模式

with torch.no_grad(): #梯度清零

outputs = model(image.to(device)) #将图片打入神经网络进行测试

print(model) #输出模型结构

print(outputs) #输出预测的张量数组

ans = (outputs.argmax(1)).item() #最大的值即为预测结果,找出最大值在数组中的序号,

# 对应找其在种类中的序号即可然后输出即为其种类

print(classes[ans])链接:https://pan.baidu.com/s/1iufeKWuOGaSfdjdk3SxNKQ

提取码:yw78

有用的话麻烦点一下关注,博主后续会开源更多代码,非常感谢支持!