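Training yolov4-tiny with darknet on Win7 and testing the trained model with OpenCV

This post is mainly a note for my own future reference; if it helps others too, all the better.

Let's get started.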

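I. Training environment

1. darknet compiled on Windows (I won't go into the build itself; there are plenty of guides online). Source: https://github.com/AlexeyAB/darknet

2. OpenCV is optional but recommended: with it, darknet can display the training progress.

3. A GPU (nothing more to say here).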

II. Dataset

The required directory structure is:

```
darknet
└── x64
    └── data
        └── VOCdevkit
            └── VOC2007
                ├── Annotations   (XML annotation files for the images)
                ├── JPEGImages    (the images)
                └── ImageSets
                    └── Main
```

**This is the default, classic VOC layout.**
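If you are starting from an empty darknet checkout, the folder skeleton above can be created with a few lines of Python. This is a minimal sketch; the function name `create_voc_layout` is hypothetical, and it only creates the empty folders shown in the tree.

```python
import os


def create_voc_layout(darknet_root):
    """Create darknet/x64/data/VOCdevkit/VOC2007 with its standard subfolders.

    Returns the path of the VOC2007 folder. Existing folders are left untouched.
    """
    voc = os.path.join(darknet_root, 'x64', 'data', 'VOCdevkit', 'VOC2007')
    for sub in ('Annotations', 'JPEGImages', os.path.join('ImageSets', 'Main')):
        os.makedirs(os.path.join(voc, sub), exist_ok=True)
    return voc

# Example (hypothetical path): create_voc_layout(r'C:\darknet')
```

Using `exist_ok=True` makes the function safe to rerun after images and annotations have already been copied in.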

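Prepare the images to train on and their annotation files (YOLO needs txt annotations). If your annotations are XML files, convert them to txt as follows:

1. Split the images into training, validation and test sets

Run the script below from darknet\x64\data\VOCdevkit\VOC2007; the resulting lists are written to darknet\x64\data\VOCdevkit\VOC2007\ImageSets\Main.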

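```python
# Run this script from darknet\x64\data\VOCdevkit\VOC2007.
# Resulting split: about 90% train, 9% test, 1% val.
import os
import random

held_out_percent = 0.1  # share of all images held out for test + val
test_percent = 0.9      # share of the held-out images that go to test (the rest go to val)

xmlfilepath = 'Annotations'
txtsavepath = os.path.join('ImageSets', 'Main')
os.makedirs(txtsavepath, exist_ok=True)

total_xml = [f for f in os.listdir(xmlfilepath) if f.endswith('.xml')]
num = len(total_xml)
indices = range(num)
tv = int(num * held_out_percent)
te = int(tv * test_percent)
held_out = random.sample(indices, tv)    # images for test + val
test = set(random.sample(held_out, te))  # subset of held_out used for test
held_out = set(held_out)                 # sets make the membership tests fast

with open(os.path.join(txtsavepath, 'trainval.txt'), 'w') as ftrainval, \
        open(os.path.join(txtsavepath, 'train.txt'), 'w') as ftrain, \
        open(os.path.join(txtsavepath, 'val.txt'), 'w') as fval, \
        open(os.path.join(txtsavepath, 'test.txt'), 'w') as ftest:
    for i in indices:
        name = os.path.splitext(total_xml[i])[0] + '\n'  # file name without .xml
        if i in test:
            ftest.write(name)
        elif i in held_out:
            fval.write(name)
            ftrainval.write(name)  # trainval = train + val, as in the VOC convention
        else:
            ftrain.write(name)
            ftrainval.write(name)
```

2. Convert the XML annotations of each split to txt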

```python
# Run this script from darknet\x64\data\VOCdevkit.
import os
import xml.etree.ElementTree as ET

sets = [('2007', 'train'), ('2007', 'val'), ('2007', 'test')]
classes = ["yes_mask", "no_mask"]  # change to the labels of your own dataset


def convert(size, box):
    """Convert a VOC box (xmin, xmax, ymin, ymax) in pixels to YOLO's
    normalized (x_center, y_center, width, height)."""
    dw = 1. / size[0]
    dh = 1. / size[1]
    x = (box[0] + box[1]) / 2.0
    y = (box[2] + box[3]) / 2.0
    w = box[1] - box[0]
    h = box[3] - box[2]
    return (x * dw, y * dh, w * dw, h * dh)


def convert_annotation(year, image_id):
    root = ET.parse('VOC%s/Annotations/%s.xml' % (year, image_id)).getroot()
    size = root.find('size')
    w = int(size.find('width').text)
    h = int(size.find('height').text)
    with open('VOC%s/labels/%s.txt' % (year, image_id), 'w') as out_file:
        for obj in root.iter('object'):
            difficult = obj.find('difficult')  # some tools omit this tag
            difficult = int(difficult.text) if difficult is not None else 0
            cls = obj.find('name').text
            if cls not in classes or difficult == 1:
                continue
            cls_id = classes.index(cls)
            xmlbox = obj.find('bndbox')
            b = (float(xmlbox.find('xmin').text), float(xmlbox.find('xmax').text),
                 float(xmlbox.find('ymin').text), float(xmlbox.find('ymax').text))
            bb = convert((w, h), b)
            out_file.write(str(cls_id) + " " + " ".join(str(a) for a in bb) + '\n')


wd = os.getcwd()
for year, image_set in sets:
    os.makedirs('VOC%s/labels/' % year, exist_ok=True)  # mind the path
    with open('VOC%s/ImageSets/Main/%s.txt' % (year, image_set)) as f:
        image_ids = f.read().strip().split()
    # one list per split (2007_train.txt, ...) with the absolute path of every image
    with open('%s_%s.txt' % (year, image_set), 'w') as list_file:
        for image_id in image_ids:
            list_file.write('%s/VOC%s/JPEGImages/%s.jpg\n' % (wd, year, image_id))
            convert_annotation(year, image_id)
```

When it finishes, a labels folder is created in darknet\x64\data\VOCdevkit\VOC2007 containing the corresponding txt annotation files. The script also writes 2007_train.txt, 2007_val.txt and 2007_test.txt, which list the absolute image paths, to darknet\x64\data\VOCdevkit.
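A quick way to check that the conversion kept the objects you expect is to count objects per class across the generated label files. This is a small sketch; `count_labels` is a hypothetical helper, and `class_names` must be in the same order as the `classes` list in the conversion script.

```python
import glob
import os
from collections import Counter


def count_labels(labels_dir, class_names):
    """Count annotated objects per class across all YOLO .txt label files."""
    counts = Counter()
    for path in glob.glob(os.path.join(labels_dir, '*.txt')):
        with open(path) as f:
            for line in f:
                parts = line.split()
                if parts:  # skip blank lines / empty files
                    counts[class_names[int(parts[0])]] += 1
    return counts

# Example, run from darknet\x64\data\VOCdevkit:
# print(count_labels('VOC2007/labels', ["yes_mask", "no_mask"]))
```

A class with a count of zero usually means its name in the XML files does not exactly match the entry in `classes`.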

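Each txt file has one line per object in a fixed format: class ID, box center x, box center y, box width, box height. The four coordinates are normalized by the image width and height, so they lie between 0 and 1. For example: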

```
1 0.243 0.38109756097560976 0.074 0.1402439024390244
1 0.387 0.3704268292682927 0.062 0.10670731707317073
1 0.964 0.3628048780487805 0.044 0.07317073170731708
```

Important: finally, copy all txt files from labels into darknet\x64\data\VOCdevkit\VOC2007\JPEGImages, so that every image sits next to its txt annotation file.
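The copy step can also be scripted, which makes it easy to spot images that ended up without a label file. A minimal sketch, assuming `.jpg` images and the VOC2007 layout above; `copy_labels_to_images` is a hypothetical name.

```python
import glob
import os
import shutil


def copy_labels_to_images(voc_dir):
    """Copy every labels/*.txt into JPEGImages and return the names (without
    extension) of .jpg images that still have no label file next to them."""
    labels_dir = os.path.join(voc_dir, 'labels')
    images_dir = os.path.join(voc_dir, 'JPEGImages')
    for txt in glob.glob(os.path.join(labels_dir, '*.txt')):
        shutil.copy(txt, images_dir)
    missing = []
    for img in glob.glob(os.path.join(images_dir, '*.jpg')):
        stem = os.path.splitext(img)[0]
        if not os.path.exists(stem + '.txt'):
            missing.append(os.path.basename(stem))
    return sorted(missing)

# Example (run from darknet\x64\data\VOCdevkit): print(copy_labels_to_images('VOC2007'))
```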

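III. Preparing the training files and the pretrained model

1. Download the pretrained model

The pretrained weights are linked from the source repository; download the ones that match your model (for yolov4-tiny, the README provides yolov4-tiny.conv.29).

After downloading, put them in darknet\x64\weights to keep things organized.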

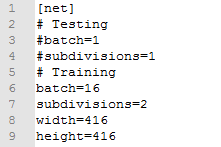

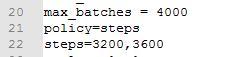

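2. Create and modify the cfg file

The cfg file describes the model structure and has to be adapted to your data, hardware and training setup. Taking yolov4-tiny.cfg as an example, make a copy named yolov4-tiny-mask.cfg.

Change the following in yolov4-tiny-mask.cfg:

- The input size (width and height) defaults to 416 and can be set to any multiple of 32. Choose batch and subdivisions to suit your machine; on a powerful machine batch can be 64. Each GPU pass processes batch/subdivisions images, so increase subdivisions if you run out of GPU memory.

- Set the maximum number of iterations, max_batches, to the number of classes × 2000, and set steps to 80% and 90% of max_batches. (You can also leave this part unchanged.)

- Set classes in every [yolo] layer, and set filters in the convolutional layer just before each [yolo] layer to filters = (classes + 5) × 3. Each [yolo] layer uses 3 anchors, and each anchor predicts 4 box coordinates, 1 objectness score and one score per class; with the 2 classes of this mask dataset, filters = (2 + 5) × 3 = 21.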
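The edits in the list above are mechanical, so they can be applied to the cfg text with a short script. This is a sketch rather than a darknet tool: `patch_yolo_cfg` is a hypothetical helper that assumes the usual cfg layout of `[section]` headers followed by `key=value` lines. It leaves batch and subdivisions alone, since those depend on your GPU.

```python
def patch_yolo_cfg(text, classes, size=416, max_batches=None):
    """Return cfg text with width/height, max_batches/steps, every [yolo]
    classes=, and the filters= of the [convolutional] before each [yolo] set."""
    if size % 32 != 0:
        raise ValueError("width/height must be a multiple of 32")
    if max_batches is None:
        max_batches = classes * 2000  # rule of thumb used in this post
    steps = "%d,%d" % (max_batches * 8 // 10, max_batches * 9 // 10)  # 80% and 90%
    filters = (classes + 5) * 3       # 3 anchors x (4 box + 1 objectness + classes)

    # Split into sections; each section list starts with its [header] line.
    sections = [[]]
    for line in text.splitlines():
        if line.strip().startswith('['):
            sections.append([line])
        else:
            sections[-1].append(line)

    def header(sec):
        return sec[0].strip().lower() if sec else ''

    def set_key(sec, key, value):
        # Replace "key=..." (spaces around '=' allowed) or append it if missing.
        for i, line in enumerate(sec):
            if '=' in line and line.split('=', 1)[0].strip() == key:
                sec[i] = '%s=%s' % (key, value)
                return
        sec.append('%s=%s' % (key, value))

    for k, sec in enumerate(sections):
        if header(sec) == '[net]':
            for key, value in (('width', size), ('height', size),
                               ('max_batches', max_batches), ('steps', steps)):
                set_key(sec, key, value)
        elif header(sec) == '[yolo]':
            set_key(sec, 'classes', classes)
            if header(sections[k - 1]) == '[convolutional]':
                set_key(sections[k - 1], 'filters', filters)

    return '\n'.join(line for sec in sections for line in sec) + '\n'

# Example (hypothetical paths):
# with open('cfg/yolov4-tiny.cfg') as f:
#     patched = patch_yolo_cfg(f.read(), classes=2)
# with open('cfg/yolov4-tiny-mask.cfg', 'w') as f:
#     f.write(patched)
```

Only the convolutional layer directly in front of a [yolo] layer is touched; all other `filters=` lines keep their values.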

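3. Create the .data and .names files

I created a separate training directory, darknet\x64\data\mask.

2007_train.txt and 2007_test.txt were copied there from darknet\x64\data\VOCdevkit, so the files for this run are easy to tell apart.

Contents of the .data file:

Contents of the .names file: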

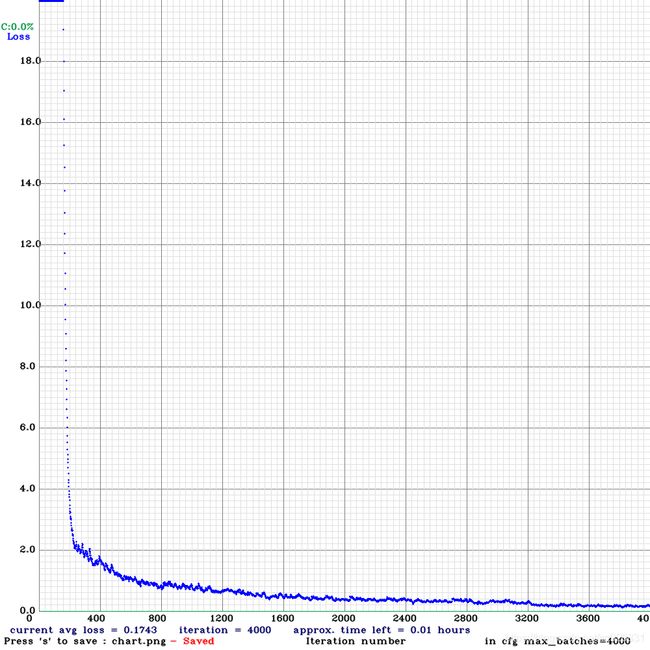
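Both files are plain text: the .names file holds one class name per line, and the .data file uses the keys `classes`, `train`, `valid`, `names` and `backup`. The sketch below writes them from Python. The helper name and the example paths are hypothetical (relative paths resolve against darknet\x64, where darknet.exe is run), and the class order must match the `classes` list used when converting the XML files.

```python
import os


def write_data_files(out_dir, name, class_names, train_list, valid_list, backup_dir):
    """Write <name>.names and <name>.data into out_dir; return both paths."""
    os.makedirs(out_dir, exist_ok=True)
    names_path = os.path.join(out_dir, name + '.names')
    data_path = os.path.join(out_dir, name + '.data')
    with open(names_path, 'w') as f:
        f.write('\n'.join(class_names) + '\n')  # line i = class id i
    with open(data_path, 'w') as f:
        f.write('classes = %d\n' % len(class_names))
        f.write('train = %s\n' % train_list)
        f.write('valid = %s\n' % valid_list)
        f.write('names = %s\n' % names_path.replace('\\', '/'))
        f.write('backup = %s\n' % backup_dir)
    return data_path, names_path

# Example, run from darknet\x64 (hypothetical paths):
# write_data_files('data/mask', 'mask', ["yes_mask", "no_mask"],
#                  'data/mask/2007_train.txt', 'data/mask/2007_test.txt', 'backupv4/')
```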

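IV. Training

Open cmd in the darknet\x64 directory and run the training command.

If darknet was built with OpenCV, a window shows the training progress (the loss curve); append -dont_show to the command to turn it off.

During training, darknet also saves the model periodically to the backupv4 directory. When training is finished, you only need to copy yolov4-tiny-mask_final.weights, yolov4-tiny-mask.cfg and mask.names.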
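darknet's training mode is invoked as `darknet.exe detector train <data file> <cfg file> <pretrained weights>`. If you prefer to launch it from Python, a thin wrapper could look like the sketch below; the helper names and all paths in the example are hypothetical, and only the `-dont_show` flag mentioned above is used.

```python
import subprocess


def build_train_command(data_file, cfg_file, pretrained_weights,
                        show=True, exe='darknet.exe'):
    """Return the argument list for darknet's 'detector train' mode."""
    cmd = [exe, 'detector', 'train', data_file, cfg_file, pretrained_weights]
    if not show:
        cmd.append('-dont_show')  # no loss-chart window
    return cmd


def run_training(cmd, darknet_x64_dir):
    # Run inside darknet\x64 so the relative paths in the .data file resolve.
    return subprocess.run(cmd, cwd=darknet_x64_dir, check=True)

# Example (hypothetical paths, relative to darknet\x64):
# cmd = build_train_command('data/mask/mask.data', 'cfg/yolov4-tiny-mask.cfg',
#                           'weights/yolov4-tiny.conv.29', show=False)
# run_training(cmd, r'C:\darknet\x64')
```

Passing the arguments as a list avoids quoting problems with Windows paths that contain spaces.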

V. Running the yolov4 model with OpenCV

The script that loads the model files is below (YOLOv4 requires OpenCV 4.4.0 or later):

```python
import os
import random

import cv2
import numpy as np

weights_path = 'models/yolov4-tiny-mask.weights'  # trained weights (e.g. yolov4-tiny-mask_final.weights)
cfg_path = 'models/yolov4-tiny-mask.cfg'          # model configuration file
labels_path = 'models/mask.names'                 # class label file

# Initialize parameters
with open(labels_path) as f:
    LABELS = f.read().strip().split("\n")
boxes = []
confidences = []
classIDs = []
color_list = [[random.randint(0, 255) for _ in range(3)] for _ in LABELS]  # one BGR color per class

# Build the network from the config and the trained weights
net = cv2.dnn.readNetFromDarknet(cfg_path, weights_path)

# Read the image to run detection on
image = cv2.imread(os.path.join("img", "1.jpg"))
if image is None:
    raise FileNotFoundError("could not read img/1.jpg")
(H, W) = image.shape[:2]  # image height and width

# Names of the YOLO output layers (yolov4-tiny has two [yolo] layers).
# getUnconnectedOutLayersNames() avoids indexing with i[0], which fails on
# OpenCV >= 4.5.4 where getUnconnectedOutLayers() returns a flat array.
ln = net.getUnconnectedOutLayersNames()

# Build a blob: scale pixels to [0, 1], resize to the network input size
# (must match width/height in the cfg) and convert BGR to RGB
blob = cv2.dnn.blobFromImage(image, 1 / 255.0, (416, 416), swapRB=True, crop=False)
net.setInput(blob)
layerOutputs = net.forward(ln)  # forward pass: one array per output layer

for output in layerOutputs:       # loop over the output layers
    for detection in output:      # loop over every candidate box
        # detection = [center_x, center_y, w, h, objectness, class scores...]
        scores = detection[5:]
        classID = int(np.argmax(scores))  # index of the highest class score
        confidence = float(scores[classID])
        if confidence > 0.5:              # drop low-confidence detections
            # box values are relative to the image size
            box = detection[0:4] * np.array([W, H, W, H])
            (centerX, centerY, width, height) = box.astype("int")
            x = int(centerX - width / 2)   # top-left corner of the box
            y = int(centerY - height / 2)
            boxes.append([x, y, int(width), int(height)])
            confidences.append(confidence)
            classIDs.append(classID)

# Non-maximum suppression: score threshold 0.2, IoU threshold 0.3
idxs = cv2.dnn.NMSBoxes(boxes, confidences, 0.2, 0.3)
for seq in np.array(idxs).flatten():  # empty when nothing was detected
    (x, y) = (boxes[seq][0], boxes[seq][1])  # top-left corner
    (w, h) = (boxes[seq][2], boxes[seq][3])  # width and height
    color = color_list[classIDs[seq]]  # or fixed colors, e.g. [0, 0, 255] for class 0, [0, 255, 0] otherwise
    cv2.rectangle(image, (x, y), (x + w, y + h), color, 2)  # draw the box
    text = "{}: {:.4f}".format(LABELS[classIDs[seq]], confidences[seq])
    cv2.putText(image, text, (x, y - 5), cv2.FONT_HERSHEY_SIMPLEX, 0.8, color, 2)  # draw the label

cv2.namedWindow('Image', cv2.WINDOW_AUTOSIZE)
cv2.imshow("Image", image)
cv2.waitKey(0)
```

yolov3-tiny vs. yolov4-tiny comparison (4000 iterations)
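The filtering loop in the section V script can be pulled out into a pure NumPy function, which makes it testable without a model file and vectorizes the per-row work. This is a sketch under the same row-layout assumption as that script, `[center_x, center_y, w, h, objectness, class scores...]`; `decode_detections` is a hypothetical name, and its output can be passed straight to `cv2.dnn.NMSBoxes`.

```python
import numpy as np


def decode_detections(layer_outputs, width, height, conf_threshold=0.5):
    """Turn raw YOLO output rows into pixel boxes [x, y, w, h] (top-left corner),
    confidences and class ids, keeping rows whose best class score > threshold."""
    boxes, confidences, class_ids = [], [], []
    scale = np.array([width, height, width, height])
    for output in layer_outputs:
        output = np.asarray(output)
        if output.size == 0:
            continue
        scores = output[:, 5:]
        best = scores.argmax(axis=1)                       # best class per row
        best_scores = scores[np.arange(len(output)), best]
        keep = best_scores > conf_threshold
        for row, cls, conf in zip(output[keep], best[keep], best_scores[keep]):
            cx, cy, w, h = (row[:4] * scale).astype(int)
            boxes.append([int(cx - w / 2), int(cy - h / 2), int(w), int(h)])
            confidences.append(float(conf))
            class_ids.append(int(cls))
    return boxes, confidences, class_ids

# Usage inside the script: boxes, confidences, classIDs = decode_detections(layerOutputs, W, H)
```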

Note: if any image here infringes copyright, please contact me and I will remove it. Thanks.