import tensorflow as tf

import matplotlib.pyplot as plt

%matplotlib inline

import numpy as np

import pathlib

Data loading and preprocessing

data_dir = "./2_class"

data_root = pathlib.Path(data_dir)

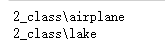

for item in data_root.iterdir():
    print(item)

all_image_path = list(data_root.glob("*/*"))

len(all_image_path)

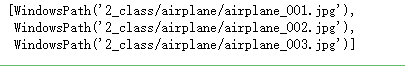

all_image_path[:3]

all_image_path = [str(path) for path in all_image_path]

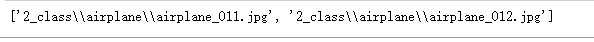

all_image_path[10:12]

import random

random.shuffle(all_image_path)

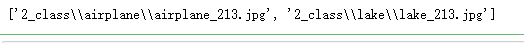

all_image_path[10:12]

image_count = len(all_image_path)

image_count

label_names = sorted(item.name for item in data_root.glob("*/"))

label_names

label_to_index = dict((name,index) for index,name in enumerate(label_names))

label_to_index

all_image_path[:3]

pathlib.Path("2_class\\lake\\lake_405.jpg").parent.name

all_image_label = [label_to_index[pathlib.Path(p).parent.name] for p in all_image_path]

all_image_label[:5]

all_image_path[:5]

import IPython.display as display

index_to_label = dict((v,k) for k,v in label_to_index.items())

index_to_label
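
The label mapping above can be tried out without the real dataset. The following is a minimal sketch under stated assumptions: it builds a throwaway directory tree in a temporary folder (the class name `airplane` and all file names are placeholders invented for the demo; only `lake` appears in the paths above) and derives integer labels from the parent directory name in the same way.

```python
import pathlib
import random
import tempfile

# Build a small throwaway tree: <root>/<class_name>/<file>.jpg
# The class and file names are placeholders for this demo only.
tmp = tempfile.TemporaryDirectory()
demo_root = pathlib.Path(tmp.name)
for class_name in ("lake", "airplane"):
    (demo_root / class_name).mkdir()
    for i in range(3):
        (demo_root / class_name / f"{class_name}_{i}.jpg").touch()

# Collect the file paths and shuffle them (seeded only to make the demo repeatable).
demo_paths = [str(p) for p in demo_root.glob("*/*")]
random.Random(0).shuffle(demo_paths)

# Sorted directory names give a stable name -> index mapping.
demo_label_names = sorted(p.name for p in demo_root.iterdir() if p.is_dir())
demo_label_to_index = {name: index for index, name in enumerate(demo_label_names)}
demo_index_to_label = {index: name for name, index in demo_label_to_index.items()}

# Labels are derived from each path, so they stay aligned with the shuffled order.
demo_labels = [demo_label_to_index[pathlib.Path(p).parent.name] for p in demo_paths]

print(demo_label_to_index)
tmp.cleanup()
```

Because the labels are computed from the already shuffled path list, each label always matches the image at the same position, whatever order the shuffle produced.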

Reading and decoding images

for n in range(3):
    image_index = random.choice(range(len(all_image_path)))
    display.display(display.Image(all_image_path[image_index]))
    print(index_to_label[all_image_label[image_index]])
    print()

img_path = all_image_path[0]

img_path

img_raw = tf.io.read_file(img_path)

img_raw

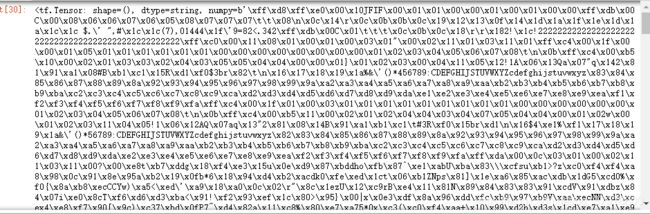

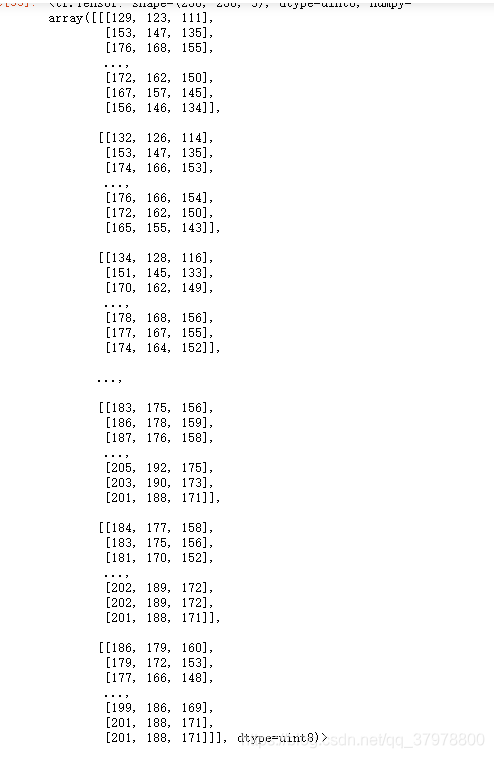

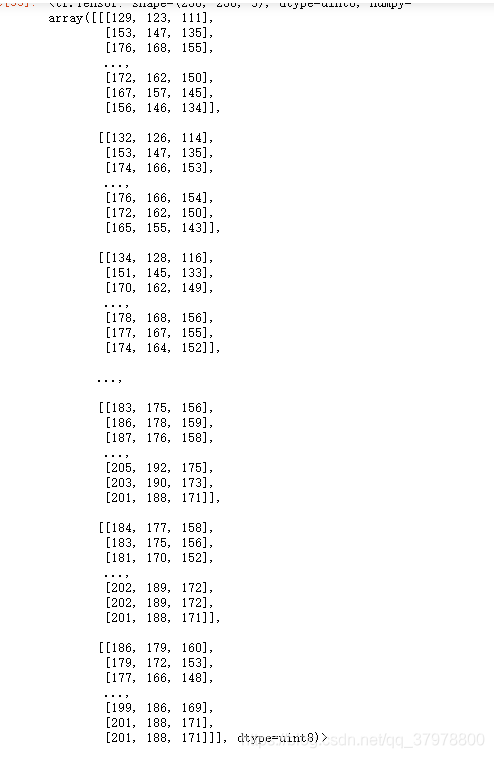

img_tensor = tf.image.decode_image(img_raw)

img_tensor.shape

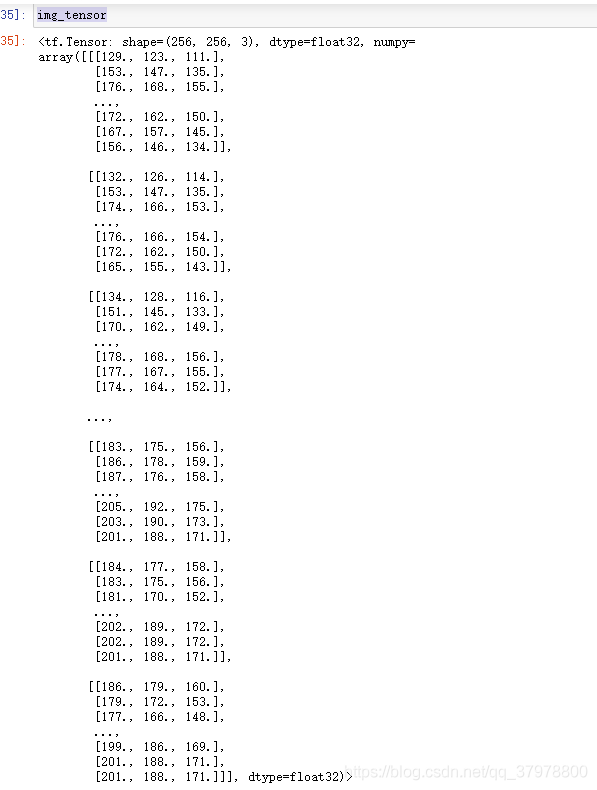

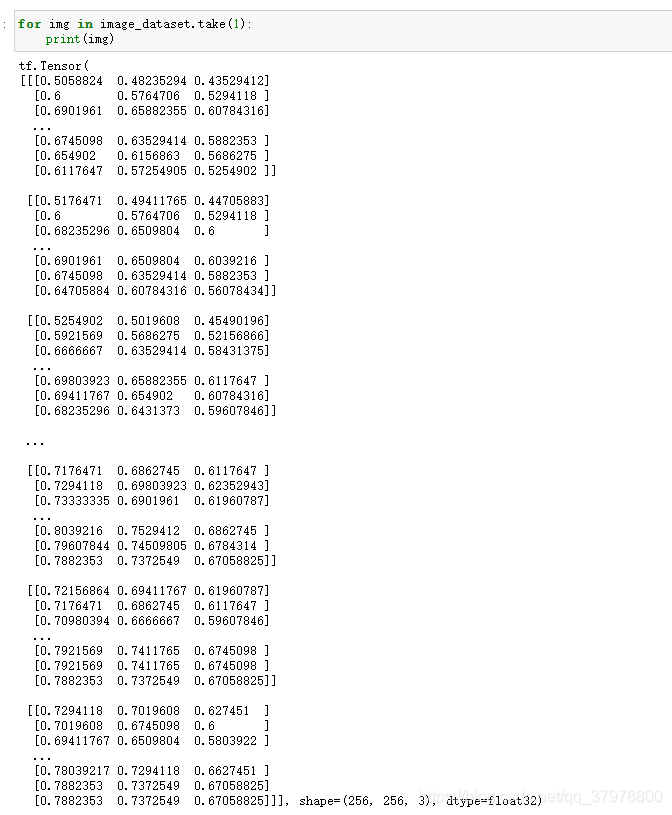

img_tensor

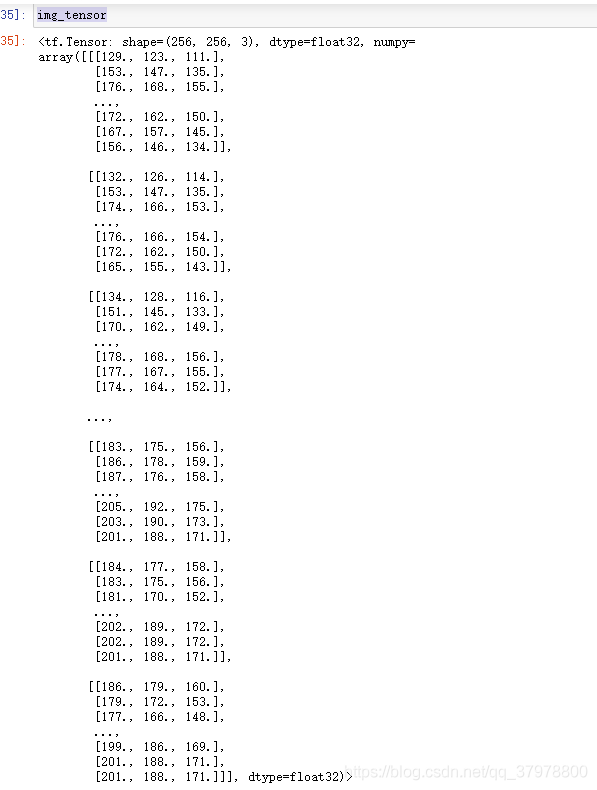

img_tensor = tf.cast(img_tensor,tf.float32)

img_tensor

img_tensor = img_tensor/255

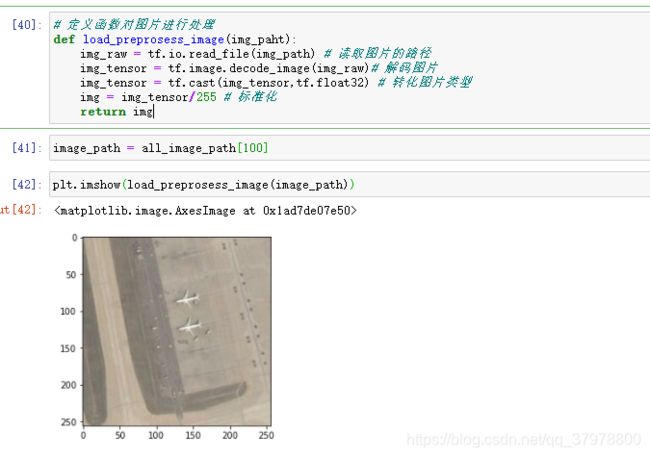
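
To see what the cast-and-divide step does to pixel values, here is a small NumPy stand-in. It is an illustration only: the notebook applies the same arithmetic to a TensorFlow tensor, and the 2x2 array below is made-up data rather than a real image.

```python
import numpy as np

# A fake 2x2 RGB "image" with uint8 pixels, standing in for the decoded tensor.
pixels = np.array([[[0, 128, 255], [64, 64, 64]],
                   [[255, 255, 255], [10, 20, 30]]], dtype=np.uint8)

# Cast to float32 first, then scale, so the result can hold fractional values.
scaled = pixels.astype(np.float32) / 255

print(scaled.dtype, scaled.min(), scaled.max())
```

After scaling, every value lies in the range [0, 1], a common input range for training a network from scratch.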

Defining a function to preprocess an image

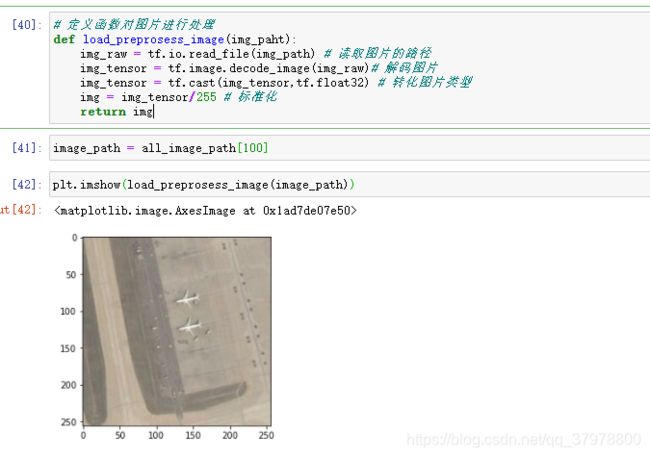

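def load_preprosess_image(img_path):
    # Read the raw bytes from disk.
    img_raw = tf.io.read_file(img_path)
    # Decode as a 3-channel JPEG and resize to the model's input size.
    img_tensor = tf.image.decode_jpeg(img_raw, channels=3)
    img_tensor = tf.image.resize(img_tensor, [256, 256])
    # Cast to float32 and scale pixel values to [0, 1].
    img_tensor = tf.cast(img_tensor, tf.float32)
    img = img_tensor / 255
    return img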

Building an image input pipeline with tf.data

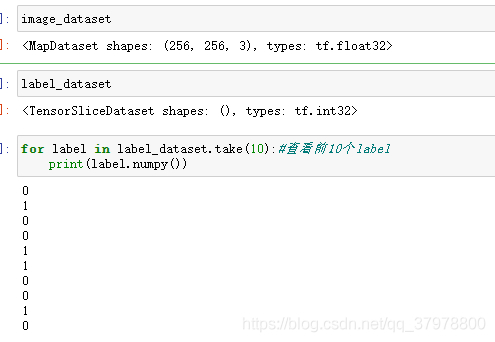

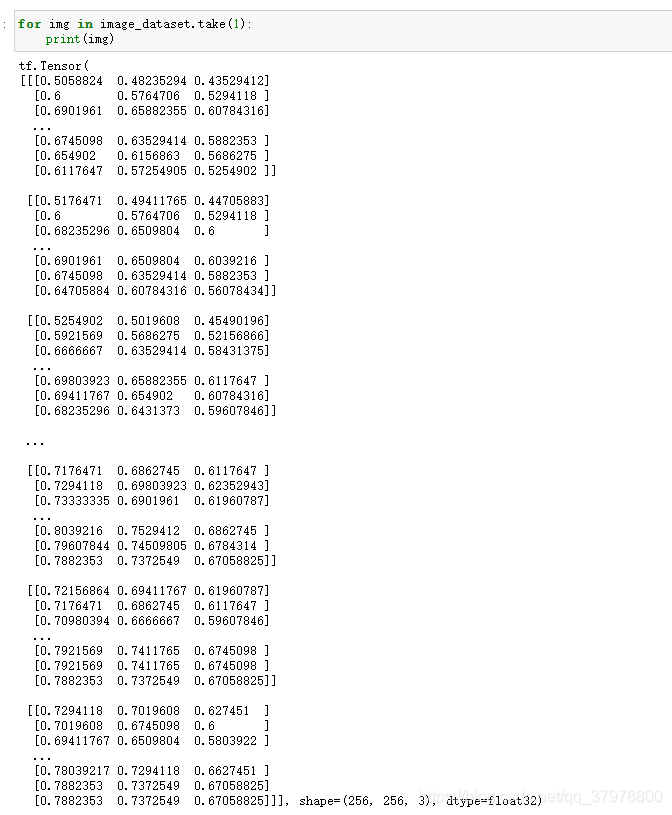

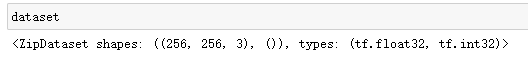

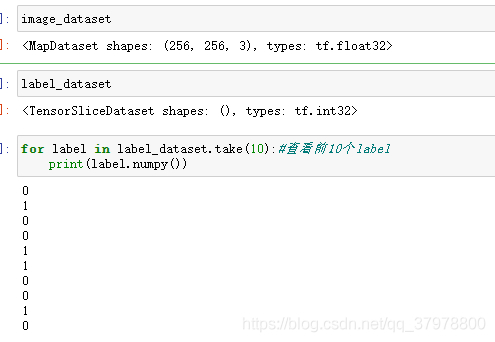

path_ds = tf.data.Dataset.from_tensor_slices(all_image_path)

image_dataset = path_ds.map(load_preprosess_image)

label_dataset = tf.data.Dataset.from_tensor_slices(all_image_label)

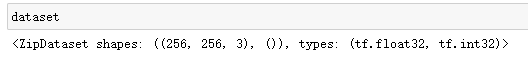

dataset = tf.data.Dataset.zip((image_dataset,label_dataset))

test_count = int(image_count*0.2)

train_count = image_count-test_count

train_dataset = dataset.skip(test_count)

test_dataset = dataset.take(test_count)
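
`take(n)` keeps the first `n` elements and `skip(n)` drops them, so the two calls split the dataset into non-overlapping parts. The sketch below mimics that behaviour with `itertools.islice` on a plain list. It is a stand-in for illustration, not the tf.data implementation, and the count of 1400 is a made-up example value.

```python
from itertools import islice

def take(items, n):
    """Yield the first n items, analogous to Dataset.take(n)."""
    return islice(items, n)

def skip(items, n):
    """Yield everything after the first n items, analogous to Dataset.skip(n)."""
    return islice(items, n, None)

demo_count = 1400                      # hypothetical number of images
demo_items = list(range(demo_count))   # stand-in for (image, label) pairs

demo_test_count = int(demo_count * 0.2)
demo_train_count = demo_count - demo_test_count

demo_test = list(take(demo_items, demo_test_count))
demo_train = list(skip(demo_items, demo_test_count))

print(len(demo_train), len(demo_test))
```

Since the path list was shuffled once before the dataset was built, taking the first 20% amounts to a random test sample, and that sample stays fixed across epochs.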

BATCH_SIZE = 32

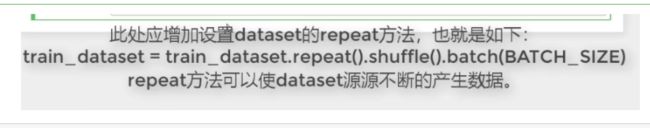

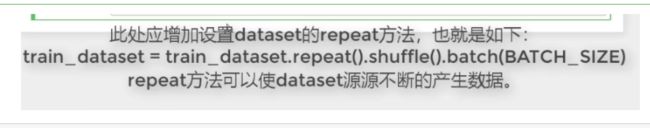

# repeat() keeps batches available in every epoch when fit() is given steps_per_epoch
train_dataset = train_dataset.shuffle(buffer_size=train_count).repeat().batch(BATCH_SIZE)

test_dataset = test_dataset.batch(BATCH_SIZE)

Building the model

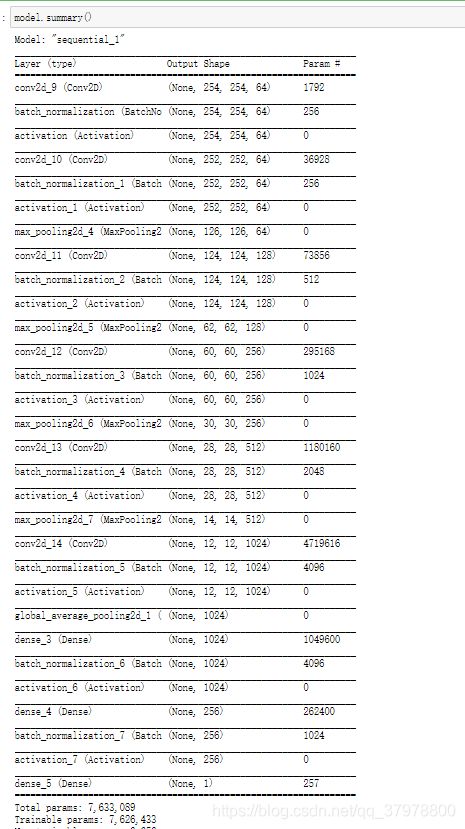

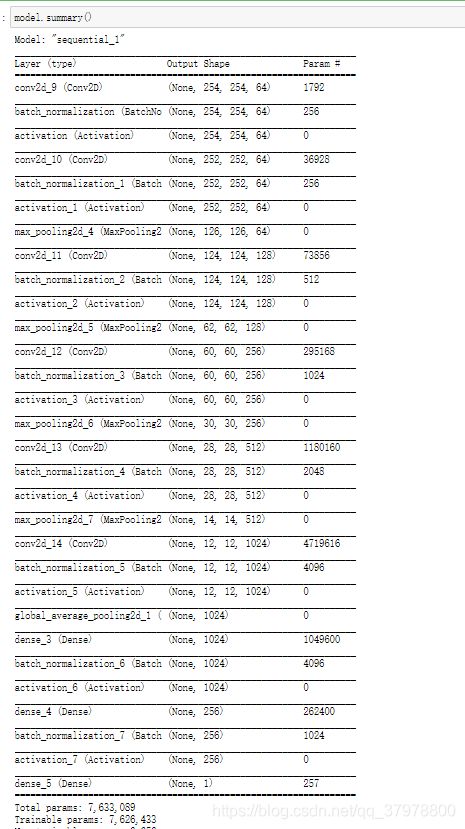

model = tf.keras.Sequential()

model.add(tf.keras.layers.Conv2D(64,(3,3),input_shape=(256,256,3)))

model.add(tf.keras.layers.BatchNormalization())

model.add(tf.keras.layers.Activation("relu"))

model.add(tf.keras.layers.Conv2D(64,(3,3)))

model.add(tf.keras.layers.BatchNormalization())

model.add(tf.keras.layers.Activation("relu"))

model.add(tf.keras.layers.MaxPooling2D())

model.add(tf.keras.layers.Conv2D(128,(3,3)))

model.add(tf.keras.layers.BatchNormalization())

model.add(tf.keras.layers.Activation("relu"))

model.add(tf.keras.layers.MaxPooling2D())

model.add(tf.keras.layers.Conv2D(256,(3,3)))

model.add(tf.keras.layers.BatchNormalization())

model.add(tf.keras.layers.Activation("relu"))

model.add(tf.keras.layers.MaxPooling2D())

model.add(tf.keras.layers.Conv2D(512,(3,3)))

model.add(tf.keras.layers.BatchNormalization())

model.add(tf.keras.layers.Activation("relu"))

model.add(tf.keras.layers.MaxPooling2D())

model.add(tf.keras.layers.Conv2D(1024,(3,3)))

model.add(tf.keras.layers.BatchNormalization())

model.add(tf.keras.layers.Activation("relu"))

model.add(tf.keras.layers.GlobalAveragePooling2D())

model.add(tf.keras.layers.Dense(1024))

model.add(tf.keras.layers.BatchNormalization())

model.add(tf.keras.layers.Activation("relu"))

model.add(tf.keras.layers.Dense(256))

model.add(tf.keras.layers.BatchNormalization())

model.add(tf.keras.layers.Activation("relu"))

model.add(tf.keras.layers.Dense(1,activation="sigmoid"))

model.summary()
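
The spatial sizes reported by `model.summary()` can be checked by hand. The sketch below assumes the Keras defaults used above: 3x3 convolutions with stride 1 and `"valid"` padding, and 2x2 max pooling with stride 2. The helper names `conv_valid` and `max_pool` are hypothetical, written only for this illustration.

```python
def conv_valid(size, kernel=3):
    """Output size of a stride-1 convolution with 'valid' padding."""
    return size - kernel + 1

def max_pool(size, pool=2):
    """Output size of max pooling with window and stride equal to `pool`."""
    return size // pool

# Layer sequence of the convolutional part of the model above.
layers = ["conv", "conv", "pool", "conv", "pool",
          "conv", "pool", "conv", "pool", "conv"]

size = 256
sizes = [size]
for layer in layers:
    size = conv_valid(size) if layer == "conv" else max_pool(size)
    sizes.append(size)

print(sizes)
```

The last convolution therefore produces a 12x12 feature map with 1024 channels, which `GlobalAveragePooling2D` reduces to a single 1024-element vector per image.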

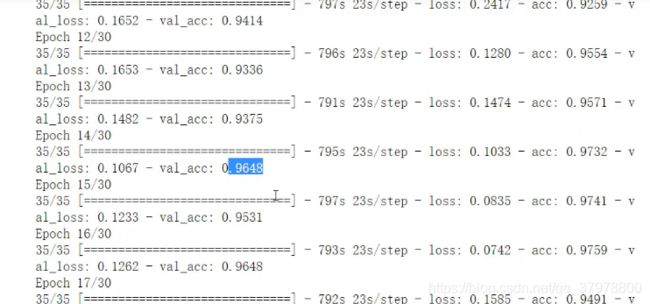

model.compile(optimizer="adam",
              loss="binary_crossentropy",
              metrics=["acc"])
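
For a single sigmoid output, binary cross-entropy and accuracy can be worked out by hand. The following is a plain-Python sketch of the two quantities, not Keras's implementation. The clipping constant `eps`, the 0.5 threshold, and the example predictions are assumptions chosen for the demo.

```python
import math

def binary_crossentropy(y_true, y_pred, eps=1e-7):
    """Mean binary cross-entropy; eps clips predictions away from 0 and 1."""
    losses = []
    for t, p in zip(y_true, y_pred):
        p = min(max(p, eps), 1.0 - eps)
        losses.append(-(t * math.log(p) + (1 - t) * math.log(1.0 - p)))
    return sum(losses) / len(losses)

def binary_accuracy(y_true, y_pred, threshold=0.5):
    """Fraction of predictions that fall on the correct side of the threshold."""
    hits = [int(p > threshold) == t for t, p in zip(y_true, y_pred)]
    return sum(hits) / len(hits)

demo_true = [1, 0, 1, 0]
demo_pred = [0.9, 0.2, 0.4, 0.6]  # made-up sigmoid outputs

demo_loss = binary_crossentropy(demo_true, demo_pred)
demo_acc = binary_accuracy(demo_true, demo_pred)
print(demo_loss, demo_acc)
```

Confident correct predictions contribute little to the loss, while predictions on the wrong side of 0.5 contribute much more, which is why loss and accuracy can move at different rates during training.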

steps_per_epoch = train_count//BATCH_SIZE

validation_steps = test_count//BATCH_SIZE
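
Floor division counts only full batches, so a final partial batch is left out of each pass. A short arithmetic check makes this concrete; the count of 1130 is a hypothetical value picked so that it does not divide evenly by the batch size.

```python
import math

demo_train_count = 1130   # hypothetical number of training images
demo_batch_size = 32

full_batches = demo_train_count // demo_batch_size          # what // gives
all_batches = math.ceil(demo_train_count / demo_batch_size)  # including the partial batch
leftover = demo_train_count % demo_batch_size                # images in the partial batch

print(full_batches, all_batches, leftover)
```

With these numbers, `//` yields one step fewer than the number of batches the dataset can actually produce, and the remaining images form the batch that is not counted.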

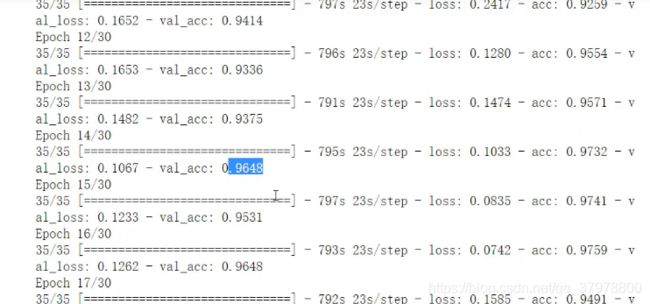

history = model.fit(train_dataset, epochs=30,
                    steps_per_epoch=steps_per_epoch,
                    validation_data=test_dataset,
                    validation_steps=validation_steps)
