pytorch使用迁移学习模型MobilenetV2实现猫狗分类

目录

- MobilenetV2介绍

- MobilenetV2网络结构

-

- 1. Depthwise Separable Convolutions

- 2. Linear Bottlenecks

- 3. Inverted residuals

- 4. Model Architecture

- 数据集下载

- 代码实现

-

- 1. 导入相关库

- 2. 定义超参数

- 3. 数据预处理

- 4. 构造数据器

- 5. 重新定义迁移模型

- 6. 定义损失调整和优化器

- 7. 定义训练和测试函数

- 8. 预测图片

MobilenetV2介绍

网络设计是基于MobileNetV1。它保持了简单性,同时显著提高了精度,在移动应用的多图像分类和检测任务上达到了最新的水平。主要贡献是一个新的层模块:具有线性瓶颈的倒置残差。该模块将输入的低维压缩表示首先扩展到高维并用轻量级深度卷积进行过滤。随后用线性卷积将特征投影回低维表示。

MobilenetV2网络结构

在介绍MobilenetV2网络结构之前需要先了解一下网络内部的细节。

1. Depthwise Separable Convolutions

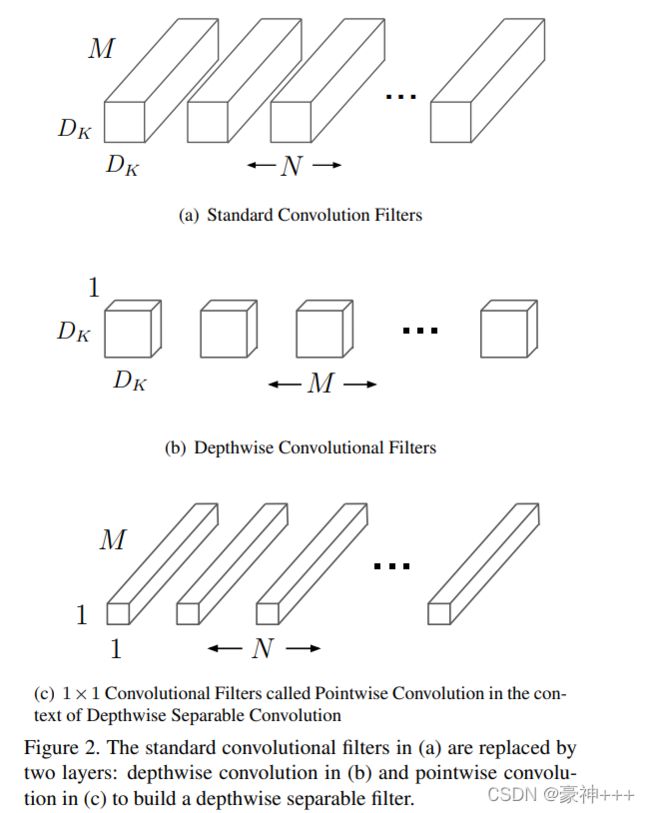

深度可分卷积这是一种分解卷积的形式,它将一个标准卷积分解为深度卷积和一个1×1卷积,1x1卷积又叫称为点卷积,下图是MobilenetV1中论文的图。

标准的卷积是输入一个 D F D_{F} DF x D F D_{F} DF x M M M 的特征图,输出一个 D F D_{F} DF x D F D_{F} DF x N N N 的特征图。

标准的卷积层的参数是 D K D_{K} DK x D K D_{K} DK x M M M x N N N ,其中 D K D_{K} DK是卷积核的小大, M M M是输入通道数, N N N是输出通道数。

所以标准的卷积计算量如下:

D K D_{K} DK x D K D_{K} DK x M M M x N N N x D F D_{F} DF x D F D_{F} DF

深度可分离卷积由两层组成:深度卷积和点卷积。

我们使用深度卷积来为每个输入通道(输入深度)应用一个过滤器。然后使用点态卷积(一个简单的1×1卷积)创建深度层输出的线性组合。

其计算量为: D K D_{K} DK x D K D_{K} DK x M M M x D F D_{F} DF x D F D_{F} DF

相对于标准卷积,深度卷积是非常有效的。然而,它只过滤输入通道,并没有将它们组合起来创建新的功能。因此,为了生成这些新特征,需要一个额外的层,通过1 × 1的卷积计算深度卷积输出的线性组合。

其计算量为: M M M x N N N x D F D_{F} DF x D F D_{F} DF

例子:

标准卷积:

假设有一个56 x 56 x 16的特征图,其卷积核是3 x 3大小,输出通道为32,计算量大小就是56 x56 x16 x 32 x 3 x 3 = 14450688

深度可分离卷积:

假设有一个56 x 56 x 16的特征图,输出通道为32,先用16个3 x 3大小的卷积核进行卷积,接着用32个1 x 1大小的卷积核分别对这用16个3 x 3大小的卷积核进行卷积之后的特征图进行卷积,计算量为3 x 3 x 16 x 56 x 56 + 16 x 32 x 56 x 56 = 2057216

从而可见计算量少了许多。

2. Linear Bottlenecks

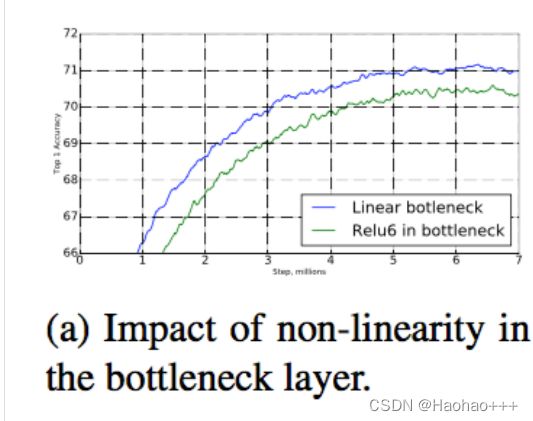

在论文中,实验者们发现,使用线性层是至关重要的,因为它可以防止非线性破坏太多的信息,在瓶颈中使用非线性层确实会使性能降低几个百分点,如下图。线性瓶颈模型的严格来说比非线性模型要弱一些,因为激活总是可以在线性状态下进行,并对偏差和缩放进行适当的修改。然而,我们在图a中展示的实验表明,线性瓶颈改善了性能,为非线性破坏低维空间中的信息提供了支持。

3. Inverted residuals

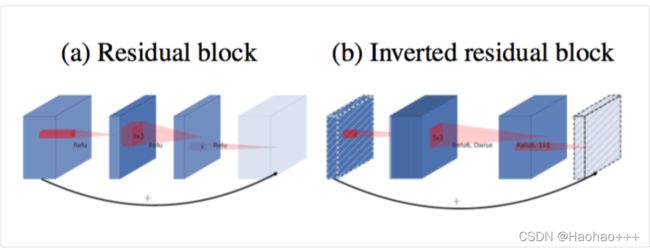

倒残差瓶颈块与残差块类似,其中每个块包含一个输入,然后是几个瓶颈,然后是扩展,下图是论文中给大家展示的残差块与倒残差瓶颈块的区别。

主要区别就是残差块先进行降维再升维,而倒残差瓶颈块是先进行升维再降维,结构如下:

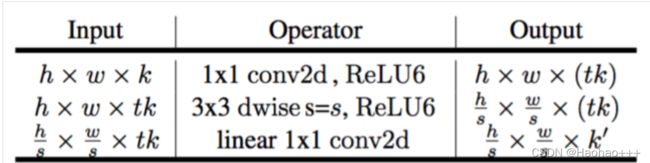

倒残差瓶颈块从 k k k转换为 k ′ k′ k′个通道,步长为 s s s,扩展系数为 t t t。

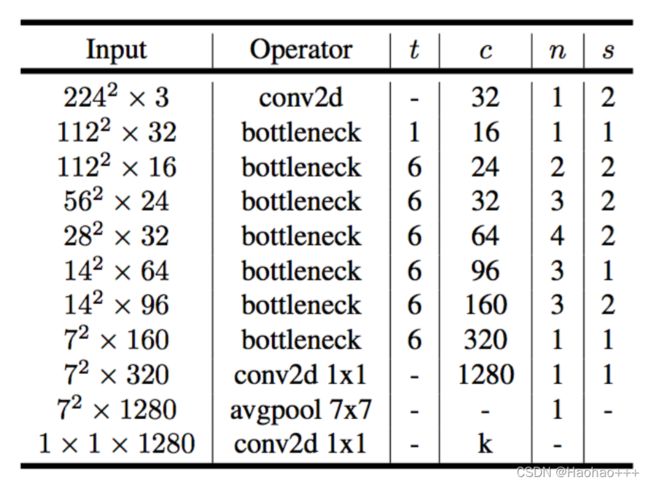

4. Model Architecture

网络使用使用ReLU6作为非线性,因为用于低精度计算时它的鲁棒性。

其中:

- n n n代表重复次数。

- c c c代表有相同的通道数。

- s s s代表步长。

- t t t代表扩展系数。

数据集下载

链接:https://pan.baidu.com/s/1zs9U76OmGAIwbYr91KQxgg

提取码:bhjx

代码实现

1. 导入相关库

新建train.py文件

import torch,os

import torch.nn as nn

import torch.nn.functional as F

from torch.utils.data import DataLoader, Dataset

from torchvision import models, transforms

from PIL import Image

from torch.autograd import Variable

2. 定义超参数

EPOCH = 100

IMG_SIZE = 224

BATCH_SIZE= 64

IMG_MEAN = [0.485, 0.456, 0.406]

IMG_STD = [0.229, 0.224, 0.225]

CUDA=torch.cuda.is_available()

DEVICE = torch.device("cuda" if CUDA else "cpu")

train_path = './data1_dog_cat/train'

test_path = './data1_dog_cat/test'

classes_name = os.listdir(train_path)

3. 数据预处理

train_transforms = transforms.Compose([

transforms.Resize(IMG_SIZE),

transforms.RandomResizedCrop(IMG_SIZE),

transforms.RandomHorizontalFlip(),

transforms.RandomRotation(30),

transforms.ToTensor(),

transforms.Normalize(IMG_MEAN, IMG_STD)

])

val_transforms = transforms.Compose([

transforms.Resize(IMG_SIZE),

transforms.CenterCrop(IMG_SIZE),

transforms.ToTensor(),

transforms.Normalize(IMG_MEAN, IMG_STD)

])

4. 构造数据器

class DogDataset(Dataset):

def __init__(self, paths, classes_name, transform=None):

self.paths = self.make_path(paths, classes_name)

self.transform = transform

def __len__(self):

return len(self.paths)

def __getitem__(self, idx):

image = self.paths[idx].split(';')[0]

img = Image.open(image)

label = self.paths[idx].split(';')[1]

if self.transform:

img = self.transform(img)

return img, int(label)

def make_path(self, path, classes_name):

# path: ./data1_dog_cat/train

# path = './data1_dog_cat/train'

path_list = []

for class_name in classes_name:

names = os.listdir(path + '/' +class_name)

for name in names:

p = os.path.join(path + '/' + class_name, name)

label = str(classes_name.index(class_name))

path_list.append(p+';'+label)

return path_list

train_dataset = DogDataset(train_path, classes_name, train_transforms)

val_dataset = DogDataset(test_path, classes_name, val_transforms)

image_dataset = {'train':train_dataset, 'valid':val_dataset}

image_dataloader = {x:DataLoader(image_dataset[x],batch_size=BATCH_SIZE,shuffle=True) for x in ['train', 'valid']}

dataset_sizes = {x:len(image_dataset[x]) for x in ['train', 'valid']}

5. 重新定义迁移模型

model_ft = models.mobilenet_v2(pretrained=True)

for param in model_ft.parameters():

param.requires_grad = False

num_ftrs = model_ft.classifier[1].in_features

model_ft.classifier[1]=nn.Linear(num_ftrs, len(classes_name),bias=True)

model_ft.to(DEVICE)

print(model_ft)

6. 定义损失调整和优化器

criterion = nn.CrossEntropyLoss()

optimizer = torch.optim.Adam(model_ft.parameters(), lr=1e-3)#指定 新加的fc层的学习率

cosine_schedule = torch.optim.lr_scheduler.CosineAnnealingLR(optimizer=optimizer,T_max=20,eta_min=1e-9)

7. 定义训练和测试函数

def train(model, device, train_loader, optimizer, epoch):

model.train()

sum_loss = 0

total_accuracy = 0

total_num = len(train_loader.dataset)

print(total_num, len(train_loader))

for batch_idx, (data, target) in enumerate(train_loader):

data, target = data.to(device, non_blocking=True), target.to(device, non_blocking=True)

optimizer.zero_grad()

output = model(data)

loss = criterion(output, target)

loss.backward()

optimizer.step()

lr = optimizer.state_dict()['param_groups'][0]['lr']

print_loss = loss.data.item()

sum_loss += print_loss

accuracy = torch.mean((torch.argmax(F.softmax(output, dim=-1), dim=-1) == target).type(torch.FloatTensor))

total_accuracy += accuracy.item()

if (batch_idx + 1) % 10 == 0:

ave_loss = sum_loss / (batch_idx+1)

acc = total_accuracy / (batch_idx+1)

print('Train Epoch: {} [{}/{} ({:.0f}%)]\tLoss: {:.6f}\tLR:{:.9f}'.format(

epoch, (batch_idx + 1) * len(data), len(train_loader.dataset),

100. * (batch_idx + 1) / len(train_loader), loss.item(),lr))

print('epoch:%d,loss:%.4f,train_acc:%.4f'%(epoch, ave_loss, acc))

ACC=0

# 验证过程

def val(model, device, test_loader):

global ACC

model.eval()

test_loss = 0

correct = 0

total_num = len(test_loader.dataset)

print(total_num, len(test_loader))

with torch.no_grad():

for data, target in test_loader:

data, target = Variable(data).to(device), Variable(target).to(device)

output = model(data)

loss = criterion(output, target)

_, pred = torch.max(output.data, 1)

correct += torch.sum(pred == target)

print_loss = loss.data.item()

test_loss += print_loss

correct = correct.data.item()

acc = correct / total_num

avgloss = test_loss / len(test_loader)

print('\nVal set: Average loss: {:.4f}, Accuracy: {}/{} ({:.0f}%)\n'.format(

avgloss, correct, len(test_loader.dataset), 100 * acc))

if acc > ACC:

torch.save(model_ft, 'model_' + 'epoch_' + str(epoch) + '_' + 'ACC-' + str(round(acc, 3)) + '.pth')

ACC = acc

# 训练

for epoch in range(1, EPOCH + 1):

train(model_ft, DEVICE, image_dataloader['train'], optimizer, epoch)

cosine_schedule.step()

val(model_ft, DEVICE, image_dataloader['valid'])

训练完后会得到一个.pth模型文件

8. 预测图片

新建predict.py文件

import torch.utils.data.distributed

import torchvision.transforms as transforms

from PIL import Image, ImageFont, ImageDraw

from torch.autograd import Variable

import os

classes = ['cat', 'dog']

transform_test = transforms.Compose([

transforms.Resize((224, 224)),

transforms.ToTensor(),

transforms.Normalize(mean=[0.485, 0.456, 0.406], std=[0.229, 0.224, 0.225])

])

DEVICE = torch.device("cuda:0" if torch.cuda.is_available() else "cpu")

model = torch.load("./model_epoch_13_ACC-0.97.pth") # 模型文件路径

model.eval()

model.to(DEVICE)

path= './data1_dog_cat/test/cat/cat.10000.jpg' # 预测图片路径

img = Image.open(path)

image = transform_test(img)

image.unsqueeze_(0)

image = Variable(image).to(DEVICE)

out=model(image)

_, pred = torch.max(out.data, 1)

# 在图上显示预测结果

draw = ImageDraw.Draw(img)

font = ImageFont.truetype("arial.ttf", 30) # 设置字体

content = classes[pred.data.item()]

draw.text((0, 0), content, font = font)

img.show()