利用Pytorch实现GoogLeNet网络

目 录

1 GoogLeNet网络

1.1 网络结构及参数

1.2 Inception结构

1.3 带降维功能的Inception结构

1.4 辅助分类器

2 利用Pytorch实现GoogLeNet网络

2.1 模型定义

2.2 训练过程

2.3 预测过程

1 GoogLeNet网络

1.1 网络结构及参数

整个网络的结构参数如下:

后面几列与网络的对应关系如下:

该网络的亮点在于:

①引入了Inception结构(融合不同尺度的特征信息);

②使用1x1的卷积核进行降维以及映射处理;

③添加两个辅助分类器帮助训练,在之前的AlexNet和VGG都只有一个输出层,但是在GoogLeNet有三个输出层(其中有两个是辅助分类层);

④丢弃全连接层,使用平均池化层,能够大大减少模型参数。

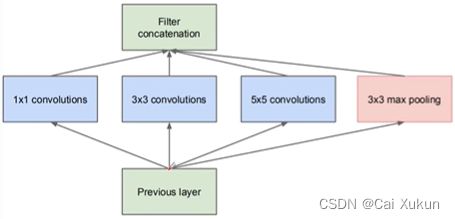

1.2 Inception结构

将上一层得到的输出矩阵同时输入到四个分支当中进行处理,处理之后再将得到的四个特征矩阵按照深度进行拼接,得到一个输出特征矩阵。四个分支分别为:1×1的卷积核、3×3的卷积核、5×5的卷积核、3×3的池化核,每个分支得到的特征矩阵高和宽必须相同,否则无法按深度进行拼接。

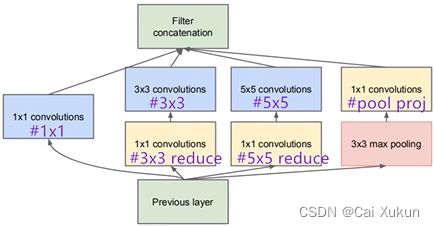

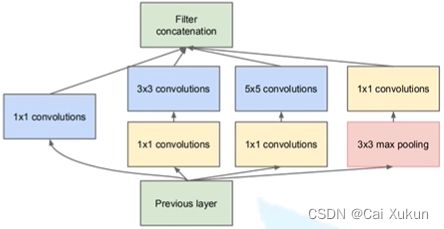

1.3 带降维功能的Inception结构

在后三个分支分别多了1×1的卷积核,起到了降维的作用。如果不使用1×1的卷积核,如下图所示:

一共需要819200个参数,但若先使用1×1的卷积核进行降维,会大大减少参数个数:

第一个卷积层需要12288个参数,再经过一次卷积需要38400个参数,一共只需要50688个参数,大大减少了计算量。

1.4 辅助分类器

①第一层是平均池化下采样操作,池化核大小为5×5,步长为3,网络中一共有两个辅助分类器,输入分别是Inception(4a)和Inception(4d)的输出分别为14×14×512和14×14×528,两个辅助分类器对应的输出值分别为4×4×512和4×4×528;

②然后使用128个大小为1×1的卷积核组成的卷积层进行处理,降低维度,并使用ReLU激活函数;

③采用节点个数为1024的全连接层,并使用ReLU激活函数;

④在全连接层之间使用Drouput函数,随机失活70%的神经元;

⑤输出层神经元个数根据类别调整,再通过softmax激活函数得到概率分布。

2 利用Pytorch实现GoogLeNet网络

2.1 模型定义

搭建卷积层时一般是将卷积和ReLU激活函数共同使用,将其定义到一个类中调用比较方便:

class BasicConv2d(nn.Module):

def __init__(self, in_channels, out_channels, **kwargs):

super(BasicConv2d, self).__init__()

self.conv = nn.Conv2d(in_channels, out_channels, **kwargs)

self.relu = nn.ReLU(inplace=True)

def forward(self, x):

x = self.conv(x)

x = self.relu(x)

return x然后搭建Inception结构:

class Inception(nn.Module):

def __init__(self, in_channels, ch1x1, ch3x3red, ch3x3, ch5x5red, ch5x5, pool_proj):

super(Inception, self).__init__()

self.branch1 = BasicConv2d(in_channels, ch1x1, kernel_size=1)

self.branch2 = nn.Sequential(

BasicConv2d(in_channels, ch3x3red, kernel_size=1),

BasicConv2d(ch3x3red, ch3x3, kernel_size=3, padding=1) # 保证输出大小等于输入大小

)

self.branch3 = nn.Sequential(

BasicConv2d(in_channels, ch5x5red, kernel_size=1),

BasicConv2d(ch5x5red, ch5x5, kernel_size=5, padding=2) # 保证输出大小等于输入大小

)

self.branch4 = nn.Sequential(

nn.MaxPool2d(kernel_size=3, stride=1, padding=1),

BasicConv2d(in_channels, pool_proj, kernel_size=1)

)

def forward(self, x):

branch1 = self.branch1(x)

branch2 = self.branch2(x)

branch3 = self.branch3(x)

branch4 = self.branch4(x)

outputs = [branch1, branch2, branch3, branch4]

return torch.cat(outputs, 1)torch.cat函数可以将四个分支合并,1维对应在channels(batch,channels,H,W)上进行拼接,然后定义辅助分类器:

class InceptionAux(nn.Module):

def __init__(self, in_channels, num_classes):

super(InceptionAux, self).__init__()

self.averagePool = nn.AvgPool2d(kernel_size=5, stride=3)

self.conv = BasicConv2d(in_channels, 128, kernel_size=1) # output[batch, 128, 4, 4]

self.fc1 = nn.Linear(2048, 1024)

self.fc2 = nn.Linear(1024, num_classes)

def forward(self, x):

# aux1: N x 512 x 14 x 14, aux2: N x 528 x 14 x 14

x = self.averagePool(x)

# aux1: N x 512 x 4 x 4, aux2: N x 528 x 4 x 4

x = self.conv(x)

# N x 128 x 4 x 4

x = torch.flatten(x, 1)

x = F.dropout(x, 0.5, training=self.training)

# N x 2048

x = F.relu(self.fc1(x), inplace=True)

x = F.dropout(x, 0.5, training=self.training)

# N x 1024

x = self.fc2(x)

# N x num_classes

return x定义网络结构:

class GoogLeNet(nn.Module):

def __init__(self, num_classes=1000, aux_logits=True, init_weights=False):

super(GoogLeNet, self).__init__()

self.aux_logits = aux_logits

self.conv1 = BasicConv2d(3, 64, kernel_size=7, stride=2, padding=3)

self.maxpool1 = nn.MaxPool2d(3, stride=2, ceil_mode=True)

self.conv2 = BasicConv2d(64, 64, kernel_size=1)

self.conv3 = BasicConv2d(64, 192, kernel_size=3, padding=1)

self.maxpool2 = nn.MaxPool2d(3, stride=2, ceil_mode=True)

self.inception3a = Inception(192, 64, 96, 128, 16, 32, 32)

self.inception3b = Inception(256, 128, 128, 192, 32, 96, 64)

self.maxpool3 = nn.MaxPool2d(3, stride=2, ceil_mode=True)

self.inception4a = Inception(480, 192, 96, 208, 16, 48, 64)

self.inception4b = Inception(512, 160, 112, 224, 24, 64, 64)

self.inception4c = Inception(512, 128, 128, 256, 24, 64, 64)

self.inception4d = Inception(512, 112, 144, 288, 32, 64, 64)

self.inception4e = Inception(528, 256, 160, 320, 32, 128, 128)

self.maxpool4 = nn.MaxPool2d(3, stride=2, ceil_mode=True)

self.inception5a = Inception(832, 256, 160, 320, 32, 128, 128)

self.inception5b = Inception(832, 384, 192, 384, 48, 128, 128)

if self.aux_logits:

self.aux1 = InceptionAux(512, num_classes)

self.aux2 = InceptionAux(528, num_classes)

self.avgpool = nn.AdaptiveAvgPool2d((1, 1))

self.dropout = nn.Dropout(0.4)

self.fc = nn.Linear(1024, num_classes)

if init_weights:

self._initialize_weights()

def forward(self, x):

# N x 3 x 224 x 224

x = self.conv1(x)

# N x 64 x 112 x 112

x = self.maxpool1(x)

# N x 64 x 56 x 56

x = self.conv2(x)

# N x 64 x 56 x 56

x = self.conv3(x)

# N x 192 x 56 x 56

x = self.maxpool2(x)

# N x 192 x 28 x 28

x = self.inception3a(x)

# N x 256 x 28 x 28

x = self.inception3b(x)

# N x 480 x 28 x 28

x = self.maxpool3(x)

# N x 480 x 14 x 14

x = self.inception4a(x)

# N x 512 x 14 x 14

if self.training and self.aux_logits: # eval model lose this layer

aux1 = self.aux1(x)

x = self.inception4b(x)

# N x 512 x 14 x 14

x = self.inception4c(x)

# N x 512 x 14 x 14

x = self.inception4d(x)

# N x 528 x 14 x 14

if self.training and self.aux_logits: # eval model lose this layer

aux2 = self.aux2(x)

x = self.inception4e(x)

# N x 832 x 14 x 14

x = self.maxpool4(x)

# N x 832 x 7 x 7

x = self.inception5a(x)

# N x 832 x 7 x 7

x = self.inception5b(x)

# N x 1024 x 7 x 7

x = self.avgpool(x)

# N x 1024 x 1 x 1

x = torch.flatten(x, 1)

# N x 1024

x = self.dropout(x)

x = self.fc(x)

# N x 1000 (num_classes)

if self.training and self.aux_logits: # eval model lose this layer

return x, aux2, aux1

return x

def _initialize_weights(self):

for m in self.modules():

if isinstance(m, nn.Conv2d):

nn.init.kaiming_normal_(m.weight, mode='fan_out', nonlinearity='relu')

if m.bias is not None:

nn.init.constant_(m.bias, 0)

elif isinstance(m, nn.Linear):

nn.init.normal_(m.weight, 0, 0.01)

nn.init.constant_(m.bias, 0)定义池化层时的ceil_mode参数设置为True时,若最大池化下采样操作后计算得到的值是小数,会向上取整,为False则向下取整。

nn.AdaptiveAvgPool2d是自适应平均池化下采样操作,不论输入图像多大,都能得到我们所需要规格的输出特征矩阵。

2.2 训练过程

在训练时实例化网络:

net = GoogLeNet(num_classes=5, aux_logits=True, init_weights=True)

net.to(device)

loss_function = nn.CrossEntropyLoss()

optimizer = optim.Adam(net.parameters(), lr=0.0003)将图片传入到网络中,会有三个输出。在计算损失时要注意,训练时有三个损失,分别是主损失和两个辅助分类器的损失,而且要将三个损失按照论文中所给的比例相加,得到一个总损失:

logits, aux_logits2, aux_logits1 = net(images.to(device))

loss0 = loss_function(logits, labels.to(device))

loss1 = loss_function(aux_logits1, labels.to(device))

loss2 = loss_function(aux_logits2, labels.to(device))

loss = loss0 + loss1 * 0.3 + loss2 * 0.3在模型定义时使用了self.training参数,当使用net.train()模式时,该参数为True,在模型定义时有定义,会返回三个输出(主分类器和两个辅助分类器);当使用net.eval()模式时,该参数为False,只会产生一个输出(主分类器)。在训练过程中需要辅助分类器来帮助优化参数,但是测试过程中不需要关注辅助分类器的结果,这就是self.training参数的作用。

2.3 预测过程

在预测时不需要两个辅助分类器,所以在网络初始化时要将aux_logits参数设置为False:

model = GoogLeNet(num_classes=5, aux_logits=False).to(device)但是在保存模型时,已经将辅助分类器的参数保存了,所以在载入模型时,要将strict参数设置为False,这样就不会精准的匹配当前模型与载入模型的结构:

missing_keys, unexpected_keys = model.load_state_dict(torch.load(weights_path, map_location=device),

strict=False)这样在unexpected_keys中会有一系列属于两个辅助分类器的层,这些层就不做预测用。