学习李沐动手学深度学习中的实现ssd

代码已注释,运行时出现小问题在代码后说明。

import torch

import torchvision

from torch import nn

from torch.nn import functional as F

from d2l import torch as d2l

import matplotlib.pyplot as plt

def cls_predictor(num_inputs, num_anchors, num_classes):

# 输入通道数:num_inputs,

# 输出通道数:锚框的个数num_anchors×(类别数num_classes+背景类1),

# 此时高宽没有归一化,所以卷积核3×3,填充1,保证输出高宽和输入高宽一样。

return nn.Conv2d(num_inputs,

num_anchors * (num_classes + 1),

kernel_size=3, padding=1)

def bbox_predictor(num_inputs, num_anchors):

# 每个锚框4个偏移量值故乘4。

return nn.Conv2d(num_inputs, num_anchors * 4, kernel_size=3, padding=1)

def forward(x, block):

# 返回block块输出。

return block(x)

# 测试张量维度

Y1 = forward(torch.zeros((2, 8, 20, 20)), cls_predictor(8, 5, 10))

Y2 = forward(torch.zeros((2, 16, 10, 10)), cls_predictor(16, 3, 10))

print(Y1.shape, Y2.shape)

# 结果torch.Size([2, 55, 20, 20]) torch.Size([2, 33, 10, 10])

# 特征图尺度改变除了批量之外,其他都发生变化,55与33是预测输出通道个数。(批量大小,通道数,高度,宽度)

def flatten_pred(pred):

# 利用permute函数进行换序操作,把通道数放在最后。

# start_dim=1沿维度1拉成:批量数×(高×宽×通道数)的二维张量,为了下面拼接。

# 换序操作避免类别预测在flatten后相距较远。

return torch.flatten(pred.permute(0, 2, 3, 1), start_dim=1)

def concat_preds(preds):

# 沿一维度拼接。

return torch.cat([flatten_pred(p) for p in preds], dim=1)

# 测试沿一维度拼接结果55 * 20 * 20 + 33 * 10 * 10 = 25300,

# 结果:torch.Size([2, 25300])

print(concat_preds([Y1, Y2]).shape)

# 定义一个简单的CNN网络,输入维度in_channels,输出out_channels,高宽减半。

def down_sample_blk(in_channels, out_channels):

blk = []

# 卷积,BN层,ReLU激活函数,重复两次。

for _ in range(2):

blk.append(nn.Conv2d(in_channels, out_channels, kernel_size=3, padding=1))

blk.append(nn.BatchNorm2d(out_channels))

blk.append(nn.ReLU())

in_channels = out_channels

# 池化层默认步长等于2,形成高宽减半的效果。

blk.append(nn.MaxPool2d(2))

# 放在Sequential中。加星:*args:接收若干个位置参数,转换成元组tuple形式。

return nn.Sequential(*blk)

print(forward(torch.zeros((2, 3, 20, 20)), down_sample_blk(3, 10)).shape)

# 结果:torch.Size([2, 10, 10, 10]),高和宽减半块会更改输入通道的数量,并将输入特征图的高度和宽度减半。

def base_net():

blk = []

num_filters = [3, 16, 32, 64]

# 通道3-16-32-64,通道数翻倍,高宽减半。

for i in range(len(num_filters) - 1):

blk.append(down_sample_blk(num_filters[i], num_filters[i+1]))

return nn.Sequential(*blk)

print(forward(torch.zeros((2, 3, 256, 256)), base_net()).shape)

# torch.Size([2, 64, 32, 32])

# 通道3×2^3,256/2^3

def get_blk(i):

if i == 0:

blk = base_net()

elif i == 1:

blk = down_sample_blk(64, 128)

elif i == 4:

# 全局最大池化,高宽变1。

blk = nn.AdaptiveMaxPool2d((1,1))

# i等于2或3通道数没有改变因为数据集小,通道数没必要搞太大。

else:

blk = down_sample_blk(128, 128)

return blk

def blk_forward(X, blk, size, ratio, cls_predictor, bbox_predictor):

# 算出特征图Y

Y = blk(X)

# 在特征图Y尺度下面锚框缩放比和宽高比。

# 锚框只要Y的高和宽不需要具体值,故可以提前生成。

anchors = d2l.multibox_prior(Y, sizes=size, ratios=ratio)

# 类别预测。

cls_preds = cls_predictor(Y)

# 偏移预测。

bbox_preds = bbox_predictor(Y)

return (Y, anchors, cls_preds, bbox_preds)

# 覆盖率从小到大

sizes = [[0.2, 0.272],

[0.37, 0.447],

[0.54, 0.619],

[0.71, 0.79],

[0.88, 0.961]]

# 宽高比常用组合×5个

ratios = [[1, 2, 0.5]] * 5

# n+m-1

num_anchors = len(sizes[0]) + len(ratios[0]) - 1

# 简版SSD

class TinySSD(nn.Module):

def __init__(self, num_classes, **kwargs):

super(TinySSD, self).__init__(**kwargs)

# 类别数

self.num_classes = num_classes

# 5个块的输出channel数

idx_to_in_channels = [64, 128, 128, 128, 128]

for i in range(5):

# 即赋值语句self.blk_i=get_blk(i)

# setattr用法:

# Sets the named attribute on the given object to the specified value.

# setattr(x, 'y', v) is equivalent to ``x.y = v''

# 调用get_blk函数遍历每个块,同时对每个块分别进行类别预测和偏移预测。

setattr(self, f'blk_{i}', get_blk(i))

setattr(self, f'cls_{i}', cls_predictor(idx_to_in_channels[i],

num_anchors, num_classes))

setattr(self, f'bbox_{i}', bbox_predictor(idx_to_in_channels[i],

num_anchors))

def forward(self, X):

anchors, cls_preds, bbox_preds = [None] * 5, [None] * 5, [None] * 5

for i in range(5):

# getattr(self,'blk_%d'%i)即访问self.blk_i

# 除了X,其余都存起来了

X, anchors[i], cls_preds[i], bbox_preds[i] = blk_forward(

X, getattr(self, f'blk_{i}'), sizes[i], ratios[i],

getattr(self, f'cls_{i}'), getattr(self, f'bbox_{i}'))

anchors = torch.cat(anchors, dim=1)

cls_preds = concat_preds(cls_preds)

# 0维不动,1维未知,2维为预测类别加背景(+1)。

cls_preds = cls_preds.reshape(cls_preds.shape[0], -1, self.num_classes + 1)

bbox_preds = concat_preds(bbox_preds)

return anchors, cls_preds, bbox_preds

net = TinySSD(num_classes=1)

# 测试维度

X = torch.zeros((32, 3, 256, 256))

anchors, cls_preds, bbox_preds = net(X)

print('output anchors:', anchors.shape)

print('output class preds:', cls_preds.shape)

print('output bbox preds:', bbox_preds.shape)

# 结果:

# 5440个锚框,每个框四个参数定义

# output anchors: torch.Size([1, 5444, 4])

# 批量32,5440个锚框,对每个锚框分类,定义的类别num_classes=1,加上背景,等于2。

# output class preds: torch.Size([32, 5444, 2])

# 每个锚框四个预测5444×4

# output bbox preds: torch.Size([32, 21776])

batch_size = 32

# 香蕉数据集,类别为1(香蕉)

train_iter, _ = d2l.load_data_bananas(batch_size)

device, net = d2l.try_gpu(), TinySSD(num_classes=1)

# 梯度下降法,优化器,学习率,weight_decay权值衰减。

trainer = torch.optim.SGD(net.parameters(), lr=0.2, weight_decay=5e-4)

# 损失函数:交叉熵损失函数

cls_loss = nn.CrossEntropyLoss(reduction='none')

# 均绝对误差

bbox_loss = nn.L1Loss(reduction='none')

def calc_loss(cls_preds, cls_labels, bbox_preds, bbox_labels, bbox_masks):

batch_size, num_classes = cls_preds.shape[0], cls_preds.shape[2]

# 类别的损失函数,预测的类别和标注的类别,第二次reshape第0维为批量大小,然后沿第一个维度取平均值。

cls = cls_loss(cls_preds.reshape(-1, num_classes), cls_labels.reshape(-1)).reshape(batch_size, -1).mean(dim=1)

# 都乘个masks,当锚框对应背景框masks为0,意味着背景框不用预测偏移量,沿着第一个维度取平均值。

bbox = bbox_loss(bbox_preds * bbox_masks, bbox_labels * bbox_masks).mean(dim=1)

# 返回两个损失(误差)之和。

return cls + bbox

# 我们可以沿用准确率评价分类结果。

# 由于偏移量使用了范数损失,我们使用平均绝对误差来评价边界框的预测结果。

# 这些预测结果是从生成的锚框及其预测偏移量中获得的。

def cls_eval(cls_preds, cls_labels):

# 由于类别预测结果放在最后一维,argmax需要指定最后一维。

return float((cls_preds.argmax(dim=-1).type(cls_labels.dtype) == cls_labels).sum())

def bbox_eval(bbox_preds, bbox_labels, bbox_masks):

# abs取绝对值

return float((torch.abs((bbox_labels - bbox_preds) * bbox_masks)).sum())

# 训练模型

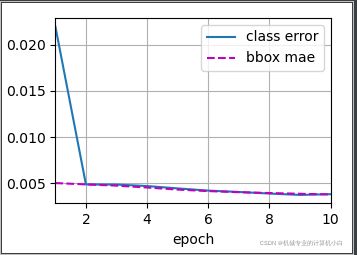

num_epochs, timer = 10, d2l.Timer()

# 画图

animator = d2l.Animator(xlabel='epoch', xlim=[1, num_epochs],

legend=['class error', 'bbox mae'])

net = net.to(device)

for epoch in range(num_epochs):

# 训练精确度的和,训练精确度的和中的示例数

# 绝对误差的和,绝对误差的和中的示例数

metric = d2l.Accumulator(4)

net.train()

for features, target in train_iter:

timer.start()

trainer.zero_grad()

X, Y = features.to(device), target.to(device)

# 生成多尺度的锚框,为每个锚框预测类别和偏移量

anchors, cls_preds, bbox_preds = net(X)

# 为每个锚框标注类别和偏移量

bbox_labels, bbox_masks, cls_labels = d2l.multibox_target(anchors, Y)

# 根据类别和偏移量的预测和标注值计算损失函数

l = calc_loss(cls_preds, cls_labels, bbox_preds, bbox_labels,

bbox_masks)

l.mean().backward()

trainer.step()

metric.add(cls_eval(cls_preds, cls_labels), cls_labels.numel(),

bbox_eval(bbox_preds, bbox_labels, bbox_masks),

bbox_labels.numel())

cls_err, bbox_mae = 1 - metric[0] / metric[1], metric[2] / metric[3]

animator.add(epoch + 1, (cls_err, bbox_mae))

print(f'class err {cls_err:.2e}, bbox mae {bbox_mae:.2e}')

print(f'{len(train_iter.dataset) / timer.stop():.1f} examples/sec on '

f'{str(device)}')

plt.show()

# 预测

X = torchvision.io.read_image('../img/banana.jpg').unsqueeze(0).float()

img = X.squeeze(0).permute(1, 2, 0).long()

def predict(X):

net.eval()

anchors, cls_preds, bbox_preds = net(X.to(device))

# softmax函数相当于概率

cls_probs = F.softmax(cls_preds, dim=2).permute(0, 2, 1)

output = d2l.multibox_detection(cls_probs, bbox_preds, anchors)

idx = [i for i, row in enumerate(output[0]) if row[0] != -1]

return output[0, idx]

output = predict(X)

# 筛选0.9以下的边界框

def display(img, output, threshold):

d2l.set_figsize((5, 5))

fig = d2l.plt.imshow(img)

for row in output:

score = float(row[1])

if score < threshold:

continue

h, w = img.shape[0:2]

bbox = [row[2:6] * torch.tensor((w, h, w, h), device=row.device)]

d2l.show_bboxes(fig.axes, bbox, '%.2f' % score, 'w')

display(img, output.cpu(), threshold=0.9)

plt.show()

结果,gpu1050ti:

torch.Size([2, 55, 20, 20]) torch.Size([2, 33, 10, 10])

torch.Size([2, 25300])

torch.Size([2, 10, 10, 10])

torch.Size([2, 64, 32, 32])

D:\anaconda\envs\pytorch\lib\site-packages\torch\functional.py:445: UserWarning: torch.meshgrid: in an upcoming release, it will be required to pass the indexing argument. (Triggered internally at ..\aten\src\ATen\native\TensorShape.cpp:2157.)

return _VF.meshgrid(tensors, **kwargs) # type: ignore[attr-defined]

output anchors: torch.Size([1, 5444, 4])

output class preds: torch.Size([32, 5444, 2])

output bbox preds: torch.Size([32, 21776])

read 1000 training examples

read 100 validation examples

class err 3.80e-03, bbox mae 3.77e-03

3493.3 examples/sec on cuda:0 细节:由于用的pycharm,故

%matplotlib inline

这个是不行的,根据网上经验,得调用

import matplotlib.pyplot as plt

并在结尾

plt.show()

将图片打印出来。

但是在本程序中如果仅仅是在结尾出加上plt.show( ),会出现如下不正常现象:

并报错:

UserWarning: Tight layout not applied. The left and right margins cannot be made large enough to accommodate all axes decorations.

根据报错去找网上经验,并不适用。在编写代码时输出训练结果图片并没有出现问题,故怀疑加上预测结果图片后,不能通过在程序结尾加上plt.show()分别输出两个图片。

经过不断尝试:

将训练程序下面和预测结果下面分别加上plt.show(),可在程序中看plt.show()的位置,问题解决!