AlexNet

AlexNet详解

1.AlexNet背景

AlexNet是2012年ISLVRC 2012(ImageNet Large Scale Visual Recognition Challenge)竞赛的冠军网络,分类准确率由传统的 70%+提升到 80%+。它是由Hinton和他的学生Alex Krizhevsky设计的。也是在那年之后,深度学习开始迅速发展。

2.AlexNet详解

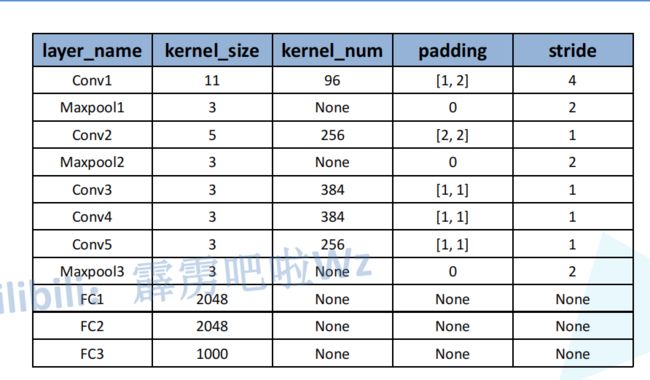

-

网络亮点

(1)首次利用GPU进行网络加速训练

(2)使用了RELU激活函数,而不是传统的Sigmoid激活函数以及Tanh激活函数

(3)使用了LRN局部响应归一化

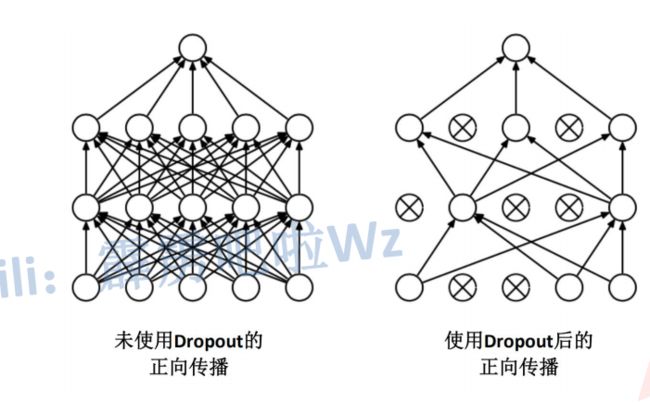

(4)在全连接层的前两层中使用了Dropout随机失活神经元操作,以减少过拟合

-

过拟合

根本原因时特征维度过多,模型假设过于复杂,参数过多,训练数据过少,噪声过多,导致拟合的函数完美的预测训练集,但对新数据的测试集预测结果差,过度的拟合了训练数据,而没有考虑到泛化能力。

经卷积后的矩阵尺寸大小计算为:N = (w-F+2*P)/S+1

-

图片输入大小 W * W

-

Filter大小 F * F

-

步长S

-

padding的像素数P

3.卷积过程分析

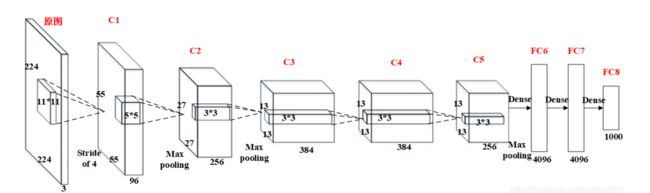

(1)AlexNet网络结构:8层卷积神经网络

5层卷积层+3层全连接层

以下为手写笔记分析:

原图输入224*224,实际上(227×227)进行了随机裁剪

1.卷积层C1

2.卷积层C2

3.卷积层C3

4.卷积层C4

6.全连接层FC6

- FC6的基本结构为:全连接–>ReLU–>Dropout

(1)全连接:此层的全连接实际上是通过卷积进行的,输入6×6×256,4096个6×6×256的卷积核,扩充边缘padding = 0, 步长stride = 1, 因此其FeatureMap大小为(6-6+0×2+1)/1 = 1,即1×1×4096;

(2)激活函数:ReLU;

(3)Dropout:全连接层中去掉了一些神经节点,达到防止过拟合,FC6输出为1×1×4096;

7.全连接层FC7 - FC6的基本结构为:全连接–>ReLU–>Dropout

(1)全连接:此层的全连接,输入1×1×4096;

(2)激活函数:ReLU;

(3)Dropout:全连接层中去掉了一些神经节点,达到防止过拟合,FC7输出为1×1×4096;

8.全连接层FC8 - FC8的基本结构为:全连接–>softmax

(1)全连接:此层的全连接,输入1×1×4096;

(2)softmax:softmax为1000,FC8输出为1×1×1000;

此处参考博客分析:Alex网络结构详解

4.代码复现

1.model.py

import torch

import torch.nn as nn

import torch.nn.functional as F

class AlexNet(nn.Module):

def __init__(self, num_classes=1000, init_weights=False):

super(AlexNet, self).__init__()

self.features = nn.Sequential(

nn.Conv2d(3, 48, kernel_size=11, stride=4, padding=2), # input[3, 224, 224] output[48, 55, 55]

nn.ReLU(inplace=True),

nn.MaxPool2d(kernel_size=3, stride=2), # output[48, 27, 27]

nn.Conv2d(48, 128, kernel_size=5, padding=2), # output[128, 27, 27]

nn.ReLU(inplace=True),

nn.MaxPool2d(kernel_size=3, stride=2), # output[128, 13, 13]

nn.Conv2d(128, 192, kernel_size=3, padding=1), # output[192, 13, 13]

nn.ReLU(inplace=True),

nn.Conv2d(192, 192, kernel_size=3, padding=1), # output[192, 13, 13]

nn.ReLU(inplace=True),

nn.Conv2d(192, 128, kernel_size=3, padding=1), # output[128, 13, 13]

nn.ReLU(inplace=True),

nn.MaxPool2d(kernel_size=3, stride=2), # output[128, 6, 6]

)

self.classifier = nn.Sequential(

nn.Dropout(p=0.5),

nn.Linear(128 * 6 * 6, 2048),

nn.ReLU(inplace=True),

nn.Dropout(p=0.5),

nn.Linear(2048, 2048),

nn.ReLU(inplace=True),

nn.Linear(2048, num_classes),

)

if init_weights:

self._initialize_weights()

def forward(self, x):

x = self.features(x)

x = torch.flatten(x, start_dim=1)

x = self.classifier(x)

return x

def _initialize_weights(self):

for m in self.modules():

if isinstance(m, nn.Conv2d):

nn.init.kaiming_normal_(m.weight, mode='fan_out', nonlinearity='relu')

if m.bias is not None:

nn.init.constant_(m.bias, 0)

elif isinstance(m, nn.Linear):

nn.init.normal_(m.weight, 0, 0.01)

nn.init.constant_(m.bias, 0)

2.train.py

import os

import sys

import json

import torch

import torch.nn as nn

from torchvision import transforms, datasets, utils

import matplotlib.pyplot as plt

import numpy as np

import torch.optim as optim

from tqdm import tqdm

from model import AlexNet

def main():

device = torch.device("cuda:0" if torch.cuda.is_available() else "cpu")

print("using {} device.".format(device))

data_transform = {

"train":transforms.Compose([

transforms.RandomResizedCrop(224),

transforms.RandomHorizontalFlip(),

transforms.ToTensor(),

transforms.Normalize((0.5,0.5,0.5),(0.5,0.5,0.5))

]),

"val":transforms.Compose([

transforms.Resize((224,224)),

transforms.ToTensor(),

transforms.Normalize((0.5,0.5,0.5),(0.5,0.5,0.5))

])

}

data_root = os.path.abspath(os.path.join(os.getcwd(),"../")) #获取数据根目录

#print(data_root) #D:\深度学习笔记\wzppt\deep-learning-for-image-processing-master

image_path = os.path.join(data_root,"data_set","flower_data") #flower data set path

#print(image_path) #D:\深度学习笔记\wzppt\deep-learning-for-image-processing-master\data-set\flower_data

assert os.path.exists(image_path),"{} path does not exists.".format(image_path)

train_dataset = datasets.ImageFolder(root=os.path.join(image_path,"train"),

transform=data_transform["train"])

train_num = len(train_dataset)

# {'daisy':0, 'dandelion':1, 'roses':2, 'sunflower':3, 'tulips':4}

flower_list = train_dataset.class_to_idx

cla_dict = dict((val,key)for key,val in flower_list.items())

#将类别写入json文件

json_str = json.dumps(cla_dict,indent=4)

with open('class_indices.json','w') as json_file:

json_file.write(json_str)

batch_size = 32

nw = min([os.cpu_count(),batch_size if batch_size>1 else 0,8]) #number of workers

print('Using {} dataloader workers every process'.format(nw))

train_loader = torch.utils.data.DataLoader(train_dataset,

batch_size=batch_size,

shuffle = True,

num_workers = nw)

val_dataset = datasets.ImageFolder(root=os.path.join(image_path,"val"),

transform=data_transform["val"])

val_num = len(val_dataset)

validate_loader = torch.utils.data.DataLoader(val_dataset,

batch_size=4,

shuffle=True,

num_workers=nw)

# test_data_iter = iter(validate_loader)

# test_image,test_label = test_data_iter.next()

# def imshow(img):

# img = img/2+0.5

# npimg = img.numpy()

# plt.imshow(np.transpose(npimg,(1,2,0)))

# plt.show()

#

# print(' '.join('%5s' % cla_dict[test_label[j].item()] for j in range(4)))

# imshow(utils.make_grid(test_image))

net = AlexNet(num_classes=5,init_weights=True)

net.to(device)

loss_function = nn.CrossEntropyLoss()

#pata = list(net.parameters())

#print(pata)

optimizer = optim.Adam(net.parameters(),lr=0.0002)

#训练

epoches = 50

save_path = './AlexNet.pth'

best_acc = 0.0

train_steps = len(train_loader)

for epoch in range(epoches):

#train

net.train()

running_loss = 0.0

train_bar = tqdm(train_loader,file=sys.stdout)

for step,data in enumerate(train_bar):

images,labels = data

optimizer.zero_grad()

outputs = net(images.to(device))

loss = loss_function(outputs,labels.to(device))

loss.backward()

optimizer.step()

#打印分析

running_loss +=loss.item()

train_bar.desc = "train epoch[{}/{}] loss:{:.3f}".format(epoch+1,epoches,loss)

#验证

net.eval()

acc = 0.0

with torch.no_grad():

val_bar = tqdm(validate_loader,file = sys.stdout)

for val_data in val_bar:

val_images,val_labels = val_data

outputs = net(val_images.to(device))

predict_y = torch.max(outputs,dim=1)[1]

acc += (predict_y==val_labels).sum().item()

val_accurate = acc/val_num

print('[epoch %d]train loss:%.3f val_accuracy %.3f'%(epoch+1,running_loss/train_steps,val_accurate))

if val_accurate>best_acc:

best_acc = val_accurate

torch.save(net.state_dict(),save_path)

print('Finished Training')

if __name__ == "__main__":

main()

3.predict.py

import os

import json

import torch

from PIL import Image

from torchvision import transforms

import matplotlib.pyplot as plt

from model import AlexNet

def main():

device = torch.device("cuda:0" if torch.cuda.is_available() else "cpu")

data_transform = transforms.Compose(

[transforms.Resize((224, 224)),

transforms.ToTensor(),

transforms.Normalize((0.5, 0.5, 0.5), (0.5, 0.5, 0.5))])

# load image

img_path = "../tulip.jpg"

assert os.path.exists(img_path), "file: '{}' dose not exist.".format(img_path)

print(img_path)

img = Image.open(img_path)

plt.imshow(img)

# [N, C, H, W]

img = data_transform(img) #预处理图片

# expand batch dimension

img = torch.unsqueeze(img, dim=0)

# read class_indict

json_path = './class_indices.json'

assert os.path.exists(json_path), "file: '{}' dose not exist.".format(json_path)

with open(json_path, "r") as f:

class_indict = json.load(f)

# create model

model = AlexNet(num_classes=5).to(device)

# load model weights

weights_path = "./AlexNet.pth"

assert os.path.exists(weights_path), "file: '{}' dose not exist.".format(weights_path)

model.load_state_dict(torch.load(weights_path))

model.eval()

with torch.no_grad():

# predict class

output = torch.squeeze(model(img.to(device))).cpu()

predict = torch.softmax(output, dim=0)

predict_cla = torch.argmax(predict).numpy()

print_res = "class: {} prob: {:.3}".format(class_indict[str(predict_cla)],

predict[predict_cla].numpy())

plt.title(print_res)

for i in range(len(predict)):

print("class: {:10} prob: {:.3}".format(class_indict[str(i)],

predict[i].numpy()))

plt.show()

if __name__ == '__main__':

main()