Visual Attention Mechanisms: CBAM (Convolutional Block Attention Module)

Paper: Convolutional Block Attention Module

Code: https://github.com/luuuyi/CBAM.PyTorch

Contents

- Visual Attention Mechanisms: CBAM
- I. CBAM
- Channel attention module
- Spatial attention module
- II. Code Analysis

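Visual Attention Mechanisms: CBAM

Soft attention mechanisms are commonly grouped into four domains: spatial, channel, mixed, and convolutional.

(1) Spatial domain: the spatial information of the image is transformed to produce a weight distribution over locations, so that the key information can be picked out. Representative work: Spatial Attention Module.

(2) Channel domain: put simply, the signal on each channel is given a weight that expresses how relevant that channel is to the key information; the larger the weight, the stronger the relevance. Representative work: SELayer, Channel Attention Module.

(3) Mixed domain: the channel and spatial dimensions are processed together, with a weight distribution computed over one of the two dimensions and then over the other. Representative work: Spatial Attention Module + Channel Attention Module.

(4) Convolutional domain: the weight distribution is computed over the convolution kernels, which is a more advanced approach. Representative work: SKUnit.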

I. CBAM

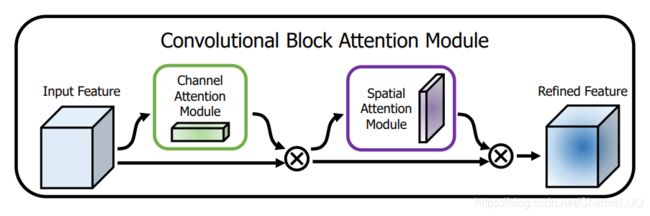

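The Introduction of the paper names three factors that affect the performance of a convolutional neural network: depth, width, and cardinality. It lists representative architectures for each: VGG and the ResNet family for depth, GoogLeNet and the wide-ResNet family for width, and Xception and ResNeXt for cardinality. Beyond these three factors, one more component can influence performance: attention. The authors propose a channel attention module and a spatial attention module, which are connected in sequence. The combined module is flexible and can be embedded in blocks such as ResBlock.

The figure above shows the whole of CBAM: the input first passes through the channel attention module and then through the spatial attention module. The authors' experiments show that placing channel attention before spatial attention works better than the reverse order.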
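In the notation of the CBAM paper, with an input feature map $F \in \mathbb{R}^{C \times H \times W}$, a channel attention map $M_c$ and a spatial attention map $M_s$, the sequential arrangement described above can be written as:

$$
F' = M_c(F) \otimes F, \qquad F'' = M_s(F') \otimes F'
$$

Here $\otimes$ is element-wise multiplication with broadcasting: $M_c(F) \in \mathbb{R}^{C \times 1 \times 1}$ is broadcast over the spatial positions, and $M_s(F') \in \mathbb{R}^{1 \times H \times W}$ is broadcast over the channels.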

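Channel attention module

1. In the squeeze (S) step, to borrow the terminology of SENet, CBAM applies both global average pooling and global max pooling. Using two different pooling operations yields richer high-level descriptors than either one alone.

2. In the excitation (E) step, each pooled descriptor passes through the same two fully connected layers and their activation function, which model the dependencies between channels. The two outputs are then summed and passed through a sigmoid to give a weight for each feature channel.

3. Finally, the channel weights are multiplied channel by channel onto the original features, which recalibrates the original features along the channel dimension.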
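The three steps can be condensed into a single expression. This is a sketch in the paper's notation, where $\sigma$ is the sigmoid, $F^c_{avg}$ and $F^c_{max}$ are the average-pooled and max-pooled channel descriptors, $W_0 \in \mathbb{R}^{C/r \times C}$ and $W_1 \in \mathbb{R}^{C \times C/r}$ are the weights of the two shared layers (a ReLU follows $W_0$), and $r$ is the reduction ratio:

$$
M_c(F) = \sigma\big(W_1(W_0(F^c_{avg})) + W_1(W_0(F^c_{max}))\big), \qquad F' = M_c(F) \otimes F
$$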

Let's look at how the code implements this:

```python
import torch
import torch.nn as nn


################################
#    3x3 convolution helper    #
################################
def conv3x3(in_planes, out_planes, stride=1):
    "3x3 convolution with padding"
    return nn.Conv2d(in_planes, out_planes, kernel_size=3, stride=stride,
                     padding=1, bias=False)


###############################
#   Channel attention module  #
###############################
class ChannelAttention(nn.Module):
    """
    1. In the S step, CBAM applies both global average pooling and global max
       pooling; two different poolings give richer high-level descriptors.
    2. In the E step, two fully connected layers and their activation model
       the dependencies between channels; the two outputs are merged into
       per-channel weights.
    3. The weights are multiplied channel by channel onto the original
       features, recalibrating them along the channel dimension.
    """

    def __init__(self, in_planes, ratio=16):
        super(ChannelAttention, self).__init__()
        # Two branches: 1. adaptive average pooling, 2. adaptive max pooling
        self.avg_pool = nn.AdaptiveAvgPool2d(1)  # -> (batch, in_planes, 1, 1)
        self.max_pool = nn.AdaptiveMaxPool2d(1)  # -> (batch, in_planes, 1, 1)

        # Both branches go through a ResNet-style bottleneck: wide at both
        # ends, narrow in the middle. The 1x1 convolutions act as the two
        # fully connected layers.
        self.fc1 = nn.Conv2d(in_planes, in_planes // ratio, 1, bias=False)
        self.relu1 = nn.ReLU()
        self.fc2 = nn.Conv2d(in_planes // ratio, in_planes, 1, bias=False)

        self.sigmoid = nn.Sigmoid()

    def forward(self, x):
        feat = x
        avg_out = self.fc2(self.relu1(self.fc1(self.avg_pool(x))))
        max_out = self.fc2(self.relu1(self.fc1(self.max_pool(x))))
        out = avg_out + max_out
        # Scale the input features by the per-channel weights
        return self.sigmoid(out) * feat


if __name__ == "__main__":
    img = torch.randn(2, 64, 512, 512)
    model = ChannelAttention(64)
    out = model(img)
    criterion = nn.L1Loss()
    loss = criterion(out, img)
    loss.backward()
    print("out shape:{}".format(out.shape))
    print('loss value:{}'.format(loss))
```
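To make the cost of the channel branch concrete, the following is a minimal sketch, not part of the original repository, that rebuilds only the shared bottleneck and inspects the attention weights before they are multiplied onto the features. The names `shared_mlp`, `avg_desc`, `max_desc` and `weights` are illustrative, and the 32x32 input size is an arbitrary assumption.

```python
import torch
import torch.nn as nn
import torch.nn.functional as F

channels, ratio = 64, 16  # same channel count and reduction ratio as the demo above

# Shared bottleneck written as two bias-free 1x1 convolutions
shared_mlp = nn.Sequential(
    nn.Conv2d(channels, channels // ratio, kernel_size=1, bias=False),
    nn.ReLU(),
    nn.Conv2d(channels // ratio, channels, kernel_size=1, bias=False),
)

x = torch.randn(2, channels, 32, 32)
avg_desc = F.adaptive_avg_pool2d(x, 1)  # (2, 64, 1, 1)
max_desc = F.adaptive_max_pool2d(x, 1)  # (2, 64, 1, 1)

# Both descriptors pass through the same layers, then are summed and squashed
weights = torch.sigmoid(shared_mlp(avg_desc) + shared_mlp(max_desc))

num_params = sum(p.numel() for p in shared_mlp.parameters())
print(weights.shape)  # torch.Size([2, 64, 1, 1])
print(num_params)     # 512 = 2 * 64 * (64 // 16)
```

Because the two pooling branches share the same layers, the parameter count is 2 * C * (C / r) regardless of the spatial size of the input.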

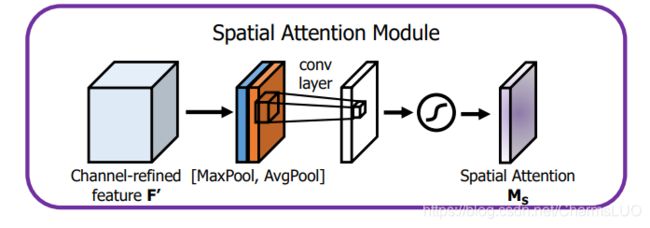

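Spatial attention module

1. The input is the feature map that has already passed through the channel attention module. Average pooling and max pooling are used again, but this time they operate along the channel dimension: at every spatial position, all input channels are pooled down to 2 real numbers, so an input of shape (h, w, c) yields two feature maps of shape (h, w, 1).

2. The two maps are concatenated and convolved with a 7x7 kernel, which produces a new feature map of shape (h, w, 1); a sigmoid turns it into weights.

3. Finally the same Scale operation is applied: the output of the channel attention module is multiplied by the new feature map, giving features that have been adjusted by both attentions.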
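Written as a formula, in a sketch that follows the paper's notation, with $F'$ the output of the channel attention module, $f^{7 \times 7}$ a convolution with a 7x7 kernel, $[\,\cdot\,;\,\cdot\,]$ concatenation along the channel axis, and both pooling operations taken across channels:

$$
M_s(F') = \sigma\big(f^{7 \times 7}([\mathrm{AvgPool}(F');\ \mathrm{MaxPool}(F')])\big), \qquad F'' = M_s(F') \otimes F'
$$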

Let's look at the code for this part:

```python
import torch
import torch.nn as nn


################################
#    3x3 convolution helper    #
################################
def conv3x3(in_planes, out_planes, stride=1):
    "3x3 convolution with padding"
    return nn.Conv2d(in_planes, out_planes, kernel_size=3, stride=stride,
                     padding=1, bias=False)


###################################
#     Spatial attention module    #
###################################
class SpatialAttention(nn.Module):
    """
    1. The input is the feature map that has passed through the channel
       attention module. Average and max pooling are used again, but along
       the channel dimension: all input channels are pooled down to 2 real
       numbers per position, so an (h*w*c) input yields two (h*w*1) maps.
    2. A 7*7 convolution over the two maps produces a new (h*w*1) map.
    3. The same Scale operation follows: the incoming features are
       multiplied by the new map, giving features adjusted by both
       attentions.
    """

    def __init__(self, kernel_size=7):
        super(SpatialAttention, self).__init__()
        assert kernel_size in (3, 7), 'kernel size must be 3 or 7'
        # "Same" padding so the attention map keeps the input's h and w
        padding = 3 if kernel_size == 7 else 1

        self.conv1 = nn.Conv2d(2, 1, kernel_size, padding=padding, bias=False)
        self.sigmoid = nn.Sigmoid()

    def forward(self, x):
        feat = x
        avg_out = torch.mean(x, dim=1, keepdim=True)
        # torch.max returns (values, indices); [0] keeps the values only
        max_out = torch.max(x, dim=1, keepdim=True)[0]
        x = torch.cat([avg_out, max_out], dim=1)
        x = self.conv1(x)
        # Scale the input features by the per-position weights
        return self.sigmoid(x) * feat


if __name__ == "__main__":
    img = torch.randn(2, 64, 512, 512)
    model = SpatialAttention()
    out = model(img)
    criterion = nn.L1Loss()
    loss = criterion(out, img)
    loss.backward()
    print("out shape:{}".format(out.shape))
    print('loss value:{}'.format(loss))
```
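The shape bookkeeping of the spatial branch is easy to get wrong, so here is a small sketch, separate from the original code, that traces each intermediate tensor. The variable names and the 32x32 input are assumptions chosen for illustration.

```python
import torch
import torch.nn as nn

x = torch.randn(2, 64, 32, 32)  # (batch, channels, h, w)

# Pool across the channel axis (dim=1): every position keeps one mean and one max
avg_map = torch.mean(x, dim=1, keepdim=True)    # (2, 1, 32, 32)
max_map, _ = torch.max(x, dim=1, keepdim=True)  # values only; indices discarded
stacked = torch.cat([avg_map, max_map], dim=1)  # (2, 2, 32, 32)

# 7x7 convolution with padding 3 keeps h and w unchanged
conv = nn.Conv2d(2, 1, kernel_size=7, padding=3, bias=False)
attn = torch.sigmoid(conv(stacked))             # (2, 1, 32, 32)

refined = attn * x  # broadcast over the 64 channels
num_params = sum(p.numel() for p in conv.parameters())

print(stacked.shape)  # torch.Size([2, 2, 32, 32])
print(attn.shape)     # torch.Size([2, 1, 32, 32])
print(num_params)     # 98 = 2 * 1 * 7 * 7
```

The spatial branch is therefore very cheap: its parameter count depends only on the kernel size, not on the number of input channels.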

II. Code Analysis
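The listing in this section places the two modules inside ResNet residual blocks, after the last batch normalization and before the residual addition. Before reading it, it may help to see the two modules chained in isolation. The following is a minimal sketch under stated assumptions: the class names `ChannelGate`, `SpatialGate` and `CBAM` are hypothetical and do not appear in the repository, and unlike the classes in this post, the gates here return only the attention weights and leave the multiplication to the wrapper.

```python
import torch
import torch.nn as nn
import torch.nn.functional as F


class ChannelGate(nn.Module):
    """Hypothetical compact channel attention; returns weights of shape (N, C, 1, 1)."""

    def __init__(self, channels, ratio=16):
        super().__init__()
        self.mlp = nn.Sequential(
            nn.Conv2d(channels, channels // ratio, 1, bias=False),
            nn.ReLU(),
            nn.Conv2d(channels // ratio, channels, 1, bias=False),
        )

    def forward(self, x):
        avg = F.adaptive_avg_pool2d(x, 1)
        mx = F.adaptive_max_pool2d(x, 1)
        return torch.sigmoid(self.mlp(avg) + self.mlp(mx))


class SpatialGate(nn.Module):
    """Hypothetical compact spatial attention; returns weights of shape (N, 1, H, W)."""

    def __init__(self, kernel_size=7):
        super().__init__()
        self.conv = nn.Conv2d(2, 1, kernel_size, padding=kernel_size // 2, bias=False)

    def forward(self, x):
        avg = torch.mean(x, dim=1, keepdim=True)
        mx, _ = torch.max(x, dim=1, keepdim=True)
        return torch.sigmoid(self.conv(torch.cat([avg, mx], dim=1)))


class CBAM(nn.Module):
    """Channel attention first, then spatial attention."""

    def __init__(self, channels, ratio=16, kernel_size=7):
        super().__init__()
        self.channel_gate = ChannelGate(channels, ratio)
        self.spatial_gate = SpatialGate(kernel_size)

    def forward(self, x):
        x = self.channel_gate(x) * x  # reweight channels
        x = self.spatial_gate(x) * x  # reweight spatial positions
        return x


cbam = CBAM(64)
features = torch.randn(2, 64, 56, 56)
refined = cbam(features)
print(refined.shape)  # torch.Size([2, 64, 56, 56])
```

Since the output has the same shape as the input, a module like this can be dropped into an existing block without changing any surrounding layer.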

```python
import torch
import torch.nn as nn
import math
import torch.utils.model_zoo as model_zoo

__all__ = ['ResNet', 'resnet18_cbam',
           'resnet34_cbam', 'resnet50_cbam',
           'resnet101_cbam', 'resnet152_cbam']

model_urls = {
    'resnet18': 'https://download.pytorch.org/models/resnet18-5c106cde.pth',
    'resnet34': 'https://download.pytorch.org/models/resnet34-333f7ec4.pth',
    'resnet50': 'https://download.pytorch.org/models/resnet50-19c8e357.pth',
    'resnet101': 'https://download.pytorch.org/models/resnet101-5d3b4d8f.pth',
    'resnet152': 'https://download.pytorch.org/models/resnet152-b121ed2d.pth',
}


################################
#    3x3 convolution helper    #
################################
def conv3x3(in_planes, out_planes, stride=1):
    "3x3 convolution with padding"
    return nn.Conv2d(in_planes, out_planes, kernel_size=3, stride=stride,
                     padding=1, bias=False)


###############################
#   Channel attention module  #
###############################
class ChannelAttention(nn.Module):
    """
    1. In the S step, CBAM applies both global average pooling and global max
       pooling; two different poolings give richer high-level descriptors.
    2. In the E step, two fully connected layers and their activation model
       the dependencies between channels; the two outputs are merged into
       per-channel weights.
    3. The weights are multiplied channel by channel onto the original
       features, recalibrating them along the channel dimension.
    """

    def __init__(self, in_planes, ratio=16):
        super(ChannelAttention, self).__init__()
        # Two branches: 1. adaptive average pooling, 2. adaptive max pooling
        self.avg_pool = nn.AdaptiveAvgPool2d(1)  # -> (batch, in_planes, 1, 1)
        self.max_pool = nn.AdaptiveMaxPool2d(1)  # -> (batch, in_planes, 1, 1)

        # Both branches go through a ResNet-style bottleneck: wide at both
        # ends, narrow in the middle. The 1x1 convolutions act as the two
        # fully connected layers.
        self.fc1 = nn.Conv2d(in_planes, in_planes // ratio, 1, bias=False)
        self.relu1 = nn.ReLU()
        self.fc2 = nn.Conv2d(in_planes // ratio, in_planes, 1, bias=False)

        self.sigmoid = nn.Sigmoid()

    def forward(self, x):
        feat = x
        avg_out = self.fc2(self.relu1(self.fc1(self.avg_pool(x))))
        max_out = self.fc2(self.relu1(self.fc1(self.max_pool(x))))
        out = avg_out + max_out
        # Scale the input features by the per-channel weights
        return self.sigmoid(out) * feat


###################################
#     Spatial attention module    #
###################################
class SpatialAttention(nn.Module):
    """
    1. The input is the feature map that has passed through the channel
       attention module. Average and max pooling are used again, but along
       the channel dimension: all input channels are pooled down to 2 real
       numbers per position, so an (h*w*c) input yields two (h*w*1) maps.
    2. A 7*7 convolution over the two maps produces a new (h*w*1) map.
    3. The same Scale operation follows: the incoming features are
       multiplied by the new map, giving features adjusted by both
       attentions.
    """

    def __init__(self, kernel_size=7):
        super(SpatialAttention, self).__init__()
        assert kernel_size in (3, 7), 'kernel size must be 3 or 7'
        # "Same" padding so the attention map keeps the input's h and w
        padding = 3 if kernel_size == 7 else 1

        self.conv1 = nn.Conv2d(2, 1, kernel_size, padding=padding, bias=False)
        self.sigmoid = nn.Sigmoid()

    def forward(self, x):
        feat = x
        avg_out = torch.mean(x, dim=1, keepdim=True)
        # torch.max returns (values, indices); [0] keeps the values only
        max_out = torch.max(x, dim=1, keepdim=True)[0]
        x = torch.cat([avg_out, max_out], dim=1)
        x = self.conv1(x)
        # Scale the input features by the per-position weights
        return self.sigmoid(x) * feat
```

```python
#################################################
#  Basic block with channel + spatial attention #
#################################################
class BasicBlock(nn.Module):
    expansion = 1

    def __init__(self, inplanes, planes, stride=1, downsample=None):
        super(BasicBlock, self).__init__()
        self.conv1 = conv3x3(inplanes, planes, stride)
        self.bn1 = nn.BatchNorm2d(planes)
        self.relu = nn.ReLU(inplace=True)
        self.conv2 = conv3x3(planes, planes)
        self.bn2 = nn.BatchNorm2d(planes)

        self.ca = ChannelAttention(planes)
        self.sa = SpatialAttention()

        self.downsample = downsample
        self.stride = stride

    def forward(self, x):
        residual = x

        out = self.conv1(x)
        out = self.bn1(out)
        out = self.relu(out)

        out = self.conv2(out)
        out = self.bn2(out)

        # Channel attention first, then spatial attention,
        # both applied before the residual addition
        out = self.ca(out)
        out = self.sa(out)

        if self.downsample is not None:
            residual = self.downsample(x)

        out += residual
        out = self.relu(out)

        return out


###################################################
#  Bottleneck block with channel + spatial        #
#  attention                                      #
###################################################
class Bottleneck(nn.Module):
    expansion = 4  # output channels = planes * expansion

    def __init__(self, inplanes, planes, stride=1, downsample=None):
        super(Bottleneck, self).__init__()
        self.conv1 = nn.Conv2d(inplanes, planes, kernel_size=1, bias=False)
        self.bn1 = nn.BatchNorm2d(planes)
        self.conv2 = nn.Conv2d(planes, planes, kernel_size=3, stride=stride,
                               padding=1, bias=False)
        self.bn2 = nn.BatchNorm2d(planes)
        self.conv3 = nn.Conv2d(planes, planes * 4, kernel_size=1, bias=False)
        self.bn3 = nn.BatchNorm2d(planes * 4)
        self.relu = nn.ReLU(inplace=True)

        self.ca = ChannelAttention(planes * 4)
        self.sa = SpatialAttention()

        self.downsample = downsample
        self.stride = stride

    def forward(self, x):
        residual = x

        out = self.conv1(x)
        out = self.bn1(out)
        out = self.relu(out)

        out = self.conv2(out)
        out = self.bn2(out)
        out = self.relu(out)

        out = self.conv3(out)
        out = self.bn3(out)

        # Channel attention first, then spatial attention,
        # both applied before the residual addition
        out = self.ca(out)
        out = self.sa(out)

        if self.downsample is not None:
            residual = self.downsample(x)

        out += residual
        out = self.relu(out)

        return out
```

```python
class ResNet(nn.Module):

    def __init__(self, block, layers, num_classes=1000):
        self.inplanes = 64
        super(ResNet, self).__init__()
        self.conv1 = nn.Conv2d(3, 64, kernel_size=7, stride=2, padding=3,
                               bias=False)
        self.bn1 = nn.BatchNorm2d(64)
        self.relu = nn.ReLU(inplace=True)
        self.maxpool = nn.MaxPool2d(kernel_size=3, stride=2, padding=1)
        self.layer1 = self._make_layer(block, 64, layers[0])
        self.layer2 = self._make_layer(block, 128, layers[1], stride=2)
        self.layer3 = self._make_layer(block, 256, layers[2], stride=2)
        self.layer4 = self._make_layer(block, 512, layers[3], stride=2)
        # Fixed 7x7 pooling: expects a 7x7 final feature map (224x224 input)
        self.avgpool = nn.AvgPool2d(7, stride=1)
        self.fc = nn.Linear(512 * block.expansion, num_classes)

        # He-style initialization for convolutions; identity-like batch norm
        for m in self.modules():
            if isinstance(m, nn.Conv2d):
                n = m.kernel_size[0] * m.kernel_size[1] * m.out_channels
                m.weight.data.normal_(0, math.sqrt(2. / n))
            elif isinstance(m, nn.BatchNorm2d):
                m.weight.data.fill_(1)
                m.bias.data.zero_()

    def _make_layer(self, block, planes, blocks, stride=1):
        downsample = None
        # Project the shortcut when the spatial size or channel count changes
        if stride != 1 or self.inplanes != planes * block.expansion:
            downsample = nn.Sequential(
                nn.Conv2d(self.inplanes, planes * block.expansion,
                          kernel_size=1, stride=stride, bias=False),
                nn.BatchNorm2d(planes * block.expansion),
            )

        layers = []
        layers.append(block(self.inplanes, planes, stride, downsample))
        self.inplanes = planes * block.expansion
        for i in range(1, blocks):
            layers.append(block(self.inplanes, planes))

        return nn.Sequential(*layers)

    def forward(self, x):
        x = self.conv1(x)
        x = self.bn1(x)
        x = self.relu(x)
        x = self.maxpool(x)

        x = self.layer1(x)
        x = self.layer2(x)
        x = self.layer3(x)
        x = self.layer4(x)

        x = self.avgpool(x)
        x = x.view(x.size(0), -1)
        x = self.fc(x)

        return x
```

```python
def resnet18_cbam(pretrained=False, **kwargs):
    """Constructs a ResNet-18 model with CBAM.

    Args:
        pretrained (bool): If True, loads ResNet weights pre-trained on
            ImageNet; the CBAM layers keep their fresh initialization
    """
    model = ResNet(BasicBlock, [2, 2, 2, 2], **kwargs)
    if pretrained:
        pretrained_state_dict = model_zoo.load_url(model_urls['resnet18'])
        # Start from the model's own weights, then overwrite every entry
        # that also exists in the pretrained checkpoint
        now_state_dict = model.state_dict()
        now_state_dict.update(pretrained_state_dict)
        model.load_state_dict(now_state_dict)
    return model


def resnet34_cbam(pretrained=False, **kwargs):
    """Constructs a ResNet-34 model with CBAM.

    Args:
        pretrained (bool): If True, loads ResNet weights pre-trained on
            ImageNet; the CBAM layers keep their fresh initialization
    """
    model = ResNet(BasicBlock, [3, 4, 6, 3], **kwargs)
    if pretrained:
        pretrained_state_dict = model_zoo.load_url(model_urls['resnet34'])
        now_state_dict = model.state_dict()
        now_state_dict.update(pretrained_state_dict)
        model.load_state_dict(now_state_dict)
    return model


def resnet50_cbam(pretrained=False, **kwargs):
    """Constructs a ResNet-50 model with CBAM.

    Args:
        pretrained (bool): If True, loads ResNet weights pre-trained on
            ImageNet; the CBAM layers keep their fresh initialization
    """
    model = ResNet(Bottleneck, [3, 4, 6, 3], **kwargs)
    if pretrained:
        pretrained_state_dict = model_zoo.load_url(model_urls['resnet50'])
        now_state_dict = model.state_dict()
        now_state_dict.update(pretrained_state_dict)
        model.load_state_dict(now_state_dict)
    return model


def resnet101_cbam(pretrained=False, **kwargs):
    """Constructs a ResNet-101 model with CBAM.

    Args:
        pretrained (bool): If True, loads ResNet weights pre-trained on
            ImageNet; the CBAM layers keep their fresh initialization
    """
    model = ResNet(Bottleneck, [3, 4, 23, 3], **kwargs)
    if pretrained:
        pretrained_state_dict = model_zoo.load_url(model_urls['resnet101'])
        now_state_dict = model.state_dict()
        now_state_dict.update(pretrained_state_dict)
        model.load_state_dict(now_state_dict)
    return model


def resnet152_cbam(pretrained=False, **kwargs):
    """Constructs a ResNet-152 model with CBAM.

    Args:
        pretrained (bool): If True, loads ResNet weights pre-trained on
            ImageNet; the CBAM layers keep their fresh initialization
    """
    model = ResNet(Bottleneck, [3, 8, 36, 3], **kwargs)
    if pretrained:
        pretrained_state_dict = model_zoo.load_url(model_urls['resnet152'])
        now_state_dict = model.state_dict()
        now_state_dict.update(pretrained_state_dict)
        model.load_state_dict(now_state_dict)
    return model
```
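The `pretrained` branch of each constructor merges two state dicts: the model's own, which includes the attention layers, and the plain ResNet checkpoint, which does not. The toy sketch below reproduces that pattern without downloading anything. The classes `Plain` and `WithGate` and the layer name `gate` are hypothetical stand-ins for the backbone and its CBAM variant.

```python
import torch
import torch.nn as nn


class Plain(nn.Module):
    """Stand-in for the pretrained backbone."""

    def __init__(self):
        super().__init__()
        self.conv = nn.Conv2d(3, 8, 3, padding=1, bias=False)


class WithGate(nn.Module):
    """Stand-in for the CBAM variant: same conv plus one extra layer."""

    def __init__(self):
        super().__init__()
        self.conv = nn.Conv2d(3, 8, 3, padding=1, bias=False)
        self.gate = nn.Conv2d(8, 8, 1, bias=False)  # has no pretrained weights


pretrained_state = Plain().state_dict()  # plays the role of the downloaded checkpoint
model = WithGate()
gate_before = model.gate.weight.detach().clone()

# Same pattern as in the constructors: merge, then load the complete dict
merged = model.state_dict()
merged.update(pretrained_state)
model.load_state_dict(merged)

print(torch.equal(model.conv.weight, pretrained_state['conv.weight']))  # True
print(torch.equal(model.gate.weight, gate_before))                      # True

# An alternative with the same effect: a non-strict load reports what was skipped
result = model.load_state_dict(pretrained_state, strict=False)
print(result.missing_keys)  # ['gate.weight']
```

Either way, the layers shared with the checkpoint receive the pretrained weights, while the attention layers start from their random initialization and have to be learned during fine-tuning.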