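Building a Graph Convolutional Network (GCN) in PyTorch to Classify and Predict Paper Categories: A Hands-On Project (Source Code and Dataset Included)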

If you need the dataset and source code, please like, follow, and bookmark this post, then leave a comment.

1. Dataset Overview

We use the Cora dataset.

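The dataset contains 2,708 samples. Each sample is a scientific paper, and the samples fall into 7 classes: 1) case based; 2) genetic algorithms; 3) neural networks; 4) probabilistic methods; 5) reinforcement learning; 6) rule learning; 7) theory.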

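Each paper is represented by a 1,433-dimensional word vector, so each sample has 1,433 features. Each element of the vector corresponds to one word from a fixed 1,433-word dictionary and takes only the values 0 or 1: 0 means the word does not appear in the paper, and 1 means it does.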
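To make the 0/1 encoding concrete, here is a toy sketch that uses a hypothetical 6-word dictionary. The real Cora dictionary has 1,433 words and is not listed in this post, and `binary_bow` is an illustrative helper rather than part of the source code.

```python
import numpy as np

# Hypothetical toy dictionary; Cora's real dictionary has 1,433 words
vocab = ["graph", "neural", "network", "genetic", "rule", "theory"]
word_to_index = {w: i for i, w in enumerate(vocab)}


def binary_bow(words, word_to_index):
    """Return a 0/1 vector: 1 if the dictionary word occurs in the paper."""
    vec = np.zeros(len(word_to_index), dtype=np.int8)
    for w in words:
        i = word_to_index.get(w)
        if i is not None:  # words outside the dictionary are ignored
            vec[i] = 1     # repeated words still give 1, not a count
    return vec


paper = ["graph", "neural", "network", "graph", "learning"]
print(binary_bow(paper, word_to_index))
```

The vector records only whether each word is present, not how often it appears. That is why the repeated "graph" still maps to a single 1.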

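Every paper cites at least one other paper or is cited by at least one, so every sample is linked to at least one other sample. If the samples are treated as nodes of a graph, the graph has no isolated nodes (every node has degree at least 1). This is a weaker property than the whole graph being connected, and Cora in fact consists of several connected components.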
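SciPy can check the difference between "no isolated nodes" and "connected" directly. The sketch below uses a made-up 4-paper graph, not Cora itself. Every node in it has a neighbour, yet the graph still splits into two components.

```python
import numpy as np
import scipy.sparse as sp
from scipy.sparse.csgraph import connected_components

# Made-up citation graph: 4 papers, links 0-1 and 2-3
rows = np.array([0, 2])
cols = np.array([1, 3])
n = 4
adj = sp.coo_matrix((np.ones(len(rows)), (rows, cols)), shape=(n, n))
adj = adj + adj.T  # treat citations as undirected links

# A node is isolated if it has no links at all
degree = np.asarray(adj.sum(axis=1)).ravel()
n_isolated = int((degree == 0).sum())

# Count groups of nodes that are reachable from one another
n_components, component_labels = connected_components(adj, directed=False)

print("isolated nodes:", n_isolated)
print("connected components:", n_components)
```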

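The dataset has two main files, cora.cites and cora.content. cora.content holds the details of all 2,708 samples, one paper per line, in the format

<paper id> <1,433 features, each 0 or 1> <paper class (label)>

cora.cites holds the citation graph, one link per line, in the format <ID of cited paper> <ID of citing paper>.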
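Both line formats can be parsed with a plain whitespace split. The lines in this sketch are made up. The content line is shortened to 5 features (real lines have 1,433), and the label string is only an example class name.

```python
import numpy as np

# Made-up cora.content line: <paper id> <features...> <label>
line = "12345\t0\t1\t0\t0\t1\tNeural_Networks"

fields = line.split()          # splits on tabs or spaces
paper_id = int(fields[0])      # first column: paper id
features = np.array(fields[1:-1], dtype=np.float32)  # middle columns: 0/1 features
label = fields[-1]             # last column: class name

# Made-up cora.cites line: <ID of cited paper> <ID of citing paper>
cites_line = "35\t1033"
cited_id, citing_id = map(int, cites_line.split())

print(paper_id, features, label)
print(cited_id, "is cited by", citing_id)
```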

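In short, if the papers are treated as the nodes of a graph, the citation links are its edges, and the node information together with the citation links forms the graph data. In this experiment we use this information to predict which class each paper belongs to, which makes this a node classification task.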
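Turning the two files into graph data means mapping raw paper ids to consecutive row indices and then filling an adjacency matrix from the citation pairs. The dense NumPy sketch below does this on made-up ids. It only illustrates the idea; `load_data` in utils.py does the same job with SciPy sparse matrices.

```python
import numpy as np

# Made-up paper ids (file order in cora.content) and
# citation pairs (cited, citing) as in cora.cites
paper_ids = [1033, 35, 103482]
citations = [(35, 1033), (35, 103482)]

# Each paper gets the row index of its position in the file
id_to_row = {pid: i for i, pid in enumerate(paper_ids)}

n = len(paper_ids)
adj = np.zeros((n, n), dtype=np.float32)
for cited, citing in citations:
    i, j = id_to_row[cited], id_to_row[citing]
    # Ignore citation direction: one edge links both papers
    adj[i, j] = adj[j, i] = 1.0

print(adj)
```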

2. Introduction to Graph Neural Networks and Graph Convolutional Networks

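Graph Neural Networks (GNNs) are a newer family of AI models. They cast a real-world problem as nodes connected in a graph that pass messages to one another. By modeling the dependencies between nodes, GNNs extract latent information from non-Euclidean data that conventional neural networks cannot analyze. They are widely used, and play an important role, in natural language processing, computer vision, biochemistry, and other fields.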

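Graph Convolutional Networks (GCNs) are currently the mainstream branch of GNNs, and classification is one of the most common tasks in machine learning. Here we use a GCN for classification. Along the way we look at how graph neural networks work, how a GCN is built and implemented, and how to apply it to a classification task.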

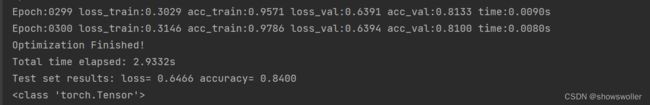

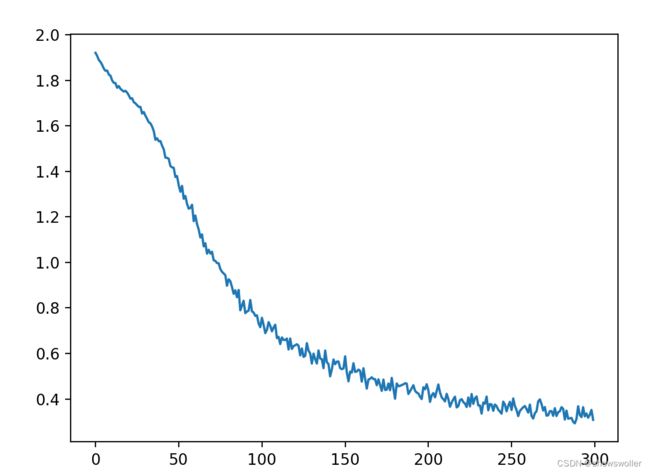

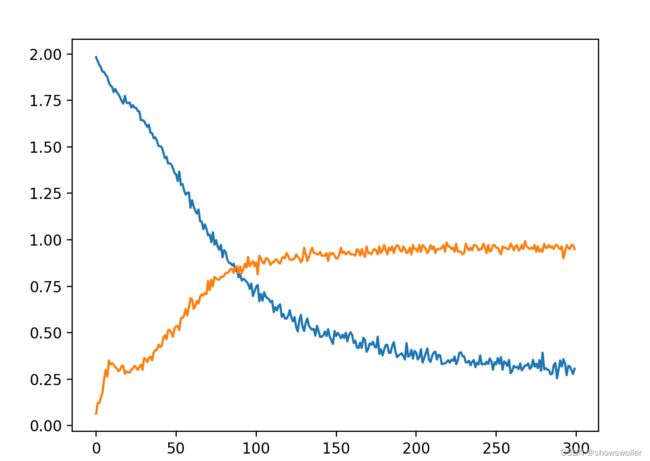

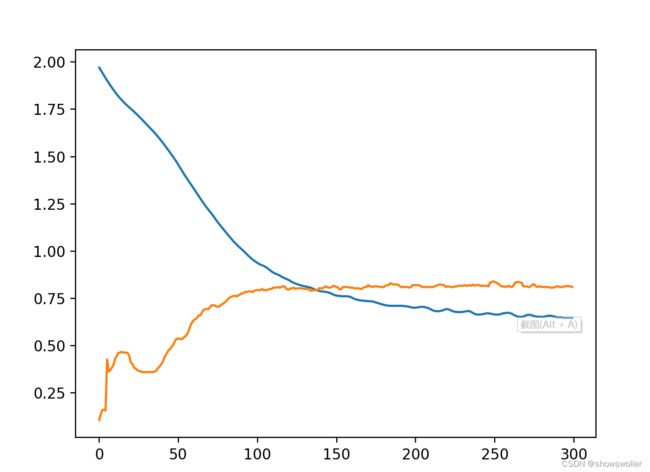
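For reference, the GCN paper cited in the layer code (Kipf & Welling, https://arxiv.org/abs/1609.02907) gives the layer-wise propagation rule H(l+1) = σ(D̃^(-1/2) Ã D̃^(-1/2) H(l) W(l)). Here Ã = A + I is the adjacency matrix with self-loops, D̃ is the diagonal degree matrix of Ã, H(l) holds the node features at layer l, and W(l) is that layer's weight matrix. The NumPy sketch below implements one such layer, with ReLU as σ, on a made-up 3-node path graph. `gcn_layer` is an illustrative name and is not part of the post's code.

```python
import numpy as np


def gcn_layer(adj, h, w):
    """One GCN layer: ReLU(D^-1/2 (A + I) D^-1/2 H W), symmetric normalization."""
    a_tilde = adj + np.eye(adj.shape[0])        # add self-loops: A + I
    deg = a_tilde.sum(axis=1)                   # node degrees in A + I (all >= 1)
    d_inv_sqrt = np.diag(1.0 / np.sqrt(deg))    # D^-1/2
    a_hat = d_inv_sqrt @ a_tilde @ d_inv_sqrt   # normalized adjacency
    return np.maximum(a_hat @ h @ w, 0.0)       # aggregate, transform, ReLU


# Path graph 0 - 1 - 2 with 2 input features per node
adj = np.array([[0, 1, 0],
                [1, 0, 1],
                [0, 1, 0]], dtype=float)
h = np.array([[1.0, 0.0],
              [0.0, 1.0],
              [1.0, 1.0]])
w = np.eye(2)  # identity weights, so the output shows only the aggregation

out = gcn_layer(adj, h, w)
print(out.round(3))
```

The post's `utils.normalize` uses row normalization D̃^(-1) Ã instead of the symmetric form, so each node takes an equal-weight average over itself and its neighbours. Both variants are used in practice.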

3. Results

As the figure shows, the loss falls and the accuracy rises as training proceeds, and both converge at roughly 200 epochs.

4. Selected Source Code

The main training and test script:

```python
from __future__ import division
from __future__ import print_function

import os
# Work around the "duplicate OpenMP runtime" error (OMP Error #15) that some
# conda/MKL setups raise when torch and matplotlib are loaded together
os.environ["KMP_DUPLICATE_LIB_OK"] = "TRUE"

import time
import argparse

import numpy as np
import torch
import torch.nn.functional as F
import torch.optim as optim
import matplotlib.pyplot as plt

from utils import load_data, accuracy
from models import GCN

# Training settings
parser = argparse.ArgumentParser()
parser.add_argument('--no-cuda', action='store_true', default=False,
                    help='Disables CUDA training.')
parser.add_argument('--fastmode', action='store_true', default=False,
                    help='Validate during training pass.')
parser.add_argument('--seed', type=int, default=42, help='Random seed.')
parser.add_argument('--epochs', type=int, default=300,
                    help='Number of epochs to train.')
parser.add_argument('--lr', type=float, default=0.01,
                    help='Initial learning rate.')
parser.add_argument('--weight_decay', type=float, default=5e-4,
                    help='Weight decay (L2 loss on parameters).')
parser.add_argument('--hidden', type=int, default=16,
                    help='Number of hidden units.')
parser.add_argument('--dropout', type=float, default=0.5,
                    help='Dropout rate (1 - keep probability).')

args = parser.parse_args()
args.cuda = not args.no_cuda and torch.cuda.is_available()

# Seed the random number generators so runs are reproducible
np.random.seed(args.seed)
torch.manual_seed(args.seed)
if args.cuda:
    torch.cuda.manual_seed(args.seed)

# Load data
adj, features, labels, idx_train, idx_val, idx_test = load_data()

# Model and optimizer
model = GCN(nfeat=features.shape[1],
            nhid=args.hidden,
            nclass=labels.max().item() + 1,
            dropout=args.dropout)
optimizer = optim.Adam(model.parameters(),
                       lr=args.lr, weight_decay=args.weight_decay)

if args.cuda:
    model.cuda()
    features = features.cuda()
    adj = adj.cuda()
    labels = labels.cuda()
    idx_train = idx_train.cuda()
    idx_val = idx_val.cuda()
    idx_test = idx_test.cuda()

# Per-epoch training/validation loss and accuracy, kept for plotting
Loss_list = []
Accuracy_list = []
lossval = []
accval = []


def train(epoch):
    t = time.time()
    model.train()
    optimizer.zero_grad()
    # Forward pass over the whole graph; the loss uses training nodes only
    output = model(features, adj)
    loss_train = F.nll_loss(output[idx_train], labels[idx_train])
    acc_train = accuracy(output[idx_train], labels[idx_train])
    loss_train.backward()
    optimizer.step()

    if not args.fastmode:
        # Re-run the forward pass in eval mode so that dropout is
        # disabled while validation performance is measured
        model.eval()
        output = model(features, adj)

    loss_val = F.nll_loss(output[idx_val], labels[idx_val])
    acc_val = accuracy(output[idx_val], labels[idx_val])
    print('Epoch:{:04d}'.format(epoch + 1),
          'loss_train:{:.4f}'.format(loss_train.item()),
          'acc_train:{:.4f}'.format(acc_train.item()),
          'loss_val:{:.4f}'.format(loss_val.item()),
          'acc_val:{:.4f}'.format(acc_val.item()),
          'time:{:.4f}s'.format(time.time() - t))

    Loss_list.append(loss_train.item())
    Accuracy_list.append(acc_train.item())
    lossval.append(loss_val.item())
    accval.append(acc_val.item())


def test():
    model.eval()
    output = model(features, adj)
    loss_test = F.nll_loss(output[idx_test], labels[idx_test])
    acc_test = accuracy(output[idx_test], labels[idx_test])
    print("Test set results:",
          "loss= {:.4f}".format(loss_test.item()),
          "accuracy= {:.4f}".format(acc_test.item()))


# Train model
t_total = time.time()
for epoch in range(args.epochs):
    train(epoch)
print("Optimization Finished!")
print("Total time elapsed: {:.4f}s".format(time.time() - t_total))

# Plot the validation loss and accuracy curves
# (plot Loss_list / Accuracy_list instead to see the training curves)
plt.plot(range(len(lossval)), lossval, label='loss_val')
plt.plot(range(len(accval)), accval, label='acc_val')
plt.xlabel('epoch')
plt.legend()
plt.show()

# Testing
test()
```
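`train()` feeds the entire graph through the model but computes the loss only on the `idx_train` nodes. Unlabeled nodes still shape the predictions through the adjacency matrix, but their labels are never used for the gradient. The toy sketch below uses random scores in place of `model(features, adj)` (not Cora data). It checks that `F.nll_loss` on the indexed rows equals the mean negative log-probability of the true classes.

```python
import torch
import torch.nn.functional as F

torch.manual_seed(0)

# Toy setup: 6 nodes, 3 classes; only nodes 0 and 1 are used for training
log_probs = F.log_softmax(torch.randn(6, 3), dim=1)  # stands in for model output
labels = torch.tensor([0, 2, 1, 1, 0, 2])
idx_train = torch.tensor([0, 1])

# Same pattern as train(): score every node, take the loss on idx_train only
loss = F.nll_loss(log_probs[idx_train], labels[idx_train])

# nll_loss = mean of -log p(true class) over the selected nodes
manual = -(log_probs[0, 0] + log_probs[1, 2]) / 2
print(loss.item(), manual.item())
```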

The model class (models.py):

```python
import torch.nn as nn
import torch.nn.functional as F

from layers import GraphConvolution


class GCN(nn.Module):
    def __init__(self, nfeat, nhid, nclass, dropout):
        super(GCN, self).__init__()
        self.gc1 = GraphConvolution(nfeat, nhid)   # input features -> hidden units
        self.gc2 = GraphConvolution(nhid, nclass)  # hidden units -> class scores
        self.dropout = dropout

    def forward(self, x, adj):
        x = F.relu(self.gc1(x, adj))
        x = F.dropout(x, self.dropout, training=self.training)
        x = self.gc2(x, adj)
        # Per-class log-probabilities, paired with F.nll_loss during training
        return F.log_softmax(x, dim=1)
```
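With the script's default `nhid=16`, plus Cora's 1,433 features and 7 classes (`labels.max().item() + 1`), the model is very small. Each `GraphConvolution` holds a weight of shape (in, out) and a bias of shape (out,), which is the same parameter count as `nn.Linear(in, out)`. The sketch below therefore counts parameters with `nn.Linear` as a stand-in and does not import the post's own modules.

```python
import torch.nn as nn

# Stand-in for the two GraphConvolution layers' trainable parameters.
# nn.Linear stores its weight as (out, in) rather than (in, out),
# which does not change the number of parameters.
nfeat, nhid, nclass = 1433, 16, 7
stand_in = nn.ModuleList([nn.Linear(nfeat, nhid), nn.Linear(nhid, nclass)])

n_params = sum(p.numel() for p in stand_in.parameters())
print(n_params)  # (1433*16 + 16) + (16*7 + 7) = 22944 + 119 = 23063
```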

The layer class (layers.py):

```python
import math

import torch
from torch.nn.parameter import Parameter
from torch.nn.modules.module import Module


class GraphConvolution(Module):
    """
    Simple GCN layer, similar to https://arxiv.org/abs/1609.02907
    """

    def __init__(self, in_features, out_features, bias=True):
        super(GraphConvolution, self).__init__()
        self.in_features = in_features
        self.out_features = out_features
        self.weight = Parameter(torch.FloatTensor(in_features, out_features))
        if bias:
            self.bias = Parameter(torch.FloatTensor(out_features))
        else:
            self.register_parameter('bias', None)
        self.reset_parameters()

    def reset_parameters(self):
        # Uniform init in [-1/sqrt(out_features), 1/sqrt(out_features)]
        stdv = 1. / math.sqrt(self.weight.size(1))
        self.weight.data.uniform_(-stdv, stdv)
        if self.bias is not None:
            self.bias.data.uniform_(-stdv, stdv)

    def forward(self, input, adj):
        support = torch.mm(input, self.weight)  # X W: transform node features
        output = torch.spmm(adj, support)       # A (X W): aggregate over neighbours
        if self.bias is not None:
            return output + self.bias
        else:
            return output

    def __repr__(self):
        return self.__class__.__name__ + ' (' \
               + str(self.in_features) + ' -> ' \
               + str(self.out_features) + ')'
```
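`forward` first computes `support = X W` and then aggregates with the sparse adjacency matrix through `torch.spmm`. Matrix multiplication is associative, so A(XW) = (AX)W. Computing XW first shrinks each node's features (for example from 1,433 to 16 in the first layer) before the sparse aggregation, which is typically the cheaper order. The sketch below uses random toy tensors, not Cora, to check that the sparse path matches a dense computation done in the other order.

```python
import torch

torch.manual_seed(0)

n, f_in, f_out = 5, 4, 3
x = torch.randn(n, f_in)
w = torch.randn(f_in, f_out)

# Small symmetric adjacency with self-loops, stored dense and sparse
dense_adj = torch.eye(n)
dense_adj[0, 1] = dense_adj[1, 0] = 1.0
dense_adj[3, 4] = dense_adj[4, 3] = 1.0
sparse_adj = dense_adj.to_sparse()

# Layer order: transform first (X W), then aggregate with sparse A
out_sparse = torch.spmm(sparse_adj, torch.mm(x, w))
# Dense reference using the other association order, (A X) W
out_dense = torch.mm(torch.mm(dense_adj, x), w)

print(torch.allclose(out_sparse, out_dense, atol=1e-5))
```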

The utility functions (utils.py):

```python
import numpy as np
import scipy.sparse as sp
import torch


def encode_onehot(labels):
    # sorted() keeps the class -> index mapping the same across runs;
    # iterating a set of strings can change order between Python processes
    classes = sorted(set(labels))
    classes_dict = {c: np.identity(len(classes))[i, :] for i, c in
                    enumerate(classes)}
    labels_onehot = np.array(list(map(classes_dict.get, labels)),
                             dtype=np.int32)
    return labels_onehot


def load_data(path="data/cora/", dataset="cora"):
    """Load citation network dataset (cora only for now)"""
    print('Loading {} dataset...'.format(dataset))

    idx_features_labels = np.genfromtxt("{}{}.content".format(path, dataset),
                                        dtype=np.dtype(str))
    # Convert the '0'/'1' strings to floats before building the sparse matrix
    features = sp.csr_matrix(idx_features_labels[:, 1:-1].astype(np.float32))
    labels = encode_onehot(idx_features_labels[:, -1])

    # build graph: map raw paper ids to row indices 0..N-1
    idx = np.array(idx_features_labels[:, 0], dtype=np.int32)
    idx_map = {j: i for i, j in enumerate(idx)}
    edges_unordered = np.genfromtxt("{}{}.cites".format(path, dataset),
                                    dtype=np.int32)
    edges = np.array(list(map(idx_map.get, edges_unordered.flatten())),
                     dtype=np.int32).reshape(edges_unordered.shape)
    adj = sp.coo_matrix((np.ones(edges.shape[0]), (edges[:, 0], edges[:, 1])),
                        shape=(labels.shape[0], labels.shape[0]),
                        dtype=np.float32)

    # build symmetric adjacency matrix
    adj = adj + adj.T.multiply(adj.T > adj) - adj.multiply(adj.T > adj)

    features = normalize(features)
    adj = normalize(adj + sp.eye(adj.shape[0]))  # add self-loops, then row-normalize

    idx_train = range(140)
    idx_val = range(200, 500)
    idx_test = range(500, 1500)

    features = torch.FloatTensor(np.array(features.todense()))
    labels = torch.LongTensor(np.where(labels)[1])  # one-hot -> class index
    adj = sparse_mx_to_torch_sparse_tensor(adj)

    idx_train = torch.LongTensor(idx_train)
    idx_val = torch.LongTensor(idx_val)
    idx_test = torch.LongTensor(idx_test)

    return adj, features, labels, idx_train, idx_val, idx_test


def normalize(mx):
    """Row-normalize sparse matrix"""
    rowsum = np.array(mx.sum(1))
    r_inv = np.power(rowsum, -1).flatten()
    r_inv[np.isinf(r_inv)] = 0.  # all-zero rows stay zero
    r_mat_inv = sp.diags(r_inv)
    mx = r_mat_inv.dot(mx)
    return mx


def sparse_mx_to_torch_sparse_tensor(sparse_mx):
    """Convert a scipy sparse matrix to a torch sparse tensor."""
    sparse_mx = sparse_mx.tocoo().astype(np.float32)
    indices = torch.from_numpy(
        np.vstack((sparse_mx.row, sparse_mx.col)).astype(np.int64))
    values = torch.from_numpy(sparse_mx.data)
    shape = torch.Size(sparse_mx.shape)
    # torch.sparse_coo_tensor replaces the deprecated torch.sparse.FloatTensor
    return torch.sparse_coo_tensor(indices, values, shape)
```
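The main script imports `accuracy` from utils, but the utils listing does not include it. Below is a minimal sketch that assumes it returns the fraction of correctly classified nodes as a tensor, since the main script calls `.item()` on the result. It may differ from the author's exact code.

```python
import torch


def accuracy(output, labels):
    """Fraction of nodes whose highest-scoring class equals the true label.

    output: (N, nclass) scores or log-probabilities (e.g. the GCN output)
    labels: (N,) integer class indices
    """
    preds = output.max(1)[1].type_as(labels)  # argmax over classes
    correct = preds.eq(labels).double().sum()
    return correct / len(labels)


out = torch.log(torch.tensor([[0.7, 0.2, 0.1],
                              [0.1, 0.8, 0.1],
                              [0.3, 0.3, 0.4],
                              [0.6, 0.3, 0.1]]))
labels = torch.tensor([0, 1, 1, 0])
print(accuracy(out, labels).item())  # 0.75: node 2 is predicted as class 2
```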
