NNDL Experiment 6: Convolutional Neural Networks (4), ResNet18 on MNIST

5.4 Handwritten Digit Recognition with a Residual Network

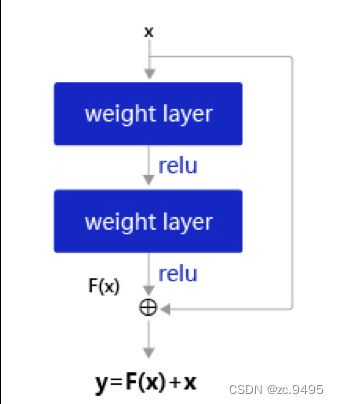

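A residual network (ResNet) adds shortcut (identity) connections around the nonlinear layers of a neural network. This mitigates the vanishing-gradient problem and makes deep networks easier to train.

Let f(x;θ) denote one or more neural layers. A residual unit adds a shortcut connection between the input and the output of f(·). In a conventional architecture, f(x;θ) is trained to approximate a target function h(x) directly. In a residual network, the target function h(x) is instead split into two parts, the identity function x and the residual function h(x)−x, and f(x;θ) only has to approximate the residual:

$$\mathrm{ResBlock}_f(x) = f(x;\theta) + x$$

where θ denotes the learnable parameters.

A typical residual unit, shown in the figure, consists of several cascaded convolutional layers and one shortcut connection that skips over them.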
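One way to see why the shortcut helps against vanishing gradients is to differentiate through a single residual unit. The following is a short derivation sketch; the symbols $y$ (the output of the unit), $\mathcal{L}$ (the training loss) and $I$ (the identity matrix) are introduced here for illustration only.

$$
y = f(x;\theta) + x
\quad\Longrightarrow\quad
\frac{\partial \mathcal{L}}{\partial x}
= \frac{\partial \mathcal{L}}{\partial y}\left(\frac{\partial f(x;\theta)}{\partial x} + I\right)
$$

The identity term $I$ gives the gradient a direct path back to $x$, so the signal reaching earlier layers no longer depends only on the product of the Jacobians of the stacked nonlinear layers.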

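5.4.1 Model Construction

First build the residual unit of ResNet18, then assemble the complete network.

5.4.1.1 Residual Unit

The input and output of the nonlinear layers wrapped by a residual unit must have the same shape. If the stacked convolutions change the number of channels, their output cannot be added directly to the input feature map. To resolve this, a 1×1 convolution maps the input feature map to the same number of channels as the output of the cascaded convolutions.

1×1 convolution: it is identical to a standard convolution except that the kernel size is 1×1. It therefore ignores relationships between neighboring positions of the input and focuses on relationships across channels. A 1×1 convolution serves the following purposes:

Cross-channel interaction and integration of information. Since the input and output of a convolution are three-dimensional (width, height, channels), a 1×1 convolution is a linear combination over the channels at each pixel, which integrates information from different channels;

Reducing or increasing the number of channels, which can reduce the parameter count. The output of a 1×1 convolution keeps the spatial layout of the input, and the number of output channels can be chosen freely, which raises or lowers the channel dimension;

Adding nonlinearity. With a nonlinear activation applied after the 1×1 convolution, the network gains additional nonlinearity while the feature-map size stays unchanged.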
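The "linear combination over channels at each pixel" view can be made concrete with a few lines of plain Python. This is a minimal sketch on nested lists rather than tensors; the function name `conv1x1` and the toy data are illustrative assumptions, not part of the original code.

```python
def conv1x1(feature_map, weight):
    """Apply a 1x1 convolution (no bias) to a [C_in][H][W] nested list.

    weight is a [C_out][C_in] matrix: each output channel is a weighted
    sum of the input channels at the same pixel.
    """
    c_in = len(feature_map)
    height, width = len(feature_map[0]), len(feature_map[0][0])
    out = []
    for w_row in weight:  # one row of weights per output channel
        channel = [[sum(w_row[c] * feature_map[c][i][j] for c in range(c_in))
                    for j in range(width)]
                   for i in range(height)]
        out.append(channel)
    return out


# Two input channels on a 2x2 grid.
x = [[[1, 2], [3, 4]],
     [[10, 20], [30, 40]]]
# Three output channels: copy channel 0, copy channel 1, sum of both.
w = [[1, 0], [0, 1], [1, 1]]
y = conv1x1(x, w)
print(len(y), len(y[0]), len(y[0][0]))  # 3 2 2
print(y[2])  # [[11, 22], [33, 44]]
```

The spatial size (2×2) is unchanged while the channel count goes from 2 to 3, which is the property the shortcut branch of a residual unit relies on.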

import torch
import torch.nn as nn
import torch.nn.functional as F


class ResBlock(nn.Module):
    def __init__(self, in_channels, out_channels, stride=1, use_residual=True):
        """
        Residual unit.
        Arguments:
            - in_channels: number of input channels
            - out_channels: number of output channels
            - stride: stride of the residual unit, applied to its first convolution
            - use_residual: whether to use the residual (shortcut) connection
        """
        super(ResBlock, self).__init__()
        self.stride = stride
        self.use_residual = use_residual
        # First convolution: 3×3 kernel; output channels and stride are configurable
        self.conv1 = nn.Conv2d(in_channels, out_channels, 3, padding=1, stride=self.stride, bias=False)
        # Second convolution: 3×3 kernel, stride 1, keeps the feature-map shape
        self.conv2 = nn.Conv2d(out_channels, out_channels, 3, padding=1, bias=False)

        # If the output of conv2 and the input of this block differ in shape, use_1x1conv is True.
        # In that case a 1×1 convolution is applied to the input so that its shape matches the output of conv2.
        self.use_1x1conv = in_channels != out_channels or stride != 1
        if self.use_1x1conv:
            self.shortcut = nn.Conv2d(in_channels, out_channels, 1, stride=self.stride, bias=False)

        # Each convolution is followed by a batch normalization layer (covered in detail in Section 7.5.1)
        self.bn1 = nn.BatchNorm2d(out_channels)
        self.bn2 = nn.BatchNorm2d(out_channels)
        if self.use_1x1conv:
            self.bn3 = nn.BatchNorm2d(out_channels)

    def forward(self, inputs):
        y = F.relu(self.bn1(self.conv1(inputs)))
        y = self.bn2(self.conv2(y))
        if self.use_residual:
            if self.use_1x1conv:  # reshape inputs with the 1×1 convolution to match the output y of conv2
                shortcut = self.shortcut(inputs)
                shortcut = self.bn3(shortcut)
            else:  # otherwise add inputs directly to the output y of conv2
                shortcut = inputs
            y = torch.add(shortcut, y)
        out = F.relu(y)
        return out

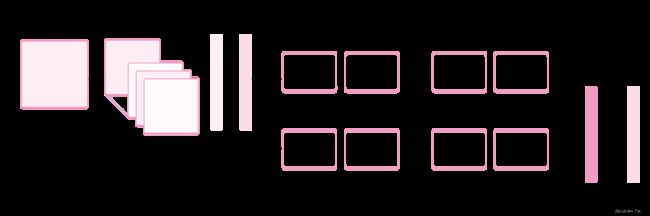

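5.4.1.2 Overall Structure of the Residual Network

A residual network chains many residual units into a very deep network. The structure of ResNet18 is shown in the figure.

For ease of understanding, ResNet18 can be divided into six modules:

Module 1: a 7×7 convolution with stride 2 and 64 output channels, followed by batch normalization and a ReLU activation, then a 3×3 max-pooling layer with stride 2;

Module 2: two residual units; 64 output channels, feature-map size unchanged;

Module 3: two residual units; 128 output channels, feature-map size halved;

Module 4: two residual units; 256 output channels, feature-map size halved;

Module 5: two residual units; 512 output channels, feature-map size halved;

Module 6: a global average-pooling layer that reduces the feature map to 1×1, followed by a fully connected layer that produces the final output.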
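The feature-map sizes stated for the six modules can be traced with the standard output-size formula for convolution and pooling, floor((n + 2p − k) / s) + 1. The sketch below assumes a 32×32 input (the size the images are resized to in the experiments of this section); the helper name `out_size` is illustrative.

```python
def out_size(size, kernel, stride, padding):
    """Output side length of a convolution or pooling layer (floor division)."""
    return (size + 2 * padding - kernel) // stride + 1


size = 32  # side length of the resized MNIST images used in this section
trace = []

size = out_size(size, kernel=7, stride=2, padding=3)   # module 1: 7x7 conv
trace.append(("conv7x7", size))
size = out_size(size, kernel=3, stride=2, padding=1)   # module 1: 3x3 max pool
trace.append(("maxpool", size))

# Modules 2-5: only the first 3x3 conv of each module carries the stride.
for name, stride in [("module2", 1), ("module3", 2), ("module4", 2), ("module5", 2)]:
    size = out_size(size, kernel=3, stride=stride, padding=1)
    trace.append((name, size))

print(trace)
```

With a 32×32 input the side length shrinks to 1 by module 5, so the global average pooling in module 6 has nothing left to average at this resolution; it matters for larger inputs.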

The ResNet18 model is implemented as follows.

Module 1:

def make_first_module(in_channels):
    # Module 1: 7×7 convolution, batch normalization, pooling
    m1 = nn.Sequential(nn.Conv2d(in_channels, 64, 7, stride=2, padding=3),
                       nn.BatchNorm2d(64), nn.ReLU(),
                       nn.MaxPool2d(kernel_size=3, stride=2, padding=1))
    return m1

Modules 2 to 5:

def resnet_module(input_channels, out_channels, num_res_blocks, stride=1, use_residual=True):
    blk = []
    # Create num_res_blocks residual units in a loop
    for i in range(num_res_blocks):
        if i == 0:  # first residual unit of the module
            blk.append(ResBlock(input_channels, out_channels,
                                stride=stride, use_residual=use_residual))
        else:  # remaining residual units of the module
            blk.append(ResBlock(out_channels, out_channels, use_residual=use_residual))
    return blk

Wrapping modules 2 to 5:

def make_modules(use_residual):
    # Module 2: two residual units, 64 input channels, 64 output channels, stride 1, feature-map size unchanged
    m2 = nn.Sequential(*resnet_module(64, 64, 2, stride=1, use_residual=use_residual))
    # Module 3: two residual units, 64 input channels, 128 output channels, stride 2, feature-map size halved
    m3 = nn.Sequential(*resnet_module(64, 128, 2, stride=2, use_residual=use_residual))
    # Module 4: two residual units, 128 input channels, 256 output channels, stride 2, feature-map size halved
    m4 = nn.Sequential(*resnet_module(128, 256, 2, stride=2, use_residual=use_residual))
    # Module 5: two residual units, 256 input channels, 512 output channels, stride 2, feature-map size halved
    m5 = nn.Sequential(*resnet_module(256, 512, 2, stride=2, use_residual=use_residual))
    return m2, m3, m4, m5

Full network:

class Model_ResNet18(nn.Module):
    def __init__(self, in_channels=3, num_classes=10, use_residual=True):
        super(Model_ResNet18, self).__init__()
        m1 = make_first_module(in_channels)
        m2, m3, m4, m5 = make_modules(use_residual)
        # Chain modules 1 to 6
        self.net = nn.Sequential(m1, m2, m3, m4, m5,
                                 # Module 6: global average pooling, fully connected layer
                                 nn.AdaptiveAvgPool2d(1), nn.Flatten(), nn.Linear(512, num_classes))

    def forward(self, x):
        return self.net(x)

As before, torchsummary.summary can be used to count the model's parameters.

import torchsummary

device = torch.device("cuda" if torch.cuda.is_available() else "cpu")
model = Model_ResNet18(in_channels=1, num_classes=10, use_residual=True).to(device)
torchsummary.summary(model, (1, 32, 32))

Output:

----------------------------------------------------------------
Layer (type)            Output Shape          Param #
================================================================
Conv2d-1                [-1, 64, 16, 16]      3,200
BatchNorm2d-2           [-1, 64, 16, 16]      128
ReLU-3                  [-1, 64, 16, 16]      0
MaxPool2d-4             [-1, 64, 8, 8]        0
Conv2d-5                [-1, 64, 8, 8]        36,864
BatchNorm2d-6           [-1, 64, 8, 8]        128
Conv2d-7                [-1, 64, 8, 8]        36,864
BatchNorm2d-8           [-1, 64, 8, 8]        128
ResBlock-9              [-1, 64, 8, 8]        0
Conv2d-10               [-1, 64, 8, 8]        36,864
BatchNorm2d-11          [-1, 64, 8, 8]        128
Conv2d-12               [-1, 64, 8, 8]        36,864
BatchNorm2d-13          [-1, 64, 8, 8]        128
ResBlock-14             [-1, 64, 8, 8]        0
Conv2d-15               [-1, 128, 4, 4]       73,728
BatchNorm2d-16          [-1, 128, 4, 4]       256
Conv2d-17               [-1, 128, 4, 4]       147,456
BatchNorm2d-18          [-1, 128, 4, 4]       256
Conv2d-19               [-1, 128, 4, 4]       8,192
BatchNorm2d-20          [-1, 128, 4, 4]       256
ResBlock-21             [-1, 128, 4, 4]       0
Conv2d-22               [-1, 128, 4, 4]       147,456
BatchNorm2d-23          [-1, 128, 4, 4]       256
Conv2d-24               [-1, 128, 4, 4]       147,456
BatchNorm2d-25          [-1, 128, 4, 4]       256
ResBlock-26             [-1, 128, 4, 4]       0
Conv2d-27               [-1, 256, 2, 2]       294,912
BatchNorm2d-28          [-1, 256, 2, 2]       512
Conv2d-29               [-1, 256, 2, 2]       589,824
BatchNorm2d-30          [-1, 256, 2, 2]       512
Conv2d-31               [-1, 256, 2, 2]       32,768
BatchNorm2d-32          [-1, 256, 2, 2]       512
ResBlock-33             [-1, 256, 2, 2]       0
Conv2d-34               [-1, 256, 2, 2]       589,824
BatchNorm2d-35          [-1, 256, 2, 2]       512
Conv2d-36               [-1, 256, 2, 2]       589,824
BatchNorm2d-37          [-1, 256, 2, 2]       512
ResBlock-38             [-1, 256, 2, 2]       0
Conv2d-39               [-1, 512, 1, 1]       1,179,648
BatchNorm2d-40          [-1, 512, 1, 1]       1,024
Conv2d-41               [-1, 512, 1, 1]       2,359,296
BatchNorm2d-42          [-1, 512, 1, 1]       1,024
Conv2d-43               [-1, 512, 1, 1]       131,072
BatchNorm2d-44          [-1, 512, 1, 1]       1,024
ResBlock-45             [-1, 512, 1, 1]       0
Conv2d-46               [-1, 512, 1, 1]       2,359,296
BatchNorm2d-47          [-1, 512, 1, 1]       1,024
Conv2d-48               [-1, 512, 1, 1]       2,359,296
BatchNorm2d-49          [-1, 512, 1, 1]       1,024
ResBlock-50             [-1, 512, 1, 1]       0
AdaptiveAvgPool2d-51    [-1, 512, 1, 1]       0
Flatten-52              [-1, 512]             0
Linear-53               [-1, 10]              5,130
================================================================
Total params: 11,175,434
Trainable params: 11,175,434
Non-trainable params: 0
----------------------------------------------------------------
Input size (MB): 0.00
Forward/backward pass size (MB): 1.05
Params size (MB): 42.63
Estimated Total Size (MB): 43.69
----------------------------------------------------------------
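The parameter total reported in the summary can be cross-checked by hand from the layer definitions. The sketch below recomputes it in plain Python, assuming the architecture exactly as defined above (bias-free 3×3 and 1×1 convolutions inside the residual units, a biased 7×7 convolution in module 1, one grayscale input channel, 10 classes). The helper names are illustrative.

```python
def conv_params(c_in, c_out, k, bias=False):
    """Weights (and optional biases) of a k x k convolution."""
    return c_in * c_out * k * k + (c_out if bias else 0)


def bn_params(channels):
    """Learnable scale and shift of a batch normalization layer."""
    return 2 * channels


def res_block_params(c_in, c_out, stride):
    """Two 3x3 convs with BN, plus a 1x1 shortcut conv with BN when shapes change."""
    total = conv_params(c_in, c_out, 3) + bn_params(c_out)
    total += conv_params(c_out, c_out, 3) + bn_params(c_out)
    if c_in != c_out or stride != 1:
        total += conv_params(c_in, c_out, 1) + bn_params(c_out)
    return total


def resnet18_params(in_channels=1, num_classes=10):
    # Module 1: the 7x7 conv keeps PyTorch's default bias, as in make_first_module.
    total = conv_params(in_channels, 64, 7, bias=True) + bn_params(64)
    # Modules 2-5: (input channels, output channels, stride of the first unit).
    for c_in, c_out, stride in [(64, 64, 1), (64, 128, 2), (128, 256, 2), (256, 512, 2)]:
        total += res_block_params(c_in, c_out, stride)   # first unit
        total += res_block_params(c_out, c_out, 1)       # second unit
    # Module 6: fully connected layer (weights + biases).
    total += 512 * num_classes + num_classes
    return total


print(resnet18_params())  # 11175434
```

The result agrees with the "Total params" line, and the per-layer values (for example 64·64·9 = 36,864 for a 3×3 convolution in module 2) match the individual rows.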

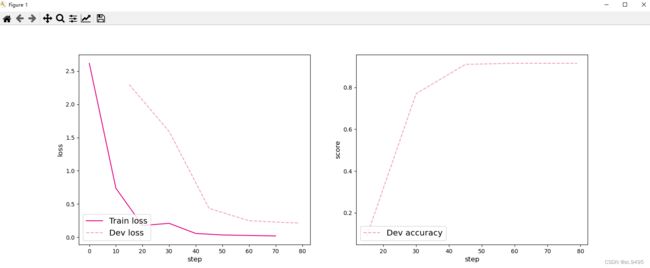

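To verify that residual connections help the training of deep convolutional networks, the handwritten digit recognition experiment is first run with ResNet18 without residual connections (use_residual=False) and then with them (use_residual=True), and the results are compared.

5.4.2 ResNet18 without Residual Connections

To assess the effect of residual connections, first run the experiment with ResNet18 without them.

5.4.2.1 Model Training

The model is trained on the training set and validated on the validation set for 5 epochs. The model with the highest validation accuracy is saved as the best model. The code is as follows: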

import gzip
import json

import numpy as np
import torch.optim as opt
import torchvision.transforms as transforms
from PIL import Image
from torch.utils.data import DataLoader, Dataset

import plot  # presumably a local plotting helper module, not a public package

# Accuracy and RunnerV3 are assumed to be defined or imported from the earlier experiments in this series

# Load the dataset and keep a small subset of each split
train_set, dev_set, test_set = json.load(gzip.open('./mnist.json.gz'))
train_images, train_labels = train_set[0][:1000], train_set[1][:1000]
dev_images, dev_labels = dev_set[0][:200], dev_set[1][:200]
test_images, test_labels = test_set[0][:200], test_set[1][:200]
train_set, dev_set, test_set = [train_images, train_labels], [dev_images, dev_labels], [test_images, test_labels]

# Data preprocessing: resize to 32×32, convert to a tensor, normalize with mean 0.5 and std 0.5
# (named image_transforms so that the torchvision.transforms module is not shadowed)
image_transforms = transforms.Compose([transforms.Resize(32), transforms.ToTensor(), transforms.Normalize(mean=[0.5], std=[0.5])])


class MNIST_dataset(Dataset):
    def __init__(self, dataset, transforms, mode='train'):
        self.mode = mode
        self.transforms = transforms
        self.dataset = dataset

    def __getitem__(self, idx):
        # Get the image and its label
        image, label = self.dataset[0][idx], self.dataset[1][idx]
        image, label = np.array(image).astype('float32'), int(label)
        image = np.reshape(image, [28, 28])
        image = Image.fromarray(image.astype('uint8'), mode='L')
        image = self.transforms(image)
        return image, label

    def __len__(self):
        return len(self.dataset[0])


# Build the MNIST datasets
train_dataset = MNIST_dataset(dataset=train_set, transforms=image_transforms, mode='train')
test_dataset = MNIST_dataset(dataset=test_set, transforms=image_transforms, mode='test')
dev_dataset = MNIST_dataset(dataset=dev_set, transforms=image_transforms, mode='dev')

# Learning rate
lr = 0.005
# Batch size
batch_size = 64
# Data loaders
train_loader = DataLoader(train_dataset, batch_size=batch_size, shuffle=True)
dev_loader = DataLoader(dev_dataset, batch_size=batch_size)
test_loader = DataLoader(test_dataset, batch_size=batch_size)

# Deep network without residual connections
model = Model_ResNet18(in_channels=1, num_classes=10, use_residual=False)
# Optimizer
optimizer = opt.SGD(model.parameters(), lr)
loss_fn = F.cross_entropy
# Evaluation metric
metric = Accuracy()
# Instantiate RunnerV3
runner = RunnerV3(model, optimizer, loss_fn, metric)
# Start training
log_steps = 15
eval_steps = 15
runner.train(train_loader, dev_loader, num_epochs=5, log_steps=log_steps,
             eval_steps=eval_steps, save_path="best_model.pdparams")

# Plot the loss on the training and validation sets
plot.plot(runner, 'cnn-loss2.pdf')

Output:

[Train] epoch: 0/5, step: 0/80, loss: 2.39121

[Train] epoch: 0/5, step: 15/80, loss: 1.20334

[Evaluate] dev score: 0.14500, dev loss: 2.29637

[Evaluate] best accuracy performence has been updated: 0.00000 --> 0.14500

[Train] epoch: 1/5, step: 30/80, loss: 0.50368

[Evaluate] dev score: 0.07000, dev loss: 2.29749

[Train] epoch: 2/5, step: 45/80, loss: 0.29673

[Evaluate] dev score: 0.79500, dev loss: 1.14356

[Evaluate] best accuracy performence has been updated: 0.14500 --> 0.79500

[Train] epoch: 3/5, step: 60/80, loss: 0.18768

[Evaluate] dev score: 0.89500, dev loss: 0.45073

[Evaluate] best accuracy performence has been updated: 0.79500 --> 0.89500

[Train] epoch: 4/5, step: 75/80, loss: 0.09906

[Evaluate] dev score: 0.93500, dev loss: 0.28159

[Evaluate] best accuracy performence has been updated: 0.89500 --> 0.93500

[Evaluate] dev score: 0.92000, dev loss: 0.27241

[Train] Training done!
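The step counts in this log follow from the dataset size and the batch size. The sketch below reproduces the arithmetic in plain Python; it is an assumption about how RunnerV3 counts steps (its implementation is not shown here), based only on the numbers that appear in the code and the log.

```python
import math

num_train = 1000      # training samples kept from the MNIST subset
batch_size = 64
num_epochs = 5
eval_steps = 15       # evaluate on the dev set every 15 steps

steps_per_epoch = math.ceil(num_train / batch_size)   # the last batch is smaller
total_steps = steps_per_epoch * num_epochs

# Global steps at which a periodic evaluation is triggered; step 0 is skipped
# because the log shows no evaluation at step 0.
eval_points = [s for s in range(1, total_steps) if s % eval_steps == 0]
# Epoch index (0-based) in which each of those steps falls.
eval_epochs = [s // steps_per_epoch for s in eval_points]

print(steps_per_epoch, total_steps)  # 16 80
print(eval_points)                   # [15, 30, 45, 60, 75]
print(eval_epochs)                   # [0, 1, 2, 3, 4]
```

These values line up with the "step: N/80" and "epoch: N/5" fields in the log; the additional evaluation line just before "Training done!" appears to be a final evaluation after the last step.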

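5.4.2.2 Model Evaluation

Evaluate the best model saved during training on the test data and observe its accuracy and loss on the test set. The code is as follows:

# Load the best model
runner.load_model('best_model.pdparams')
# Evaluate the model
score, loss = runner.evaluate(test_loader)
print("[Test] accuracy/loss: {:.4f}/{:.4f}".format(score, loss))

Output: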

[Test] accuracy/loss: 0.9250/0.3992

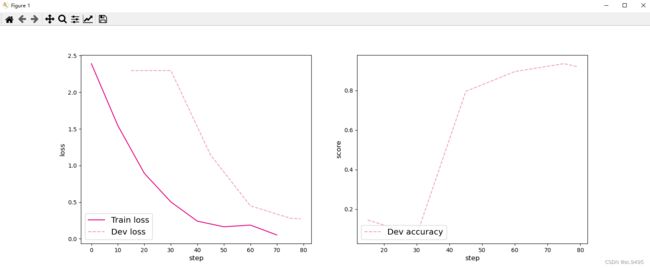

5.4.3 ResNet18 with Residual Connections

5.4.3.1 Model Training

Repeat the experiment above with residual connections enabled (use_residual=True). The code is as follows:

# Learning rate
lr = 0.01
# Batch size
batch_size = 64
# Data loaders
train_loader = DataLoader(train_dataset, batch_size=batch_size, shuffle=True)
dev_loader = DataLoader(dev_dataset, batch_size=batch_size)
test_loader = DataLoader(test_dataset, batch_size=batch_size)

# Deep network with residual connections (use_residual=True)
model = Model_ResNet18(in_channels=1, num_classes=10, use_residual=True)
# Optimizer
optimizer = opt.SGD(lr=lr, params=model.parameters())
# Instantiate RunnerV3 (loss_fn and metric are reused from the previous experiment)
runner = RunnerV3(model, optimizer, loss_fn, metric)
# Start training
log_steps = 15
eval_steps = 15
runner.train(train_loader, dev_loader, num_epochs=5, log_steps=log_steps,
             eval_steps=eval_steps, save_path="best_model.pdparams")

# Plot the loss on the training and validation sets
plot.plot(runner, 'cnn-loss3.pdf')

Output:

[Train] epoch: 0/5, step: 0/80, loss: 2.61708

[Train] epoch: 0/5, step: 15/80, loss: 0.54654

[Evaluate] dev score: 0.09000, dev loss: 2.29633

[Evaluate] best accuracy performence has been updated: 0.00000 --> 0.09000

[Train] epoch: 1/5, step: 30/80, loss: 0.20883

[Evaluate] dev score: 0.77000, dev loss: 1.59059

[Evaluate] best accuracy performence has been updated: 0.09000 --> 0.77000

[Train] epoch: 2/5, step: 45/80, loss: 0.05153

[Evaluate] dev score: 0.91000, dev loss: 0.43380

[Evaluate] best accuracy performence has been updated: 0.77000 --> 0.91000

[Train] epoch: 3/5, step: 60/80, loss: 0.02612

[Evaluate] dev score: 0.91500, dev loss: 0.24842

[Evaluate] best accuracy performence has been updated: 0.91000 --> 0.91500

[Train] epoch: 4/5, step: 75/80, loss: 0.01828

[Evaluate] dev score: 0.91500, dev loss: 0.21769

[Evaluate] dev score: 0.91500, dev loss: 0.21340

[Train] Training done!

5.4.3.2 Model Evaluation

Evaluate the best model saved during training on the test data and observe its accuracy and loss on the test set.

# Load the best model
runner.load_model('best_model.pdparams')
# Evaluate the model
score, loss = runner.evaluate(test_loader)
print("[Test] accuracy/loss: {:.4f}/{:.4f}".format(score, loss))

Output:

[Test] accuracy/loss: 0.9400/0.2833

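With residual connections, the model's convergence curve is smoother.

Compared with ResNet18 without residual connections, adding them improves the test results somewhat: test accuracy rises from 0.9250 to 0.9400 and test loss drops from 0.3992 to 0.2833. The two runs also use different learning rates (0.005 without and 0.01 with residual connections), so the comparison is not strictly controlled.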

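5.4.4 Comparison with the High-Level API Implementation

For a classic image-classification network such as ResNet18, high-level framework APIs already provide a ready-made implementation, so there is no need to implement it from scratch. The original tutorial refers to the PaddlePaddle high-level API; the code in this section is PyTorch. Here the high-level resnet18 model and the custom resnet18 model are given the same weights and the same input, and their outputs are compared to check whether they agree.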

import warnings
# warnings.filterwarnings("ignore")

from torchvision.models import resnet18  # assumed source of the ready-made resnet18 (key names below match it)

# Ready-made resnet18 from the high-level API; by default it takes 3 input channels and outputs 1000 classes
hapi_model = resnet18(pretrained=True)
# Custom resnet18 model
model = Model_ResNet18(in_channels=3, num_classes=1000, use_residual=True)

# Get the weights of the ready-made model
params = hapi_model.state_dict()
# Holds the weights after the parameter names have been mapped
new_params = {}
# Map the parameter names (layerN.M.* corresponds to net.N.M.* in the custom model)
for key in params:
    if 'layer' in key:
        if 'downsample.0' in key:
            # 1×1 shortcut convolution
            new_params['net.' + key[5:8] + '.shortcut' + key[-7:]] = params[key]
        elif 'downsample.1' in key:
            # batch normalization after the shortcut convolution
            new_params['net.' + key[5:8] + '.bn3.' + key[22:]] = params[key]
        else:
            new_params['net.' + key[5:]] = params[key]
    elif 'conv1.weight' == key:
        new_params['net.0.0.weight'] = params[key]
    elif 'bn1' in key:
        new_params['net.0.1' + key[3:]] = params[key]
    elif 'fc' in key:
        new_params['net.7' + key[2:]] = params[key]

# Copy the mapped weights into the custom model so that both models hold the same parameters.
# strict=False: the first convolution of the custom model has a bias that the ready-made model lacks,
# so that bias is set to zero explicitly.
model.load_state_dict(new_params, strict=False)
nn.init.zeros_(model.net[0][0].bias)
# Evaluation mode, so that batch normalization uses its running statistics in both models
hapi_model.eval()
model.eval()

# Create a random array with np.random as test data
inputs = np.random.randn(*[3, 3, 32, 32])
inputs = inputs.astype('float32')
x = torch.tensor(inputs)

output = model(x)
hapi_out = hapi_model(x)

# Difference between the outputs of the two models
diff = output - hapi_out
# Largest absolute difference
max_diff = torch.max(torch.abs(diff))
print(max_diff)

Output:

tensor(0., grad_fn=<MaxBackward1>)

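A maximum difference of zero (or one within floating-point tolerance) means that, given the same weights and the same input, the high-level resnet18 model and the custom resnet18 model produce the same output, which indicates that the two implementations are equivalent. The check is only meaningful when the mapped weights have actually been loaded into the custom model and both models are in evaluation mode.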
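When two outputs are compared element-wise, the maximum of the signed differences can be zero even when the outputs differ, because negative deviations are ignored; the maximum absolute difference does not have this blind spot. The sketch below illustrates the point in plain Python with made-up numbers; the helper names are illustrative and not part of the experiment code.

```python
def max_signed_diff(a, b):
    """Largest signed difference a_i - b_i."""
    return max(x - y for x, y in zip(a, b))


def max_abs_diff(a, b):
    """Largest absolute difference |a_i - b_i|."""
    return max(abs(x - y) for x, y in zip(a, b))


reference = [0.25, 1.0, -2.0]
candidate = [0.25, 0.5, -2.5]   # differs from the reference in two entries

print(max_signed_diff(candidate, reference))  # 0.0
print(max_abs_diff(candidate, reference))     # 0.5
```

For tensor outputs the same idea applies: take the absolute value of the difference before reducing with a maximum.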

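Takeaways:

In this experiment I studied the classic residual network ResNet and used it to recognize MNIST handwritten digits. Comparing ResNet18 with and without residual connections showed that the model performs better on the test set with them. I also got a sense of how convenient high-level APIs are.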