模型剪枝大瘦身代码实战案例

模型剪枝大瘦身代码实战案例

注:大家觉得博客好的话,别忘了点赞收藏呀,本人每周都会更新关于人工智能和大数据相关的内容,内容多为原创,Python Java Scala SQL 代码,CV NLP 推荐系统等,Spark Flink Kafka Hbase Hive Flume等等~写的都是纯干货,各种顶会的论文解读,一起进步。

今天和大家分享一下如何通过BN加上L1正则化的方法对模型进行剪枝大瘦身

Learning Efficient Convolutional Networks through Network Slimming

https://github.com/foolwood/pytorch-slimming

#博学谷IT学习技术支持#

文章目录

- 模型剪枝大瘦身代码实战案例

- 前言

- 一、损失函数

- 二、整体框架

- 三、代码实战

-

- 1.训练模型

- 2.模型剪纸瘦身

- 3.VGG模型本身

- 总结

前言

今天和大家分享一篇ICCV的经典论文,通俗易懂,非常简单,主要是通过对BN中gamma加上L1正则化的方法对模型进行剪枝大瘦身。

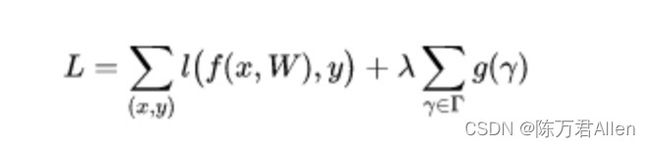

一、损失函数

现有的一些稀疏化方法,包括权重稀疏化、核稀疏化、通道稀疏化和层稀疏化等。权重稀疏化灵活性最高,也获得最高的压缩率,但是通常需要特定硬件的支持才能达到加速的效果。层稀疏化最粗糙,灵活性最低,但是在一般的硬件上都能获得加速效果。通道裁剪有一个比较好的均衡,而且也可以在包括 CNN 或者全连层网络上都可以进行应用。

为了达到通道稀疏性的效果,和这个通道相关的输入和输出连接都需要被裁剪。

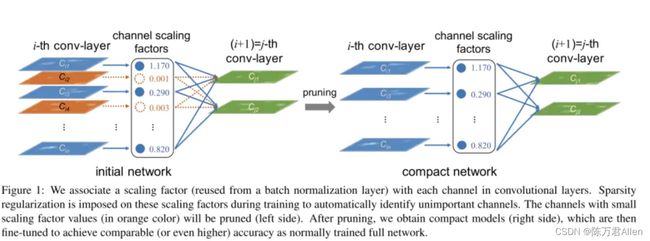

论文提出的方法,是对每个通道训练一个尺度因子,将这个尺度因子和通道输出进行相乘。在网络训练时对网络和这些尺度因子进行联合优化,然后裁剪掉尺度因子小的通道,并对裁剪后的网络进行微调。因此网络的损失函数就是:

这里和一般的损失函数相比唯一的区别就是对BN中的参数gamma加了L1的正则项。这样通过L1的正则项,可以使得那些原本权重偏小的gamma更趋向于0。

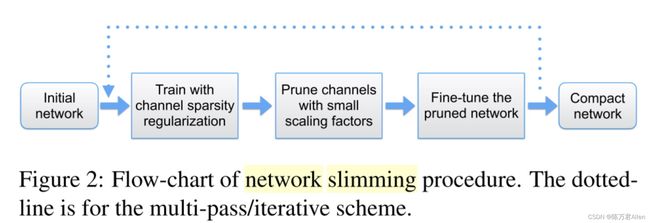

二、整体框架

通过BN中正则化以后gamma值的差异,可以看出CNN中哪写特征图是对模型更有用的。留下这些特征图即可。

然后在对模型进行微调重新训练即可。模型效果不会差太多。

三、代码实战

1.训练模型

这里和一般训练模型的代码没什么区别,唯一不一样的是

定义updateBN函数,因为要对gamma加上L1正则项。

def updateBN():

for m in model.modules():

if isinstance(m, nn.BatchNorm2d):

m.weight.grad.data.add_(args.s*torch.sign(m.weight.data)) # L1 大于0为1 小于0

代码如下(示例):

from __future__ import print_function

import os

import argparse

import torch

import torch.nn as nn

import torch.nn.functional as F

import torch.optim as optim

from torchvision import datasets, transforms

from torch.autograd import Variable

from vgg import vgg

import shutil

# 1:训练,并且加入l1正则化 -sr --s 0.0001

# 2:执行剪枝操作 --model model_best.pth.tar --save pruned.pth.tar --percent 0.7

# 3:再次进行微调操作 --refine pruned.pth.tar --epochs 40

# Training settings

parser = argparse.ArgumentParser(description='PyTorch Slimming CIFAR training')

parser.add_argument('--dataset', type=str, default='cifar10',

help='training dataset (default: cifar10)')

parser.add_argument('--sparsity-regularization', '-sr', dest='sr', action='store_true',

help='train with channel sparsity regularization')

parser.add_argument('--s', type=float, default=0.0001,

help='scale sparse rate (default: 0.0001)')

parser.add_argument('--refine', default='', type=str, metavar='PATH',

help='refine from prune model')

parser.add_argument('--batch-size', type=int, default=100, metavar='N',

help='input batch size for training (default: 100)')

parser.add_argument('--test-batch-size', type=int, default=1000, metavar='N',

help='input batch size for testing (default: 1000)')

parser.add_argument('--epochs', type=int, default=5, metavar='N',

help='number of epochs to train (default: 160)')

parser.add_argument('--start-epoch', default=0, type=int, metavar='N',

help='manual epoch number (useful on restarts)')

parser.add_argument('--lr', type=float, default=0.1, metavar='LR',

help='learning rate (default: 0.1)')

parser.add_argument('--momentum', type=float, default=0.9, metavar='M',

help='SGD momentum (default: 0.9)')

parser.add_argument('--weight-decay', '--wd', default=1e-4, type=float,

metavar='W', help='weight decay (default: 1e-4)')

parser.add_argument('--resume', default='', type=str, metavar='PATH',

help='path to latest checkpoint (default: none)')

parser.add_argument('--no-cuda', action='store_true', default=False,

help='disables CUDA training')

parser.add_argument('--seed', type=int, default=1, metavar='S',

help='random seed (default: 1)')

parser.add_argument('--log-interval', type=int, default=100, metavar='N',

help='how many batches to wait before logging training status')

args = parser.parse_args()

args.cuda = not args.no_cuda and torch.cuda.is_available()

torch.manual_seed(args.seed)

if args.cuda:

torch.cuda.manual_seed(args.seed)

kwargs = {'num_workers': 0, 'pin_memory': True} if args.cuda else {}

train_loader = torch.utils.data.DataLoader(

datasets.CIFAR10('./data', train=True, download=True,

transform=transforms.Compose([

transforms.Pad(4),

transforms.RandomCrop(32),

transforms.RandomHorizontalFlip(),

transforms.ToTensor(),

transforms.Normalize((0.5, 0.5, 0.5), (0.5, 0.5, 0.5))

])),

batch_size=args.batch_size, shuffle=True, **kwargs)

test_loader = torch.utils.data.DataLoader(

datasets.CIFAR10('./data', train=False, transform=transforms.Compose([

transforms.ToTensor(),

transforms.Normalize((0.5, 0.5, 0.5), (0.5, 0.5, 0.5))

])),

batch_size=args.test_batch_size, shuffle=True, **kwargs)

if args.refine:

checkpoint = torch.load(args.refine)

model = vgg(cfg=checkpoint['cfg'])

model.cuda()

model.load_state_dict(checkpoint['state_dict'])

else:

model = vgg()

if args.cuda:

model.cuda()

optimizer = optim.SGD(model.parameters(), lr=args.lr, momentum=args.momentum, weight_decay=args.weight_decay)

if args.resume:

if os.path.isfile(args.resume):

print("=> loading checkpoint '{}'".format(args.resume))

checkpoint = torch.load(args.resume)

args.start_epoch = checkpoint['epoch']

best_prec1 = checkpoint['best_prec1']

model.load_state_dict(checkpoint['state_dict'])

optimizer.load_state_dict(checkpoint['optimizer'])

print("=> loaded checkpoint '{}' (epoch {}) Prec1: {:f}"

.format(args.resume, checkpoint['epoch'], best_prec1))

else:

print("=> no checkpoint found at '{}'".format(args.resume))

# additional subgradient descent on the sparsity-induced penalty term

def updateBN():

for m in model.modules():

if isinstance(m, nn.BatchNorm2d):

m.weight.grad.data.add_(args.s*torch.sign(m.weight.data)) # L1 大于0为1 小于0为-1 0还是0

def train(epoch):

model.train()

for batch_idx, (data, target) in enumerate(train_loader):

if args.cuda:

data, target = data.cuda(), target.cuda()

data, target = Variable(data), Variable(target)

optimizer.zero_grad()

output = model(data)

loss = F.cross_entropy(output, target)

loss.backward()

if args.sr:

updateBN()

optimizer.step()

if batch_idx % args.log_interval == 0:

print('Train Epoch: {} [{}/{} ({:.1f}%)]\tLoss: {:.6f}'.format(

epoch, batch_idx * len(data), len(train_loader.dataset),

100. * batch_idx / len(train_loader), loss.item()))

def test():

model.eval()

test_loss = 0

correct = 0

for data, target in test_loader:

if args.cuda:

data, target = data.cuda(), target.cuda()

data, target = Variable(data, volatile=True), Variable(target)

output = model(data)

test_loss += F.cross_entropy(output, target, size_average=False).item() # sum up batch loss

pred = output.data.max(1, keepdim=True)[1] # get the index of the max log-probability

correct += pred.eq(target.data.view_as(pred)).cpu().sum()

test_loss /= len(test_loader.dataset)

print('\nTest set: Average loss: {:.4f}, Accuracy: {}/{} ({:.1f}%)\n'.format(

test_loss, correct, len(test_loader.dataset),

100. * correct / len(test_loader.dataset)))

return correct / float(len(test_loader.dataset))

def save_checkpoint(state, is_best, filename='checkpoint.pth.tar'):

torch.save(state, filename)

if is_best:

shutil.copyfile(filename, 'model_best.pth.tar')

best_prec1 = 0.

for epoch in range(args.start_epoch, args.epochs):

if epoch in [args.epochs*0.5, args.epochs*0.75]:

for param_group in optimizer.param_groups:

param_group['lr'] *= 0.1

train(epoch)

prec1 = test()

is_best = prec1 > best_prec1

best_prec1 = max(prec1, best_prec1)

save_checkpoint({

'epoch': epoch + 1,

'state_dict': model.state_dict(),

'best_prec1': best_prec1,

'optimizer': optimizer.state_dict(),

}, is_best)

2.模型剪纸瘦身

这里通过gamma阈值剪去无用的特征图后,对模型进行再训练微调,使模型整体效果得到提升。

代码如下(示例):

import os

import argparse

import torch

import torch.nn as nn

from torch.autograd import Variable

from torchvision import datasets, transforms

from vgg import vgg

import numpy as np

# Prune settings

parser = argparse.ArgumentParser(description='PyTorch Slimming CIFAR prune')

parser.add_argument('--dataset', type=str, default='cifar10',

help='training dataset (default: cifar10)')

parser.add_argument('--test-batch-size', type=int, default=1000, metavar='N',

help='input batch size for testing (default: 1000)')

parser.add_argument('--no-cuda', action='store_true', default=False,

help='disables CUDA training')

parser.add_argument('--percent', type=float, default=0.5,

help='scale sparse rate (default: 0.5)')

parser.add_argument('--model', default='', type=str, metavar='PATH',

help='path to raw trained model (default: none)')

parser.add_argument('--save', default='', type=str, metavar='PATH',

help='path to save prune model (default: none)')

args = parser.parse_args()

args.cuda = not args.no_cuda and torch.cuda.is_available()

model = vgg()

if args.cuda:

model.cuda()

if args.model:

if os.path.isfile(args.model):

print("=> loading checkpoint '{}'".format(args.model))

checkpoint = torch.load(args.model)

args.start_epoch = checkpoint['epoch']

best_prec1 = checkpoint['best_prec1']

model.load_state_dict(checkpoint['state_dict'])

print("=> loaded checkpoint '{}' (epoch {}) Prec1: {:f}"

.format(args.model, checkpoint['epoch'], best_prec1))

else:

print("=> no checkpoint found at '{}'".format(args.resume))

print(model)

total = 0 # 每层特征图个数 总和

for m in model.modules():

if isinstance(m, nn.BatchNorm2d):

total += m.weight.data.shape[0]

bn = torch.zeros(total) # 拿到每一个gamma值 每个特征图都会对应一个γ、β

index = 0

for m in model.modules():

if isinstance(m, nn.BatchNorm2d):

size = m.weight.data.shape[0]

bn[index:(index+size)] = m.weight.data.abs().clone()

index += size

y, i = torch.sort(bn)

thre_index = int(total * args.percent)

thre = y[thre_index]

pruned = 0

cfg = []

cfg_mask = []

for k, m in enumerate(model.modules()):

if isinstance(m, nn.BatchNorm2d):

weight_copy = m.weight.data.clone()

mask = weight_copy.abs().gt(thre).float().cuda() #.gt 比较前者是否大于后者

pruned = pruned + mask.shape[0] - torch.sum(mask)

m.weight.data.mul_(mask) # BN层gamma置0

m.bias.data.mul_(mask) #

cfg.append(int(torch.sum(mask)))

cfg_mask.append(mask.clone())

print('layer index: {:d} \t total channel: {:d} \t remaining channel: {:d}'.

format(k, mask.shape[0], int(torch.sum(mask))))

elif isinstance(m, nn.MaxPool2d):

cfg.append('M')

pruned_ratio = pruned/total

print('Pre-processing Successful!')

# 置0后先测试下效果

def test():

kwargs = {'num_workers': 0, 'pin_memory': True} if args.cuda else {}

test_loader = torch.utils.data.DataLoader(

datasets.CIFAR10('./data', train=False, transform=transforms.Compose([

transforms.ToTensor(),

transforms.Normalize((0.5, 0.5, 0.5), (0.5, 0.5, 0.5))])),

batch_size=args.test_batch_size, shuffle=True, **kwargs)

model.eval()

correct = 0

for data, target in test_loader:

if args.cuda:

data, target = data.cuda(), target.cuda()

data, target = Variable(data, volatile=True), Variable(target)

output = model(data)

pred = output.data.max(1, keepdim=True)[1] # get the index of the max log-probability

correct += pred.eq(target.data.view_as(pred)).cpu().sum()

print('\nTest set: Accuracy: {}/{} ({:.1f}%)\n'.format(

correct, len(test_loader.dataset), 100. * correct / len(test_loader.dataset)))

return correct / float(len(test_loader.dataset))

# test()

# 执行剪枝

print(cfg)

newmodel = vgg(cfg=cfg) # 剪枝后的模型

newmodel.cuda()

# 为剪枝后的模型赋值权重

layer_id_in_cfg = 0

start_mask = torch.ones(3) #输入

end_mask = cfg_mask[layer_id_in_cfg] #输出

for [m0, m1] in zip(model.modules(), newmodel.modules()):

if isinstance(m0, nn.BatchNorm2d):

idx1 = np.squeeze(np.argwhere(np.asarray(end_mask.cpu().numpy()))) # 赋值

m1.weight.data = m0.weight.data[idx1].clone()

m1.bias.data = m0.bias.data[idx1].clone()

m1.running_mean = m0.running_mean[idx1].clone()

m1.running_var = m0.running_var[idx1].clone()

layer_id_in_cfg += 1

start_mask = end_mask.clone() #下一层的

if layer_id_in_cfg < len(cfg_mask): # do not change in Final FC

end_mask = cfg_mask[layer_id_in_cfg] #输出

elif isinstance(m0, nn.Conv2d):

idx0 = np.squeeze(np.argwhere(np.asarray(start_mask.cpu().numpy())))

idx1 = np.squeeze(np.argwhere(np.asarray(end_mask.cpu().numpy())))

print('In shape: {:d} Out shape:{:d}'.format(idx0.shape[0], idx1.shape[0]))

w = m0.weight.data[:, idx0, :, :].clone() #拿到原始训练好权重

w = w[idx1, :, :, :].clone()

m1.weight.data = w.clone() # 将所需权重赋值到剪枝后的模型

# m1.bias.data = m0.bias.data[idx1].clone()

elif isinstance(m0, nn.Linear):

idx0 = np.squeeze(np.argwhere(np.asarray(start_mask.cpu().numpy())))

m1.weight.data = m0.weight.data[:, idx0].clone()

torch.save({'cfg': cfg, 'state_dict': newmodel.state_dict()}, args.save)

print(newmodel)

model = newmodel

test()

3.VGG模型本身

不多说了,一个比较老比较经典的模型举个例子,大家可以根据自己的下游任务替换即可。

代码如下(示例):

import torch

import torch.nn as nn

from torch.autograd import Variable

import math # init

class vgg(nn.Module):

def __init__(self, dataset='cifar10', init_weights=True, cfg=None):

super(vgg, self).__init__()

if cfg is None:

cfg = [64, 64, 'M', 128, 128, 'M', 256, 256, 256, 256, 'M', 512, 512, 512, 512, 'M', 512, 512, 512, 512]

self.feature = self.make_layers(cfg, True)

if dataset == 'cifar100':

num_classes = 100

elif dataset == 'cifar10':

num_classes = 10

self.classifier = nn.Linear(cfg[-1], num_classes)

if init_weights:

self._initialize_weights()

def make_layers(self, cfg, batch_norm=False):

layers = []

in_channels = 3

for v in cfg:

if v == 'M':

layers += [nn.MaxPool2d(kernel_size=2, stride=2)]

else:

conv2d = nn.Conv2d(in_channels, v, kernel_size=3, padding=1, bias=False)

#print (in_channels,' ',v)

if batch_norm:

layers += [conv2d, nn.BatchNorm2d(v), nn.ReLU(inplace=True)]

else:

layers += [conv2d, nn.ReLU(inplace=True)]

in_channels = v

return nn.Sequential(*layers)

def forward(self, x):

x = self.feature(x)

x = nn.AvgPool2d(2)(x)

x = x.view(x.size(0), -1)

y = self.classifier(x)

return y

def _initialize_weights(self):

for m in self.modules():

if isinstance(m, nn.Conv2d):

n = m.kernel_size[0] * m.kernel_size[1] * m.out_channels

m.weight.data.normal_(0, math.sqrt(2. / n))

if m.bias is not None:

m.bias.data.zero_()

elif isinstance(m, nn.BatchNorm2d):

m.weight.data.fill_(0.5)

m.bias.data.zero_()

elif isinstance(m, nn.Linear):

m.weight.data.normal_(0, 0.01)

m.bias.data.zero_()

if __name__ == '__main__':

net = vgg()

x = Variable(torch.FloatTensor(16, 3, 40, 40))

y = net(x)

print(y.data.shape)

总结

非常直观的模型剪枝大瘦身方法非常好用,文章的核心在于,将BN层中的可学习参数gamma,作为稀疏化参数,对CNN卷积之后提取保留更有用的特征图的方法进行模型剪枝大瘦身。