[PyTorch] 10 Text: More Code for BOW, N-Gram, CBOW, LSTM, and BI-LSTM CRF

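Examples

- 1. Text classifier based on logistic regression and bag-of-words (BOW)
  - Full code
  - Results
- 2. Word embeddings: encoding lexical semantics
  - 2.1 N-Gram language model
    - Full code
    - Results
  - 2.2 Computing word embeddings: continuous bag-of-words (CBOW)
    - Full code
    - Results
- 3. Sequence models and Long Short-Term Memory (LSTM) networks
  - Full code
  - Results
- 4. Advanced: making dynamic decisions and the BI-LSTM CRF
  - Code
  - Results
- Summary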

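1. Text classifier based on logistic regression and bag-of-words (BOW)

Original tutorial website

The model maps a BOW representation to log probabilities over the labels. We assign each word in the vocabulary an index. For example, if the whole vocabulary is the two words "hello" and "world", with indices 0 and 1, then the sentence "hello hello hello hello" is represented as [4, 0] and "hello world world hello" as [2, 2]. In general the representation is:

[Count(hello), Count(world)]

Denote this BOW vector by $x$. The output of the network is $\text{logSoftmax}(Ax + b)$.

In other words, we pass the input through an affine map and then apply log softmax.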
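As a small worked example of the mapping just described, the sketch below builds the BOW vector for the two-word vocabulary and applies an affine map followed by log softmax in plain Python. The values of `A` and `b` are made up for illustration; they are not parameters from the tutorial.

```python
import math


def make_bow_vector(sentence, word_to_ix):
    """Count how often each vocabulary word occurs in the sentence."""
    vec = [0] * len(word_to_ix)
    for word in sentence:
        vec[word_to_ix[word]] += 1
    return vec


def log_softmax(scores):
    """Numerically stable log softmax over a list of scores."""
    m = max(scores)
    log_z = m + math.log(sum(math.exp(s - m) for s in scores))
    return [s - log_z for s in scores]


word_to_ix = {"hello": 0, "world": 1}
x = make_bow_vector("hello world world hello".split(), word_to_ix)

# Hypothetical parameters: one row of A per label, one column per word.
A = [[0.5, -0.5],
     [-0.5, 0.5]]
b = [0.0, 0.1]

# Affine map A x + b, then log softmax.
scores = [sum(a * xi for a, xi in zip(row, x)) + bias for row, bias in zip(A, b)]
log_probs = log_softmax(scores)

print(x)          # BOW vector
print(log_probs)  # one log probability per label
```

Exponentiating the entries of `log_probs` gives probabilities that sum to 1, which is what makes the output usable with a negative log likelihood loss.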

Building the word_to_ix dictionary

{'me': 0, 'gusta': 1, 'comer': 2, 'en': 3, 'la': 4, 'cafeteria': 5, 'Give': 6, 'it': 7, 'to': 8, 'No': 9, 'creo': 10, 'que': 11, 'sea': 12, 'una': 13, 'buena': 14, 'idea': 15, 'is': 16, 'not': 17, 'a': 18, 'good': 19, 'get': 20, 'lost': 21, 'at': 22, 'Yo': 23, 'si': 24, 'on': 25}

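Inspecting the model parameters

# The model knows its parameters. The first output below is A, the second is b.
# Whenever a component is assigned to an attribute in a module's __init__
# (here via self.linear = nn.Linear(...)), PyTorch registers it, so the module
# (BoWClassifier in this case) keeps track of the nn.Linear parameters.
for param in model.parameters():
    print(param)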

Parameter containing:

tensor([[ 9.9125e-02, 1.3158e-01, 9.5439e-02, -4.4233e-02, 8.8466e-02,

1.4610e-01, 3.9570e-03, 1.4892e-01, 9.1140e-02, 2.2203e-05,

1.3627e-01, -1.8189e-01, 1.5543e-01, -8.9664e-02, 1.4045e-01,

1.6706e-01, -1.3230e-01, -3.0776e-02, -1.0510e-01, -4.8319e-02,

1.2490e-02, -1.7101e-01, -1.3855e-01, 4.4969e-02, -8.6853e-02,

-1.1655e-01],

[ 1.6611e-01, -1.5862e-01, -5.8331e-02, 1.8198e-01, -1.1924e-01,

-7.3907e-02, 5.2499e-02, -1.7922e-01, -1.1853e-01, 1.6698e-01,

9.2033e-02, 3.9494e-02, 6.4311e-02, -7.4467e-02, -9.1371e-02,

9.7125e-02, -1.2738e-03, -1.2694e-01, -7.6443e-02, 1.8346e-01,

-1.1923e-01, 1.2048e-01, 1.1547e-01, -7.8988e-02, -1.5942e-01,

1.2734e-02]], requires_grad=True)

Parameter containing:

tensor([0.1288, 0.1815], requires_grad=True)

Full code

import torch
import torch.nn as nn
import torch.nn.functional as F
import torch.optim as optim

data = [("me gusta comer en la cafeteria".split(), "SPANISH"),
        ("Give it to me".split(), "ENGLISH"),
        ("No creo que sea una buena idea".split(), "SPANISH"),
        ("No it is not a good idea to get lost at sea".split(), "ENGLISH")]

test_data = [("Yo creo que si".split(), "SPANISH"),
             ("it is lost on me".split(), "ENGLISH")]

# word_to_ix maps each word in the vocabulary to a unique integer,
# which is its index in the bag-of-words vector.
word_to_ix = {}
for sent, _ in data + test_data:
    for word in sent:
        if word not in word_to_ix:
            word_to_ix[word] = len(word_to_ix)

VOCAB_SIZE = len(word_to_ix)
NUM_LABELS = 2


class BoWClassifier(nn.Module):

    def __init__(self, num_labels, vocab_size):
        # Call the __init__ of nn.Module. Don't be put off by the syntax;
        # just always do this in an nn.Module.
        super(BoWClassifier, self).__init__()
        # Define the parameters you need. Here we need A and b, the parameters
        # of the affine map, which nn.Linear() provides. Make sure you understand
        # why the input dimension is vocab_size and the output is num_labels!
        self.linear = nn.Linear(vocab_size, num_labels)

    def forward(self, bow_vec):
        # Affine map followed by log softmax.
        output = self.linear(bow_vec)
        output = F.log_softmax(output, dim=1)
        return output


def make_bow_vector(sentence, word_to_ix):
    vec = torch.zeros(len(word_to_ix))
    for word in sentence:
        vec[word_to_ix[word]] += 1
    return vec.view(1, -1)


def make_target(label, label_to_ix):
    return torch.LongTensor([label_to_ix[label]])


model = BoWClassifier(NUM_LABELS, VOCAB_SIZE)
label_to_ix = {"SPANISH": 0, "ENGLISH": 1}

# Run on the test data before training, for a before/after comparison.
with torch.no_grad():
    for instance, label in test_data:
        bow_vec = make_bow_vector(instance, word_to_ix)
        log_probs = model(bow_vec)
        print(log_probs)

# Print the column of A that corresponds to "creo".
print(next(model.parameters())[:, word_to_ix["creo"]])

loss_function = nn.NLLLoss()
optimizer = optim.SGD(model.parameters(), lr=0.1)

# Usually you want to pass over the training data several times. 100 epochs is
# far more than you would use on a real data set, but real data sets have more
# than two instances. Somewhere between 5 and 30 epochs is usually reasonable.
for epoch in range(100):
    for instance, label in data:
        # Step 1. Remember that PyTorch accumulates gradients,
        # so clear them before each instance.
        model.zero_grad()

        bow_vec = make_bow_vector(instance, word_to_ix)
        target = make_target(label, label_to_ix)

        log_probs = model(bow_vec)

        loss = loss_function(log_probs, target)
        loss.backward()
        optimizer.step()

with torch.no_grad():
    for instance, label in test_data:
        bow_vec = make_bow_vector(instance, word_to_ix)
        log_probs = model(bow_vec)
        print(log_probs)

# The entry corresponding to Spanish goes up and the one for English goes down!
print(next(model.parameters())[:, word_to_ix["creo"]])

Results

tensor([[-0.5363, -0.8793]])

tensor([[-1.1423, -0.3843]])

tensor([-0.0594, -0.0207], grad_fn=<SelectBackward>)

tensor([[-0.1338, -2.0772]])

tensor([[-3.3813, -0.0346]])

tensor([ 0.3217, -0.4018], grad_fn=<SelectBackward>)

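We got the right answer! After training, the log probability of Spanish is much higher for the first test sentence and the log probability of English is much higher for the second, as it should be.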
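To make the printed log probabilities easier to read, they can be exponentiated back into probabilities. The snippet below is a small sketch that does this for the two post-training outputs listed in the results; the numbers are copied from there, and the column order (Spanish first, English second) follows `label_to_ix`.

```python
import math

# Log probabilities printed after training, copied from the results.
# Column 0 is SPANISH, column 1 is ENGLISH (see label_to_ix).
after_training = {
    "Yo creo que si": [-0.1338, -2.0772],
    "it is lost on me": [-3.3813, -0.0346],
}
labels = ["SPANISH", "ENGLISH"]

predictions = {}
for sentence, log_probs in after_training.items():
    probs = [math.exp(lp) for lp in log_probs]
    predictions[sentence] = labels[probs.index(max(probs))]
    print(sentence, [round(p, 3) for p in probs], predictions[sentence])
```

Each pair of probabilities sums to 1 up to the rounding of the printed values, and the first sentence comes out at roughly 0.87 for Spanish.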

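The two-element tensors in the output are the column of A for the Spanish word "creo". After training, its Spanish entry has risen (from -0.0594 to 0.3217) and its English entry has fallen (from -0.0207 to -0.4018).

We have now seen how to build a PyTorch component, pass data through it, and perform gradient updates. With that, we are ready to start on deep learning for natural language processing.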

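2. Word embeddings: encoding lexical semantics

Original tutorial website

2.1 N-Gram language model

In an n-gram language model, given a sequence of words $w$, we want to compute:

$$P\left(w_{i} \mid w_{i-1}, w_{i-2}, \ldots, w_{i-n+1}\right)$$

where $w_i$ is the i-th word of the sequence. In this example, we compute the loss function on the training examples and update the parameters with backpropagation.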
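To make the conditional probability concrete, here is a sketch that estimates it for a trigram model (n = 3) by simple counting. Note that this is the classical count-based estimate, not the neural model trained in the code that follows; the toy sentence and the function name `trigram_probability` are made up for illustration.

```python
def trigram_probability(tokens, context, target):
    """Estimate P(target | context) for a two-word context by counting."""
    context = tuple(context)
    context_count = 0
    trigram_count = 0
    for i in range(len(tokens) - 2):
        if (tokens[i], tokens[i + 1]) == context:
            context_count += 1
            if tokens[i + 2] == target:
                trigram_count += 1
    # An unseen context gets probability 0 in this unsmoothed sketch.
    return trigram_count / context_count if context_count else 0.0


tokens = "the dog ate the apple and the dog ate the bone".split()

p_apple = trigram_probability(tokens, ["ate", "the"], "apple")
p_ate = trigram_probability(tokens, ["the", "dog"], "ate")
print(p_apple, p_ate)
```

The neural model replaces these counts with a learned function of the context embeddings, which lets it assign non-zero probability to contexts it has never seen verbatim.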

Full code

import torch
import torch.nn as nn
import torch.nn.functional as F
import torch.optim as optim

CONTEXT_SIZE = 2
EMBEDDING_DIM = 10

# Shakespeare's Sonnet 2
test_sentence = """When forty winters shall besiege thy brow,
And dig deep trenches in thy beauty's field,
Thy youth's proud livery so gazed on now,
Will be a totter'd weed of small worth held:
Then being asked, where all thy beauty lies,
Where all the treasure of thy lusty days;
To say, within thine own deep sunken eyes,
Were an all-eating shame, and thriftless praise.
How much more praise deserv'd thy beauty's use,
If thou couldst answer 'This fair child of mine
Shall sum my count, and make my old excuse,'
Proving his beauty by succession thine!
This were to be new made when thou art old,
And see thy blood warm when thou feel'st it cold.""".split()

# Build a list of tuples, each of the form ([word_i-2, word_i-1], target word).
trigrams = [([test_sentence[i], test_sentence[i + 1]], test_sentence[i + 2])
            for i in range(len(test_sentence) - 2)]
print(trigrams[:3])  # print the first 3 to see what they look like

vocab = set(test_sentence)
word_to_ix = {word: i for i, word in enumerate(vocab)}


class NGramLanguageModeler(nn.Module):

    def __init__(self, vocab_size, embedding_dim, context_size):
        super(NGramLanguageModeler, self).__init__()
        self.embeddings = nn.Embedding(vocab_size, embedding_dim)    # vocab_size x 10
        self.linear1 = nn.Linear(context_size * embedding_dim, 128)  # 2 * 10 -> 128
        self.linear2 = nn.Linear(128, vocab_size)                    # 128 -> vocab_size

    def forward(self, inputs):
        embeds = self.embeddings(inputs).view((1, -1))  # 1 x (context_size * 10)
        output = self.linear1(embeds)                   # 1 x 128
        output = F.relu(output)
        output = self.linear2(output)                   # 1 x vocab_size
        log_probs = F.log_softmax(output, dim=1)
        return log_probs                                # 1 x vocab_size


losses = []
loss_function = nn.NLLLoss()
model = NGramLanguageModeler(len(vocab), EMBEDDING_DIM, CONTEXT_SIZE)
optimizer = optim.SGD(model.parameters(), lr=0.001)

for epoch in range(10):
    total_loss = 0
    for context, target in trigrams:
        # Prepare the model input: turn the words into integer indices
        # and wrap them in a tensor.
        context_idxs = torch.tensor([word_to_ix[w] for w in context], dtype=torch.long)

        # Recall that torch accumulates gradients; zero out the gradients
        # from the old instance before passing in a new one.
        model.zero_grad()

        # Forward pass: log probabilities over the next word.
        log_probs = model(context_idxs)

        # Compute the loss (the target word also has to be wrapped in a tensor).
        loss = loss_function(log_probs, torch.tensor([word_to_ix[target]], dtype=torch.long))

        # Backward pass to compute gradients, then update the parameters.
        loss.backward()
        optimizer.step()

        # tensor.item() returns the value as a plain Python number.
        total_loss += loss.item()
    losses.append(total_loss)

print(losses)  # the loss decreases with every pass over the training data

Results

[(['When', 'forty'], 'winters'), (['forty', 'winters'], 'shall'), (['winters', 'shall'], 'besiege')]

[542.3591945171356, 539.7471482753754, 537.1517448425293, 534.5717997550964, 532.0062839984894, 529.4537584781647, 526.9119682312012, 524.3817772865295, 521.8613884449005, 519.3491265773773]

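2.2 Computing word embeddings: continuous bag-of-words (CBOW)

The continuous bag-of-words (CBOW) model is used frequently in deep learning for NLP. It is a model that tries to predict a target word from the few words of context before and after it. This differs from language modeling, because CBOW is not sequential and does not have to be probabilistic. CBOW is typically used to train word embeddings quickly, and these embeddings are then used to initialize the embeddings of a more complicated model. This is usually referred to as pretraining embeddings. It almost always helps performance by a couple of percentage points.

The CBOW model is as follows. Given a target word $w_i$ and a window of $N$ words on each side, $w_{i-1}, \ldots, w_{i-N}$ and $w_{i+1}, \ldots, w_{i+N}$, and referring to all context words collectively as $C$, CBOW tries to minimize:

$$-\log p\left(w_{i} \mid C\right) = -\log \operatorname{Softmax}\left(A\left(\sum_{w \in C} q_{w}\right) + b\right)$$

where $q_w$ is the embedding of word $w$.

In PyTorch, implement this model by filling in the class below. Two things to keep in mind:

- Think about which parameters you need to define.
- Make sure you know the shape that each operation expects and produces; use .view() if you need to reshape.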
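One possible way to fill in the class is sketched below. It is not the tutorial's reference solution: the constructor arguments `vocab_size` and `embedding_dim`, the embedding size of 10, the fixed seed, and the short toy sentence are all assumptions made for illustration. The sketch sums the context embeddings $q_w$, applies the affine map $A(\cdot) + b$, and returns log softmax scores, matching the objective above.

```python
import torch
import torch.nn as nn
import torch.nn.functional as F


class CBOW(nn.Module):
    """Sketch of a CBOW model: sum context embeddings, affine map, log softmax."""

    def __init__(self, vocab_size, embedding_dim):
        super().__init__()
        self.embeddings = nn.Embedding(vocab_size, embedding_dim)  # q_w
        self.linear = nn.Linear(embedding_dim, vocab_size)         # A and b

    def forward(self, inputs):
        # inputs: 1-D LongTensor holding the indices of the context words
        summed = self.embeddings(inputs).sum(dim=0).view(1, -1)  # (1, embedding_dim)
        return F.log_softmax(self.linear(summed), dim=1)         # (1, vocab_size)


torch.manual_seed(0)  # assumed seed, only for repeatable initial weights

tokens = "we are about to study the idea of a computational process".split()
word_to_ix = {w: i for i, w in enumerate(sorted(set(tokens)))}

model = CBOW(len(word_to_ix), embedding_dim=10)

# Two words on each side of the target word "about".
context = torch.tensor([word_to_ix[w] for w in ["we", "are", "to", "study"]],
                       dtype=torch.long)
target = torch.tensor([word_to_ix["about"]], dtype=torch.long)

log_probs = model(context)
loss = F.nll_loss(log_probs, target)  # equals -log p(w_i | C)
print(log_probs.shape, loss.item())
```

Training would then follow the same loop as the N-Gram model: zero the gradients, run the forward pass, compute the loss, call `loss.backward()`, and step the optimizer.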

Full code

import torch
import torch.nn as nn

CONTEXT_SIZE = 2  # two words to the left, two to the right

raw_text = """We are about to study the idea of a computational process.
Computational processes are abstract beings that inhabit computers.
As they evolve, processes manipulate other abstract things called data.
The evolution of a process is directed by a pattern of rules
called a program. People create programs to direct processes. In effect,
we conjure the spirits of the computer with our spells.""".split()

# Applying set() to raw_text removes duplicate words.
vocab = set(raw_text)
vocab_size = len(vocab)

word_to_ix = {word: i for i, word in enumerate(vocab)}

# Each example is ([two words before, two words after], target word).
data = []
for i in range(2, len(raw_text) - 2):
    context = [raw_text[i - 2], raw_text[i - 1],
               raw_text[i + 1], raw_text[i + 2]]
    target = raw_text[i]
    data.append((context, target))
print(data[:5])


class CBOW(nn.Module):
    # Left unimplemented here: filling in this class is the exercise.

    def __init__(self):
        pass

    def forward(self, inputs):
        pass


# Create your model and train it. This helper prepares the data
# so that it is ready to be used by your module.
def make_context_vector(context, word_to_ix):
    idxs = [word_to_ix[w] for w in context]
    return torch.tensor(idxs, dtype=torch.long)


x = make_context_vector(data[0][0], word_to_ix)  # example
print(x)

Results

[(['We', 'are', 'to', 'study'], 'about'), (['are', 'about', 'study', 'the'], 'to'), (['about', 'to', 'the', 'idea'], 'study'), (['to', 'study', 'idea', 'of'], 'the'), (['study', 'the', 'of', 'a'], 'idea')]

tensor([ 9, 40, 34, 0])

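This is only an example: it shows how the context/target pairs and a context index tensor are built. The CBOW model itself can be written along the lines of the BOW classifier above.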

3. Sequence models and Long Short-Term Memory (LSTM) networks

Original tutorial website

Build a new dictionary word_to_ix:

{'The': 0, 'dog': 1, 'ate': 2, 'the': 3, 'apple': 4, 'Everybody': 5, 'read': 6, 'that': 7, 'book': 8}

Running import matplotlib.pyplot as plt raised a pop-up error:

This application failed to start because it could not find or load the Qt Platforms

Fix: upgrade pyqt5:

pip install -U pyqt5

Output:

ERROR: pip's dependency resolver does not currently take into account all the packages that are installed. This behaviour is the source of the following dependency conflicts.

spyder 4.2.3 requires pyqt5<5.13, but you have pyqt5 5.15.4 which is incompatible.

pyqt5-tools 5.15.2.3.0.2 requires pyqt5==5.15.2, but you have pyqt5 5.15.4 which is incompatible.

pyqt5-plugins 5.15.2.2.0.1 requires pyqt5==5.15.2, but you have pyqt5 5.15.4 which is incompatible.

Successfully installed PyQt5-Qt5-5.15.2 pyqt5-5.15.4

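Running the program again raised another error:

OMP: Error #15: Initializing libiomp5md.dll, but found libiomp5md.dll already initialized.

Attempted fix:

conda install nomkl

This did not work. The workaround was to locate the libiomp5md.dll file and move it to another folder.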

完整代码

import matplotlib.pyplot as plt

training_data = [

("The dog ate the apple".split(), ["DET", "NN", "V", "DET", "NN"]),

("Everybody read that book".split(), ["NN", "V", "DET", "NN"])

]

word_to_ix = {}

for sent, tags in training_data:

for word in sent:

if word not in word_to_ix:

word_to_ix[word] = len(word_to_ix)

tag_to_ix = {"DET": 0, "NN": 1, "V": 2}

EMBEDDING_DIM = 6 # 实际中通常使用更大的维度如32维, 64维.

HIDDEN_DIM = 6 # 这里我们使用小的维度, 为了方便查看训练过程中权重的变化.

import torch

import torch.nn as nn

import torch.nn.functional as F

class LSTMTagger(nn.Module):

def __init__(self, embedding_dim, hidden_dim, vocab_size, tagset_size):

super(LSTMTagger, self).__init__()

self.hidden_dim = hidden_dim

self.word_embeddings = nn.Embedding(vocab_size, embedding_dim)

self.lstm = nn.LSTM(embedding_dim, hidden_dim)

self.hidden2tag = nn.Linear(hidden_dim, tagset_size) # 线性层将隐藏状态空间映射到标注空间

self.hidden = self.init_hidden()

def init_hidden(self): # 开始并没有隐藏状态所以我们要先初始化一个

return (torch.zeros(1, 1, self.hidden_dim), # num_layers * num_directions, batch, hidden_size

torch.zeros(1, 1, self.hidden_dim))

def forward(self, sentence):

embeds = self.word_embeddings(sentence) # seq_len * embedding_dim

lstm_out, self.hidden = self.lstm(embeds.view(len(sentence), 1, -1), self.hidden) # seq_len * 1 * 6

tag_space = self.hidden2tag(lstm_out.view(len(sentence), -1)) # seq_len * 6 → seq_len * 3

tag_scores = F.log_softmax(tag_space, dim=1)

return tag_scores

model = LSTMTagger(EMBEDDING_DIM, HIDDEN_DIM, len(word_to_ix), len(tag_to_ix))

loss_function = nn.NLLLoss()

optimizer = torch.optim.SGD(model.parameters(), lr=0.1)

def prepare_sequence(seq, to_ix):

idxs = [to_ix[w] for w in seq]

return torch.tensor(idxs, dtype=torch.long)

with torch.no_grad(): # 查看训练前的分数

inputs = prepare_sequence(training_data[0][0], word_to_ix) # 注意: 输出的 i,j 元素的值表示单词 i 的 j 标签的得分

tag_scores = model(inputs) # seq_len * 3

print(tag_scores)

losses = []

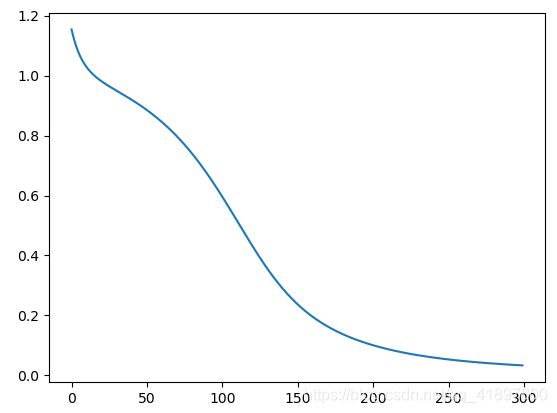

for epoch in range(300): # 实际情况下你不会训练300个周期, 此例中我们只是随便设了一个值

for sentence, tags in training_data:

model.zero_grad()

model.hidden = model.init_hidden()

sentence_in = prepare_sequence(sentence, word_to_ix)

targets = prepare_sequence(tags, tag_to_ix)

tag_scores = model(sentence_in)

loss = loss_function(tag_scores, targets)

loss.backward()

optimizer.step()

losses.append(loss.item())

with torch.no_grad(): # 查看训练后的得分

inputs = prepare_sequence(training_data[0][0], word_to_ix)

tag_scores = model(inputs)

print(tag_scores)

plt.figure()

plt.plot(losses)

plt.show()

Results

tensor([[-0.6698, -1.3377, -1.4884],

[-0.5620, -1.4076, -1.6863],

[-0.6160, -1.3408, -1.6182],

[-0.6227, -1.3323, -1.6112],

[-0.6392, -1.3581, -1.5364]])

tensor([[-0.2488, -1.8419, -2.7849],

[-4.1014, -0.0244, -4.8848],

[-1.5006, -3.2135, -0.3054],

[-0.0682, -3.3692, -3.4574],

[-3.1913, -0.0469, -5.3687]])

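4. Advanced: making dynamic decisions and the BI-LSTM CRF

Original tutorial website

PyTorch is a dynamic neural network toolkit. Another example of a dynamic toolkit is DyNet. The opposite is a static toolkit, such as Theano, Keras, or TensorFlow (in its graph mode).

A CRF (conditional random field) is a probabilistic graphical model that obeys the Markov property. It is an undirected graphical model that combines characteristics of the maximum entropy model and the hidden Markov model. In recent years it has achieved very good results on sequence labeling tasks such as word segmentation, part-of-speech tagging, and named entity recognition.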
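To give a feel for what a linear-chain CRF computes, the following plain-Python sketch scores one tag sequence, computes the log partition function with the forward algorithm, and checks it against brute-force enumeration of every tag sequence. All scores are made-up numbers; the convention that `trans[i][j]` is the score of moving from tag j to tag i, the special start and stop tags, and the -10000 entries for forbidden transitions mirror the code in this section.

```python
import itertools
import math


def log_sum_exp(values):
    """Numerically stable log(sum(exp(v) for v in values))."""
    m = max(values)
    return m + math.log(sum(math.exp(v - m) for v in values))


TAGS = ["B", "I", "O", "<START>", "<STOP>"]
START, STOP = 3, 4
NEG = -10000.0  # stands in for "forbidden"

# trans[i][j]: score of moving from tag j to tag i (hypothetical values).
trans = [
    [0.1, -0.3, 0.2, 0.5, NEG],    # into B
    [0.6, 0.4, -0.8, -0.5, NEG],   # into I
    [-0.2, 0.1, 0.3, 0.2, NEG],    # into O
    [NEG, NEG, NEG, NEG, NEG],     # into <START>: never allowed
    [0.0, 0.2, 0.1, -0.4, NEG],    # into <STOP>
]

# emit[t][tag]: emission score of each tag for word t (hypothetical values).
emit = [
    [1.0, 0.2, -0.5, -1.0, -1.0],
    [0.1, 0.9, 0.0, -1.0, -1.0],
    [-0.3, 0.2, 1.1, -1.0, -1.0],
]


def path_score(emit, trans, tags):
    """Score of one tag sequence: transitions plus emissions, then the stop transition."""
    score, prev = 0.0, START
    for feat, tag in zip(emit, tags):
        score += trans[tag][prev] + feat[tag]
        prev = tag
    return score + trans[STOP][prev]


def forward_log_partition(emit, trans):
    """Forward algorithm: log of the summed exp-scores of all tag sequences."""
    n = len(trans)
    alpha = [NEG] * n
    alpha[START] = 0.0
    for feat in emit:
        alpha = [log_sum_exp([alpha[prev] + trans[nxt][prev] for prev in range(n)])
                 + feat[nxt] for nxt in range(n)]
    return log_sum_exp([alpha[prev] + trans[STOP][prev] for prev in range(n)])


def brute_force_log_partition(emit, trans):
    """Same quantity, computed by enumerating every possible tag sequence."""
    n = len(trans)
    return log_sum_exp([path_score(emit, trans, tags)
                        for tags in itertools.product(range(n), repeat=len(emit))])


gold = [0, 1, 2]  # B I O
log_z = forward_log_partition(emit, trans)
nll = log_z - path_score(emit, trans, gold)  # negative log likelihood of the gold tags
print(log_z, brute_force_log_partition(emit, trans), nll)
```

The forward algorithm needs work proportional to sentence length times the square of the number of tags, whereas the brute-force sum grows exponentially with sentence length, which is why the dynamic program is used in training.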

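The underlying theory is explained here.

About torch.manual_seed(1): it sets the seed of the random number generator to a fixed value, so that later calls such as torch.rand() produce reproducible results.

About nn.Parameter: torch.nn.Parameter is a subclass of torch.Tensor whose main purpose is to serve as a trainable parameter of an nn.Module. It differs from a plain torch.Tensor in that an nn.Parameter assigned to a module is automatically treated as one of the module's trainable parameters, that is, it is added to the parameters() iterator, whereas a plain tensor in the module that is not an nn.Parameter does not appear in parameters().

Note that requires_grad defaults to True for an nn.Parameter object, meaning it is trainable; this is the opposite of the default for a torch.Tensor object.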
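Both points can be checked with a few lines of PyTorch. The sketch below uses a hypothetical module named `Toy`, invented for this illustration, with one nn.Parameter attribute and one plain tensor attribute.

```python
import torch
import torch.nn as nn


class Toy(nn.Module):
    """Hypothetical module with one registered parameter and one plain tensor."""

    def __init__(self):
        super().__init__()
        self.weight = nn.Parameter(torch.randn(2, 3))  # registered as a parameter
        self.plain = torch.randn(2, 3)                 # plain tensor, not registered


# Re-seeding with the same value reproduces the same random numbers.
torch.manual_seed(1)
a = torch.rand(2)
torch.manual_seed(1)
b = torch.rand(2)
print(torch.equal(a, b))

module = Toy()
names = [name for name, _ in module.named_parameters()]
print(names)                        # only the nn.Parameter attribute is listed
print(module.weight.requires_grad)  # default for nn.Parameter
print(module.plain.requires_grad)   # default for a plain tensor
```

Because only registered parameters are returned by `parameters()`, an optimizer built from `module.parameters()` would update `weight` but never touch `plain`.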

Code

import torch
import torch.autograd as autograd
import torch.nn as nn
import torch.optim as optim

torch.manual_seed(1)


def argmax(vec):
    # Return the argmax as a Python int.
    _, idx = torch.max(vec, 1)
    return idx.item()


def prepare_sequence(seq, to_ix):
    idxs = [to_ix[w] for w in seq]
    return torch.tensor(idxs, dtype=torch.long)


def log_sum_exp(vec):
    # Compute log sum exp in a numerically stable way for the forward algorithm.
    max_score = vec[0, argmax(vec)]
    max_score_broadcast = max_score.view(1, -1).expand(1, vec.size()[1])
    return max_score + \
        torch.log(torch.sum(torch.exp(vec - max_score_broadcast)))

class BiLSTM_CRF(nn.Module):

    def __init__(self, vocab_size, tag_to_ix, embedding_dim, hidden_dim):
        super(BiLSTM_CRF, self).__init__()
        self.embedding_dim = embedding_dim
        self.hidden_dim = hidden_dim
        self.vocab_size = vocab_size
        self.tag_to_ix = tag_to_ix
        self.tagset_size = len(tag_to_ix)

        self.word_embeds = nn.Embedding(vocab_size, embedding_dim)
        self.lstm = nn.LSTM(embedding_dim, hidden_dim // 2,
                            num_layers=1, bidirectional=True)

        # Maps the output of the LSTM into tag space.
        self.hidden2tag = nn.Linear(hidden_dim, self.tagset_size)

        # Matrix of transition parameters.
        # Entry i, j is the score of transitioning to i from j.
        self.transitions = nn.Parameter(
            torch.randn(self.tagset_size, self.tagset_size))

        # These two statements enforce the constraint that we never transition
        # to the start tag and never transition from the stop tag.
        self.transitions.data[tag_to_ix[START_TAG], :] = -10000
        self.transitions.data[:, tag_to_ix[STOP_TAG]] = -10000

        self.hidden = self.init_hidden()

    def init_hidden(self):
        return (torch.randn(2, 1, self.hidden_dim // 2),
                torch.randn(2, 1, self.hidden_dim // 2))

    def _forward_alg(self, feats):
        # Run the forward algorithm to compute the partition function.
        init_alphas = torch.full((1, self.tagset_size), -10000.)
        # START_TAG has all of the score.
        init_alphas[0][self.tag_to_ix[START_TAG]] = 0.

        # A plain tensor is enough here; autograd tracks the operations below.
        forward_var = init_alphas

        # Iterate through the sentence.
        for feat in feats:
            alphas_t = []  # The forward tensors at this timestep
            for next_tag in range(self.tagset_size):
                # Broadcast the emission score: it is the same
                # regardless of the previous tag.
                emit_score = feat[next_tag].view(
                    1, -1).expand(1, self.tagset_size)
                # The i-th entry of trans_score is the score of
                # transitioning to next_tag from i.
                trans_score = self.transitions[next_tag].view(1, -1)
                # The i-th entry of next_tag_var is the value of the edge
                # (i -> next_tag) before we do log-sum-exp.
                next_tag_var = forward_var + trans_score + emit_score
                # The forward variable for this tag is the log-sum-exp
                # of all the scores.
                alphas_t.append(log_sum_exp(next_tag_var).view(1))
            forward_var = torch.cat(alphas_t).view(1, -1)
        terminal_var = forward_var + self.transitions[self.tag_to_ix[STOP_TAG]]
        alpha = log_sum_exp(terminal_var)
        return alpha

    def _get_lstm_features(self, sentence):
        self.hidden = self.init_hidden()
        embeds = self.word_embeds(sentence).view(len(sentence), 1, -1)
        lstm_out, self.hidden = self.lstm(embeds, self.hidden)
        lstm_out = lstm_out.view(len(sentence), self.hidden_dim)
        lstm_feats = self.hidden2tag(lstm_out)
        return lstm_feats

    def _score_sentence(self, feats, tags):
        # Gives the score of a provided tag sequence
        score = torch.zeros(1)
        tags = torch.cat([torch.tensor([self.tag_to_ix[START_TAG]], dtype=torch.long), tags])
        for i, feat in enumerate(feats):
            score = score + \
                self.transitions[tags[i + 1], tags[i]] + feat[tags[i + 1]]
        score = score + self.transitions[self.tag_to_ix[STOP_TAG], tags[-1]]
        return score

    def _viterbi_decode(self, feats):
        backpointers = []

        # Initialize the viterbi variables in log space
        init_vvars = torch.full((1, self.tagset_size), -10000.)
        init_vvars[0][self.tag_to_ix[START_TAG]] = 0

        # forward_var at step i holds the viterbi variables for step i-1
        forward_var = init_vvars
        for feat in feats:
            bptrs_t = []  # holds the backpointers for this step
            viterbivars_t = []  # holds the viterbi variables for this step

            for next_tag in range(self.tagset_size):
                # next_tag_var[i] holds the viterbi variable for tag i at the
                # previous step, plus the score of transitioning from tag i
                # to next_tag. We don't include the emission scores here
                # because the max does not depend on them (we add them below).
                next_tag_var = forward_var + self.transitions[next_tag]
                best_tag_id = argmax(next_tag_var)
                bptrs_t.append(best_tag_id)
                viterbivars_t.append(next_tag_var[0][best_tag_id].view(1))
            # Now add in the emission scores, and assign forward_var to the set
            # of viterbi variables we just computed.
            forward_var = (torch.cat(viterbivars_t) + feat).view(1, -1)
            backpointers.append(bptrs_t)

        # Transition to STOP_TAG
        terminal_var = forward_var + self.transitions[self.tag_to_ix[STOP_TAG]]
        best_tag_id = argmax(terminal_var)
        path_score = terminal_var[0][best_tag_id]

        # Follow the back pointers to decode the best path.
        best_path = [best_tag_id]
        for bptrs_t in reversed(backpointers):
            best_tag_id = bptrs_t[best_tag_id]
            best_path.append(best_tag_id)
        # Pop off the start tag (we don't want to return that to the caller).
        start = best_path.pop()
        assert start == self.tag_to_ix[START_TAG]  # Sanity check
        best_path.reverse()
        return path_score, best_path

    def neg_log_likelihood(self, sentence, tags):
        feats = self._get_lstm_features(sentence)
        forward_score = self._forward_alg(feats)
        gold_score = self._score_sentence(feats, tags)
        return forward_score - gold_score

    def forward(self, sentence):  # don't confuse this with _forward_alg above.
        # Get the emission scores from the BiLSTM.
        lstm_feats = self._get_lstm_features(sentence)

        # Find the best path, given the features.
        score, tag_seq = self._viterbi_decode(lstm_feats)
        return score, tag_seq

START_TAG = "<START>"
STOP_TAG = "<STOP>"
EMBEDDING_DIM = 5
HIDDEN_DIM = 4

# Make up some training data.
training_data = [(
    "the wall street journal reported today that apple corporation made money".split(),
    "B I I I O O O B I O O".split()
), (
    "georgia tech is a university in georgia".split(),
    "B I O O O O B".split()
)]

word_to_ix = {}
for sentence, tags in training_data:
    for word in sentence:
        if word not in word_to_ix:
            word_to_ix[word] = len(word_to_ix)

tag_to_ix = {"B": 0, "I": 1, "O": 2, START_TAG: 3, STOP_TAG: 4}

model = BiLSTM_CRF(len(word_to_ix), tag_to_ix, EMBEDDING_DIM, HIDDEN_DIM)
optimizer = optim.SGD(model.parameters(), lr=0.01, weight_decay=1e-4)

# Check predictions before training.
with torch.no_grad():
    precheck_sent = prepare_sequence(training_data[0][0], word_to_ix)
    precheck_tags = torch.tensor([tag_to_ix[t] for t in training_data[0][1]], dtype=torch.long)
    print(model(precheck_sent))

# Make sure prepare_sequence from earlier in the LSTM section is loaded.
for epoch in range(300):  # again, normally you would NOT do 300 epochs, it is toy data
    for sentence, tags in training_data:
        # Step 1. Remember that PyTorch accumulates gradients.
        # We need to clear them out before each instance.
        model.zero_grad()

        # Step 2. Get our inputs ready for the network, that is,
        # turn them into tensors of word indices.
        sentence_in = prepare_sequence(sentence, word_to_ix)
        targets = torch.tensor([tag_to_ix[t] for t in tags], dtype=torch.long)

        # Step 3. Run the forward pass.
        loss = model.neg_log_likelihood(sentence_in, targets)

        # Step 4. Compute the gradients and update the parameters
        # by calling optimizer.step().
        loss.backward()
        optimizer.step()

# Check predictions after training.
with torch.no_grad():
    precheck_sent = prepare_sequence(training_data[0][0], word_to_ix)
    print(model(precheck_sent))
# We got it!

Results

(tensor(2.6907), [1, 2, 2, 2, 2, 2, 2, 2, 2, 2, 1])

(tensor(20.4906), [0, 1, 1, 1, 2, 2, 2, 0, 1, 2, 2])

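Summary

- The bag-of-words (BOW) model mainly counts the words that occur.
- The N-Gram language model predicts a word from the few words before it.
- CBOW predicts a word from the few words before and after it.
- The LSTM tagger follows the same training workflow as the earlier models.
- BI-LSTM CRF, and the Viterbi variables from dynamic programming, were new to me. The code is copied and pasted, I do not understand the theory yet, and I am skipping it for now.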
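For readers who are likewise new to Viterbi decoding, here is a minimal plain-Python sketch of the idea, checked against brute-force search. It is a simplification of the tutorial code rather than a copy: instead of start and stop tags inside the transition matrix it uses separate `start` and `stop` score vectors, and all scores are made-up numbers. As in the code above, `trans[i][j]` is the score of moving from tag j to tag i.

```python
import itertools


def sequence_score(emit, trans, start, stop, tags):
    """Total score of one tag sequence."""
    score = start[tags[0]] + emit[0][tags[0]]
    for t in range(1, len(tags)):
        score += trans[tags[t]][tags[t - 1]] + emit[t][tags[t]]
    return score + stop[tags[-1]]


def viterbi(emit, trans, start, stop):
    """Return (best score, best tag sequence) by dynamic programming."""
    n = len(start)
    # score[tag]: best score of any partial sequence ending in `tag`.
    score = [start[tag] + emit[0][tag] for tag in range(n)]
    backpointers = []
    for feat in emit[1:]:
        # For each next tag, remember which previous tag gives the best score.
        best_prev = [max(range(n), key=lambda p: score[p] + trans[nxt][p])
                     for nxt in range(n)]
        score = [score[best_prev[nxt]] + trans[nxt][best_prev[nxt]] + feat[nxt]
                 for nxt in range(n)]
        backpointers.append(best_prev)
    last = max(range(n), key=lambda tag: score[tag] + stop[tag])
    best_score = score[last] + stop[last]
    # Follow the backpointers from the last tag to the first.
    path = [last]
    for best_prev in reversed(backpointers):
        path.append(best_prev[path[-1]])
    path.reverse()
    return best_score, path


# Hypothetical scores for three tags (B, I, O) and a three-word sentence.
emit = [[1.0, 0.2, -0.5],
        [0.1, 0.9, 0.0],
        [-0.3, 0.2, 1.1]]
trans = [[0.1, -0.3, 0.2],
         [0.6, 0.4, -0.8],
         [-0.2, 0.1, 0.3]]
start = [0.5, -0.5, 0.2]
stop = [0.0, 0.2, 0.1]

best_score, best_path = viterbi(emit, trans, start, stop)

# Brute force: score every possible tag sequence and keep the best one.
brute_score = max(sequence_score(emit, trans, start, stop, tags)
                  for tags in itertools.product(range(3), repeat=len(emit)))
print(best_score, best_path, brute_score)
```

The "Viterbi variables" in the tutorial code play the role of `score` here: at each step they hold, for every tag, the best score of any partial path ending in that tag, and the backpointers record how that best path was reached.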