[PyTorch Learning] -- Building Models -- torch.nn

Tutorial video: https://www.bilibili.com/video/BV1hE411t7RN?p=1 (includes environment setup)

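Building a neural network

Once the data has been loaded and preprocessed, the next step is to feed it into a neural network. PyTorch already provides a base class for models: to build our own model, we only need to subclass nn.Module (from the torch.nn package), call the parent initializer in __init__, and override forward. See the official documentation.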

torch.nn

    import torch
    from torch import nn  # import the neural network package


    class MyModule(nn.Module):  # define our own model class by subclassing nn.Module
        def __init__(self):
            # the parent initializer must be called via super().__init__()
            super().__init__()

        def forward(self, input):  # the computation the network performs; must be overridden
            output = input + 1  # suppose this network simply adds one to its input
            return output  # return the result


    mymodule = MyModule()  # instantiate the model
    x = torch.tensor(1.0)  # create a tensor
    output = mymodule(x)  # feed x into the model
    print(output)  # print the result

Output:

    tensor(2.)
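Note that the example calls the model instance directly rather than calling forward. As a minimal sketch of why both give the same result here: nn.Module defines __call__, which runs any registered hooks and then dispatches to forward. The class name AddOne is a hypothetical stand-in for MyModule above.

```python
import torch
from torch import nn


class AddOne(nn.Module):  # hypothetical stand-in for MyModule above
    def __init__(self):
        super().__init__()

    def forward(self, x):
        return x + 1


module = AddOne()
x = torch.tensor(1.0)

via_call = module(x)             # preferred: goes through nn.Module.__call__
via_forward = module.forward(x)  # also works, but bypasses any registered hooks

print(via_call, via_forward)               # tensor(2.) tensor(2.)
print(torch.equal(via_call, via_forward))  # True
```

Calling the instance is the usual convention, since it keeps hooks working if any are added later.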

Creating a convolutional neural network

The theory behind convolution is covered in the tutorial video; this post focuses on the code:

    import torch
    import torchvision
    from torch import nn
    from torch.nn import Conv2d  # convolution layer; "2d" means two-dimensional
    from torch.utils.data import DataLoader

    # Load the data (use download=True if the dataset is not yet present in root)
    dataset = torchvision.datasets.CIFAR10(
        root="./CIFAR10_Dataset",
        train=False,
        transform=torchvision.transforms.ToTensor(),
        download=False,
    )
    dataloader = DataLoader(dataset, batch_size=64)


    # Define the network
    class Conv(nn.Module):
        def __init__(self):
            super(Conv, self).__init__()  # initialize the parent class
            # instantiate a convolution layer
            self.conv1 = Conv2d(in_channels=3, out_channels=6, kernel_size=3, stride=1, padding=0)

        def forward(self, x):
            x = self.conv1(x)
            return x


    conv = Conv()

    for data in dataloader:
        imgs, targets = data
        output = conv(imgs)
        print("Original:", imgs.shape)
        print("Conv: ", output.shape)

Output (the values are batch size, number of channels, height, and width):

    Original: torch.Size([64, 3, 32, 32])
    Conv:  torch.Size([64, 6, 30, 30])
    ···
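The change from 32 to 30 in the spatial dimensions follows from the output-size formula given in the Conv2d documentation: out = floor((in + 2 * padding - dilation * (kernel_size - 1) - 1) / stride + 1). A small sketch of that arithmetic, where the helper name conv2d_out_size is hypothetical and not part of PyTorch:

```python
def conv2d_out_size(size, kernel_size, stride=1, padding=0, dilation=1):
    """Output length of a 2D convolution along one spatial dimension."""
    return (size + 2 * padding - dilation * (kernel_size - 1) - 1) // stride + 1


# Same settings as self.conv1 above: kernel_size=3, stride=1, padding=0
out = conv2d_out_size(32, kernel_size=3, stride=1, padding=0)
print(out)  # 30
```

The channel count changes from 3 to 6 simply because out_channels is set to 6.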

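We can inspect the result with TensorBoard. The convolution output has 6 channels, which add_images cannot display as images, so we use reshape to turn it into 3-channel images first: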

    from torch.utils.tensorboard import SummaryWriter

    writer = SummaryWriter("logs")
    step = 0

    for data in dataloader:
        imgs, targets = data
        output = conv(imgs)
        print("Original:", imgs.shape)  # torch.Size([64, 3, 32, 32])
        print("Conv: ", output.shape)   # torch.Size([64, 6, 30, 30])
        writer.add_images("Input", imgs, step)
        # Turn torch.Size([64, 6, 30, 30]) into [xxx, 3, 30, 30].
        # Passing -1 lets PyTorch infer the batch size; the extra channels become new batch entries.
        output = torch.reshape(output, (-1, 3, 30, 30))
        writer.add_images("Output", output, step)
        step += 1

    writer.close()  # flush pending events to disk

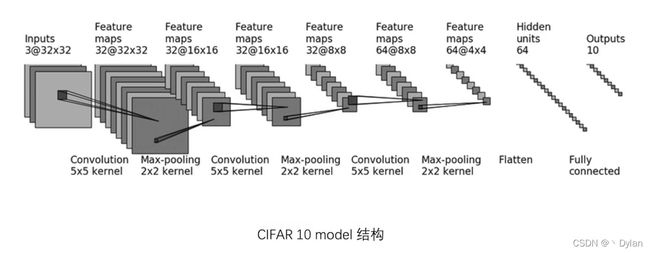
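To make the effect of the reshape above concrete, here is a minimal sketch using a random tensor with the same shape as the convolution output (the tensor itself is made up for illustration). It suggests that each 6-channel sample is split into two 3-channel "images", which doubles the batch dimension.

```python
import torch

output = torch.randn(64, 6, 30, 30)  # stand-in for the convolution output
reshaped = torch.reshape(output, (-1, 3, 30, 30))

print(reshaped.shape)  # torch.Size([128, 3, 30, 30])

# Channels 0-2 of sample 0 become image 0, channels 3-5 become image 1
first_half_matches = torch.equal(reshaped[0], output[0, 0:3])
second_half_matches = torch.equal(reshaped[1], output[0, 3:6])
print(first_half_matches, second_half_matches)  # True True
```

The resulting pictures are only a visualization aid: the three channels shown together are feature maps, not real RGB colors.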

Example

    import torch
    from torch import nn
    from torch.nn import Conv2d, MaxPool2d, Flatten, Linear  # the other layers are used much like Conv2d


    # Build the neural network
    class Tudui(nn.Module):
        def __init__(self):
            super(Tudui, self).__init__()
            self.conv1 = Conv2d(3, 32, 5, padding=2)  # padding and stride are worked out from the figure
            self.maxpool1 = MaxPool2d(2)
            self.conv2 = Conv2d(32, 32, 5, padding=2)
            self.maxpool2 = MaxPool2d(2)
            self.conv3 = Conv2d(32, 64, 5, padding=2)
            self.maxpool3 = MaxPool2d(2)
            self.flatten = Flatten()
            self.linear1 = Linear(1024, 64)
            self.linear2 = Linear(64, 10)

        def forward(self, x):
            x = self.conv1(x)
            x = self.maxpool1(x)
            x = self.conv2(x)
            x = self.maxpool2(x)
            x = self.conv3(x)
            x = self.maxpool3(x)
            x = self.flatten(x)
            x = self.linear1(x)
            x = self.linear2(x)
            return x


    tudui = Tudui()
    print(tudui)

Output, showing the network we built:

    Tudui(
      (conv1): Conv2d(3, 32, kernel_size=(5, 5), stride=(1, 1), padding=(2, 2))
      (maxpool1): MaxPool2d(kernel_size=2, stride=2, padding=0, dilation=1, ceil_mode=False)
      (conv2): Conv2d(32, 32, kernel_size=(5, 5), stride=(1, 1), padding=(2, 2))
      (maxpool2): MaxPool2d(kernel_size=2, stride=2, padding=0, dilation=1, ceil_mode=False)
      (conv3): Conv2d(32, 64, kernel_size=(5, 5), stride=(1, 1), padding=(2, 2))
      (maxpool3): MaxPool2d(kernel_size=2, stride=2, padding=0, dilation=1, ceil_mode=False)
      (flatten): Flatten(start_dim=1, end_dim=-1)
      (linear1): Linear(in_features=1024, out_features=64, bias=True)
      (linear2): Linear(in_features=64, out_features=10, bias=True)
    )
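The values padding=2 and in_features=1024 can be worked out by hand. The sketch below assumes, as the layer definitions suggest, that each convolution is meant to preserve the 32x32 spatial size (stride 1, dilation 1) and that each MaxPool2d(2) halves it. The variable names are chosen for illustration only.

```python
kernel_size, stride, dilation = 5, 1, 1

# For stride 1 and an odd kernel, this padding keeps the spatial size unchanged
padding = dilation * (kernel_size - 1) // 2

size = 32  # CIFAR10 images are 32x32
for _ in range(3):  # three conv + max-pool stages
    # Conv2d output-size formula; with the padding above the size is preserved
    size = (size + 2 * padding - dilation * (kernel_size - 1) - 1) // stride + 1
    size //= 2  # MaxPool2d(2) halves height and width

flat_features = 64 * size * size  # 64 channels after the last convolution
print(padding, size, flat_features)  # 2 4 1024
```

So the spatial size goes 32, 16, 8, 4, and flattening 64 channels of 4x4 gives the 1024 inputs expected by the first Linear layer.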

Check that the network is wired correctly:

    input = torch.ones((64, 3, 32, 32))  # create a dummy input tensor with the shape of a CIFAR10 batch
    output = tudui(input)
    print(output.shape)

Output:

    torch.Size([64, 10])

The result is correct: one row of 10 class scores for each of the 64 inputs.
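A dummy-input check like this is useful because a wrong layer size only shows up when data flows through the network. As a hedged illustration, the sketch below feeds a tensor shaped like the output of the third max-pool into a deliberately wrong Linear layer (1000 inputs instead of 1024, a made-up mistake) and catches the resulting error.

```python
import torch
from torch import nn

features = torch.ones((64, 64, 4, 4))  # shape after the third max-pool
flat = nn.Flatten()(features)
print(flat.shape)  # torch.Size([64, 1024])

wrong_linear = nn.Linear(1000, 64)  # deliberately wrong: 1024 features arrive

try:
    wrong_linear(flat)
    error_message = None
except RuntimeError as err:  # PyTorch reports the shape mismatch at call time
    error_message = str(err)

print(error_message)
```

Constructing the layers never fails on its own, which is why running one forward pass with torch.ones is a cheap sanity check.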

Simplifying the network with Sequential()

    # Import Sequential
    import torch
    from torch import nn
    from torch.nn import Conv2d, MaxPool2d, Flatten, Linear, Sequential
    from torch.utils.tensorboard import SummaryWriter


    class Tudui(nn.Module):
        def __init__(self):
            super(Tudui, self).__init__()
            self.model1 = Sequential(  # just list the layers inside Sequential, in order
                Conv2d(3, 32, 5, padding=2),
                MaxPool2d(2),
                Conv2d(32, 32, 5, padding=2),
                MaxPool2d(2),
                Conv2d(32, 64, 5, padding=2),
                MaxPool2d(2),
                Flatten(),
                Linear(1024, 64),
                Linear(64, 10),
            )

        def forward(self, x):
            x = self.model1(x)
            return x


    tudui = Tudui()
    input = torch.ones((64, 3, 32, 32))
    output = tudui(input)
    print(output.shape)

    writer = SummaryWriter("logs_seq")
    writer.add_graph(tudui, input)  # use TensorBoard to inspect the network structure
    writer.close()
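Besides shortening forward, a Sequential container can be iterated and indexed like a list, which gives a quick way to print the shape after every layer without TensorBoard. The following is a minimal sketch using the same layer list as above; the variable names model and shapes are chosen for illustration.

```python
import torch
from torch import nn

model = nn.Sequential(
    nn.Conv2d(3, 32, 5, padding=2),
    nn.MaxPool2d(2),
    nn.Conv2d(32, 32, 5, padding=2),
    nn.MaxPool2d(2),
    nn.Conv2d(32, 64, 5, padding=2),
    nn.MaxPool2d(2),
    nn.Flatten(),
    nn.Linear(1024, 64),
    nn.Linear(64, 10),
)

x = torch.ones((64, 3, 32, 32))
shapes = []
for layer in model:  # layers are visited in the order they were listed
    x = layer(x)
    shapes.append(tuple(x.shape))
    print(f"{layer.__class__.__name__:<10} -> {tuple(x.shape)}")
```

To view the graph written by add_graph, run `tensorboard --logdir=logs_seq` in a terminal and open the Graphs tab in the browser.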