【PyTorch】A Complete Model Training Routine (7)

Using CIFAR10 as an example:

model.py

```python
# -*- coding: utf-8 -*-
# Author: 小土堆
# WeChat Official Account: 土堆碎念
import torch
from torch import nn


# Build the neural network
class Tudui(nn.Module):
    def __init__(self):
        super(Tudui, self).__init__()
        # Conv2d arguments: in_channels, out_channels, kernel_size, stride, padding
        self.model = nn.Sequential(
            nn.Conv2d(3, 32, 5, 1, 2),
            nn.MaxPool2d(2),
            nn.Conv2d(32, 32, 5, 1, 2),
            nn.MaxPool2d(2),
            nn.Conv2d(32, 64, 5, 1, 2),
            nn.MaxPool2d(2),
            nn.Flatten(),
            nn.Linear(64*4*4, 64),
            nn.Linear(64, 10)
        )

    def forward(self, x):
        x = self.model(x)
        return x


if __name__ == '__main__':
    tudui = Tudui()
    # Check that the network structure is correct
    input = torch.ones((64, 3, 32, 32))
    output = tudui(input)
    print(output.shape)  # torch.Size([64, 10])
```
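The `64*4*4` in the first `nn.Linear` comes from how each layer changes the 32x32 input. The pure-Python sketch below checks this with the standard output-size formula for `Conv2d`/`MaxPool2d`, assuming dilation 1 as in this model. The helper name `out_size` is illustrative only.

```python
# Output-size formula for Conv2d / MaxPool2d with dilation=1:
#   out = floor((in + 2 * padding - kernel_size) / stride) + 1
def out_size(size: int, kernel_size: int, stride: int, padding: int = 0) -> int:
    return (size + 2 * padding - kernel_size) // stride + 1


# Layers of Tudui that change the spatial size: (name, kernel_size, stride, padding).
# MaxPool2d(2) uses kernel_size=2, and its stride defaults to kernel_size (2), padding 0.
layers = [
    ("Conv2d(3, 32, 5, 1, 2)", 5, 1, 2),
    ("MaxPool2d(2)", 2, 2, 0),
    ("Conv2d(32, 32, 5, 1, 2)", 5, 1, 2),
    ("MaxPool2d(2)", 2, 2, 0),
    ("Conv2d(32, 64, 5, 1, 2)", 5, 1, 2),
    ("MaxPool2d(2)", 2, 2, 0),
]

size = 32  # CIFAR10 images are 32x32
sizes = [size]
for name, k, s, p in layers:
    size = out_size(size, k, s, p)
    sizes.append(size)
    print(f"{name:<24} -> {size}x{size}")

final_channels = 64  # out_channels of the last Conv2d
flattened = final_channels * size * size
print("Flatten ->", flattened)  # 64 * 4 * 4 = 1024
```

Each `Conv2d(..., 5, 1, 2)` keeps the size, because padding 2 compensates for the 5x5 kernel. Each `MaxPool2d(2)` halves it. The size therefore goes 32 → 16 → 8 → 4, and the flattened vector has 64 × 4 × 4 = 1024 features.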

train.py

```python
# -*- coding: utf-8 -*-
# Author: 小土堆
# WeChat Official Account: 土堆碎念
import torch
import torchvision
from torch import nn
from torch.utils.data import DataLoader
# from torch.utils.tensorboard import SummaryWriter  # needed if the writer lines are enabled

from model import *  # the Tudui network defined in model.py (external file)

# Prepare the datasets
# Training data
train_data = torchvision.datasets.CIFAR10(root="./data", train=True, transform=torchvision.transforms.ToTensor(),
                                          download=True)
# Test data
test_data = torchvision.datasets.CIFAR10(root="./data", train=False, transform=torchvision.transforms.ToTensor(),
                                         download=True)

# Dataset lengths
train_data_size = len(train_data)
test_data_size = len(test_data)
# e.g. if train_data_size were 10, this would print "Training set size: 10"
print("Training set size: {}".format(train_data_size))  # Training set size: 50000
print("Test set size: {}".format(test_data_size))  # Test set size: 10000

# Load the datasets with DataLoader
train_dataloader = DataLoader(train_data, batch_size=64)
test_dataloader = DataLoader(test_data, batch_size=64)

# Create the network model
tudui = Tudui()

# Loss function
loss_fn = nn.CrossEntropyLoss()

# Optimizer
# learning_rate = 0.01
# 1e-2 = 1 x 10^(-2) = 1/100 = 0.01
learning_rate = 1e-2
optimizer = torch.optim.SGD(tudui.parameters(), lr=learning_rate)

#########################
# Parameters for tracking training
#########################
# Number of training steps so far
total_train_step = 0
# Number of test rounds so far
total_test_step = 0
# Number of training epochs
epoch = 10

# Add TensorBoard
# writer = SummaryWriter("../logs_train")

# 1. The body of this for loop runs 10 times, once per epoch
for i in range(epoch):
    print("------- Epoch {} training starts -------".format(i+1))

    # 2. Training phase
    tudui.train()  # The code also runs without this call, so why include it?
    # It puts the model in training mode, which only changes the behaviour of
    # certain layers such as BatchNorm and Dropout. Tudui has neither, so it
    # makes no difference here, but calling it is good practice.

    # 3. Fetch batches from the dataloader
    for data in train_dataloader:
        imgs, targets = data
        outputs = tudui(imgs)
        loss = loss_fn(outputs, targets)

        # 4. Let the optimizer update the model
        optimizer.zero_grad()  # reset gradients
        loss.backward()  # backpropagation
        optimizer.step()  # update the parameters

        # 5. One training step done: increment the counter and log progress
        total_train_step = total_train_step + 1
        if total_train_step % 100 == 0:
            print("Training step: {}, Loss: {}".format(total_train_step, loss.item()))
            # writer.add_scalar("train_loss", loss.item(), total_train_step)

    # 6. Test phase
    # After every epoch, run the model over the test set to evaluate it
    tudui.eval()  # Also runs without this call; like train(), it only
    # matters for layers such as BatchNorm and Dropout
    total_test_loss = 0  # sum of the per-batch losses on the test set
    total_accuracy = 0  # number of correctly classified test samples
    # No gradients are needed, since the parameters are not updated here
    with torch.no_grad():
        # 7. Read the test data
        for data in test_dataloader:
            imgs, targets = data
            # Forward pass through the network
            outputs = tudui(imgs)
            # Compute the loss; note there is no backpropagation here
            loss = loss_fn(outputs, targets)
            total_test_loss = total_test_loss + loss.item()
            # Accuracy: count the correct predictions in this batch
            accuracy = (outputs.argmax(1) == targets).sum().item()
            total_accuracy = total_accuracy + accuracy
    print("Total loss on the test set: {}".format(total_test_loss))
    print("Accuracy on the test set: {}".format(total_accuracy/test_data_size))
    # writer.add_scalar("test_loss", total_test_loss, total_test_step)
    # writer.add_scalar("test_accuracy", total_accuracy/test_data_size, total_test_step)
    total_test_step = total_test_step + 1

    # 8. Save the model after every epoch
    # Method 1: save the whole model
    torch.save(tudui, "tudui_{}.pth".format(i))
    # Method 2: save only the parameters (state_dict). Use one method or the other:
    # with the same file name, method 2 would overwrite the file written by method 1.
    # torch.save(tudui.state_dict(), "tudui_{}.pth".format(i))
    print("Model saved")

# writer.close()
```
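You can reload the checkpoints written at the end of each epoch for inference. The sketch below assumes method 2 (saving the state_dict). It rebuilds the network, loads the parameters, switches to `eval()`, and predicts under `torch.no_grad()`. The class is copied from model.py so the snippet runs on its own. A temporary directory and a random batch stand in for a real checkpoint and real CIFAR10 images.

```python
import os
import tempfile

import torch
from torch import nn


# Same architecture as model.py, repeated so this sketch is self-contained.
class Tudui(nn.Module):
    def __init__(self):
        super().__init__()
        self.model = nn.Sequential(
            nn.Conv2d(3, 32, 5, 1, 2),
            nn.MaxPool2d(2),
            nn.Conv2d(32, 32, 5, 1, 2),
            nn.MaxPool2d(2),
            nn.Conv2d(32, 64, 5, 1, 2),
            nn.MaxPool2d(2),
            nn.Flatten(),
            nn.Linear(64 * 4 * 4, 64),
            nn.Linear(64, 10),
        )

    def forward(self, x):
        return self.model(x)


trained = Tudui()  # stands in for the model after training

with tempfile.TemporaryDirectory() as tmp:
    path = os.path.join(tmp, "tudui_0.pth")
    # Method 2: save only the parameters (state_dict)
    torch.save(trained.state_dict(), path)

    # Rebuild the architecture, then load the saved parameters into it
    restored = Tudui()
    restored.load_state_dict(torch.load(path))

restored.eval()  # inference behaviour for layers such as Dropout/BatchNorm
images = torch.rand(4, 3, 32, 32)  # hypothetical batch of 4 CIFAR10-sized images
with torch.no_grad():
    logits = restored(images)
    preds = logits.argmax(1)  # predicted class index for each image

print(logits.shape)  # torch.Size([4, 10])
print(preds)
```

Loading a whole saved model (method 1) works differently. The Tudui class must be importable when the file is loaded. Recent PyTorch releases (2.6 and later) also default `torch.load` to `weights_only=True`, so loading a whole pickled model there requires passing `weights_only=False` explicitly. Both are reasons saving the state_dict is usually preferred.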

import torch

a = torch.tensor(5)

print(a) # tensor(5), tensor

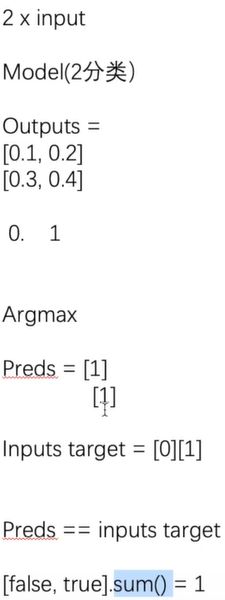

print(a.item()) # 5, int求正确率的流程
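The sketch below, using made-up values, covers a few more `.item()` details that matter for the loops above. A float tensor such as a loss gives back a Python `float`. `.item()` only works on one-element tensors, so use `.tolist()` otherwise. Accumulating `.item()` values keeps `total_test_loss` a plain number rather than a tensor.

```python
import torch

loss = torch.tensor(2.3026)  # a 0-dim float tensor, like the value returned by loss_fn
count = torch.tensor(5)      # a 0-dim integer tensor

print(type(loss.item()))   # <class 'float'>
print(type(count.item()))  # <class 'int'>

# .item() only works on tensors with exactly one element
batch = torch.tensor([1.0, 2.0])
raised = False
try:
    batch.item()
except RuntimeError as err:
    raised = True
    print("RuntimeError:", err)
print(batch.tolist())  # [1.0, 2.0]

# Summing .item() values gives a plain Python float, as with total_test_loss
total = 0.0
for value in [torch.tensor(0.5), torch.tensor(0.25)]:
    total = total + value.item()
print(total)  # 0.75
```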

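The procedure for computing accuracy:

```python
import torch

outputs = torch.tensor([[0.1, 0.2],
                        [0.3, 0.4]])
# dim=1: index of the maximum in each row; dim=0 would compare down each column
print(outputs.argmax(1))
# tensor([1, 1])
preds = outputs.argmax(1)
targets = torch.tensor([0, 1])
print(preds == targets)
# tensor([False,  True])  (PyTorch < 1.2 returned tensor([0, 1], dtype=torch.uint8))
print((preds == targets).sum())
# tensor(1)
```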
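You can wrap the same argmax / compare / sum steps in a helper that computes accuracy over a whole DataLoader. `evaluate_accuracy` is a hypothetical name, not a PyTorch API. It divides by the number of samples actually seen rather than a precomputed dataset size. The toy check uses `nn.Identity()`, so the inputs act directly as logits.

```python
import torch
from torch import nn
from torch.utils.data import DataLoader, TensorDataset


def evaluate_accuracy(model: nn.Module, dataloader: DataLoader) -> float:
    """Fraction of samples whose argmax prediction matches the target."""
    model.eval()
    correct = 0
    total = 0
    with torch.no_grad():
        for imgs, targets in dataloader:
            outputs = model(imgs)
            preds = outputs.argmax(1)                   # class index per row
            correct += (preds == targets).sum().item()  # matches in this batch
            total += targets.size(0)                    # samples in this batch
    return correct / total


# Toy check: nn.Identity() returns its input unchanged, so the "images" act as logits
logits = torch.tensor([[0.1, 0.2], [0.3, 0.4], [0.9, 0.1], [0.2, 0.8]])
labels = torch.tensor([0, 1, 0, 1])
loader = DataLoader(TensorDataset(logits, labels), batch_size=2)

acc = evaluate_accuracy(nn.Identity(), loader)
print(acc)  # 0.75
```

Here the predictions are `[1, 1, 0, 1]` against targets `[0, 1, 0, 1]`, so 3 of the 4 samples are correct.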