Dive into Deep Learning (Chapters 0-3): Code

We collect the functions, classes, and other utilities that this book imports and references frequently in the d2l package.

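import collections  # container data types and operations on them
import hashlib  # hash functions such as SHA-1 and MD5 (hashing, not encryption)
import math
import os  # file and directory handling
import random
import re  # regular expressions
import shutil  # copy, move, delete, and archive files
import sys  # interface to the Python interpreter
import tarfile  # read and write tar archives
import zipfile  # read and write zip archives
import time
from collections import defaultdict  # dict that returns a default value when a missing key is looked up
import pandas as pd  # data processing with Series and DataFrame
import requests  # send HTTP requests and read the server's responses
from IPython import display  # display output such as figures in notebooks
from matplotlib import pyplot as plt  # plotting
from matplotlib_inline import backend_inline  # inline Matplotlib backend for notebooks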

d2l = sys.modules[__name__]

Import modules from PyTorch.

import numpy as np

import torch

import torchvision

from PIL import Image

from torch import nn

from torch.nn import functional as F

from torch.utils import data

from torchvision import transforms

import random

import torch

from d2l import torch as d2l

def synthetic_data(w, b, num_examples):
    # X: standard normal (mean 0, standard deviation 1), shape (num_examples, len(w))
    X = torch.normal(0, 1, (num_examples, len(w)))
    y = torch.matmul(X, w) + b  # y = Xw + b
    # Noise: mean 0, standard deviation 0.01 (variance 0.0001), same shape as y
    y += torch.normal(0, 0.01, y.shape)
    return X, y.reshape((-1, 1))  # return y as a column vector

true_w = torch.tensor([2, -3.4])

true_b = 4.2

features, labels = synthetic_data(true_w, true_b, 1000)

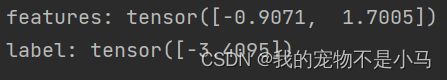

print('features:',features[0],'\nlabel:',labels[0])

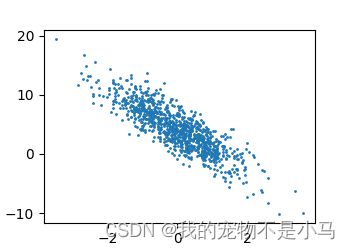

d2l.set_figsize()

d2l.plt.scatter(features[:,1].detach().numpy(),labels.detach().numpy(),1)

d2l.plt.show()


def data_iter(batch_size, features, labels):
    num_examples = len(features)
    indices = list(range(num_examples))  # indices of all examples
    random.shuffle(indices)  # shuffle so that examples are read in random order
    for i in range(0, num_examples, batch_size):
        batch_indices = torch.tensor(indices[i:min(i + batch_size, num_examples)])
        yield features[batch_indices], labels[batch_indices]

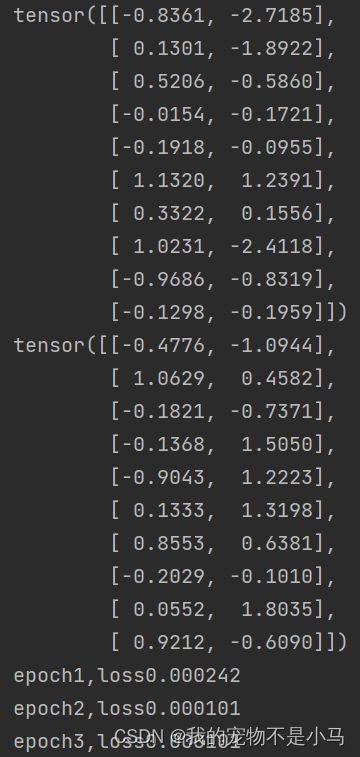

batch_size = 10

for X, y in data_iter(batch_size, features, labels):
    print(X, '\n', y)
    break
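The data_iter function above reads the dataset in shuffled minibatches. The sketch below mirrors the same index logic in plain Python, without PyTorch, to show that every example is visited exactly once per pass and that the last batch is smaller when the number of examples is not a multiple of the batch size. The name batch_index_iter and the sizes used are illustrative assumptions, not part of the d2l package.

```python
import random

def batch_index_iter(batch_size, num_examples):
    """Yield lists of shuffled example indices, one list per minibatch."""
    indices = list(range(num_examples))
    random.shuffle(indices)  # random reading order
    for i in range(0, num_examples, batch_size):
        # The slice is clipped at num_examples, so the final batch may be short
        yield indices[i:min(i + batch_size, num_examples)]

batches = list(batch_index_iter(batch_size=10, num_examples=25))
batch_sizes = [len(batch) for batch in batches]
print(batch_sizes)  # [10, 10, 5]

# Every index appears exactly once across the batches
seen = sorted(index for batch in batches for index in batch)
print(seen == list(range(25)))  # True
```

Indexing a tensor with such a list of indices, as data_iter does, then returns the matching rows of features and labels.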


w = torch.normal(0,0.01,size = (2,1),requires_grad = True)

b = torch.zeros(1,requires_grad = True)

def linreg(X, w, b):
    # Linear regression model
    return torch.matmul(X, w) + b

def squared_loss(y_hat, y):
    # Squared loss, halved so that its gradient is simply (y_hat - y)
    return (y_hat - y.reshape(y_hat.shape)) ** 2 / 2

def sgd(params, lr, batch_size):
    # Minibatch stochastic gradient descent
    with torch.no_grad():
        for param in params:
            param -= lr * param.grad / batch_size
            param.grad.zero_()

lr = 0.03
num_epochs = 3
net = linreg
loss = squared_loss

for epoch in range(num_epochs):
    for X, y in data_iter(batch_size, features, labels):
        l = loss(net(X, w, b), y)  # minibatch loss, shape (batch_size, 1)
        l.sum().backward()  # sum to a scalar, then compute the gradients of w and b
        sgd([w, b], lr, batch_size)  # update the parameters
    with torch.no_grad():
        train_l = loss(net(features, w, b), labels)
        print(f'epoch {epoch + 1}, loss {float(train_l.mean()):f}')

print(f'estimation error of w: {true_w - w.reshape(true_w.shape)}')
print(f'estimation error of b: {true_b - b}')
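To make the update inside sgd concrete, here is a hand-computed step for a one-feature linear model in plain Python. For the halved squared loss used above, the gradient of one example's loss is (y_hat - y) * x with respect to w and (y_hat - y) with respect to b; summing over the minibatch and dividing by batch_size gives the step. The data values and the helper name sgd_step_scalar are made up for illustration.

```python
def sgd_step_scalar(xs, ys, w, b, lr):
    """One minibatch SGD step for y_hat = w * x + b with loss (y_hat - y) ** 2 / 2."""
    batch_size = len(xs)
    residuals = [w * x + b - y for x, y in zip(xs, ys)]  # y_hat - y
    grad_w = sum(r * x for r, x in zip(residuals, xs))   # summed over the batch
    grad_b = sum(residuals)
    # Same rule as sgd: param -= lr * grad / batch_size
    return w - lr * grad_w / batch_size, b - lr * grad_b / batch_size

# Hypothetical minibatch of two examples
w_new, b_new = sgd_step_scalar(xs=[1.0, 2.0], ys=[3.0, 5.0], w=0.0, b=0.0, lr=0.1)
print(round(w_new, 6), round(b_new, 6))  # 0.65 0.4
```

In the training loop, calling l.sum().backward() produces the same summed gradients through automatic differentiation, so nothing has to be derived by hand.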

Concise implementation of linear regression

import torch

from torch import nn

from torch.utils import data

from d2l import torch as d2l

true_w = torch.tensor([2,-3.4])

true_b = 4.2

features,labels = d2l.synthetic_data(true_w,true_b,1000)

def load_array(data_array, batch_size, is_train=True):
    # Wrap the tensors in a TensorDataset and build a PyTorch data iterator
    dataset = data.TensorDataset(*data_array)
    return data.DataLoader(dataset, batch_size, shuffle=is_train)

batch_size = 10

data_iter = load_array((features,labels),batch_size)

print(next(iter(data_iter))[0])

print(next(iter(data_iter))[0])

net = nn.Sequential(nn.Linear(2, 1))  # input dimension 2, output dimension 1

net[0].weight.data.normal_(0,0.01)

net[0].bias.data.fill_(0)

loss = nn.MSELoss()

trainer = torch.optim.SGD(net.parameters(),lr = 0.03)

num_epochs = 3

for epoch in range(num_epochs):
    for X, y in data_iter:
        l = loss(net(X), y)  # forward pass
        trainer.zero_grad()  # reset the gradients to zero
        l.backward()  # compute the gradients
        trainer.step()  # update the model parameters
    l = loss(net(features), labels)
    print(f'epoch {epoch + 1}, loss {l:f}')

Image classification dataset

import torch

import torchvision

from torch.utils import data

from torchvision import transforms

from d2l import torch as d2l

d2l.use_svg_display()

trans = transforms.ToTensor()  # convert images to tensors

mnist_train = torchvision.datasets.FashionMNIST(root = "../Data",train = True,transform = trans,download = True)

mnist_test = torchvision.datasets.FashionMNIST(root = "../Data",train = False,transform = trans,download = True)

print(len(mnist_train))

print(len(mnist_test))

print(mnist_train[0][0].shape)

def get_fashion_mnist_labels(labels):
    # Map numeric class indices to their text labels
    text_labels = ['t-shirt', 'trouser', 'pullover', 'dress', 'coat', 'sandal', 'shirt', 'sneaker', 'bag', 'ankle boot']
    return [text_labels[int(i)] for i in labels]

def show_images(imgs, num_rows, num_cols, titles=None, scale=1.5):
    figsize = (num_cols * scale, num_rows * scale)
    _, axes = d2l.plt.subplots(num_rows, num_cols, figsize=figsize)
    axes = axes.flatten()
    for i, (ax, img) in enumerate(zip(axes, imgs)):
        if torch.is_tensor(img):
            ax.imshow(img.numpy(), cmap='gray')  # tensor image
        else:
            ax.imshow(img)  # PIL image
        ax.axis('off')
        if titles:  # titles defaults to None, so only index it when given
            ax.set_title(titles[i])
    d2l.plt.show()

X,y = next(iter(data.DataLoader(mnist_train,batch_size = 18)))

show_images(X.reshape(18,28,28),2,9,titles = get_fashion_mnist_labels(y))

batch_size = 256

def get_dataloader_workers():
    return 4  # use 4 worker processes to read the data

train_iter = data.DataLoader(mnist_train, batch_size, shuffle=True, num_workers=get_dataloader_workers())

timer = d2l.Timer()
for X, y in train_iter:
    continue
print(f'{timer.stop():.2f} sec')

def load_data_fashion_mnist(batch_size, resize=None):
    # Download Fashion-MNIST and return the training and test data iterators
    trans = [transforms.ToTensor()]
    if resize:
        trans.insert(0, transforms.Resize(resize))
    trans = transforms.Compose(trans)
    mnist_train = torchvision.datasets.FashionMNIST(root="../Data", train=True, transform=trans, download=True)
    mnist_test = torchvision.datasets.FashionMNIST(root="../Data", train=False, transform=trans, download=True)
    # The test set does not need shuffling
    return (data.DataLoader(mnist_train, batch_size, shuffle=True, num_workers=get_dataloader_workers()),
            data.DataLoader(mnist_test, batch_size, shuffle=False, num_workers=get_dataloader_workers()))

Softmax regression implemented from scratch

import torch

from IPython import display

from d2l import torch as d2l

batch_size = 256

train_iter, test_iter = d2l.load_data_fashion_mnist(batch_size)

num_inputs = 784

num_outputs = 10

W = torch.normal(0,0.01,size=(num_inputs, num_outputs),requires_grad=True)

b = torch.zeros(num_outputs,requires_grad=True)

def softmax(X):
    X_exp = torch.exp(X)
    partition = X_exp.sum(1, keepdim=True)  # X is a matrix, so softmax is applied to each row
    return X_exp / partition

X = torch.normal(0, 1, (2, 5))

X_prob = softmax(X)

print(X_prob)

print(X_prob.sum(1))
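The softmax above exponentiates its input directly, which can overflow when an entry is large. A common remedy, not used in the code above, is to subtract each row's maximum before exponentiating; the result is unchanged because the shift cancels between numerator and denominator. The following plain-Python sketch illustrates the idea on a single row; naive_softmax and stable_softmax are hypothetical helper names.

```python
import math

def naive_softmax(row):
    """Softmax of one row, exponentiating the raw values."""
    exps = [math.exp(v) for v in row]
    total = sum(exps)
    return [e / total for e in exps]

def stable_softmax(row):
    """Softmax of one row after subtracting the row maximum."""
    m = max(row)
    exps = [math.exp(v - m) for v in row]  # largest exponent is exp(0) = 1
    total = sum(exps)
    return [e / total for e in exps]

small = [0.0, 1.0, 2.0]
large = [1000.0, 1001.0, 1002.0]  # the same row shifted by 1000

# On moderate inputs both versions agree
agree = all(abs(p - q) < 1e-12
            for p, q in zip(naive_softmax(small), stable_softmax(small)))

# On large inputs the naive version overflows in Python floats
try:
    naive_softmax(large)
    overflowed = False
except OverflowError:
    overflowed = True

probs = stable_softmax(large)
print(agree, overflowed)  # True True
```

With torch.exp the overflow shows up as inf rather than an exception, and the division then yields nan, so the same shift is worth considering there too.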

def net(X):
    # Flatten each image into a vector of length num_inputs, then apply the linear layer and softmax
    return softmax(torch.matmul(X.reshape((-1, W.shape[0])), W) + b)

X = torch.normal(0, 1, (2, 5))

print(X)

print(X + 1)

y = torch.tensor([0,2])

y_hat = torch.tensor([[0.1, 0.3, 0.6], [0.3, 0.2, 0.5]])

print(y_hat[[0, 1], y])

def cross_entropy(y_hat, y):
    # Negative log of the probability predicted for the true class of each example
    return -torch.log(y_hat[range(len(y_hat)), y])

def accuracy(y_hat, y):
    # Number of correct predictions
    if len(y_hat.shape) > 1 and y_hat.shape[1] > 1:
        y_hat = y_hat.argmax(axis=1)
    cmp = y_hat.type(y.dtype) == y
    return float(cmp.type(y.dtype).sum())

print(accuracy(y_hat, y))
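As a check on the example above, the values can be worked out by hand. With y = [0, 2], the probabilities picked out of y_hat are 0.1 and 0.5, so the cross-entropy losses are -log 0.1 and -log 0.5; only the second row's argmax matches its label, so accuracy counts one correct prediction. The plain-Python sketch below repeats that arithmetic without PyTorch.

```python
import math

y = [0, 2]
y_hat = [[0.1, 0.3, 0.6], [0.3, 0.2, 0.5]]

# Probability assigned to the true class of each example
picked = [row[label] for row, label in zip(y_hat, y)]
losses = [-math.log(p) for p in picked]
print(picked)  # [0.1, 0.5]
print([round(l, 4) for l in losses])  # [2.3026, 0.6931]

# Predicted class = index of the largest probability in each row
preds = [row.index(max(row)) for row in y_hat]
num_correct = sum(int(p == label) for p, label in zip(preds, y))
print(preds, num_correct)  # [2, 2] 1
```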

class Accumulator:
    # Accumulate sums over n variables
    def __init__(self, n):
        self.data = [0.0] * n

    def add(self, *args):
        self.data = [a + float(b) for a, b in zip(self.data, args)]

    def reset(self):
        self.data = [0.0] * len(self.data)

    def __getitem__(self, idx):
        return self.data[idx]
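A short usage sketch for Accumulator, repeating the class so that the snippet runs on its own. It keeps running sums over several variables, here the number of correct predictions and the number of predictions for two hypothetical batches; the counts are invented for illustration.

```python
class Accumulator:
    """Accumulate sums over n variables (copied from the definition above)."""
    def __init__(self, n):
        self.data = [0.0] * n

    def add(self, *args):
        self.data = [a + float(b) for a, b in zip(self.data, args)]

    def reset(self):
        self.data = [0.0] * len(self.data)

    def __getitem__(self, idx):
        return self.data[idx]

metric = Accumulator(2)   # correct predictions, total predictions
metric.add(3, 10)         # batch 1: 3 of 10 correct
metric.add(5, 10)         # batch 2: 5 of 10 correct
totals = (metric[0], metric[1])
running_accuracy = metric[0] / metric[1]
print(totals, running_accuracy)  # (8.0, 20.0) 0.4

metric.reset()
print(metric[0], metric[1])  # 0.0 0.0
```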

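def evaluate_accuracy(net, data_iter):
    # Accuracy of the model on the given dataset
    if isinstance(net, torch.nn.Module):
        net.eval()  # switch to evaluation mode
    metric = Accumulator(2)  # number of correct predictions, number of predictions
    with torch.no_grad():  # gradients are not needed for evaluation
        for X, y in data_iter:
            metric.add(accuracy(net(X), y), y.numel())
    return metric[0] / metric[1]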

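def train_epoch_ch3(net, train_iter, loss, updater):
    # Train the model for one epoch
    if isinstance(net, torch.nn.Module):
        net.train()  # switch to training mode
    metric = Accumulator(3)  # sum of training loss, number of correct predictions, number of examples
    for X, y in train_iter:
        y_hat = net(X)
        l = loss(y_hat, y)
        if isinstance(updater, torch.optim.Optimizer):
            # Built-in PyTorch optimizer; l is treated as the mean loss over the batch
            updater.zero_grad()
            l.backward()
            updater.step()
            metric.add(float(l) * len(y), accuracy(y_hat, y), y.numel())
        else:
            # Custom updater; l holds one loss value per example
            l.sum().backward()
            updater(X.shape[0])
            metric.add(float(l.sum()), accuracy(y_hat, y), y.numel())
    # Average training loss and training accuracy
    return metric[0] / metric[2], metric[1] / metric[2]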

class Animator:
    # Plot data incrementally as training progresses
    def __init__(self, xlabel=None, ylabel=None, legend=None, xlim=None,
                 ylim=None, xscale='linear', yscale='linear',
                 fmts=('-', 'm--', 'g-.', 'r:'), nrows=1, ncols=1,
                 figsize=(3.5, 2.5)):
        if legend is None:
            legend = []
        d2l.use_svg_display()
        self.fig, self.axes = d2l.plt.subplots(nrows, ncols, figsize=figsize)
        if nrows * ncols == 1:
            self.axes = [self.axes, ]
        self.config_axes = lambda: d2l.set_axes(
            self.axes[0], xlabel, ylabel, xlim, ylim, xscale, yscale, legend)
        self.X, self.Y, self.fmts = None, None, fmts

    def add(self, x, y):
        if not hasattr(y, "__len__"):
            y = [y]
        n = len(y)
        if not hasattr(x, "__len__"):
            x = [x] * n
        if not self.X:
            self.X = [[] for _ in range(n)]
        if not self.Y:
            self.Y = [[] for _ in range(n)]
        for i, (a, b) in enumerate(zip(x, y)):
            if a is not None and b is not None:
                self.X[i].append(a)
                self.Y[i].append(b)
        self.axes[0].cla()
        for x, y, fmt in zip(self.X, self.Y, self.fmts):
            self.axes[0].plot(x, y, fmt)
        self.config_axes()
        display.display(self.fig)
        display.clear_output(wait=True)

def train_ch3(net, train_iter, test_iter, loss, num_epochs, updater):
    animator = Animator(xlabel='epoch', xlim=[1, num_epochs], ylim=[0.3, 0.9],
                        legend=['train loss', 'train acc', 'test acc'])
    for epoch in range(num_epochs):
        train_metrics = train_epoch_ch3(net, train_iter, loss, updater)
        test_acc = evaluate_accuracy(net, test_iter)
        animator.add(epoch + 1, train_metrics + (test_acc,))
    d2l.plt.show()
    train_loss, train_acc = train_metrics

lr = 0.1

def updater(batch_size):
    return d2l.sgd([W, b], lr, batch_size)

num_epoch = 10

train_ch3(net, train_iter, test_iter, cross_entropy, num_epoch, updater)

def predict_ch3(net, test_iter, n=6):
    # Take the first batch from the test iterator
    for X, y in test_iter:
        break
    trues = d2l.get_fashion_mnist_labels(y)
    preds = d2l.get_fashion_mnist_labels(net(X).argmax(axis=1))
    titles = [true + '\n' + pred for true, pred in zip(trues, preds)]
    d2l.show_images(X[0:n].reshape((n, 28, 28)), 1, n, titles=titles[0:n])
    d2l.plt.show()

predict_ch3(net, test_iter)

Concise implementation of softmax