Calling AI models with onnxruntime from Python and C++

The Python build of onnxruntime is fairly easy to use. Upgrade pip to the latest version first, then install onnxruntime:

pip install --upgrade pip

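# install the CPU build

pip install onnxruntime

# or install the GPU build

#pip install onnxruntime-gpu

For just validating a model, the CPU build is good enough and simpler to use. Do not install both builds at the same time. If you do, every call fails no matter what you try, complaining that the arguments are wrong:

incompatible constructor arguments. The following argument types are supported

After I uninstalled onnxruntime-gpu, onnxruntime.InferenceSession(...) failed with:

module 'onnxruntime' has no attribute 'InferenceSession'

The fix is to uninstall the CPU build of onnxruntime as well and then reinstall it.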
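Before debugging errors like the two above, it can help to check which builds pip actually has installed. The following is a minimal sketch using only the standard library; the helper names `installed_ort_distributions` and `has_conflict` are made up for illustration, and the list of distribution names covers only the two builds discussed here.

```python
from importlib import metadata

# Both distributions install the same top-level `onnxruntime` module.
ORT_DISTRIBUTIONS = ("onnxruntime", "onnxruntime-gpu")


def installed_ort_distributions():
    """Return {distribution name: version} for each onnxruntime build found."""
    found = {}
    for name in ORT_DISTRIBUTIONS:
        try:
            found[name] = metadata.version(name)
        except metadata.PackageNotFoundError:
            pass  # this build is not installed
    return found


def has_conflict(found):
    """True when more than one build is installed side by side."""
    return len(found) > 1


if __name__ == "__main__":
    found = installed_ort_distributions()
    print(found)
    if has_conflict(found):
        print("Both builds are installed; uninstall both, then reinstall one.")
```

If the check reports both names, uninstalling both and reinstalling only one matches the fix described above.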

Then write your own code by following the API documentation and sample code here:

https://www.onnxruntime.ai/python/api_summary.html

https://www.onnxruntime.ai/python/tutorial.html

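When the model has a single input and a single output, following the sample code is usually enough. When there are several, you can follow the example in the API documentation and use iobinding to specify each input and output.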

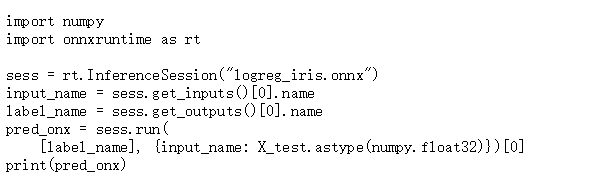
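As a rough illustration of the iobinding route for a model with several outputs, here is a minimal sketch. It assumes `session` is an `onnxruntime.InferenceSession`, that all inputs are numpy arrays on the CPU, and that `copy_outputs_to_cpu()` returns the outputs in the order they were bound. The function name `run_with_io_binding` is hypothetical.

```python
def run_with_io_binding(session, feeds):
    """Run `session` through IOBinding and return {output name: numpy array}.

    `feeds` maps every model input name to a numpy array held in CPU memory.
    """
    binding = session.io_binding()
    for name, array in feeds.items():
        binding.bind_cpu_input(name, array)

    output_names = [out.name for out in session.get_outputs()]
    for name in output_names:
        # No buffer is given, so onnxruntime allocates each output itself.
        binding.bind_output(name)

    session.run_with_iobinding(binding)
    # Assumption: results come back in the same order the outputs were bound.
    return dict(zip(output_names, binding.copy_outputs_to_cpu()))
```

Keying the results by name avoids relying on positional indices when the model has more than one output.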

Here is my own code. The part that actually calls the model is quite short:

ort_session = onnxruntime.InferenceSession("models/efficientdet-d0-s.onnx")

...

#preprocess input data to get x

...

#

ort_inputs = {ort_session.get_inputs()[0].name: to_numpy(x)}

ort_outs = ort_session.run(None, ort_inputs)

regression = torch.from_numpy(ort_outs[0])

classification = torch.from_numpy(ort_outs[1])

anchors = torch.from_numpy(ort_outs[2])

#postprocess regression and classification and anchors to get bboxes

...
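The snippet above calls `to_numpy` without showing its definition. A common version, assumed here rather than taken from the author's project, is the small helper used in the PyTorch ONNX export tutorial:

```python
def to_numpy(tensor):
    """Convert a torch.Tensor into a numpy array usable as an onnxruntime feed.

    detach() drops autograd tracking, cpu() moves the data off the GPU if it
    lives there, and numpy() exposes it as an ndarray.
    """
    return tensor.detach().cpu().numpy()
```

The function only relies on the tensor's own methods, so it needs no import beyond whatever created the tensor.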

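Calling it from C++ is more work. Experiments with AI models and the engineering to deploy them are usually done on Linux, but Microsoft has a long-standing habit of putting its own Windows and .NET first: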

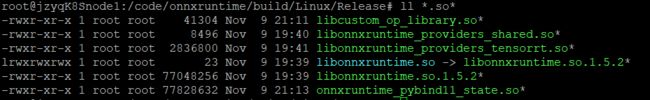

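So first you have to build the so library for your own Linux system and CPU (the Python whl package gets built along the way). For how to build it, see the previous article.

After the build, onnxruntime/build/Linux/MinSizeRel/ contains a pile of files, including several so libraries. libonnxruntime.so is the one to link against, by adding -lonnxruntime when you build your calling program.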
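A quick sanity check on the freshly built library, before wiring it into a Makefile, is to load it and look for the C API entry point `OrtGetApiBase`. This is a minimal sketch; the function name `exports_ort_api` is made up, and the path in the usage note is only an example built from the build directory mentioned above.

```python
import ctypes


def exports_ort_api(library_path):
    """Return True if the shared library loads and exports OrtGetApiBase."""
    try:
        lib = ctypes.CDLL(library_path)
    except OSError:
        return False  # file missing, wrong architecture, or unresolved deps
    # ctypes resolves the symbol lazily; hasattr is False if it is absent.
    return hasattr(lib, "OrtGetApiBase")
```

For example, `exports_ort_api("onnxruntime/build/Linux/MinSizeRel/libonnxruntime.so")` should return True for a usable build. A False result usually points at a wrong path or a library built for a different architecture.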

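The headers are under onnxruntime/include/onnxruntime/core/ and must be on the include path at compile time. For convenience, you can copy or symlink the whole onnxruntime directory under onnxruntime/include/ into your project's include path: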

CFLAGS+= -DUSE_TENSORRT -Wno-deprecated-declarations -fPIC -DDS_VERSION=\"5.0.0\" -DBOOST_ALL_DYN_LINK \

-I /usr/local/cuda-$(CUDA_VER)/include \

-I /opt/nvidia/deepstream/deepstream/sources/includes/ \

-I $(PROJECT_ROOT)/plugin/common \

-I $(PROJECT_ROOT)/ext/inc \

-I $(PROJECT_ROOT)/ext/inc/onnxruntime \

-I $(PROJECT_ROOT)/ext/inc/onnxruntime/core/common \

-I $(PROJECT_ROOT)/ext/inc/onnxruntime/core/common/logging \

-I $(PROJECT_ROOT)/ext/inc/onnxruntime/core/framework \

-I $(PROJECT_ROOT)/ext/inc/onnxruntime/core/graph \

-I $(PROJECT_ROOT)/ext/inc/onnxruntime/core/optimizer \

-I $(PROJECT_ROOT)/ext/inc/onnxruntime/core/platform \

-I $(PROJECT_ROOT)/ext/inc/onnxruntime/core/session \

-I /usr/local/cuda-10.2/targets/aarch64-linux/include/ \

-I /usr/include/opencv4

The C++ API reference is https://github.com/microsoft/onnxruntime/blob/master/include/onnxruntime/core/session/onnxruntime_cxx_api.h

Among the C++ samples, these two simple ones are worth a look:

https://github.com/microsoft/onnxruntime/blob/master/samples/c_cxx/MNIST/MNIST.cpp

https://github.com/microsoft/onnxruntime/blob/master/csharp/test/Microsoft.ML.OnnxRuntime.EndToEndTests.Capi/CXX_Api_Sample.cpp

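These C++ examples all cover only the simplest case, a model with one input and one output. In a real project your own model may well have several outputs. The API documentation does not make clear how to handle that. I puzzled over it for a while and only figured it out after reading the lower-level source in onnxruntime/include/onnxruntime/core/session/onnxruntime_cxx_inline.h:

Below is the onnxruntime-related part of my code as an example. The rest involves the project's trade secrets, so I can't post it: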

#include

#include

#include

#include

#include

#include

#include

#include

#include

#include "providers.h"

class EfficientDetOnnxRT {

public:

EfficientDetOnnxRT(std::string onnx_file,unsigned int numClasses);

~EfficientDetOnnxRT();

...

bool processInput(float* buffer,cv::Mat& cv_img_origin);

std::vector> processOutput(float* regressionOutputBuffer,float* classificationOutputBuffer,

float* anchorsOutputBuffer,float nms_threshold, float score_thresholdi);

std::vector> infer(cv::Mat& cv_img, float nms_threshold, float score_threshold );

private:

...

char* input_names[1]{nullptr};

float* input_image_{nullptr};

Ort::Value input_tensor_{(Ort::Value)nullptr};

std::array<int64_t, 4> input_shape_;

Ort::Env env_ {ORT_LOGGING_LEVEL_ERROR, "EfficientDetOnnxEnv"};

Ort::Session session_ {nullptr};

char* output_names[3]{nullptr,nullptr,nullptr};

Ort::Value output_tensor_[3]{(Ort::Value)nullptr,(Ort::Value)nullptr,(Ort::Value)nullptr};

std::array output_shape_regression;

std::array output_shape_classification;

std::array output_shape_anchors;

float* results_regression{nullptr};

float* results_classification{nullptr};

float* results_anchors{nullptr};

...

};

EfficientDetOnnxRT::EfficientDetOnnxRT(std::string onnx_file,unsigned int numClasses) {

Ort::SessionOptions op;

op.SetLogSeverityLevel(ORT_LOGGING_LEVEL_ERROR);

int device_id = 0;

std::cout <<"onnxruntime loading onnx model..." <::max(),

0,

true

};

Ort::ThrowOnError(op.OrtSessionOptionsAppendExecutionProvider_CUDA(op, &cuda_options));

#endif

session_ = Ort::Session(env_, onnx_file.c_str(), op);

Ort::AllocatorWithDefaultOptions ort_alloc;

char* tmp = session_.GetInputName(0, ort_alloc);

input_names[0] = strdup(tmp);

ort_alloc.Free(tmp);

tmp = session_.GetOutputName(0, ort_alloc);

output_names[0] = strdup(tmp);

ort_alloc.Free(tmp);

tmp = session_.GetOutputName(1, ort_alloc);

output_names[1] = strdup(tmp);

ort_alloc.Free(tmp);

tmp = session_.GetOutputName(2, ort_alloc);

output_names[2] = strdup(tmp);

ort_alloc.Free(tmp);

Ort::TypeInfo info = session_.GetInputTypeInfo(0);

auto tensor_info = info.GetTensorTypeAndShapeInfo();

size_t dim_count = tensor_info.GetDimensionsCount();

std::vector<int64_t> dims(dim_count);

tensor_info.GetDimensions(dims.data(), dims.size());

channels_ = dims[1];

height_ = dims[2];

width_ = dims[3];

input_shape_[0]= dims[0];

input_shape_[1]= channels_;

input_shape_[2]= height_;

input_shape_[3]= width_;

info = session_.GetOutputTypeInfo(0);

auto tensor_info2 = info.GetTensorTypeAndShapeInfo();

dim_count = tensor_info2.GetDimensionsCount();

dims.clear();

dims.resize(dim_count);

tensor_info2.GetDimensions(dims.data(), dims.size());

for (int i=0; i< dims.size();i++)

output_shape_regression[i] = dims[i];

info = session_.GetOutputTypeInfo(1);

auto tensor_info3 = info.GetTensorTypeAndShapeInfo();

dim_count = tensor_info3.GetDimensionsCount();

dims.clear();

dims.resize(dim_count);

tensor_info3.GetDimensions(dims.data(), dims.size());

for (int i=0; i< dims.size();i++)

output_shape_classification[i] = dims[i];

numClassScores_ = static_cast(dims[2]);

output_shape_classification[3] = numClassScores_;

...

info = session_.GetOutputTypeInfo(2);

auto tensor_info4 = info.GetTensorTypeAndShapeInfo();

dim_count = tensor_info4.GetDimensionsCount();

dims.clear();

dims.resize(dim_count);

tensor_info4.GetDimensions(dims.data(), dims.size());

for (int i=0; i< dims.size();i++)

output_shape_anchors[i] = dims[i];

...

int size_anchors = dims[1] * dims[2];

int size_classification = dims[1];

results_regression = new float[size_anchors];

results_classification = new float[size_classification];

results_anchors = new float[size_anchors];

int size_image_data = channels_ * width_ * height_;

input_image_ = new float[size_image_data];

auto memory_info = Ort::MemoryInfo::CreateCpu(OrtDeviceAllocator, OrtMemTypeCPU);

input_tensor_ = Ort::Value::CreateTensor(memory_info, input_image_, size_image_data, input_shape_.data(), input_shape_.size());

output_tensor_[0] = Ort::Value::CreateTensor(memory_info, results_regression, size_anchors,

output_shape_regression.data(), output_shape_regression.size());

output_tensor_[1] = Ort::Value::CreateTensor(memory_info, results_classification, size_classification,

output_shape_classification.data(), output_shape_classification.size());

output_tensor_[2] = Ort::Value::CreateTensor(memory_info, results_anchors, size_anchors,

output_shape_anchors.data(), output_shape_anchors.size());

}

...

std::vector> EfficientDetOnnxRT::infer(cv::Mat& cv_img, float nms_threshold, float score_threshold ) {

memset(input_image_,0, channels_ * height_ * width_ * sizeof(float));

processInput(input_image_,cv_img);

session_.Run(Ort::RunOptions{nullptr}, &input_names[0], &input_tensor_, 1, &output_names[0], &output_tensor_[0], 3);

return

processOutput(results_regression,results_classification,results_anchors,nms_threshold,score_threshold);

}

...

The C++ source needs to include onnxruntime_cxx_api.h, the onnxruntime C++ header:

#include <onnxruntime_cxx_api.h>

If you select a specific provider, you also need to include that provider's header:

#include "providers.h"

You can copy samples/c_cxx/include/providers.h into your own project and include it. That file is sample code and does not live under onnxruntime/include/onnxruntime/core/. Its content is:

#include "onnxruntime/core/providers/cpu/cpu_provider_factory.h"

#ifdef USE_CUDA

#include "onnxruntime/core/providers/cuda/cuda_provider_factory.h"

#endif

#ifdef USE_DNNL

#include "onnxruntime/core/providers/dnnl/dnnl_provider_factory.h"

#endif

#ifdef USE_NGRAPH

#include "onnxruntime/core/providers/ngraph/ngraph_provider_factory.h"

#endif

#ifdef USE_NUPHAR

#include "onnxruntime/core/providers/nuphar/nuphar_provider_factory.h"

#endif

#ifdef USE_TENSORRT

#include "onnxruntime/core/providers/tensorrt/tensorrt_provider_factory.h"

#include "onnxruntime/core/providers/cuda/cuda_provider_factory.h"

#endif

#ifdef USE_DML

#include "onnxruntime/core/providers/dml/dml_provider_factory.h"

#endif

#ifdef USE_MIGRAPHX

#include "onnxruntime/core/providers/migraphx/migraphx_provider_factory.h"

#endif

After including providers.h, remember to add -DUSE_TENSORRT to CFLAGS in the Makefile.

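The code above still passes inputs and outputs the old, simple way; I didn't bother with iobinding. When passing the names and tensors of several outputs to Run(), pay attention to how the addresses are taken, because getting it wrong will not compile. This overload of Run() takes a pointer to the first element of each array together with an element count. Why take the addresses this way instead of writing it like the C++ sample code? Read the underlying implementation of Run() in the Session class and it becomes clear.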

To pass inputs and outputs through iobinding in C++, follow the API reference and translate the Python sample code into C++.

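There is also a pitfall in where env and session are defined. Do not define them as locals inside the class constructor. Make them class members so they remain available for the lifetime of the object. Otherwise infer() crashes when it uses the session: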

Program terminated with signal SIGSEGV, Segmentation fault.

#0 0x0000007f810c4240 in onnxruntime::logging::LoggingManager::Log(std::__cxx11::basic_string, std::allocator > const&, onnxruntime::logging::Capture const&) const () from /home/dev/app-ext/lib/libonnxruntime.so.1.5.2

[Current thread is 1 (Thread 0x7f52036010 (LWP 31523))]

(gdb) bt

#0 0x0000007f810c4240 in onnxruntime::logging::LoggingManager::Log(std::__cxx11::basic_string, std::allocator > const&, onnxruntime::logging::Capture const&) const () from /home/dev/app-ext/lib/libonnxruntime.so.1.5.2

#1 0x0000007f810c3c3c in onnxruntime::logging::Capture::~Capture() () from /home/dev/app-ext/lib/libonnxruntime.so.1.5.2

#2 0x0000007f8104c6b0 in onnxruntime::SequentialExecutor::Execute(onnxruntime::SessionState const&, std::vector > const&, std::vector > const&, std::vector > const&, std::vector >&, std::unordered_map, std::hash, std::equal_to, std::allocator > > > const&, onnxruntime::logging::Logger const&) ()

from /home/dev/app-ext/lib/libonnxruntime.so.1.5.2

#3 0x0000007f81039044 in onnxruntime::utils::ExecuteGraphImpl(onnxruntime::SessionState const&, onnxruntime::FeedsFetchesManager const&, std::vector > const&, std::vector >&, std::unordered_map, std::hash, std::equal_to, std::allocator > > > const&, ExecutionMode, bool const&, onnxruntime::logging::Logger const&, bool) ()

from /home/dev/app-ext/lib/libonnxruntime.so.1.5.2

#4 0x0000007f8103a6ec in onnxruntime::utils::ExecuteGraph(onnxruntime::SessionState const&, onnxruntime::FeedsFetchesManager&, std::vector > const&, std::vector >&, ExecutionMode, bool const&, onnxruntime::logging::Logger const&, bool) () from /home/dev/app-ext/lib/libonnxruntime.so.1.5.2

#5 0x0000007f808084ac in onnxruntime::InferenceSession::Run(OrtRunOptions const&, std::vector, std::allocator >, std::allocator, std::allocator > > > const&, std::vector > const&, std::vector, std::allocator >, std::allocator, std::allocator > > > const&, std::vector >*, std::vector > const*) () from /home/dev/app-ext/lib/libonnxruntime.so.1.5.2

#6 0x0000007f807d1e0c in OrtApis::Run(OrtSession*, OrtRunOptions const*, char const* const*, OrtValue const* const*, unsigned long, char const* const*, unsigned long, OrtValue**) ()

from /home/dev/app-ext/lib/libonnxruntime.so.1.5.2

#7 0x0000005562bbc0a0 in Ort::Session::Run (this=0x558186df88, run_options=..., input_names=0x558186df50, input_values=0x558186df60, input_count=1, output_names=0x558186df90,

output_values=0x558186dfa8, output_count=3) at /home/dev/laundry-app/ext/inc/onnxruntime/core/session/onnxruntime_cxx_inline.h:507

#8 0x0000005562bb9cd4 in EfficientDetOnnxRT::infer (this=0x558186df40, cv_img=..., nms_threshold=0.100000001, score_threshold=0.100000001) at effonnxrt.cpp:375

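#9 0x0000005562bb56d8 in main (argc=1, argv=0x7fe2742d38) at test.cpp:21

Once the application that runs the model through onnxruntime for image recognition is built, its first start is very slow if it uses the TensorRT provider. On my Nano it takes about 10 minutes. The reason is that TensorRT is very slow at parsing the model, building the network and serializing it into an engine. That serialized engine is not saved by default, so set these environment variables before running the program: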

export ORT_TENSORRT_ENGINE_CACHE_ENABLE=1

export ORT_TENSORRT_ENGINE_CACHE_PATH="/home/dev/model"

At startup, TensorRT then writes a serialized engine file into the path configured above, /home/dev/model/, with a name like this:

TensorrtExecutionProvider_TRTKernel_graph_torch-jit-export_0_0_706245698641377.engine

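On later starts, as long as the two environment variables above are set, TensorRT loads this engine file automatically, skips the ONNX parsing and related steps, and finishes starting up quickly.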
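When the model is driven from Python instead of a shell script, the same two variables can be set from the process itself. The sketch below assumes they are set before the inference session is created. The helper names `enable_trt_engine_cache` and `cached_engines` are made up for illustration, and the `*.engine` pattern is taken from the example file name above.

```python
import os
from pathlib import Path


def enable_trt_engine_cache(cache_dir, env=None):
    """Set the two cache variables in `env` (default: os.environ).

    Creates the cache directory if needed and returns it as a Path.
    """
    env = os.environ if env is None else env
    cache_path = Path(cache_dir)
    cache_path.mkdir(parents=True, exist_ok=True)
    env["ORT_TENSORRT_ENGINE_CACHE_ENABLE"] = "1"
    env["ORT_TENSORRT_ENGINE_CACHE_PATH"] = str(cache_path)
    return cache_path


def cached_engines(cache_dir):
    """List serialized engine files already present in the cache directory."""
    return sorted(p.name for p in Path(cache_dir).glob("*.engine"))
```

An empty list from `cached_engines` before the first run, and a non-empty one afterwards, is a simple way to confirm that the cache is actually being written.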

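For the environment variables available with the TensorRT provider and what they mean, see https://github.com/microsoft/onnxruntime/blob/master/docs/execution_providers/TensorRT-ExecutionProvider.md . Besides the two above, the ones used most are probably the following. Set them as your situation requires: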

#override default max workspace size to 2GB

export ORT_TENSORRT_MAX_WORKSPACE_SIZE=2147483648

#Enable FP16 mode in TensorRT

export ORT_TENSORRT_FP16_ENABLE=1

#Enable INT8 mode in TensorRT

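#export ORT_TENSORRT_INT8_ENABLE=1

In my tests, image-recognition inference with the TensorRT provider was considerably faster than with the CPU provider. With the CUDA provider, inference was actually slower than with the default CPU provider (the one used when no provider is set). I don't know why, but since I only use this to validate and compare model accuracy, not in a real deployment, it doesn't matter.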
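When comparing providers like this, one thing worth ruling out is that the first few calls are being measured along with any one-time setup. The sketch below is a generic timing helper, not the author's benchmark: it discards a few warm-up calls and reports the median of the rest. The name `time_inference` and its default counts are arbitrary choices.

```python
import statistics
import time


def time_inference(run_once, warmup=3, iterations=20):
    """Return the median wall-clock seconds of `run_once()` after warm-up."""
    for _ in range(warmup):
        run_once()  # result discarded: may include lazy initialization
    samples = []
    for _ in range(iterations):
        start = time.perf_counter()
        run_once()
        samples.append(time.perf_counter() - start)
    return statistics.median(samples)
```

With the Python session from earlier, `run_once` could be `lambda: ort_session.run(None, ort_inputs)`, called once per provider configuration.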