Python Data Analysis and Machine Learning 28: News Classification

Contents

- I. Data Source
- II. Word Segmentation
- III. Stop Words
- IV. wordcloud
- V. TF-IDF: Keyword Extraction
- VI. LDA: Topic Model
- References

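I. Data Source

The dataset comes from Sogou Labs. When I checked, the page below now redirects straight to the Sogou home page, so the data cannot be downloaded from there for the time being. I obtained the dataset through another channel.

http://www.sogou.com/labs/resource/ca.php

It contains news collected from several news sites between June and July 2012, covering 18 channels such as domestic, international, sports, society, and entertainment. Each record provides the URL and the body text.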

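Data format:

- Page URL
- Page ID
- Page title
- Page content

Note: the content field has had its HTML tags removed and holds the plain body text of the news article. The `val.txt` file used in the code below has a different layout: it is read as four tab-separated columns, `category`, `theme`, `URL`, and `content`.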
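A minimal stdlib sketch of reading records in the four-column, tab-separated layout that the code below passes to `pd.read_table` (`category`, `theme`, `URL`, `content`). The sample line and the `read_news` helper are hypothetical; incomplete rows are skipped, roughly as `dropna()` does after loading:

```python
import csv
import io

# Hypothetical sample line in the assumed layout: category \t theme \t URL \t content
SAMPLE = "体育\t示例标题\thttp://example.com/1\t示例正文\n"

def read_news(text):
    """Parse tab-separated news records into dicts, skipping rows that have
    the wrong number of fields or an empty field."""
    fields = ["category", "theme", "URL", "content"]
    rows = []
    # QUOTE_NONE: quote characters inside the news text are ordinary characters
    for parts in csv.reader(io.StringIO(text), delimiter="\t", quoting=csv.QUOTE_NONE):
        if len(parts) != len(fields) or not all(parts):
            continue
        rows.append(dict(zip(fields, parts)))
    return rows

records = read_news(SAMPLE)
print(records[0]["category"], records[0]["URL"])
```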

II. Word Segmentation

Segmentation is done with the jieba tokenizer.

Code:

import pandas as pd
import jieba

# Load the data source
df_news = pd.read_table('E:/file/val.txt', names=['category', 'theme', 'URL', 'content'], encoding='utf-8')
df_news = df_news.dropna()
content = df_news.content.values.tolist()
# print(content[2000:3000])

# Segment each article with jieba
content_S = []
for line in content:
    current_segment = jieba.lcut(line)
    # Keep only lines that split into more than one token; a line that is just
    # a line break ('\r\n') comes back as a single token and is skipped
    if len(current_segment) > 1:
        content_S.append(current_segment)
# print(content_S[2000:3000])

df_content = pd.DataFrame({'content_S': content_S})
print(df_content.head())
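jieba's default mode is documented as scanning a prefix dictionary to build a directed acyclic graph (DAG) of every dictionary word in the sentence, then using dynamic programming to pick the path with the highest word-frequency-based probability (an HMM handles words missing from the dictionary). The toy sketch below covers only the DAG plus dynamic-programming step; the dictionary, the frequencies, and the `build_dag`/`cut` helpers are hypothetical:

```python
import math

# Toy dictionary with made-up word frequencies (hypothetical, for illustration only)
FREQ = {"北京": 50, "北": 5, "京": 5, "大学": 40, "大": 30, "学": 20,
        "北京大学": 30, "生": 10, "学生": 35, "大学生": 25}
TOTAL = sum(FREQ.values())

def build_dag(sentence):
    """For each start index, list the end indices of dictionary words that start there."""
    n = len(sentence)
    dag = {}
    for i in range(n):
        ends = [j for j in range(i, n) if sentence[i:j + 1] in FREQ]
        dag[i] = ends or [i]  # fall back to the single character
    return dag

def cut(sentence):
    """Choose the segmentation with the highest product of word probabilities,
    solved right to left with dynamic programming over log-probabilities."""
    dag = build_dag(sentence)
    n = len(sentence)
    best = {n: (0.0, n)}  # best[i] = (best log-prob from i to the end, end index of first word)
    for i in range(n - 1, -1, -1):
        best[i] = max(
            (math.log(FREQ.get(sentence[i:j + 1], 1) / TOTAL) + best[j + 1][0], j)
            for j in dag[i]
        )
    words, i = [], 0
    while i < n:
        j = best[i][1]
        words.append(sentence[i:j + 1])
        i = j + 1
    return words

print(cut("北京大学生"))  # ['北京', '大学生']
```

With these made-up frequencies, "北京 / 大学生" beats "北京大学 / 生" because its combined log-probability is higher; a different dictionary can change the result.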

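III. Stop Words

What stop words are:

- They appear in huge numbers throughout the corpus
- They carry little useful meaning
- So why keep them around?

The code from the previous step, updated: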

import pandas as pd

import jieba

import numpy as np

# 读取数据源

df_news = pd.read_table('E:/file/val.txt',names=['category','theme','URL','content'],encoding='utf-8')

df_news = df_news.dropna()

content = df_news.content.values.tolist()

#print (content[2000:3000])

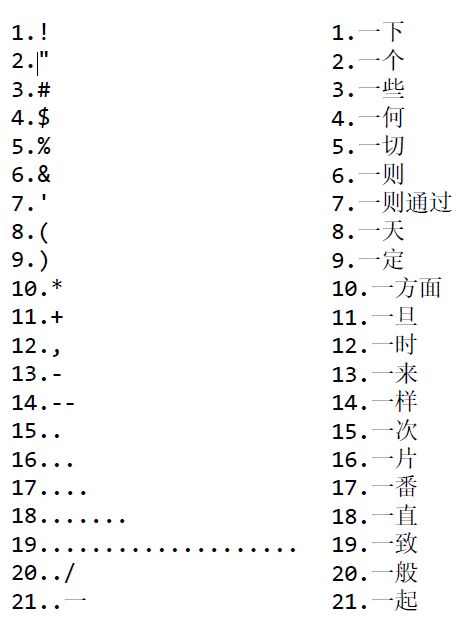

# 获取停用词

stopwords=pd.read_csv("E:/file/stopwords.txt",index_col=False,sep="\t",quoting=3,names=['stopword'], encoding='utf-8')

# stopwords.head(20)

# 使用jieba分词器进行分词

content_S = []

for line in content:

current_segment = jieba.lcut(line)

if len(current_segment) > 1 and current_segment != '\r\n': #换行符

content_S.append(current_segment)

#print(content_S[2000:3000])

df_content=pd.DataFrame({'content_S':content_S})

#print(df_content.head())

# 删除停用词

def drop_stopwords(contents, stopwords):

contents_clean = []

all_words = []

for line in contents:

line_clean = []

for word in line:

if word in stopwords:

continue

line_clean.append(word)

all_words.append(str(word))

contents_clean.append(line_clean)

return contents_clean, all_words

# print (contents_clean)

contents = df_content.content_S.values.tolist()

stopwords = stopwords.stopword.values.tolist()

contents_clean, all_words = drop_stopwords(contents, stopwords)

df_content=pd.DataFrame({'contents_clean':contents_clean})

print(df_content.head())

print("##########################")

df_all_words=pd.DataFrame({'all_words':all_words})

print(df_all_words.head())

print("##########################")

words_count=df_all_words.groupby(by=['all_words'])['all_words'].agg({"count":np.size})

words_count=words_count.reset_index().sort_values(by=["count"],ascending=False)

print(words_count.head())

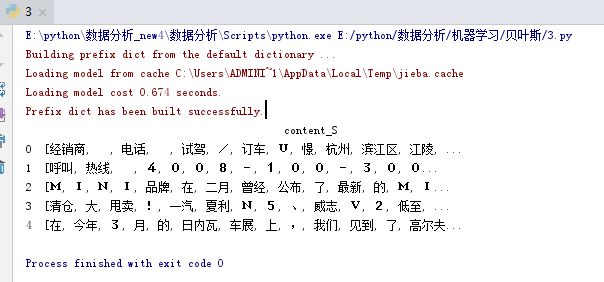

Test output:

contents_clean

0 [经销商, 电话, 试驾, 订车, U, 憬, 杭州, 滨江区, 江陵, 路, 号, 转, ...

1 [呼叫, 热线, 服务, 邮箱, k, f, p, e, o, p, l, e, d, a,...

2 [M, I, N, I, 品牌, 二月, 公布, 最新, M, I, N, I, 新, 概念...

3 [清仓, 甩卖, 一汽, 夏利, N, 威志, V, 低至, 万, 启新, 中国, 一汽, ...

4 [日内瓦, 车展, 见到, 高尔夫, 家族, 新, 成员, 高尔夫, 敞篷版, 款, 全新,...

##########################

all_words

0 经销商

1 电话

2 试驾

3 订车

4 U

##########################

all_words count

4077 中 5199

4209 中国 3115

88255 说 3055

104747 S 2646

1373 万 2390

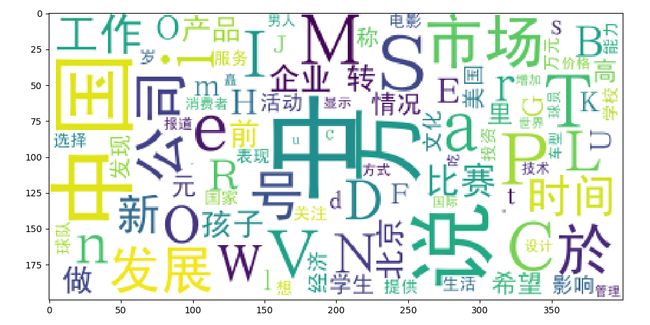
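The stop-word filtering step can also be written in plain Python. The sketch below is a compact rewrite of the `drop_stopwords` helper above using list comprehensions and a `set` for the stop list; the toy articles and stop words are hypothetical:

```python
def drop_stopwords(docs, stopwords):
    """Remove stop words from each tokenized document.

    Returns the cleaned documents and a flat list of every kept token."""
    stop = set(stopwords)  # average O(1) membership test instead of scanning a list
    docs_clean = [[w for w in doc if w not in stop] for doc in docs]
    all_words = [w for doc in docs_clean for w in doc]
    return docs_clean, all_words

# Hypothetical toy input: two tokenized "articles" and a tiny stop word list
docs = [["今天", "的", "比赛", "很", "精彩"], ["比赛", "的", "门票", "售罄"]]
clean, words = drop_stopwords(docs, ["的", "很"])
print(clean)  # [['今天', '比赛', '精彩'], ['比赛', '门票', '售罄']]
print(words)  # ['今天', '比赛', '精彩', '比赛', '门票', '售罄']
```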

IV. wordcloud

Code:

import pandas as pd
import jieba
from wordcloud import WordCloud
import matplotlib.pyplot as plt
import matplotlib

# Load the data source
df_news = pd.read_table('E:/file/val.txt', names=['category', 'theme', 'URL', 'content'], encoding='utf-8')
df_news = df_news.dropna()
content = df_news.content.values.tolist()

# Load the stop word list
stopwords = pd.read_csv("E:/file/stopwords.txt", index_col=False, sep="\t", quoting=3, names=['stopword'], encoding='utf-8')

# Segment each article with jieba
content_S = []
for line in content:
    current_segment = jieba.lcut(line)
    # Skip lines that yield a single token (e.g. a lone '\r\n' line break)
    if len(current_segment) > 1:
        content_S.append(current_segment)

df_content = pd.DataFrame({'content_S': content_S})

# Remove stop words
def drop_stopwords(contents, stopwords):
    contents_clean = []
    all_words = []
    for line in contents:
        line_clean = []
        for word in line:
            if word in stopwords:
                continue
            line_clean.append(word)
            all_words.append(str(word))
        contents_clean.append(line_clean)
    return contents_clean, all_words

contents = df_content.content_S.values.tolist()
stopwords = set(stopwords.stopword.values.tolist())
contents_clean, all_words = drop_stopwords(contents, stopwords)

df_content = pd.DataFrame({'contents_clean': contents_clean})
df_all_words = pd.DataFrame({'all_words': all_words})

# Count word frequencies, most frequent first
words_count = df_all_words.groupby(by=['all_words']).size().reset_index(name='count')
words_count = words_count.sort_values(by=['count'], ascending=False)

# Draw a word cloud of the 100 most frequent words
matplotlib.rcParams['figure.figsize'] = (10.0, 5.0)
# font_path must point to a font with Chinese glyphs (SimHei here);
# the default font has none, so Chinese words would render as empty boxes
wordcloud = WordCloud(font_path="E:/file/simhei.ttf", background_color="white", max_font_size=80)
word_frequence = {x[0]: x[1] for x in words_count.head(100).values}
wordcloud = wordcloud.fit_words(word_frequence)
plt.imshow(wordcloud)
plt.show()
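The `word_frequence` dictionary handed to `fit_words` is simply the top 100 words mapped to their counts. The same `{word: count}` shape can be built from a flat token list with `collections.Counter`; `top_frequencies` and the token list here are hypothetical stand-ins for `all_words`:

```python
from collections import Counter

def top_frequencies(words, n=100):
    """Return the n most frequent words as a {word: count} dict,
    the shape that WordCloud.fit_words accepts."""
    return dict(Counter(words).most_common(n))

# Hypothetical token list standing in for all_words
tokens = ["中国", "比赛", "中国", "球衣", "中国", "比赛"]
freq = top_frequencies(tokens, n=2)
print(freq)  # {'中国': 3, '比赛': 2}
```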

V. TF-IDF: Keyword Extraction

Code:

import pandas as pd
import jieba
import jieba.analyse

# Load the data source
df_news = pd.read_table('E:/file/val.txt', names=['category', 'theme', 'URL', 'content'], encoding='utf-8')
df_news = df_news.dropna()
content = df_news.content.values.tolist()

# Segment each article with jieba
content_S = []
for line in content:
    current_segment = jieba.lcut(line)
    # Skip lines that yield a single token (e.g. a lone '\r\n' line break)
    if len(current_segment) > 1:
        content_S.append(current_segment)

index = 2400
# .iloc selects by position; after dropna() the row labels may have gaps
print(df_news['content'].iloc[index])
content_S_str = "".join(content_S[index])
# Top 5 keywords by TF-IDF weight (jieba takes its IDF values from a bundled file)
print(" ".join(jieba.analyse.extract_tags(content_S_str, topK=5, withWeight=False)))

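Test output (the article at index 2400, followed by its five top keywords: Nike, Adidas, Euro, jerseys, Spain):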

法国VS西班牙、里贝里VS哈维,北京时间6月24日凌晨一场的大战举世瞩目,而这场胜利不仅仅关乎两支顶级强队的命运,同时也是他们背后的球衣赞助商耐克和阿迪达斯之间的一次角逐。T谌胙”窘炫分薇的16支球队之中,阿迪达斯和耐克的势力范围也是几乎旗鼓相当:其中有5家球衣由耐克提供,而阿迪达斯则赞助了6家,此外茵宝有3家,而剩下的两家则由彪马赞助。而当比赛进行到现在,率先挺进四强的两支球队分别被耐克支持的葡萄牙和阿迪达斯支持的德国占据,而由于最后一场1/4决赛是茵宝(英格兰)和彪马(意大利)的对决,这也意味着明天凌晨西班牙同法国这场阿迪达斯和耐克在1/4决赛的唯一一次直接交手将直接决定两家体育巨头在此次欧洲杯上的胜负。8据评估,在2012年足球商品的销售额能总共超过40亿欧元,而单单是不足一个月的欧洲杯就有高达5亿的销售额,也就是说在欧洲杯期间将有700万件球衣被抢购一空。根据市场评估,两大巨头阿迪达斯和耐克的市场占有率也是并驾齐驱,其中前者占据38%,而后者占据36%。体育权利顾问奥利弗-米歇尔在接受《队报》采访时说:“欧洲杯是耐克通过法国翻身的一个绝佳机会!”C仔尔接着谈到两大赞助商的经营策略:“竞技体育的成功会燃起球衣购买的热情,不过即便是水平相当,不同国家之间的欧洲杯效应却存在不同。在德国就很出色,大约1/4的德国人通过电视观看了比赛,而在西班牙效果则差很多,由于民族主义高涨的加泰罗尼亚地区只关注巴萨和巴萨的球衣,他们对西班牙国家队根本没什么兴趣。”因此尽管西班牙接连拿下欧洲杯和世界杯,但是阿迪达斯只为西班牙足协支付每年2600万的赞助费#相比之下尽管最近两届大赛表现糟糕法国足协将从耐克手中每年可以得到4000万欧元。米歇尔解释道:“法国创纪录的4000万欧元赞助费得益于阿迪达斯和耐克竞逐未来15年欧洲市场的竞争。耐克需要笼络一个大国来打赢这场欧洲大陆的战争,而尽管德国拿到的赞助费并不太高,但是他们却显然牢牢掌握在民族品牌阿迪达斯手中。从长期投资来看,耐克给法国的赞助并不算过高。”

耐克 阿迪达斯 欧洲杯 球衣 西班牙
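To make the idea behind TF-IDF keyword ranking concrete, here is a small pure-Python ranker on a hypothetical toy corpus. It uses raw term counts for TF and the smoothed IDF that scikit-learn's `TfidfVectorizer` applies by default, idf = ln((1 + N) / (1 + df)) + 1. `jieba.analyse.extract_tags` scores words differently (its IDF values come from a file bundled with jieba), so the numbers are not comparable; the `tfidf_keywords` helper is an illustration only:

```python
import math
from collections import Counter

def tfidf_keywords(doc, corpus, top_k=5):
    """Rank the distinct words of `doc` by TF-IDF against `corpus` (a list of token lists).

    TF  = raw count of the word in doc
    IDF = ln((1 + N) / (1 + df)) + 1, where N is the number of documents and
          df is the number of documents containing the word
    """
    n_docs = len(corpus)
    doc_freq = Counter(w for d in corpus for w in set(d))
    scores = {
        w: tf * (math.log((1 + n_docs) / (1 + doc_freq[w])) + 1)
        for w, tf in Counter(doc).items()
    }
    return sorted(scores, key=scores.get, reverse=True)[:top_k]

# Hypothetical toy corpus of three tokenized documents
corpus = [
    ["耐克", "球衣", "耐克", "比赛"],
    ["比赛", "门票", "比赛"],
    ["阿迪达斯", "球衣", "比赛"],
]
print(tfidf_keywords(corpus[0], corpus, top_k=2))  # ['耐克', '球衣']
```

"比赛" occurs in every document, so it gets the lowest IDF and drops to the bottom of the ranking even though it appears in the first document.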

VI. LDA: Topic Model

Input format: the entire tokenized corpus as a list of lists (one list of tokens per document).
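The bag-of-words mapping that `corpora.Dictionary` and `doc2bow` perform can be pictured with a plain-Python sketch on a hypothetical two-document corpus: each distinct token gets an integer id, and each document becomes a sorted list of `(token_id, count)` pairs, with tokens missing from the dictionary skipped. The helpers are illustrative, and ids here are assigned in first-seen order, which may not match gensim's numbering:

```python
def build_dictionary(docs):
    """Assign an integer id to every distinct token, in first-seen order."""
    token2id = {}
    for doc in docs:
        for tok in doc:
            token2id.setdefault(tok, len(token2id))
    return token2id

def doc2bow(doc, token2id):
    """Convert one tokenized document into sorted (token_id, count) pairs,
    skipping tokens that are not in the dictionary."""
    counts = {}
    for tok in doc:
        if tok in token2id:
            tid = token2id[tok]
            counts[tid] = counts.get(tid, 0) + 1
    return sorted(counts.items())

# Hypothetical corpus in the required list-of-lists format
docs = [["耐克", "球衣", "耐克"], ["球衣", "比赛"]]
token2id = build_dictionary(docs)
corpus = [doc2bow(d, token2id) for d in docs]
print(corpus)  # [[(0, 2), (1, 1)], [(1, 1), (2, 1)]]
```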

Code:

import pandas as pd
import jieba
import gensim
from gensim import corpora
from sklearn.model_selection import train_test_split
from sklearn.naive_bayes import MultinomialNB
from sklearn.feature_extraction.text import TfidfVectorizer

# Load the data source
df_news = pd.read_table('E:/file/val.txt', names=['category', 'theme', 'URL', 'content'], encoding='utf-8')
df_news = df_news.dropna()
content = df_news.content.values.tolist()

# Load the stop word list
stopwords = pd.read_csv("E:/file/stopwords.txt", index_col=False, sep="\t", quoting=3, names=['stopword'], encoding='utf-8')

# Segment each article with jieba; collect each kept article's category in the
# same loop so that articles and labels stay aligned
content_S = []
labels = []
for line, category in zip(content, df_news['category']):
    current_segment = jieba.lcut(line)
    # Skip lines that yield a single token (e.g. a lone '\r\n' line break)
    if len(current_segment) > 1:
        content_S.append(current_segment)
        labels.append(category)

df_content = pd.DataFrame({'content_S': content_S})

# Remove stop words
def drop_stopwords(contents, stopwords):
    contents_clean = []
    all_words = []
    for line in contents:
        line_clean = []
        for word in line:
            if word in stopwords:
                continue
            line_clean.append(word)
            all_words.append(str(word))
        contents_clean.append(line_clean)
    return contents_clean, all_words

contents = df_content.content_S.values.tolist()
stopwords = set(stopwords.stopword.values.tolist())
contents_clean, all_words = drop_stopwords(contents, stopwords)
df_content = pd.DataFrame({'contents_clean': contents_clean})

# Build the mapping, essentially a bag of words: token -> id, document -> [(id, count), ...]
dictionary = corpora.Dictionary(contents_clean)
corpus = [dictionary.doc2bow(sentence) for sentence in contents_clean]

# As with K in KMeans, the number of topics is chosen by hand
lda = gensim.models.ldamodel.LdaModel(corpus=corpus, id2word=dictionary, num_topics=10)

# Map category names to integer labels
df_train = pd.DataFrame({'contents_clean': contents_clean, 'label': labels})
label_mapping = {"汽车": 1, "财经": 2, "科技": 3, "健康": 4, "体育": 5, "教育": 6, "文化": 7, "军事": 8, "娱乐": 9, "时尚": 0}
df_train['label'] = df_train['label'].map(label_mapping)

# Split into training and test sets (25% test by default)
x_train, x_test, y_train, y_test = train_test_split(df_train['contents_clean'].values, df_train['label'].values, random_state=1)

# TfidfVectorizer expects one string per document, so join the tokens with spaces
words = [' '.join(line) for line in x_train]
test_words = [' '.join(line) for line in x_test]

# Convert the documents into a TF-IDF feature matrix (top 4000 terms).
# Note: the default token_pattern keeps only tokens of 2+ characters,
# so single-character words are dropped here.
vectorizer = TfidfVectorizer(analyzer='word', max_features=4000, lowercase=False)
vectorizer.fit(words)

# Multinomial naive Bayes suits classification with discrete features (e.g. word counts in text classification).
# The multinomial distribution normally expects integer counts,
# but in practice fractional counts such as tf-idf can also work.
classifier = MultinomialNB()
classifier.fit(vectorizer.transform(words), y_train)
print(classifier.score(vectorizer.transform(test_words), y_test))

Test output (accuracy on the test set):

0.8152
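To show what the classifier computes, here is a minimal pure-Python multinomial naive Bayes over raw word counts with add-one (Laplace) smoothing, which corresponds to scikit-learn's `MultinomialNB` default `alpha=1.0`. The four training documents, their labels, and the `train_mnb`/`predict_mnb` helpers are hypothetical. In the pipeline above the features are TF-IDF weights rather than counts, which is the fractional-count case the code comment mentions:

```python
import math
from collections import Counter, defaultdict

def train_mnb(docs, labels, alpha=1.0):
    """Fit multinomial naive Bayes with additive smoothing on word counts."""
    vocab = {w for d in docs for w in d}
    class_docs = Counter(labels)
    word_counts = defaultdict(Counter)
    for d, y in zip(docs, labels):
        word_counts[y].update(d)
    # log P(class) from class frequencies
    log_prior = {y: math.log(n / len(docs)) for y, n in class_docs.items()}
    # log P(word | class) with alpha added to every vocabulary count
    log_lik = {}
    for y in class_docs:
        total = sum(word_counts[y].values()) + alpha * len(vocab)
        log_lik[y] = {w: math.log((word_counts[y][w] + alpha) / total) for w in vocab}
    return log_prior, log_lik

def predict_mnb(model, doc):
    """Return the class with the highest log prior plus summed word log-likelihoods;
    words never seen in training are ignored."""
    log_prior, log_lik = model
    return max(log_prior, key=lambda y: log_prior[y] + sum(
        log_lik[y][w] for w in doc if w in log_lik[y]))

# Hypothetical toy training set
train_docs = [["进球", "比赛", "球衣"], ["比赛", "球员", "进球"],
              ["股票", "市场", "利率"], ["市场", "股票", "基金"]]
train_labels = ["体育", "体育", "财经", "财经"]
model = train_mnb(train_docs, train_labels)
print(predict_mnb(model, ["进球", "球员"]))  # 体育
```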

References:

- https://study.163.com/course/introduction.htm?courseId=1003590004#/courseDetail?tab=1

- https://www.cnblogs.com/z-712/p/11108560.html