PyTorch Deep Learning Practice (《PyTorch深度学习实践》), Lecture 11: Advanced Convolutional Neural Networks

Notes on Lecture 11 of 刘二大人's Bilibili course 《PyTorch深度学习实践》 (PyTorch Deep Learning Practice): GoogLeNet and Deep Residual Learning

GoogLeNet

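Learn to spot structures that repeat in complex code and factor them into a function or class. In GoogLeNet, the repeated unit is the Inception Module.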

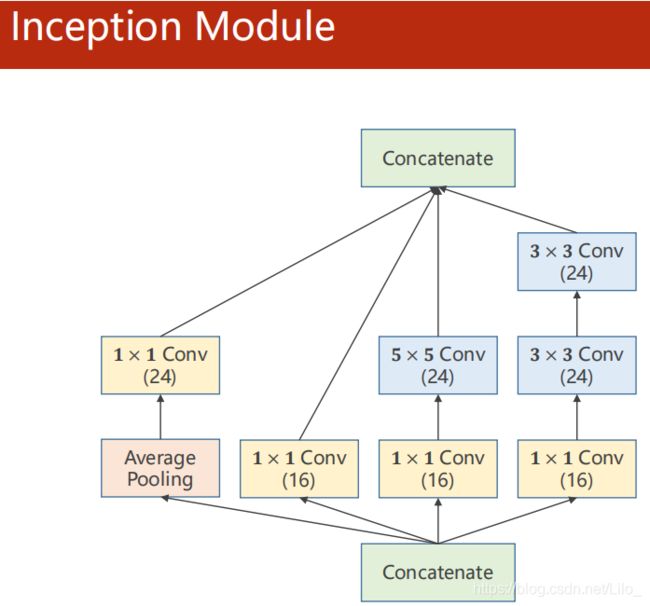

Inception Module

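We do not know in advance which kernel size works best, so the module runs several kinds of convolution in parallel and combines their outputs. Training then increases the weights of the useful branches and decreases the weights of the others.

In effect, the candidate hyperparameters are enumerated by brute force, and gradient descent picks the most suitable ones automatically.

Note that every branch must produce an output with the same width and height as the others. Only the channel count may differ, because the outputs are concatenated along the channel dimension.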
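
The size constraint can be checked with the standard convolution output-size formula. The sketch below is only illustrative: the helper name `conv_out_size` and the 12x12 input size are assumptions, while the kernel and padding pairs are the ones used in the `InceptionA` implementation later in these notes.

```python
def conv_out_size(size, kernel_size, padding=0, stride=1):
    """Output width/height of a convolution or pooling layer (hypothetical helper)."""
    return (size + 2 * padding - kernel_size) // stride + 1


size = 12  # assumed feature-map width/height
branch_sizes = {
    "1x1 conv": conv_out_size(size, kernel_size=1),
    "3x3 conv, padding=1": conv_out_size(size, kernel_size=3, padding=1),
    "5x5 conv, padding=2": conv_out_size(size, kernel_size=5, padding=2),
    "3x3 avg pool, padding=1": conv_out_size(size, kernel_size=3, padding=1),
}
for name, out in branch_sizes.items():
    print(f"{name}: {size} -> {out}")
```

Every branch maps 12 to 12, so the four outputs can be concatenated along the channel dimension.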

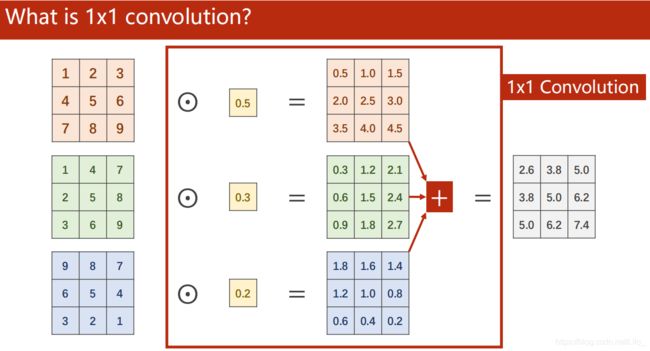

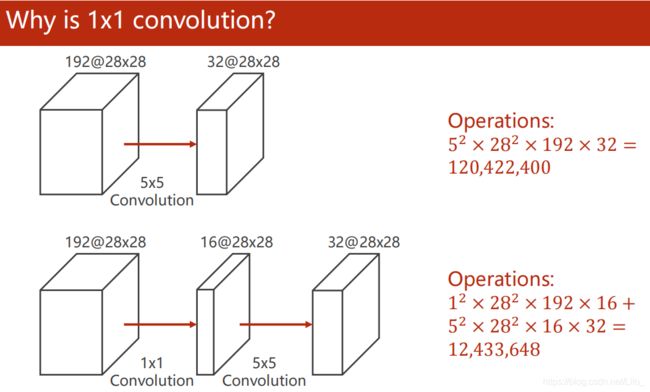

1x1 convolution?
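
A 1x1 convolution mixes information across channels at each pixel without looking at neighbouring pixels, so it is a cheap way to change the number of channels. The arithmetic below is a rough sketch of why that matters before an expensive 5x5 convolution. The 192-channel 28x28 input and the 16-channel bottleneck are illustrative assumptions, the helper `conv_mults` is hypothetical, and only multiplications are counted.

```python
def conv_mults(kernel_size, height, width, in_channels, out_channels):
    """Approximate multiplication count for a size-preserving convolution (hypothetical helper)."""
    return kernel_size ** 2 * height * width * in_channels * out_channels


h = w = 28  # assumed feature-map size

# Direct 5x5 convolution: 192 -> 32 channels.
direct = conv_mults(5, h, w, 192, 32)

# 1x1 convolution down to 16 channels first, then 5x5: 16 -> 32 channels.
bottleneck = conv_mults(1, h, w, 192, 16) + conv_mults(5, h, w, 16, 32)

print(direct)      # 120422400
print(bottleneck)  # 12443648
```

Under these assumptions the bottleneck version needs roughly a tenth of the multiplications.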

Implementation of Inception Module

    import torch
    import torch.nn as nn
    import torch.nn.functional as F


    class InceptionA(nn.Module):
        """Inception block with four parallel branches (16 + 24 + 24 + 24 = 88 output channels)."""

        def __init__(self, in_channels):
            super(InceptionA, self).__init__()
            # Branch 1: a single 1x1 convolution.
            self.branch1x1 = nn.Conv2d(in_channels, 16, kernel_size=1)
            # Branch 2: 1x1 convolution, then 5x5 (padding=2 keeps width and height).
            self.branch5x5_1 = nn.Conv2d(in_channels, 16, kernel_size=1)
            self.branch5x5_2 = nn.Conv2d(16, 24, kernel_size=5, padding=2)
            # Branch 3: 1x1 convolution, then two 3x3 (padding=1 keeps width and height).
            self.branch3x3_1 = nn.Conv2d(in_channels, 16, kernel_size=1)
            self.branch3x3_2 = nn.Conv2d(16, 24, kernel_size=3, padding=1)
            self.branch3x3_3 = nn.Conv2d(24, 24, kernel_size=3, padding=1)
            # Branch 4: 3x3 average pooling (applied in forward), then 1x1 convolution.
            self.branch_pool = nn.Conv2d(in_channels, 24, kernel_size=1)

        def forward(self, x):
            branch1x1 = self.branch1x1(x)

            branch5x5 = self.branch5x5_1(x)
            branch5x5 = self.branch5x5_2(branch5x5)

            branch3x3 = self.branch3x3_1(x)
            branch3x3 = self.branch3x3_2(branch3x3)
            branch3x3 = self.branch3x3_3(branch3x3)

            branch_pool = F.avg_pool2d(x, kernel_size=3, stride=1, padding=1)
            branch_pool = self.branch_pool(branch_pool)

            outputs = [branch1x1, branch5x5, branch3x3, branch_pool]
            return torch.cat(outputs, dim=1)  # concatenate along dim 1 (channels)
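
To see what the final `torch.cat` call does, the sketch below concatenates dummy tensors that have the four branches' channel counts. The batch size of 1 and the 12x12 spatial size are assumptions chosen for illustration.

```python
import torch

# Dummy branch outputs in (batch, channels, height, width) layout.
branch1x1 = torch.zeros(1, 16, 12, 12)
branch5x5 = torch.zeros(1, 24, 12, 12)
branch3x3 = torch.zeros(1, 24, 12, 12)
branch_pool = torch.zeros(1, 24, 12, 12)

# dim=1 is the channel dimension, so only the channel counts add up.
out = torch.cat([branch1x1, branch5x5, branch3x3, branch_pool], dim=1)
print(out.shape)  # torch.Size([1, 88, 12, 12])
```

The 88 output channels are why any convolution placed after an `InceptionA` block must accept 88 input channels.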

Using Inception Module

    class Net(nn.Module):
        def __init__(self):
            super(Net, self).__init__()
            self.conv1 = nn.Conv2d(1, 10, kernel_size=5)
            self.conv2 = nn.Conv2d(88, 20, kernel_size=5)  # 88 = InceptionA output channels

            self.incep1 = InceptionA(in_channels=10)
            self.incep2 = InceptionA(in_channels=20)

            self.mp = nn.MaxPool2d(2)
            self.fc = nn.Linear(1408, 10)  # 1408 = 88 * 4 * 4, assuming 28x28 inputs

        def forward(self, x):
            in_size = x.size(0)  # batch size
            x = F.relu(self.mp(self.conv1(x)))
            x = self.incep1(x)
            x = F.relu(self.mp(self.conv2(x)))
            x = self.incep2(x)
            x = x.view(in_size, -1)  # flatten to (batch, features)
            x = self.fc(x)
            return x
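
The constants 88 and 1408 in `Net` follow from tracking the tensor shape through `forward`. The trace below assumes 28x28 single-channel MNIST images (the input size is not stated in these notes) and uses plain arithmetic rather than PyTorch.

```python
size = 28                     # assumed MNIST width/height
size = size - 5 + 1           # conv1, kernel 5, no padding -> 24
size = size // 2              # max pool 2 -> 12
channels = 16 + 24 + 24 + 24  # incep1 output channels -> 88
size = size - 5 + 1           # conv2, kernel 5, no padding -> 8
size = size // 2              # max pool 2 -> 4
channels = 16 + 24 + 24 + 24  # incep2 output channels -> 88

flat_features = channels * size * size
print(flat_features)  # 1408
```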

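Reproducing the code (accuracy curves)

Watch the test accuracy to decide how many epochs to train. Whenever the test accuracy reaches a new high, save the model's parameters to disk.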
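
A minimal sketch of that checkpointing pattern, assuming a stand-in model and made-up accuracy values: `model`, `test_accuracies`, and `checkpoint_path` are hypothetical names, and in a real run each accuracy would come from evaluating on the test set after an epoch of training.

```python
import os
import tempfile

import torch
import torch.nn as nn

model = nn.Linear(4, 2)  # stand-in for the real network
test_accuracies = [0.91, 0.95, 0.94, 0.97, 0.96]  # made-up per-epoch values
checkpoint_path = os.path.join(tempfile.mkdtemp(), "best_model.pt")

best_acc = 0.0
best_epoch = None
for epoch, acc in enumerate(test_accuracies, start=1):
    # In a real loop: train for one epoch here, then compute `acc` on the test set.
    if acc > best_acc:
        best_acc, best_epoch = acc, epoch
        torch.save(model.state_dict(), checkpoint_path)  # keep only the best weights

# Later, restore the best parameters.
model.load_state_dict(torch.load(checkpoint_path))
print(best_epoch, best_acc)
```

Saving `state_dict()` rather than the whole model object keeps the checkpoint independent of how the class is defined in the file.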

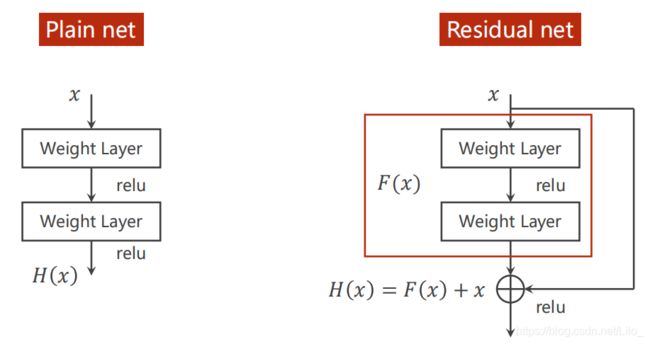

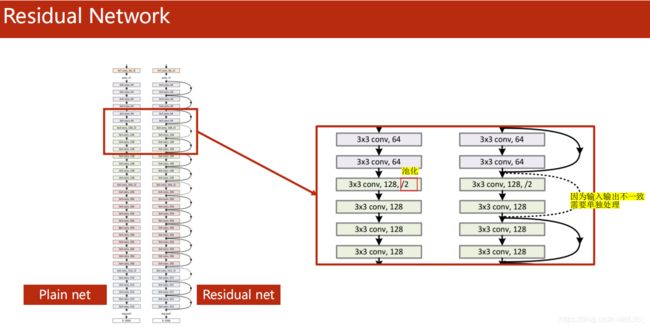

Adding too many layers can cause vanishing gradients.

Deep Residual Learning (Residual Networks)
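
One way to see the effect: backpropagation multiplies local derivatives layer by layer, and a product of many factors smaller than 1 shrinks toward zero. The sketch below uses 0.25, the maximum derivative of the sigmoid function, as an assumed factor for every layer. Real networks have different factors per layer, and the weights contribute as well, so this is an illustration rather than a bound.

```python
per_layer_factor = 0.25  # maximum of sigmoid'(x); assumed identical for every layer

# Product of the per-layer factors for a few network depths.
factors = {depth: per_layer_factor ** depth for depth in (5, 10, 20)}
for depth, factor in factors.items():
    print(f"{depth} layers: gradient scaled by about {factor:.3e}")
```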

Residual Block

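    class ResidualBlock(nn.Module):
        """Two 3x3 convolutions with a skip connection; the input and output shapes are identical."""

        def __init__(self, channels):
            super(ResidualBlock, self).__init__()
            self.channels = channels
            # Same channel count in and out, and padding=1 keeps width and height,
            # so the result can be added to the input element-wise.
            self.conv1 = nn.Conv2d(channels, channels, kernel_size=3, padding=1)
            self.conv2 = nn.Conv2d(channels, channels, kernel_size=3, padding=1)

        def forward(self, x):
            y = F.relu(self.conv1(x))
            y = self.conv2(y)
            return F.relu(x + y)  # add the skip connection first, then activate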

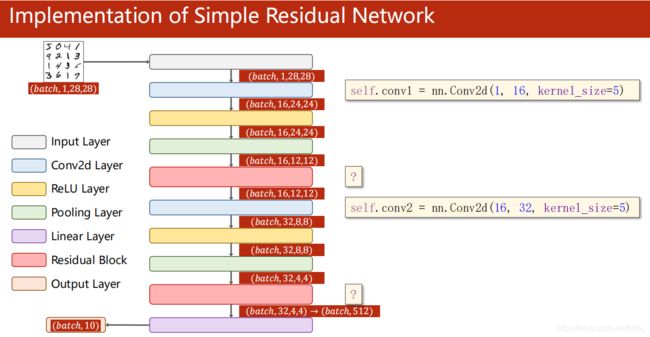
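
The skip connection helps because the block computes x + F(x), so its local derivative is 1 + F'(x) rather than F'(x) alone. The scalar sketch below uses autograd to compare the two cases. The function `small_branch` and its factor 0.001 are hypothetical stand-ins for a branch whose gradient is nearly zero, and the final ReLU of the block is left out for simplicity.

```python
import torch


def small_branch(x):
    """Hypothetical branch F(x) with a tiny derivative (0.001)."""
    return 0.001 * x


# Plain layer: the gradient is just F'(x).
x = torch.tensor(2.0, requires_grad=True)
plain = small_branch(x)
(grad_plain,) = torch.autograd.grad(plain, x)

# Residual form: the gradient is 1 + F'(x).
x = torch.tensor(2.0, requires_grad=True)
residual = x + small_branch(x)
(grad_residual,) = torch.autograd.grad(residual, x)

print(grad_plain.item(), grad_residual.item())
```

Even when the branch's own gradient is close to zero, the residual form keeps the gradient close to 1, so earlier layers still receive a usable signal.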

Implementation of Simple Residual Network

    class Net(nn.Module):
        def __init__(self):
            super(Net, self).__init__()
            self.conv1 = nn.Conv2d(1, 16, kernel_size=5)
            self.conv2 = nn.Conv2d(16, 32, kernel_size=5)
            self.mp = nn.MaxPool2d(2)

            self.rblock1 = ResidualBlock(16)
            self.rblock2 = ResidualBlock(32)

            self.fc = nn.Linear(512, 10)

        def forward(self, x):
            in_size = x.size(0)
            x = self.mp(F.relu(self.conv1(x)))
            x = self.rblock1(x)
            x = self.mp(F.relu(self.conv2(x)))
            x = self.rblock2(x)
            x = x.view(in_size, -1)
            x = self.fc(x)
            return x
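
The input size of 512 for `fc` can be found by pushing a dummy tensor through the shape-changing layers instead of computing it by hand. The sketch below assumes 28x28 single-channel MNIST inputs and leaves out the residual blocks, since they keep the shape unchanged; the name `features` is hypothetical.

```python
import torch
import torch.nn as nn

# Only the layers that change the tensor shape, in the same order as in forward().
features = nn.Sequential(
    nn.Conv2d(1, 16, kernel_size=5), nn.ReLU(), nn.MaxPool2d(2),
    nn.Conv2d(16, 32, kernel_size=5), nn.ReLU(), nn.MaxPool2d(2),
)

with torch.no_grad():
    dummy = torch.zeros(1, 1, 28, 28)  # assumed MNIST-sized input
    out = features(dummy)

flat_features = out.view(1, -1).size(1)
print(out.shape, flat_features)  # torch.Size([1, 32, 4, 4]) 512
```

The same trick is handy whenever the convolutional part of a network changes and the linear layer's input size has to be updated.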