Deep Learning: Custom Layers and File I/O (Notes)

I. Custom Layers

1. Custom layers: (1) Constructing a custom layer without any parameters

    import torch
    import torch.nn.functional as F
    from torch import nn


    class CenteredLayer(nn.Module):
        def __init__(self):
            super().__init__()

        def forward(self, X):
            # Subtract the mean of all elements of X from every element
            return X - X.mean()


    layer = CenteredLayer()
    print(layer(torch.tensor([1.0, 2.0, 3.0, 4.0, 5.0])))

Output: tensor([-2., -1.,  0.,  1.,  2.])
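As a quick sanity check on the parameter-free layer, the sketch below confirms that it registers no parameters and that its output has zero mean. The class is repeated so the block runs on its own; the variable names out and num_params are illustrative only.

```python
import torch
from torch import nn


class CenteredLayer(nn.Module):
    """Same parameter-free layer as in the notes, repeated for self-containment."""

    def forward(self, X):
        return X - X.mean()


layer = CenteredLayer()
out = layer(torch.tensor([1.0, 2.0, 3.0, 4.0, 5.0]))

# No nn.Parameter is registered, so the parameter list is empty
num_params = len(list(layer.parameters()))
print(num_params)

# After centering, the mean of the output is zero up to rounding error
print(out.mean().abs().item() < 1e-6)
```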

(2) Incorporating the layer as a component in a more complex model

    net = nn.Sequential(nn.Linear(8, 128), CenteredLayer())
    Y = net(torch.rand(4, 8))
    print(Y.mean())  # close to 0, up to floating-point error

2. Layers with parameters

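    class MyLinear(nn.Module):
        def __init__(self, in_units, units):
            # in_units: input dimension; units: output dimension
            super().__init__()
            # Weight of shape (in_units, units), drawn from a standard normal distribution
            self.weight = nn.Parameter(torch.randn(in_units, units))
            # Bias of shape (units,), also drawn from a standard normal distribution
            self.bias = nn.Parameter(torch.randn(units))

        def forward(self, X):
            # Use the parameters directly rather than through .data, so that autograd tracks them
            linear = torch.matmul(X, self.weight) + self.bias
            return F.relu(linear)


    linear = MyLinear(5, 3)
    print(linear.weight)

Output: a Parameter tensor of shape (5, 3) with requires_grad=True; the values are random and differ from run to run.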
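The following sketch shows how such a parameterized layer might be used: a forward pass, composition inside nn.Sequential, and a backward pass. The class is redefined so the block is self-contained. As an assumption of this sketch, forward accesses the parameters directly, without .data, so that gradients can reach them; the input shapes are arbitrary choices for illustration.

```python
import torch
import torch.nn.functional as F
from torch import nn


class MyLinear(nn.Module):
    """Fully connected layer followed by ReLU (illustrative variant)."""

    def __init__(self, in_units, units):
        super().__init__()
        self.weight = nn.Parameter(torch.randn(in_units, units))
        self.bias = nn.Parameter(torch.randn(units))

    def forward(self, X):
        # Parameters are used directly so that autograd tracks them
        return F.relu(torch.matmul(X, self.weight) + self.bias)


# Forward pass through a single layer: (2, 5) -> (2, 3)
linear = MyLinear(5, 3)
out = linear(torch.rand(2, 5))
print(out.shape)

# Custom layers compose like built-in ones: (2, 64) -> (2, 8) -> (2, 1)
net = nn.Sequential(MyLinear(64, 8), MyLinear(8, 1))
y = net(torch.rand(2, 64))
print(y.shape)

# Backpropagate to confirm that the weight receives a gradient
out.sum().backward()
print(linear.weight.grad.shape)
```

Because of the ReLU, every entry of the output is non-negative.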

II. File I/O: Loading and Saving Tensors

1. Reading and writing files: loading and saving a tensor

    import torch
    from torch import nn
    from torch.nn import functional as F

    # Save a tensor to a file and load it back
    x = torch.arange(4)
    torch.save(x, 'x-file')    # write x to the file x-file in the current directory
    x2 = torch.load('x-file')  # read the contents of x-file back into memory
    print(x2)

Output:

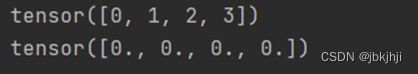
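The round trip can also be verified programmatically. The sketch below is a minimal example that writes into a temporary directory, so that no file is left behind, and compares the loaded tensor with the original; the use of tempfile and the variable names are assumptions of this sketch, not part of the notes.

```python
import os
import tempfile

import torch

x = torch.arange(4)

# Write into a temporary directory so that no file is left behind
with tempfile.TemporaryDirectory() as tmpdir:
    path = os.path.join(tmpdir, 'x-file')
    torch.save(x, path)
    x2 = torch.load(path)

# The loaded tensor has the same values and dtype as the original
print(torch.equal(x, x2))
print(x2.dtype)
```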

2. Storing a list

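    # Save a list of tensors and read it back into memory
    y = torch.zeros(4)
    torch.save([x, y], 'xy-files')   # save the list [x, y] to the file xy-files
    x2, y2 = torch.load('xy-files')  # load the list and unpack it, so x2 equals x and y2 equals y
    print(x2)
    print(y2)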

Output:

    tensor([0, 1, 2, 3])
    tensor([0., 0., 0., 0.])

3. Storing a dictionary

    # Save a dictionary that maps strings to tensors and read it back
    mydict = {'x': x, 'y': y}
    torch.save(mydict, 'mydict')
    mydict2 = torch.load('mydict')
    print(mydict2)
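To illustrate that containers of tensors survive the round trip, here is a small sketch that saves a list and a dictionary and checks what comes back. The temporary directory and the names loaded_list and loaded_dict are assumptions made for this example.

```python
import os
import tempfile

import torch

x = torch.arange(4)
y = torch.zeros(4)

with tempfile.TemporaryDirectory() as tmpdir:
    list_path = os.path.join(tmpdir, 'xy-files')
    dict_path = os.path.join(tmpdir, 'mydict')

    torch.save([x, y], list_path)
    torch.save({'x': x, 'y': y}, dict_path)

    loaded_list = torch.load(list_path)
    loaded_dict = torch.load(dict_path)

# The container types and the tensors inside them are preserved
print(type(loaded_list).__name__, type(loaded_dict).__name__)
print(all(torch.equal(a, b) for a, b in zip([x, y], loaded_list)))
print(sorted(loaded_dict.keys()))
```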

4. (1) Loading and saving model parameters

    class MLP(nn.Module):
        def __init__(self):
            super().__init__()
            self.hidden = nn.Linear(20, 256)
            self.output = nn.Linear(256, 10)

        def forward(self, x):
            return self.output(F.relu(self.hidden(x)))


    net = MLP()
    X = torch.randn(size=(2, 20))
    Y = net(X)

    # Save the model parameters to the file mlp.params
    torch.save(net.state_dict(), 'mlp.params')
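What gets written to disk is the state dictionary, which maps parameter names to tensors. The sketch below lists those names and shapes for the MLP from the notes; the class is repeated so the block is self-contained, and the variable shapes is introduced only for illustration.

```python
import torch
import torch.nn.functional as F
from torch import nn


class MLP(nn.Module):
    def __init__(self):
        super().__init__()
        self.hidden = nn.Linear(20, 256)
        self.output = nn.Linear(256, 10)

    def forward(self, x):
        return self.output(F.relu(self.hidden(x)))


net = MLP()

# state_dict() maps each parameter name to its tensor
shapes = {name: tuple(t.shape) for name, t in net.state_dict().items()}
for name, shape in shapes.items():
    print(name, shape)
```

Note that nn.Linear stores its weight with shape (out_features, in_features), so the weight of the hidden layer has shape (256, 20).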

(2) Reading the parameters from the file

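    # Load the parameters stored in the file
    clone = MLP()  # instantiate the network first; its parameters are randomly initialized
    clone.load_state_dict(torch.load('mlp.params'))  # copy the parameters from mlp.params into clone
    print(clone.eval())  # eval() switches to evaluation mode and returns the module, so this prints the architecture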

(3) Verification

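    # Both networks now hold the same parameters, so the same input should give the same output
    X = torch.randn(size=(2, 20))
    Y = net(X)
    Y_clone = clone(X)
    print(Y_clone == Y)  # expected: True in every position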
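The whole save, load, and verify cycle can be condensed into one self-contained sketch. It assumes a temporary directory for the parameter file and uses torch.allclose rather than an elementwise comparison, so the result is a single boolean; both choices are illustrative rather than part of the notes.

```python
import os
import tempfile

import torch
import torch.nn.functional as F
from torch import nn


class MLP(nn.Module):
    def __init__(self):
        super().__init__()
        self.hidden = nn.Linear(20, 256)
        self.output = nn.Linear(256, 10)

    def forward(self, x):
        return self.output(F.relu(self.hidden(x)))


net = MLP()

with tempfile.TemporaryDirectory() as tmpdir:
    path = os.path.join(tmpdir, 'mlp.params')
    torch.save(net.state_dict(), path)

    # A fresh instance starts with different random parameters...
    clone = MLP()
    # ...which are then overwritten by the saved ones
    clone.load_state_dict(torch.load(path))

clone.eval()

# The same input should now produce the same output from both networks
X = torch.randn(2, 20)
with torch.no_grad():
    Y = net(X)
    Y_clone = clone(X)

same = torch.allclose(Y, Y_clone)
print(same)
```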