Style Transfer: Rendering a Photo with the Characteristics of an Oil Painting

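Contents

Preface

Integrated Program

Neural Transfer Using PyTorch (Style Transfer)

Introduction

Underlying Principle

Importing Packages and Selecting a Device

Packages Needed to Implement the Neural Transfer

Loading the Images

Loss Functions

Content Loss

Style Loss

Importing the Model

Gradient Descent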

Preface

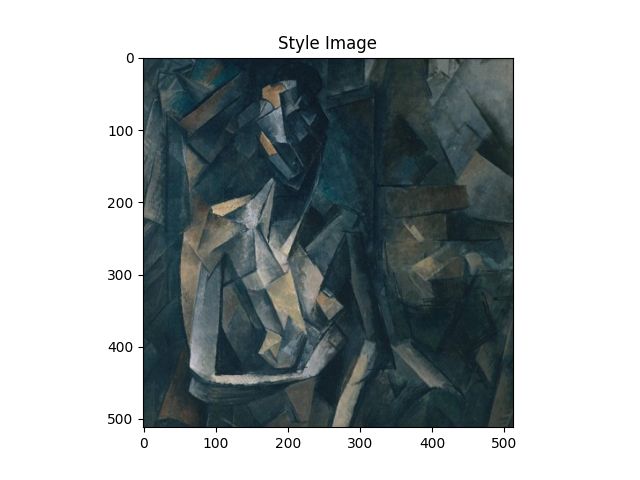

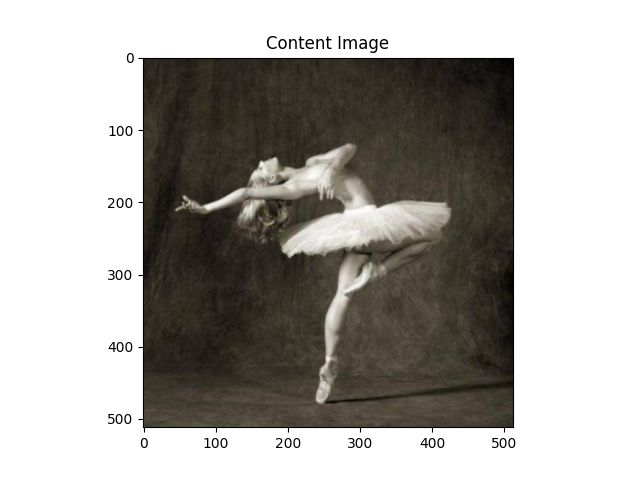

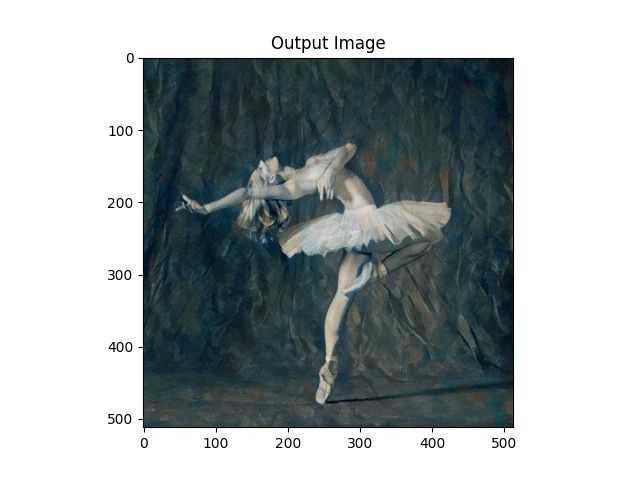

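While browsing the PyTorch documentation, I came across a tutorial on style transfer. It happened to relate to digital oil painting, which I have been interested in lately, so I did a rough translation of it for study purposes (I gave up polishing some of the later parts (T ^ T)). Given two images, one supplying the main content and one supplying the style, the program generates a third image. For example, given an ordinary portrait photo and an oil painting, it can render the portrait in the style of the painting. The result may look a little ugly, so it is worth picking a pair of images that look like a reasonable match.

I made a few adjustments to the program from the original document and marked them with TODO comments in the integrated program (PyCharm can locate TODO markers, after all ヽ(ー_ー)ノ). Three points need attention:

- Around line 20 (the `imsize` line): if the GPU is not configured properly, the program cannot run on the GPU and will be slower, though still usable. For a finer image, increase the numbers on that line, roughly to a multiple of 128 (change both if you cannot tell whether the GPU is actually in use). The run time will increase accordingly.

- Lines 35 and 37 (`style_img` and `content_img`) are where you plug in your own images. If you do not want to read the code, just change the paths there. Note that the two images must have the same pixel dimensions.

- Lines 56 and 58 (`import matplotlib` and `matplotlib.use('TkAgg')`) are two lines I added to the original program. Without them, the two function calls that follow fail with a backend error, probably because the matplotlib canvas is not set up correctly. This problem seems to be fairly common.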

Integrated Program

from __future__ import print_function

import torch
import torch.nn as nn
import torch.nn.functional as F
import torch.optim as optim

from PIL import Image
import matplotlib.pyplot as plt

import torchvision.transforms as transforms
import torchvision.models as models

import copy

device = torch.device("cuda" if torch.cuda.is_available() else "cpu")

# desired size of the output image
# TODO: change the two numbers below to make the image finer
imsize = 512 if torch.cuda.is_available() else 512  # the original tutorial uses 128 without a GPU

loader = transforms.Compose([
    transforms.Resize(imsize),  # scale imported image
    transforms.ToTensor()])  # transform it into a torch tensor


def image_loader(image_name):
    image = Image.open(image_name)
    # fake batch dimension required to fit network's input dimensions
    image = loader(image).unsqueeze(0)
    return image.to(device, torch.float)


# TODO: replace the paths below with your own images; the two images must have the same size
# style_img = image_loader("./images/picasso.jpg")
style_img = image_loader("./images/style.jpg")
# content_img = image_loader("./images/dancing.jpg")
content_img = image_loader("./images/content.jpg")

assert style_img.size() == content_img.size(), \
    "we need to import style and content images of the same size"

unloader = transforms.ToPILImage()  # reconvert into PIL image

plt.ion()


def imshow(tensor, title=None):
    image = tensor.cpu().clone()  # we clone the tensor to not do changes on it
    image = image.squeeze(0)  # remove the fake batch dimension
    image = unloader(image)
    plt.imshow(image)
    if title is not None:
        plt.title(title)
    plt.pause(0.001)  # pause a bit so that plots are updated


# TODO: find out why the backend must be configured; the canvas only works with these two lines
import matplotlib
matplotlib.use('TkAgg')

plt.figure()
imshow(style_img, title='Style Image')

plt.figure()
imshow(content_img, title='Content Image')


class ContentLoss(nn.Module):

    def __init__(self, target,):
        super(ContentLoss, self).__init__()
        # we 'detach' the target content from the tree used
        # to dynamically compute the gradient: this is a stated value,
        # not a variable. Otherwise the forward method of the criterion
        # will throw an error.
        self.target = target.detach()

    def forward(self, input):
        self.loss = F.mse_loss(input, self.target)
        return input


def gram_matrix(input):
    a, b, c, d = input.size()  # a=batch size(=1)
    # b=number of feature maps
    # (c,d)=dimensions of a f. map (N=c*d)

    features = input.view(a * b, c * d)  # resize F_XL into \hat F_XL

    G = torch.mm(features, features.t())  # compute the gram product

    # we 'normalize' the values of the gram matrix
    # by dividing by the number of elements in each feature map.
    return G.div(a * b * c * d)


class StyleLoss(nn.Module):

    def __init__(self, target_feature):
        super(StyleLoss, self).__init__()
        self.target = gram_matrix(target_feature).detach()

    def forward(self, input):
        G = gram_matrix(input)
        self.loss = F.mse_loss(G, self.target)
        return input


cnn = models.vgg19(pretrained=True).features.to(device).eval()

cnn_normalization_mean = torch.tensor([0.485, 0.456, 0.406]).to(device)
cnn_normalization_std = torch.tensor([0.229, 0.224, 0.225]).to(device)


# create a module to normalize input image so we can easily put it in a
# nn.Sequential
class Normalization(nn.Module):
    def __init__(self, mean, std):
        super(Normalization, self).__init__()
        # .view the mean and std to make them [C x 1 x 1] so that they can
        # directly work with image Tensor of shape [B x C x H x W].
        # B is batch size. C is number of channels. H is height and W is width.
        self.mean = torch.tensor(mean).view(-1, 1, 1)
        self.std = torch.tensor(std).view(-1, 1, 1)

    def forward(self, img):
        # normalize img
        return (img - self.mean) / self.std


# desired depth layers to compute style/content losses :
content_layers_default = ['conv_4']
style_layers_default = ['conv_1', 'conv_2', 'conv_3', 'conv_4', 'conv_5']


def get_style_model_and_losses(cnn, normalization_mean, normalization_std,
                               style_img, content_img,
                               content_layers=content_layers_default,
                               style_layers=style_layers_default):
    # normalization module
    normalization = Normalization(normalization_mean, normalization_std).to(device)

    # just in order to have an iterable access to or list of content/style
    # losses
    content_losses = []
    style_losses = []

    # assuming that cnn is a nn.Sequential, so we make a new nn.Sequential
    # to put in modules that are supposed to be activated sequentially
    model = nn.Sequential(normalization)

    i = 0  # increment every time we see a conv
    for layer in cnn.children():
        if isinstance(layer, nn.Conv2d):
            i += 1
            name = 'conv_{}'.format(i)
        elif isinstance(layer, nn.ReLU):
            name = 'relu_{}'.format(i)
            # The in-place version doesn't play very nicely with the ContentLoss
            # and StyleLoss we insert below. So we replace with out-of-place
            # ones here.
            layer = nn.ReLU(inplace=False)
        elif isinstance(layer, nn.MaxPool2d):
            name = 'pool_{}'.format(i)
        elif isinstance(layer, nn.BatchNorm2d):
            name = 'bn_{}'.format(i)
        else:
            raise RuntimeError('Unrecognized layer: {}'.format(layer.__class__.__name__))

        model.add_module(name, layer)

        if name in content_layers:
            # add content loss:
            target = model(content_img).detach()
            content_loss = ContentLoss(target)
            model.add_module("content_loss_{}".format(i), content_loss)
            content_losses.append(content_loss)

        if name in style_layers:
            # add style loss:
            target_feature = model(style_img).detach()
            style_loss = StyleLoss(target_feature)
            model.add_module("style_loss_{}".format(i), style_loss)
            style_losses.append(style_loss)

    # now we trim off the layers after the last content and style losses
    for i in range(len(model) - 1, -1, -1):
        if isinstance(model[i], ContentLoss) or isinstance(model[i], StyleLoss):
            break

    model = model[:(i + 1)]

    return model, style_losses, content_losses


input_img = content_img.clone()
# if you want to use white noise instead uncomment the below line:
# input_img = torch.randn(content_img.data.size(), device=device)

# add the original input image to the figure:
plt.figure()
imshow(input_img, title='Input Image')


def get_input_optimizer(input_img):
    # this line to show that input is a parameter that requires a gradient
    optimizer = optim.LBFGS([input_img])
    return optimizer


def run_style_transfer(cnn, normalization_mean, normalization_std,
                       content_img, style_img, input_img, num_steps=300,
                       style_weight=1000000, content_weight=1):
    """Run the style transfer."""
    print('Building the style transfer model..')
    model, style_losses, content_losses = get_style_model_and_losses(cnn,
        normalization_mean, normalization_std, style_img, content_img)

    # We want to optimize the input and not the model parameters so we
    # update all the requires_grad fields accordingly
    input_img.requires_grad_(True)
    model.requires_grad_(False)

    optimizer = get_input_optimizer(input_img)

    print('Optimizing..')
    run = [0]
    while run[0] <= num_steps:

        def closure():
            # correct the values of updated input image
            with torch.no_grad():
                input_img.clamp_(0, 1)

            optimizer.zero_grad()
            model(input_img)
            style_score = 0
            content_score = 0

            for sl in style_losses:
                style_score += sl.loss
            for cl in content_losses:
                content_score += cl.loss

            style_score *= style_weight
            content_score *= content_weight

            loss = style_score + content_score
            loss.backward()

            run[0] += 1
            if run[0] % 50 == 0:
                print("run {}:".format(run))
                print('Style Loss : {:4f} Content Loss: {:4f}'.format(
                    style_score.item(), content_score.item()))
                print()

            return style_score + content_score

        optimizer.step(closure)

    # a last correction...
    with torch.no_grad():
        input_img.clamp_(0, 1)

    return input_img


output = run_style_transfer(cnn, cnn_normalization_mean, cnn_normalization_std,
                            content_img, style_img, input_img)

plt.figure()
imshow(output, title='Output Image')

# sphinx_gallery_thumbnail_number = 4
plt.ioff()
plt.show()

The polished translation of the document follows.

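Neural Transfer Using PyTorch (Style Transfer)

Author: Alexis Jacq

Edited by: Winston Herring

Original link: Neural Transfer Using PyTorch (PyTorch Tutorials 1.12.1+cu102 documentation)

(For study purposes only; will be removed on request in case of infringement.)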

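Introduction

This tutorial explains how to implement the Neural-Style algorithm developed by Leon A. Gatys, Alexander S. Ecker and Matthias Bethge. Neural-Style, or Neural-Transfer, lets you take an image and reproduce it in a new artistic style. The algorithm takes three images, an input image, a content-image and a style-image, and changes the input so that it has the content of the content-image and the artistic style of the style-image.

Underlying Principle

The principle is simple: we define two distances, one for the content ($D_C$) and one for the style ($D_S$). $D_C$ measures how different the content is between two images (the input and the content image), while $D_S$ measures how different the style is between two images (the input and the style image). Then we take the third image, the input, and transform it to minimize both its content distance from the content image and its style distance from the style image. Now we can import the necessary packages and begin the neural transfer.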
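As a compact way to read the principle above, the quantity being minimized can be written as a weighted sum of the two distances. The symbols $w_C$ and $w_S$ are names assumed here for illustration; in the program they correspond to the `content_weight` and `style_weight` arguments of `run_style_transfer` (1 and 1000000 by default).

```latex
\mathcal{L}(X) \;=\; w_C \, D_C(X, C) \;+\; w_S \, D_S(X, S),
\qquad
X^{*} \;=\; \arg\min_{X} \, \mathcal{L}(X)
```

Here $X$ is the input image, $C$ the content image and $S$ the style image. Only $X$ is changed during optimization; the network weights stay fixed.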

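Importing Packages and Selecting a Device

Below is a list of the packages needed to implement the neural transfer.

- torch, torch.nn, numpy (indispensable packages for neural networks with PyTorch)

- torch.optim (efficient gradient descent)

- PIL, PIL.Image, matplotlib.pyplot (load and display images)

- torchvision.transforms (transform PIL images into tensors)

- torchvision.models (train or load pretrained models)

- copy (to deep copy the models; system package)

The imports are as follows: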

from __future__ import print_function

import torch

import torch.nn as nn

import torch.nn.functional as F

import torch.optim as optim

from PIL import Image

import matplotlib.pyplot as plt

import torchvision.transforms as transforms

import torchvision.models as models

import copy

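Next, we need to choose which device to run the network on and import the content and style images. Running the neural transfer algorithm on large images takes longer, and it goes much faster on a GPU. We can use torch.cuda.is_available() to detect whether a GPU is available. We then set the torch.device that is used throughout the tutorial. The .to(device) method is also used to move tensors or modules to the chosen device.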

device = torch.device("cuda" if torch.cuda.is_available() else "cpu")

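Loading the Images

Now we will import the style and content images. The original PIL images have values between 0 and 255, but when transformed into torch tensors their values are converted to lie between 0 and 1. The images also need to be resized to have the same dimensions. An important detail to note is that neural networks from the torch library are trained with tensor values ranging from 0 to 1. If you try to feed the networks with 0 to 255 tensor images, the activated feature maps will be unable to sense the intended content and style. However, pretrained networks from the Caffe library are trained with 0 to 255 tensor images.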
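The value ranges mentioned above can be illustrated with a tiny sketch. It uses plain Python lists rather than PIL or torchvision, and the helper names `to_unit_range` and `to_byte_range` are hypothetical; it only mirrors the divide-by-255 scaling that applies when an 8-bit image is turned into a tensor.

```python
def to_unit_range(pixels):
    """Map 8-bit channel values (0..255) to floats in [0.0, 1.0]."""
    return [p / 255.0 for p in pixels]


def to_byte_range(values):
    """Inverse mapping: floats in [0.0, 1.0] back to integers in 0..255."""
    return [round(v * 255.0) for v in values]


row = [0, 51, 255]            # three sample channel values
scaled = to_unit_range(row)   # what the network is expected to see
restored = to_byte_range(scaled)
print(scaled, restored)
```

Feeding `row` directly instead of `scaled` would hand the network values up to 255 times larger than the ones it was trained on, which is the mismatch the paragraph warns about.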

Note

The original tutorial links to the two images it needs: picasso.jpg and dancing.jpg. Download these two images and add them to a directory named images in your current working directory.

# desired size of the output image
imsize = 512 if torch.cuda.is_available() else 128  # use a smaller size if there is no GPU

loader = transforms.Compose([
    transforms.Resize(imsize),  # scale the imported image
    transforms.ToTensor()])  # transform it into a torch tensor


def image_loader(image_name):
    image = Image.open(image_name)
    # fake batch dimension required to fit the network's input dimensions
    image = loader(image).unsqueeze(0)
    return image.to(device, torch.float)


style_img = image_loader("./data/images/neural-style/picasso.jpg")
content_img = image_loader("./data/images/neural-style/dancing.jpg")

assert style_img.size() == content_img.size(), \
    "we need to import style and content images of the same size"

Now, let's create a function that displays an image by converting a copy of it back to PIL format and showing the copy with plt.imshow. We will display the content and style images to make sure they were imported correctly.

unloader = transforms.ToPILImage()  # reconvert into a PIL image

plt.ion()


def imshow(tensor, title=None):
    image = tensor.cpu().clone()  # clone the tensor so the original is not changed
    image = image.squeeze(0)  # remove the fake batch dimension
    image = unloader(image)
    plt.imshow(image)
    if title is not None:
        plt.title(title)
    plt.pause(0.001)  # pause a bit so that plots are updated


plt.figure()
imshow(style_img, title='Style Image')

plt.figure()
imshow(content_img, title='Content Image')

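Loss Functions

Content Loss

The content loss is a function that represents a weighted version of the content distance for an individual layer. The function takes the feature maps $F_{XL}$ of a layer $L$ in a network processing input $X$ and returns the weighted content distance $w_{CL} \cdot D_C^L(X, C)$ between the image $X$ and the content image $C$. The feature maps of the content image, $F_{CL}$, must be known by the function in order to calculate the content distance. We implement this function as a torch module with a constructor that takes $F_{CL}$ as an input. The distance $\|F_{XL} - F_{CL}\|^2$ is the mean square error between the two sets of feature maps, and can be computed using nn.MSELoss.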
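To make the mean square error concrete, here is a minimal sketch on flattened feature maps represented as plain Python lists. The function name `mse` and the sample values are assumptions for illustration; the averaging over all elements matches the default behaviour of the mean-squared-error loss used in the tutorial code.

```python
def mse(xs, ys):
    """Mean of the squared element-wise differences of two equal-length lists."""
    if len(xs) != len(ys):
        raise ValueError("feature maps must have the same number of elements")
    return sum((x - y) ** 2 for x, y in zip(xs, ys)) / len(xs)


f_x = [1.0, 2.0, 3.0, 4.0]   # hypothetical flattened features of the input image
f_c = [1.0, 2.0, 5.0, 8.0]   # hypothetical flattened features of the content image

content_distance = mse(f_x, f_c)   # (0 + 0 + 4 + 16) / 4
print(content_distance)
```

The distance is zero exactly when the two sets of feature maps coincide, which is what the optimization pushes the input image toward at the chosen content layer.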

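We will add this content loss module directly after the convolution layer(s) used to compute the content distance. This way, each time the network is fed an input image, the content losses are computed at the desired layers, and because of autograd all the gradients are computed as well. To make the content loss layer transparent, we must define a forward method that computes the content loss and then returns the layer's input. The computed loss is saved as an attribute of the module.

class ContentLoss(nn.Module):

    def __init__(self, target,):
        super(ContentLoss, self).__init__()
        # we 'detach' the target content from the tree used
        # to dynamically compute the gradient: this is a stated value,
        # not a variable. Otherwise the forward method of the criterion
        # will throw an error.
        self.target = target.detach()

    def forward(self, input):
        self.loss = F.mse_loss(input, self.target)
        return input

Important detail: although this module is named ContentLoss, it is not a true PyTorch Loss function. If you want to define your content loss as a PyTorch Loss function, you have to create a PyTorch autograd function to recompute/implement the gradient manually in the backward method.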

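Style Loss

The style loss module is implemented similarly to the content loss module. It acts as a transparent layer in the network that computes the style loss of that layer. In order to calculate the style loss, we need to compute the gram matrix $G_{XL}$. A gram matrix is the result of multiplying a given matrix by its transpose. In this application the given matrix is a reshaped version of the feature maps $F_{XL}$ of a layer $L$. $F_{XL}$ is reshaped to form $\hat{F}_{XL}$, a $K \times N$ matrix, where $K$ is the number of feature maps at layer $L$ and $N$ is the length of any vectorized feature map $F_{XL}^k$. For example, the first row of $\hat{F}_{XL}$ corresponds to the first vectorized feature map $F_{XL}^1$.

Finally, the gram matrix must be normalized by dividing each element by the total number of elements in the matrix. This normalization counteracts the fact that $\hat{F}_{XL}$ matrices with a large $N$ dimension yield larger values in the gram matrix. These larger values would cause the first layers (before the pooling layers) to have a greater impact during the gradient descent. Style features tend to be in the deeper layers of the network, so this normalization step is crucial.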
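The gram matrix and its normalization can be checked by hand on a tiny example. The sketch below works on a plain list of lists (K rows, one per vectorized feature map, N values each) instead of a tensor, and assumes a batch size of 1, so the divisor K * N plays the role of `a * b * c * d` in the tutorial code. The name `gram_matrix_lists` is hypothetical.

```python
def gram_matrix_lists(features):
    """Normalized gram matrix of a K x N list of lists (batch size assumed to be 1)."""
    k = len(features)        # number of feature maps
    n = len(features[0])     # length of each vectorized feature map
    # Entry (i, j) is the dot product of feature map i with feature map j.
    gram = [[sum(a * b for a, b in zip(features[i], features[j]))
             for j in range(k)]
            for i in range(k)]
    total = k * n            # total number of elements in the feature matrix
    return [[g / total for g in row] for row in gram]


f_hat = [[1.0, 2.0],
         [3.0, 4.0]]         # K = 2 feature maps, N = 2 values each
G = gram_matrix_lists(f_hat)
print(G)
```

Before normalization the products are 5, 11, 11 and 25; dividing by K * N = 4 gives the printed values. The result is always a symmetric K x K matrix, regardless of N.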

def gram_matrix(input):
    a, b, c, d = input.size()  # a=batch size(=1)
    # b=number of feature maps
    # (c,d)=dimensions of a f. map (N=c*d)

    features = input.view(a * b, c * d)  # resize F_XL into \hat F_XL

    G = torch.mm(features, features.t())  # compute the gram product

    # we 'normalize' the values of the gram matrix
    # by dividing by the number of elements in each feature map.
    return G.div(a * b * c * d)

Now the style loss module looks almost exactly like the content loss module. The style distance is also computed as the mean square error, this time between $G_{XL}$ and $G_{SL}$.

class StyleLoss(nn.Module):

    def __init__(self, target_feature):
        super(StyleLoss, self).__init__()
        self.target = gram_matrix(target_feature).detach()

    def forward(self, input):
        G = gram_matrix(input)
        self.loss = F.mse_loss(G, self.target)
        return input

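Importing the Model

Now we need to import a pretrained neural network. We will use a 19-layer VGG network like the one used in the paper.

PyTorch's implementation of VGG is a module divided into two child Sequential modules: features (containing convolution and pooling layers) and classifier (containing fully connected layers). We will use the features module because we need the output of the individual convolution layers to measure the content and style losses. Some layers behave differently during training than during evaluation, so we must set the network to evaluation mode using .eval().

cnn = models.vgg19(pretrained=True).features.to(device).eval()

Output: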

/opt/conda/lib/python3.7/site-packages/torchvision/models/_utils.py:209: UserWarning:

The parameter 'pretrained' is deprecated since 0.13 and will be removed in 0.15, please use 'weights' instead.

/opt/conda/lib/python3.7/site-packages/torchvision/models/_utils.py:223: UserWarning:

Arguments other than a weight enum or `None` for 'weights' are deprecated since 0.13 and will be removed in 0.15. The current behavior is equivalent to passing `weights=VGG19_Weights.IMAGENET1K_V1`. You can also use `weights=VGG19_Weights.DEFAULT` to get the most up-to-date weights.

Downloading: "https://download.pytorch.org/models/vgg19-dcbb9e9d.pth" to /var/lib/jenkins/.cache/torch/hub/checkpoints/vgg19-dcbb9e9d.pth

100%|##########| 548M/548M [00:02<00:00, 218MB/s]

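Additionally, VGG networks are trained on images with each channel normalized by mean=[0.485, 0.456, 0.406] and std=[0.229, 0.224, 0.225]. We will use these values to normalize the image before sending it into the network.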
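The per-channel normalization is simple arithmetic, shown here for a single RGB pixel using plain Python. The function name `normalize_pixel` is an assumption for illustration; the mean and std values are the ones quoted above.

```python
MEAN = [0.485, 0.456, 0.406]   # per-channel mean (R, G, B)
STD = [0.229, 0.224, 0.225]    # per-channel standard deviation (R, G, B)


def normalize_pixel(rgb, mean=MEAN, std=STD):
    """Apply (value - mean) / std independently to each channel of one pixel."""
    return [(v - m) / s for v, m, s in zip(rgb, mean, std)]


average_pixel = normalize_pixel([0.485, 0.456, 0.406])  # a pixel equal to the mean
white_pixel = normalize_pixel([1.0, 1.0, 1.0])          # a pure white pixel
print(average_pixel, white_pixel)
```

A pixel equal to the channel means maps to zero in every channel, while a white pixel ends up a little above 2 in each channel. The same formula is applied to whole image tensors by broadcasting the mean and std over height and width.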

cnn_normalization_mean = torch.tensor([0.485, 0.456, 0.406]).to(device)
cnn_normalization_std = torch.tensor([0.229, 0.224, 0.225]).to(device)


# create a module to normalize input image so we can easily put it in a
# nn.Sequential
class Normalization(nn.Module):
    def __init__(self, mean, std):
        super(Normalization, self).__init__()
        # .view the mean and std to make them [C x 1 x 1] so that they can
        # directly work with image Tensor of shape [B x C x H x W].
        # B is batch size. C is number of channels. H is height and W is width.
        self.mean = torch.tensor(mean).view(-1, 1, 1)
        self.std = torch.tensor(std).view(-1, 1, 1)

    def forward(self, img):
        # normalize img
        return (img - self.mean) / self.std

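A Sequential module contains an ordered list of child modules. For instance, vgg19.features contains a sequence (Conv2d, ReLU, MaxPool2d, Conv2d, ReLU...) aligned in the right order of depth. We need to add our content loss and style loss layers immediately after the convolution layers they are detecting. To do this, we must create a new Sequential module that has the content loss and style loss modules correctly inserted.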
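The naming scheme that decides where the loss modules go can be sketched without PyTorch. In the sketch below, layers are represented by short strings instead of module objects, and the helper names `name_layers` and `insertion_points` are hypothetical. The rule it illustrates is the one used in the tutorial code: a counter is incremented at every convolution, and every layer is named after its kind plus the current counter.

```python
def name_layers(kinds):
    """Name each layer '<kind>_<i>', where i counts the convolutions seen so far."""
    names = []
    conv_count = 0
    for kind in kinds:
        if kind == "conv":
            conv_count += 1
        elif kind not in ("relu", "pool", "bn"):
            raise RuntimeError("Unrecognized layer: {}".format(kind))
        names.append("{}_{}".format(kind, conv_count))
    return names


def insertion_points(names, targets):
    """Indices of the layers after which a loss module would be inserted."""
    return [i for i, name in enumerate(names) if name in targets]


# A hypothetical short layer sequence, not the full VGG-19 feature extractor.
kinds = ["conv", "relu", "conv", "relu", "pool", "conv", "relu"]
names = name_layers(kinds)
points = insertion_points(names, ["conv_1", "conv_3"])
print(names, points)
```

Note that a pooling layer takes the number of the convolution before it, so the first pooling layer in this sequence is named pool_2. Everything after the last insertion point can be dropped, since it no longer influences any loss.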

# desired depth layers to compute style/content losses :
content_layers_default = ['conv_4']
style_layers_default = ['conv_1', 'conv_2', 'conv_3', 'conv_4', 'conv_5']


def get_style_model_and_losses(cnn, normalization_mean, normalization_std,
                               style_img, content_img,
                               content_layers=content_layers_default,
                               style_layers=style_layers_default):
    # normalization module
    normalization = Normalization(normalization_mean, normalization_std).to(device)

    # just in order to have an iterable access to or list of content/style
    # losses
    content_losses = []
    style_losses = []

    # assuming that cnn is a nn.Sequential, so we make a new nn.Sequential
    # to put in modules that are supposed to be activated sequentially
    model = nn.Sequential(normalization)

    i = 0  # increment every time we see a conv
    for layer in cnn.children():
        if isinstance(layer, nn.Conv2d):
            i += 1
            name = 'conv_{}'.format(i)
        elif isinstance(layer, nn.ReLU):
            name = 'relu_{}'.format(i)
            # The in-place version doesn't play very nicely with the ContentLoss
            # and StyleLoss we insert below. So we replace with out-of-place
            # ones here.
            layer = nn.ReLU(inplace=False)
        elif isinstance(layer, nn.MaxPool2d):
            name = 'pool_{}'.format(i)
        elif isinstance(layer, nn.BatchNorm2d):
            name = 'bn_{}'.format(i)
        else:
            raise RuntimeError('Unrecognized layer: {}'.format(layer.__class__.__name__))

        model.add_module(name, layer)

        if name in content_layers:
            # add content loss:
            target = model(content_img).detach()
            content_loss = ContentLoss(target)
            model.add_module("content_loss_{}".format(i), content_loss)
            content_losses.append(content_loss)

        if name in style_layers:
            # add style loss:
            target_feature = model(style_img).detach()
            style_loss = StyleLoss(target_feature)
            model.add_module("style_loss_{}".format(i), style_loss)
            style_losses.append(style_loss)

    # now we trim off the layers after the last content and style losses
    for i in range(len(model) - 1, -1, -1):
        if isinstance(model[i], ContentLoss) or isinstance(model[i], StyleLoss):
            break

    model = model[:(i + 1)]

    return model, style_losses, content_losses

Next, we select the input image. You can use a copy of the content image or white noise.

input_img = content_img.clone()

# if you want to use white noise instead uncomment the below line:

# input_img = torch.randn(content_img.data.size(), device=device)

# add the original input image to the figure:

plt.figure()

imshow(input_img, title='Input Image')

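Gradient Descent

As Leon Gatys, the author of the algorithm, suggested, we will use the L-BFGS algorithm to run our gradient descent. Unlike training a network, we want to train the input image in order to minimize the content/style losses. We will create a PyTorch L-BFGS optimizer, optim.LBFGS, and pass our image to it as the tensor to optimize.

def get_input_optimizer(input_img):
    # this line to show that input is a parameter that requires a gradient
    optimizer = optim.LBFGS([input_img])
    return optimizer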

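Finally, we must define a function that performs the neural transfer. For each iteration of the network, it is fed an updated input and computes new losses. We will run the backward methods of each loss module to dynamically compute their gradients. The optimizer requires a "closure" function, which re-evaluates the module and returns the loss.

We still have one final constraint to address. The network may try to optimize the input with values that exceed the 0 to 1 tensor range of the image. We can address this by correcting the input values to lie between 0 and 1 every time the network is run.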
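The interplay of the closure, the evaluation counter and the clamping can be seen in a toy version with a single number in place of an image. This is only a sketch under stated assumptions: plain gradient descent is swapped in for L-BFGS, the loss is a simple squared distance to a target, and the names `clamp` and `minimize` are hypothetical.

```python
def clamp(value, low=0.0, high=1.0):
    """Restrict a value to the closed interval [low, high]."""
    return max(low, min(high, value))


def minimize(start, target, steps=50, lr=0.1):
    """Toy optimization of one value; returns (final value, closure calls)."""
    x = [start]    # mutable holder, standing in for the image tensor
    calls = [0]    # mutable counter, updated from inside the closure

    def closure():
        x[0] = clamp(x[0])              # correct the value before evaluating
        calls[0] += 1
        loss = (x[0] - target) ** 2
        grad = 2.0 * (x[0] - target)    # derivative of the squared distance
        return loss, grad

    for _ in range(steps):
        _, grad = closure()
        x[0] -= lr * grad               # plain gradient step (not L-BFGS)

    x[0] = clamp(x[0])                  # a last correction
    return x[0], calls[0]


inside = minimize(0.2, 0.6)     # target within the valid range
outside = minimize(0.5, 1.5)    # target outside the valid range
print(inside, outside)
```

With a target outside the valid range, the value is pulled back to the boundary every time the closure runs. The counter lives inside the closure because an optimizer such as L-BFGS may evaluate the closure several times within a single step, so counting steps in the outer loop would not reflect the number of evaluations.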

def run_style_transfer(cnn, normalization_mean, normalization_std,
                       content_img, style_img, input_img, num_steps=300,
                       style_weight=1000000, content_weight=1):
    """Run the style transfer."""
    print('Building the style transfer model..')
    model, style_losses, content_losses = get_style_model_and_losses(cnn,
        normalization_mean, normalization_std, style_img, content_img)

    # We want to optimize the input and not the model parameters so we
    # update all the requires_grad fields accordingly
    input_img.requires_grad_(True)
    model.requires_grad_(False)

    optimizer = get_input_optimizer(input_img)

    print('Optimizing..')
    run = [0]
    while run[0] <= num_steps:

        def closure():
            # correct the values of updated input image
            with torch.no_grad():
                input_img.clamp_(0, 1)

            optimizer.zero_grad()
            model(input_img)
            style_score = 0
            content_score = 0

            for sl in style_losses:
                style_score += sl.loss
            for cl in content_losses:
                content_score += cl.loss

            style_score *= style_weight
            content_score *= content_weight

            loss = style_score + content_score
            loss.backward()

            run[0] += 1
            if run[0] % 50 == 0:
                print("run {}:".format(run))
                print('Style Loss : {:4f} Content Loss: {:4f}'.format(
                    style_score.item(), content_score.item()))
                print()

            return style_score + content_score

        optimizer.step(closure)

    # a last correction...
    with torch.no_grad():
        input_img.clamp_(0, 1)

    return input_img

Finally, we can run the algorithm.

output = run_style_transfer(cnn, cnn_normalization_mean, cnn_normalization_std,

content_img, style_img, input_img)

plt.figure()

imshow(output, title='Output Image')

# sphinx_gallery_thumbnail_number = 4

plt.ioff()

plt.show()

Building the style transfer model..

/var/lib/jenkins/workspace/advanced_source/neural_style_tutorial.py:287: UserWarning:

To copy construct from a tensor, it is recommended to use sourceTensor.clone().detach() or sourceTensor.clone().detach().requires_grad_(True), rather than torch.tensor(sourceTensor).

/var/lib/jenkins/workspace/advanced_source/neural_style_tutorial.py:288: UserWarning:

To copy construct from a tensor, it is recommended to use sourceTensor.clone().detach() or sourceTensor.clone().detach().requires_grad_(True), rather than torch.tensor(sourceTensor).

Optimizing..

run [50]:

Style Loss : 4.064722 Content Loss: 4.152285

run [100]:

Style Loss : 1.145136 Content Loss: 3.035917

run [150]:

Style Loss : 0.715922 Content Loss: 2.656822

run [200]:

Style Loss : 0.485102 Content Loss: 2.500479

run [250]:

Style Loss : 0.348987 Content Loss: 2.406870

run [300]:

Style Loss : 0.265431 Content Loss: 2.350914