MNIST Classification with PyTorch

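The work is divided into four steps: first load the dataset, second build the model, third set up the loss function and the optimizer, and fourth train and test the model.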

First, import the required libraries.

    import torch
    import torch.nn.functional as F
    from torchvision import transforms
    from torchvision import datasets
    from torch.utils.data import DataLoader
    import torch.optim as optim

Next comes the first step, loading the dataset. The raw images need to be transformed before they can be fed to the model.

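    batch_size = 64  # mini-batch size used by the data loaders

    # Convert each image to a torch tensor, then normalize it with the
    # MNIST mean (0.1307) and standard deviation (0.3081)
    transform = transforms.Compose([
        transforms.ToTensor(),
        transforms.Normalize((0.1307,), (0.3081,))
    ])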
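
To make the normalization step concrete, here is a minimal plain-Python sketch of the arithmetic that `ToTensor` followed by `Normalize((0.1307,), (0.3081,))` applies to each pixel: scale the 0 to 255 value into [0, 1], then subtract the mean and divide by the standard deviation. The helper name `normalize_pixel` is hypothetical and exists only for illustration; the real transforms operate on whole tensors rather than single values.

```python
MNIST_MEAN = 0.1307  # mean passed to Normalize in this post
MNIST_STD = 0.3081   # standard deviation passed to Normalize in this post


def normalize_pixel(raw_value: int) -> float:
    """Hypothetical helper: mimic ToTensor + Normalize for one 0-255 pixel."""
    scaled = raw_value / 255.0  # ToTensor maps 0..255 to 0.0..1.0
    return (scaled - MNIST_MEAN) / MNIST_STD


if __name__ == "__main__":
    # Show how black, mid-gray, and white pixels are mapped
    for raw in (0, 128, 255):
        print(raw, round(normalize_pixel(raw), 4))
```

With these constants a black pixel maps to roughly -0.42 and a white pixel to roughly 2.82, so the inputs the network sees are centered near zero instead of lying in [0, 1].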

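    # download=False assumes the dataset already exists under root;
    # set download=True to fetch it on first use
    train_dataset = datasets.MNIST(root='../dataset/mnist',
                                   train=True,
                                   download=False,
                                   transform=transform)
    train_loader = DataLoader(train_dataset,
                              shuffle=True,
                              batch_size=batch_size)

    test_dataset = datasets.MNIST(root='../dataset/mnist',
                                  train=False,
                                  download=False,
                                  transform=transform)
    # Evaluate on the held-out test split, not on the training data
    test_loader = DataLoader(test_dataset,
                             shuffle=False,
                             batch_size=batch_size)

The second step is to build the model: a fully connected network with five linear layers. Each of the first four layers is followed by a ReLU nonlinearity, and the last layer outputs 10 values, one for each of the 10 classes.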
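
As a rough illustration of this architecture, the following plain-Python sketch lists the layer widths used by the `Net` class in this post (784, 512, 256, 128, 64, 10) and counts the trainable parameters of each linear layer as `in_features * out_features + out_features`, that is, weights plus biases. The names `LAYER_SIZES`, `count_linear_params`, and `total_params` are hypothetical and used only for this example.

```python
# Layer widths of the network in this post: input, four hidden layers, output
LAYER_SIZES = [784, 512, 256, 128, 64, 10]


def count_linear_params(in_features: int, out_features: int) -> int:
    """Weights (in * out) plus one bias per output unit."""
    return in_features * out_features + out_features


def total_params(sizes) -> int:
    """Sum the parameters of every consecutive pair of layer widths."""
    return sum(count_linear_params(a, b) for a, b in zip(sizes, sizes[1:]))


if __name__ == "__main__":
    for a, b in zip(LAYER_SIZES, LAYER_SIZES[1:]):
        print(f"Linear({a}, {b}): {count_linear_params(a, b)} parameters")
    print("total:", total_params(LAYER_SIZES))
```

Under these sizes the network has 575,050 trainable parameters, and the first layer alone accounts for 401,920 of them.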

    class Net(torch.nn.Module):
        def __init__(self):
            super(Net, self).__init__()
            self.l1 = torch.nn.Linear(784, 512)
            self.l2 = torch.nn.Linear(512, 256)
            self.l3 = torch.nn.Linear(256, 128)
            self.l4 = torch.nn.Linear(128, 64)
            self.l5 = torch.nn.Linear(64, 10)

        def forward(self, x):
            x = x.view(-1, 784)  # flatten each 1x28x28 image into a 784-element vector
            x = F.relu(self.l1(x))
            x = F.relu(self.l2(x))
            x = F.relu(self.l3(x))
            x = F.relu(self.l4(x))
            return self.l5(x)  # raw scores (logits); no activation on the last layer

    model = Net()

The third step is to set up the loss function and the optimizer. Cross-entropy loss is used here, and the model is optimized with stochastic gradient descent (SGD).
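
To illustrate what the cross-entropy loss measures, here is a minimal plain-Python sketch for a single sample: apply a numerically stable log-softmax to the raw outputs (logits) and take the negative log-probability of the true class. This mirrors how `torch.nn.CrossEntropyLoss` treats one sample under its default settings, which is why the last layer of the network returns logits without a softmax. The function name `cross_entropy_single` is hypothetical.

```python
import math


def cross_entropy_single(logits, target):
    """Negative log-softmax probability of the target class for one sample."""
    max_logit = max(logits)  # subtract the max for numerical stability
    log_sum_exp = max_logit + math.log(
        sum(math.exp(z - max_logit) for z in logits)
    )
    return log_sum_exp - logits[target]


if __name__ == "__main__":
    # Ten equal logits: the model is maximally unsure, so the loss is ln(10)
    uniform = [0.0] * 10
    print(cross_entropy_single(uniform, 3), math.log(10))

    # A large logit on class 3: low loss if 3 is correct, high loss otherwise
    confident = [0.0] * 10
    confident[3] = 10.0
    print(cross_entropy_single(confident, 3))
    print(cross_entropy_single(confident, 4))
```

The loss is small when the logit of the correct class dominates and grows quickly when the model is confident about a wrong class.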

    criterion = torch.nn.CrossEntropyLoss()
    optimizer = torch.optim.SGD(model.parameters(), lr=0.01, momentum=0.5)

The fourth step is to train and test the model.
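
Each `optimizer.step()` call in the training loop applies one SGD-with-momentum update. As a minimal sketch for a single scalar parameter, assuming `lr=0.01` and `momentum=0.5` as in this post and no dampening, weight decay, or Nesterov momentum, the rule is: update a velocity as `v = momentum * v + grad`, then move the parameter by `-lr * v`. The function `sgd_momentum_step` and the toy objective are hypothetical and only illustrate the arithmetic.

```python
def sgd_momentum_step(param, grad, velocity, lr=0.01, momentum=0.5):
    """One SGD-with-momentum update for a scalar parameter (illustrative)."""
    velocity = momentum * velocity + grad  # accumulate past gradients
    param = param - lr * velocity          # step against the velocity
    return param, velocity


if __name__ == "__main__":
    # Toy objective f(w) = w**2, whose gradient is 2 * w
    w, v = 1.0, 0.0
    for step in range(5):
        grad = 2.0 * w
        w, v = sgd_momentum_step(w, grad, v)
        print(step, w)
```

Because the velocity carries over part of the previous update, consecutive gradients pointing in the same direction produce progressively larger steps than plain SGD would take.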

def train(epoch):

running_loss = 0 #损失初始化

for batch_idx, data in enumerate(train_loader,0):

input, target = data

optimizer.zero_grad() #将个batch的梯度清零

output = model(input)

loss = criterion(output, target) #计算损失

loss.backward() #梯度反向传导

optimizer.step() #单次优化参数更新

running_loss += loss.item()

#没300个batch打印一次结果

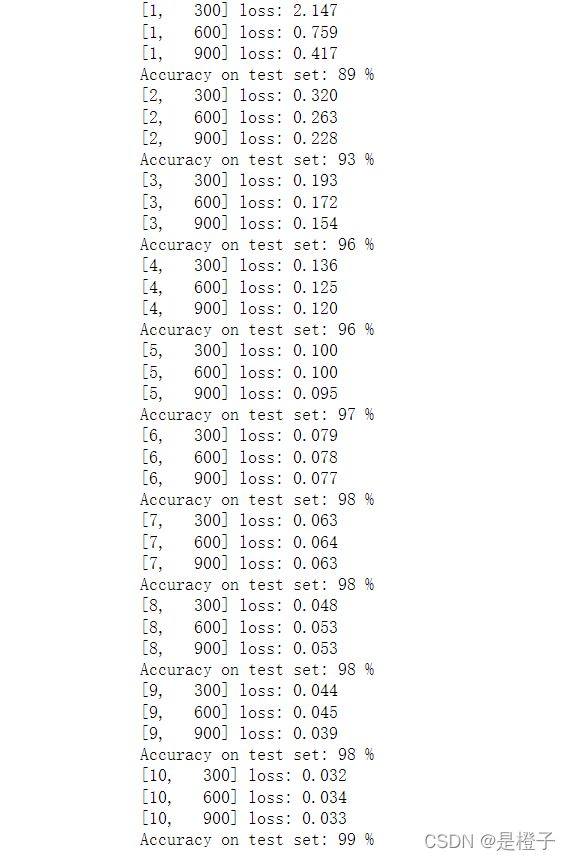

if batch_idx % 300 ==299:

print('[%d, %5d] loss: %.3f' % (epoch + 1, batch_idx + 1, running_loss / 300))

running_loss = 0.0def test():

correct = 0

total = 0

with torch.no_grad(): #测试过程中不用反向传导

for data in test_loader:

images, labels = data

output = model(images)

_, predicted = torch.max(output.data, dim=1) #找到输出值最大的类别

total += labels.size(0)

correct += (predicted == labels).sum().item() #计算准确率

print('Accuracy on test set: %d %%' % (100 * correct / total))最后运行

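    if __name__ == '__main__':
        for epoch in range(10):
            train(epoch)
            test()  # evaluate after every epoch

After 10 epochs of training, the reported accuracy on the test set reached 99%, which is a decent result for such a simple network. Treat this figure as indicative: it is a true test accuracy only when test_loader is built from test_dataset rather than from the training data.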
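
For reference, the accuracy computed in `test()` boils down to taking the index of the largest output for each sample and comparing it with the label. Below is a minimal plain-Python sketch with made-up scores; the data and the names `argmax` and `accuracy_percent` are illustrative assumptions, not results from this post.

```python
def argmax(values):
    """Index of the largest element, like torch.max(..., dim=1) for one row."""
    return max(range(len(values)), key=lambda i: values[i])


def accuracy_percent(outputs, labels):
    """Percentage of samples whose highest-scoring class matches the label."""
    correct = sum(
        1 for scores, label in zip(outputs, labels) if argmax(scores) == label
    )
    return 100.0 * correct / len(labels)


if __name__ == "__main__":
    # Made-up scores for four samples and three classes
    outputs = [
        [0.1, 2.0, -1.0],
        [1.5, 0.2, 0.3],
        [0.0, 0.1, 0.9],
        [0.4, 0.3, 0.2],
    ]
    labels = [1, 0, 2, 1]
    print(accuracy_percent(outputs, labels))
```

In this toy example three of the four predictions match their labels, so the function returns 75.0.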