Deploying OpenPose-Based Human Pose Estimation: A Detailed Walkthrough


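This was the topic of my graduation project. I studied it for a long time and could not follow any of the tutorials online, and I finally got it working today after asking my advisor. The full process is described below.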

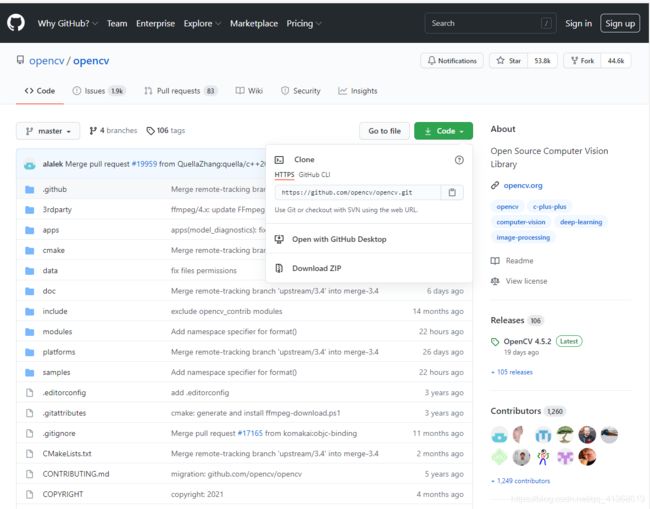

1. Downloading OpenCV

2. Downloading and installing CUDA and cuDNN

3. Downloading Python 3.6

4. Downloading the OpenPose files

5. Configuring the command-line arguments with Python

Downloading OpenCV

Download it from https://opencv.org/. Installing OpenCV is straightforward; this tutorial covers it: https://blog.csdn.net/qq_41277822/article/details/104018866

Downloading and installing CUDA and cuDNN

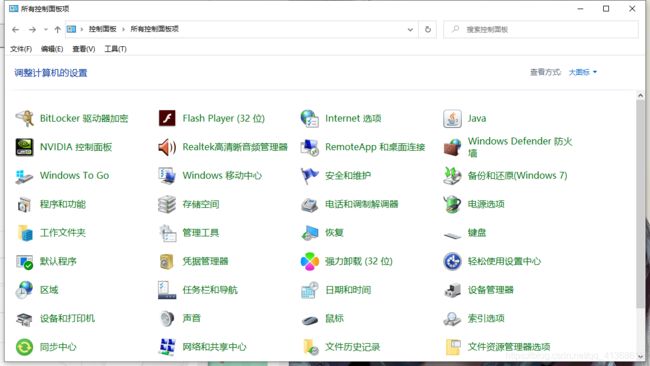

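First check which CUDA version your computer supports. Press Win+R, type control to open the Control Panel, and change the view mode to Large icons or Small icons.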

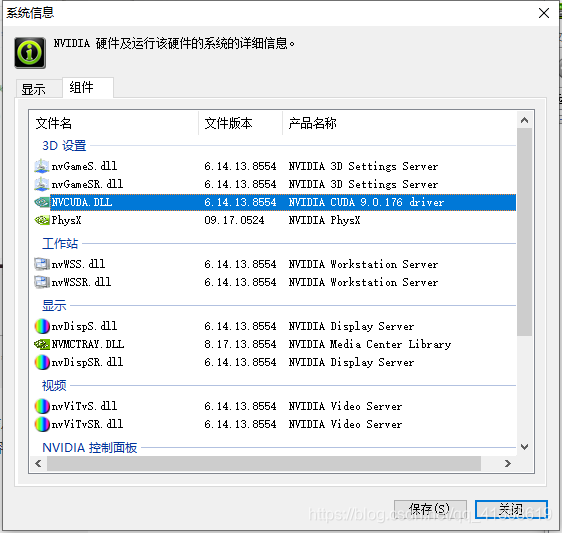

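Open the NVIDIA Control Panel, open the Help menu, choose System Information, and look at the Components tab for the version. On my machine the supported version is 9.0; check the number on yours. I read online that CUDA is backward compatible, but installing 8.0 did not work for me, and only 9.0 installed successfully. The CUDA download is also hosted abroad, the domestic mirrors are often unavailable, and even when a download starts the speed is painfully slow, so CUDA 9.0 and the matching cuDNN are attached at the end of this post.

Installing CUDA is also simple; search online if you get stuck.

CUDA download link: CUDA download page (the official site is not recommended because downloads are too slow)

cuDNN is an acceleration library for CUDA. After CUDA is installed, extract the files under the cuda folder of the cuDNN archive into C:\Program Files\NVIDIA GPU Computing Toolkit\CUDA\v9.0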
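Once CUDA is installed, the toolkit version can also be confirmed from a script by reading the output of `nvcc --version`. The sketch below is a minimal check, assuming `nvcc` is on PATH (the CUDA installer normally adds it). The helper names `parse_cuda_release` and `installed_cuda_release` are made up for this example.

```python
import re
import shutil
import subprocess


def parse_cuda_release(nvcc_output):
    """Return the release number (e.g. '9.0') found in `nvcc --version` output, or None."""
    match = re.search(r"release\s+(\d+\.\d+)", nvcc_output)
    return match.group(1) if match else None


def installed_cuda_release():
    """Run nvcc if it is on PATH and return its release string, or None if unavailable."""
    nvcc = shutil.which("nvcc")
    if nvcc is None:
        return None
    result = subprocess.run([nvcc, "--version"], stdout=subprocess.PIPE,
                            stderr=subprocess.STDOUT, universal_newlines=True)
    return parse_cuda_release(result.stdout)


if __name__ == "__main__":
    print("CUDA release:", installed_cuda_release())
```

If this prints `None`, either CUDA is not installed or its `bin` folder is not on PATH.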

Downloading Python 3.6

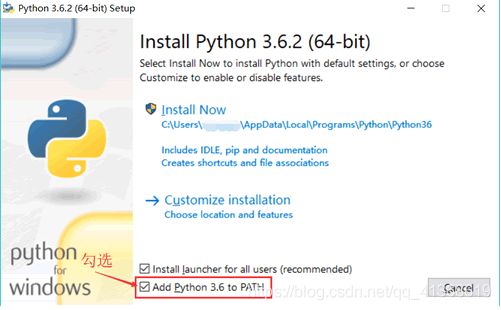

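The exact Python version does not matter, as long as it works.

Download link: Python download page

Installing Python is also very simple. The main thing is not to forget to tick the option that adds Python to PATH.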

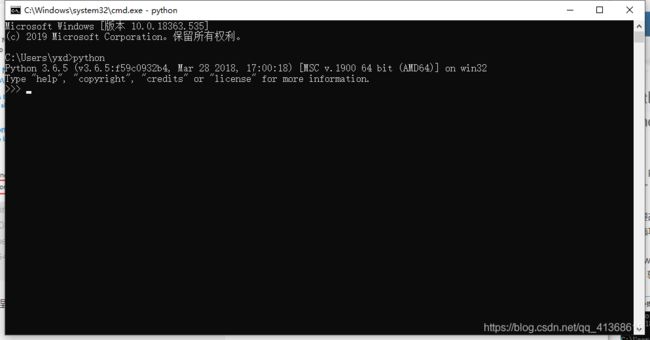

After installation, type python at the Windows command prompt to check that it installed correctly, as shown in the figure.
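The same check can be scripted. The sketch below prints the interpreter version and reports whether the two packages the sample imports later (`cv2` and `numpy`) can be found. `module_available` is a helper name invented for this example.

```python
import importlib.util
import sys


def module_available(name):
    """Return True if a top-level module with this name can be imported."""
    return importlib.util.find_spec(name) is not None


if __name__ == "__main__":
    print("Python", sys.version.split()[0])
    for name in ("cv2", "numpy"):
        status = "found" if module_available(name) else "missing"
        print(name, status)
```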

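Downloading the OpenPose files

This walkthrough uses openpose.py from the dnn samples in OpenCV.

OpenCV download: https://github.com/opencv/opencv

Download the zip archive. GitHub has often been unreachable lately, so the netdisk link at the end of this post includes it as well.

The sample still lacks the COCO model files (the .prototxt and .caffemodel trained on the COCO dataset), so these have to be downloaded too.

Download: https://github.com/CMU-Perceptual-Computing-Lab/openpose/tree/master/models/pose

This is where the COCO files live. I did not know how to download the COCO folder on its own, so I downloaded the whole openpose repository.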

Configuring the command-line arguments with Python

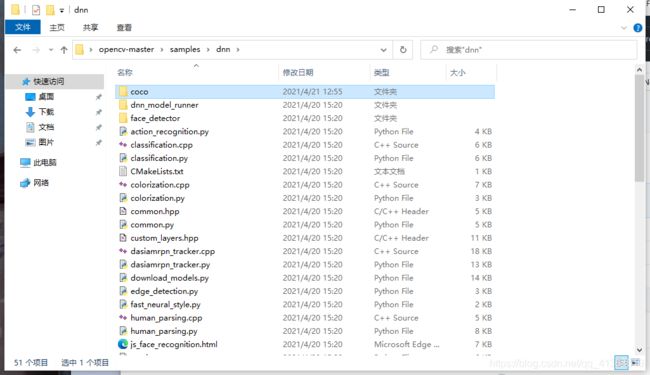

First put the downloaded COCO folder into the dnn directory, as shown in the figure.

Open openpose.py.
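Before running anything, it can help to confirm that both model files really are where the script will look for them. This is a minimal sketch, assuming the folder is named `coco` and holds the two file names used in the command further down this post. `missing_model_files` is a hypothetical helper.

```python
import os

# File names taken from the command shown later in this post.
REQUIRED_FILES = ("pose_deploy_linevec.prototxt", "pose_iter_440000.caffemodel")


def missing_model_files(dnn_dir, subfolder="coco"):
    """Return the required model files that are absent from dnn_dir/subfolder."""
    folder = os.path.join(dnn_dir, subfolder)
    return [name for name in REQUIRED_FILES
            if not os.path.isfile(os.path.join(folder, name))]


if __name__ == "__main__":
    missing = missing_model_files(".")
    print("All model files found" if not missing else "Missing: %s" % missing)
```

Run it from inside the dnn folder; an empty result means both files are in place.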

# To use Inference Engine backend, specify location of plugins:
# source /opt/intel/computer_vision_sdk/bin/setupvars.sh
import cv2 as cv
import numpy as np
import argparse

parser = argparse.ArgumentParser(
        description='This script is used to demonstrate OpenPose human pose estimation network '
                    'from https://github.com/CMU-Perceptual-Computing-Lab/openpose project using OpenCV. '
                    'The sample and model are simplified and could be used for a single person on the frame.')
parser.add_argument('--input', help='Path to image or video. Skip to capture frames from camera')
parser.add_argument('--proto', help='Path to .prototxt')
parser.add_argument('--model', help='Path to .caffemodel')
parser.add_argument('--dataset', help='Specify what kind of model was trained. '
                                      'It could be (COCO, MPI, HAND) depends on dataset.')
parser.add_argument('--thr', default=0.1, type=float, help='Threshold value for pose parts heat map')
parser.add_argument('--width', default=368, type=int, help='Resize input to specific width.')
parser.add_argument('--height', default=368, type=int, help='Resize input to specific height.')
parser.add_argument('--scale', default=0.003922, type=float, help='Scale for blob.')

args = parser.parse_args()
print(args.dataset)

if args.dataset == 'COCO':
    BODY_PARTS = { "Nose": 0, "Neck": 1, "RShoulder": 2, "RElbow": 3, "RWrist": 4,
                   "LShoulder": 5, "LElbow": 6, "LWrist": 7, "RHip": 8, "RKnee": 9,
                   "RAnkle": 10, "LHip": 11, "LKnee": 12, "LAnkle": 13, "REye": 14,
                   "LEye": 15, "REar": 16, "LEar": 17, "Background": 18 }

    POSE_PAIRS = [["Neck", "RShoulder"], ["Neck", "LShoulder"], ["RShoulder", "RElbow"],
                  ["RElbow", "RWrist"], ["LShoulder", "LElbow"], ["LElbow", "LWrist"],
                  ["Neck", "RHip"], ["RHip", "RKnee"], ["RKnee", "RAnkle"], ["Neck", "LHip"],
                  ["LHip", "LKnee"], ["LKnee", "LAnkle"], ["Neck", "Nose"], ["REye", "REar"],
                  ["Nose", "REye"], ["Nose", "LEye"], ["LEye", "LEar"]]
elif args.dataset == 'MPI':
    BODY_PARTS = { "Head": 0, "Neck": 1, "RShoulder": 2, "RElbow": 3, "RWrist": 4,
                   "LShoulder": 5, "LElbow": 6, "LWrist": 7, "RHip": 8, "RKnee": 9,
                   "RAnkle": 10, "LHip": 11, "LKnee": 12, "LAnkle": 13, "Chest": 14,
                   "Background": 15 }

    POSE_PAIRS = [ ["Head", "Neck"], ["Neck", "RShoulder"], ["RShoulder", "RElbow"],
                   ["RElbow", "RWrist"], ["Neck", "LShoulder"], ["LShoulder", "LElbow"],
                   ["LElbow", "LWrist"], ["Neck", "Chest"], ["Chest", "RHip"], ["RHip", "RKnee"],
                   ["RKnee", "RAnkle"], ["Chest", "LHip"], ["LHip", "LKnee"], ["LKnee", "LAnkle"] ]
else:
    assert(args.dataset == "HAND")
    BODY_PARTS = { "Wrist": 0,
                   "ThumbMetacarpal": 1, "ThumbProximal": 2, "ThumbMiddle": 3, "ThumbDistal": 4,
                   "IndexFingerMetacarpal": 5, "IndexFingerProximal": 6, "IndexFingerMiddle": 7, "IndexFingerDistal": 8,
                   "MiddleFingerMetacarpal": 9, "MiddleFingerProximal": 10, "MiddleFingerMiddle": 11, "MiddleFingerDistal": 12,
                   "RingFingerMetacarpal": 13, "RingFingerProximal": 14, "RingFingerMiddle": 15, "RingFingerDistal": 16,
                   "LittleFingerMetacarpal": 17, "LittleFingerProximal": 18, "LittleFingerMiddle": 19, "LittleFingerDistal": 20,
                 }

    POSE_PAIRS = [ ["Wrist", "ThumbMetacarpal"], ["ThumbMetacarpal", "ThumbProximal"],
                   ["ThumbProximal", "ThumbMiddle"], ["ThumbMiddle", "ThumbDistal"],
                   ["Wrist", "IndexFingerMetacarpal"], ["IndexFingerMetacarpal", "IndexFingerProximal"],
                   ["IndexFingerProximal", "IndexFingerMiddle"], ["IndexFingerMiddle", "IndexFingerDistal"],
                   ["Wrist", "MiddleFingerMetacarpal"], ["MiddleFingerMetacarpal", "MiddleFingerProximal"],
                   ["MiddleFingerProximal", "MiddleFingerMiddle"], ["MiddleFingerMiddle", "MiddleFingerDistal"],
                   ["Wrist", "RingFingerMetacarpal"], ["RingFingerMetacarpal", "RingFingerProximal"],
                   ["RingFingerProximal", "RingFingerMiddle"], ["RingFingerMiddle", "RingFingerDistal"],
                   ["Wrist", "LittleFingerMetacarpal"], ["LittleFingerMetacarpal", "LittleFingerProximal"],
                   ["LittleFingerProximal", "LittleFingerMiddle"], ["LittleFingerMiddle", "LittleFingerDistal"] ]

inWidth = args.width
inHeight = args.height
inScale = args.scale

net = cv.dnn.readNet(cv.samples.findFile(args.proto), cv.samples.findFile(args.model))

cap = cv.VideoCapture(args.input if args.input else 0)  # open the video file, or the camera if no input is given

# Process frames until any key is pressed or the video ends.
while cv.waitKey(1) < 0:
    hasFrame, frame = cap.read()
    if not hasFrame:
        cv.waitKey()
        break

    frameWidth = frame.shape[1]
    frameHeight = frame.shape[0]
    inp = cv.dnn.blobFromImage(frame, inScale, (inWidth, inHeight),
                               (0, 0, 0), swapRB=False, crop=False)
    net.setInput(inp)
    out = net.forward()

    assert(len(BODY_PARTS) <= out.shape[1])

    points = []
    for i in range(len(BODY_PARTS)):
        # Slice heatmap of corresponding body's part.
        heatMap = out[0, i, :, :]

        # Originally, we try to find all the local maximums. To simplify a sample
        # we just find a global one. However only a single pose at the same time
        # could be detected this way.
        _, conf, _, point = cv.minMaxLoc(heatMap)
        x = (frameWidth * point[0]) / out.shape[3]
        y = (frameHeight * point[1]) / out.shape[2]

        # Add a point if its confidence is higher than threshold.
        points.append((int(x), int(y)) if conf > args.thr else None)

    for pair in POSE_PAIRS:
        partFrom = pair[0]
        partTo = pair[1]
        assert(partFrom in BODY_PARTS)
        assert(partTo in BODY_PARTS)

        idFrom = BODY_PARTS[partFrom]
        idTo = BODY_PARTS[partTo]

        if points[idFrom] and points[idTo]:
            cv.line(frame, points[idFrom], points[idTo], (0, 255, 0), 3)
            cv.ellipse(frame, points[idFrom], (3, 3), 0, 0, 360, (0, 0, 255), cv.FILLED)
            cv.ellipse(frame, points[idTo], (3, 3), 0, 0, 360, (0, 0, 255), cv.FILLED)

    t, _ = net.getPerfProfile()
    freq = cv.getTickFrequency() / 1000
    cv.putText(frame, '%.2fms' % (t / freq), (10, 20), cv.FONT_HERSHEY_SIMPLEX, 0.5, (0, 0, 0))

    cv.imshow('OpenPose using OpenCV', frame)

# Keep the last frame on screen until a key is pressed.
cv.waitKey(0)
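The keypoint step inside the loop is the part that is easiest to misread: for each body part the script takes the single strongest location in that part's heatmap, then rescales it from heatmap coordinates to frame pixels. The sketch below reproduces that arithmetic in plain Python on a made-up 4x3 heatmap. `heatmap_peak` is a stand-in for `cv.minMaxLoc`, and both function names are invented for this illustration.

```python
def heatmap_peak(heatmap):
    """Return (confidence, (col, row)) of the largest value in a 2-D heatmap."""
    best_conf, best_point = float("-inf"), (0, 0)
    for row_idx, row in enumerate(heatmap):
        for col_idx, value in enumerate(row):
            if value > best_conf:
                best_conf, best_point = value, (col_idx, row_idx)
    return best_conf, best_point


def to_frame_coords(point, heatmap_size, frame_size, conf, threshold=0.1):
    """Scale a heatmap (col, row) to frame pixels, or return None below the threshold."""
    if conf <= threshold:
        return None
    x = frame_size[0] * point[0] / heatmap_size[0]
    y = frame_size[1] * point[1] / heatmap_size[1]
    return int(x), int(y)


# Toy heatmap: 4 columns wide, 3 rows high, with its peak at column 2, row 1.
heatmap = [[0.0, 0.1, 0.0, 0.0],
           [0.0, 0.2, 0.9, 0.0],
           [0.0, 0.0, 0.1, 0.0]]
conf, point = heatmap_peak(heatmap)
print(to_frame_coords(point, (4, 3), (640, 480), conf))  # (320, 160)
```

Because only the global maximum is kept, two people in the frame would compete for the same keypoint, which is why the sample is limited to a single person.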

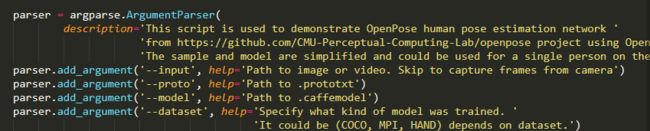

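The --input, --proto, --model and --dataset arguments (lines 11 to 14 of the script) are the ones you need to supply.

input is the path to the video; beforehand, download a reasonably clear video of a single person and put it in the dnn folder. proto is the path to the prototxt file and model is the path to the caffemodel file; both files are in the COCO folder. dataset selects the dataset, here COCO.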

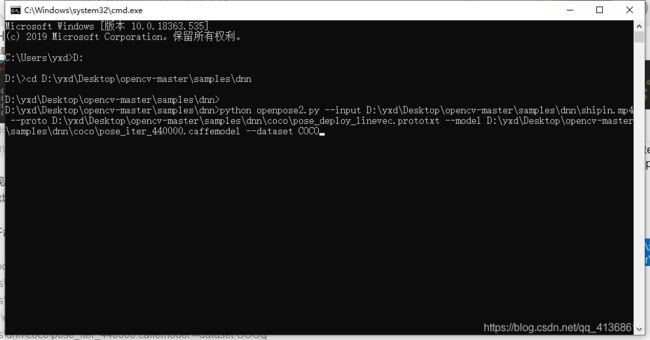

To run it, open a command prompt, change to the dnn folder, and enter the following:

python openpose2.py --input D:\yxd\Desktop\opencv-master\samples\dnn\shipin.mp4 --proto D:\yxd\Desktop\opencv-master\samples\dnn\coco\pose_deploy_linevec.prototxt --model D:\yxd\Desktop\opencv-master\samples\dnn\coco\pose_iter_440000.caffemodel --dataset COCO

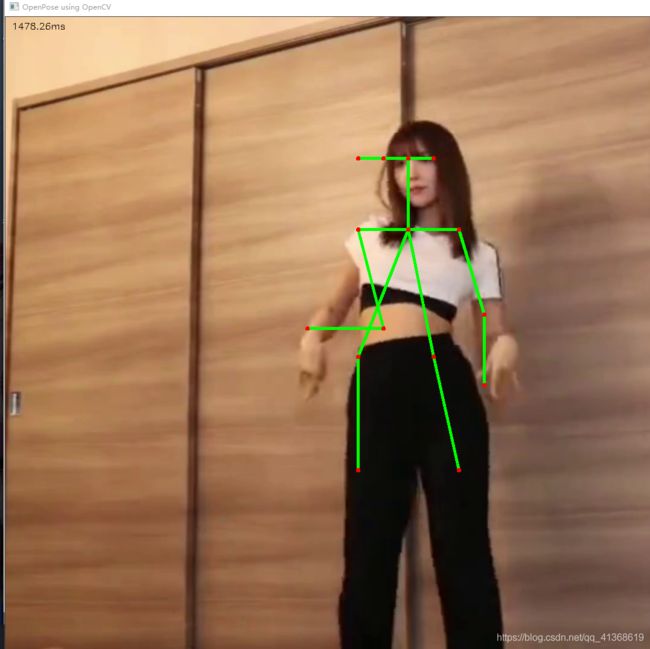

Note that the file paths must be replaced with the locations on your own computer. The result is shown in the figure.
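Since all three paths live under the same dnn folder, the command can be assembled from that one folder instead of typing each absolute path. This is a sketch under the assumption that the layout matches the command above (the `coco` subfolder, `openpose2.py`, and a video named `shipin.mp4`). `build_command` is a hypothetical helper.

```python
import os
import sys


def build_command(dnn_dir, video_name, script="openpose2.py", dataset="COCO"):
    """Assemble the argument list for the video script from one base folder."""
    coco_dir = os.path.join(dnn_dir, "coco")
    return [
        sys.executable, os.path.join(dnn_dir, script),
        "--input", os.path.join(dnn_dir, video_name),
        "--proto", os.path.join(coco_dir, "pose_deploy_linevec.prototxt"),
        "--model", os.path.join(coco_dir, "pose_iter_440000.caffemodel"),
        "--dataset", dataset,
    ]


if __name__ == "__main__":
    # The default folder is a placeholder; pass your own dnn folder as the first argument.
    base = sys.argv[1] if len(sys.argv) > 1 else "."
    print(" ".join(build_command(base, "shipin.mp4")))
```

The returned list can be passed directly to `subprocess.run`, which avoids quoting problems when a path contains spaces.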

My computer is not very powerful, so the video stutters so badly that it looks like a slideshow.
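If the stutter comes from running the network on every frame, one possible mitigation (not something the scripts in this post do) is to run inference only on every Nth frame. The generator below is a minimal sketch of that idea; `every_nth` is an invented name, and the `range(10)` input merely stands in for decoded frames.

```python
def every_nth(frames, step):
    """Yield every step-th item of an iterable, starting with the first."""
    if step < 1:
        raise ValueError("step must be at least 1")
    for index, frame in enumerate(frames):
        if index % step == 0:
            yield frame


# Stand-in for decoded frames; in the script these would come from cap.read().
print(list(every_nth(range(10), 3)))  # [0, 3, 6, 9]
```

Lowering `--width` and `--height` is another knob the script already exposes, at the cost of less precise keypoints.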

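Extracting a skeleton from a still image is not much different from the video case, except that input must point to the image file.

The code is as follows: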

# To use Inference Engine backend, specify location of plugins:
# source /opt/intel/computer_vision_sdk/bin/setupvars.sh
import cv2 as cv
import numpy as np
import argparse

parser = argparse.ArgumentParser(
        description='This script is used to demonstrate OpenPose human pose estimation network '
                    'from https://github.com/CMU-Perceptual-Computing-Lab/openpose project using OpenCV. '
                    'The sample and model are simplified and could be used for a single person on the frame.')
parser.add_argument('--input', help='Path to image or video. Skip to capture frames from camera')
parser.add_argument('--proto', help='Path to .prototxt')
parser.add_argument('--model', help='Path to .caffemodel')
parser.add_argument('--dataset', help='Specify what kind of model was trained. '
                                      'It could be (COCO, MPI, HAND) depends on dataset.')
parser.add_argument('--thr', default=0.1, type=float, help='Threshold value for pose parts heat map')
parser.add_argument('--width', default=368, type=int, help='Resize input to specific width.')
parser.add_argument('--height', default=368, type=int, help='Resize input to specific height.')
parser.add_argument('--scale', default=0.003922, type=float, help='Scale for blob.')

args = parser.parse_args()
print('args::', args)
print(args.dataset)

if args.dataset == 'COCO':
    BODY_PARTS = { "Nose": 0, "Neck": 1, "RShoulder": 2, "RElbow": 3, "RWrist": 4,
                   "LShoulder": 5, "LElbow": 6, "LWrist": 7, "RHip": 8, "RKnee": 9,
                   "RAnkle": 10, "LHip": 11, "LKnee": 12, "LAnkle": 13, "REye": 14,
                   "LEye": 15, "REar": 16, "LEar": 17, "Background": 18 }

    POSE_PAIRS = [["Neck", "RShoulder"], ["Neck", "LShoulder"], ["RShoulder", "RElbow"],
                  ["RElbow", "RWrist"], ["LShoulder", "LElbow"], ["LElbow", "LWrist"],
                  ["Neck", "RHip"], ["RHip", "RKnee"], ["RKnee", "RAnkle"], ["Neck", "LHip"],
                  ["LHip", "LKnee"], ["LKnee", "LAnkle"], ["Neck", "Nose"], ["REye", "REar"],
                  ["Nose", "REye"], ["Nose", "LEye"], ["LEye", "LEar"]]
elif args.dataset == 'MPI':
    BODY_PARTS = { "Head": 0, "Neck": 1, "RShoulder": 2, "RElbow": 3, "RWrist": 4,
                   "LShoulder": 5, "LElbow": 6, "LWrist": 7, "RHip": 8, "RKnee": 9,
                   "RAnkle": 10, "LHip": 11, "LKnee": 12, "LAnkle": 13, "Chest": 14,
                   "Background": 15 }

    POSE_PAIRS = [ ["Head", "Neck"], ["Neck", "RShoulder"], ["RShoulder", "RElbow"],
                   ["RElbow", "RWrist"], ["Neck", "LShoulder"], ["LShoulder", "LElbow"],
                   ["LElbow", "LWrist"], ["Neck", "Chest"], ["Chest", "RHip"], ["RHip", "RKnee"],
                   ["RKnee", "RAnkle"], ["Chest", "LHip"], ["LHip", "LKnee"], ["LKnee", "LAnkle"] ]
else:
    assert(args.dataset == "HAND")
    BODY_PARTS = { "Wrist": 0,
                   "ThumbMetacarpal": 1, "ThumbProximal": 2, "ThumbMiddle": 3, "ThumbDistal": 4,
                   "IndexFingerMetacarpal": 5, "IndexFingerProximal": 6, "IndexFingerMiddle": 7, "IndexFingerDistal": 8,
                   "MiddleFingerMetacarpal": 9, "MiddleFingerProximal": 10, "MiddleFingerMiddle": 11, "MiddleFingerDistal": 12,
                   "RingFingerMetacarpal": 13, "RingFingerProximal": 14, "RingFingerMiddle": 15, "RingFingerDistal": 16,
                   "LittleFingerMetacarpal": 17, "LittleFingerProximal": 18, "LittleFingerMiddle": 19, "LittleFingerDistal": 20,
                 }

    POSE_PAIRS = [ ["Wrist", "ThumbMetacarpal"], ["ThumbMetacarpal", "ThumbProximal"],
                   ["ThumbProximal", "ThumbMiddle"], ["ThumbMiddle", "ThumbDistal"],
                   ["Wrist", "IndexFingerMetacarpal"], ["IndexFingerMetacarpal", "IndexFingerProximal"],
                   ["IndexFingerProximal", "IndexFingerMiddle"], ["IndexFingerMiddle", "IndexFingerDistal"],
                   ["Wrist", "MiddleFingerMetacarpal"], ["MiddleFingerMetacarpal", "MiddleFingerProximal"],
                   ["MiddleFingerProximal", "MiddleFingerMiddle"], ["MiddleFingerMiddle", "MiddleFingerDistal"],
                   ["Wrist", "RingFingerMetacarpal"], ["RingFingerMetacarpal", "RingFingerProximal"],
                   ["RingFingerProximal", "RingFingerMiddle"], ["RingFingerMiddle", "RingFingerDistal"],
                   ["Wrist", "LittleFingerMetacarpal"], ["LittleFingerMetacarpal", "LittleFingerProximal"],
                   ["LittleFingerProximal", "LittleFingerMiddle"], ["LittleFingerMiddle", "LittleFingerDistal"] ]

inWidth = args.width
inHeight = args.height
inScale = args.scale

net = cv.dnn.readNet(cv.samples.findFile(args.proto), cv.samples.findFile(args.model))  # load the model

frame = cv.imread(args.input)  # read the image
if frame is None:
    # cv.imread returns None instead of raising when the file cannot be read.
    raise SystemExit('Could not read image: %s' % args.input)

frameWidth = frame.shape[1]  # shape holds the array dimensions (rows, columns, channels)
frameHeight = frame.shape[0]

# Preprocess the image: scale the pixel values and resize to the network input size (no cropping).
inp = cv.dnn.blobFromImage(frame, inScale, (inWidth, inHeight),
                           (0, 0, 0), swapRB=False, crop=False)
net.setInput(inp)
out = net.forward()

assert(len(BODY_PARTS) <= out.shape[1])

points = []
for i in range(len(BODY_PARTS)):
    # Slice heatmap of corresponding body's part.
    heatMap = out[0, i, :, :]

    # Originally, we try to find all the local maximums. To simplify a sample
    # we just find a global one. However only a single pose at the same time
    # could be detected this way.
    _, conf, _, point = cv.minMaxLoc(heatMap)
    x = (frameWidth * point[0]) / out.shape[3]
    y = (frameHeight * point[1]) / out.shape[2]

    # Add a point if its confidence is higher than threshold.
    points.append((int(x), int(y)) if conf > args.thr else None)

print(points)

for pair in POSE_PAIRS:
    partFrom = pair[0]
    partTo = pair[1]
    assert(partFrom in BODY_PARTS)
    assert(partTo in BODY_PARTS)

    idFrom = BODY_PARTS[partFrom]
    idTo = BODY_PARTS[partTo]

    if points[idFrom] and points[idTo]:
        cv.line(frame, points[idFrom], points[idTo], (0, 255, 0), 3)
        cv.ellipse(frame, points[idFrom], (3, 3), 0, 0, 360, (0, 0, 255), cv.FILLED)
        cv.ellipse(frame, points[idTo], (3, 3), 0, 0, 360, (0, 0, 255), cv.FILLED)

t, _ = net.getPerfProfile()
freq = cv.getTickFrequency() / 1000
cv.putText(frame, '%.2fms' % (t / freq), (10, 20), cv.FONT_HERSHEY_SIMPLEX, 0.5, (0, 0, 0))

cv.imshow('OpenPose using OpenCV', frame)
cv.waitKey(0)  # keep the window open until a key is pressed
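The two scripts differ only in how the input is read, so a small wrapper could pick between them by file extension. The sketch below shows one way to make that decision; the extension set is an illustrative choice rather than a complete list, and `is_image_path` is a hypothetical helper.

```python
import os

# Extensions are an illustrative choice, not an exhaustive list.
IMAGE_EXTENSIONS = {".jpg", ".jpeg", ".png", ".bmp"}


def is_image_path(path):
    """Return True when the file extension suggests a still image."""
    return os.path.splitext(path)[1].lower() in IMAGE_EXTENSIONS


for name in ("person.JPG", "shipin.mp4"):
    print(name, "->", "image script" if is_image_path(name) else "video script")
```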

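Resource downloads

Everything needed for this project is stored on a netdisk; help yourself.

Link: https://pan.baidu.com/s/16AQlgMpg1WHlyIo2_NbYeA

Extraction code: 90tx

The opencv-master folder in there already has the COCO folder in place, along with two sample images and a video. openpose.py handles images and openpose2.py handles video.

This is my first time writing a post like this, so it may contain mistakes; please bear with me.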