Machine Learning with Sklearn in Practice: Hand-Written Linear Regression

Hand-written linear regression

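import numpy as np
import matplotlib.pyplot as plt
from sklearn.linear_model import LinearRegression

X = np.linspace(2, 10, 20).reshape(-1, 1)

# Target: f(x) = w*x + b, with a random integer slope and intercept
y = np.random.randint(1, 6, size=1)*X + np.random.randint(-5, 5, size=1)

# Add Gaussian noise
y += np.random.randn(20, 1)*0.8

plt.scatter(X, y, color="red")

# Fit sklearn's LinearRegression to get a reference slope and intercept
lr = LinearRegression()
lr.fit(X, y)

w = lr.coef_[0, 0]
b = lr.intercept_[0]
print(w, b)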

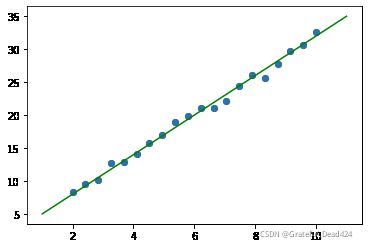

plt.scatter(X, y)
x = np.linspace(1, 11, 50)
plt.plot(x, w*x + b, color="green")

Result:

2.995391527138711 1.9801931425932864

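The gradients computed in the loss method of the class that follows can be written out explicitly. This is a sketch of the derivation for the mean squared error over n samples; the symbols n (number of samples) and \eta (learning rate) are notation assumed here, not names used in the post.

```latex
\begin{align*}
L(w, b) &= \frac{1}{n} \sum_{i=1}^{n} \bigl(y_i - (w x_i + b)\bigr)^2 \\
\frac{\partial L}{\partial w} &= \frac{1}{n} \sum_{i=1}^{n} 2 \bigl(y_i - (w x_i + b)\bigr)(-x_i) \\
\frac{\partial L}{\partial b} &= \frac{1}{n} \sum_{i=1}^{n} 2 \bigl(y_i - (w x_i + b)\bigr)(-1) \\
w &\leftarrow w - \eta \, \frac{\partial L}{\partial w}, \qquad
b \leftarrow b - \eta \, \frac{\partial L}{\partial b}
\end{align*}
```

Each summand matches the per-sample gradient_w and gradient_b in the code, and the division by n corresponds to averaging over the samples inside fit.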

# Use gradient descent to solve the one-variable linear problem: find w and b
class LinearModel(object):
    def __init__(self):
        # Start from a random slope and intercept
        self.w = np.random.randn(1)[0]
        self.b = np.random.randn(1)[0]

    # Mathematical model: express the relation between the data X and the target as a formula
    def model(self, x):  # f(x) = w*x + b
        return self.w*x + self.b

    def loss(self, x, y):  # least squares, for a single sample
        cost = (y - self.model(x))**2
        # The gradient is the pair of partial derivatives with respect to the two unknowns w and b
        gradient_w = 2*(y - self.model(x))*(-x)
        gradient_b = 2*(y - self.model(x))*(-1)
        return cost, gradient_w, gradient_b

    # Gradient descent
    def gradient_descent(self, gradient_w, gradient_b, learning_rate=0.1):
        # Update w and b
        self.w -= gradient_w*learning_rate
        self.b -= gradient_b*learning_rate

    # Training
    def fit(self, X, y):
        count = 0  # number of updates so far; stop once it exceeds 3000
        tol = 0.0001
        last_w = self.w + 0.1
        last_b = self.b + 0.1
        length = len(X)
        while True:
            if count > 3000:  # iteration limit reached
                break
            # Slope and intercept both changed by less than tol in the last step
            if (abs(last_w - self.w) < tol) and (abs(last_b - self.b) < tol):
                break
            cost = 0
            gradient_w = 0
            gradient_b = 0
            # Average the cost and the gradients over all samples
            for i in range(length):
                cost_, gradient_w_, gradient_b_ = self.loss(X[i, 0], y[i, 0])
                cost += cost_/length
                gradient_w += gradient_w_/length
                gradient_b += gradient_b_/length
            print('---------------------Iteration: %d. Loss: %0.2f' % (count, cost))
            last_w = self.w
            last_b = self.b
            # Update the slope and intercept
            self.gradient_descent(gradient_w, gradient_b, 0.01)
            count += 1

    def result(self):
        return self.w, self.b

lm = LinearModel()
lm.fit(X, y)
lm.result()

Result:

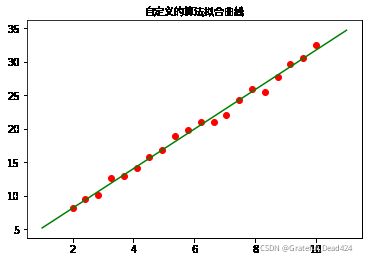

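(2.9489680632625297, 2.2698211503362224)

plt.scatter(X, y, color="red")
plt.plot(x, 2.94896*x + 2.2698211, color="green")
# Font with CJK glyphs; only needed when the title contains Chinese characters
plt.rcParams['font.sans-serif'] = ['Arial Unicode MS']
plt.title("Curve fitted by the custom algorithm")

Fitting a two-variable linear model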

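import numpy as np
import matplotlib.pyplot as plt
from sklearn.linear_model import LinearRegression

# f(x) = w1*x**2 + w2*x + b
# one variable, second degree (quadratic)

# f(x1, x2) = w1*x1 + w2*x2 + b
# two variables, first degree (linear)
# Setting x1 = x**2 and x2 = x turns the quadratic into a two-variable linear model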

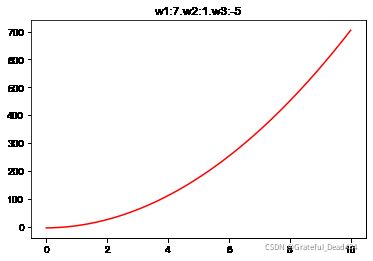

X = np.linspace(0, 10, num=50).reshape(-1, 1)
# Two feature columns: x**2 and x
X = np.concatenate([X**2, X], axis=1)
X.shape

w = np.random.randint(1, 10, size=2)
b = np.random.randint(-5, 5, size=1)
# Matrix multiplication
y = X.dot(w) + b
plt.plot(X[:, 1], y, c="r")
plt.title("w1:%d.w2:%d.w3:%d" % (w[0], w[1], b[0]))

Result:

Text(0.5, 1.0, 'w1:7.w2:1.w3:-5')

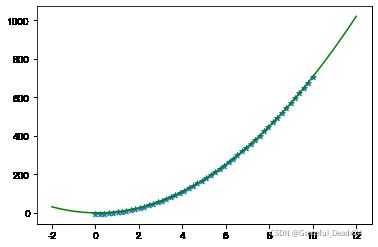
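Because this data is noise-free, the coefficients can also be recovered in closed form, which gives a useful reference when checking an iterative fit. The following is a minimal sketch using `np.linalg.lstsq`; the fixed values 7, 1, and -5 are assumed for illustration (they match the run shown above, but the post draws them at random), and the variable names are hypothetical.

```python
import numpy as np

x = np.linspace(0, 10, num=50)

# Design matrix with columns x**2, x, and a constant 1 for the intercept
A = np.column_stack([x**2, x, np.ones_like(x)])

# Illustrative coefficients (assumed here; the post draws them at random)
true_w1, true_w2, true_b = 7.0, 1.0, -5.0
y = true_w1*x**2 + true_w2*x + true_b

# Least squares solution of A @ params = y
params, residuals, rank, singular_values = np.linalg.lstsq(A, y, rcond=None)
w1, w2, b = params
print(w1, w2, b)
```

Appending a column of ones lets the intercept be solved together with the two coefficients, so no separate handling of b is needed.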

# Fit with sklearn's built-in algorithm
from sklearn.linear_model import LinearRegression
lr = LinearRegression()
lr.fit(X, y)
print(lr.coef_, lr.intercept_)
plt.scatter(X[:, 1], y, marker="*")
x = np.linspace(-2, 12, 100)
plt.plot(x, 7*x**2 + 1*x + -4.99, c="g")

Result:

[7. 1.] -4.999999999999972

Hand-written linear regression for multiple features (a multivariate equation)

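# epoch: number of training iterations, i.e. how many gradient descent steps to run
# lr: list of learning rates, one per coefficient; lr[0] is also used for the intercept
def gradient_descent(X, y, lr, epoch, w, b):
    # Batch size: every sample is used in each step
    batch = len(X)
    for i in range(epoch):
        # d_loss: gradient of the loss with respect to the prediction
        d_loss = 0
        # Gradient for the coefficients (slopes)
        dw = [0 for _ in range(len(w))]
        # Gradient for the intercept
        db = 0
        for j in range(batch):
            y_ = 0  # prediction: y_ = f(x) = w1*x1 + w2*x2 + b
            for n in range(len(w)):
                y_ += X[j][n]*w[n]
            y_ += b
            # d/dy_ of (y - y_)**2 is 2*(y - y_)*(-1)
            # d/dy_ of (y_ - y)**2 is 2*(y_ - y)*(1)
            # The constant factor 2 is dropped; it only rescales the learning rate
            d_loss = -(y[j] - y_)
            for n in range(len(w)):
                dw[n] += X[j][n]*d_loss/float(batch)
            db += 1*d_loss/float(batch)
        # Gradient descent step: update the coefficients and the intercept
        for n in range(len(w)):
            w[n] -= dw[n]*lr[n]
        b -= db*lr[0]
    return w, b

lr = [0.0001, 0.001]
w = np.random.randn(2)
b = np.random.randn(1)[0]
gradient_descent(X, y, lr, 500, w, b)

Result: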

(array([ 7.18689265, -1.25846592]), 0.6693960269813103)

plt.scatter(X[:, 1], y, marker="*")
plt.plot(x, 7.1868*x**2 - 1.2584*x + 0.6694, c="g")

Further optimization

- Increase num for x from 50 to 500

- Reduce the learning rate
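One way to try these two suggestions is sketched below, under stated assumptions: the function name `gradient_descent_vectorized` is hypothetical, it uses a single learning rate instead of the per-coefficient list in the post, and the coefficients 7, 1, and -5 are fixed for illustration. It replaces the Python loops with NumPy matrix operations and records the loss at every step so the effect of the settings can be inspected. It does not claim that the fit reaches the true coefficients in the given number of steps.

```python
import numpy as np

def gradient_descent_vectorized(X, y, lr, epochs, w, b):
    """Batch gradient descent for y = X @ w + b (hypothetical helper)."""
    n = len(X)
    w = np.asarray(w, dtype=float).copy()  # do not modify the caller's array
    b = float(b)
    losses = []
    for _ in range(epochs):
        residual = X @ w + b - y           # prediction minus target, shape (n,)
        losses.append(float(np.mean(residual**2)))
        dw = X.T @ residual / n            # gradient for the coefficients (factor 2 dropped)
        db = residual.mean()               # gradient for the intercept
        w -= lr*dw
        b -= lr*db
    return w, b, losses

# 500 samples instead of 50, and one small learning rate (both assumed values)
x = np.linspace(0, 10, num=500)
X = np.column_stack([x**2, x])
y = X @ np.array([7.0, 1.0]) - 5.0

rng = np.random.default_rng(0)
w, b, losses = gradient_descent_vectorized(
    X, y, lr=1e-4, epochs=2000, w=rng.standard_normal(2), b=0.0)
print(w, b, losses[0], losses[-1])
```

Comparing `losses[0]` with `losses[-1]` shows whether a given learning rate is making progress; because the x**2 column is much larger in scale than the x column, the intercept and the second coefficient can be expected to move slowly.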