PyTorch notes: building a simple CNN

For the theory behind CNNs, see 机器学习笔记:CNN卷积神经网络 (Machine Learning Notes: CNN Convolutional Neural Networks) on 刘文巾's CSDN blog.

1 Import libraries

import torch
import torch.nn as nn
import torch.utils.data as Data
import torchvision
import matplotlib.pyplot as plt

2 Hyperparameters

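EPOCH=1
# number of passes over the whole training set
BATCH_SIZE=50
LR=0.001
# learning rate
DOWNLOAD_MNIST=False
# Set DOWNLOAD_MNIST to False if the MNIST data has already been downloaded, otherwise set it to True

3 Load the data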

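train_data=torchvision.datasets.MNIST(
    root='./mnist/',
    # look for the MNIST data in this directory, or download it here
    train=True,
    # load the training split (60,000 images); train=False loads the 10,000-image test split.
    # Dropout behaviour is controlled by model.train()/model.eval(), not by this flag.
    transform=torchvision.transforms.ToTensor(),
    # convert each image to a float Tensor of shape (1, 28, 28) with pixel values scaled to [0, 1]
    download=DOWNLOAD_MNIST)

4 Dataset information and visualization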
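The transform passed to MNIST is only applied when a sample is fetched by indexing, e.g. train_data[0]; the train_data.data attribute inspected below still holds the raw uint8 pixels (0-255). As a rough, dependency-free sketch of what ToTensor() does to one grayscale image (the helper name to_tensor_like is hypothetical, and nested lists stand in for tensors):

```python
def to_tensor_like(image):
    """Mimic ToTensor() on a grayscale image given as rows of ints in 0..255.

    Returns a nested list shaped [1][height][width] (channel first) with
    every pixel divided by 255, so all values lie in [0.0, 1.0].
    """
    return [[[pixel / 255.0 for pixel in row] for row in image]]


# A fake 28x28 image: all black except one white pixel in the middle.
image = [[0] * 28 for _ in range(28)]
image[14][14] = 255

tensor_like = to_tensor_like(image)
print(len(tensor_like), len(tensor_like[0]), len(tensor_like[0][0]))  # 1 28 28
print(tensor_like[0][14][14])  # 1.0
```

The extra leading dimension is the channel axis, which is why the model's first layer uses in_channels=1.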

print(train_data.data.shape)
# torch.Size([60000, 28, 28])
# 60,000 images, each 28*28 pixels
print(train_data.targets.shape)
# torch.Size([60000])
# labels of the training set: one per image, giving the digit (0-9) the image shows

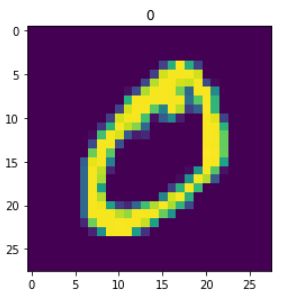

# visualize one sample, with its label as the title
plt.imshow(train_data.data[1])
plt.title("{}".format(train_data.targets[1].item()))

5 Creating the DataLoader
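With shuffle=True, the DataLoader created below draws a fresh random order of the samples each time it is iterated (i.e. every epoch) and cuts that order into consecutive batches of BATCH_SIZE; with the default drop_last=False, a shorter final batch is kept when the dataset size is not a multiple of the batch size. A minimal pure-Python sketch of that index logic (the function shuffled_batches is hypothetical, not part of torch.utils.data):

```python
import random


def shuffled_batches(num_samples, batch_size, rng):
    """Yield lists of sample indices, one list per batch, in a fresh random order."""
    indices = list(range(num_samples))
    rng.shuffle(indices)  # new permutation on every call, i.e. every epoch
    for start in range(0, num_samples, batch_size):
        # the last slice may be shorter than batch_size (drop_last=False behaviour)
        yield indices[start:start + batch_size]


rng = random.Random(0)
batches = list(shuffled_batches(60000, 50, rng))
print(len(batches))  # 1200 batches per epoch for the 60,000 training images
```

Each batch of indices is then turned into a stacked image tensor and a label tensor, which is what the training loop receives as (b_x, b_y).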

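# create the DataLoader
train_loader=Data.DataLoader(
    dataset=train_data,
    batch_size=BATCH_SIZE,
    shuffle=True
)
# each iteration yields images of shape (50, 1, 28, 28) and labels of shape (50,)

6 Defining the CNN model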
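The shape comments in the model below follow the usual output-size rule for convolution and pooling layers (dilation 1): out = floor((in + 2*padding - kernel_size) / stride) + 1, where nn.MaxPool2d(kernel_size=2) uses stride 2 and padding 0 by default. A small sketch (the helper name conv_output_size is hypothetical) that walks a 28x28 input through the two conv blocks:

```python
def conv_output_size(size, kernel_size, stride=1, padding=0):
    """Spatial output size of a Conv2d/MaxPool2d layer (dilation=1, ceil_mode=False)."""
    return (size + 2 * padding - kernel_size) // stride + 1


size = 28
size = conv_output_size(size, kernel_size=5, stride=1, padding=2)  # conv1 -> 28
size = conv_output_size(size, kernel_size=2, stride=2)             # pool1 -> 14
size = conv_output_size(size, kernel_size=5, stride=1, padding=2)  # conv2 -> 14
size = conv_output_size(size, kernel_size=2, stride=2)             # pool2 -> 7
print(size, 32 * size * size)  # 7 1568, the in_features of self.fc

# "Same" padding check: with stride=1 and an odd kernel, padding=(k-1)//2 keeps the size.
for k in (3, 5, 7):
    assert conv_output_size(28, k, stride=1, padding=(k - 1) // 2) == 28
```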

class CNN(nn.Module):
    def __init__(self):
        super(CNN,self).__init__()
        self.conv1=nn.Sequential(
            nn.Conv2d(
                in_channels=1,
                # input shape (1,28,28)
                out_channels=16,
                # output shape (16,28,28); 16 is also the number of convolution kernels (filters)
                kernel_size=5,
                stride=1,
                padding=2),
                # to keep the height and width unchanged with stride=1 and an odd kernel_size,
                # use padding=(kernel_size-1)//2
            nn.ReLU(),
            nn.MaxPool2d(kernel_size=2)
            # downsample over 2*2 windows; output shape (16,14,14)
        )
        self.conv2=nn.Sequential(
            nn.Conv2d(
                in_channels=16,
                # input shape (16,14,14)
                out_channels=32,
                # output shape (32,14,14)
                kernel_size=5,
                stride=1,
                padding=2),
            nn.ReLU(),
            nn.MaxPool2d(kernel_size=2)
            # output shape (32,7,7)
        )
        self.fc=nn.Linear(32*7*7,10)
        # outputs 10 values (logits), one score for each digit class 0-9

    def forward(self,x):
        x=self.conv1(x)
        x=self.conv2(x)
        x=x.view(x.shape[0],-1)
        # flatten (batch, 32, 7, 7) into (batch, 32*7*7)
        x=self.fc(x)
        return x

cnn=CNN()
print(cnn)
'''
CNN(
  (conv1): Sequential(
    (0): Conv2d(1, 16, kernel_size=(5, 5), stride=(1, 1), padding=(2, 2))
    (1): ReLU()
    (2): MaxPool2d(kernel_size=2, stride=2, padding=0, dilation=1, ceil_mode=False)
  )
  (conv2): Sequential(
    (0): Conv2d(16, 32, kernel_size=(5, 5), stride=(1, 1), padding=(2, 2))
    (1): ReLU()
    (2): MaxPool2d(kernel_size=2, stride=2, padding=0, dilation=1, ceil_mode=False)
  )
  (fc): Linear(in_features=1568, out_features=10, bias=True)
)
'''
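As a cross-check on the printed structure, the number of trainable parameters follows directly from the layer sizes: a Conv2d with bias has in_channels*out_channels*k*k weights plus out_channels biases, and a Linear layer has in_features*out_features weights plus out_features biases. A quick arithmetic sketch (helper names are hypothetical); in PyTorch the same total should come from sum(p.numel() for p in cnn.parameters()):

```python
def conv2d_params(in_channels, out_channels, kernel_size):
    """Weights plus biases of an nn.Conv2d with a square kernel and bias=True."""
    return in_channels * out_channels * kernel_size * kernel_size + out_channels


def linear_params(in_features, out_features):
    """Weights plus biases of an nn.Linear with bias=True."""
    return in_features * out_features + out_features


counts = {
    "conv1": conv2d_params(1, 16, 5),     # 416
    "conv2": conv2d_params(16, 32, 5),    # 12832
    "fc": linear_params(32 * 7 * 7, 10),  # 15690
}
print(counts, sum(counts.values()))  # total 28938
```

ReLU and MaxPool2d have no parameters, so they contribute nothing to the count.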

7 Defining the optimizer and the loss function
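torch.optim.SGD, as configured below (no momentum or weight decay), updates every parameter as w = w - LR * dloss/dw each time optimizer.step() runs, using the gradients that loss.backward() stored; optimizer.zero_grad() is needed because backward() accumulates into those gradients rather than overwriting them. A toy pure-Python sketch of the same zero_grad / backward / step cycle on a one-parameter model y = w*x with squared-error loss (the model, data and learning rate are made up for illustration):

```python
def train_one_weight(data, lr, epochs):
    """Fit y = w * x by plain SGD, one sample per step, starting from w = 0."""
    w = 0.0
    for _ in range(epochs):
        for x, y in data:
            grad = 0.0                         # like optimizer.zero_grad()
            prediction = w * x                 # forward pass
            # loss = (prediction - y) ** 2, so d(loss)/dw = 2 * (prediction - y) * x
            grad += 2 * (prediction - y) * x   # like loss.backward()
            w -= lr * grad                     # like optimizer.step()
    return w


samples = [(1.0, 3.0), (2.0, 6.0), (3.0, 9.0)]  # generated from y = 3x
w = train_one_weight(samples, lr=0.01, epochs=200)
print(round(w, 4))  # approaches 3.0
```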

optimizer=torch.optim.SGD(cnn.parameters(),lr=LR)

loss_func=torch.nn.CrossEntropyLoss()
# the loss function is cross-entropy; it takes the raw logits from cnn and the integer labels
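nn.CrossEntropyLoss applies log-softmax to the raw logits internally (which is why the model ends in a plain Linear layer with no softmax) and, with the default reduction='mean', returns the batch average of -log p(target). A minimal pure-Python version of that computation (the function name is hypothetical), using the max-subtraction trick for numerical stability:

```python
import math


def cross_entropy(batch_logits, targets):
    """Mean over the batch of -log(softmax(logits)[target])."""
    total = 0.0
    for logits, target in zip(batch_logits, targets):
        m = max(logits)  # subtract the max before exp() to avoid overflow
        log_sum_exp = m + math.log(sum(math.exp(z - m) for z in logits))
        total += log_sum_exp - logits[target]  # = -log softmax(logits)[target]
    return total / len(targets)


# Ten equal logits mean a uniform guess, so the loss is log(10) ≈ 2.3026,
# roughly where the loss curve of an untrained 10-class model starts.
print(cross_entropy([[0.0] * 10], [3]))
```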

loss_his=[]

8 Training the model

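for epoch in range(EPOCH):
    for step,(b_x,b_y) in enumerate(train_loader):
        output=cnn(b_x)
        # forward pass: logits of shape (BATCH_SIZE, 10)
        loss=loss_func(output,b_y)
        loss_his.append(loss.item())
        # store a plain Python float: appending the tensor itself keeps a reference to each
        # batch's autograd graph, and plt.plot may fail on tensors that require grad
        optimizer.zero_grad()
        # clear the gradients left over from the previous update
        loss.backward()
        # backpropagate the loss
        optimizer.step()
        # update the parameters

9 Visualizing the loss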

plt.figure(figsize=(10,5))
plt.plot(loss_his)
# one point per batch: 60000/50 = 1200 points for one epoch

10 Verifying the result

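tmp=train_data.data[3]
# a sample from the training set; checking on data loaded with train=False would be a fairer test
print(tmp.shape)
# torch.Size([28, 28])
plt.imshow(tmp)
print(train_data.targets[3])
# tensor(1), i.e. the digit 1
tmp=tmp.reshape(1,1,28,28)
# reshape to (batch, channel, height, width) so it can be fed to the model
cnn(tmp.type(torch.FloatTensor))
# the type conversion is required, otherwise the call fails with "RuntimeError: expected scalar type Byte but found Float"
# Note: train_data.data holds raw uint8 pixels in 0-255, while ToTensor() scaled the training images to [0, 1];
# cnn(tmp.float()/255) would match the training input range. The logits below come from the unscaled input.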

#tensor([[-74.7790, 140.8805, 54.7678, 2.0222, -28.6432, -62.3598, -20.4240,

# -44.9795, 110.6059, -37.1277]], grad_fn=)

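torch.max(cnn(tmp.type(torch.FloatTensor)),dim=1)[1]
# torch.max returns (values, indices) along dim 1; [1] keeps the index of the largest logit, i.e. the predicted digit
# tensor([1])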
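torch.max(t, dim=1) returns a pair (values, indices) taken along the class dimension, so [1] keeps the indices, i.e. the predicted digit for each image in the batch. The same selection in plain Python on the logits recorded above, plus a softmax that turns them into probabilities (helper names are hypothetical):

```python
import math


def max_along_classes(batch_logits):
    """Return (values, indices) of the largest logit in each row, like torch.max(t, dim=1)."""
    values, indices = [], []
    for row in batch_logits:
        best = max(range(len(row)), key=lambda i: row[i])
        values.append(row[best])
        indices.append(best)
    return values, indices


def softmax(row):
    """Convert one row of logits into probabilities that sum to 1."""
    m = max(row)
    exps = [math.exp(z - m) for z in row]
    total = sum(exps)
    return [e / total for e in exps]


logits = [[-74.7790, 140.8805, 54.7678, 2.0222, -28.6432,
           -62.3598, -20.4240, -44.9795, 110.6059, -37.1277]]
values, indices = max_along_classes(logits)
print(indices)  # [1], the predicted digit
print(max(softmax(logits[0])))  # probability the model assigns to that digit
```

Because softmax is monotonic, taking the argmax of the logits or of the probabilities gives the same predicted class.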