Detectron2 自定义数据集 完成训练与测试

安装Detectron2 请移步 win10下 detectron2的安装和测试_d597797974的博客-CSDN博客

1、安装标注软件 labelme

pip install labelme=3.3.1

conda install pyqt

2、自定义数据集

数据结构

----my_data

--------annotations 存放标注文件

--------train 存放训练集图片

--------val 存放测试集图片

使用labelme标注数据

通过labelme2coco.py 将标注好的多个json文件转换为一个json文件. 训练集和测试集的标注文件都转换一下

将转换后的train.json文件和test.json文件放入annotations文件夹下

#labeelme2coco.py 代码

# -*- coding:utf-8 -*-

import json

from labelme import utils

import numpy as np

import glob

import PIL.Image

class MyEncoder(json.JSONEncoder):

def default(self, obj):

if isinstance(obj, np.integer):

return int(obj)

elif isinstance(obj, np.floating):

return float(obj)

elif isinstance(obj, np.ndarray):

return obj.tolist()

else:

return super(MyEncoder, self).default(obj)

class labelme2coco(object):

def __init__(self, labelme_json=[], save_json_path='./train.json'):

'''

:param labelme_json: 所有labelme的json文件路径组成的列表

:param save_json_path: json保存位置

'''

self.labelme_json = labelme_json

self.save_json_path = save_json_path

self.images = []

self.categories = []

self.annotations = []

# self.data_coco = {}

self.label = []

self.annID = 1

self.height = 0

self.width = 0

self.save_json()

def data_transfer(self):

for num, json_file in enumerate(self.labelme_json):

with open(json_file, 'r') as fp:

data = json.load(fp) # 加载json文件

self.images.append(self.image(data, num))

for shapes in data['shapes']:

label = shapes['label']

if label not in self.label:

self.categories.append(self.categorie(label))

self.label.append(label)

points = shapes['points'] # 这里的point是用rectangle标注得到的,只有两个点,需要转成四个点

points.append([points[0][0], points[1][1]])

points.append([points[1][0], points[0][1]])

self.annotations.append(self.annotation(points, label, num))

self.annID += 1

def image(self, data, num):

image = {}

img = utils.img_b64_to_arr(data['imageData']) # 解析原图片数据

# img=io.imread(data['imagePath']) # 通过图片路径打开图片

# img = cv2.imread(data['imagePath'], 0)

height, width = img.shape[:2]

img = None

image['height'] = height

image['width'] = width

image['id'] = num + 1

image['file_name'] = data['imagePath'].split('/')[-1]

self.height = height

self.width = width

return image

def categorie(self, label):

categorie = {}

categorie['supercategory'] = 'Cancer'

categorie['id'] = len(self.label) + 1 # 0 默认为背景

categorie['name'] = label

return categorie

def annotation(self, points, label, num):

annotation = {}

annotation['segmentation'] = [list(np.asarray(points).flatten())]

annotation['iscrowd'] = 0

annotation['image_id'] = num + 1

# annotation['bbox'] = str(self.getbbox(points)) # 使用list保存json文件时报错(不知道为什么)

# list(map(int,a[1:-1].split(','))) a=annotation['bbox'] 使用该方式转成list

annotation['bbox'] = list(map(float, self.getbbox(points)))

annotation['area'] = annotation['bbox'][2] * annotation['bbox'][3]

# annotation['category_id'] = self.getcatid(label)

annotation['category_id'] = self.getcatid(label) # 注意,源代码默认为1

annotation['id'] = self.annID

return annotation

def getcatid(self, label):

for categorie in self.categories:

if label == categorie['name']:

return categorie['id']

return 1

def getbbox(self, points):

# img = np.zeros([self.height,self.width],np.uint8)

# cv2.polylines(img, [np.asarray(points)], True, 1, lineType=cv2.LINE_AA) # 画边界线

# cv2.fillPoly(img, [np.asarray(points)], 1) # 画多边形 内部像素值为1

polygons = points

mask = self.polygons_to_mask([self.height, self.width], polygons)

return self.mask2box(mask)

def mask2box(self, mask):

'''从mask反算出其边框

mask:[h,w] 0、1组成的图片

1对应对象,只需计算1对应的行列号(左上角行列号,右下角行列号,就可以算出其边框)

'''

# np.where(mask==1)

index = np.argwhere(mask == 1)

rows = index[:, 0]

clos = index[:, 1]

# 解析左上角行列号

left_top_r = np.min(rows) # y

left_top_c = np.min(clos) # x

# 解析右下角行列号

right_bottom_r = np.max(rows)

right_bottom_c = np.max(clos)

# return [(left_top_r,left_top_c),(right_bottom_r,right_bottom_c)]

# return [(left_top_c, left_top_r), (right_bottom_c, right_bottom_r)]

# return [left_top_c, left_top_r, right_bottom_c, right_bottom_r] # [x1,y1,x2,y2]

return [left_top_c, left_top_r, right_bottom_c - left_top_c,

right_bottom_r - left_top_r] # [x1,y1,w,h] 对应COCO的bbox格式

def polygons_to_mask(self, img_shape, polygons):

mask = np.zeros(img_shape, dtype=np.uint8)

mask = PIL.Image.fromarray(mask)

xy = list(map(tuple, polygons))

PIL.ImageDraw.Draw(mask).polygon(xy=xy, outline=1, fill=1)

mask = np.array(mask, dtype=bool)

return mask

def data2coco(self):

data_coco = {}

data_coco['images'] = self.images

data_coco['categories'] = self.categories

data_coco['annotations'] = self.annotations

return data_coco

def save_json(self):

self.data_transfer()

self.data_coco = self.data2coco()

# 保存json文件

json.dump(self.data_coco, open(self.save_json_path, 'w'), indent=4, cls=MyEncoder) # indent=4 更加美观显示

labelme_json = glob.glob(r'F:\my_data\annotations\*.json') #换成自己json文件所在路径

labelme2coco(labelme_json,"test.json")

print("完成")3、数据集的注册

创建 fruitsnuts_data.py 注册数据集

from detectron2.data.datasets import register_coco_instances

from detectron2.data import MetadataCatalog

import os

#声明类别,尽量保持

CLASS_NAMES =["cat","dog"]

# 数据集路径

DATASET_ROOT = r'E:\models\detectron2-master\my_data\data'

#标注文件夹路径

ANN_ROOT = os.path.join(DATASET_ROOT, 'annotations')

#训练图片路径

TRAIN_PATH = os.path.join(DATASET_ROOT, 'train')

#测试图片路径

VAL_PATH = os.path.join(DATASET_ROOT, 'val')

#训练集的标注文件

TRAIN_JSON = os.path.join(ANN_ROOT, 'train.json')

#验证集的标注文件

# VAL_JSON = os.path.join(ANN_ROOT, 'val.json')

#测试集的标注文件

VAL_JSON = os.path.join(ANN_ROOT, 'test.json')

register_coco_instances("my_train", {}, TRAIN_JSON, TRAIN_PATH)

MetadataCatalog.get("my_train").set(thing_classes=CLASS_NAMES, # 可以选择开启,但是不能显示中文,这里需要注意,中文的话最好关闭

evaluator_type='coco', # 指定评估方式

json_file=TRAIN_JSON,

image_root=TRAIN_PATH)

register_coco_instances("my_val", {}, VAL_JSON, VAL_PATH)

MetadataCatalog.get("my_val").set(thing_classes=CLASS_NAMES, # 可以选择开启,但是不能显示中文,这里需要注意,中文的话最好关闭

evaluator_type='coco', # 指定评估方式

json_file=VAL_JSON,

image_root=VAL_PATH)4、训练

from detectron2.engine import DefaultTrainer

from detectron2.config import get_cfg

from detectron2.utils.logger import setup_logger

import os

setup_logger()

import fruitsnuts_data #导入注册文件,完成注册

if __name__ == "__main__":

cfg = get_cfg()

cfg.merge_from_file(

"../configs/COCO-InstanceSegmentation/mask_rcnn_R_50_FPN_3x.yaml"

)

cfg.DATASETS.TRAIN = ("my_train",)

cfg.DATASETS.TEST = ("my_val",) # 没有不用填

cfg.DATALOADER.NUM_WORKERS = 2

#预训练模型文件

#没有可以下载

cfg.MODEL.WEIGHTS = r"detectron2://COCO-InstanceSegmentation/mask_rcnn_R_50_FPN_3x/137849600/model_final_f10217.pkl"

#或者使用自己的预训练模型

# cfg.MODEL.WEIGHTS = "../tools/output/model_0003191.pth"

cfg.SOLVER.IMS_PER_BATCH = 2

cfg.SOLVER.BASE_LR = 0.0025

#最大迭代次数

cfg.SOLVER.MAX_ITER = (2500)

cfg.MODEL.ROI_HEADS.BATCH_SIZE_PER_IMAGE = (128) # faster, and good enough for this toy dataset

cfg.MODEL.ROI_HEADS.NUM_CLASSES = 2 # 3 classes (data, fig, hazelnut)

os.makedirs(cfg.OUTPUT_DIR, exist_ok=True)

trainer = DefaultTrainer(cfg)

trainer.resume_or_load(resume=False)

trainer.train()如果使用验证集验证出现如下报错:

No evaluator found. Use `DefaultTrainer.test(evaluators=)`, or implement its `build_evaluator` method.参考: https://blog.csdn.net/weixin_42899627/article/details/119831887

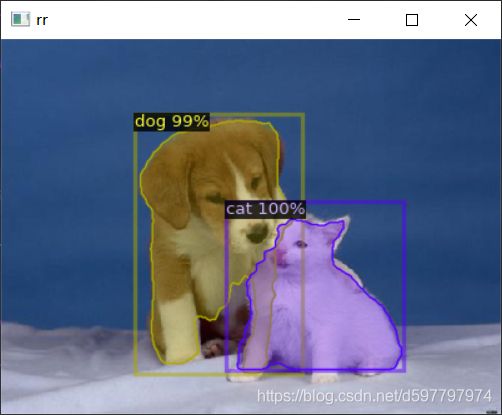

5、测试

from detectron2.utils.visualizer import Visualizer

from detectron2.data.catalog import MetadataCatalog

import cv2

from detectron2.config import get_cfg

import os

from detectron2.engine.defaults import DefaultPredictor

from detectron2.utils.visualizer import ColorMode

import fruitsnuts_data #导入注册文件,完成注册

fruits_nuts_metadata = MetadataCatalog.get("my_train") #换成自己注册的数据集

if __name__ == "__main__":

cfg = get_cfg()

#加载模型文件

cfg.merge_from_file(

"../configs/COCO-InstanceSegmentation/mask_rcnn_R_50_FPN_3x.yaml"

)

#加载训练好的模型文件

cfg.MODEL.WEIGHTS = os.path.join(cfg.OUTPUT_DIR, "model_final.pth")

print('loading from: {}'.format(cfg.MODEL.WEIGHTS))

cfg.MODEL.ROI_HEADS.SCORE_THRESH_TEST = 0.5 # 阈值

cfg.MODEL.ROI_HEADS.NUM_CLASSES = 2 #类别数

cfg.DATASETS.TEST = ("my_val", )

predictor = DefaultPredictor(cfg)

data_f = 'test1.jpg' #测试图片

im = cv2.imread(data_f)

outputs = predictor(im)

v = Visualizer(im[:, :, ::-1],

metadata=fruits_nuts_metadata,

scale=0.8,

instance_mode=ColorMode.IMAGE_BW # remove the colors of unsegmented pixels

)

v = v.draw_instance_predictions(outputs["instances"].to("cpu"))

img = v.get_image()[:, :, ::-1]

cv2.imshow('rr', img)

cv2.waitKey(0)