NNDL Experiment 7 Recurrent Neural Networks (1): RNN Memory Capacity Experiment


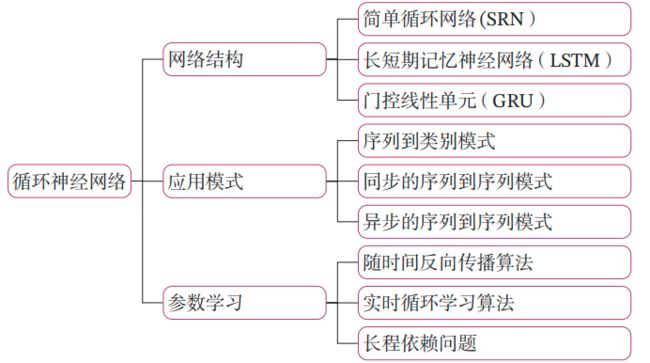

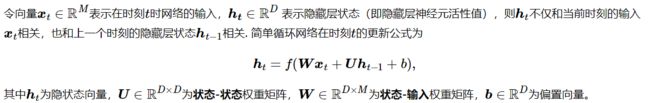

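A recurrent neural network (RNN) is a class of neural networks with short-term memory. In an RNN, a neuron receives information not only from other neurons but also from itself, which forms a network structure containing loops. Compared with feedforward neural networks, RNNs are closer to the structure of biological neural networks. RNNs are now widely used in tasks such as speech recognition, language modeling, and natural language generation.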

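During parameter learning, the simple recurrent network suffers from the long-range dependency problem. It has difficulty modeling dependencies between states that are separated by long time intervals (long range).

To test the memory capacity of the simple recurrent network, this section builds a "digit-sum task" for the experiment.

The input of the digit-sum task is a sequence of digits. The digits in the first two positions are 0-9, and the remaining digits are randomly generated (mostly 0). The prediction target is the sum of the first two digits of the input sequence. Figure 6.3 shows a digit sequence of length 10.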
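Both leading digits lie in 0-9, so the label can only take the 19 values 0 to 18. This is why the classifier later uses num_classes = 19. The minimal sketch below enumerates every leading-digit pair. The helper name `digit_sum_label` is ours, for illustration only.

```python
def digit_sum_label(seq):
    """Label of the digit-sum task: the sum of the first two digits; later digits are ignored."""
    return seq[0] + seq[1]

# Enumerate all 10 x 10 leading-digit pairs, padded with zeros to length 10
labels = {digit_sum_label([n1, n2] + [0] * 8) for n1 in range(10) for n2 in range(10)}
print(len(labels), min(labels), max(labels))  # 19 0 18

# Trailing "noise" digits do not change the label
print(digit_sum_label([3, 4, 0, 0, 7, 0, 0, 0, 0, 0]))  # 7
```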

6.1 Memory Capacity Experiment of Recurrent Neural Networks

A simple implementation of a recurrent neural network is the Simple Recurrent Network (SRN).


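The longer the sequences on which the network still achieves high accuracy, the better its memory capacity. We can therefore build datasets with different sequence lengths and evaluate the SRN on each of them. This tests the SRN's ability to capture long-range dependencies.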

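6.1.1 Dataset Construction

We first build digit-prediction datasets, DigitSum, with different sequence lengths.

6.1.1.1 Dataset Construction Function

In this task the first two digits of the input sequence are 0-9, so the number of their combinations is fixed (10 × 10 = 100). We can therefore enumerate all combinations of the first two digits and pad the rest of each sequence with 0 up to a fixed length. To make the data more diverse, we randomly sample a zero position in each generated sequence and replace it with a random digit from 0-9. This also increases the number of samples.

The value of k sets how many augmented sequences are generated from each base example. Generating a dataset of a given length produces the training, validation, and test sets together. The training set uses k=3, and the validation and test sets use k=1. The code is as follows: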

```python
import os
import random

import numpy as np

# Fix the random seeds for reproducibility
random.seed(0)
np.random.seed(0)


def generate_data(length, k, save_path):
    if length < 3:
        raise ValueError("The length of data should be greater than 2.")
    if k == 0:
        raise ValueError("k should be greater than 0.")
    # Generate 100 base sequences of the given length; apart from the first two
    # digits, every position is temporarily filled with 0
    base_examples = []
    for n1 in range(0, 10):
        for n2 in range(0, 10):
            seq = [n1, n2] + [0] * (length - 2)
            label = n1 + n2
            base_examples.append((seq, label))

    examples = []
    # Data augmentation: generate k examples from each entry of base_examples
    for base_example in base_examples:
        for _ in range(k):
            # Randomly choose the position (2..length-1) and the value to insert
            idx = np.random.randint(2, length)
            val = np.random.randint(0, 10)
            # Replace the zero at that position in a copy of the base sequence
            seq = base_example[0].copy()
            label = base_example[1]
            seq[idx] = val
            examples.append((seq, label))

    # Save the augmented data, creating the target directory if needed
    os.makedirs(os.path.dirname(save_path), exist_ok=True)
    with open(save_path, "w", encoding="utf-8") as f:
        for example in examples:
            # Convert to strings for saving: "d1 d2 ... dn\tlabel"
            seq = [str(e) for e in example[0]]
            label = str(example[1])
            line = " ".join(seq) + "\t" + label + "\n"
            f.write(line)
    print(f"generate data to: {save_path}.")


# Sequence lengths to generate
lengths = [5, 10, 15, 20, 25, 30, 35]
for length in lengths:
    # Training data of length `length`
    save_path = f"./datasets/{length}/train.txt"
    k = 3
    generate_data(length, k, save_path)
    # Validation data of length `length`
    save_path = f"./datasets/{length}/dev.txt"
    k = 1
    generate_data(length, k, save_path)
    # Test data of length `length`
    save_path = f"./datasets/{length}/test.txt"
    k = 1
    generate_data(length, k, save_path)
```

Output:

```
generate data to: ./datasets/5/train.txt.
generate data to: ./datasets/5/dev.txt.
generate data to: ./datasets/5/test.txt.
generate data to: ./datasets/10/train.txt.
generate data to: ./datasets/10/dev.txt.
generate data to: ./datasets/10/test.txt.
generate data to: ./datasets/15/train.txt.
generate data to: ./datasets/15/dev.txt.
generate data to: ./datasets/15/test.txt.
generate data to: ./datasets/20/train.txt.
generate data to: ./datasets/20/dev.txt.
generate data to: ./datasets/20/test.txt.
generate data to: ./datasets/25/train.txt.
generate data to: ./datasets/25/dev.txt.
generate data to: ./datasets/25/test.txt.
generate data to: ./datasets/30/train.txt.
generate data to: ./datasets/30/dev.txt.
generate data to: ./datasets/30/test.txt.
generate data to: ./datasets/35/train.txt.
generate data to: ./datasets/35/dev.txt.
generate data to: ./datasets/35/test.txt.
```
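The augmentation step above has two details worth making explicit. First, `np.random.randint(2, length)` excludes its upper bound, so only positions 2 to length-1 are changed and the first two digits, and hence the label, are never touched. Second, `val` can also be 0, so some augmented copies are identical to their base sequence. The sketch below isolates one augmentation step. `augment_once` is a hypothetical helper written for illustration, not part of the original code.

```python
import numpy as np

def augment_once(seq, rng):
    """Copy seq and overwrite one random position in [2, len(seq)) with a random digit 0-9."""
    new_seq = list(seq)
    idx = rng.integers(2, len(new_seq))  # upper bound exclusive, like np.random.randint
    new_seq[idx] = int(rng.integers(0, 10))
    return new_seq

rng = np.random.default_rng(0)
base = [3, 4] + [0] * 8
augmented = [augment_once(base, rng) for _ in range(1000)]

# The first two digits never change, so the label 3 + 4 = 7 stays valid
print(all(s[:2] == [3, 4] for s in augmented))  # True
# At most one position differs from the base sequence
print(max(sum(a != b for a, b in zip(s, base)) for s in augmented))  # 1
# Drawing val == 0 reproduces the base sequence unchanged
print(any(s == base for s in augmented))  # True
```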


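6.1.1.2 Loading the Data and Splitting It

For convenience, this experiment pre-generates seven datasets with lengths 5, 10, 15, 20, 25, 30, and 35. They are stored in the "./datasets" directory and can be loaded directly. The code is as follows: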

```python
import os


# Load the data
def load_data(data_path):
    def read_split(file_name):
        examples = []
        with open(os.path.join(data_path, file_name), "r", encoding="utf-8") as f:
            for line in f:
                # Parse one line into a digit sequence `seq` and a label `label`
                items = line.strip().split("\t")
                seq = [int(i) for i in items[0].split(" ")]
                label = int(items[1])
                examples.append((seq, label))
        return examples

    # Load the training, validation, and test sets
    train_examples = read_split("train.txt")
    dev_examples = read_split("dev.txt")
    test_examples = read_split("test.txt")
    return train_examples, dev_examples, test_examples


# Length of the dataset to load
length = 5
# Directory holding the dataset of this length
data_path = f"./datasets/{length}"
# Load this dataset
train_examples, dev_examples, test_examples = load_data(data_path)
print("dev example:", dev_examples[:2])
print("Number of training examples:", len(train_examples))
print("Number of validation examples:", len(dev_examples))
print("Number of test examples:", len(test_examples))
```

Output:

```
dev example: [([0, 0, 6, 0, 0], 0), ([0, 1, 0, 0, 8], 1)]
Number of training examples: 300
Number of validation examples: 100
Number of test examples: 100
```
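Each saved line stores the digits separated by spaces, then a tab, then the label. The small round-trip sketch below checks that `generate_data` and `load_data` agree on this format. The helper names `to_line` and `from_line` are illustrative and do not come from the original code.

```python
def to_line(seq, label):
    """Serialize one example the way generate_data writes it."""
    return " ".join(str(d) for d in seq) + "\t" + str(label) + "\n"

def from_line(line):
    """Parse one line the way load_data reads it."""
    items = line.strip().split("\t")
    return [int(i) for i in items[0].split(" ")], int(items[1])

line = to_line([0, 1, 0, 0, 8], 1)
print(repr(line))       # '0 1 0 0 8\t1\n'
print(from_line(line))  # ([0, 1, 0, 0, 8], 1)
```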

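6.1.1.3 Building the Dataset Class

To make optimization with gradient descent convenient, we build a Dataset class for the DigitSum data. Its __getitem__ method reads an example by index and converts it to tensors. The code is as follows: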

```python
import torch
from torch.utils.data import Dataset


class DigitSumDataset(Dataset):
    def __init__(self, data):
        # data: a list of (seq, label) pairs as returned by load_data
        self.data = data

    def __getitem__(self, idx):
        example = self.data[idx]
        # Convert the digit sequence and the label to int64 tensors
        seq = torch.tensor(example[0], dtype=torch.int64)
        label = torch.tensor(example[1], dtype=torch.int64)
        return seq, label

    def __len__(self):
        return len(self.data)
```
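Inside a DataLoader, the default collate function stacks these tensors. A batch of sequences therefore has shape (batch_size × sequence length), and the labels have shape (batch_size,). The self-contained sketch below uses a hypothetical stand-in class, `ToyDigitSum`, that mirrors DigitSumDataset, together with a handful of toy examples.

```python
import torch
from torch.utils.data import DataLoader, Dataset

class ToyDigitSum(Dataset):
    """Stand-in for DigitSumDataset: returns (int64 sequence, int64 label) pairs."""
    def __init__(self, data):
        self.data = data
    def __getitem__(self, idx):
        seq, label = self.data[idx]
        return torch.tensor(seq, dtype=torch.int64), torch.tensor(label, dtype=torch.int64)
    def __len__(self):
        return len(self.data)

# 10 toy examples of length 5; each label is the sum of the first two digits
data = [([i % 10, (i * 3) % 10, 0, 0, 0], i % 10 + (i * 3) % 10) for i in range(10)]
loader = DataLoader(ToyDigitSum(data), batch_size=8)

seqs, labels = next(iter(loader))
print(seqs.shape, labels.shape)  # torch.Size([8, 5]) torch.Size([8])
print(len(loader))               # 2 batches: 8 + 2 examples
```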

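6.1.2 Model Construction

Figure 6.4 shows the structure of the model that uses an SRN for the digit-sum task.

The model consists of the following parts:

(1) Embedding layer: vectorizes the input digit sequence, i.e., maps each digit to a vector;

(2) SRN layer: receives the vector sequence, updates the recurrent unit step by step, and uses the hidden state at the last time step as the representation of the whole sequence;

(3) Output layer: a linear layer that outputs the classification result.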
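The data flow through these three parts can be traced by tensor shapes. The sketch below uses PyTorch's built-in nn.Embedding, nn.RNN, and nn.Linear as stand-ins for the layers implemented in the following subsections. The sizes are chosen only for illustration.

```python
import torch
import torch.nn as nn

batch_size, seq_len, emb_dim, hidden_size, num_classes = 4, 10, 32, 32, 19

embedding = nn.Embedding(10, emb_dim)                 # (1) digits 0-9 -> vectors
rnn = nn.RNN(emb_dim, hidden_size, batch_first=True)  # (2) SRN over the sequence
linear = nn.Linear(hidden_size, num_classes)          # (3) logits for sums 0..18

digits = torch.randint(0, 10, (batch_size, seq_len))
emb = embedding(digits)             # (batch, seq_len, emb_dim)
_, h_last = rnn(emb)                # (num_layers=1, batch, hidden_size)
logits = linear(h_last.squeeze(0))  # (batch, num_classes)
print(emb.shape, h_last.shape, logits.shape)
# torch.Size([4, 10, 32]) torch.Size([1, 4, 32]) torch.Size([4, 19])
```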

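6.1.2.1 Embedding Layer

The samples in this task are digit sequences. To represent digits better, each digit is mapped to an embedding vector. Each dimension of the embedding vector can describe some property of the digit itself. A vector carries more information about the digit than a single number does, so performing the digit-sum task on vectors gives the model stronger fitting capacity.

Note: to stay consistent with the code, a batch of samples is represented here by a tensor of shape (number of samples × sequence length × feature dimension).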

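```python
import torch
import torch.nn as nn


class Embedding(nn.Module):
    def __init__(self, num_embeddings, embedding_dim):
        super(Embedding, self).__init__()
        # Register the lookup table as an nn.Parameter so that it is trained and
        # returned by model.parameters(); initialize it with Xavier uniform
        W = torch.empty(num_embeddings, embedding_dim)
        nn.init.xavier_uniform_(W, gain=1.0)
        self.W = nn.Parameter(W)

    def forward(self, inputs):
        # Look up the vector for each index: (...) -> (..., embedding_dim)
        embs = self.W[inputs]
        return embs


emb_layer = Embedding(10, 5)
inputs = torch.tensor([0, 1, 2, 3])
print(emb_layer(inputs))
```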
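Indexing the weight matrix gives the same result as multiplying a one-hot encoding of the digit by that matrix. PyTorch's built-in nn.Embedding performs the same lookup. The quick check below is independent of the class above and uses random weight values.

```python
import torch
import torch.nn as nn
import torch.nn.functional as F

torch.manual_seed(0)
W = torch.randn(10, 5)                         # lookup table: 10 digits x 5 dims
digits = torch.tensor([[0, 1, 2], [3, 4, 9]])  # batch of 2 sequences, length 3

by_index = W[digits]                                       # (2, 3, 5)
by_onehot = F.one_hot(digits, num_classes=10).float() @ W  # (2, 3, 10) @ (10, 5)
builtin = nn.Embedding.from_pretrained(W)(digits)

print(by_index.shape)  # torch.Size([2, 3, 5])
print(torch.allclose(by_index, by_onehot), torch.equal(by_index, builtin))  # True True
```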

6.1.2.2 SRN Layer
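For reference, the SRN layer below updates its hidden state at each time step t from the current input and the previous hidden state. The notation follows the code: inputs are row vectors, W has shape input_size × hidden_size, U has shape hidden_size × hidden_size, and b has shape 1 × hidden_size.

$$
\mathbf{h}_t = \tanh\left(\mathbf{x}_t \mathbf{W} + \mathbf{h}_{t-1} \mathbf{U} + \mathbf{b}\right), \qquad \mathbf{h}_0 = \mathbf{0}, \qquad t = 1, \dots, L
$$

The hidden state $\mathbf{h}_L$ at the last step is used as the representation of the whole sequence.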

```python
import torch
import torch.nn as nn

torch.manual_seed(0)


# SRN model
class SRN(nn.Module):
    def __init__(self, input_size, hidden_size, W_attr=None, U_attr=None, b_attr=None):
        super(SRN, self).__init__()
        # Dimension of the embedding vectors
        self.input_size = input_size
        # Dimension of the hidden state
        self.hidden_size = hidden_size
        # Parameter W, shape input_size x hidden_size (zeros unless W_attr is given)
        if W_attr is None:
            W = torch.zeros(size=[input_size, hidden_size], dtype=torch.float32)
        else:
            W = torch.tensor(W_attr, dtype=torch.float32)
        self.W = nn.Parameter(W)
        # Parameter U, shape hidden_size x hidden_size
        if U_attr is None:
            U = torch.zeros(size=[hidden_size, hidden_size], dtype=torch.float32)
        else:
            U = torch.tensor(U_attr, dtype=torch.float32)
        self.U = nn.Parameter(U)
        # Parameter b, shape 1 x hidden_size
        if b_attr is None:
            b = torch.zeros(size=[1, hidden_size], dtype=torch.float32)
        else:
            b = torch.tensor(b_attr, dtype=torch.float32)
        self.b = nn.Parameter(b)

    # Initialize the hidden state with zeros
    def init_state(self, batch_size, device=None):
        hidden_state = torch.zeros(size=[batch_size, self.hidden_size], dtype=torch.float32, device=device)
        return hidden_state

    # Forward computation
    def forward(self, inputs, hidden_state=None):
        # inputs: shape batch_size x seq_len x input_size
        batch_size, seq_len, input_size = inputs.shape
        # Initial hidden state, shape batch_size x hidden_size
        if hidden_state is None:
            hidden_state = self.init_state(batch_size, inputs.device)
        # Run the recurrence over the time steps
        for step in range(seq_len):
            # Input at the current time step, shape batch_size x input_size
            step_input = inputs[:, step, :]
            # New hidden state, shape batch_size x hidden_size
            hidden_state = torch.tanh(torch.matmul(step_input, self.W) + torch.matmul(hidden_state, self.U) + self.b)
        return hidden_state


## Initialize the parameters and run
U_attr = [[0.0, 0.1], [0.1, 0.0]]
b_attr = [[0.1, 0.1]]
W_attr = [[0.1, 0.2], [0.1, 0.2]]
srn = SRN(2, 2, W_attr=W_attr, U_attr=U_attr, b_attr=b_attr)
inputs = torch.tensor([[[1, 0], [0, 2]]], dtype=torch.float32)
hidden_state = srn(inputs)
print("hidden_state", hidden_state)
```

Output:

```
hidden_state tensor([[0.3177, 0.4775]], grad_fn=<TanhBackward0>)
```

PyTorch provides a built-in API for the SRN: torch.nn.RNN.

## 初始化参数并运行

U_attr = [[0.0, 0.1], [0.1,0.0]]

b_attr = [[0.1, 0.1]]

W_attr=[[0.1, 0.2], [0.1,0.2]]

srn = SRN(2, 2, W_attr=W_attr, U_attr=U_attr, b_attr=b_attr)

inputs = torch.tensor([[[1, 0],[0, 2]]], dtype=torch.float32)

hidden_state = srn(inputs)

print("hidden_state", hidden_state)

# 这里创建一个随机数组作为测试数据,数据shape为batch_size x seq_len x input_size

batch_size, seq_len, input_size = 8, 20, 32

inputs = torch.randn([batch_size, seq_len, input_size])

# 设置模型的hidden_size

hidden_size = 32

torch_srn = nn.RNN(input_size, hidden_size)

self_srn = SRN(input_size, hidden_size)

self_hidden_state = self_srn(inputs)

torch_outputs, torch_hidden_state = torch_srn(inputs)

print("self_srn hidden_state: ", self_hidden_state.shape)

print("torch_srn outpus:", torch_outputs.shape)

print("torch_srn hidden_state:", torch_hidden_state.shape)

运行结果:

hidden_state tensor([[0.3177, 0.4775]], grad_fn=<TanhBackward0>)

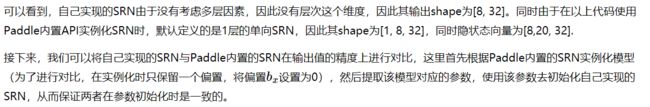

self_srn hidden_state: torch.Size([8, 32])

torch_srn outpus: torch.Size([8, 20, 32])

torch_srn hidden_state: torch.Size([1, 20, 32])
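Matching shapes do not prove that the two layers compute the same function. nn.RNN computes h_t = tanh(x_t W_ih^T + b_ih + h_{t-1} W_hh^T + b_hh). Copying W^T into weight_ih_l0, U^T into weight_hh_l0, b into bias_ih_l0, and zeros into bias_hh_l0 should therefore reproduce the SRN. The self-contained sketch below reuses the hand-set parameters from the SRN example. The loop `manual_srn` is our own re-implementation of the SRN recurrence.

```python
import torch
import torch.nn as nn

W = torch.tensor([[0.1, 0.2], [0.1, 0.2]])    # input_size x hidden_size
U = torch.tensor([[0.0, 0.1], [0.1, 0.0]])    # hidden_size x hidden_size
b = torch.tensor([[0.1, 0.1]])                # 1 x hidden_size
x = torch.tensor([[[1.0, 0.0], [0.0, 2.0]]])  # batch 1, seq_len 2, input_size 2

def manual_srn(x, W, U, b):
    """h_t = tanh(x_t W + h_{t-1} U + b), starting from h_0 = 0; returns the last h."""
    h = torch.zeros(x.shape[0], W.shape[1])
    for t in range(x.shape[1]):
        h = torch.tanh(x[:, t, :] @ W + h @ U + b)
    return h

rnn = nn.RNN(2, 2, batch_first=True)
with torch.no_grad():
    # nn.RNN stores its weights as (hidden_size, input_size), i.e. transposed
    rnn.weight_ih_l0.copy_(W.T)
    rnn.weight_hh_l0.copy_(U.T)
    rnn.bias_ih_l0.copy_(b.squeeze(0))
    rnn.bias_hh_l0.zero_()
    _, h_n = rnn(x)

print(manual_srn(x, W, U, b))  # tensor([[0.3177, 0.4775]])
print(torch.allclose(h_n.squeeze(0), manual_srn(x, W, U, b), atol=1e-6))  # True
```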

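Next, we compare the running speed of the two implementations. The same inputs are passed to PyTorch's built-in SRN and to our own SRN, and the average forward time of each is measured. The code is as follows: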

```python
import time

# Average forward time of our own SRN
model_time = 0
for i in range(100):
    start_time = time.perf_counter()
    out = self_srn(inputs)
    # The first 10 runs are warm-up and are not counted
    if i < 10:
        continue
    end_time = time.perf_counter()
    model_time += (end_time - start_time)
avg_model_time = model_time / 90
print('self_srn speed:', avg_model_time, 's')

# Average forward time of PyTorch's built-in SRN
model_time = 0
for i in range(100):
    start_time = time.perf_counter()
    out = torch_srn(inputs)
    # The first 10 runs are warm-up and are not counted
    if i < 10:
        continue
    end_time = time.perf_counter()
    model_time += (end_time - start_time)
avg_model_time = model_time / 90
print('torch_srn speed:', avg_model_time, 's')
```

Output:

```
self_srn speed: 0.0010015858544243706 s
torch_srn speed: 0.0003858698738945855 s
```

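In this run, PyTorch's built-in SRN is about 2.6 times faster than our own implementation. Its operators are implemented in C++, whereas ours runs a Python-level loop over the time steps.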
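The two timing loops above repeat the same warm-up-then-measure pattern. The reusable sketch below captures that pattern. The name `benchmark` is ours. The sketch also disables gradient tracking with torch.no_grad(), which is usually appropriate when only forward speed matters. Absolute timings vary by machine, and on a GPU, torch.cuda.synchronize() would be needed before reading the clock.

```python
import time

import torch
import torch.nn as nn

def benchmark(fn, *args, warmup=10, iters=90):
    """Average wall-clock time of fn(*args) over `iters` runs after `warmup` untimed runs."""
    with torch.no_grad():
        for _ in range(warmup):
            fn(*args)
        start = time.perf_counter()
        for _ in range(iters):
            fn(*args)
        return (time.perf_counter() - start) / iters

rnn = nn.RNN(32, 32, batch_first=True)
x = torch.randn(8, 20, 32)
print(f"nn.RNN forward: {benchmark(rnn, x):.6f} s")
```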

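6.1.2.3 Linear Layer

Note: in classification practice, we usually only need the model to output the logits of each class, not the class probabilities. The loss function must then accept logits directly when computing the loss.

The linear layer uses the torch.nn.Linear operator directly.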
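nn.CrossEntropyLoss, which is used later in training, is such a loss function. It applies log-softmax internally, so it takes raw logits and integer class labels. The quick check below compares it against an explicit log-softmax followed by negative log-likelihood, using random logits for illustration.

```python
import torch
import torch.nn as nn
import torch.nn.functional as F

torch.manual_seed(0)
logits = torch.randn(4, 19)  # 4 samples, 19 classes (sums 0..18)
labels = torch.tensor([0, 7, 18, 9])

ce = nn.CrossEntropyLoss()(logits, labels)
manual = F.nll_loss(F.log_softmax(logits, dim=-1), labels)
print(torch.allclose(ce, manual))  # True
```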

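6.1.2.4 Model Summary

With each layer defined, we now define a digit-sum model, Model_RNN4SeqClass. It combines the embedding layer, the SRN layer, and the linear layer to perform digit summation.

Specifically, Model_RNN4SeqClass receives an SRN layer instance to process the digit sequences. Its __init__ function defines an Embedding layer, which takes the input digits as indices and outputs the corresponding vectors. It then defines a linear layer with torch.nn.Linear.

Note: to make comparative experiments easier, the SRN layer is instantiated outside the Model_RNN4SeqClass class. Normally, the operators inside a model are instantiated within the model.

The forward function passes the digit sequence through the embedding layer and then the SRN layer. The hidden state at the last position is fed to the linear layer, and the resulting logits are returned. The code is as follows: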

```python
# Digit-prediction model based on an RNN
class Model_RNN4SeqClass(nn.Module):
    def __init__(self, model, num_digits, input_size, hidden_size, num_classes):
        super(Model_RNN4SeqClass, self).__init__()
        # An already instantiated RNN layer passed in from outside, e.g. an SRN
        self.rnn_model = model
        # Vocabulary size (number of distinct digits)
        self.num_digits = num_digits
        # Dimension of the embedding vectors
        self.input_size = input_size
        # Embedding layer
        self.embedding = Embedding(num_digits, input_size)
        # Linear layer
        self.linear = nn.Linear(hidden_size, num_classes)

    def forward(self, inputs):
        # Map the digit sequence to vectors: (batch, seq_len) -> (batch, seq_len, input_size)
        inputs_emb = self.embedding(inputs)
        # Run the RNN; it returns the hidden state at the last time step
        hidden_state = self.rnn_model(inputs_emb)
        # Predict the sum from the last hidden state
        logits = self.linear(hidden_state)
        return logits


# An SRN with input_size 4 and hidden_size 5
srn = SRN(4, 5)
# A digit-prediction model built on this srn
model = Model_RNN4SeqClass(srn, 10, 4, 5, 19)
# A batch of shape 2 x 3
inputs = torch.tensor([[1, 2, 3], [2, 3, 4]])
# Forward pass
logits = model(inputs)
print(logits)
```

Output:

```
tensor([[-0.1311, -0.0006, -0.4136, -0.3404, -0.0430, -0.1545,  0.4348, -0.0957,
         -0.3732,  0.3813, -0.2891,  0.3702, -0.0454,  0.0878, -0.4385,  0.4058,
         -0.0050, -0.2243, -0.3191],
        [-0.1311, -0.0006, -0.4136, -0.3404, -0.0430, -0.1545,  0.4348, -0.0957,
         -0.3732,  0.3813, -0.2891,  0.3702, -0.0454,  0.0878, -0.4385,  0.4058,
         -0.0050, -0.2243, -0.3191]], grad_fn=<AddmmBackward0>)
```
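The two rows of logits above are identical even though the inputs differ. This is expected before training. When W, U, and b are not passed in, SRN initializes them to zeros, so every hidden state is tanh(0) = 0 and the logits reduce to the bias of the linear layer. The self-contained sketch below rebuilds the zero-initialized recurrence inline instead of importing the classes above, and uses nn.Embedding in place of the custom Embedding layer.

```python
import torch
import torch.nn as nn

torch.manual_seed(0)
emb = nn.Embedding(10, 4)
linear = nn.Linear(5, 19)
# Zero-initialized SRN parameters, as in SRN(4, 5) without W_attr/U_attr/b_attr
W, U, b = torch.zeros(4, 5), torch.zeros(5, 5), torch.zeros(1, 5)

inputs = torch.tensor([[1, 2, 3], [2, 3, 4]])
x = emb(inputs)  # (2, 3, 4)
h = torch.zeros(2, 5)
for t in range(x.shape[1]):
    h = torch.tanh(x[:, t, :] @ W + h @ U + b)
logits = linear(h)

print(torch.count_nonzero(h).item())                      # 0
print(torch.allclose(logits, linear.bias.expand(2, -1)))  # True
```

Zero initialization does not prevent training. The gradient with respect to W involves the nonzero embedded inputs, so W moves away from zero after the first update.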

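6.1.3 Model Training

6.1.3.1 Training a Digit-Prediction Model for a Given Length

Training is based on the RunnerV3 class, and specifying length is enough to load the corresponding data. We set the hyperparameters and use the Adam optimizer with a learning rate of 0.001. We then instantiate the model and compute accuracy with the Accuracy metric defined in Section 4.5.4. Training runs through the Runner for 500 epochs. The code is as follows: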

```python
import os
import random

import numpy as np
import torch
import torch.nn as nn
from torch.utils.data import DataLoader

# Local modules carried over from the earlier experiments
from metric import Accuracy
from Runner import RunnerV3

# Number of training epochs
num_epochs = 500
# Learning rate
lr = 0.001
# Number of input digit classes
num_digits = 10
# Dimension of the digit embedding vectors
input_size = 32
# Dimension of the hidden state
hidden_size = 32
# Number of output classes (sums 0..18)
num_classes = 19
# Batch size
batch_size = 8
# Directory for saved models
save_dir = "./checkpoints"
os.makedirs(save_dir, exist_ok=True)


# Run an experiment on data of the given length
def train(length):
    print(f"\n====> Training SRN with data of length {length}.")
    # Load the data of length `length`
    data_path = f"./datasets/{length}"
    train_examples, dev_examples, test_examples = load_data(data_path)
    train_set, dev_set, test_set = DigitSumDataset(train_examples), DigitSumDataset(dev_examples), DigitSumDataset(test_examples)
    train_loader = DataLoader(train_set, batch_size=batch_size)
    dev_loader = DataLoader(dev_set, batch_size=batch_size)
    test_loader = DataLoader(test_set, batch_size=batch_size)
    # Instantiate the model
    base_model = SRN(input_size, hidden_size)
    model = Model_RNN4SeqClass(base_model, num_digits, input_size, hidden_size, num_classes)
    # Optimizer
    optimizer = torch.optim.Adam(params=model.parameters(), lr=lr)
    # Evaluation metric
    metric = Accuracy()
    # Loss function (takes logits directly)
    loss_fn = nn.CrossEntropyLoss()
    # Build the Runner from the components above
    runner = RunnerV3(model, optimizer, loss_fn, metric)
    # Train the model; the best checkpoint is saved to model_save_path
    model_save_path = os.path.join(save_dir, f"best_srn_model_{length}.pdparams")
    runner.train(train_loader, dev_loader, num_epochs=num_epochs, eval_steps=100, log_steps=100, save_path=model_save_path)
    return runner
```
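A quick sanity check on the step counter in the training logs: there are 300 training examples (100 base pairs × k=3), and batch_size is 8. The DataLoader therefore yields ceil(300/8) = 38 batches per epoch, the last one partial, so 500 epochs amount to 19,000 optimizer steps.

```python
import math

num_train = 100 * 3  # 100 leading-digit pairs x k=3 augmented copies
batch_size, num_epochs = 8, 500
steps_per_epoch = math.ceil(num_train / batch_size)  # last batch is partial (drop_last=False)
total_steps = steps_per_epoch * num_epochs
print(steps_per_epoch, total_steps)  # 38 19000
```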

6.1.3.2 Training on Multiple Lengths

Next, we train digit-prediction models on data of lengths 10, 15, 20, 25, 30, and 35. Each trained runner is stored in the srn_runners dictionary.

```python
srn_runners = {}
lengths = [10, 15, 20, 25, 30, 35]
for length in lengths:
    runner = train(length)
    srn_runners[length] = runner
```

Output (excerpt):

```
====> Training SRN with data of length 10.
[Train] epoch: 494/500, step: 18800/19000, loss: 0.01014
[Evaluate] dev score: 0.64000, dev loss: 1.73659
[Train] epoch: 497/500, step: 18900/19000, loss: 1.97520
[Evaluate] dev score: 0.35000, dev loss: 3.42333
[Evaluate] dev score: 0.52000, dev loss: 2.78493

====> Training SRN with data of length 15.
[Train] epoch: 494/500, step: 18800/19000, loss: 0.03588
[Evaluate] dev score: 0.39000, dev loss: 3.39435
[Train] epoch: 497/500, step: 18900/19000, loss: 0.03047
[Evaluate] dev score: 0.39000, dev loss: 3.43460
[Evaluate] dev score: 0.38000, dev loss: 3.45770

====> Training SRN with data of length 20.
[Train] epoch: 494/500, step: 18800/19000, loss: 0.40579
[Evaluate] dev score: 0.19000, dev loss: 3.77184
[Train] epoch: 497/500, step: 18900/19000, loss: 0.75745
[Evaluate] dev score: 0.19000, dev loss: 3.87705
[Evaluate] dev score: 0.19000, dev loss: 3.82624

====> Training SRN with data of length 25.
[Train] epoch: 494/500, step: 18800/19000, loss: 1.55447
[Evaluate] dev score: 0.12000, dev loss: 3.99913
[Train] epoch: 497/500, step: 18900/19000, loss: 1.02947
[Evaluate] dev score: 0.16000, dev loss: 3.89307
[Evaluate] dev score: 0.14000, dev loss: 4.03331

====> Training SRN with data of length 30.
[Train] epoch: 494/500, step: 18800/19000, loss: 0.59770
[Evaluate] dev score: 0.14000, dev loss: 4.28721
[Train] epoch: 497/500, step: 18900/19000, loss: 0.84703
[Evaluate] dev score: 0.16000, dev loss: 4.30710
[Evaluate] dev score: 0.10000, dev loss: 4.31215

====> Training SRN with data of length 35.
[Train] epoch: 494/500, step: 18800/19000, loss: 1.24212
[Evaluate] dev score: 0.12000, dev loss: 4.29184
[Train] epoch: 497/500, step: 18900/19000, loss: 0.76860
[Evaluate] dev score: 0.11000, dev loss: 4.35739
[Evaluate] dev score: 0.13000, dev loss: 4.19013
```

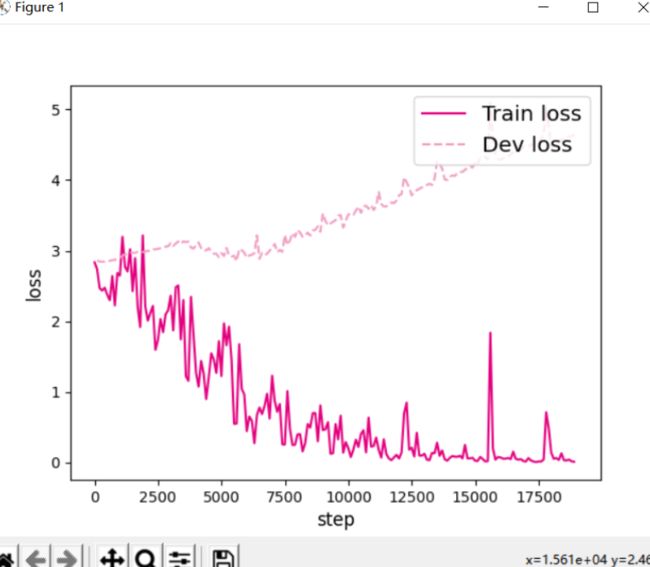

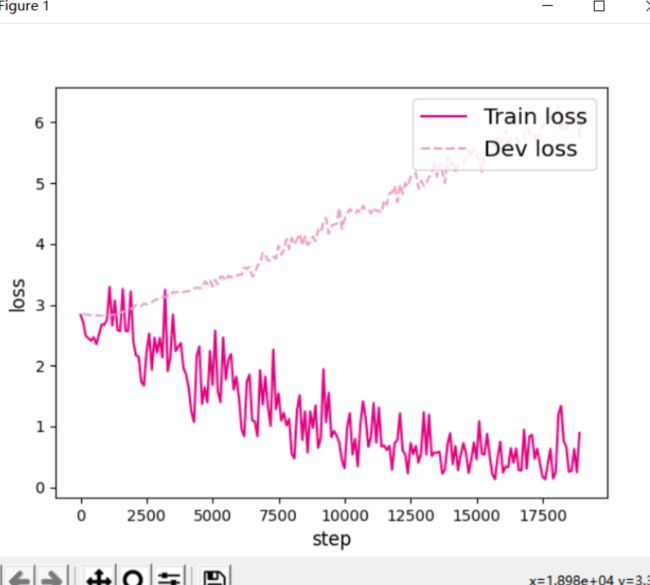

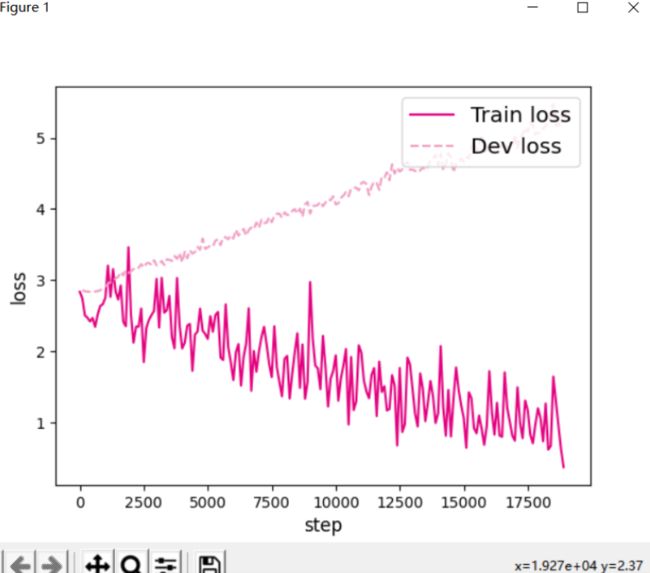

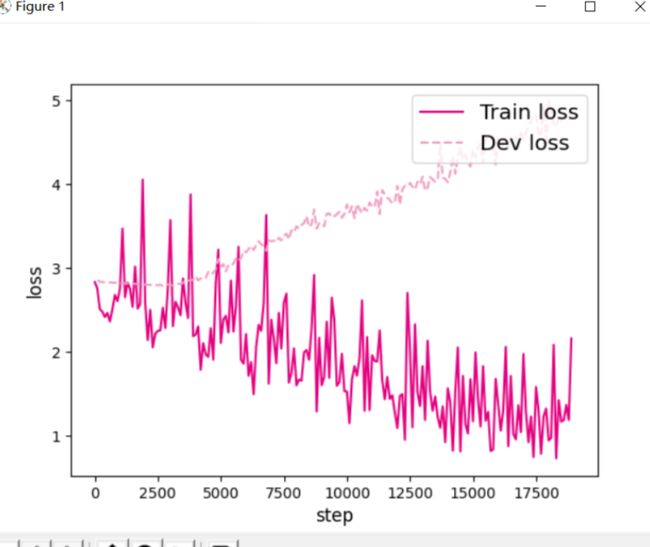

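6.1.3.3 Loss Curves

We define a plot_training_loss function that plots, for each sequence length, the loss curves of the digit-prediction model on the training and validation sets during training. The code is as follows: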

```python
import os

import matplotlib.pyplot as plt


def plot_training_loss(runner, fig_name, sample_step):
    plt.figure()
    # Keep only every sample_step-th recorded training loss to make the curve readable
    train_items = runner.train_step_losses[::sample_step]
    train_steps = [x[0] for x in train_items]
    train_losses = [x[1] for x in train_items]
    plt.plot(train_steps, train_losses, color='#e4007f', label="Train loss")
    dev_steps = [x[0] for x in runner.dev_losses]
    dev_losses = [x[1] for x in runner.dev_losses]
    plt.plot(dev_steps, dev_losses, color='#f19ec2', linestyle='--', label="Dev loss")
    # Axes and legend
    plt.ylabel("loss", fontsize='large')
    plt.xlabel("step", fontsize='large')
    plt.legend(loc='upper right', fontsize='x-large')
    plt.savefig(fig_name)
    plt.show()


# Plot the losses recorded during training
os.makedirs("./images", exist_ok=True)
for length in lengths:
    runner = srn_runners[length]
    fig_name = f"./images/6.6_{length}.pdf"
    plot_training_loss(runner, fig_name, sample_step=100)
```

Output:

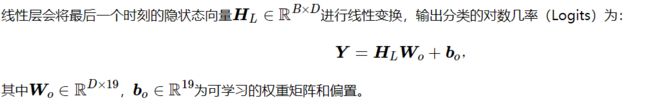

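Figure 6.6 shows the loss curves on the six datasets, whose sequence lengths are 10, 15, 20, 25, 30, and 35. As the sequence length increases, the training loss keeps falling toward 0, but the validation loss trends higher overall. This indicates that the SRN's ability to retain long-range dependencies weakens as sequences grow longer. The model increasingly fits the training set without learning anything that generalizes.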

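6.1.4 Model Evaluation

For evaluation, we load the best model for each length and evaluate its prediction accuracy on the test set. We also record the best validation score each model reached during training. The code is as follows: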

```python
import os

import matplotlib.pyplot as plt

srn_dev_scores = []
srn_test_scores = []
for length in lengths:
    print(f"Evaluate SRN with data length {length}.")
    runner = srn_runners[length]
    # Load the best model saved during training
    model_path = os.path.join(save_dir, f"best_srn_model_{length}.pdparams")
    runner.load_model(model_path)
    # Load the data of length `length`
    data_path = f"./datasets/{length}"
    train_examples, dev_examples, test_examples = load_data(data_path)
    test_set = DigitSumDataset(test_examples)
    test_loader = DataLoader(test_set, batch_size=batch_size)
    # Evaluate on the test set to get the prediction accuracy
    score, _ = runner.evaluate(test_loader)
    srn_test_scores.append(score)
    srn_dev_scores.append(max(runner.dev_scores))

for length, dev_score, test_score in zip(lengths, srn_dev_scores, srn_test_scores):
    print(f"[SRN] length:{length}, dev_score: {dev_score}, test_score: {test_score: .5f}")

plt.plot(lengths, srn_dev_scores, '-o', color='#e4007f', label="Dev Accuracy")
plt.plot(lengths, srn_test_scores, '-o', color='#f19ec2', label="Test Accuracy")
# Axes and legend
plt.ylabel("accuracy", fontsize='large')
plt.xlabel("sequence length", fontsize='large')
plt.legend(loc='upper right', fontsize='x-large')
fig_name = "./images/6.7.pdf"
os.makedirs(os.path.dirname(fig_name), exist_ok=True)
plt.savefig(fig_name)
plt.show()
```

Output:

```
Evaluate SRN with data length 10.
Evaluate SRN with data length 15.
Evaluate SRN with data length 20.
Evaluate SRN with data length 25.
Evaluate SRN with data length 30.
Evaluate SRN with data length 35.
[SRN] length:10, dev_score: 0.68, test_score: 0.60000
[SRN] length:15, dev_score: 0.43, test_score: 0.47000
[SRN] length:20, dev_score: 0.25, test_score: 0.14000
[SRN] length:25, dev_score: 0.2, test_score: 0.07000
[SRN] length:30, dev_score: 0.18, test_score: 0.09000
[SRN] length:35, dev_score: 0.17, test_score: 0.06000
```
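These scores are classification accuracy: the fraction of test sequences whose predicted class (the argmax of the 19 logits) equals the true sum. The Accuracy class from Section 4.5.4 is not reproduced here. Instead, `accuracy_from_logits` below is a hypothetical stand-in that computes the same quantity for one batch, and it is run on hand-made logits.

The sketch also gives a useful reference point. A k=1 test set contains each of the 100 leading-digit pairs exactly once, and the most frequent sum, 9, covers 10 of them. Always predicting 9 would therefore score 10%, so the test scores at lengths 25 to 35 are no better than this trivial baseline.

```python
import torch

def accuracy_from_logits(logits, labels):
    """Fraction of samples whose argmax class equals the label."""
    preds = logits.argmax(dim=-1)
    return (preds == labels).float().mean().item()

logits = torch.tensor([[0.1, 2.0, -1.0],
                       [1.5, 0.2, 0.3],
                       [0.0, 0.1, 0.9]])
labels = torch.tensor([1, 2, 2])
print(accuracy_from_logits(logits, labels))  # about 0.667 (2 of 3 correct)

# Constant-prediction baseline on the 100 leading-digit pairs of a k=1 test set
sums = torch.tensor([n1 + n2 for n1 in range(10) for n2 in range(10)])
print((sums == 9).float().mean().item())  # about 0.1
```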

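Summary

Reflections

This experiment showed me how the long-range dependency problem appears in practice and deepened my understanding of RNNs.

A recurrent neural network (RNN) is a class of neural networks for processing sequential data.

RNN: a recurrent cell extracts features, which are then passed to a subsequent network (such as a fully connected Dense layer) for prediction and other operations. The RNN uses the recurrent cell to extract information along the time dimension, and the cell's parameters are shared across time steps.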
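Parameter sharing across time is why the same SRN can process sequences of length 10 or 35 without changing its size. The sketch below uses the SRN's parameter shapes (W: input_size × hidden_size, U: hidden_size × hidden_size, b: 1 × hidden_size) and the hyperparameters from the training code. `count_srn_params` and `run` are illustrative helpers with random weights, not part of the original code.

```python
import torch
import torch.nn as nn

def count_srn_params(input_size, hidden_size):
    """Parameters of one SRN layer (W, U, b); independent of the sequence length."""
    return input_size * hidden_size + hidden_size * hidden_size + hidden_size

input_size, hidden_size = 32, 32
W = torch.randn(input_size, hidden_size) * 0.1
U = torch.randn(hidden_size, hidden_size) * 0.1
b = torch.zeros(1, hidden_size)

def run(x):
    h = torch.zeros(x.shape[0], hidden_size)
    for t in range(x.shape[1]):  # the same W, U, b are reused at every time step
        h = torch.tanh(x[:, t, :] @ W + h @ U + b)
    return h

print(count_srn_params(input_size, hidden_size))  # 2080
print(run(torch.randn(8, 10, 32)).shape, run(torch.randn(8, 35, 32)).shape)
# torch.Size([8, 32]) torch.Size([8, 32])
# nn.RNN keeps two bias vectors (b_ih and b_hh), so it has 32 more parameters
print(sum(p.numel() for p in nn.RNN(32, 32).parameters()))  # 2112
```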
