YOLOv5 White Paper - Week Y5: Walkthrough of yolo.py

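Contents

- I. Background and Development Environment
  - Development Environment
- II. Code Walkthrough
  - 0. Imports and Basic Configuration
  - 1. The parse_model Function
  - 2. The Detect Class
  - 3. The Model Class
  - 4. References
- III. Modifying the Model
- IV. Running and Printing the Model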

I. Background and Development Environment

Week Y5: Walkthrough of yolo.py

- Language: Python 3, PyTorch

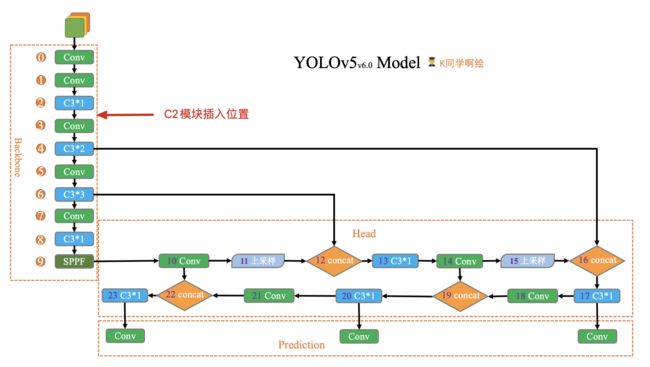

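- This week's task: modify the C3 module of the yolov5s network as shown in the figure above to create a C2 module, insert the C2 module between layers 2 and 3, and get YOLOv5 running.

- Hints:

  - Hint 1: you need to modify ./models/common.py, ./models/yolo.py, and ./models/yolov5s.yaml.
  - Hint 2: C2 and C3 are very similar modules. To insert C2 into the model, find every place where C3 is handled and add C2 next to it.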

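File location:

./models/yolo.py

This file builds the YOLOv5 network, so if you want to improve YOLOv5 it is one of the files you will have to modify. It looks long, but most of it is supporting code: the parts worth understanding well are the parse_model function and the Detect and Model classes.

Note: because there are many YOLOv5 versions, the details of this file may differ from the copy you have. That is normal; focus on the overall structure.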

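Development Environment

- OS: Windows 10

- Language: Python 3.8.12

- IDE: none (commands run directly in cmd.exe)

- Deep learning framework: PyTorch 1.8.1+cu111

- GPU: NVIDIA GeForce GTX 1660 Ti (6 GB)

- CUDA version: Release 10.2, V10.2.89 (check with nvcc -V or nvcc --version in cmd)

- YOLOv5 source: https://github.com/ultralytics/yolov5

- Data: fruit detection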

II. Code Walkthrough

0. Imports and Basic Configuration

```python
import argparse  # command-line argument parsing
import contextlib
import os
import platform
import sys  # access to the Python interpreter and its environment (sys.path, etc.)
from copy import deepcopy  # deep copies of objects
from pathlib import Path  # object-oriented paths; turns str paths into easy-to-manipulate Path objects

FILE = Path(__file__).resolve()
ROOT = FILE.parents[1]  # YOLOv5 root directory
if str(ROOT) not in sys.path:
    sys.path.append(str(ROOT))  # add ROOT to PATH
if platform.system() != 'Windows':
    ROOT = Path(os.path.relpath(ROOT, Path.cwd()))  # relative

from models.common import *
from models.experimental import *
from utils.autoanchor import check_anchor_order
from utils.general import LOGGER, check_version, check_yaml, make_divisible, print_args
from utils.plots import feature_visualization
from utils.torch_utils import (fuse_conv_and_bn, initialize_weights, model_info, profile, scale_img, select_device,
                               time_sync)

# Import thop (optional), used to compute FLOPs
try:
    import thop  # for FLOPs computation
except ImportError:
    thop = None
```
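The FILE/ROOT lines above make the repository root importable regardless of the directory the script is launched from. A minimal illustration using pure paths, so nothing touches the real file system; the directory /home/user/yolov5 is a made-up example location:

```python
import posixpath
from pathlib import PurePosixPath

# Made-up location of yolo.py inside a cloned repository
file = PurePosixPath('/home/user/yolov5/models/yolo.py')

# parents[0] is the folder holding the file (models/), parents[1] is one level up: the repo root
root = file.parents[1]
print(root)  # /home/user/yolov5

# On non-Windows systems yolo.py then rewrites ROOT relative to the current working directory
print(posixpath.relpath(str(root), '/home/user'))  # yolov5
```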

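1. The parse_model Function

This function assembles the model's modules into the complete network. If you want to change the model architecture later, this function has to be modified accordingly.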
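One core job of parse_model is scaling each yaml row by depth_multiple (gd) and width_multiple (gw). The sketch below reimplements only that arithmetic in plain Python, assuming the upstream rules `n = max(round(n * gd), 1) if n > 1 else n` and `c2 = make_divisible(c2 * gw, 8)`; `scale_row` is a hypothetical helper name. With yolov5s's gd=0.33 and gw=0.50 it reproduces the repeat counts and channel numbers printed in the training log in section IV.

```python
import math


def make_divisible(x, divisor):
    # Round x up to the nearest multiple of divisor (same rule as utils.general.make_divisible)
    return math.ceil(x / divisor) * divisor


def scale_row(n, c2, gd=0.33, gw=0.50):
    """Hypothetical helper: scale one yaml row's repeat count n and output channels c2."""
    n = max(round(n * gd), 1) if n > 1 else n  # depth scaling
    c2 = make_divisible(c2 * gw, 8)  # width scaling, kept a multiple of 8
    return n, c2


# yolov5s backbone C3 rows: [-1, 3, C3, [128]], [-1, 6, C3, [256]], [-1, 9, C3, [512]], [-1, 3, C3, [1024]]
for n, c2 in [(3, 128), (6, 256), (9, 512), (3, 1024)]:
    print((n, c2), '->', scale_row(n, c2))
```

This is why yolov5s's "9 x C3(512)" row appears as a C3 with 3 bottlenecks and 256 output channels.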

```python
def parse_model(d, ch):  # model_dict, input_channels(3)
    # Parse a YOLOv5 model.yaml dictionary
    ''' Used by DetectionModel (defined below).
    Parses the model config (a dict) and builds the network.
    For each layer the function essentially:
        updates the layer's args and computes c2 (the layer's output channels)
        -> builds the layer from those args
        -> appends it to layers and updates save
    :params d: model dict with 7 keys (the 6 top-level entries of yolov5s.yaml + ch)
    :params ch: records every layer's output channels; starts as ch=[3], and that initial entry is dropped later
    :return nn.Sequential(*layers): the layers of the network
    :return sorted(save): every non -1 "from" value of all layers, sorted, e.g. [4, 6, 10, 14, 17, 20, 23]
    '''
    LOGGER.info(f"\n{'':>3}{'from':>18}{'n':>3}{'params':>10}  {'module':<40}{'arguments':<30}")
    # Read anchors and the scaling parameters (nc, depth_multiple, width_multiple) from d
    anchors, nc, gd, gw, act = d['anchors'], d['nc'], d['depth_multiple'], d['width_multiple'], d.get('activation')
    if act:
        Conv.default_act = eval(act)  # redefine default activation, i.e. Conv.default_act = nn.SiLU()
        LOGGER.info(f"{colorstr('activation:')} {act}")  # print
    # na: number of anchors per prediction head (3)
    na = (len(anchors[0]) // 2) if isinstance(anchors, list) else anchors  # number of anchors
    # no: output channels of each prediction head = anchors * (classes + 5), e.g. 75 for VOC
    no = na * (nc + 5)  # number of outputs = anchors * (classes + 5)

    ''' Start building the network
    layers: the module of every layer
    save: the non -1 "from" values of all layers, i.e. indices of layers whose outputs are reused later
    c2: output channels of the current layer
    '''
    layers, save, c2 = [], [], ch[-1]  # layers, savelist, ch out
    # from: which layer(s) feed the current layer
    # number: how many times the module is repeated, before depth_multiple scaling
    # module: the module type of the current layer
    # args: the module's arguments, before width_multiple scaling
    # Iterate over every layer of backbone and head
    for i, (f, n, m, args) in enumerate(d['backbone'] + d['head']):  # from, number, module, args
        # Get the actual class of the current layer from its name, e.g. m = Focus
```

2. The Detect Class

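The Detect module builds the Detect layer: it passes each input feature map through a 1×1 convolution, reshapes the result and, at inference, decodes it into boxes, ready for computing the loss (training) or for NMS (inference).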
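The shapes quoted in the docstring of Detect.forward follow from simple arithmetic. A minimal sketch, assuming a 640×640 input, the strides 8/16/32 of P3-P5, three anchors per level and VOC's 20 classes; `detect_shapes` is a hypothetical helper:

```python
def detect_shapes(img_size=640, strides=(8, 16, 32), na=3, nc=20, bs=1):
    """Hypothetical helper: per-level training shapes and the concatenated inference shape of Detect."""
    no = nc + 5  # xywh + objectness + class scores per anchor
    train_shapes = []
    total = 0
    for s in strides:
        ny = nx = img_size // s  # grid size of this feature map
        train_shapes.append((bs, na, ny, nx, no))  # shape after view + permute in Detect.forward
        total += na * ny * nx  # predictions contributed by this level
    return train_shapes, (bs, total, no)


train_shapes, infer_shape = detect_shapes()
print(train_shapes)  # [(1, 3, 80, 80, 25), (1, 3, 40, 40, 25), (1, 3, 20, 20, 25)]
print(infer_shape)   # (1, 25200, 25)
```

The 25200 rows are exactly 19200 + 4800 + 1200, one per anchor per grid cell.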

```python
class Detect(nn.Module):
    # YOLOv5 Detect head for detection models
    ''' The Detect module builds the Detect layer.
    It maps each input feature map, via a 1x1 convolution and the decoding formulas, to the shape needed for loss computation or NMS.
    '''
    stride = None  # strides computed during build
    dynamic = False  # force grid reconstruction
    export = False  # export mode

    def __init__(self, nc=80, anchors=(), ch=(), inplace=True):  # detection layer
        ''' Detection layer, equivalent to the YOLO layer in YOLOv3
        :params nc: number of classes
        :params anchors: sizes of all anchors on the 3 feature maps (P3/P4/P5)
        :params ch: [128, 256, 512], channels of the 3 input feature maps
        '''
        super().__init__()
        self.nc = nc  # number of classes, VOC: 20
        self.no = nc + 5  # number of outputs per anchor, VOC: 5 (xywh + conf) + 20 (classes) = 25
        self.nl = len(anchors)  # number of detection layers = 3
        self.na = len(anchors[0]) // 2  # number of anchors per feature map = 3
        self.grid = [torch.empty(0) for _ in range(self.nl)]  # init grid: a list of 3 empty tensors
        self.anchor_grid = [torch.empty(0) for _ in range(self.nl)]  # init anchor grid
        ''' A model stores two kinds of tensors:
        parameters, which the optimizer updates through backpropagation, and buffers, which it does not.
        optimizer.step() only updates nn.Parameter objects; a buffer is saved in state_dict and moved by .to()/.cuda(),
        but changes only through explicit code, e.g. m.anchors /= m.stride.view(-1, 1, 1) in DetectionModel.__init__.
        '''
        self.register_buffer('anchors', torch.tensor(anchors).float().view(self.nl, -1, 2))  # shape(nl,na,2)
        # output conv: one 1x1 conv per output feature map
        self.m = nn.ModuleList(nn.Conv2d(x, self.no * self.na, 1) for x in ch)  # output conv
        # Usually True; in-place ops are turned off only for AWS Inferentia compatibility
        self.inplace = inplace  # use inplace ops (e.g. slice assignment)

    def forward(self, x):
        '''
        :return train: a list of three tensors, each [bs, anchor_num, grid_h, grid_w, xywh+c+classes],
                       i.e. [1,3,80,80,25], [1,3,40,40,25], [1,3,20,20,25]
                inference: a tuple whose element 0 is [1, 19200+4800+1200, 25] = [bs, anchor_num*grid_h*grid_w, xywh+c+classes]
                           and whose element 1 is the training-style list (only element 0 when export=True)
        '''
        z = []  # inference output
        for i in range(self.nl):  # process each of the 3 feature maps
            x[i] = self.m[i](x[i])  # conv: x[i] [bs,128/256/512,80,80] -> [bs,75,80,80]
            bs, _, ny, nx = x[i].shape  # x(bs,255,20,20) to x(bs,3,20,20,85)
            # [bs,75,80,80] -> [bs,3,25,80,80] -> [bs,3,80,80,25]
            x[i] = x[i].view(bs, self.na, self.no, ny, nx).permute(0, 1, 3, 4, 2).contiguous()

            ''' Build the grid
            At inference the raw outputs are offsets relative to each grid cell, not final coordinates;
            the cell position must be added to get the final box coordinates that are sent to NMS.
            The grid stores every cell's coordinates for this purpose.
            '''
            if not self.training:  # inference
                if self.dynamic or self.grid[i].shape[2:4] != x[i].shape[2:4]:
                    self.grid[i], self.anchor_grid[i] = self._make_grid(nx, ny, i)

                if isinstance(self, Segment):  # (boxes + masks)
                    xy, wh, conf, mask = x[i].split((2, 2, self.nc + 1, self.no - self.nc - 5), 4)
                    xy = (xy.sigmoid() * 2 + self.grid[i]) * self.stride[i]  # xy
                    wh = (wh.sigmoid() * 2) ** 2 * self.anchor_grid[i]  # wh
                    y = torch.cat((xy, wh, conf.sigmoid(), mask), 4)
                else:  # Detect (boxes only)
                    xy, wh, conf = x[i].sigmoid().split((2, 2, self.nc + 1), 4)
                    xy = (xy * 2 + self.grid[i]) * self.stride[i]  # xy
                    wh = (wh * 2) ** 2 * self.anchor_grid[i]  # wh
                    y = torch.cat((xy, wh, conf), 4)
                # z collects 3 tensors: [1,19200,25], [1,4800,25], [1,1200,25]
                z.append(y.view(bs, self.na * nx * ny, self.no))

        return x if self.training else (torch.cat(z, 1),) if self.export else (torch.cat(z, 1), x)

    def _make_grid(self, nx=20, ny=20, i=0, torch_1_10=check_version(torch.__version__, '1.10.0')):
        ''' Build the grid '''
        d = self.anchors[i].device
        t = self.anchors[i].dtype
        shape = 1, self.na, ny, nx, 2  # grid shape
        y, x = torch.arange(ny, device=d, dtype=t), torch.arange(nx, device=d, dtype=t)
        yv, xv = torch.meshgrid(y, x, indexing='ij') if torch_1_10 else torch.meshgrid(y, x)  # torch>=0.7 compatibility
        grid = torch.stack((xv, yv), 2).expand(shape) - 0.5  # add grid offset, i.e. y = 2.0 * x - 0.5
        anchor_grid = (self.anchors[i] * self.stride[i]).view((1, self.na, 1, 1, 2)).expand(shape)
        return grid, anchor_grid
```
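The decoding in the Detect branch of forward can be checked by hand. A minimal pure-Python sketch for one anchor in one cell, mirroring that branch: _make_grid stores the cell index minus 0.5, and anchor_grid holds the anchor in pixels (anchors * stride). `decode_box` and the sample numbers are illustrative only.

```python
import math


def sigmoid(v):
    return 1.0 / (1.0 + math.exp(-v))


def decode_box(tx, ty, tw, th, cx, cy, stride, anchor_w, anchor_h):
    """Hypothetical helper mirroring Detect's xy/wh formulas for one anchor in grid cell (cx, cy)."""
    gx, gy = cx - 0.5, cy - 0.5  # grid value as built by _make_grid (cell index - 0.5)
    x = (sigmoid(tx) * 2 + gx) * stride  # center x in pixels; offset range is (-0.5, 1.5) cells
    y = (sigmoid(ty) * 2 + gy) * stride  # center y in pixels
    w = (sigmoid(tw) * 2) ** 2 * anchor_w  # width in pixels, bounded by 4 * anchor_w
    h = (sigmoid(th) * 2) ** 2 * anchor_h  # height in pixels, bounded by 4 * anchor_h
    return x, y, w, h


# Zero logits land on the cell center with exactly the anchor size
print(decode_box(0, 0, 0, 0, cx=10, cy=5, stride=8, anchor_w=10, anchor_h=13))  # (84.0, 44.0, 10.0, 13.0)
```

Because w and h are bounded by four times the anchor, the sigmoid-based form cannot blow up the way an unbounded exp() would.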

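3. The Model Class

This part builds the whole model. The YOLOv5 authors made it very feature-complete: besides building the model, it adds feature visualization, model-information printing, test-time augmentation (TTA), Conv + BN fusion to speed up inference, attaching NMS to the model, and AutoShape (a wrapper that bundles preprocessing, inference, and NMS). Read the rest if you are interested; otherwise the __init__ and forward functions are enough.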
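The heart of BaseModel._forward_once is routing each layer's input by its "from" index and keeping only the outputs listed in save. The toy sketch below replays that logic with plain functions standing in for layers; the `Layer` tuple, the toy layers and the sum standing in for Concat are all hypothetical, and only the routing rule mirrors the code.

```python
from typing import Callable, List, NamedTuple, Union


class Layer(NamedTuple):
    f: Union[int, List[int]]  # "from": -1 = previous layer, otherwise absolute layer index(es)
    fn: Callable  # stand-in for the nn.Module
    i: int  # layer index


def forward_once(x, layers, save):
    y = []  # per-layer outputs; only indices in save are kept, others stored as None
    for m in layers:
        if m.f != -1:  # input does not (only) come from the previous layer
            x = y[m.f] if isinstance(m.f, int) else [x if j == -1 else y[j] for j in m.f]
        x = m.fn(x)
        y.append(x if m.i in save else None)
    return x


layers = [
    Layer(-1, lambda v: v + 1, 0),
    Layer(-1, lambda v: v * 10, 1),
    Layer([-1, 0], lambda vs: sum(vs), 2),  # "Concat" of the previous output and layer 0's output
]
# Like parse_model: collect every non -1 "from" value
save = sorted({j for m in layers for j in ([m.f] if isinstance(m.f, int) else m.f) if j != -1})
print(save, forward_once(1, layers, save))  # [0] 22
```

Storing None for unneeded layers keeps y indexable by absolute layer number while freeing memory for outputs nobody reads again.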

```python
class BaseModel(nn.Module):
    # YOLOv5 base model
    def forward(self, x, profile=False, visualize=False):
        return self._forward_once(x, profile, visualize)  # single-scale inference, train

    def _forward_once(self, x, profile=False, visualize=False):
        '''
        :params x: input image batch
        :params profile: if True, profile each layer (time, FLOPs)
        :params visualize: if True, save feature-map visualizations
        :return train: a list of three tensors, each [bs, anchor_num, grid_h, grid_w, xywh+c+classes]
                inference: element 0 is [1, 19200+4800+1200, 25] = [bs, anchor_num*grid_h*grid_w, xywh+c+classes]
        '''
        # y: outputs of the layers whose index is in self.save, needed later by Concat and Detect
        # dt: per-layer timings, used when profiling
        y, dt = [], []  # outputs
        for m in self.model:
            # run each layer; m.i = index, m.f = from, m.type = class name, m.np = number of parameters
            if m.f != -1:  # if not from previous layer (m.f = source layer(s) of the input; -1 means the previous layer)
                # In stock yolov5s this runs for the 4 Concat layers and the Detect layer
                # Concat: e.g. m.f = [-1, 6]: x holds the previous layer's output and layer 6's output; m(x) concatenates them
                # Detect: e.g. m.f = [17, 20, 23]: x holds the outputs of layers 17, 20 and 23; m(x) runs Detect.forward
                x = y[m.f] if isinstance(m.f, int) else [x if j == -1 else y[j] for j in m.f]  # from earlier layers
            # log profiling info (FLOPs, time, ...)
            if profile:
                self._profile_one_layer(m, x, dt)
            x = m(x)  # run
            # keep the output only if a later layer needs it (index in self.save); otherwise store None
            y.append(x if m.i in self.save else None)  # save output
            # feature visualization; change this to visualize the layers you want
            if visualize:
                feature_visualization(x, m.type, m.i, save_dir=visualize)
        return x

    def _profile_one_layer(self, m, x, dt):
        c = m == self.model[-1]  # is final layer, copy input as inplace fix
        o = thop.profile(m, inputs=(x.copy() if c else x,), verbose=False)[0] / 1E9 * 2 if thop else 0  # FLOPs
        t = time_sync()
        for _ in range(10):
            m(x.copy() if c else x)
        dt.append((time_sync() - t) * 100)
        if m == self.model[0]:
            LOGGER.info(f"{'time (ms)':>10s} {'GFLOPs':>10s} {'params':>10s}  module")
        LOGGER.info(f'{dt[-1]:10.2f} {o:10.2f} {m.np:10.0f}  {m.type}')
        if c:
            LOGGER.info(f"{sum(dt):10.2f} {'-':>10s} {'-':>10s}  Total")

    def fuse(self):  # fuse model Conv2d() + BatchNorm2d() layers
        ''' Used in detect.py and val.py
        fuse model Conv2d() + BatchNorm2d() layers
        Calls fuse_conv_and_bn from torch_utils.py and forward_fuse from common.py
        '''
        LOGGER.info('Fusing layers... ')  # log
        for m in self.model.modules():  # iterate over all modules
            # For a Conv/DWConv that still has a BN layer, fold the BN into the conv to speed up inference
            if isinstance(m, (Conv, DWConv)) and hasattr(m, 'bn'):
                m.conv = fuse_conv_and_bn(m.conv, m.bn)  # update conv
                delattr(m, 'bn')  # remove batchnorm
                m.forward = m.forward_fuse  # update forward (backward is irrelevant: fusion is only used for inference)
        self.info()  # print model info after Conv+BN fusion
        return self

    def info(self, verbose=False, img_size=640):  # print model information
        ''' Used in DetectionModel.__init__ and in fuse()
        Prints model information via model_info in torch_utils.py
        '''
        model_info(self, verbose, img_size)

    def _apply(self, fn):
        # Apply to(), cpu(), cuda(), half() to model tensors that are not parameters or registered buffers
        self = super()._apply(fn)
        m = self.model[-1]  # Detect()
        if isinstance(m, (Detect, Segment)):
            m.stride = fn(m.stride)
            m.grid = list(map(fn, m.grid))
            if isinstance(m.anchor_grid, list):
                m.anchor_grid = list(map(fn, m.anchor_grid))
        return self


class DetectionModel(BaseModel):
    # YOLOv5 detection model
    def __init__(self, cfg='yolov5s.yaml', ch=3, nc=None, anchors=None):  # model, input channels, number of classes
        '''
        :params cfg: model config file
        :params ch: input image channels, usually 3 (RGB)
        :params nc: number of classes in the dataset
        :params anchors: usually None
        '''
        super().__init__()
        if isinstance(cfg, dict):
            self.yaml = cfg  # model dict
        else:  # is *.yaml (the usual case)
            import yaml  # for torch hub
            self.yaml_file = Path(cfg).name  # cfg file name, e.g. 'yolov5s.yaml'
            # encoding='ascii', errors='ignore' silently drops non-ASCII characters (e.g. Chinese comments);
            # use encoding='utf-8' if you need to keep them
            with open(cfg, encoding='ascii', errors='ignore') as f:
                self.yaml = yaml.safe_load(f)  # model dict

        # Define model
        ch = self.yaml['ch'] = self.yaml.get('ch', ch)  # input channels, ch=3
        # Override the class count when nc differs from the yaml (e.g. a 4-class dataset with yolov5s.yaml's nc=80)
        if nc and nc != self.yaml['nc']:
            LOGGER.info(f"Overriding model.yaml nc={self.yaml['nc']} with nc={nc}")
            self.yaml['nc'] = nc  # override yaml value
        # Override anchors; usually skipped because anchors is normally None
        if anchors:
            LOGGER.info(f'Overriding model.yaml anchors with anchors={anchors}')
            self.yaml['anchors'] = round(anchors)  # override yaml value
        # Build the network
        # self.model: the whole initialized network (including the Detect layer)
        # self.save: sorted non -1 "from" values of all layers, e.g. [4, 6, 10, 14, 17, 20, 23]
        self.model, self.save = parse_model(deepcopy(self.yaml), ch=[ch])  # model, savelist
        # default class names ['0', '1', '2', ..., '19']
        self.names = [str(i) for i in range(self.yaml['nc'])]  # default names
        # self.inplace defaults to True; False only for AWS Inferentia compatibility
        # https://github.com/ultralytics/yolov5/pull/2953
        self.inplace = self.yaml.get('inplace', True)

        # Build strides, anchors
        # Compute Detect's strides (downsampling ratio relative to the input) and express the anchors on each output feature map
        m = self.model[-1]  # Detect()
        if isinstance(m, (Detect, Segment)):
            s = 256  # 2x min stride
            m.inplace = self.inplace
            forward = lambda x: self.forward(x)[0] if isinstance(m, Segment) else self.forward(x)
            m.stride = torch.tensor([s / x.shape[-2] for x in forward(torch.zeros(1, ch, s, s))])  # forward
            # Check that the anchor order matches the stride order
            check_anchor_order(m)
            # Convert anchors to grid units of each feature map, e.g. [10, 13] / 8 -> [1.25, 1.625]
            m.anchors /= m.stride.view(-1, 1, 1)
            self.stride = m.stride
            self._initialize_biases()  # only run once

        # Init weights, biases
        initialize_weights(self)  # initialize weights via initialize_weights in torch_utils.py
        self.info()  # print model information
        LOGGER.info('')

    def forward(self, x, augment=False, profile=False, visualize=False):
        # Test-time augmentation (TTA)
        if augment:
            return self._forward_augment(x)  # augmented inference, None (up-down / left-right flips)
        # default: ordinary forward pass
        return self._forward_once(x, profile, visualize)  # single-scale inference, train

    def _forward_augment(self, x):
        ''' TTA: Test Time Augmentation '''
        img_size = x.shape[-2:]  # height, width
        s = [1, 0.83, 0.67]  # scales
        f = [None, 3, None]  # flips (2-ud, 3-lr)
        y = []  # outputs
        for si, fi in zip(s, f):
            # scale_img resizes the image
            xi = scale_img(x.flip(fi) if fi else x, si, gs=int(self.stride.max()))
            yi = self._forward_once(xi)[0]  # forward
            # cv2.imwrite(f'img_{si}.jpg', 255 * xi[0].cpu().numpy().transpose((1, 2, 0))[:, :, ::-1])  # save
            # _descale_pred maps the predictions back to the original image size
            yi = self._descale_pred(yi, fi, si, img_size)
            y.append(yi)
        y = self._clip_augmented(y)  # clip augmented tails
        return torch.cat(y, 1), None  # augmented inference, train

    def _descale_pred(self, p, flips, scale, img_size):
        # de-scale predictions following augmented inference (inverse operation)
        ''' Used in _forward_augment (TTA)
        Maps predictions back to the original image size
        :params p: predictions
        :params flips: flip flag (2-ud, 3-lr)
        :params scale: image scale factor
        :params img_size: original image size
        '''
        # Both branches compute the same result; the second avoids in-place slice assignment (see inplace in Detect)
        if self.inplace:  # default True (False only for AWS Inferentia)
            p[..., :4] /= scale  # de-scale
            if flips == 2:
                p[..., 1] = img_size[0] - p[..., 1]  # de-flip ud
            elif flips == 3:
                p[..., 0] = img_size[1] - p[..., 0]  # de-flip lr
        else:
            x, y, wh = p[..., 0:1] / scale, p[..., 1:2] / scale, p[..., 2:4] / scale  # de-scale
            if flips == 2:
                y = img_size[0] - y  # de-flip ud
            elif flips == 3:
                x = img_size[1] - x  # de-flip lr
            p = torch.cat((x, y, wh, p[..., 4:]), -1)
        return p

    def _clip_augmented(self, y):
        # Clip YOLOv5 augmented inference tails
        nl = self.model[-1].nl  # number of detection layers (P3-P5)
        g = sum(4 ** x for x in range(nl))  # grid points
        e = 1  # exclude layer count
        i = (y[0].shape[1] // g) * sum(4 ** x for x in range(e))  # indices
        y[0] = y[0][:, :-i]  # large
        i = (y[-1].shape[1] // g) * sum(4 ** (nl - 1 - x) for x in range(e))  # indices
        y[-1] = y[-1][:, i:]  # small
        return y

    def _initialize_biases(self, cf=None):  # initialize biases into Detect(), cf is class frequency
        ''' Used in __init__ above '''
        # https://arxiv.org/abs/1708.02002 section 3.3
        # cf = torch.bincount(torch.tensor(np.concatenate(dataset.labels, 0)[:, 0]).long(), minlength=nc) + 1.
        m = self.model[-1]  # Detect() module
        for mi, s in zip(m.m, m.stride):  # from
            b = mi.bias.view(m.na, -1)  # conv.bias(255) to (3,85)
            b.data[:, 4] += math.log(8 / (640 / s) ** 2)  # obj (8 objects per 640 image)
            b.data[:, 5:5 + m.nc] += math.log(0.6 / (m.nc - 0.99999)) if cf is None else torch.log(cf / cf.sum())  # cls
            mi.bias = torch.nn.Parameter(b.view(-1), requires_grad=True)


Model = DetectionModel  # retain YOLOv5 'Model' class for backwards compatibility
```
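fuse() works because, at inference, BatchNorm is a fixed per-channel affine map that can be folded into the preceding convolution: with scale = gamma / sqrt(var + eps), the fused weight is W * scale and the fused bias is beta + (b - mean) * scale. A minimal single-channel, pure-Python check of that identity, where a 1×1 convolution on one channel reduces to w * x + b; the numbers are arbitrary and the helper names are hypothetical (this is not the fuse_conv_and_bn implementation itself):

```python
import math


def conv(x, w, b):
    return w * x + b  # a 1x1, single-channel convolution is just an affine map


def batchnorm(x, gamma, beta, mean, var, eps=1e-5):
    return gamma * (x - mean) / math.sqrt(var + eps) + beta  # inference-mode BN with frozen statistics


def fuse(w, b, gamma, beta, mean, var, eps=1e-5):
    """Hypothetical helper: fold BN into the conv's weight and bias."""
    scale = gamma / math.sqrt(var + eps)
    return w * scale, beta + (b - mean) * scale


w, b = 0.8, 0.0  # YOLOv5's Conv uses Conv2d(bias=False), so the conv bias is 0 before fusion
gamma, beta, mean, var = 1.5, 0.2, 0.3, 2.0
wf, bf = fuse(w, b, gamma, beta, mean, var)
for x in (-1.0, 0.0, 2.5):
    # one fused conv gives the same output as conv followed by BN
    assert math.isclose(conv(x, wf, bf), batchnorm(conv(x, w, b), gamma, beta, mean, var))
print(wf, bf)
```

For a real Conv2d the same formulas are applied per output channel, which is why the fused model reports fewer layers and slightly fewer parameters.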

4. References

- YOLOv5 code walkthrough: common.py

- YoloV5 series (2): model parsing

- [YOLOV5-5.x source code walkthrough] common.py

III. Modifying the Model

./models/common.py: add a C2 module modeled on the C3 module

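```python
class C2(nn.Module):
    # CSP Bottleneck with 2 convolutions (C3 without cv3)
    def __init__(self, c1, c2, n=1, shortcut=True, g=1, e=0.5):  # ch_in, ch_out, number, shortcut, groups, expansion
        super().__init__()
        # With cv3 removed, the output is the concatenation of two c_-channel branches (2 * c_ channels),
        # so c_ must be c2 / 2 for the module to still output c2 channels. The ratio is therefore fixed
        # at 0.5 (the default e); e itself is unused and kept only so the signature matches C3.
        c_ = int(c2 * 0.5)  # hidden channels
        self.cv1 = Conv(c1, c_, 1, 1)
        self.cv2 = Conv(c1, c_, 1, 1)
        self.m = nn.Sequential(*(Bottleneck(c_, c_, shortcut, g, e=1.0) for _ in range(n)))

    def forward(self, x):
        # Same as C3.forward, minus the final cv3
        return torch.cat((self.m(self.cv1(x)), self.cv2(x)), 1)
```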

```python
class C3(nn.Module):
    # CSP Bottleneck with 3 convolutions
    def __init__(self, c1, c2, n=1, shortcut=True, g=1, e=0.5):  # ch_in, ch_out, number, shortcut, groups, expansion
        ''' Base class of C3TR; also called from parse_model in yolo.py
        :params c1: input channels of the whole C3
        :params c2: output channels of the whole C3
        :params n: number of sub-modules [Bottleneck/CrossConv]
        :params shortcut: bool, whether the sub-modules [Bottleneck/CrossConv] use a shortcut, default True
        :params g: groups of the sub-modules' 3x3 conv; g=1 is a normal conv, g>1 a grouped conv (depthwise when g equals the channel count)
        :params e: expansion ratio; e*c2 = number of kernels of every intermediate conv = their input/output channels
        '''
        super().__init__()
        c_ = int(c2 * e)  # hidden channels
        self.cv1 = Conv(c1, c_, 1, 1)
        self.cv2 = Conv(c1, c_, 1, 1)
        self.cv3 = Conv(2 * c_, c2, 1)  # optional act=FReLU(c2)
        self.m = nn.Sequential(*(Bottleneck(c_, c_, shortcut, g, e=1.0) for _ in range(n)))
        # experimental CrossConv
        # self.m = nn.Sequential(*[CrossConv(c_, c_, 3, 1, g, 1.0, shortcut) for _ in range(n)])

    def forward(self, x):
        return self.cv3(torch.cat((self.m(self.cv1(x)), self.cv2(x)), 1))
```
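Dropping cv3 changes the parameter count, which gives a quick way to confirm which module was actually built. A sketch that counts parameters by hand, assuming YOLOv5's Conv is a bias-free Conv2d followed by BatchNorm2d (whose weight and bias add 2 parameters per output channel) and its Bottleneck is a 1×1 Conv followed by a 3×3 Conv; the helper names are hypothetical. For C3 it reproduces the 18816 and 115712 listed for the C3 layers in the training log of section IV, while the C2 class above comes out at 14592, so a summary that lists 18816 for C2 is worth checking against the C2 definition that was actually loaded.

```python
def conv_params(c1, c2, k=1):
    # YOLOv5 Conv: Conv2d(bias=False) has c1*c2*k*k weights; BatchNorm2d adds weight + bias (2 per channel)
    return c1 * c2 * k * k + 2 * c2


def bottleneck_params(c, e=1.0):
    c_ = int(c * e)
    return conv_params(c, c_, 1) + conv_params(c_, c, 3)  # cv1 (1x1) + cv2 (3x3)


def c3_params(c1, c2, n=1, e=0.5, with_cv3=True):
    c_ = int(c2 * e)
    total = 2 * conv_params(c1, c_, 1)  # cv1 and cv2
    total += n * bottleneck_params(c_)  # n Bottlenecks built with e=1.0
    if with_cv3:
        total += conv_params(2 * c_, c2, 1)  # cv3 exists in C3 only
    return total


print(c3_params(64, 64, 1))                  # C3: 18816
print(c3_params(64, 64, 1, with_cv3=False))  # C2: 14592
print(c3_params(128, 128, 2))                # C3: 115712
```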

./models/yolo.py: add C2 to the module parsing in parse_model
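The change that makes C2 usable happens inside the layer loop of parse_model. In upstream YOLOv5 (v7.0), that loop treats a module specially only if it appears in two sets: modules whose first yaml argument is an output channel count (c1 is prepended and c2 is width-scaled), and CSP-style modules that additionally receive the repeat count n as their third argument. Registering C2 therefore presumably means adding it to both sets, next to C3. The sketch below reimplements just that argument rewriting in plain Python with module names as strings; `build_args`, `CHANNEL_MODULES` and `REPEAT_MODULES` are hypothetical names, and the sets are abridged.

```python
import math

# Abridged, hypothetical stand-ins for the module sets in upstream parse_model
CHANNEL_MODULES = {'Conv', 'Bottleneck', 'SPPF', 'C3', 'C2'}  # first arg is c2: prepend c1, scale c2
REPEAT_MODULES = {'C3', 'C2'}  # also take the repeat count n as their third argument


def make_divisible(x, divisor):
    return math.ceil(x / divisor) * divisor


def build_args(m, n, args, ch, f=-1, gd=0.33, gw=0.50):
    """Rewrite one yaml row the way parse_model does; returns (n, args, c2)."""
    n = max(round(n * gd), 1) if n > 1 else n  # depth gain
    c2 = ch[f]  # default: output channels equal the input channels
    if m in CHANNEL_MODULES:
        c1, c2 = ch[f], make_divisible(args[0] * gw, 8)
        args = [c1, c2, *args[1:]]
        if m in REPEAT_MODULES:
            args.insert(2, n)  # the module repeats its Bottlenecks internally...
            n = 1  # ...so the layer itself is instantiated only once
    return n, args, c2


ch = [32, 64, 64]  # output channels of layers 0-2 of the modified yolov5s
print(build_args('C2', 3, [128], ch))  # (1, [64, 64, 1], 64)
```

The result matches the "1 ... [64, 64, 1]" printed for the C2 layer in the training log; without the registration, C2 would be called with the raw yaml arguments and fail.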

```python
def parse_model(d, ch):  # model_dict, input_channels(3)
    # Parse a YOLOv5 model.yaml dictionary
    ''' Used by DetectionModel.
    Parses the model config (a dict) and builds the network.
    For each layer the function essentially:
        updates the layer's args and computes c2 (the layer's output channels)
        -> builds the layer from those args
        -> appends it to layers and updates save
    :params d: model dict with 7 keys (the 6 top-level entries of yolov5s.yaml + ch)
    :params ch: records every layer's output channels; starts as ch=[3], and that initial entry is dropped later
    :return nn.Sequential(*layers): the layers of the network
    :return sorted(save): every non -1 "from" value of all layers, sorted, e.g. [4, 6, 10, 14, 17, 20, 23]
    '''
    LOGGER.info(f"\n{'':>3}{'from':>18}{'n':>3}{'params':>10}  {'module':<40}{'arguments':<30}")
    # Read anchors and the scaling parameters (nc, depth_multiple, width_multiple) from d
    anchors, nc, gd, gw, act = d['anchors'], d['nc'], d['depth_multiple'], d['width_multiple'], d.get('activation')
    if act:
        Conv.default_act = eval(act)  # redefine default activation, i.e. Conv.default_act = nn.SiLU()
        LOGGER.info(f"{colorstr('activation:')} {act}")  # print
    # na: number of anchors per prediction head (3)
    na = (len(anchors[0]) // 2) if isinstance(anchors, list) else anchors  # number of anchors
    # no: output channels of each prediction head = anchors * (classes + 5), e.g. 75 for VOC
    no = na * (nc + 5)  # number of outputs = anchors * (classes + 5)

    ''' Start building the network
    layers: the module of every layer
    save: the non -1 "from" values of all layers, i.e. indices of layers whose outputs are reused later
    c2: output channels of the current layer
    '''
    layers, save, c2 = [], [], ch[-1]  # layers, savelist, ch out
    # from: which layer(s) feed the current layer
    # number: how many times the module is repeated, before depth_multiple scaling
    # module: the module type of the current layer
    # args: the module's arguments, before width_multiple scaling
    # Iterate over every layer of backbone and head
    for i, (f, n, m, args) in enumerate(d['backbone'] + d['head']):  # from, number, module, args
        # Get the actual class of the current layer from its name, e.g. m = Focus
```

./models/yolov5s.yaml: insert the C2 module between original layers 2 and 3

```yaml
# YOLOv5 v6.0 backbone
backbone:
  # [from, number, module, args]
  [[-1, 1, Conv, [64, 6, 2, 2]],  # 0-P1/2
   [-1, 1, Conv, [128, 3, 2]],  # 1-P2/4
   [-1, 3, C3, [128]],
   [-1, 3, C2, [128]],  # 3: C2 module inserted between original layers 2 and 3
   [-1, 1, Conv, [256, 3, 2]],  # 4-P3/8 (originally 3)
   [-1, 6, C3, [256]],
   [-1, 1, Conv, [512, 3, 2]],  # 6-P4/16 (originally 5)
   [-1, 9, C3, [512]],
   [-1, 1, Conv, [1024, 3, 2]],  # 8-P5/32 (originally 7)
   [-1, 3, C3, [1024]],
   [-1, 1, SPPF, [1024, 5]],  # 10 (originally 9)
  ]
```
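Inserting a row into the backbone shifts the absolute index of every later layer by one, but absolute "from" references in the head are not updated automatically. The stock yolov5s head refers to [-1, 6], [-1, 4], [-1, 14], [-1, 10] and, for Detect, [17, 20, 23]. In the training log in section IV these references are unchanged, layers 17, 20 and 23 are Concat layers, and Detect is built with input channels [256, 384, 768] instead of the usual [128, 256, 512]. A minimal sketch for shifting the references, assuming head rows are plain [from, number, module, args] lists; `shift_from` is a hypothetical helper:

```python
def shift_from(rows, insert_at, offset=1):
    """Hypothetical helper: shift absolute 'from' indices >= insert_at after inserting `offset` layers.

    rows: list of [from, number, module, args]; relative references such as -1 are left untouched.
    """
    def fix(j):
        return j + offset if j >= insert_at else j  # negative (relative) indices are never >= insert_at

    out = []
    for f, n, m, args in rows:
        f = fix(f) if isinstance(f, int) else [fix(j) for j in f]
        out.append([f, n, m, args])
    return out


# Rows of the stock yolov5s head whose 'from' refers to earlier, non-adjacent layers
head = [[[-1, 6], 1, 'Concat', [1]],
        [[-1, 4], 1, 'Concat', [1]],
        [[-1, 14], 1, 'Concat', [1]],
        [[-1, 10], 1, 'Concat', [1]],
        [[17, 20, 23], 1, 'Detect', ['nc', 'anchors']]]
for row in shift_from(head, insert_at=3):
    print(row[0], row[2])
```

After shifting, the Concat layers read the C3 outputs at P4 and P3 again and Detect is fed from [18, 21, 24].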

IV. Running and Printing the Model

```shell
python train.py --img 640 --batch 8 --epoch 1 --data data/fruits.yaml --cfg models/yolov5s.yaml --weights weights/yolov5s.pt --device 0
```

```text
(Pytorch) E:\WorkSpace_GuanXiang\0.学习资料\365天深度学习训练营\2.YOLOv5白皮书\yolov5-master>python train.py --img 640 --batch 8 --epoch 1 --data data/fruits.yaml --cfg models/yolov5s.yaml --weights weights/yolov5s.pt --device 0
train: weights=weights/yolov5s.pt, cfg=models/yolov5s.yaml, data=data/fruits.yaml, hyp=data\hyps\hyp.scratch-low.yaml, epochs=1, batch_size=8, imgsz=640, rect=False, resume=False, nosave=False, noval=False, noautoanchor=False, noplots=False, evolve=None, bucket=, cache=None, image_weights=False, device=0, multi_scale=False, single_cls=False, optimizer=SGD, sync_bn=False, workers=8, project=runs\train, name=exp, exist_ok=False, quad=False, cos_lr=False, label_smoothing=0.0, patience=100, freeze=[0], save_period=-1, seed=0, local_rank=-1, entity=None, upload_dataset=False, bbox_interval=-1, artifact_alias=latest
github: skipping check (not a git repository), for updates see https://github.com/ultralytics/yolov5
YOLOv5 2022-12-8 Python-3.8.12 torch-1.8.1+cu111 CUDA:0 (NVIDIA GeForce GTX 1660 Ti, 6144MiB)

hyperparameters: lr0=0.01, lrf=0.01, momentum=0.937, weight_decay=0.0005, warmup_epochs=3.0, warmup_momentum=0.8, warmup_bias_lr=0.1, box=0.05, cls=0.5, cls_pw=1.0, obj=1.0, obj_pw=1.0, iou_t=0.2, anchor_t=4.0, fl_gamma=0.0, hsv_h=0.015, hsv_s=0.7, hsv_v=0.4, degrees=0.0, translate=0.1, scale=0.5, shear=0.0, perspective=0.0, flipud=0.0, fliplr=0.5, mosaic=1.0, mixup=0.0, copy_paste=0.0
ClearML: run 'pip install clearml' to automatically track, visualize and remotely train YOLOv5 in ClearML
Comet: run 'pip install comet_ml' to automatically track and visualize YOLOv5 runs in Comet
TensorBoard: Start with 'tensorboard --logdir runs\train', view at http://localhost:6006/
Overriding model.yaml nc=80 with nc=4

                 from  n    params  module                                  arguments
  0                -1  1      3520  models.common.Conv                      [3, 32, 6, 2, 2]
  1                -1  1     18560  models.common.Conv                      [32, 64, 3, 2]
  2                -1  1     18816  models.common.C3                        [64, 64, 1]
  3                -1  1     18816  models.common.C2                        [64, 64, 1]
  4                -1  1     73984  models.common.Conv                      [64, 128, 3, 2]
  5                -1  2    115712  models.common.C3                        [128, 128, 2]
  6                -1  1    295424  models.common.Conv                      [128, 256, 3, 2]
  7                -1  3    625152  models.common.C3                        [256, 256, 3]
  8                -1  1   1180672  models.common.Conv                      [256, 512, 3, 2]
  9                -1  1   1182720  models.common.C3                        [512, 512, 1]
 10                -1  1    656896  models.common.SPPF                      [512, 512, 5]
 11                -1  1    131584  models.common.Conv                      [512, 256, 1, 1]
 12                -1  1         0  torch.nn.modules.upsampling.Upsample    [None, 2, 'nearest']
 13           [-1, 6]  1         0  models.common.Concat                    [1]
 14                -1  1    361984  models.common.C3                        [512, 256, 1, False]
 15                -1  1     33024  models.common.Conv                      [256, 128, 1, 1]
 16                -1  1         0  torch.nn.modules.upsampling.Upsample    [None, 2, 'nearest']
 17           [-1, 4]  1         0  models.common.Concat                    [1]
 18                -1  1     90880  models.common.C3                        [256, 128, 1, False]
 19                -1  1    147712  models.common.Conv                      [128, 128, 3, 2]
 20          [-1, 14]  1         0  models.common.Concat                    [1]
 21                -1  1    329216  models.common.C3                        [384, 256, 1, False]
 22                -1  1    590336  models.common.Conv                      [256, 256, 3, 2]
 23          [-1, 10]  1         0  models.common.Concat                    [1]
 24                -1  1   1313792  models.common.C3                        [768, 512, 1, False]
 25      [17, 20, 23]  1     38097  models.yolo.Detect                      [4, [[10, 13, 16, 30, 33, 23], [30, 61, 62, 45, 59, 119], [116, 90, 156, 198, 373, 326]], [256, 384, 768]]
YOLOv5s summary: 232 layers, 7226897 parameters, 7226897 gradients, 17.0 GFLOPs

Transferred 49/379 items from weights\yolov5s.pt
AMP: checks passed
optimizer: SGD(lr=0.01) with parameter groups 62 weight(decay=0.0), 65 weight(decay=0.0005), 65 bias
train: Scanning E:\WorkSpace_GuanXiang\0.学习资料\365天深度学习训练营\2.YOLOv5白皮书\yolov5-master\paper_data\train...
train: WARNING Cache directory E:\WorkSpace_GuanXiang\0.\365\2.YOLOv5\yolov5-master\paper_data is not writeable: [WinError 183] : 'E:\\WorkSpace_GuanXiang\\0.\\365\\2.YOLOv5\\yolov5-master\\paper_data\\train.cache.npy' -> 'E:\\WorkSpace_GuanXiang\\0.\\365\\2.YOLOv5\\yolov5-master\\paper_data\\train.cache'
val: Scanning E:\WorkSpace_GuanXiang\0.学习资料\365天深度学习训练营\2.YOLOv5白皮书\yolov5-master\paper_data\val.cache..

AutoAnchor: 5.35 anchors/target, 1.000 Best Possible Recall (BPR). Current anchors are a good fit to dataset
Plotting labels to runs\train\exp2\labels.jpg...
Image sizes 640 train, 640 val
Using 8 dataloader workers
Logging results to runs\train\exp2
Starting training for 1 epochs...

Epoch GPU_mem box_loss obj_loss cls_loss Instances Size
0/0 2.02G nan nan nan 42 640: 100%|██████████| 20/20 00:23
Class Images Instances P R mAP50 mAP50-95: 100%|██████████| 2/2 00:01
all 20 60 0 0 0 0

1 epochs completed in 0.007 hours.
Optimizer stripped from runs\train\exp2\weights\last.pt, 14.8MB
Optimizer stripped from runs\train\exp2\weights\best.pt, 14.8MB

Validating runs\train\exp2\weights\best.pt...
Fusing layers...
YOLOv5s summary: 170 layers, 7217201 parameters, 0 gradients, 16.8 GFLOPs
Class Images Instances P R mAP50 mAP50-95: 100%|██████████| 2/2 00:00
all 20 60 0 0 0 0
Results saved to runs\train\exp2
```