cs224w-图机器学习-Colab 3

这里写自定义目录标题

- 一、GCN

- 二、GAT

-

- 1.forward函数

- 2.message函数

- 3.aggregate函数

- 4.总结

一、GCN

二、GAT

1.forward函数

下面是库中的实现。

def forward(self, x: Union[Tensor, OptPairTensor], edge_index: Adj,

size: Size = None, return_attention_weights=None):

# type: (Union[Tensor, OptPairTensor], Tensor, Size, NoneType) -> Tensor # noqa

# type: (Union[Tensor, OptPairTensor], SparseTensor, Size, NoneType) -> Tensor # noqa

# type: (Union[Tensor, OptPairTensor], Tensor, Size, bool) -> Tuple[Tensor, Tuple[Tensor, Tensor]] # noqa

# type: (Union[Tensor, OptPairTensor], SparseTensor, Size, bool) -> Tuple[Tensor, SparseTensor] # noqa

r"""

Args:

return_attention_weights (bool, optional): If set to :obj:`True`,

will additionally return the tuple

:obj:`(edge_index, attention_weights)`, holding the computed

attention weights for each edge. (default: :obj:`None`)

"""

#分别对应head数(即有几套注意力系数)和下层特征维度

H, C = self.heads, self.out_channels

x_l: OptTensor = None

x_r: OptTensor = None

alpha_l: OptTensor = None

alpha_r: OptTensor = None

if isinstance(x, Tensor):

assert x.dim() == 2, 'Static graphs not supported in `GATConv`.'

#这是把本层特征变换到输出空间中。

x_l = x_r = self.lin_l(x).view(-1, H, C)

#这是准备计算$e_{i,j}$,把两个向量算好

alpha_l = (x_l * self.att_l).sum(dim=-1)

alpha_r = (x_r * self.att_r).sum(dim=-1)

else:

x_l, x_r = x[0], x[1]

assert x[0].dim() == 2, 'Static graphs not supported in `GATConv`.'

x_l = self.lin_l(x_l).view(-1, H, C)

alpha_l = (x_l * self.att_l).sum(dim=-1)

if x_r is not None:

x_r = self.lin_r(x_r).view(-1, H, C)

alpha_r = (x_r * self.att_r).sum(dim=-1)

assert x_l is not None

assert alpha_l is not None

if self.add_self_loops:

if isinstance(edge_index, Tensor):

num_nodes = x_l.size(0)

if x_r is not None:

num_nodes = min(num_nodes, x_r.size(0))

if size is not None:

num_nodes = min(size[0], size[1])

edge_index, _ = remove_self_loops(edge_index)

edge_index, _ = add_self_loops(edge_index, num_nodes=num_nodes)

elif isinstance(edge_index, SparseTensor):

edge_index = set_diag(edge_index)

# propagate_type: (x: OptPairTensor, alpha: OptPairTensor)

#这个函数很神奇。传进去的alpha=(alpha_l, alpha_r)这俩参数,到了message函数中,就自动变成

#205*head维度的了。其中205是边数,head是注意力个数。

#而且,这俩参数自动加上后缀_i和_j,就在message函数中,就自动变成alpha_i和alpha_j了

out = self.propagate(edge_index, x=(x_l, x_r),

alpha=(alpha_l, alpha_r), size=size)

alpha = self._alpha

self._alpha = None

if self.concat:

out = out.view(-1, self.heads * self.out_channels)

else:

out = out.mean(dim=1)

if self.bias is not None:

out += self.bias

if isinstance(return_attention_weights, bool):

assert alpha is not None

if isinstance(edge_index, Tensor):

return out, (edge_index, alpha)

elif isinstance(edge_index, SparseTensor):

return out, edge_index.set_value(alpha, layout='coo')

else:

return out

2.message函数

def message(self, x_j: Tensor, alpha_j: Tensor, alpha_i: OptTensor,

index: Tensor, ptr: OptTensor,

size_i: Optional[int]) -> Tensor:

# alpha_j 和 alpha_i都是从propagate函数传来的。分别对应着每个边头结点的alpha和尾结点的alpha

#这样,相加后,205个数据分别对应着每个边的注意力系数

alpha = alpha_j if alpha_i is None else alpha_j + alpha_i

alpha = F.leaky_relu(alpha, self.negative_slope)

alpha = softmax(alpha, index, ptr, size_i)

self._alpha = alpha

alpha = F.dropout(alpha, p=self.dropout, training=self.training)

#返回乘以注意力系数后的表示

return x_j * alpha.unsqueeze(-1)

3.aggregate函数

这个函数的作用很单一:接收边序列和边序列对应的节点表示,然后求和。所以在调用这个函数之前,所有参数都应该算好了。因此message函数的负担很大。

4.总结

公式中,除了求和操作是aggregate函数实现的,其他操作都是在forward和message中实现的。因此具体在哪实现应该不太重要。仿照别人写的写就行了。一般都是在forward中进行线性投影,准备好一些向量。其他涉及中心节点、边序列的操作,只能在message中完成。因为调用message之前,propagate函数早就做好了准备,把_i和_j数据准备好了。

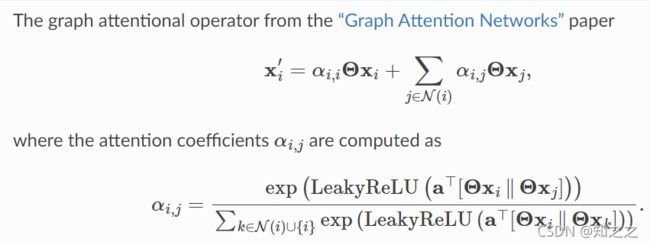

GAT实现中,在forward中实现下面的红色部分:

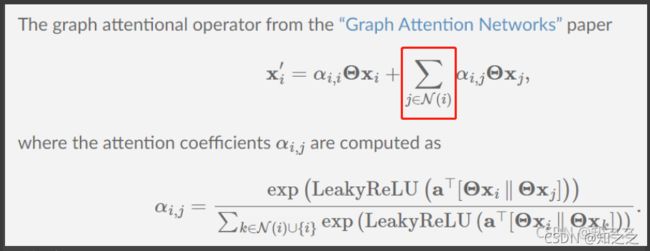

在message中实现了如下红色部分:

在aggregate中实现了如下:

上面几个截图都忽略了自环的部分。