Training a Classifier (PyTorch Official Tutorial)

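Contents

- Preparation
- Data
- Training an Image Classifier
  - 1. Load and Normalize CIFAR10
    - Show Some Training Images
  - 2. Define a Convolutional Neural Network
  - 3. Define a Loss Function and Optimizer
  - 4. Train the Network
  - 5. Test the Network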


Preparation

Download and install Anaconda3. On Ubuntu, open a terminal and run:

bash Anaconda3-5.1.0-Linux-x86_64.sh

Once the installation has finished, run:

conda install pytorch-gpu=1.3 torchvision=0.4

Detailed installation process

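Data

When you need to work with image, text, audio, or video data, you can use standard Python packages to load the data into a NumPy array, and then convert that array into a `torch.*Tensor`.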
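As a minimal sketch of that conversion, assuming NumPy and PyTorch are installed: the array below is synthetic stand-in data rather than a real image, and the variable names are only illustrative.

```python
import numpy as np
import torch

# Synthetic stand-in for a loaded image: height x width x channels, 8-bit values.
array = np.arange(2 * 2 * 3, dtype=np.uint8).reshape(2, 2, 3)

# torch.from_numpy shares memory with the NumPy array (no copy is made).
tensor = torch.from_numpy(array)

# Convert to float and move channels first (C x H x W), the layout PyTorch uses for images.
chw = tensor.permute(2, 0, 1).float()

print(tensor.dtype, tuple(tensor.shape))
print(chw.dtype, tuple(chw.shape))
```

Because the tensor and the array share memory, a write to `array` is visible through `tensor`; call `.clone()` on the tensor if an independent copy is needed.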

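For vision specifically, the `torchvision` package provides data loaders for common datasets (such as ImageNet, CIFAR10, and MNIST) and data transformers for images, namely `torchvision.datasets` and `torch.utils.data.DataLoader`.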

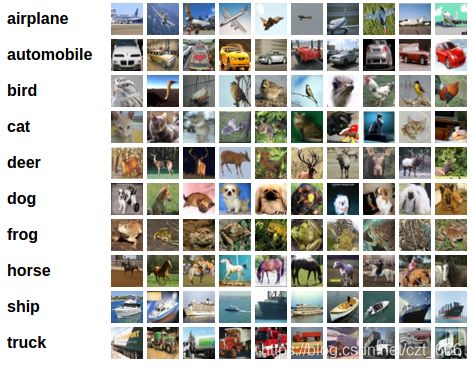

In this tutorial we use the CIFAR10 dataset. Its classes are 'airplane', 'automobile', 'bird', 'cat', 'deer', 'dog', 'frog', 'horse', 'ship', and 'truck'. CIFAR10 images have size 3x32x32, that is, 3-channel color images of 32x32 pixels.

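Training an Image Classifier

We will carry out the following steps in order:

- Load and normalize the CIFAR10 training and test datasets using torchvision

- Define a convolutional neural network

- Define a loss function and optimizer

- Train the network on the training data

- Test the network on the test data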

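1. Load and Normalize CIFAR10

Loading CIFAR10 with torchvision is very easy.

The torchvision datasets output PILImage images with values in the range [0, 1]. We transform them to tensors normalized to the range [-1, 1].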
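The mapping from [0, 1] to [-1, 1] comes from normalizing each channel with mean 0.5 and standard deviation 0.5, which computes (x - mean) / std. A plain-Python sketch of that arithmetic for a single value (the helper names are illustrative and not part of torchvision):

```python
def normalize(x, mean=0.5, std=0.5):
    """Map a value in [0, 1] to [-1, 1] via (x - mean) / std."""
    return (x - mean) / std


def unnormalize(y, mean=0.5, std=0.5):
    """Inverse mapping: y * std + mean (equal to y / 2 + 0.5 for these defaults)."""
    return y * std + mean


for x in (0.0, 0.5, 1.0):
    y = normalize(x)
    print(x, "->", y, "->", unnormalize(y))
```

The inverse mapping is what an image-display helper has to apply before plotting, since plotting expects values back in [0, 1].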

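Open the project folder, right-click to open a terminal, and run:

touch TrainingClassifiter.py && gedit TrainingClassifiter.py

Paste in the `data-download code` below, save the file, and then run:

python TrainingClassifiter.py

The dataset is downloaded into a `data` directory inside the project folder.

Data-download code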

import torch
import torchvision
import torchvision.transforms as transforms

transform = transforms.Compose(
    [transforms.ToTensor(),
     transforms.Normalize((0.5, 0.5, 0.5), (0.5, 0.5, 0.5))])

batch_size = 4

trainset = torchvision.datasets.CIFAR10(root='./data', train=True,
                                        download=True, transform=transform)
trainloader = torch.utils.data.DataLoader(trainset, batch_size=batch_size,
                                          shuffle=True, num_workers=2)

testset = torchvision.datasets.CIFAR10(root='./data', train=False,
                                       download=True, transform=transform)
testloader = torch.utils.data.DataLoader(testset, batch_size=batch_size,
                                         shuffle=False, num_workers=2)

classes = ('plane', 'car', 'bird', 'cat',
           'deer', 'dog', 'frog', 'horse', 'ship', 'truck')

Show Some Training Images

Run:

gedit TrainingClassifiter.py

Append the image-display code below, save the file, and then run:

python TrainingClassifiter.py

Image-display code

import matplotlib.pyplot as plt
import numpy as np

# function to show an image
def imshow(img):
    img = img / 2 + 0.5  # unnormalize from [-1, 1] back to [0, 1]
    npimg = img.numpy()
    # reorder from (channels, height, width) to (height, width, channels)
    plt.imshow(np.transpose(npimg, (1, 2, 0)))
    plt.show()

# get some random training images
dataiter = iter(trainloader)
images, labels = next(dataiter)  # the built-in next() replaces dataiter.next()

# show images
imshow(torchvision.utils.make_grid(images))

# print labels
print(' '.join('%5s' % classes[labels[j]] for j in range(batch_size)))
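The axis reordering matters because image tensors are stored channels-first, while matplotlib expects channels-last for RGB data. A small NumPy-only sketch of that step, using a zero-filled stand-in array instead of a real image:

```python
import numpy as np

# Stand-in for one image tensor converted with .numpy(): channels x height x width.
chw = np.zeros((3, 32, 32), dtype=np.float32)

# Reorder axes to height x width x channels, the layout plt.imshow expects for RGB.
hwc = np.transpose(chw, (1, 2, 0))

print(chw.shape, "->", hwc.shape)  # prints: (3, 32, 32) -> (32, 32, 3)
```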

2. Define a Convolutional Neural Network

import torch.nn as nn
import torch.nn.functional as F


class Net(nn.Module):
    def __init__(self):
        super().__init__()
        self.conv1 = nn.Conv2d(3, 6, 5)
        self.pool = nn.MaxPool2d(2, 2)
        self.conv2 = nn.Conv2d(6, 16, 5)
        self.fc1 = nn.Linear(16 * 5 * 5, 120)
        self.fc2 = nn.Linear(120, 84)
        self.fc3 = nn.Linear(84, 10)

    def forward(self, x):
        x = self.pool(F.relu(self.conv1(x)))
        x = self.pool(F.relu(self.conv2(x)))
        x = torch.flatten(x, 1)  # flatten all dimensions except batch
        x = F.relu(self.fc1(x))
        x = F.relu(self.fc2(x))
        x = self.fc3(x)
        return x


net = Net()
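The `16 * 5 * 5` input size of the first fully connected layer follows from how each layer shrinks a 32x32 image. The sketch below traces that arithmetic in plain Python; `conv_out` is an illustrative helper, not a PyTorch function, and it assumes no padding and no dilation, as in the network above.

```python
def conv_out(size, kernel, stride=1, padding=0):
    """Output size of a convolution or pooling layer along one spatial dimension."""
    return (size + 2 * padding - kernel) // stride + 1


size = 32                                  # CIFAR10 images are 32x32
size = conv_out(size, kernel=5)            # conv1: 32 -> 28
size = conv_out(size, kernel=2, stride=2)  # pool:  28 -> 14
size = conv_out(size, kernel=5)            # conv2: 14 -> 10
size = conv_out(size, kernel=2, stride=2)  # pool:  10 -> 5

flat_features = 16 * size * size           # conv2 produces 16 channels
print(size, flat_features)                 # prints: 5 400
```

If the input resolution or a kernel size changes, the first argument of `fc1` has to be recomputed the same way.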

3. Define a Loss Function and Optimizer

import torch.optim as optim

criterion = nn.CrossEntropyLoss()

optimizer = optim.SGD(net.parameters(), lr=0.001, momentum=0.9)
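To make these two choices more concrete, here is a plain-Python sketch of what they compute for a single sample and a single scalar parameter. The function names are illustrative; the real `nn.CrossEntropyLoss` additionally averages over the batch by default, and `optim.SGD` applies the same update to every parameter tensor.

```python
import math


def cross_entropy(logits, target):
    """Negative log of the softmax probability assigned to the target class."""
    m = max(logits)  # subtract the max for numerical stability
    log_sum_exp = m + math.log(sum(math.exp(z - m) for z in logits))
    return log_sum_exp - logits[target]


def sgd_momentum_step(param, grad, buf, lr=0.001, momentum=0.9):
    """One scalar update: buf = momentum * buf + grad, then param -= lr * buf."""
    buf = momentum * buf + grad
    return param - lr * buf, buf


# Uniform scores over 10 classes give a loss of ln(10), about 2.303.
print(round(cross_entropy([0.0] * 10, target=3), 3))

param, buf = 1.0, 0.0
param, buf = sgd_momentum_step(param, grad=2.0, buf=buf)
print(param, buf)
```

A loss near ln(10) is roughly what to expect at the very start of training on 10 classes, so values well below that indicate the network is learning.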

4. Train the Network

for epoch in range(2):  # loop over the dataset multiple times

    running_loss = 0.0
    for i, data in enumerate(trainloader, 0):
        # get the inputs; data is a list of [inputs, labels]
        inputs, labels = data

        # zero the parameter gradients
        optimizer.zero_grad()

        # forward + backward + optimize
        outputs = net(inputs)
        loss = criterion(outputs, labels)
        loss.backward()
        optimizer.step()

        # print statistics
        running_loss += loss.item()
        if i % 2000 == 1999:  # print every 2000 mini-batches
            print('[%d, %5d] loss: %.3f' %
                  (epoch + 1, i + 1, running_loss / 2000))
            running_loss = 0.0

print('Finished Training')

# Save the trained weights so the test section can reload them
# (PATH is the file name used in the official tutorial).
PATH = './cifar_net.pth'
torch.save(net.state_dict(), PATH)
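For a sense of how often the loop reports the loss, the sketch below works out the number of mini-batches per epoch from the CIFAR10 training set size (50,000 images) and the batch size of 4 used in this post. It is plain arithmetic and does not touch the dataset.

```python
import math

num_train_images = 50000   # size of the CIFAR10 training split
batch_size = 4             # as in the data-loading code
log_every = 2000           # as in the training loop

batches_per_epoch = math.ceil(num_train_images / batch_size)

# The loop logs when i % 2000 == 1999, that is after batches 2000, 4000, ...
log_points = [i + 1 for i in range(batches_per_epoch)
              if i % log_every == log_every - 1]

print(batches_per_epoch)   # prints: 12500
print(log_points)          # prints: [2000, 4000, 6000, 8000, 10000, 12000]
```

So each epoch prints six loss values, and the final 500 mini-batches of an epoch are accumulated into `running_loss` but never reported before it is reset at the start of the next epoch.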

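5. Test the Network

We have trained the network for 2 passes over the training dataset, but we still need to check whether it has learned anything.

We check this by predicting the class label that the neural network outputs and comparing it against the ground truth. If the prediction is correct, we add the sample to the list of correct predictions.

Okay, first step. Let us display some images from the test set to get familiar with them.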

dataiter = iter(testloader)
images, labels = next(dataiter)  # the built-in next() replaces dataiter.next()

# print images
imshow(torchvision.utils.make_grid(images))
print('GroundTruth: ', ' '.join('%5s' % classes[labels[j]] for j in range(4)))

Next, let us load back in our saved model (note: saving and reloading the model is not necessary here; we do it only to illustrate how):

net = Net()

net.load_state_dict(torch.load(PATH))

Okay, now let us see what the neural network thinks these examples above are:

outputs = net(images)

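The outputs are energies for the 10 classes. The higher the energy for a class, the more the network thinks the image belongs to that class. So, let us get the index of the highest energy: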

_, predicted = torch.max(outputs, 1)

print('Predicted: ', ' '.join('%5s' % classes[predicted[j]]

for j in range(4)))
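As a small standalone illustration of that call: `torch.max(outputs, 1)` reduces over dimension 1 (the class dimension) and returns both the maximum values and their indices. The scores below are made up, with 3 classes instead of 10 to keep the example short.

```python
import torch

# Made-up scores for 2 images over 3 classes (the real network outputs 10 per image).
outputs = torch.tensor([[0.1, 2.5, -1.0],
                        [1.7, 0.3, 0.9]])

values, predicted = torch.max(outputs, 1)   # reduce over the class dimension
print(predicted.tolist())                   # prints: [1, 0]

# torch.argmax returns only the indices and gives the same prediction here.
same = torch.equal(predicted, torch.argmax(outputs, dim=1))
print(same)                                 # prints: True
```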

The results seem pretty good.

Let us look at how the network performs on the whole test set.

correct = 0
total = 0
# since we're not training, we don't need to calculate the gradients for our outputs
with torch.no_grad():
    for data in testloader:
        images, labels = data
        # calculate outputs by running images through the network
        outputs = net(images)
        # the class with the highest energy is what we choose as prediction
        _, predicted = torch.max(outputs, 1)
        total += labels.size(0)
        correct += (predicted == labels).sum().item()

print('Accuracy of the network on the 10000 test images: %d %%' % (
    100 * correct / total))

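That looks far better than chance, which is 10% accuracy (randomly picking one class out of 10). It seems the network has learned something.

Let us see which classes performed well and which did not: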

# prepare to count predictions for each class
correct_pred = {classname: 0 for classname in classes}
total_pred = {classname: 0 for classname in classes}

# again no gradients needed
with torch.no_grad():
    for data in testloader:
        images, labels = data
        outputs = net(images)
        _, predictions = torch.max(outputs, 1)
        # collect the correct predictions for each class
        for label, prediction in zip(labels, predictions):
            if label == prediction:
                correct_pred[classes[label]] += 1
            total_pred[classes[label]] += 1

# print accuracy for each class
for classname, correct_count in correct_pred.items():
    accuracy = 100 * float(correct_count) / total_pred[classname]
    print("Accuracy for class {:5s} is: {:.1f} %".format(classname,
                                                         accuracy))

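The network can also be trained on the GPU. Just as you transfer a tensor onto the GPU, you transfer the neural network onto the GPU.

Let us first define our device as the first visible CUDA device, if CUDA is available: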

device = torch.device("cuda:0" if torch.cuda.is_available() else "cpu")

# Assuming that we are on a CUDA machine, this should print a CUDA device:

print(device)

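The rest of this section assumes that `device` is a CUDA device.

The following call then recursively goes over all modules and converts their parameters and buffers to CUDA tensors: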

net.to(device)

Remember that you also have to send the inputs and targets to the GPU at every step:

inputs, labels = data[0].to(device), data[1].to(device)
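Putting the pieces together, a single training step on the selected device might look like the sketch below. To stay self-contained it uses a tiny stand-in model and a random batch instead of `Net()` and CIFAR10 (both are assumptions made for illustration only), and it falls back to the CPU when CUDA is not available.

```python
import torch
import torch.nn as nn
import torch.optim as optim

device = torch.device("cuda:0" if torch.cuda.is_available() else "cpu")

# Tiny stand-in model so the sketch runs on its own; in this post it would be Net().
model = nn.Sequential(nn.Flatten(), nn.Linear(3 * 32 * 32, 10)).to(device)
criterion = nn.CrossEntropyLoss()
optimizer = optim.SGD(model.parameters(), lr=0.001, momentum=0.9)

# Random stand-in batch with CIFAR10 shapes: 4 images of 3x32x32 and 4 labels.
data = [torch.randn(4, 3, 32, 32), torch.randint(0, 10, (4,))]

# Move the batch to the same device as the model before the forward pass.
inputs, labels = data[0].to(device), data[1].to(device)

optimizer.zero_grad()
outputs = model(inputs)
loss = criterion(outputs, labels)
loss.backward()
optimizer.step()

print(tuple(outputs.shape), outputs.device.type == device.type)
```

If the model and the batch end up on different devices, the forward pass raises a runtime error, which is why the `.to(device)` call on the inputs and labels has to be repeated for every batch inside the training loop.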