Pytorch用dataloader自定义数据训练模型

总结

Dataset可以遍历数据集,每次输出一组(feature,label)。dataloader相当于是dataset的接口,顺便可以Dataset做一些调整,比如shuffle、batchsize……

因此,自定义dalaloader的关键是定义Dataset!

一. 用到的库

import numpy as np

import torch

import torch.nn as nn

import torch.nn.functional as F

import torch.optim as optim

import matplotlib.pyplot as plt

from torch.utils.data import Dataset, DataLoader

from PIL import Image

import os

import pandas as pd

from torchvision.transforms import ToTensor, Lambda

from torchvision import datasets, models, transforms

二. 定义Dataset类

class TrainDataset(Dataset):

def __init__(self, root_dir, csv_file, transform=None, target_transform=None):

self.root_dir = root_dir

self.labels = pd.read_csv(csv_file)

# features的归一化:

self.transform = transform

# labels的归一化:

self.target_transform = target_transform

def __len__(self):

return self.labels.shape[0]

def __getitem__(self, index):

img_name = os.path.join(self.root_dir,

self.labels.iloc[index, 0])

image = Image.open(img_name)

label = self.labels.iloc[index, 1:].astype(int).to_numpy()

label = list(label)[0].tolist()

if self.transform:

image = self.transform(image)

if self.target_transform:

label = self.target_transform(label)

# print(label)

return image, label

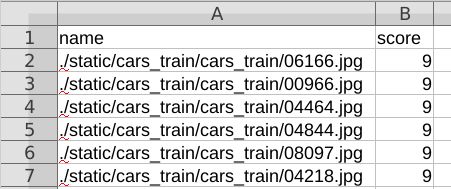

三. 创建Dataset

我这里root_dir=’'是因为我的label.csv文件中存储的是features的全路径:

# 1. Load and normalize training data

dataset = TrainDataset(

root_dir='',

csv_file='labels.csv',

transform=transforms.Compose([

transforms.Resize([224, 224]),

ToTensor(),

transforms.Normalize((0.5, 0.5, 0.5), (0.5, 0.5, 0.5))

]),

target_transform=None

)

三通道彩色图像:

transforms.Normalize((0.5, 0.5, 0.5), (0.5, 0.5, 0.5))

一通道的黑白图像:

transforms.Normalize((0.5,), (0.5,))

四. 创建自定义的dataloader

batch_size = 16

trainloader = torch.utils.data.DataLoader(dataset, batch_size=batch_size,

shuffle=True, num_workers=1)

classes = ('s0', 's1', 's2', 's3', 's4', 's5', 's6', 's7', 's8', 's9')

五. 定义神经网络(最开始就定义)

我这个和pytorch CIFAR-10分类器教程设置的一样。

区别是self.fc1 = nn.Linear(16 * 53 * 53, 120),这里是165353,原本是1655。修改原因是本来samples的size为3232,我这里resize到224224,网络设置也要随之改变。

class Net(nn.Module):

def __init__(self):

super().__init__()

self.conv1 = nn.Conv2d(3, 6, 5)

self.pool = nn.MaxPool2d(2, 2)

self.conv2 = nn.Conv2d(6, 16, 5)

self.fc1 = nn.Linear(16 * 53 * 53, 120)

self.fc2 = nn.Linear(120, 84)

self.fc3 = nn.Linear(84, 10)

def forward(self, x):

x = self.pool(F.relu(self.conv1(x)))

x = self.pool(F.relu(self.conv2(x)))

x = x.view(-1, 16 * 53 * 53)

x = F.relu(self.fc1(x))

x = F.relu(self.fc2(x))

x = self.fc3(x)

logits = x

return logits

六. 创建神经网络和优化器

# 2. Define a Convolutional Neural Network

net = Net()

# print(net)

# 3. Define a Loss function and optimizer

criterion = nn.CrossEntropyLoss()

# optimizer = optim.SGD(net.parameters(), lr=0.0001, momentum=0.9)

optimizer = optim.Adam(net.parameters(), lr=0.001)

七. 放到GPU

神经网络+训练features+训练labels 都要放到GPU

# training on GPU

device = 'cuda' if torch.cuda.is_available() else 'cpu'

print('Using {} device'.format(device))

net = net.to(device)

print('sent net to gpu')

八. 训练,对比预测值和真实值,并绘制Loss曲线

# 4. Train the network

epoch1 = 10

for epoch in range(epoch1): # loop over the dataset multiple times

running_loss = 0.0

Loss = []

for i, (inputs, labels) in enumerate(trainloader, 0):

# zero the parameter gradients

optimizer.zero_grad()

# forward + backward + optimize

inputs = inputs.to(device)

labels = labels.to(device)

# outputs是网络的输出,用Softmax转变为该sample属于各类别的概率:

outputs = net(inputs)

loss = criterion(outputs, labels)

softmax = nn.Softmax(dim=1)

pred_probab = softmax(outputs)

nb_batches = pred_probab.shape[0]

nb_classes = pred_probab.shape[1]

max_idxes = []

for j in range(nb_batches):

max = pred_probab[j][0]

max_idx = 0

for k in range(nb_classes):

prob = pred_probab[j][k]

if prob > max:

max = prob

max_idx = k

max_idxes.append(max_idx)

print(labels)

pred_label = []

for ii in max_idxes:

pred_label.append(classes[ii])

print(pred_label)

loss.backward()

optimizer.step()

# print statistics

running_loss += loss.item()

if i % 2000 == 1999: # print every 2000 mini-batches

Loss.append(running_loss)

print('[%d, %5d] loss: %.3f' %

(epoch + 1, i + 1, running_loss / 2000))

running_loss = 0.0

Loss0 = torch.tensor(Loss)

np.save('./loss/epoch_{}'.format(epoch), Loss0)

print('Finished Training')

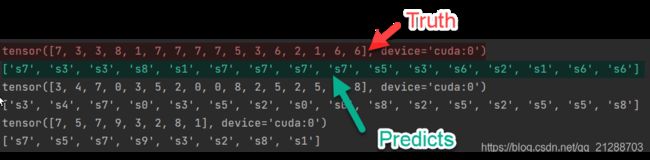

九. 使用少量数据验证

确保网络能够在小训练集上完全拟合,验证有没有错误。

在这里我设计了一个小训练集,十类数据,每一类20个,所以一共20*10=200个数据。设置epoch=10,通过输出predicts和ground truth对比,可以看到完全拟合: