[Natural Language Processing (NLP)] Transformer-Based Automatic Summarization of English Text


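About the author: an undergraduate student, Huawei Cloud Expert, Alibaba Cloud expert blogger, Tencent Cloud Pioneer (TDP) member, general lead of the 云曦智划 project, volunteer for the National Expert Committee on Computer Teaching and Industry Practice Resource Construction in Higher Education (TIPCC), and programming enthusiast. I look forward to learning and improving together with everyone.

Blog homepage: ぃ灵彧が的学习日志

Column: Artificial Intelligence

Column motto: If you decide to shine, no mountain can block you and no sea can stop you.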

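Contents

- [Natural Language Processing (NLP)] Transformer-Based Automatic Summarization of English Text
  - Preface
    - (1) Task description
    - (2) Environment setup
  - 1. Data preparation
    - (1) Loading the dataset
    - (2) Building the English vocabulary
    - (3) Creating the dataset
  - 2. Building the Transformer-based summarization model
    - (1) Defining the hyperparameters
    - (2) Encoder
    - (3) Decoder
  - 3. Model training
  - 4. Generating summaries with the model
  - Summary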

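Preface

(1) Task description

This tutorial shows how to use PaddlePaddle (飞桨) to complete an automatic text summarization task.

We use the open-source PaddlePaddle framework to build a Transformer-based model for automatic summarization of English text. The framework already implements the basic Transformer layers, so they can be called directly.

(2) Environment setup

This example is based on version 2.0 of the open-source PaddlePaddle framework.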

    import paddle
    import paddle.nn.functional as F
    import re
    import numpy as np

    print(paddle.__version__)

    # Select the CPU/GPU environment by passing the device name to paddle.set_device().
    # device = paddle.set_device('gpu')

The output is shown in Figure 1.

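1. Data preparation

(1) Loading the dataset

We first collect statistics on the dataset to decide the fixed sequence lengths. For both the input text and the summary, we use the length that covers 90% of the samples.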

    # Collect length statistics. In the variable names, "en" denotes the
    # input article and "cn" denotes the output summary.
    with open('data/data80310/train.txt', 'r', encoding='utf-8') as f:
        lines = f.readlines()
    print(len(lines))

    datas = []
    dic_content = {}   # article length -> number of samples
    dic_summary = {}   # summary length -> number of samples
    for line in lines:
        ll = line.strip().split('\t')
        if len(ll) < 2:
            continue
        content, summary = ll[0].split(' '), ll[1].split(' ')
        datas.append([content, summary])
        dic_content[len(content)] = dic_content.get(len(content), 0) + 1
        dic_summary[len(summary)] = dic_summary.get(len(summary), 0) + 1

    # Input lengths: print each length, its count, and the cumulative
    # fraction of lines covered so far.
    count = 0
    for k in sorted(dic_content):
        count += dic_content[k]
        print(k, dic_content[k], count / len(lines))

    # Output (summary) lengths, in the same format.
    count = 0
    for k in sorted(dic_summary):
        count += dic_summary[k]
        print(k, dic_summary[k], count / len(lines))

    # Fixed lengths chosen from the statistics above.
    en_length = 95
    cn_length = 25

The output is shown in Figure 2.
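The 90% rule described above can also be computed directly from a length histogram instead of being read off the printed table. The following is a minimal sketch under that assumption; the helper name `length_for_coverage` and the toy histogram are hypothetical and are not part of the original code.

```python
def length_for_coverage(length_counts, coverage=0.9):
    """Return the smallest length L such that at least `coverage` of the
    samples have length <= L. `length_counts` maps length -> sample count."""
    total = sum(length_counts.values())
    running = 0
    for length in sorted(length_counts):
        running += length_counts[length]
        if running / total >= coverage:
            return length
    raise ValueError("length_counts is empty")


# Toy histogram (hypothetical numbers, not the real dataset statistics).
toy_counts = {10: 50, 20: 30, 30: 15, 40: 5}
cutoff = length_for_coverage(toy_counts)
print(cutoff)  # 30: lengths up to 30 cover 95 of the 100 toy samples
```

Applied to a histogram such as `dic_content` or `dic_summary`, a helper like this would yield a cutoff that can then be rounded to a convenient value.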

(2) Building the English vocabulary

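    # Build the English vocabulary. The article and the summary share one vocabulary.
    en_vocab = {}
    # Special tokens: padding, beginning of sequence, end of sequence.
    en_vocab['<pad>'], en_vocab['<bos>'], en_vocab['<eos>'] = 0, 1, 2

    idx = 3
    for en, cn in datas:
        for w in en:
            if w not in en_vocab:
                en_vocab[w] = idx
                idx += 1
        for w in cn:
            if w not in en_vocab:
                en_vocab[w] = idx
                idx += 1

    print(len(list(en_vocab)))
    # English vocabulary size: 71428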

The output is shown in Figure 3.
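To make the id assignment concrete, here is a small self-contained sketch of the same idea on toy data: ids are handed out in first-seen order, and the first three ids are reserved for special tokens. The function `build_vocab`, the toy sentences, and the special-token spellings are illustrative assumptions.

```python
PAD, BOS, EOS = '<pad>', '<bos>', '<eos>'


def build_vocab(token_lists):
    """Assign ids in first-seen order, reserving 0/1/2 for the special tokens."""
    vocab = {PAD: 0, BOS: 1, EOS: 2}
    for tokens in token_lists:
        for tok in tokens:
            if tok not in vocab:
                vocab[tok] = len(vocab)
    return vocab


toy_vocab = build_vocab([['the', 'cat', 'sat'], ['the', 'dog']])
id_to_token = list(toy_vocab)  # dicts keep insertion order (Python 3.7+)

ids = [toy_vocab[t] for t in ['the', 'dog', EOS]]
print(ids)                            # [3, 6, 2]
print([id_to_token[i] for i in ids])  # ['the', 'dog', '<eos>']
```

Because ids follow insertion order, `list(vocab)` doubles as the id-to-token table, which is the same trick the prediction code relies on.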

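(3) Creating the dataset

Next, based on the vocabulary, we create the actual training dataset, organized as NumPy arrays.

- All sequences are padded to the same length.

- Reversing the source sequence can improve results in sequence-to-sequence models. In the code below this step is commented out, so the input text keeps its original order.

- padded_cn_label_sents is the prediction target during training: at each position, the current summary token is used to predict the next token.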

    padded_en_sents = []
    padded_cn_sents = []
    padded_cn_label_sents = []
    for en, cn in datas:
        # Truncate to the chosen maximum lengths.
        if len(en) > en_length:
            en = en[:en_length]
        if len(cn) > cn_length:
            cn = cn[:cn_length]
        # Encoder input: article + <eos>, padded to en_length + 1 tokens.
        padded_en_sent = en + ['<eos>'] + ['<pad>'] * (en_length - len(en))
        # padded_en_sent.reverse()
        # Decoder input: <bos> + summary + <eos>, padded to cn_length + 2 tokens.
        padded_cn_sent = ['<bos>'] + cn + ['<eos>'] + ['<pad>'] * (cn_length - len(cn))
        # Decoder label: the decoder input shifted left by one position.
        padded_cn_label_sent = cn + ['<eos>'] + ['<pad>'] * (cn_length - len(cn) + 1)

        padded_en_sents.append(np.array([en_vocab[w] for w in padded_en_sent]))
        padded_cn_sents.append(np.array([en_vocab[w] for w in padded_cn_sent]))
        padded_cn_label_sents.append(np.array([en_vocab[w] for w in padded_cn_label_sent]))

    train_en_sents = np.array(padded_en_sents)
    train_cn_sents = np.array(padded_cn_sents)
    train_cn_label_sents = np.array(padded_cn_label_sents)

    print(train_en_sents.shape)
    print(train_cn_sents.shape)
    print(train_cn_label_sents.shape)

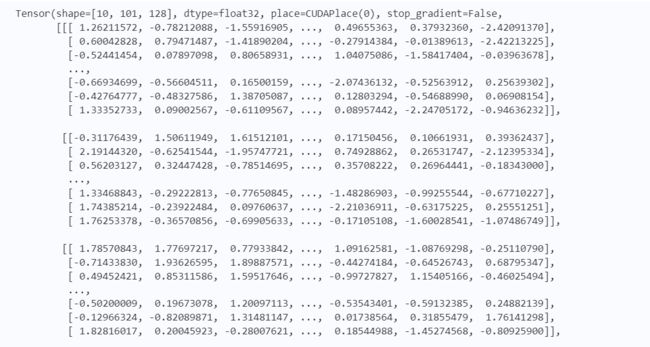

输出结果如下图4所示:
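The relationship between the decoder input and its label is easiest to see on a toy pair. The sketch below mirrors the padding scheme described above on plain Python lists; `pad_pair`, the toy tokens, and the special-token spellings are assumptions made for illustration.

```python
PAD, BOS, EOS = '<pad>', '<bos>', '<eos>'


def pad_pair(source, target, src_len, tgt_len):
    """Truncate, then pad one (source, target) pair.

    Returns (encoder input, decoder input, decoder label); the label is
    the decoder input shifted left by one position."""
    source, target = source[:src_len], target[:tgt_len]
    enc_in = source + [EOS] + [PAD] * (src_len - len(source))
    dec_in = [BOS] + target + [EOS] + [PAD] * (tgt_len - len(target))
    label = target + [EOS] + [PAD] * (tgt_len - len(target) + 1)
    return enc_in, dec_in, label


enc_in, dec_in, label = pad_pair(['a', 'b', 'c', 'd'], ['x', 'y'], src_len=3, tgt_len=4)
print(enc_in)  # ['a', 'b', 'c', '<eos>']
print(dec_in)  # ['<bos>', 'x', 'y', '<eos>', '<pad>', '<pad>']
print(label)   # ['x', 'y', '<eos>', '<pad>', '<pad>', '<pad>']
```

At every position `i`, `label[i]` is the token that follows `dec_in[i]`, which is why the two arrays have the same length (`tgt_len + 2`) and can be walked in lockstep during training.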

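2. Building the Transformer-based summarization model

(1) Defining the hyperparameters

- First define the hyperparameters used for designing and training the model.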

    embedding_size = 128
    hidden_size = 512              # not used by the Transformer model below
    num_encoder_lstm_layers = 1    # not used by the Transformer model below
    vocab_size = len(list(en_vocab))
    epochs = 20
    batch_size = 16

(2) Encoder

- Define the Encoder with TransformerEncoder.

    # encoder: simply learn representation of source sentence
    class Encoder(paddle.nn.Layer):
        def __init__(self, vocab_size, embedding_size, num_layers=2, head_number=2, middle_units=512):
            super(Encoder, self).__init__()
            self.emb = paddle.nn.Embedding(vocab_size, embedding_size)
            # TransformerEncoderLayer arguments:
            #   d_model (int): dimension of the input and output.
            #   nhead (int): number of heads in multi-head attention.
            #   dim_feedforward (int): hidden size of the feed-forward network.
            encoder_layer = paddle.nn.TransformerEncoderLayer(embedding_size, head_number, middle_units)
            self.encoder = paddle.nn.TransformerEncoder(encoder_layer, num_layers)

        def forward(self, x):
            x = self.emb(x)
            en_out = self.encoder(x)
            return en_out
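For readers who want to see what an attention head computes, the following is a rough pure-Python sketch of scaled dot-product attention for a single query vector. It is not Paddle's implementation: it omits the learned projections, multiple heads, dropout, and batching, and the function name `attention` and the toy vectors are illustrative assumptions.

```python
import math


def attention(query, keys, values):
    """Scaled dot-product attention for a single query vector.

    query: list of d floats; keys/values: lists of vectors, one per position."""
    d = len(query)
    scores = [sum(q * k for q, k in zip(query, key)) / math.sqrt(d) for key in keys]
    # Numerically stable softmax over positions.
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    weights = [e / total for e in exps]
    # Weighted average of the value vectors.
    dim = len(values[0])
    output = [sum(w * v[j] for w, v in zip(weights, values)) for j in range(dim)]
    return weights, output


# Two identical keys get equal weight, so the output is the mean of the values.
weights, output = attention([1.0, 0.0], [[1.0, 0.0], [1.0, 0.0]], [[2.0, 0.0], [4.0, 2.0]])
print(weights, output)
```

In the Encoder above, every position of the input attends to every other position in this way, once per head and per layer.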

(3) Decoder

- Define the Decoder with TransformerDecoder.

    class Decoder(paddle.nn.Layer):
        def __init__(self, vocab_size, embedding_size, num_layers=2, head_number=2, middle_units=512):
            super(Decoder, self).__init__()
            self.emb = paddle.nn.Embedding(vocab_size, embedding_size)
            decoder_layer = paddle.nn.TransformerDecoderLayer(embedding_size, head_number, middle_units)
            self.decoder = paddle.nn.TransformerDecoder(decoder_layer, num_layers)
            # for computing output logits
            self.outlinear = paddle.nn.Linear(embedding_size, vocab_size)

        def forward(self, x, encoder_outputs):
            # x holds one token per sample: shape [batch_size, 1]
            x = self.emb(x)
            # The decoder accepts (dec_input, enc_output, self_attn_mask, cross_attn_mask);
            # no masks are passed here.
            de_out = self.decoder(x, encoder_outputs)
            output = self.outlinear(de_out)
            # Drop the length-1 sequence axis: [batch_size, 1, vocab] -> [batch_size, vocab]
            output = paddle.squeeze(output, axis=1)
            return output
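The decoder above is called without attention masks, which fits the way this article uses it: one token per call. If one instead fed a whole shifted summary in a single call (a variant, not what the article does), each position would need to be prevented from attending to later positions. One common convention is an additive mask with `0.0` for allowed positions and negative infinity for blocked ones; the helper `causal_mask` below is a hypothetical pure-Python sketch of that layout.

```python
def causal_mask(length, blocked=float('-inf')):
    """Additive mask: 0.0 where position i may attend to position j (j <= i),
    `blocked` elsewhere."""
    return [[0.0 if j <= i else blocked for j in range(length)]
            for i in range(length)]


mask = causal_mask(3)
for row in mask:
    print(row)
# [0.0, -inf, -inf]
# [0.0, 0.0, -inf]
# [0.0, 0.0, 0.0]
```

Adding such a matrix to the attention scores before the softmax drives the weights of future positions to zero.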

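3. Model training

Next we train the model.

- Before each epoch starts, the training data is randomly shuffled.

- The decoder is called repeatedly, once per target position, which implements the step-by-step decoding loop.

- Teacher forcing: when decoding each next token, the ground-truth token from the training data is given as the input. You can also try feeding the model's own prediction as the next input, or mixing the two.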

    encoder = Encoder(vocab_size, embedding_size)
    decoder = Decoder(vocab_size, embedding_size)

    opt = paddle.optimizer.Adam(learning_rate=0.0001,
                                parameters=encoder.parameters() + decoder.parameters())

    for epoch in range(epochs):
        print("epoch:{}".format(epoch))

        # shuffle training data
        perm = np.random.permutation(len(train_en_sents))
        train_en_sents_shuffled = train_en_sents[perm]
        train_cn_sents_shuffled = train_cn_sents[perm]
        train_cn_label_sents_shuffled = train_cn_label_sents[perm]

        # Samples left over after the last full batch are skipped in this epoch.
        for iteration in range(train_en_sents_shuffled.shape[0] // batch_size):
            start, end = batch_size * iteration, batch_size * (iteration + 1)
            x_data = train_en_sents_shuffled[start:end]
            sent = paddle.to_tensor(x_data)
            en_repr = encoder(sent)

            x_cn_data = train_cn_sents_shuffled[start:end]
            x_cn_label_data = train_cn_label_sents_shuffled[start:end]

            loss = paddle.zeros([1])
            # Teacher forcing: at step i the ground-truth token i is the decoder input.
            for i in range(cn_length + 2):
                cn_word = paddle.to_tensor(x_cn_data[:, i:i+1])
                cn_word_label = paddle.to_tensor(x_cn_label_data[:, i])
                logits = decoder(cn_word, en_repr)
                step_loss = F.cross_entropy(logits, cn_word_label)
                loss += step_loss

            # Average the loss over all decoding steps.
            loss = loss / (cn_length + 2)

            if iteration % 50 == 0:
                print("iter {}, loss:{}".format(iteration, loss.numpy()))

            loss.backward()
            opt.step()
            opt.clear_grad()

    print("Training finished")

Part of the output is shown in Figure 6.
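The "mixed" alternative to teacher forcing mentioned above can be sketched as a per-step coin flip between the ground-truth token and the model's previous prediction. This is only an illustration of the idea, not part of the article's training loop; the function `next_decoder_input`, the ratio parameter, and the toy token ids are all hypothetical.

```python
import random


def next_decoder_input(gold_token, predicted_token, teacher_forcing_ratio, rng):
    """Pick the token fed to the decoder at the next step.

    With probability `teacher_forcing_ratio` use the ground-truth token,
    otherwise use the model's own previous prediction."""
    if rng.random() < teacher_forcing_ratio:
        return gold_token
    return predicted_token


rng = random.Random(0)
gold = [5, 8, 2]       # hypothetical token ids from the reference summary
predicted = [5, 9, 2]  # hypothetical ids the model produced at the same steps

# A ratio of 1.0 reproduces pure teacher forcing; 0.0 always feeds predictions.
always_gold = [next_decoder_input(g, p, 1.0, rng) for g, p in zip(gold, predicted)]
never_gold = [next_decoder_input(g, p, 0.0, rng) for g, p in zip(gold, predicted)]
mixed = [next_decoder_input(g, p, 0.5, rng) for g, p in zip(gold, predicted)]
print(always_gold, never_gold, mixed)
```

In the training loop above, such a choice would replace the fixed `x_cn_data[:, i:i+1]` input, with the predicted token taken from the argmax of the previous step's logits.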

4. Generating summaries with the model

- Randomly pick a few samples from the training set and predict their summaries.

    encoder.eval()
    decoder.eval()

    num_of_examples_to_evaluate = 10
    indices = np.random.choice(len(train_en_sents), num_of_examples_to_evaluate, replace=False)
    x_data = train_en_sents[indices]
    sent = paddle.to_tensor(x_data)
    en_repr = encoder(sent)

    # Start every sequence with the <bos> token.
    word = np.array([[en_vocab['<bos>']]] * num_of_examples_to_evaluate)
    word = paddle.to_tensor(word)

    decoded_sent = []
    for i in range(cn_length + 2):
        logits = decoder(word, en_repr)
        word = paddle.argmax(logits, axis=1)  # greedy choice of the next token
        decoded_sent.append(word.numpy())
        word = paddle.unsqueeze(word, axis=-1)

    results = np.stack(decoded_sent, axis=1)
    idx2word = list(en_vocab)  # ids were assigned in insertion order
    for i in range(num_of_examples_to_evaluate):
        print('---------------------')
        en_input = " ".join(datas[indices[i]][0])
        ground_truth_translate = " ".join(datas[indices[i]][1])
        # Drop padding and end tokens, and join the words with spaces.
        model_translate = " ".join(
            idx2word[k] for k in results[i]
            if idx2word[k] not in ('<pad>', '<eos>'))
        print(en_input)
        print("true: {}".format(ground_truth_translate))
        print("pred: {}".format(model_translate))

The output is shown in Figure 7.
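The prediction loop always runs for a fixed number of steps and only filters out padding and end tokens afterwards, so any words generated after the end token remain in the printed summary. A possible variant, sketched here under the assumption that the end and padding tokens are spelled `<eos>` and `<pad>`, cuts the output at the first end token instead. The helper `ids_to_text` and the toy vocabulary are hypothetical.

```python
def ids_to_text(token_ids, id_to_token, eos='<eos>', pad='<pad>'):
    """Convert predicted ids to a string, stopping at the first end token."""
    words = []
    for token_id in token_ids:
        word = id_to_token[token_id]
        if word == eos:
            break
        if word != pad:
            words.append(word)
    return ' '.join(words)


toy_id_to_token = ['<pad>', '<bos>', '<eos>', 'police', 'arrest', 'suspect']
text = ids_to_text([3, 4, 5, 2, 4, 0], toy_id_to_token)
print(text)  # police arrest suspect
```

With the article's variables, this could be called as `ids_to_text(results[i], list(en_vocab))` for each evaluated sample.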

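Summary

This series of articles consists of notes and reflections based on the book 《自然语言处理实践》 (Natural Language Processing in Practice) published by Tsinghua University Press. All of the code is developed with Baidu PaddlePaddle. If anything here infringes on rights or is otherwise inappropriate, please message me privately and I will cooperate to resolve it. I reply to every message I see!

Finally, to close the article, a quote from this event ~( ̄▽ ̄~)~:

"The biggest reason to learn is to escape mediocrity: start a day earlier and you gain one more share of life's brilliance; start a day later and you endure one more day of mediocrity."

ps: For more content, see this article's column, Artificial Intelligence. Your support and feedback are welcome ~( ̄▽ ̄~)~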