NLP-Beginner:自然语言处理入门练习----task 1基于机器学习的文本分类

任务一:基于机器学习的文本分类

任务传送门

项目是在github上的,数据集需要在kaggle上下载,稍微有些麻烦。

wang盘:http://链接:https://pan.baidu.com/s/16gG4ILHrWmUPJkyrIZkNBA 提取码:cadj

一、任务介绍

本次任务是利用机器学习中的softmax regression来对文本的情感进行分类。

train.tsv 包含短语及其关联的情绪标签。我们还提供了一个 SentenceId,以便您可以跟踪哪些短语属于单个句子。

test.tsv 只包含短语。必须为每个短语分配一个情绪标签。

情绪标签包括:

0 - 负

1 - 有点负

2 - 中性

3 - 有点正

4 - 正

任务的解决流程:数据输入 --> 特征求解 --> 最优化求解 --> 结果输出

二、知识点总结

- Bag of Words(BOWs)即词袋模型,是对样本数据的一种表示方法,主要应用在NLP(自然语言处理)和 IR(信息检索)领域,近年也开始在 CV(计算机视觉)发挥作用。

- 对于文本,通常使用的是Bag of Words词袋模型表示特征,即将文本映射成为一个词的向量,向量的长度是词典的大小,每一位表示词典中的一个词,向量中的每一位上的数值表示该词在文本中出现的次数。对于一个文本,其词向量通常是稀疏的。词序的信息已经丢失,每个文档只看做一些单独词项的集合。

- n-gram(n元语法),从一个句子中提取n个连续的字的集合,可以获取到字的前后信息。一般2-gram或者3-gram比较常见。好处是可以获取更丰富的特征,提取字的前后信息,考虑了字之间的顺序性。这种方法没有解决数据稀疏和词表维度过高的问题,而且随着n的增大,词表维度会变得更高。

- logistic regression:解决分类问题,利用回归思想,二分类,逻辑回归是根据sigmoid将线性变成非线性,所以其实去掉激活函数与线性回归是一致的。 Logistic 回归采用交叉熵作为损失函数,并使用梯度下降法来对参数进行优化.

softmax regression:处理多分类。Softmax 回归往往需要使用正则化来约束其参数。![]()

损失函数: 损失函数用来评价模型的预测值和真实值不一样的程度,损失函数越好,通常模型的性能越好。不同的模型用的损失函数一般也不一样。

(随机)梯度下降:吴恩达机器学习的课程,SGD

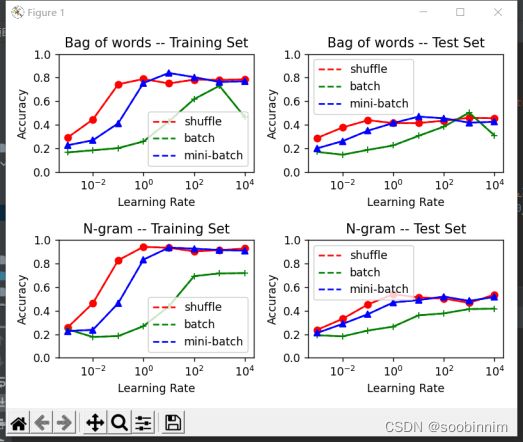

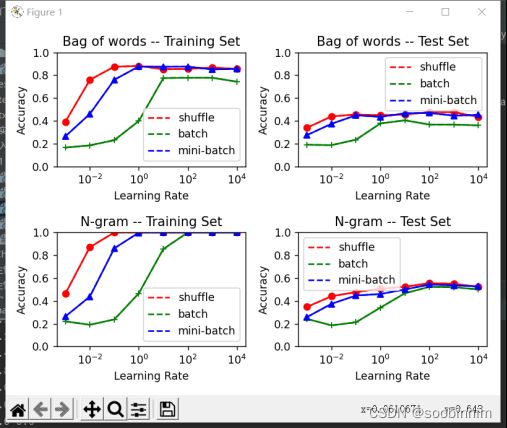

5.随机梯度下降(Shuffle) 、整批量梯度下降(Batch)、小批量梯度下降(Mini-Batch)三 种梯度下降的优劣,需要控制每种梯度下降中计算梯度的次数一致。

(以下是听李沐课程有关softmax的笔记)

1.线性回归是对n维输入的加权,外加偏差

2.使用平方损失来衡量预测值和真实值的差异

3.线性回归有显示解

4.线性回归可以看作是单层神经网络

5.梯度下降要通过不断沿着反梯度方向更新参数求解

6.小批量随机梯度下降是深度学习默认的求解算法

7.两个重要的超参数是批量大小和学习率

回归估计一个连续值

分类预测一个离散类别

softmax 交叉熵损失

softmax回归是一个多类分类模型

使用softmax操作子得到每个类的预测置信度

使用交叉熵来衡量预测和标号的区别

损失函数:

1.均方损失(L2 Loss) 1/2(真实值-预测值)的平方

2.绝对值损失(L1 Loss) |真实值-预测值| 在0点处不可导 梯度是一个常数

似然函数和两个值有关,梯度下降和学习率,在L2 Loss中梯度下降步长是不一致的,L1 Loss

步长一致。可以想象成是从一个很远的解逐步逼近最优解的过程。

三、实验设置

样本个数:1000

训练集:测试集:7:3

学习率:10-3~104

Min-batch大小:样本的1%

tips:为了看清这三种梯度下降的优劣,我们需要控制每种梯度下降的计算梯度的次数一致。

四、代码

主文件:main.py

# -*- coding: GBK -*-

# -*- coding: UTF-8 -*-

# coding=gbk

import numpy

import csv

import random

from feature import Bag,Gram

from compasion import alpha_gradient_plot

# 数据读取

with open('train.tsv') as f:

tsvreader = csv.reader(f, delimiter='\t')

temp = list(tsvreader)

# 初始化

data = temp[1:]

max_item=1000

random.seed(2021)

numpy.random.seed(2021)

# 特征提取

bag=Bag(data,max_item)

bag.get_words()

bag.get_matrix()

gram=Gram(data, dimension=2, max_item=max_item)

gram.get_words()

gram.get_matrix()

# 画图

alpha_gradient_plot(bag,gram,10000,10) # 计算10000次

alpha_gradient_plot(bag,gram,100000,10) # 计算100000次特征提取:feature.py

# -*- coding: GBK -*-

# -*- coding: UTF-8 -*-

# coding=gbk

import numpy

import random

def data_split(data, test_rate=0.3, max_item=1000):

"""把数据按一定比例划分成训练集和测试集"""

train = list()

test = list()

i = 0

for datum in data:

i += 1

if random.random() > test_rate:

train.append(datum)

else:

test.append(datum)

if i > max_item:

break

return train, test

class Bag:

"""Bag of words"""

def __init__(self, my_data, max_item=1000):

self.data = my_data[:max_item]

self.max_item = max_item

self.dict_words = dict() # 单词到单词编号的映射

self.len = 0 # 记录有几个单词

self.train, self.test = data_split(my_data, test_rate=0.3, max_item=max_item)

self.train_y = [int(term[3]) for term in self.train] # 训练集类别

self.test_y = [int(term[3]) for term in self.test] # 测试集类别

self.train_matrix = None # 训练集的0-1矩阵(每行一个句子)

self.test_matrix = None # 测试集的0-1矩阵(每行一个句子)

def get_words(self):

for term in self.data:

s = term[2]

s = s.upper() # 记得要全部转化为大写!!(或者全部小写,否则一个单词例如i,I会识别成不同的两个单词)

words = s.split() # 分割

for word in words: # 一个一个单词寻找

if word not in self.dict_words:

self.dict_words[word] = len(self.dict_words)

self.len = len(self.dict_words)

self.test_matrix = numpy.zeros((len(self.test), self.len)) # 初始化0-1矩阵

self.train_matrix = numpy.zeros((len(self.train), self.len)) # 初始化0-1矩阵

def get_matrix(self):

for i in range(len(self.train)): # 训练集矩阵

s = self.train[i][2]

words = s.split()

for word in words:

word = word.upper()

self.train_matrix[i][self.dict_words[word]] = 1

for i in range(len(self.test)): # 测试集矩阵

s = self.test[i][2]

words = s.split()

for word in words:

word = word.upper()

self.test_matrix[i][self.dict_words[word]] = 1

class Gram:

"""N-gram"""

def __init__(self, my_data, dimension=2, max_item=1000):

self.data = my_data[:max_item]

self.max_item = max_item

self.dict_words = dict() # 特征到t正编号的映射

self.len = 0 # 记录有多少个特征

self.dimension = dimension # 决定使用几元特征

self.train, self.test = data_split(my_data, test_rate=0.3, max_item=max_item)

self.train_y = [int(term[3]) for term in self.train] # 训练集类别

self.test_y = [int(term[3]) for term in self.test] # 测试集类别

self.train_matrix = None # 训练集0-1矩阵(每行代表一句话)

self.test_matrix = None # 测试集0-1矩阵(每行代表一句话)

def get_words(self):

for d in range(1, self.dimension + 1): # 提取 1-gram, 2-gram,..., N-gram 特征

for term in self.data:

s = term[2]

s = s.upper() # 记得要全部转化为大写!!(或者全部小写,否则一个单词例如i,I会识别成不同的两个单词)

words = s.split()

for i in range(len(words) - d + 1): # 一个一个特征找

temp = words[i:i + d]

temp = "_".join(temp) # 形成i d-gram 特征

if temp not in self.dict_words:

self.dict_words[temp] = len(self.dict_words)

self.len = len(self.dict_words)

self.test_matrix = numpy.zeros((len(self.test), self.len)) # 训练集矩阵初始化

self.train_matrix = numpy.zeros((len(self.train), self.len)) # 测试集矩阵初始化

def get_matrix(self):

for d in range(1, self.dimension + 1):

for i in range(len(self.train)): # 训练集矩阵

s = self.train[i][2]

s = s.upper()

words = s.split()

for j in range(len(words) - d + 1):

temp = words[j:j + d]

temp = "_".join(temp)

self.train_matrix[i][self.dict_words[temp]] = 1

for i in range(len(self.test)): # 测试集矩阵

s = self.test[i][2]

s = s.upper()

words = s.split()

for j in range(len(words) - d + 1):

temp = words[j:j + d]

temp = "_".join(temp)

self.test_matrix[i][self.dict_words[temp]] = 1softmax regression:mysoftmax_regression.py

# -*- coding: GBK -*-

# -*- coding: UTF-8 -*-

# coding=gbk

import numpy

import random

class Softmax:

"""Softmax regression"""

def __init__(self, sample, typenum, feature):

self.sample = sample # 训练集样本个数

self.typenum = typenum # (情感)种类个数

self.feature = feature # 0-1向量的长度

self.W = numpy.random.randn(feature, typenum) # 参数矩阵W初始化,randn函数返回一个或一组样本,具有标准正态分布。返回值为指定维度的array

def softmax_calculation(self, x):

"""x是向量,计算softmax值"""

exp = numpy.exp(x - numpy.max(x)) # 先减去最大值防止指数太大溢出

return exp / exp.sum()

def softmax_all(self, wtx):

"""wtx是矩阵,即许多向量叠在一起,按行计算softmax值"""

wtx -= numpy.max(wtx, axis=1, keepdims=True) # 先减去行最大值防止指数太大溢出

wtx = numpy.exp(wtx)

wtx /= numpy.sum(wtx, axis=1, keepdims=True)

return wtx

def change_y(self, y):

"""把(情感)种类转换为一个one-hot向量"""

ans = numpy.array([0] * self.typenum)

ans[y] = 1

return ans.reshape(-1, 1)

def prediction(self, X):

"""给定0-1矩阵X,计算每个句子的y_hat值(概率)"""

prob = self.softmax_all(X.dot(self.W))

return prob.argmax(axis=1)

def correct_rate(self, train, train_y, test, test_y):

"""计算训练集和测试集的准确率"""

# train set

n_train = len(train)

pred_train = self.prediction(train)

train_correct = sum([train_y[i] == pred_train[i] for i in range(n_train)]) / n_train

# test set

n_test = len(test)

pred_test = self.prediction(test)

test_correct = sum([test_y[i] == pred_test[i] for i in range(n_test)]) / n_test

print(train_correct, test_correct)

return train_correct, test_correct

def regression(self, X, y, alpha, times, strategy="mini", mini_size=100):

"""Softmax regression"""

if self.sample != len(X) or self.sample != len(y):

raise Exception("Sample size does not match!") # 样本个数不匹配

if strategy == "mini": # mini-batch

for i in range(times):

increment = numpy.zeros((self.feature, self.typenum)) # 梯度初始为0矩阵

for j in range(mini_size): # 随机抽K次

k = random.randint(0, self.sample - 1)

yhat = self.softmax_calculation(self.W.T.dot(X[k].reshape(-1, 1)))

increment += X[k].reshape(-1, 1).dot((self.change_y(y[k]) - yhat).T) # 梯度加和

# print(i * mini_size)

self.W += alpha / mini_size * increment # 参数更新

elif strategy == "shuffle": # 随机梯度

for i in range(times):

k = random.randint(0, self.sample - 1) # 每次抽一个

yhat = self.softmax_calculation(self.W.T.dot(X[k].reshape(-1, 1)))

increment = X[k].reshape(-1, 1).dot((self.change_y(y[k]) - yhat).T) # 计算梯度

self.W += alpha * increment # 参数更新

# if not (i % 10000):

# print(i)

elif strategy=="batch": # 整批量梯度

for i in range(times):

increment = numpy.zeros((self.feature, self.typenum)) ## 梯度初始为0矩阵

for j in range(self.sample): # 所有样本都要计算

yhat = self.softmax_calculation(self.W.T.dot(X[j].reshape(-1, 1)))

increment += X[j].reshape(-1, 1).dot((self.change_y(y[j]) - yhat).T) # 梯度加和

# print(i)

self.W += alpha / self.sample * increment # 参数更新

else:

raise Exception("Unknown strategy")结果和画图:compassion.py

# -*- coding: GBK -*-

# -*- coding: UTF-8 -*-

# coding=gbk

import matplotlib.pyplot

from mysoftmax_regression import Softmax

def alpha_gradient_plot(bag,gram, total_times, mini_size):

"""Plot categorization verses different parameters.情节分类与不同的参数。"""

alphas = [0.001, 0.01, 0.1, 1, 10, 100, 1000, 10000] # 学习率

# Bag of words

# Shuffle 随机梯度下降

shuffle_train = list()

shuffle_test = list()

for alpha in alphas:

soft = Softmax(len(bag.train), 5, bag.len)

soft.regression(bag.train_matrix, bag.train_y, alpha, total_times, "shuffle")

r_train, r_test = soft.correct_rate(bag.train_matrix, bag.train_y, bag.test_matrix, bag.test_y)

shuffle_train.append(r_train)

shuffle_test.append(r_test)

# Batch

batch_train = list()

batch_test = list()

for alpha in alphas:

soft = Softmax(len(bag.train), 5, bag.len)

soft.regression(bag.train_matrix, bag.train_y, alpha, int(total_times/bag.max_item), "batch")

r_train, r_test = soft.correct_rate(bag.train_matrix, bag.train_y, bag.test_matrix, bag.test_y)

batch_train.append(r_train)

batch_test.append(r_test)

# Mini-batch

mini_train = list()

mini_test = list()

for alpha in alphas:

soft = Softmax(len(bag.train), 5, bag.len)

soft.regression(bag.train_matrix, bag.train_y, alpha, int(total_times/mini_size), "mini",mini_size)

r_train, r_test= soft.correct_rate(bag.train_matrix, bag.train_y, bag.test_matrix, bag.test_y)

mini_train.append(r_train)

mini_test.append(r_test)

matplotlib.pyplot.subplot(2,2,1)

matplotlib.pyplot.semilogx(alphas,shuffle_train,'r--',label='shuffle')

matplotlib.pyplot.semilogx(alphas, batch_train, 'g--', label='batch')

matplotlib.pyplot.semilogx(alphas, mini_train, 'b--', label='mini-batch')

matplotlib.pyplot.semilogx(alphas,shuffle_train, 'ro-', alphas, batch_train, 'g+-',alphas, mini_train, 'b^-')

matplotlib.pyplot.legend()

matplotlib.pyplot.title("Bag of words -- Training Set")

matplotlib.pyplot.xlabel("Learning Rate")

matplotlib.pyplot.ylabel("Accuracy")

matplotlib.pyplot.ylim(0,1)

matplotlib.pyplot.subplot(2, 2, 2)

matplotlib.pyplot.semilogx(alphas, shuffle_test, 'r--', label='shuffle')

matplotlib.pyplot.semilogx(alphas, batch_test, 'g--', label='batch')

matplotlib.pyplot.semilogx(alphas, mini_test, 'b--', label='mini-batch')

matplotlib.pyplot.semilogx(alphas, shuffle_test, 'ro-', alphas, batch_test, 'g+-', alphas, mini_test, 'b^-')

matplotlib.pyplot.legend()

matplotlib.pyplot.title("Bag of words -- Test Set")

matplotlib.pyplot.xlabel("Learning Rate")

matplotlib.pyplot.ylabel("Accuracy")

matplotlib.pyplot.ylim(0, 1)

# N-gram

# Shuffle

shuffle_train = list()

shuffle_test = list()

for alpha in alphas:

soft = Softmax(len(gram.train), 5, gram.len)

soft.regression(gram.train_matrix, gram.train_y, alpha, total_times, "shuffle")

r_train, r_test = soft.correct_rate(gram.train_matrix, gram.train_y, gram.test_matrix, gram.test_y)

shuffle_train.append(r_train)

shuffle_test.append(r_test)

# Batch

batch_train = list()

batch_test = list()

for alpha in alphas:

soft = Softmax(len(gram.train), 5, gram.len)

soft.regression(gram.train_matrix, gram.train_y, alpha, int(total_times / gram.max_item), "batch")

r_train, r_test = soft.correct_rate(gram.train_matrix, gram.train_y, gram.test_matrix, gram.test_y)

batch_train.append(r_train)

batch_test.append(r_test)

# Mini-batch

mini_train = list()

mini_test = list()

for alpha in alphas:

soft = Softmax(len(gram.train), 5, gram.len)

soft.regression(gram.train_matrix, gram.train_y, alpha, int(total_times / mini_size), "mini", mini_size)

r_train, r_test = soft.correct_rate(gram.train_matrix, gram.train_y, gram.test_matrix, gram.test_y)

mini_train.append(r_train)

mini_test.append(r_test)

matplotlib.pyplot.subplot(2, 2, 3)

matplotlib.pyplot.semilogx(alphas, shuffle_train, 'r--', label='shuffle')

matplotlib.pyplot.semilogx(alphas, batch_train, 'g--', label='batch')

matplotlib.pyplot.semilogx(alphas, mini_train, 'b--', label='mini-batch')

matplotlib.pyplot.semilogx(alphas, shuffle_train, 'ro-', alphas, batch_train, 'g+-', alphas, mini_train, 'b^-')

matplotlib.pyplot.legend()

matplotlib.pyplot.title("N-gram -- Training Set")

matplotlib.pyplot.xlabel("Learning Rate")

matplotlib.pyplot.ylabel("Accuracy")

matplotlib.pyplot.ylim(0, 1)

matplotlib.pyplot.subplot(2, 2, 4)

matplotlib.pyplot.semilogx(alphas, shuffle_test, 'r--', label='shuffle')

matplotlib.pyplot.semilogx(alphas, batch_test, 'g--', label='batch')

matplotlib.pyplot.semilogx(alphas, mini_test, 'b--', label='mini-batch')

matplotlib.pyplot.semilogx(alphas, shuffle_test, 'ro-', alphas, batch_test, 'g+-', alphas, mini_test, 'b^-')

matplotlib.pyplot.legend()

matplotlib.pyplot.title("N-gram -- Test Set")

matplotlib.pyplot.xlabel("Learning Rate")

matplotlib.pyplot.ylabel("Accuracy")

matplotlib.pyplot.ylim(0, 1)

matplotlib.pyplot.tight_layout()

matplotlib.pyplot.show()五、实验结果数据

1.N-gram特征优于bag of words,因为其考虑了词序。

在不用的学习率下,小的学习率并未让模型收敛,大的学习率相对较好。

2.结果与第一次实验结果吻合,训练集准确率高达100%,也表明出现过拟合现象,测试集的准确率在55%左右,说明出后面的梯度下降并没有对模型的泛用性产生提升现象。尽管训练集的准确率看起来令人满意,但是过多的梯度下降只会使模型过拟合而不带来任何文本分类效果提升。

六、总结

本次实验借鉴的是https://blog.csdn.net/qq_42365109/article/details/114844020,感受到了理论结合具体实践的重要性,虽然这是入门项目但是对于我来说还是相对吃力的,完整写出一整个代码还是需要一些时间来磨练。