用pytorch搭建线性回归模型

线性回归

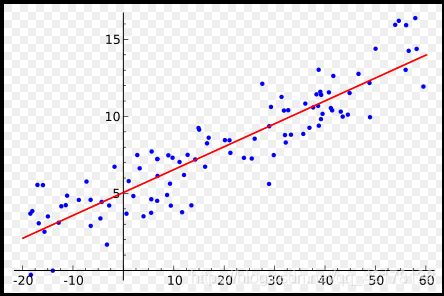

线性回归的定义:以一元线性回归为例,在一系列的点中,找一条直线,使得这条直线与这些点的距离之和最小。

代码编写

数据data

首先我们需要给出一系列的点作为线性回归的数据,使用numpy来存储这些点。

x_train = np.array([[3.3], [4.4], [5.5], [6.71], [6.93], [4.168],

[9.779], [6.182], [7.59], [2.167], [7.042],

[10.791], [5.313], [7.997], [3.1]], dtype=np.float32)

y_train = np.array([[1.7], [2.76], [2.09], [3.19], [1.694], [1.573],

[3.366], [2.596], [2.53], [1.221], [2.827],

[3.465], [1.65], [2.904], [1.3]], dtype=np.float32)

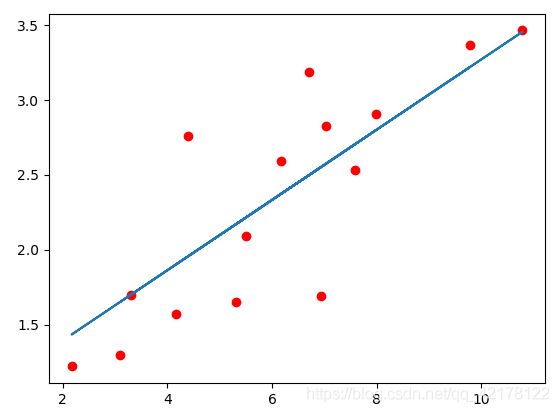

显示结果如下:

由于pytorch的基本处理单元是Tensor,我们需要将numpy转换成Tensor。

x_train = torch.from_numpy(x_train)

y_train = torch.from_numpy(y_train)

模型搭建

搭建线性回归模型

class LinearRegression(nn.Module):

def __init__(self):

super(LinearRegression, self).__init__()

self.linear = nn.Linear(1, 1) # input and output is 1 dimension

def forward(self, x):

out = self.linear(x)

return out

model = LinearRegression()

nn.Linear表示的是 y=w*x+b,里面的两个参数都是1,表示的是x是1维,y也是1维。

定义loss和optimizer,就是误差和优化函数

criterion = nn.MSELoss()

optimizer = optim.SGD(model.parameters(), lr=1e-4)

这里使用的是最小二乘loss(做分类问题时,多数使用的是cross entropy loss,交叉熵损失函数)

优化函数使用的是随机梯度下降,注意需要将model的参数model.parameters()传进去让这个函数知道他要优化的参数是那些。

train

接着开始训练

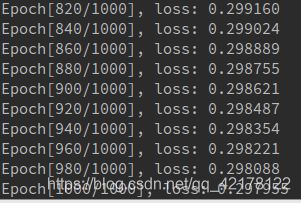

num_epochs = 1000

for epoch in range(num_epochs):

inputs = Variable(x_train)

target = Variable(y_train)

# forward

out = model(inputs) # 前向传播

loss = criterion(out, target) # 计算loss

# backward

optimizer.zero_grad() # 梯度归零

loss.backward() # 方向传播

optimizer.step() # 更新参数

if (epoch+1) % 20 == 0:

print('Epoch[{}/{}], loss: {:.6f}'.format(epoch+1,

num_epochs,

loss.data[0]))

第一个循环表示每个epoch,接着开始前向传播,然后计算loss,然后反向传播,接着优化参数,特别注意的是在每次反向传播的时候需要将参数的梯度归零,即

optimzier.zero_grad()

validation

训练完成之后我们就可以开始测试模型了

model.eval()

predict = model(Variable(x_train))

predict = predict.data.numpy()

特别注意的是需要用 model.eval(),让model变成测试模式,这主要是对dropout和batch normalization的操作在训练和测试的时候是不一样的

完整代码

# encoding: utf-8

"""

@author: liaoxingyu

@contact: [email protected]

"""

import matplotlib.pyplot as plt

import numpy as np

import torch

from torch import nn

from torch.autograd import Variable

x_train = np.array([[3.3], [4.4], [5.5], [6.71], [6.93], [4.168],

[9.779], [6.182], [7.59], [2.167], [7.042],

[10.791], [5.313], [7.997], [3.1]], dtype=np.float32)

y_train = np.array([[1.7], [2.76], [2.09], [3.19], [1.694], [1.573],

[3.366], [2.596], [2.53], [1.221], [2.827],

[3.465], [1.65], [2.904], [1.3]], dtype=np.float32)

x_train = torch.from_numpy(x_train)

y_train = torch.from_numpy(y_train)

# Linear Regression Model

class linearRegression(nn.Module):

def __init__(self):

super(linearRegression, self).__init__()

self.linear = nn.Linear(1, 1) # input and output is 1 dimension

def forward(self, x):

out = self.linear(x)

return out

model = linearRegression()

# 定义loss和优化函数

criterion = nn.MSELoss()

optimizer = torch.optim.SGD(model.parameters(), lr=1e-4)

# 开始训练

num_epochs = 1000

for epoch in range(num_epochs):

inputs = x_train

target = y_train

# forward

out = model(inputs)

loss = criterion(out, target)

# backward

optimizer.zero_grad()

loss.backward()

optimizer.step()

if (epoch+1) % 20 == 0:

print(f'Epoch[{epoch+1}/{num_epochs}], loss: {loss.item():.6f}')

model.eval()

with torch.no_grad():

predict = model(x_train)

predict = predict.data.numpy()

fig = plt.figure(figsize=(10, 5))

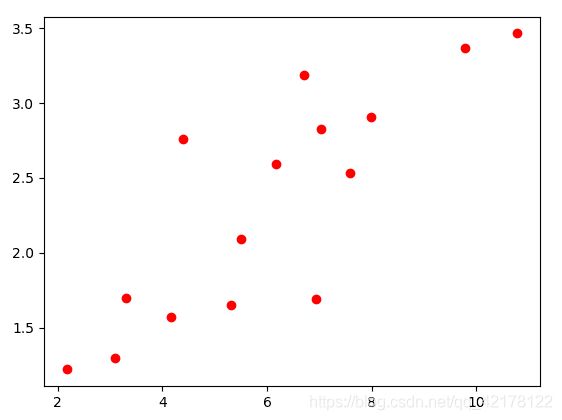

plt.plot(x_train.numpy(), y_train.numpy(), 'ro', label='Original data')

plt.plot(x_train.numpy(), predict, label='Fitting Line')

# 显示图例

plt.legend()

plt.show()

# 保存模型

torch.save(model.state_dict(), './linear.pth')