Naive Bayes Classifier (Annotated)

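Exercise: implement a naive Bayes classifier with Laplacian correction, train it on watermelon dataset 3.0, and use it to classify the sample "测1" (test sample 1) on p. 151 of 《机器学习》.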
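For reference, the Laplacian correction in 《机器学习》 smooths both the class prior and every categorical conditional probability. Here D is the training set, D_c its samples of class c, D_{c,x_i} the samples of class c whose attribute i takes the value x_i, N the number of classes, and N_i the number of possible values of attribute i. The worked numbers are computed from the 17 training samples in loadDataSet (8 good melons, 9 not good; 3 of the good melons are 青绿, and colour has 3 possible values).

```latex
\hat{P}(c) = \frac{|D_c| + 1}{|D| + N},
\qquad
\hat{P}(x_i \mid c) = \frac{|D_{c,x_i}| + 1}{|D_c| + N_i}

\hat{P}(\text{good}) = \frac{8 + 1}{17 + 2} = \frac{9}{19} \approx 0.474,
\qquad
\hat{P}(\text{not good}) = \frac{9 + 1}{17 + 2} = \frac{10}{19} \approx 0.526

\hat{P}(\text{colour} = \text{青绿} \mid \text{good}) = \frac{3 + 1}{8 + 3} = \frac{4}{11} \approx 0.364
```

Because every count gets +1, no attribute value that is missing from one class can force that class's whole product to zero.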

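The code is taken almost entirely from the naive Bayes chapter of Machine Learning in Action (《机器学习实战》); I only adapted it to the watermelon data and combined the pieces into one runnable script.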

Most of the code is commented. Some comments may be imprecise or hard to follow; apologies in advance.

Code

from numpy import *

def loadDataSet():  # build the training samples (watermelon dataset 3.0)
    # columns: colour, root, knock sound, texture, navel, touch, density, sugar content
    postingList = [['青绿','蜷缩','浊响','清晰','凹陷','硬滑',0.697,0.460],
                   ['乌黑','蜷缩','沉闷','清晰','凹陷','硬滑',0.774,0.376],
                   ['乌黑','蜷缩','浊响','清晰','凹陷','硬滑',0.634,0.264],
                   ['青绿','蜷缩','沉闷','清晰','凹陷','硬滑',0.608,0.318],
                   ['浅白','蜷缩','浊响','清晰','凹陷','硬滑',0.556,0.215],
                   ['青绿','稍蜷','浊响','清晰','稍凹','软粘',0.403,0.237],
                   ['乌黑','稍蜷','浊响','稍糊','稍凹','软粘',0.481,0.149],
                   ['乌黑','稍蜷','浊响','清晰','稍凹','硬滑',0.437,0.211],
                   ['乌黑','稍蜷','沉闷','稍糊','稍凹','硬滑',0.666,0.091],
                   ['青绿','硬挺','清脆','清晰','平坦','软粘',0.243,0.267],
                   ['浅白','硬挺','清脆','模糊','平坦','硬滑',0.245,0.057],
                   ['浅白','蜷缩','浊响','模糊','平坦','软粘',0.343,0.099],
                   ['青绿','稍蜷','浊响','稍糊','凹陷','硬滑',0.639,0.161],
                   ['浅白','稍蜷','沉闷','稍糊','凹陷','硬滑',0.657,0.198],
                   ['乌黑','稍蜷','浊响','清晰','稍凹','软粘',0.360,0.370],
                   ['浅白','蜷缩','浊响','模糊','平坦','硬滑',0.593,0.042],
                   ['青绿','蜷缩','沉闷','稍糊','稍凹','硬滑',0.719,0.103]]
    classVec = [1,1,1,1,1,1,1,1,0,0,0,0,0,0,0,0,0]  # 1 = good melon, 0 = not a good melon
    return postingList, classVec

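def createVocabList(dataSet):  # dataSet: list of samples, each a list of attribute values ("tokens")
    vocabSet = set()  # start with an empty set
    for document in dataSet:
        vocabSet = vocabSet | set(document)  # union with this sample's values
    return list(vocabSet)  # every distinct attribute value seen in training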

def setOfWords2Vec(vocabList, inputSet):  # vocabulary list and the sample to encode
    returnVec = [0]*len(vocabList)  # start with every feature set to 0
    for word in inputSet:  # go through every value in the sample
        if word in vocabList:  # is this value in the training vocabulary?
            returnVec[vocabList.index(word)] = 1  # mark its position with 1
        else:
            print("the word: %s is not in my Vocabulary!" % word)
    return returnVec

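def trainNB0(trainMatrix, trainCategory):  # naive Bayes training with add-one (Laplace-style) smoothing
    numTrainDocs = len(trainMatrix)
    numWords = len(trainMatrix[0])
    # prior P(good): fraction of samples labelled 1 (this prior is not smoothed)
    pGood = sum(trainCategory)/float(numTrainDocs)
    # counts start at 1 and denominators at 2, so no conditional probability is ever 0
    p0Num = ones(numWords)
    p1Num = ones(numWords)
    p0Denom = 2.0
    p1Denom = 2.0
    for i in range(numTrainDocs):  # go through every training sample
        if trainCategory[i] == 1:
            p1Num += trainMatrix[i]
            p1Denom += sum(trainMatrix[i])
        else:
            p0Num += trainMatrix[i]
            p0Denom += sum(trainMatrix[i])
    p1Vect = log(p1Num/p1Denom)  # log P(value | good melon) for every vocabulary entry
    # log P(value | not good); logs avoid underflow when many small probabilities are multiplied
    p0Vect = log(p0Num/p0Denom)
    return p0Vect, p1Vect, pGood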

def classifyNB(vec2Classify, p0Vec, p1Vec, pClass1):
    # multiply the 0/1 vector by the log-probabilities, sum them, then add the log prior
    p1 = sum(vec2Classify * p1Vec) + log(pClass1)
    p0 = sum(vec2Classify * p0Vec) + log(1.0 - pClass1)
    if p1 > p0:
        return 1
    else:
        return 0

def bagOfWords2VecMN(vocabList, inputSet):  # bag-of-words variant (not used below)
    returnVec = [0]*len(vocabList)
    for word in inputSet:
        if word in vocabList:
            returnVec[vocabList.index(word)] += 1  # count how many times each value occurs
    return returnVec

def testingNB(canshu):  # canshu ("parameter"): the sample to classify, as a list of attribute values
    listOPosts, listClasses = loadDataSet()  # load the training samples
    myVocabList = createVocabList(listOPosts)  # vocabulary of distinct attribute values
    trainMat = []
    for postinDoc in listOPosts:  # encode each sample as a 0/1 vector over the vocabulary
        trainMat.append(setOfWords2Vec(myVocabList, postinDoc))
    p0V, p1V, pAb = trainNB0(array(trainMat), array(listClasses))
    testEntry = canshu
    thisDoc = array(setOfWords2Vec(myVocabList, testEntry))  # encode the test sample the same way
    print(testEntry, 'classified as: ', classifyNB(thisDoc, p0V, p1V, pAb))

if __name__=='__main__':

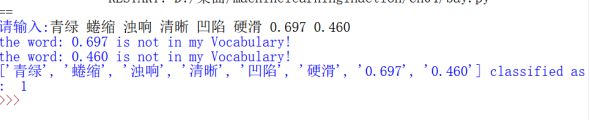

canshu=list(input("请输入:").split()) #将结果输入

testingNB(canshu)
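The exercise asks for the Laplacian correction of 《机器学习》, whereas trainNB0 above uses a simplified add-one scheme (numerators start at 1, denominators at 2, prior unsmoothed) and treats density and sugar content as discrete tokens, so a density value never seen in training cannot match anything. The following is a minimal sketch, not the author's code, that applies the per-attribute correction (|D_{c,x_i}| + 1) / (|D_c| + N_i) to the six categorical attributes. As an assumption following the book's treatment of continuous attributes, it models density and sugar content with a per-class Gaussian using the sample standard deviation. The names DATA, train, cond_prob, gaussian_pdf, log_score and classify are hypothetical.

```python
import math
from collections import Counter
from statistics import mean, stdev

# Watermelon dataset 3.0: six categorical attributes, density, sugar content, label (1 = good)
DATA = [
    ("青绿", "蜷缩", "浊响", "清晰", "凹陷", "硬滑", 0.697, 0.460, 1),
    ("乌黑", "蜷缩", "沉闷", "清晰", "凹陷", "硬滑", 0.774, 0.376, 1),
    ("乌黑", "蜷缩", "浊响", "清晰", "凹陷", "硬滑", 0.634, 0.264, 1),
    ("青绿", "蜷缩", "沉闷", "清晰", "凹陷", "硬滑", 0.608, 0.318, 1),
    ("浅白", "蜷缩", "浊响", "清晰", "凹陷", "硬滑", 0.556, 0.215, 1),
    ("青绿", "稍蜷", "浊响", "清晰", "稍凹", "软粘", 0.403, 0.237, 1),
    ("乌黑", "稍蜷", "浊响", "稍糊", "稍凹", "软粘", 0.481, 0.149, 1),
    ("乌黑", "稍蜷", "浊响", "清晰", "稍凹", "硬滑", 0.437, 0.211, 1),
    ("乌黑", "稍蜷", "沉闷", "稍糊", "稍凹", "硬滑", 0.666, 0.091, 0),
    ("青绿", "硬挺", "清脆", "清晰", "平坦", "软粘", 0.243, 0.267, 0),
    ("浅白", "硬挺", "清脆", "模糊", "平坦", "硬滑", 0.245, 0.057, 0),
    ("浅白", "蜷缩", "浊响", "模糊", "平坦", "软粘", 0.343, 0.099, 0),
    ("青绿", "稍蜷", "浊响", "稍糊", "凹陷", "硬滑", 0.639, 0.161, 0),
    ("浅白", "稍蜷", "沉闷", "稍糊", "凹陷", "硬滑", 0.657, 0.198, 0),
    ("乌黑", "稍蜷", "浊响", "清晰", "稍凹", "软粘", 0.360, 0.370, 0),
    ("浅白", "蜷缩", "浊响", "模糊", "平坦", "硬滑", 0.593, 0.042, 0),
    ("青绿", "蜷缩", "沉闷", "稍糊", "稍凹", "硬滑", 0.719, 0.103, 0),
]
N_CAT = 6  # columns 0..5 are categorical, 6..7 continuous, 8 is the label


def train(data):
    """Laplace-corrected priors and categorical counts; Gaussian stats for continuous columns."""
    classes = sorted({row[-1] for row in data})
    # N_i: number of distinct values of categorical attribute i seen in training
    n_values = [len({row[i] for row in data}) for i in range(N_CAT)]
    model = {}
    for c in classes:
        rows = [row for row in data if row[-1] == c]
        prior = (len(rows) + 1) / (len(data) + len(classes))  # (|D_c| + 1) / (|D| + N)
        counts = [Counter(row[i] for row in rows) for i in range(N_CAT)]
        # per-class sample mean and sample standard deviation of density and sugar content
        gauss = [(mean([r[j] for r in rows]), stdev([r[j] for r in rows]))
                 for j in (N_CAT, N_CAT + 1)]
        model[c] = (prior, len(rows), counts, gauss)
    return model, n_values


def cond_prob(model, n_values, c, i, value):
    """(|D_{c,x_i}| + 1) / (|D_c| + N_i); an unseen value has count 0 but probability > 0."""
    _, n_c, counts, _ = model[c]
    return (counts[i][value] + 1) / (n_c + n_values[i])


def gaussian_pdf(x, mu, sigma):
    return math.exp(-((x - mu) ** 2) / (2 * sigma ** 2)) / (math.sqrt(2 * math.pi) * sigma)


def log_score(model, n_values, sample, c):
    """log prior plus the sum of log conditional probabilities/densities (avoids underflow)."""
    prior, _, _, gauss = model[c]
    score = math.log(prior)
    for i in range(N_CAT):
        score += math.log(cond_prob(model, n_values, c, i, sample[i]))
    for (mu, sigma), x in zip(gauss, sample[N_CAT:N_CAT + 2]):
        score += math.log(gaussian_pdf(x, mu, sigma))
    return score


def classify(model, n_values, sample):
    # pick the class with the largest log score
    return max(model, key=lambda c: log_score(model, n_values, sample, c))


model, n_values = train(DATA)
test1 = ("青绿", "蜷缩", "浊响", "清晰", "凹陷", "硬滑", 0.697, 0.460)  # "测1" on p. 151
print(classify(model, n_values, test1))  # prints 1 (good melon)
```

Working in log space mirrors the original's use of log in trainNB0. The Gaussian step is what lets a density or sugar value that never appeared in training still contribute to the decision.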