[Generative Adversarial Networks] Implementing ACGAN in Code

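Contents

- 1. Introduction to ACGAN

- 2. ACGAN in TensorFlow 2 (MNIST dataset)

- 2.1 Imports

- 2.2 Data preparation

- 2.3 Generator model

- 2.4 Discriminator model

- 2.5 Loss functions and optimizers

- 2.6 Per-batch training function

- 2.7 Plotting function

- 2.8 Main training function

- 2.9 Training and results

- 2.10 Using the generator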

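1. Introduction to ACGAN

Earlier posts covered the implementation of a plain GAN, which can generate an image but cannot generate an image of a specified class. ACGAN fills this gap: by adding class information to both the generator and the discriminator, it can produce data of a chosen class.

The ACGAN generator takes a random noise vector and an image label as input and produces an image. The discriminator takes an image as input and outputs both whether the image is real or fake and its predicted label.

- The generator is a multi-input, single-output model

- The discriminator is a single-input, multi-output model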
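For reference, the two-headed discriminator described above corresponds to the objective in the original ACGAN formulation (Odena et al.). The notation below is not the post's own and is only a sketch: S is the real/fake source, C is the class label, and X_real / X_fake are real and generated images.

```latex
\begin{aligned}
L_S &= \mathbb{E}\left[\log P(S=\text{real}\mid X_{\text{real}})\right]
     + \mathbb{E}\left[\log P(S=\text{fake}\mid X_{\text{fake}})\right] \\
L_C &= \mathbb{E}\left[\log P(C=c\mid X_{\text{real}})\right]
     + \mathbb{E}\left[\log P(C=c\mid X_{\text{fake}})\right]
\end{aligned}
```

In that formulation the discriminator is trained to maximize L_S + L_C and the generator to maximize L_C - L_S. The code in section 2.5 follows the same idea, with the class term of the discriminator computed on real images only.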

2. ACGAN in TensorFlow 2 (MNIST dataset)

2.1 Imports

import tensorflow as tf  # version 2.6.3
from tensorflow import keras
from tensorflow.keras import layers
import matplotlib.pyplot as plt
%matplotlib inline
import numpy as np

2.2 Data preparation

(images, labels), (_, _) = tf.keras.datasets.mnist.load_data()
images = images / 127.5 - 1  # scale pixels from [0, 255] to [-1, 1] to match the generator's tanh output
images = np.expand_dims(images, -1).astype('float32')  # add a channel axis -> (60000, 28, 28, 1)
dataset = tf.data.Dataset.from_tensor_slices((images, labels))
BATCH_SIZE = 256
noise_dim = 50
dataset = dataset.shuffle(60000).batch(BATCH_SIZE)  # shuffle, then split into batches
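The scaling above and the inverse mapping used later when plotting can be checked by hand. The snippet below is a minimal pure-Python sketch of that arithmetic; the helper names `normalize` and `to_unit_range` are made up for illustration and are not part of the post's code.

```python
def normalize(pixel):
    """Map a pixel value in [0, 255] to [-1, 1], as in the data-preparation step."""
    return pixel / 127.5 - 1


def to_unit_range(value):
    """Map a tanh-range value in [-1, 1] to [0, 1], i.e. (x + 1) / 2 as used for plotting."""
    return (value + 1) / 2


for pixel in (0, 127.5, 255):
    scaled = normalize(pixel)
    print(pixel, scaled, to_unit_range(scaled))
# 0 -1.0 0.0
# 127.5 0.0 0.5
# 255 1.0 1.0
```

Matching the data range to the tanh output range means real and generated images reach the discriminator on the same scale.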

2.3 Generator model

Input: noise and label. Output: an image of the given label.

def generate():
    seed = layers.Input(shape=(noise_dim,))  # noise vector
    label = layers.Input(shape=())           # scalar class label
    # embed the label into a 50-dimensional vector and join it with the noise
    x = layers.Embedding(10, 50, input_length=1)(label)
    x = layers.Flatten()(x)
    x = layers.concatenate([seed, x])
    x = layers.Dense(3*3*128, use_bias=False)(x)
    x = layers.Reshape((3, 3, 128))(x)
    x = layers.BatchNormalization()(x)
    x = layers.ReLU()(x)
    x = layers.Conv2DTranspose(64, (3, 3), strides=(2, 2), use_bias=False)(x)
    x = layers.BatchNormalization()(x)
    x = layers.ReLU()(x)  # 7x7
    x = layers.Conv2DTranspose(32, (3, 3), strides=(2, 2), padding='same', use_bias=False)(x)
    x = layers.BatchNormalization()(x)
    x = layers.ReLU()(x)  # 14x14
    x = layers.Conv2DTranspose(1, (3, 3), strides=(2, 2), padding='same', use_bias=False)(x)
    x = layers.Activation('tanh')(x)  # 28x28x1, values in [-1, 1]
    model = tf.keras.Model(inputs=[seed, label], outputs=x)
    return model

Create the model:

gen = generate()
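The 3 -> 7 -> 14 -> 28 progression in the generator comes from how transposed convolutions grow the feature map. The sketch below reproduces that arithmetic in plain Python, assuming the usual Keras size rule for `Conv2DTranspose` when `output_padding` is not set; `conv_transpose_out` is a hypothetical helper, not a Keras function.

```python
def conv_transpose_out(size, kernel, stride, padding):
    """Spatial output size of a transposed convolution (no output_padding)."""
    if padding == 'same':
        return size * stride
    if padding == 'valid':
        return size * stride + max(kernel - stride, 0)
    raise ValueError(f'unknown padding: {padding}')


size = 3  # spatial size after Reshape((3, 3, 128))
sizes = [size]
# The first Conv2DTranspose uses the default 'valid' padding, the next two use 'same'
for padding in ('valid', 'same', 'same'):
    size = conv_transpose_out(size, kernel=3, stride=2, padding=padding)
    sizes.append(size)

print(sizes)  # [3, 7, 14, 28]
```

The first layer deliberately omits `padding='same'`: with it, 3 would become 6 and the final output would be 24x24 rather than the 28x28 MNIST size.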

2.4 Discriminator model

Input: an image. Outputs: real/fake score and class prediction.

def discriminator():
    image = tf.keras.Input(shape=(28, 28, 1))
    x = layers.Conv2D(32, (3, 3), strides=(2, 2), padding='same', use_bias=False)(image)
    x = layers.BatchNormalization()(x)
    x = layers.LeakyReLU()(x)
    x = layers.Dropout(0.5)(x)
    x = layers.Conv2D(32*2, (3, 3), strides=(2, 2), padding='same', use_bias=False)(x)
    x = layers.BatchNormalization()(x)
    x = layers.LeakyReLU()(x)
    x = layers.Dropout(0.5)(x)
    x = layers.Conv2D(32*4, (3, 3), strides=(2, 2), padding='same', use_bias=False)(x)
    x = layers.BatchNormalization()(x)
    x = layers.LeakyReLU()(x)
    x = layers.Dropout(0.5)(x)
    x = layers.Flatten()(x)
    x1 = layers.Dense(1)(x)   # real/fake output (logit)
    x2 = layers.Dense(10)(x)  # class output (logits for the 10 digits)
    model = tf.keras.Model(inputs=image, outputs=[x1, x2])
    return model

Create the model:

disc = discriminator()
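To see what the two Dense heads receive, the feature-map sizes through the three strided convolutions can be worked out by hand. This is a small pure-Python sketch, assuming the standard rule that a `padding='same'` convolution outputs `ceil(size / stride)`; `conv_same_out` is a hypothetical helper.

```python
import math


def conv_same_out(size, stride):
    """Spatial output size of a convolution with padding='same'."""
    return math.ceil(size / stride)


size, channels = 28, 1
shapes = []
for filters in (32, 64, 128):  # the three Conv2D layers in the discriminator
    size = conv_same_out(size, stride=2)
    channels = filters
    shapes.append((size, size, channels))

flat_features = size * size * channels
print(shapes)         # [(14, 14, 32), (7, 7, 64), (4, 4, 128)]
print(flat_features)  # 2048
```

Both heads therefore read the same 2048-dimensional feature vector, so the real/fake decision and the class prediction share all convolutional features.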

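2.5 Loss functions and optimizers

# used for the real/fake decision
bce = tf.keras.losses.BinaryCrossentropy(from_logits=True)
# used for classifying the image label
cce = tf.keras.losses.SparseCategoricalCrossentropy(from_logits=True)

Discriminator loss:

# real_out: discriminator real/fake logits for real images
# real_cls_out: discriminator class logits for real images
# fake_out: discriminator real/fake logits for generated images
# label: true class labels of the real images
def disc_loss(real_out, real_cls_out, fake_out, label):
    # real/fake loss: real images should score 1, generated images 0
    real_loss = bce(tf.ones_like(real_out), real_out)
    fake_loss = bce(tf.zeros_like(fake_out), fake_out)
    # classification loss on real images
    cat_loss = cce(label, real_cls_out)
    return real_loss + fake_loss + cat_loss

Generator loss:

# fake_out: discriminator real/fake logits for generated images
# fake_cls_out: discriminator class logits for generated images
# label: class labels the generator was conditioned on
def gen_loss(fake_out, fake_cls_out, label):
    # real/fake loss: the generator wants its images to be scored as real (1)
    fake_loss = bce(tf.ones_like(fake_out), fake_out)
    # classification loss: generated images should be classified as the requested label
    cat_loss = cce(label, fake_cls_out)
    return fake_loss + cat_loss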
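A quick sanity check on these losses is to compute them by hand for a single example. The sketch below re-implements the two cross-entropies in plain Python (it does not call Keras) and evaluates them for a hypothetical untrained discriminator whose logits are all zero. The helper names are made up for illustration.

```python
import math


def bce_from_logit(target, logit):
    """Binary cross-entropy for one example given a raw logit (numerically stable form)."""
    return max(logit, 0) - logit * target + math.log1p(math.exp(-abs(logit)))


def sparse_cce_from_logits(label, logits):
    """Sparse categorical cross-entropy for one example given raw class logits."""
    m = max(logits)
    log_sum_exp = m + math.log(sum(math.exp(v - m) for v in logits))
    return log_sum_exp - logits[label]


# Assumption for illustration: an untrained discriminator that outputs 0 for every logit
real_out, fake_out = 0.0, 0.0
cls_logits = [0.0] * 10
label = 3

d_loss = (bce_from_logit(1, real_out) + bce_from_logit(0, fake_out)
          + sparse_cce_from_logits(label, cls_logits))
g_loss = bce_from_logit(1, fake_out) + sparse_cce_from_logits(label, cls_logits)

print(round(d_loss, 4))  # 3.6889  (2*ln 2 + ln 10)
print(round(g_loss, 4))  # 2.9957  (ln 2 + ln 10)
```

Values near these at the very start of training suggest the losses are wired up as intended; the class term (ln 10, about 2.30) should fall quickly once the discriminator learns to classify digits.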

Define the Adam optimizers:

gen_opt = tf.keras.optimizers.Adam(1e-5)
disc_opt = tf.keras.optimizers.Adam(1e-5)

2.6 Per-batch training function

# image: batch of real input images
# labels: the corresponding true labels
@tf.function
def train_step(image, labels):
    size = labels.shape[0]  # number of images in this batch
    noise = tf.random.normal([size, noise_dim])  # one noise vector per image
    with tf.GradientTape() as gen_tape, tf.GradientTape() as disc_tape:
        gen_imgs = gen((noise, labels), training=True)  # generate fake images
        # pass the fake images through the discriminator: real/fake output and class output
        fake_out, fake_cls_out = disc(gen_imgs, training=True)
        # pass the real images through the discriminator: real/fake output and class output
        real_out, real_cls_out = disc(image, training=True)
        # compute the discriminator and generator losses
        d_loss = disc_loss(real_out, real_cls_out, fake_out, labels)
        g_loss = gen_loss(fake_out, fake_cls_out, labels)
    # compute gradients
    gen_grad = gen_tape.gradient(g_loss, gen.trainable_variables)
    disc_grad = disc_tape.gradient(d_loss, disc.trainable_variables)
    # update parameters from the gradients
    gen_opt.apply_gradients(zip(gen_grad, gen.trainable_variables))
    disc_opt.apply_gradients(zip(disc_grad, disc.trainable_variables))
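The training step reads the batch size from the labels instead of using `BATCH_SIZE` because the dataset keeps its final, smaller batch. A minimal pure-Python sketch of the batch sizes this produces for the 60000 MNIST training images (`batch_sizes` is a hypothetical helper, not a TensorFlow API):

```python
def batch_sizes(num_examples, batch_size):
    """Yield the size of each batch when the last partial batch is kept."""
    for start in range(0, num_examples, batch_size):
        yield min(batch_size, num_examples - start)


sizes = list(batch_sizes(60000, 256))
print(len(sizes), sizes[0], sizes[-1])  # 235 256 96
```

With a hard-coded 256 noise vectors, the last step would pair 256 noise rows with only 96 labels and fail inside the generator. An alternative would be to pass `drop_remainder=True` to `batch` so that every batch has the same size.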

2.7 Plotting function

Used to inspect what the generator produces.

def plot_gen_image(model, noise, label, epoch_num):
    print('\nEpoch:', epoch_num)
    gen_image = model((noise, label), training=False)
    fig = plt.figure(figsize=(10, 1))
    for i in range(gen_image.shape[0]):
        plt.subplot(1, 10, i+1)  # image i in a grid of 1 row and 10 columns
        # the generator ends with tanh, so map [-1, 1] back to [0, 1] for display
        plt.imshow((gen_image[i, :, :, 0] + 1) / 2, cmap='gray')
        plt.axis('off')
    plt.show()

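2.8 Main training function

Define the parameters used for plotting:

num = 10  # generate 10 images
noise_seed = tf.random.normal([num, noise_dim])  # fixed noise, reused for every plot
label_seed = np.random.randint(0, 10, size=(num))  # num random labels from 0 to 9 (the upper bound 10 is exclusive)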

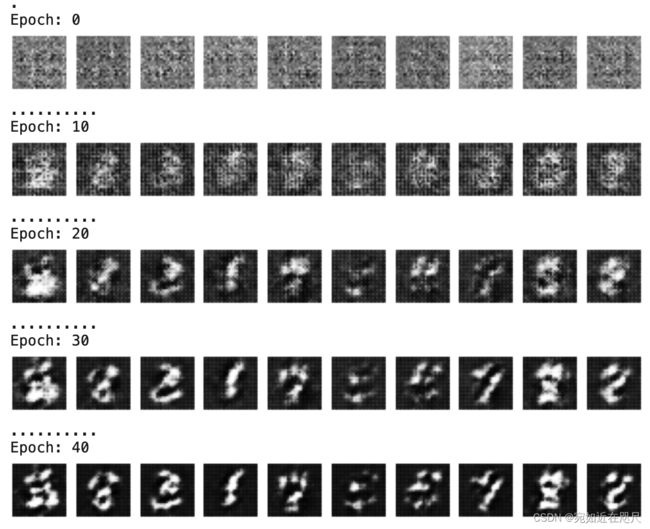

print(label_seed) # [2 8 3 1 7 5 5 7 8 8]

Define the main training function:

def train(dataset, epochs):
    for epoch in range(epochs):
        print(".", end="")
        # train on every batch
        for image_batch, label_batch in dataset:
            train_step(image_batch, label_batch)
        # plot every 10 epochs
        if epoch % 10 == 0:
            plot_gen_image(gen, noise_seed, label_seed, epoch)
    # final plot after the last epoch
    plot_gen_image(gen, noise_seed, label_seed, epoch)

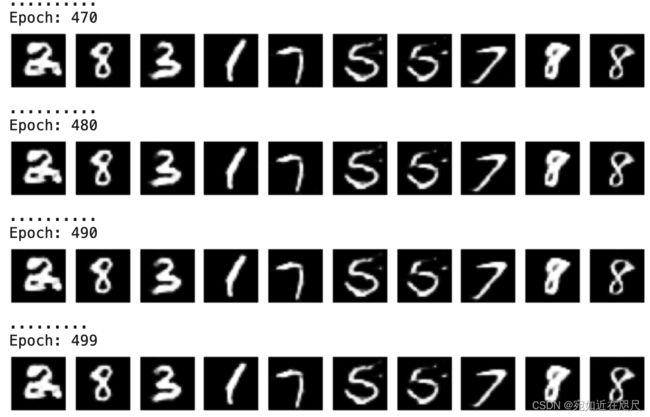

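2.9 Training and results

Train for 500 epochs by calling the main training function:

EPOCHS = 500
train(dataset, EPOCHS)

Copy all of the code above into a Jupyter notebook, in order, and it will run as is.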

2.10 Using the generator

Save the model locally:

gen.save('generateModel_acgan.h5')

Generate each of the ten digits once (labels 1 through 9, then 0):

num = 10
noise_seed = tf.random.normal([num, noise_dim])
cat_seed = np.array([1, 2, 3, 4, 5, 6, 7, 8, 9, 0])
plot_gen_image(gen, noise_seed, cat_seed, 1)

Generate ten different samples of the digit 6:

num = 10
noise_seed = tf.random.normal([num, noise_dim])
cat_seed = np.array([6]*10)
plot_gen_image(gen, noise_seed, cat_seed, 1)