Scraping the Last Ten Years of Weather Data

This post is only a brief overview. For the details, work through the first article in this column.

1. Install and import the following libraries

import requests
from bs4 import BeautifulSoup as bs
import pandas as pd
from pandas import Series, DataFrame

2. Scrape the data

For details, see the scraper article in this column: "How to get weather data with Python".

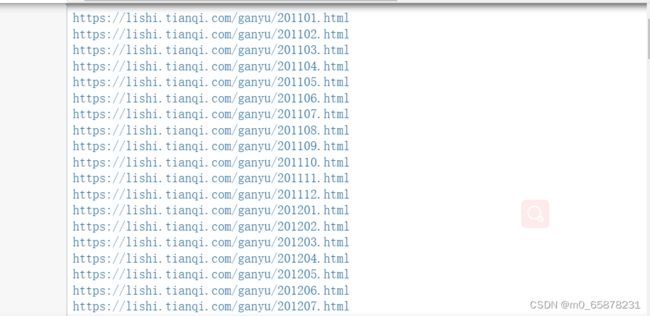

This step builds the weather page URLs for 2011-01 through 2022-08 and prints each one, so you can see whether anything goes wrong.
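Before any request is sent, the URL list can be checked on its own. The sketch below is one way to do that, under assumptions: the helper name `month_urls` and the `BASE` template are hypothetical, and only the URL pattern itself comes from this post.

```python
# Hypothetical helper: only the URL pattern is taken from this post.
BASE = "https://lishi.tianqi.com/ganyu/{year}{month:02d}.html"


def month_urls(first=(2011, 1), last=(2022, 8)):
    """Return one URL per month from `first` to `last`, both inclusive."""
    urls = []
    year, month = first
    while (year, month) <= last:
        urls.append(BASE.format(year=year, month=month))
        month += 1
        if month > 12:  # roll over to January of the next year
            year, month = year + 1, 1
    return urls


urls = month_urls()
print(len(urls), urls[0], urls[-1])
```

For the range used in this post the list holds 140 URLs: 11 full years plus the first 8 months of 2022.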

# headers is the request-header dict defined in the complete code at the end of this post
data_all=[]
mounths=['01','02','03','04','05','06','07','08','09','10','11','12']
for a in range(11,23):
    if a ==22:
        for b in range(8): # 2022: January to August only (August 2022 is the current month)
            url='https://lishi.tianqi.com/ganyu/20{}{}.html'.format(a,mounths[b])
            resp= requests.request("GET", url, headers=headers)
            resp.encoding = 'utf-8'
            soup = bs(resp.text,'html.parser')
            tian_three=soup.find("div",{"class":"tian_three"})
            print(url)
            lishitable_content=tian_three.find_all("li")
            for i in lishitable_content:
                lishi_div=i.find_all("div")
                data=[]
                for j in lishi_div:
                    data.append(j.text)
                data_all.append(data)
    else :
        for b in mounths: # all twelve months for every year before 2022
            url='https://lishi.tianqi.com/ganyu/20{}{}.html'.format(a,b)
            resp= requests.request("GET", url, headers=headers)
            resp.encoding = 'utf-8'
            soup = bs(resp.text,'html.parser')
            tian_three=soup.find("div",{"class":"tian_three"})
            print(url)
            lishitable_content=tian_three.find_all("li")
            for i in lishitable_content:
                lishi_div=i.find_all("div")
                data=[]
                for j in lishi_div:
                    data.append(j.text)
                data_all.append(data)

Use the print(url) output to see where things went wrong. An unexplained error sometimes occurs partway through the run; running the failing page on its own resolves it.
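Re-running a failed page by hand, as suggested above, can also be automated. The following is a minimal sketch, not the method used in this post: `fetch_with_retry` is a hypothetical helper, and `fetch` stands for any function that downloads and parses one month page and raises an exception on failure (for example when `soup.find` returns `None`).

```python
import time


def fetch_with_retry(fetch, url, attempts=3, delay=1.0):
    """Call fetch(url) up to `attempts` times, pausing `delay` seconds between tries."""
    if attempts < 1:
        raise ValueError("attempts must be at least 1")
    last_error = None
    for attempt in range(1, attempts + 1):
        try:
            return fetch(url)
        except Exception as exc:  # broad on purpose: the cause of the failure is unknown
            last_error = exc
            print(f"attempt {attempt} failed for {url}: {exc!r}")
            if attempt < attempts:
                time.sleep(delay)
    # Every attempt failed: surface the last error to the caller
    raise last_error
```

Wrapping the body of the inner loop in a function and passing it as `fetch` keeps the print(url) check, while a page that fails once is simply tried again.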

3. Cleaning and storing the data

Convert the data into a pandas DataFrame for processing.

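weather=pd.DataFrame(data_all)
weather.columns=["当日信息","最高气温","最低气温","天气","风向信息"]
weather_shape=weather.shape

Next, preprocess the data. In the raw table the date and the weekday share the 当日信息 column, and the wind direction and wind force share the 风向信息 column, so each of these columns is split in two.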

# Split 当日信息 into date (日期) and weekday (星期)
weather['当日信息']=weather['当日信息'].apply(str) # make sure every value is a string
result = DataFrame(weather['当日信息'].apply(lambda x:Series(str(x).split(' '))))
result=result.loc[:,0:1]
result.columns=['日期','星期']
# Split 风向信息 into wind direction (风向) and wind force (级数)
weather['风向信息']=weather['风向信息'].apply(str)
result1 = DataFrame(weather['风向信息'].apply(lambda x:Series(str(x).split(' '))))
result1=result1.loc[:,0:1]
result1.columns=['风向','级数']
# Replace the two combined columns with the four new ones
weather=weather.drop(columns='当日信息')
weather=weather.drop(columns='风向信息')
weather.insert(loc=0,column='日期', value=result['日期'])
weather.insert(loc=1,column='星期', value=result['星期'])
weather.insert(loc=5,column='风向', value=result1['风向'])
weather.insert(loc=6,column='级数', value=result1['级数'])

The resulting table has the columns 日期, 星期, 最高气温, 最低气温, 天气, 风向 and 级数.

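The same split can be written more compactly with pandas' vectorized string methods. This is a minimal sketch, assuming every 当日信息 value has the form `date weekday` separated by a single space; the sample rows are made up for illustration and are not scraped data.

```python
import pandas as pd

# Made-up sample rows; the "date weekday" layout is an assumption
sample = pd.DataFrame({"当日信息": ["2011-01-01 星期六 ", "2011-01-02 星期日 "]})

# Strip stray whitespace, then split once on the first space into two columns
parts = (
    sample["当日信息"]
    .astype(str)
    .str.strip()
    .str.split(pat=" ", n=1, expand=True)
)
parts.columns = ["日期", "星期"]
print(parts)
```

Compared with `apply(lambda x: Series(...))`, this avoids building one Series per row, which tends to matter once the table holds several thousand days.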

Finally, save the data.

weather.to_csv("XXX.csv",encoding="utf_8")

Complete code

import requests
from bs4 import BeautifulSoup as bs
import pandas as pd
from pandas import Series,DataFrame

headers={'User-Agent': 'Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/104.0.5112.102 Safari/537.36 Edg/104.0.1293.63',
         'Host':'lishi.tianqi.com',
         'Accept-Encoding': "gzip, deflate",
         'Connection': "keep-alive",
         'cache-control': "no-cache"}

data_all=[]
mounths=['01','02','03','04','05','06','07','08','09','10','11','12']
for a in range(11,23):
    if a ==22:
        for b in range(8):
            url='https://lishi.tianqi.com/ganyu/20{}{}.html'.format(a,mounths[b])
            resp= requests.request("GET", url, headers=headers)
            resp.encoding = 'utf-8'
            soup = bs(resp.text,'html.parser')
            tian_three=soup.find("div",{"class":"tian_three"})
            print(url)
            lishitable_content=tian_three.find_all("li")
            for i in lishitable_content:
                lishi_div=i.find_all("div")
                data=[]
                for j in lishi_div:
                    data.append(j.text)
                data_all.append(data)
    else :
        for b in mounths:
            url='https://lishi.tianqi.com/ganyu/20{}{}.html'.format(a,b)
            resp= requests.request("GET", url, headers=headers)
            resp.encoding = 'utf-8'
            soup = bs(resp.text,'html.parser')
            tian_three=soup.find("div",{"class":"tian_three"})
            print(url)
            lishitable_content=tian_three.find_all("li")
            for i in lishitable_content:
                lishi_div=i.find_all("div")
                data=[]
                for j in lishi_div:
                    data.append(j.text)
                data_all.append(data)

weather=pd.DataFrame(data_all)
weather.columns=["当日信息","最高气温","最低气温","天气","风向信息"]
weather_shape=weather.shape

# Split 当日信息 into date (日期) and weekday (星期)
weather['当日信息']=weather['当日信息'].apply(str)
result = DataFrame(weather['当日信息'].apply(lambda x:Series(str(x).split(' '))))
result=result.loc[:,0:1]
result.columns=['日期','星期']

# Split 风向信息 into wind direction (风向) and wind force (级数)
weather['风向信息']=weather['风向信息'].apply(str)
result1 = DataFrame(weather['风向信息'].apply(lambda x:Series(str(x).split(' '))))
result1=result1.loc[:,0:1]
result1.columns=['风向','级数']

# Replace the two combined columns with the four new ones
weather=weather.drop(columns='当日信息')
weather=weather.drop(columns='风向信息')
weather.insert(loc=0,column='日期', value=result['日期'])
weather.insert(loc=1,column='星期', value=result['星期'])
weather.insert(loc=5,column='风向', value=result1['风向'])
weather.insert(loc=6,column='级数', value=result1['级数'])

weather.to_csv("赣榆的天气.csv",encoding="utf_8")